Intel Optane SSD DC P4800X 750GB Hands-On Review

by Billy Tallis on November 9, 2017 12:00 PM EST

Test System

Along with the Optane SSD sample, Intel provided a new server based on their latest Xeon Scalable platform for our use as a testbed. The 2U server is equipped with two 18-core Xeon Gold 6154 processors, 192GB of DRAM, and an abundance of PCIe lanes.

Because this is a dual-socket NUMA system, care needs to be taken to avoid unnecessary data transfers between sockets. For this review, the tests were set up to largely emulate a single-socket configuration: all SSDs tested were connected to PCIe ports provided by CPU #2. All of the benchmarks using the FIO tool were configured to use only cores on CPU #2 and to allocate only memory connected to CPU #2, so inter-socket latency is not a factor. This setup would be quite limiting when doing full enterprise application testing, but for synthetic storage benchmarks one CPU is far more than necessary to stress any single SSD.
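As a rough illustration of that kind of pinning, the sketch below builds a FIO command line that restricts a job's CPUs and memory to one NUMA node using FIO's `numa_cpu_nodes` and `numa_mem_policy` options (available when FIO is built with libnuma). The device path, the node index, and the workload parameters are assumptions for the example, not the settings used in this review; in particular, which Linux node number corresponds to "CPU #2" depends on the platform.

```python
import shlex


def numa_pinned_fio_args(device, node, rw="randread", bs="4k",
                         iodepth=1, runtime_s=60):
    """Build a fio argument list that keeps CPU and memory on one NUMA node.

    `device` and `node` are supplied by the caller; the defaults for the
    workload parameters are illustrative assumptions only.
    """
    return [
        "fio",
        "--name=numa-pinned",
        f"--filename={device}",
        "--ioengine=libaio",
        "--direct=1",                      # bypass the page cache
        f"--rw={rw}",
        f"--bs={bs}",
        f"--iodepth={iodepth}",
        "--time_based",
        f"--runtime={runtime_s}",
        f"--numa_cpu_nodes={node}",        # run only on this node's cores
        f"--numa_mem_policy=bind:{node}",  # allocate only from this node's memory
    ]


# Hypothetical device path and node index, shown as a copy-pasteable command.
print(shlex.join(numa_pinned_fio_args("/dev/nvme0n1", node=1)))
```

Building the argument list in one place makes it harder to pin the CPUs of a job to one socket while accidentally leaving its buffers on the other.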

For this review, the test system was mostly configured to offer the highest and most predictable storage performance. HyperThreading was disabled, SpeedStep and processor C-states were off, and other motherboard settings were set for maximum performance. The one notable exception is fan speeds: since this test server was installed in a home office environment instead of a datacenter, the "acoustic" fan profile had to be used instead of "performance".

| Enterprise SSD Test System | |
|---|---|
| System Model | Intel Server R2208WFTZS |
| CPU | 2x Intel Xeon Gold 6154 (18C, 3.0GHz) |
| Motherboard | Intel S2600WFT |
| Chipset | Intel C624 |
| Memory | 192GB total, Micron DDR4-2666 16GB modules |
| Software | Linux kernel 4.13.11, FIO 3.1 |

The Linux kernel's NVMe driver is constantly evolving, and several new features have been added this year that are relevant to a drive like the Optane SSD. Rather than use an enterprise-oriented Linux distribution with a long-term support cycle for an older kernel version, this review was conducted using the very fresh 4.13 kernel series.

Earlier this year, the FIO storage benchmarking tool hit a major milestone with version 3.0 that switches timing measurements from microsecond precision to nanosecond precision. This makes it much easier to analyze the performance of Optane devices, where latency can be down in the single-digit microsecond territory.
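To see why the finer resolution matters at these latencies, the sketch below compares how much of a measurement is lost when a nanosecond value is reduced to whole microseconds. The sample latencies are hypothetical, and the sketch assumes truncation; it is not a description of how older FIO versions actually rounded their timestamps.

```python
def quantize_to_us(latency_ns):
    """Truncate a nanosecond latency to whole microseconds (result in ns)."""
    return (latency_ns // 1000) * 1000


def lost_fraction(latency_ns):
    """Fraction of the measured latency discarded by microsecond truncation."""
    return (latency_ns - quantize_to_us(latency_ns)) / latency_ns


# Hypothetical samples: two in single-digit microseconds, one much slower.
for ns in (6_400, 9_850, 85_300):
    print(f"{ns} ns -> {quantize_to_us(ns)} ns, lost {lost_fraction(ns):.2%}")
```

The absolute error is bounded by one microsecond either way, so the relative error grows as latency shrinks: a sub-microsecond difference is negligible for a drive that responds in around a hundred microseconds but is a meaningful share of a single-digit-microsecond access.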

The Competition

Intel SSD DC P3700 1.6TB

The Intel SSD DC P3700 was the flagship of their first generation of NVMe SSDs, and one of the first widely available NVMe SSDs. It was a great showcase for the advantages of NVMe, but is now outdated with its use of 20nm planar MLC NAND and capacities that top out at 2TB.

Intel launched a new P4x00 generation of flash-based enterprise NVMe SSDs this year using a new controller and 3D TLC NAND. However, because the Optane SSD DC P4800X is the new flagship drive, the P3700 didn't get a direct successor: the P4600 is currently Intel's top flash-based enterprise SSD, and while it offers higher performance and capacities than the P3700, it does not match the P3700's rated write endurance.

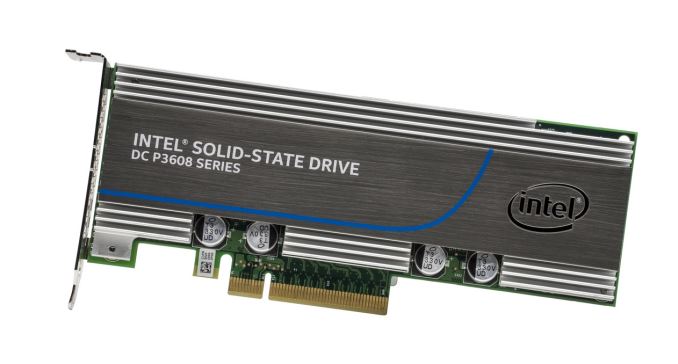

Intel SSD DC P3608 1.6TB

Intel's first-generation NVMe controller only supports drive capacities up to 2TB. To get around that limitation and to offer higher performance in some respects, Intel created the SSD DC P3608. Roughly equivalent to two P3600s on one card behind a PLX PCIe switch, the P3608 appears to the system as two SSDs but can be used with software RAID to create a large, high-performance volume. Our P3608 sample has a total of 1.6TB of accessible storage (800GB per controller) and has the highest built-in overprovisioning ratios in the P3608 family. This gives it the best aggregate random write performance, which is rated to match a single P3700 while random reads and sequential transfers can exceed the P3700.

For this review, one controller on the P3608 was tested as a stand-in for the P3600, and a software RAID 0 array spanning both controllers was also tested.
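For readers unfamiliar with how a striped volume spreads I/O across the two controllers, here is a minimal sketch of RAID 0 address mapping. The 128 KiB chunk size is an assumption chosen for illustration; the chunk size used for the array in this review is not stated.

```python
def raid0_map(logical_offset, chunk_size=128 * 1024, num_devices=2):
    """Map a byte offset in a RAID 0 volume to (device index, device offset).

    Chunks are dealt out to the member devices round-robin, so consecutive
    chunks land on alternating devices. `chunk_size` is an assumed value.
    """
    chunk_index, within_chunk = divmod(logical_offset, chunk_size)
    stripe_index, device = divmod(chunk_index, num_devices)
    return device, stripe_index * chunk_size + within_chunk


# The first four chunks alternate between the two members.
for chunk in range(4):
    print(chunk, raid0_map(chunk * 128 * 1024))
```

Because neighboring chunks sit on different controllers, large sequential transfers and deep random queues can keep both halves of the card busy at once, which is where the aggregate performance comes from.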

Intel has not officially announced the successor P4608, but they have submitted it for testing and inclusion on the NVMe Integrators List, so it will probably launch within the next few months.

Micron 9100 MAX 2.4TB

Launched in mid-2016, the Micron 9100 series was part of Micron's first generation of NVMe SSDs. Micron wasn't new to the PCIe SSD market, but their early products predated the NVMe standard and instead used a proprietary protocol. The 9100 series uses Micron 16nm MLC NAND flash and a Microsemi Flashtec NVMe1032 controller.

Our Micron 9100 MAX 2.4TB sample is the fastest model from the 9100 series, and the second-largest. Micron recently announced the 9200 series that switched to 3D TLC NAND for a huge capacity boost and adopted the latest generation of Microsemi controllers to allow for a PCIe x8 connection, but we don't have a sample.

Intel Optane SSD 900p 280GB

Last month, Intel launched their consumer Optane SSD based on the same controller platform as the P4800X. The Optane SSD 900p has some enterprise-oriented features disabled and is rated for only a third the write endurance, but offers essentially the same performance as the P4800X for about a third the price.

This makes the 900p a very attractive option for users who need Optane-level performance but don't want to pay for the absolute highest write endurance. It isn't intended as an enterprise SSD, but the 900p can compete in this space.

58 Comments

tuxRoller - Friday, November 10, 2017 - link

Since this is for enterprise, the os vendor would be the one responsible (so, yes, third party) and one of the reasons why you pay them ridiculous support fees is for them to be your single point of contact for most issues.

tuxRoller - Friday, November 10, 2017 - link

Very nice write-up. Might it be possible for us to get an idea of the difference in cell access times by running a couple tests on a loop device, and, even better, purely dram-based storage accessed over pcie?

Pork@III - Friday, November 10, 2017 - link

Has no normal only speed test? What are these creepy creations of this vc that?

romrunning - Friday, November 10, 2017 - link

Is there any tests of the 4800X in a virtual host? Either Hyper-V or ESX, running multiple server OS clients with a variety of workloads. With the kind of low latency shown, I'd love to see how much more responsive Optane is compared to all flash storage like a P3608. Sort of a "rising tide floats all ships" kind of improvement, I hope.

Klimax - Sunday, November 12, 2017 - link

That's nice review. How about some test using Windows too. (Aka something with more advanced I/O subsystem)

Billy Tallis - Monday, November 13, 2017 - link

I'm not sure what you mean. Nobody seriously considers the Windows I/O system to be more advanced than what Linux provides. Even Intel's documentation states that the best latency they can get out of the Optane SSD on Windows is a few microseconds slower than on the Linux NVMe driver, and on Linux a few more microseconds can be saved using SPDK.

tuxRoller - Tuesday, November 14, 2017 - link

"Advanced" may be the wrong way to look at it because ntkrnl can perform both sync and async operations, while Linux is essentially a sync-based kernel (the limitations surrounding its aio system are legendary). However, by focusing on doing that one thing well the block subsystem has become highly optimized for enterprise workloads. Btw, is there any chance you could run that block system (and nvme protocol, if possible) overhead test i asked about?