Intel Displays 10nm Wafer, Commits to 10nm ‘Falcon Mesa’ FPGAs

by Ian Cutress on September 19, 2017 8:30 AM EST

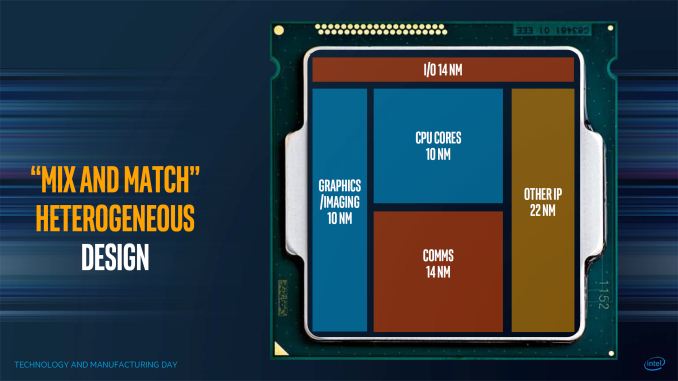

On the back of Intel’s Technology and Manufacturing Day in March, the company presented another iteration of the information at an equivalent event in Beijing this week. Most of the content was fairly similar to the previous TMD, with a few further insights into how some of the technology is progressing. High up on that list would be how Intel is coming along with its own 10nm process, as well as several plans regarding the 10nm product portfolio.

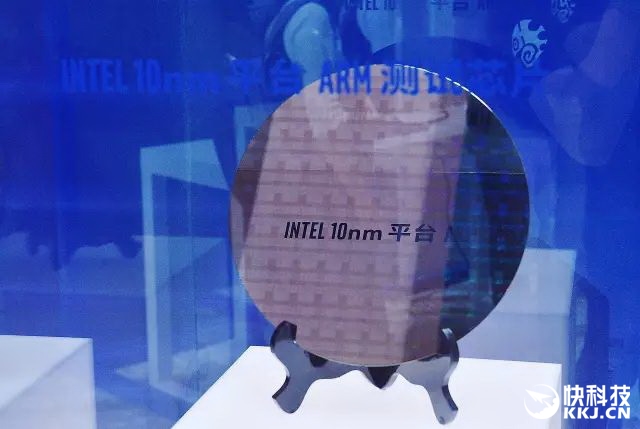

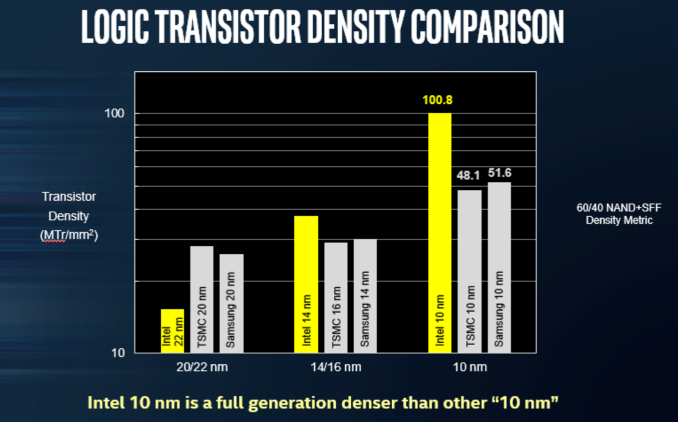

The headline here was ‘we have a wafer’, as shown in the image above. Intel disclosed that this wafer was from a production run of a 10nm test chip containing ARM Cortex A75 cores, implemented with ‘industry standard design flows’, and was built to target a performance level in excess of 3 GHz. Both TSMC and Samsung are shipping their own ‘10nm’ processes; however, Intel reiterated its claim that its technology uses tighter transistor and metal pitches for almost double the density of competing 10nm technologies. While chips such as the Huawei Kirin 970, built on TSMC’s 10nm, are in the region of 55 million transistors per mm², Intel is quoting over 100 million per mm² with its 10nm (and using a new transistor counting methodology).
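To put the ‘almost double’ claim in numbers, here is a minimal sketch using only the two density figures quoted above. The constant and function names are illustrative, and because Intel's figure uses a new counting methodology, the two inputs may not be directly comparable.

```python
# Density figures as quoted in the article, in millions of transistors per mm^2.
# The Intel number uses a new counting methodology, so treat the ratio as indicative only.
TSMC_10NM_MTR_PER_MM2 = 55.0    # "in the region of 55 million"
INTEL_10NM_MTR_PER_MM2 = 100.0  # "over 100 million"


def density_ratio(a_mtr_per_mm2: float, b_mtr_per_mm2: float) -> float:
    """Return how many times denser process A is than process B."""
    return a_mtr_per_mm2 / b_mtr_per_mm2


ratio = density_ratio(INTEL_10NM_MTR_PER_MM2, TSMC_10NM_MTR_PER_MM2)
print(f"Intel 10nm vs Kirin 970 density: {ratio:.2f}x")
```

Since Intel quotes ‘over’ 100 million, the ratio of roughly 1.8x is a lower bound on the claimed advantage.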

Intel quoted 25% better performance and 45% lower power than 14nm, though it did not say whether the baseline was 14nm, 14+, or 14++. Intel also stated that the optimized version of 10nm, 10++, will boost performance by 15% or reduce power by 30% compared with 10nm. Intel’s Custom Foundry business, which will start on 10nm, is offering customers two design platforms on the new technology: 10GP (general purpose) and 10HPM (high performance mobile), with validated IP portfolios that include ARM libraries and POP kits, plus turnkey services. Intel has yet to announce a major partner in its custom foundry business, and other media outlets are reporting that some major partners that had signed up are now looking elsewhere.

Earlier this year Intel stated that its own first 10nm products would be aiming at the data center first (it has since been clarified that Intel was discussing 10nm++). At the time it was a little confusing, given Intel’s delayed cadence with typical data center products. However, since Intel acquired Altera, it seems appropriate that FPGAs would be the perfect fit here. Large-scale FPGAs, due to their regular repeating units, can take advantage of the smaller manufacturing process and still return reasonable yields by disabling individual gate arrays with defects and appropriate binning. Intel’s next generation of FPGAs will use 10nm, and they will go by the codename “Falcon Mesa”.

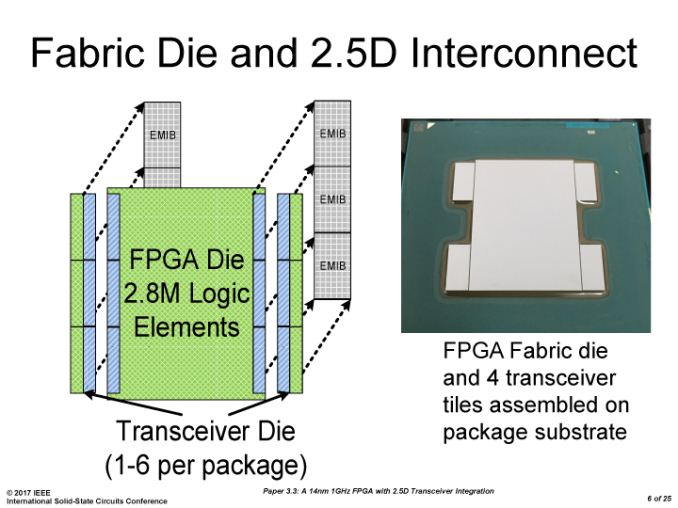

Falcon Mesa will encompass multiple technologies, most notably Intel’s second generation of its Embedded Multi-Die Interconnect Bridge (EMIB) packaging. This technology embeds additional silicon substrates in the package, providing a connection between separate active silicon parts that is much faster than standard packaging methods and much cheaper than using full-blown interposers. The result is a monolithic FPGA in the package, surrounded by memory or IP blocks, perhaps created at a different process node, but all using high-bandwidth EMIB for communication. On a similar theme, Falcon Mesa will also include support for next-generation HBM.

Among the IP blocks that can be embedded via EMIB with the new FPGAs, Intel lists both 112 Gbps serial transceiver links and PCIe 4.0 x16 connectivity, with support for data rates up to 16 GT/s per lane for future data center connectivity. This was discussed at the recent Hot Chips conference, in a talk I’d like to find some time to expand on in a written piece.
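For a sense of scale on the PCIe figure, the sketch below converts 16 GT/s per lane across 16 lanes into bytes per second. It assumes the 128b/130b line encoding that PCIe has used since version 3.0, and it ignores packet and protocol overhead, so real throughput would be lower. The function name is illustrative only.

```python
def pcie_bandwidth_gbytes(gt_per_s: float, lanes: int,
                          payload_bits: int = 128, line_bits: int = 130) -> float:
    """Per-direction link bandwidth in GB/s after line-encoding overhead.

    Assumes 128b/130b encoding (PCIe 3.0 and later); ignores packet overhead.
    """
    usable_gbits_per_lane = gt_per_s * payload_bits / line_bits
    return usable_gbits_per_lane * lanes / 8.0  # 8 bits per byte


# PCIe 4.0 x16 as listed for Falcon Mesa: 16 GT/s per lane, 16 lanes.
print(f"{pcie_bandwidth_gbytes(16, 16):.1f} GB/s per direction")
```

Under these assumptions the link works out to about 31.5 GB/s in each direction.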

No additional information was released regarding 10nm products for consumer devices.

Related Reading

- Intel Officially Reveals Post-8th Gen Core Code Name: Ice Lake, Built on 10nm+

- Hot Chips: Intel EMIB and 14nm Stratix 10 FPGA Live Blog

- CES 2017: Intel Press Event Live Blog

- Samsung and TSMC Roadmaps: 8 and 6 nm Added, Looking at 22ULP and 12FFC

Additional: 1:00pm September 19th

After doing some digging, we have come across several shots of the wafer up close.

From http://news.mydrivers.com/

This is from the presentational display. Detail is very hard to make out, even at the highest resolution we can find for this image.

Additional: 1:20pm September 19th

Intel has also now put the presentation up on its website, which gives us this close-up:

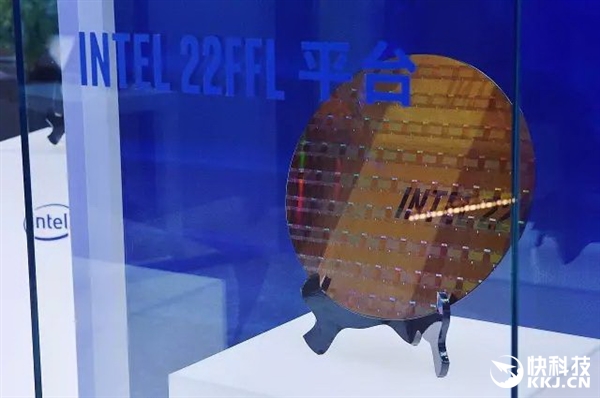

Surprisingly, this wafer looks completely bare. Either this is simply a 300mm wafer before production, or Intel have covered the wafer on purpose with a reflective material to stop prying eyes. It's a very odd series of events, as Intel did have other wafers at the event, including 10nm using ARM, and examples of the new 22FFL process.

From http://news.mydrivers.com/

From http://news.mydrivers.com/

From http://news.mydrivers.com/

Both of these wafers seem to have a repeating pattern we would typically see on a manufactured wafer. So either Intel does not want anyone to look at 10nm Cannon Lake just yet, or they were just holding up an unused disc of silicon.

Additional: 3:00pm September 20th

Intel got back to us with a more detailed Cannon Lake image, clearly showing the separate dies:

Manual counting puts the wafer at around 36 dies across and 35 dies down, which leads to a die size of around 8.2 mm by 8.6 mm, or ~70.5 mm² per die. At that size, it would suggest we are likely looking at a base dual-core die with graphics: Intel's first 14nm chips in a 2+2 configuration, Broadwell-U, were 82 mm², so it is likely that we are seeing a 2+2 configuration as well. At that size, we're looking at around 850 dies per wafer.
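The die-size arithmetic can be reproduced with a short sketch. The die dimensions come from the count above; the dies-per-wafer function uses a common first-order approximation (wafer area divided by die area, minus an edge-loss term), which is an assumption here and not necessarily how the ~850 figure was derived. It ignores scribe lanes and edge exclusion, so it tends to be optimistic.

```python
import math

WAFER_DIAMETER_MM = 300.0  # standard 300mm wafer


def die_area_mm2(width_mm: float, height_mm: float) -> float:
    """Area of a rectangular die in mm^2."""
    return width_mm * height_mm


def gross_dies_per_wafer(die_area: float,
                         wafer_diameter_mm: float = WAFER_DIAMETER_MM) -> int:
    """First-order estimate of whole dies on a round wafer.

    Wafer area / die area, minus a term for partial dies lost at the edge.
    Ignores scribe lanes and edge exclusion, so it overestimates somewhat.
    """
    radius = wafer_diameter_mm / 2.0
    whole = math.pi * radius ** 2 / die_area
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2.0 * die_area)
    return int(whole - edge_loss)


area = die_area_mm2(8.2, 8.6)  # dimensions estimated from the manual count
print(f"die area: {area:.1f} mm^2")
print(f"estimated gross dies per 300mm wafer: {gross_dies_per_wafer(area)}")
```

This approximation lands somewhat above the ~850 quoted above, which is the direction you would expect once scribe lanes and the unusable wafer edge are taken into account.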

Source: Intel

52 Comments


Yorgos - Tuesday, September 19, 2017 - link

You could do a firmware update in your device, loading the new .bit file on the fpga, and actually doing a h/w update or fix h/w problems. The potential is enormous, the industry is not so willing to do it

willis936 - Tuesday, September 19, 2017 - link

That's for several very good reasons. The very first is that FPGAs cost an order of magnitude (or more in many cases) more than an ASIC. They're primarily for development and very expensive, low quantity products. The next and most obvious is that being able to rewrite hardware is dangerous. It would be a target for attackers. It would make even the most well hidden and aggressive keyloggers blush with how much control over a system and how well hidden FPGA based attacks could hide. Also if your chip manufacturer starts churning out updates every day you'll end up with a firefox running in your computer. Hardware can be done right the first time.

MajGenRelativity - Tuesday, September 19, 2017 - link

Valid points!

FunBunny2 - Tuesday, September 19, 2017 - link

-- Hardware can be done right the first time.

which is why the marginally intelligent "do computers" with a comp. sci. degree. invented just to pander to them.

MajGenRelativity - Tuesday, September 19, 2017 - link

What?

MajGenRelativity - Tuesday, September 19, 2017 - link

A. How often does the average consumer do a firmware update?

B. I'm not sure it warrants adding a dedicated chip on 10nm in any situation that isn't industrial.

C. I'm sure you can do a lot, I'm just not aware of it

saratoga4 - Tuesday, September 19, 2017 - link

FPGAs are widely used in low volume electronics where the cost of running an ASIC would be prohibitive. They're not used very much in consumer electronics because they are slower, use more power, and are more expensive than ASICs when mass produced. By the time you get up to consumer electronics volumes, it is usually better/cheaper/faster to get a working ASIC out (possibly based on FPGA prototypes) than to ship everyone an FPGA.

MajGenRelativity - Tuesday, September 19, 2017 - link

I wasn't aware of that. Good to know.

willis936 - Tuesday, September 19, 2017 - link

What would be a real game changer to me is if the development tools around FPGAs became more accessible. After (trying) to use Xilinx ISE I can safely say I'm much more cozy doing things in traditional software. I don't want to spend 100 hours trying to make an IDE behave. Verilog and VHDL are both simple to write and are intuitive to think about for anyone who have spent time with combinational and sequential logic (something that I think is in every electrical engineer's curriculum). Why do the tools need to make it so hard to just implement a design? Hell it would be nice if they had a block diagram mode similar to schematic editors like orcad capture.

saratoga4 - Tuesday, September 19, 2017 - link

>Hell it would be nice if they had a block diagram mode similar to schematic editors like orcad capture.

These exist but are not very popular because the approach does not scale well to complex devices like would be implemented on an FPGA. They're more useful for devices like CPLDs though where you usually only want to "wire" a bread board sized "circuit".

The complexity of FPGA tools is a reflection of their target market, which is people designing very complex hardware devices.