The Intel Core i9-7980XE and Core i9-7960X CPU Review Part 1: Workstation

by Ian Cutress on September 25, 2017 3:01 AM EST

A Few Words on Power Consumption

When we tested the first wave of Skylake-X processors, one of the takeaways was that Intel was starting to push at the blurred line between thermal design power (TDP) and power consumption. Technically, the TDP is a value in watts that a CPU cooler should be designed to dissipate: a processor with a 140W TDP should be paired with a CPU cooler that can dissipate a minimum of 140W in order to avoid temperature spikes and ‘thermal runaway’. Failure to do so will cause the processor to hit thermal limits and reduce performance to compensate. Normally the TDP is, on average, also a good metric for power consumption. A processor with a TDP of 140W should, in general, consume 140W of power (plus some efficiency losses).

In the past, particularly with mainstream processors, and even with the latest batch of mainstream processors, Intel typically rides the power consumption well under the rated TDP value. The Core i5-7600K for example has a TDP of 91W, and we measured a power consumption of ~61W, of which ~53W was from the CPU cores. So when we say that in the past Intel has been conservative with the TDP value, this is typically the sort of metric we will quote.

With the initial Skylake-X launch, things were a little different. Due to the high all-core frequencies, the new mesh topology, the advent of AVX-512, and the sheer number of cores in play, the power consumption was matching the TDP and even exceeding it in some cases. The Core i9-7900X is rated at 140W TDP, however we measured 149W, a 6.4% difference. The previous generation 10-core, the Core i7-6950X was also rated at 140W, but only draws 111W at load. Intel’s power strategy has changed with Skylake-X, particularly as we ramp up the number of cores.

Even though we didn’t perform the testing ourselves, our colleagues over at Tom’s Hardware, Paul Alcorn and Igor Wallossek, did extensive power testing on the Skylake-X processors. Along with showing that the power delivery system of the new motherboards works best with substantial heatsinks and active cooling (such as a VRM fan), they showed that with the right overclock, a user can draw over 330W without too much fuss.

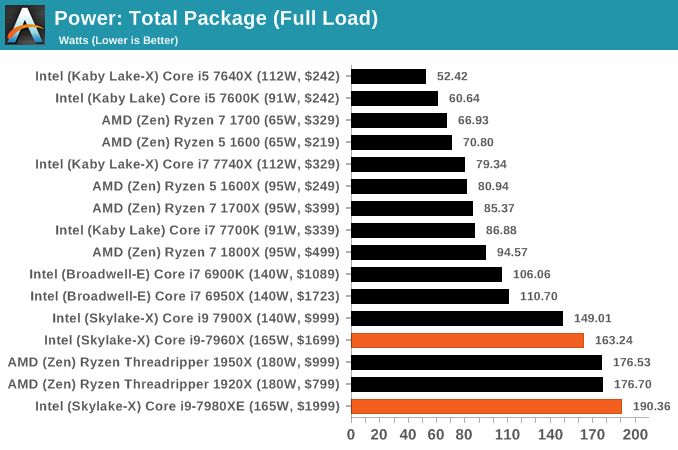

So for the two processors in the review today, the same high values ring true, almost alarmingly so. Both the Core i9-7980XE and the Core i9-7960X have a TDP rating of 165W, and we start with the peak headline numbers first. Our power testing implements a Prime95 stress test, with the data taken from the internal power management registers that the hardware uses to manage power delivery and frequency response. This method is not as accurate as a physical measurement, but it is more universal: it removes the need to tool up every single product, and the hardware itself uses these values to make decisions about the performance response.
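For readers who want to try something similar, the sketch below shows one way to turn a package energy counter into an average power figure. It assumes a Linux system that exposes the counter through the powercap interface under `/sys/class/powercap/intel-rapl:0`. That path and the function names `average_watts` and `sample_package_power` are assumptions for illustration, and this is not the tool used for the numbers in this review.

```python
from pathlib import Path
import time

# Assumed Linux powercap location for CPU package 0; not taken from the article.
RAPL_DIR = Path("/sys/class/powercap/intel-rapl:0")


def average_watts(start_uj, end_uj, seconds, max_range_uj):
    """Convert two energy-counter samples (microjoules) into average watts."""
    delta_uj = end_uj - start_uj
    if delta_uj < 0:
        # The counter wrapped around between the two samples.
        delta_uj += max_range_uj
    return delta_uj / 1_000_000 / seconds


def sample_package_power(interval_s=1.0):
    """Read the package energy counter twice and return average watts."""
    max_range_uj = int((RAPL_DIR / "max_energy_range_uj").read_text())
    start_uj = int((RAPL_DIR / "energy_uj").read_text())
    time.sleep(interval_s)
    end_uj = int((RAPL_DIR / "energy_uj").read_text())
    return average_watts(start_uj, end_uj, interval_s, max_range_uj)
```

Calling `sample_package_power()` while a stress test runs would give a package power estimate, provided the system exposes these files and the user has permission to read them. The conversion itself lives in `average_watts`, so it can be checked without touching the hardware.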

At full load, the total package power consumption for the Core i9-7960X is almost on the money, drawing 163W.

However the Core i9-7980XE goes above and beyond (and not necessarily in a good way). At full load, running an all-core frequency of 3.4 GHz, we recorded a total package power consumption of 190.36W. This is a 25W increase over the TDP value, or 15.4% higher. Assuming our singular CPU is ‘representative’, I’d hazard a guess and say that the TDP value of this processor should be nearer 190W, or 205W to be on the safe side. Unfortunately, when Intel started designing the Basin Falls platform, it was only designed to be rated at 165W. This is a case of Intel pushing the margins, perhaps a little too far for some. It will be interesting to get the Xeon-W processors in for equivalent testing.
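The percentages quoted in this section are simple ratios of measured power to rated TDP. As a quick sketch, the snippet below reproduces them from the figures in the text; the function name `percent_over_tdp` is just a label chosen for this example.

```python
def percent_over_tdp(measured_w, tdp_w):
    """Return how far measured power sits above (+) or below (-) TDP, in percent."""
    return (measured_w - tdp_w) / tdp_w * 100.0


# (rated TDP, measured package power) in watts, as quoted in the text
chips = {
    "Core i7-6950X": (140, 111),
    "Core i9-7900X": (140, 149),
    "Core i9-7960X": (165, 163),
    "Core i9-7980XE": (165, 190.36),
}

for name, (tdp_w, measured_w) in chips.items():
    print(f"{name}: {percent_over_tdp(measured_w, tdp_w):+.1f}% vs TDP")
```

A positive value means the chip drew more than its rated TDP in our load test, while a negative value means it stayed under.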

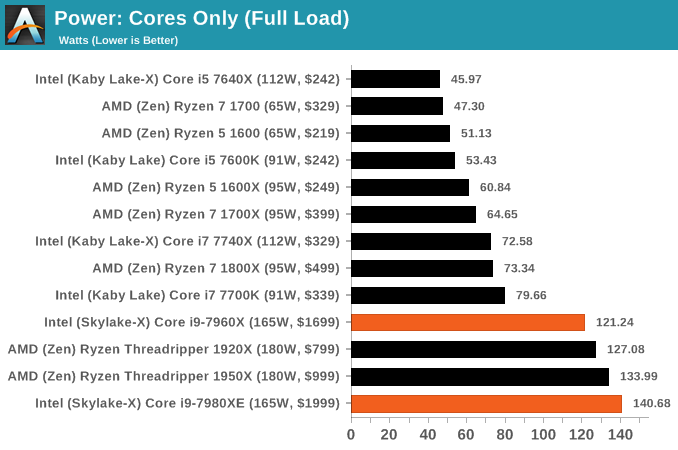

Our power testing program can also pull out a breakdown of the power consumption, depending on whether the registers are preconfigured in the software. In this case we were also able to pull out values for the DRAM controller(s) power consumption, although looking at the values this is likely to include the uncore/mesh as well. For both CPUs at load, we see that this DRAM and mesh combination is drawing ~42W. If we remove this from the load power numbers, that leaves 121W for the 16-core chip (7.5W per core) and 140W for the 18-core chip (7.8W per core).
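The per-core figures come from subtracting the DRAM and mesh share from the package power and dividing by the core count. The sketch below works through that arithmetic with the numbers quoted above; `core_power` and `per_core_w` are names made up for this example.

```python
def core_power(package_w, uncore_w):
    """Package power minus the DRAM/mesh share leaves the power used by the cores."""
    return package_w - uncore_w


def per_core_w(cores_w, core_count):
    """Average power per core when every core is loaded."""
    return cores_w / core_count


# Core i9-7960X: 163W package, ~42W DRAM/mesh, 16 cores
cores_7960x = core_power(163, 42)
print(f"7960X: {cores_7960x}W for cores, {per_core_w(cores_7960x, 16):.2f}W per core")

# Core i9-7980XE: the 140W core figure is taken as quoted in the text, 18 cores
print(f"7980XE: 140W for cores, {per_core_w(140, 18):.2f}W per core")
```

Note that the ~42W value is approximate. Against the 190W package reading, the 140W quoted for the 18-core chip implies a DRAM and mesh share closer to 50W for that part, so the per-core numbers are best read as rough estimates.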

Most of the rise of the power consumption, for both the cores and DRAM, happens when the processor is loaded to four threads - the Core i9-7980XE is drawing 100W+ when four threads are loaded. This is what we expect to see: when the processor is lightly loaded and in turbo mode, a core can consume upwards of 20W, while at load it will migrate down to a smaller value. We saw the same with Ryzen, which drew 17W per core when lightly threaded, down to 6W per core when loaded. Obviously the peak efficiency point for these cores is down nearer the 6-8W range than up at the 15-20W range.

Unfortunately, due to timing, we did not perform any overclocking to see the effect it has on power. There was one number in the review materials we received that will likely be checked with our other Purch colleagues: one motherboard vendor quoted the power consumption of the Core i9-7980XE, when overclocked to 4.4 GHz, will reach over 500W. I think someone wants IBM’s record. It also means that the choice of CPU cooler is an important factor in all of this: very few off-the-shelf solutions will happily deal with 300W properly, let alone 500W. These processors are unlikely to bring about a boom in custom liquid cooling loops, however for the professionals that want all the cores and also peak single thread performance, start looking at pre-built overclocked systems that emphasize a massive amount of cooling capability.

A Quick Run on Efficiency

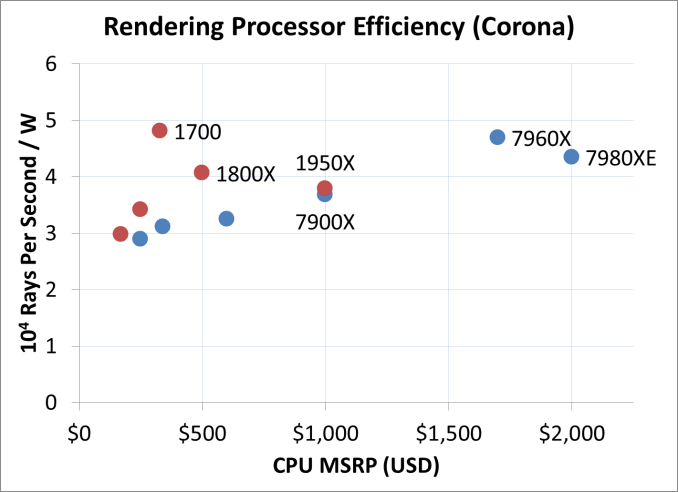

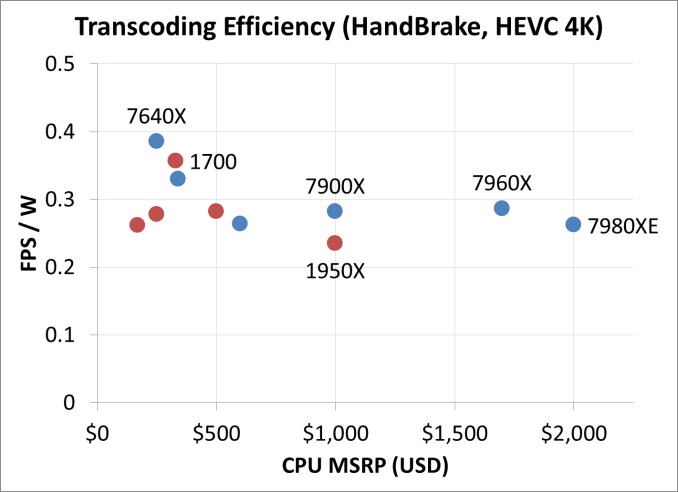

Some of our readers have requested a look into some efficiency numbers. We’re still in the process of producing a good way to represent this data, and take power numbers directly during the benchmark to get a full accurate reading. In the meantime, we’re going to take a benchmark we know hammers every thread of every CPU and put that against our load power readings.

First up is Corona. We take the benchmark result and divide by the load power, to get the efficiency value. This value is then reduced by a constant factor to provide a single digit number.
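In code form, the efficiency value described above might look like the following sketch. The function name `efficiency`, the `scale` parameter, and the example score are placeholders for illustration, not values from our results.

```python
def efficiency(score, load_power_w, scale=1.0):
    """Benchmark score per watt, divided by a constant scale factor."""
    if load_power_w <= 0:
        raise ValueError("load power must be positive")
    return score / load_power_w / scale


# Hypothetical benchmark score, used only to show the calculation.
placeholder_score = 1_000_000

# 163W is the Core i9-7960X load power quoted earlier; scale is an arbitrary constant.
print(efficiency(placeholder_score, 163, scale=1000))
```

Because the scale factor is the same for every chip, it changes how the numbers read without changing their ranking.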

In a rendering task like Corona, where all the threads are hammered all the time, both the Skylake-X parts out-perform Threadripper for power efficiency, although not by twice as much. Interestingly the results show that as we reduce the clocks on TR, the 1700 comes out on top for pure efficiency in this test.

HandBrake’s HEVC efficiency with large frames actually peaks with the Core i5 here, with the 1700 not far behind. All the Skylake-X processors out-perform Threadripper on efficiency.

152 Comments


mapesdhs - Tuesday, September 26, 2017

In that case, using Intel's MO, TR would have 68. What Intel is doing here is very misleading.

iwod - Monday, September 25, 2017

If we factor in the price of the whole system, rather then just CPU, ( AMD's MB tends to be cheaper ), then AMD is doing pretty well here. I am looking forward to next years 12nm Zen+.

peevee - Monday, September 25, 2017

From the whole line, only 7820X makes sense from price/performance standpoint.

boogerlad - Monday, September 25, 2017

Can an IPC comparison be done between this and Skylake-s? Skylake-x LCC lost in some cases to skylake, but is it due to lack of l3 cache or is it because the l3 cache is slower?

IGTrading - Monday, September 25, 2017

There will never be an IPC comparison of Intel's new processors, because all it would do is showcase how Intel's IPC actually went down from Broadwell and further down from KabyLake.

Intel's IPC is a downtrend affair and this is not really good for click and internet traffic.

Even worse : it would probably upset Intel's PR and that website will surely not be receiving any early review samples.

rocky12345 - Monday, September 25, 2017

Great review thank you. This is how a proper review is done. Those benchmarks we seen of the 18 core i9 last week were a complete joke since the guy had the chip over clocked to 4.2GHz on all core which really inflated the scores vs a stock Threadripper 16/32 CPU. Which was very unrealistic from a cooling stand point for the end users.

This review had stock for stock and we got to see how both CPU camps performed out of the box states. I was a bit surprised the mighty 18 core CPU did not win more of the benches and when it did it was not by very much most of the time. So a 1K CPU vs a 2K CPU and the mighty 18 core did not perform like it was worth 1K more than the AMD 1950x or the 1920x for that matter. Yes the mighty i9 was a bit faster but not $1000 more faster that is for sure.

Notmyusualid - Thursday, September 28, 2017

I too am interested to see 'out of the box performance' also.

But if you think ANYONE would buy this and not overclock - you'd have to be out of your mind.

There are people out there running 4.5GHz on all cores, if you look for it.

And what is with all this 'unrealistic cooling' I keep hearing about? You fit the cooling that fits your CPU. My 14C/28T CPU runs 162W 24/7 running BOINC, and is attached to a 480mm 4-fan all copper radiator, and hand on my heart, I don't think has ever exceeded 42C, and sits at 38C mostly.

If I had this 7980XE, all I'd have to do is increase pump speed I expect.

wiyosaya - Monday, September 25, 2017

Personally, I think the comments about people that spend $10K on licenses having the money to go for the $2K part are not necessarily correct. Companies will spend that much on a license because they really do not have any other options. The high end Intel part in some benchmarks gets 30 to may be 50 percent more performance on a select few benchmarks. I am not going to debate that that kind of performance improvement is significant even though it is limited to a few benchmarks; however, to me that kind of increased performance comes at an extreme price premium, and companies that do their research on the capabilities of each platform vs price are not, IMO, likely to throw away money on a part just for bragging rights. IMO, a better place to spend that extra money would be on RAM.

HStewart - Monday, September 25, 2017

In my last job, they spent over $100k for software version system.

In workstation/server world they are looking for reliability, this typically means Xeon.

Gaming computers are different, usually kids want them and have less money, also they are always need to latest and greatest and not caring about reliability - new Graphics card comes out they replace it. AMD is focusing on that market - which includes Xbox One and PS 4

For me I looking for something I depend on it and know it will be around for a while. Not something that slap multiple dies together to claim their bragging rights for more core.

Competition is good, because it keeps Intel on it feat, I think if AMD did not purchase ATI they would be no competition for Intel at all in x86 market. But it not smart also - would anybody be serious about placing AMD Graphics Card on Intel CPU.

wolfemane - Tuesday, September 26, 2017

Hate to burst your foreign bubble but companies are cheap in terms of staying within budgets. Specially up and coming corporations. I'll use the company I work for as an example. Fairly large print shop with 5 locations along the US West coast that's been in existence since the early 70's. About 400 employees in total. Server, pcs, and general hardware only sees an upgrade cycle once every 8 years (not all at once, it's spread out). Computer hardware is a big deal in this industry, and the head of IT for my company Has done pretty well with this kind of hardware life cycle. First off, macs rule here for preprocessing, we will never see a Windows based pc for anything more than accessing the Internet . But when it comes to our servers, it's running some very old xeons.

As soon as the new fiscal year starts, we are moving to an epyc based server farm. They've already set up and established their offsite client side servers with epyc servers and IT absolutely loves them.

But why did I bring up macs? The company has a set budget for IT and this and the next fiscal year had budget for company wide upgrades. By saving money on the back end we were able to purchase top end graphic stations for all 5 locations (something like 30 new machines). Something they wouldn't have been able to do to get the same layout with Intel. We are very much looking forward to our new servers next year.

I'd say AMD is doing more than keeping Intel on their feet, Intel got a swift kick in the a$$ this year and are scrambling.