Retesting AMD Ryzen Threadripper’s Game Mode: Halving Cores for More Performance

by Ian Cutress on August 17, 2017 12:01 PM EST

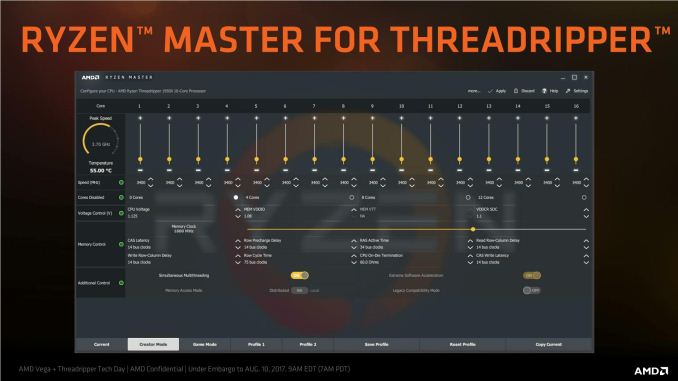

For the launch of AMD’s Ryzen Threadripper processors, one of the features being advertised was Game Mode. This was a special profile under the updated Ryzen Master software that was designed to give the Threadripper CPU more performance in gaming, at the expense of peak performance in hard CPU tasks. AMD’s goal, as described to us, was to enable the user to have a choice: a CPU that can be fit for both CPU tasks and for gaming at the flick of a switch (and a reboot) by disabling half of the chip.

Initially, we interpreted this via one of AMD’s slides as half of the threads (simultaneous multi-threading off), as per the exact wording. However, in other places AMD had stated that it actually disables half the cores: AMD returned to us and said it was actually disabling one of the two active dies in the Threadripper processor. We swallowed our pride and set about retesting the effect of Game Mode.

A Rose By Any Other Name

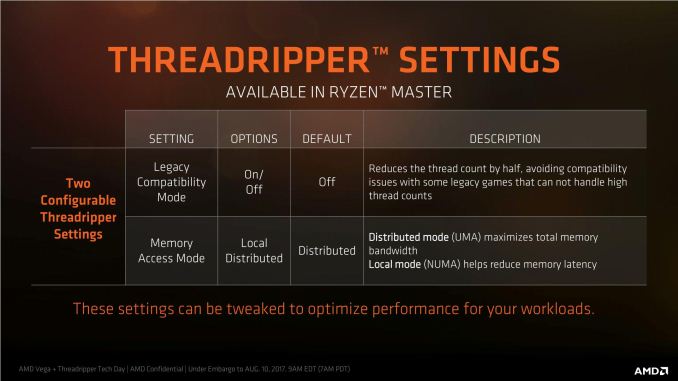

It’s not very often we have to retract some of our content at AnandTech – research is paramount. However, in this instance a couple of things led to confusion. The first was an assumption: in the original piece, we had assumed that AMD was making Game Mode available through both the BIOS and through the Ryzen Master software. The second was communication: at the pre-briefing at SIGGRAPH, AMD had described Game Mode (and specifically, the Legacy Compatibility Mode switch it uses) as having half the threads, but showed in diagrams that it was half the cores.

Based on the wording, we had interpreted this as the equivalent of SMT being disabled, and adjusted the BIOS as such. After our review went live, AMD published more details and also reached out to inform us of the error: where we had tested the part of Game Mode that deals with legacy core counts, we had disabled SMT rather than disabling a die, and made the 16C/32T part into a 16C/16T system rather than an 8C/16T system. We were informed that the settings that deal with this feature are more complex than simply SMT being disabled, and as such the feature was being offered primarily through Ryzen Master.

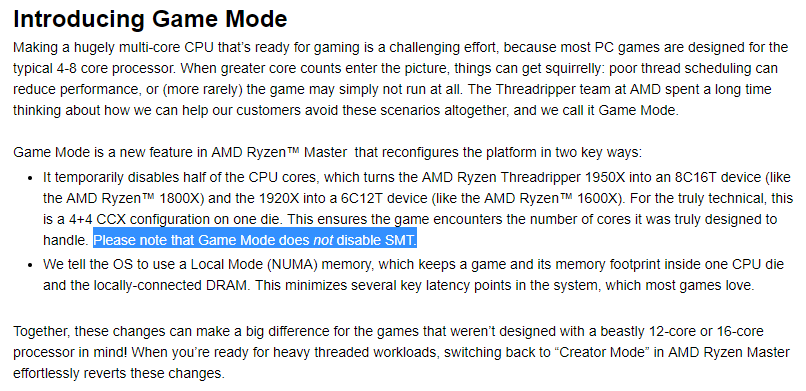

From AMD's Gaming Blog. Emphasis ours.

So for this review, we’re going to set the record straight, and test Threadripper in its Game Mode 8C/16T version. The previous review will be updated appropriately.

So What Is Game Mode?

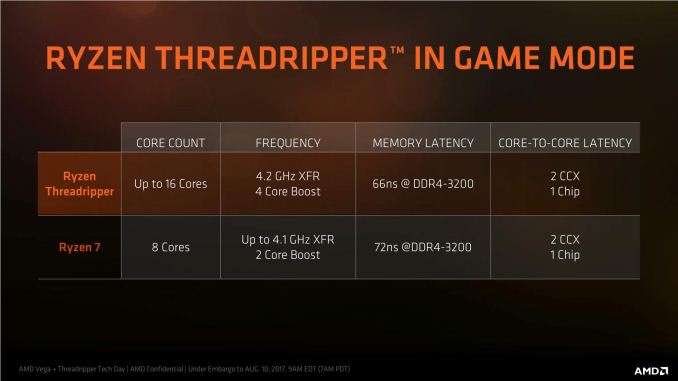

For Ryzen Threadripper, AMD has defined two modes of operation depending on the use case. The first is Creator Mode, which is enabled by default. This enables full cores, full threads, and gives the maximum available bandwidth across the two active Threadripper silicon dies in the package, at the expense of some potential peak latency. In our original review, we measured the performance of Creator Mode in our benchmarks as the default setting, but also looked into the memory latency.

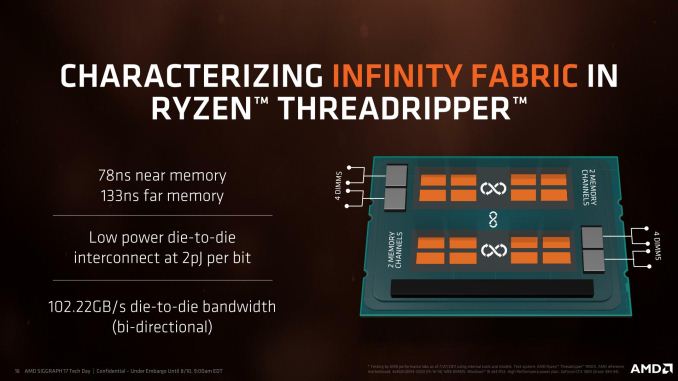

Each die can communicate with all four memory channels, but is only directly connected to two of them. Depending on where the data in DRAM is located, a core may have to search in near memory (the two channels closest) or far memory (the two channels over). This is commonly referred to as a non-uniform memory architecture (NUMA). In a uniform memory access (UMA) system, such as Creator Mode, the system sees no difference between near memory and far memory, citing a single latency value for both which is typically the average between the near latency and the far latency. At DDR4-2400, we recorded this as 108 nanoseconds.

Game Mode does two things over Creator Mode. First, it changes the memory from UMA to NUMA, so the system can distinguish between near and far memory. At DDR4-2400, that 108ns ‘average’ latency becomes 79ns for near memory and 136ns for far memory (as per our testing). The system will use up all available near memory first, before moving to the higher latency far memory.

Second, Game Mode disables the cores in one of the silicon dies. This isn’t a full shutdown of the 8-core Zeppelin die, just the cores. The PCIe lanes, the DRAM channels and the various IO are still active, but the cores themselves are power gated such that the system does not use them or migrate threads to them. In essence, the 16C/32T processor becomes 8C/16T, but with quad-channel memory and 60 PCIe lanes still: the 1950X becomes an uber 1800X, and the 1920X becomes an uber 1600X. The act of disabling dies is called ‘Legacy Compatibility Mode’. It ensures that all active cores have access to near memory, at the expense of immediate bandwidth, and it also enables games that cannot handle more than 20 cores (some legacy titles) to run smoothly.

The core count on the left is the absolute core count, not the core count in Game Mode. Which is confusing.

Some users might see paying $999 for a processor and then disabling almost half of it as a major frustration (insert something about Intel integrated graphics). AMD’s argument is that the CPU is still good for gaming, and can offer a better gaming experience when given the choice. However, consider the mantra surrounding these big processors and gaming adaptability: the ability to stream, transcode and game at the same time. In this mega-tasking (Intel’s term) scenario, a beefy CPU is expected to help even though there will be some losses in game performance. Moving down to only 8 cores is likely to make this worse, and the only situation Game Mode assists is for a user who purely wants a gaming machine but with quad-channel memory and all the PCIe lanes. There’s also a frequency argument – in a dual die configuration, active threads can be positioned at thermally beneficial points of the design to ensure the maximum frequency. Again, AMD reiterates that it offers choice, and users who want to stick with all or half the cores are free to do so, as this change in settings would have been available in BIOS even if AMD did not give a quick button to it.

As always, the proof is in the pudding. If there’s a significant advantage to gaming, then Game Mode will be a feather in AMD’s cap.

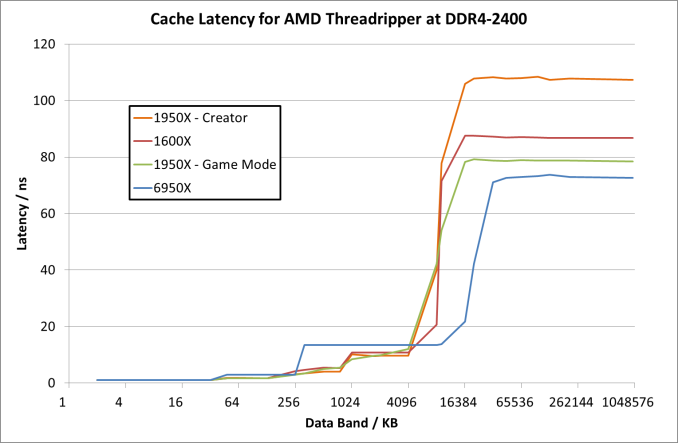

With regard to how the memory and memory latency operate, Game Mode still incorporates NUMA, ensuring near memory is used first. The memory latency results are still the same as we tested before:

For the 1950X in the two modes, the results are essentially equal until we hit 8MB, which is the L3 cache limit per CCX. After this, the core bounces out to main memory, where Game Mode sits around 79ns when it probes near memory while Creator Mode is at 108ns average. By comparison the Ryzen 5 1600X seems to have a lower latency at 8MB (20ns vs 41ns), and then sits between the Creator and Game modes at 87ns. It would appear that the bigger downside of Creator Mode here is that main memory accesses are much slower than on normal Ryzen or in Game Mode.
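As a quick sanity check on the DDR4-2400 figures quoted earlier (79ns near, 136ns far, 108ns in Creator Mode), the single UMA value does land close to the midpoint of the two NUMA values. A minimal Python sketch of that arithmetic follows; the variable names are ours, and the even near/far split is an assumption for illustration rather than something we measured.

```python
# DDR4-2400 latencies from our testing, in nanoseconds.
near_ns = 79    # Game Mode (NUMA), near memory
far_ns = 136    # Game Mode (NUMA), far memory
uma_ns = 108    # Creator Mode (UMA), single reported value

# Assumption: accesses are split evenly between near and far memory,
# so the expected latency is the simple midpoint of the two.
midpoint_ns = (near_ns + far_ns) / 2

# How much slower a far-memory access is than a near-memory access.
far_penalty = far_ns / near_ns

print(f"midpoint: {midpoint_ns:.1f} ns (measured UMA: {uma_ns} ns)")
print(f"far/near ratio: {far_penalty:.2f}x")
```

The midpoint is within a nanosecond of the measured Creator Mode value, which is consistent with UMA reporting an average of the near and far latencies.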

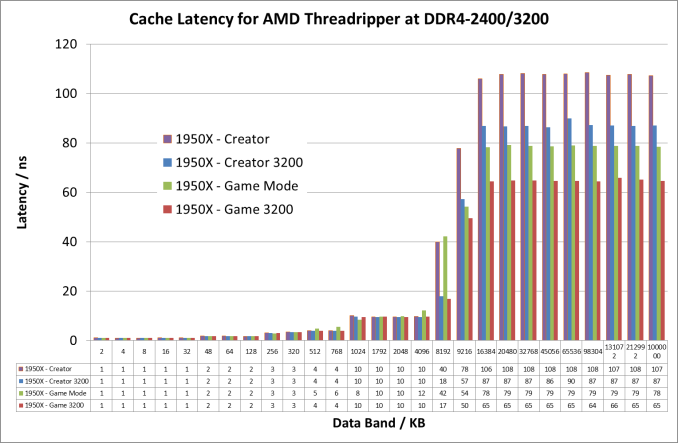

If we crank up the DRAM frequency to DDR4-3200 for the Threadripper 1950X, the numbers change a fair bit:

Up until the 8MB boundary where L3 hits main memory, everything is pretty much equal. At 8MB however, the latency at DDR4-2400 is 41ns compared to 18ns at DDR4-3200. Out in full main memory, a pattern emerges: Creator Mode at DDR4-3200 is close to Game Mode at DDR4-2400 (87ns vs 79ns), but taking Game Mode to DDR4-3200 drops the latency down to 65ns.
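To put the memory speed change in relative terms, the short Python sketch below works out the percentage drop in main-memory latency for each mode when moving from DDR4-2400 to DDR4-3200. It uses only the four values quoted in this piece; the dictionary layout and the `reduction_pct` helper are our own illustration.

```python
# Main-memory latencies quoted in the text, in nanoseconds.
latency_ns = {
    "Creator Mode (UMA)": {"DDR4-2400": 108, "DDR4-3200": 87},
    "Game Mode (NUMA, near)": {"DDR4-2400": 79, "DDR4-3200": 65},
}


def reduction_pct(slow_ns, fast_ns):
    """Percentage by which latency falls going from slow_ns to fast_ns."""
    return (slow_ns - fast_ns) / slow_ns * 100


for mode, values in latency_ns.items():
    pct = reduction_pct(values["DDR4-2400"], values["DDR4-3200"])
    print(f"{mode}: {pct:.1f}% lower latency at DDR4-3200")
```

On these numbers both modes gain a similar proportion from the faster memory, a little under a fifth in each case.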

Testing, Testing, One Two One Two

In our last review, we put the CPU in NUMA mode and disabled SMT. Both dies were still active, so each thread had the full resources of a core, and the cores on each die would communicate with their nearest memory. However, there was still potential for die-to-die communication and for far-memory access.

In this new testing, we use Ryzen Master to enable Game Mode, which enables NUMA and disables the cores in one of the silicon dies, giving 8 cores and 16 threads.
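To keep the three configurations straight, here is a minimal Python sketch of the core and thread arithmetic for the 1950X. The `Config` class and its field names are our own illustration, not anything exposed by AMD or Ryzen Master. Note that `dies_with_cores` counts only dies whose cores are enabled, since in Game Mode the second die's memory channels and PCIe lanes stay active.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Config:
    """Hypothetical model of a Threadripper 1950X operating mode."""
    name: str
    dies_with_cores: int    # dies whose cores are enabled
    smt: bool               # simultaneous multi-threading on or off
    memory: str             # "UMA" or "NUMA"
    cores_per_die: int = 8  # one 8-core Zeppelin die

    @property
    def cores(self) -> int:
        return self.dies_with_cores * self.cores_per_die

    @property
    def threads(self) -> int:
        # SMT exposes two threads per core; without it, one.
        return self.cores * (2 if self.smt else 1)

    @property
    def label(self) -> str:
        return f"{self.cores}C/{self.threads}T"


configs = [
    Config("Creator Mode (default)", dies_with_cores=2, smt=True, memory="UMA"),
    Config("Previous review (SMT off)", dies_with_cores=2, smt=False, memory="NUMA"),
    Config("Game Mode", dies_with_cores=1, smt=True, memory="NUMA"),
]

for cfg in configs:
    print(f"{cfg.name}: {cfg.label}, {cfg.memory}")
```

The last two entries expose the same number of threads, but only Game Mode keeps every active core on a single die.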

104 Comments


Lieutenant Tofu - Friday, August 18, 2017 - link

"... we get an interesting metric where the 1950X still comes out on top due to the core counts, but because the 1920X has fewer cores per CCX, it actually falls behind the 1950X in Game Mode and the 1800X despite having more cores. "Would you mind elaborating on this? How does the proportion of cores per CCX affect performance?

JasonMZW20 - Sunday, August 20, 2017 - link

The only thing I can think of is CCX cache locality. Given a choice, you want more cores per CCX to keep data on that CCX rather than using cross-communication between CCXes through L2/L3. Once you have to communicate with the other CCX, you automatically incur a higher average latency penalty, which in some cases, is also a performance penalty (esp. if data keeps moving between the two CCXes).

Lieutenant Tofu - Friday, August 18, 2017 - link

On the compile test (prev page): "... we get an interesting metric where the 1950X still comes out on top due to the core counts, but because the 1920X has fewer cores per CCX, it actually falls behind the 1950X in Game Mode and the 1800X despite having more cores."

Would you mind elaborating on this? How does the proportion of cores per CCX affect performance?

rhoades-brown - Friday, August 18, 2017 - link

This gaming mode intrigues me greatly- the article states that the PCIe lanes and memory controller is still enabled, but the cores are turned off as shown in this diagram: http://images.anandtech.com/doci/11697/kevin_lensi...

If these are two complete processors on one package (as the diagrams and photos show), what impact does having gaming mode enabled and a PCIe device connected to the PCIe controller on the 'inactive' side? The NUMA memory latency seems to be about 1.35 surely this must affect the PCIe devices too- further how much bandwidth is there between the two processors? Opteron processors use HyperTransport for communication, do these do the same?

I work in the server world and am used to NUMA systems- for two separate processor packages in a 2 socket system, cross-node memory access times is normally 1.6x that of local memory access. For ESXi hosts, we also have particular PCIe slots that we place hardware in, to ensure that the different controllers are spread between PCIe controllers ensuring the highest level of availability due to hardware issue and peek performance (we are talking HBAs, Ethernet adapters, CNAs here). Although, hardware reliability is not a problem in the same way in a Threadripper environment, performance could well be.

I am intrigued to understand how this works in practice. I am considering building one of these systems out for my own home server environment- I yet to see any virtualisation benchmarks.

versesuvius - Friday, August 18, 2017 - link

So, what is a "Game"? Uses DirectX? Makes people act stupidly? Is not capable of using what there is? Makes available hardware a hindrance to smooth computing? Looks like a lot of other apps (that are not "Game") can benefit from this "Gaming Mode".

msroadkill612 - Friday, August 18, 2017 - link

A shame no Vega GPU in the mix :( It may have revealed interesting synergies between sibling ryzen & vega processors as a bonus.

BrokenCrayons - Friday, August 18, 2017 - link

The only interesting synergy you'd get from a Threadripper + Vega setup is an absurdly high electrical demand and an angry power supply. Nothing makes less sense than throwing a 180W CPU plus a 295W GPU at a job that can be done with a 95W CPU and a 180W GPU just as well in all but a few many-threaded workloads (nevermind the cost savings on the CPU for buying Ryzen 7 or a Core i7).

versesuvius - Friday, August 18, 2017 - link

I am not sure if I am getting it right, but apparently if the L3 cache on the first Zen core is full and the core has to go to the second core's L3 cache there is an increase in latency. But if the second core is power gated and does not take any calls, then the increase in latency is reduced. Is it logical to say that the first core has to clear it with the second core before it accesses the second core's cache and if the second core is out it does not have to and that checking with the second core does not take place and so latency is reduced? Moving on if the data is not in the second core's cache then the first core has to go to DRAM accessing which supposedly does not need clearance from the second core. Or does it always need to check first with the second core and then access even the DRAM?

BlackenedPies - Friday, August 18, 2017 - link

Would Threadripper be bottlenecked by dual channel RAM due to uneven memory access between dies? Is the optimal 2 DIMM setup one per die channel or two on one die?

Fisko - Saturday, August 19, 2017 - link

Anyone working on daily basis just to view and comment pdf won't use acrobat DC. Exception can be using OCR for pdf. Pdfxchange viewer uses more threads and opens pdf files much faster than Adobe DC. I regularly open files from 25 to 80 mb of CAD pdf files and difference is enormous.