Retesting AMD Ryzen Threadripper’s Game Mode: Halving Cores for More Performance

by Ian Cutress on August 17, 2017 12:01 PM EST

Conclusions

In this mini-test, we tested Game Mode as AMD originally envisioned it. Game Mode sits as an extra option in the AMD Ryzen Master software, alongside Creator Mode, which is enabled by default. Game Mode does two things. Firstly, it adjusts the memory configuration: rather than seeing the DRAM as one uniform block of memory with an ‘average’ latency, the system splits the memory into near memory, closest to the active CPU, and far memory, for DRAM connected via the other silicon die. Secondly, it disables the cores on one of the silicon dies, but retains the PCIe lanes, IO, and DRAM support. This disables cross-die thread migration, offers faster memory for applications that need it, and aims to lower the latency of the cores used for gaming by simplifying the layout.

The downside of Game Mode is raw performance when peak CPU is needed: by disabling half the cores, any throughput-limited task loses half of its throughput resources. The argument here is that Game Mode is designed for games, which rarely use more than 8 cores, while optimizing the memory latency and PCIe connectivity.

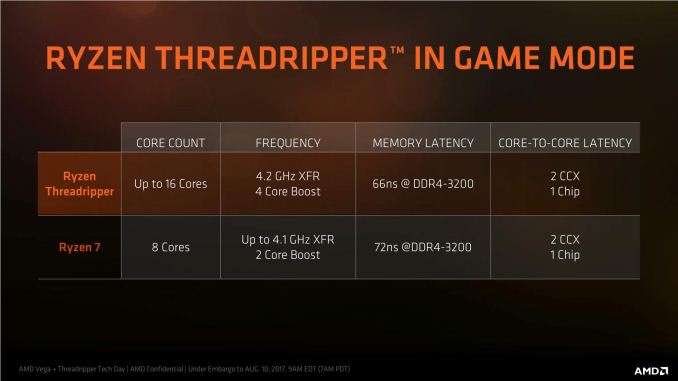

A simpler way to imagine Game Mode is this: enabling Game Mode brings the top tier Threadripper 1950X down to the level of a Ryzen 7 processor for core count at around the same frequency, but still gets the benefits of quad channel memory and all 60 PCIe lanes for add-in cards. In this mode, the CPU will preferentially use the lower latency memory available first, attempting to ensure a better immediate experience. You end up with an uber-Ryzen 7 for connectivity.

AMD states that a Threadripper in Game Mode will have lower latency than a Ryzen 7, as well as a higher boost and larger boost window (up to 4 cores rather than 2).

In our testing, we did the full gamut of CPU and CPU Gaming tests, at 1080p and 4K with Game Mode enabled.

The CPU results were perhaps to be expected: single-threaded tests with Game Mode enabled performed similarly to the Ryzen 7 and the 1950X, but multithreaded results were almost halved compared to the 1950X, and slightly slower than the Ryzen 7 1800X due to the lower all-core turbo.

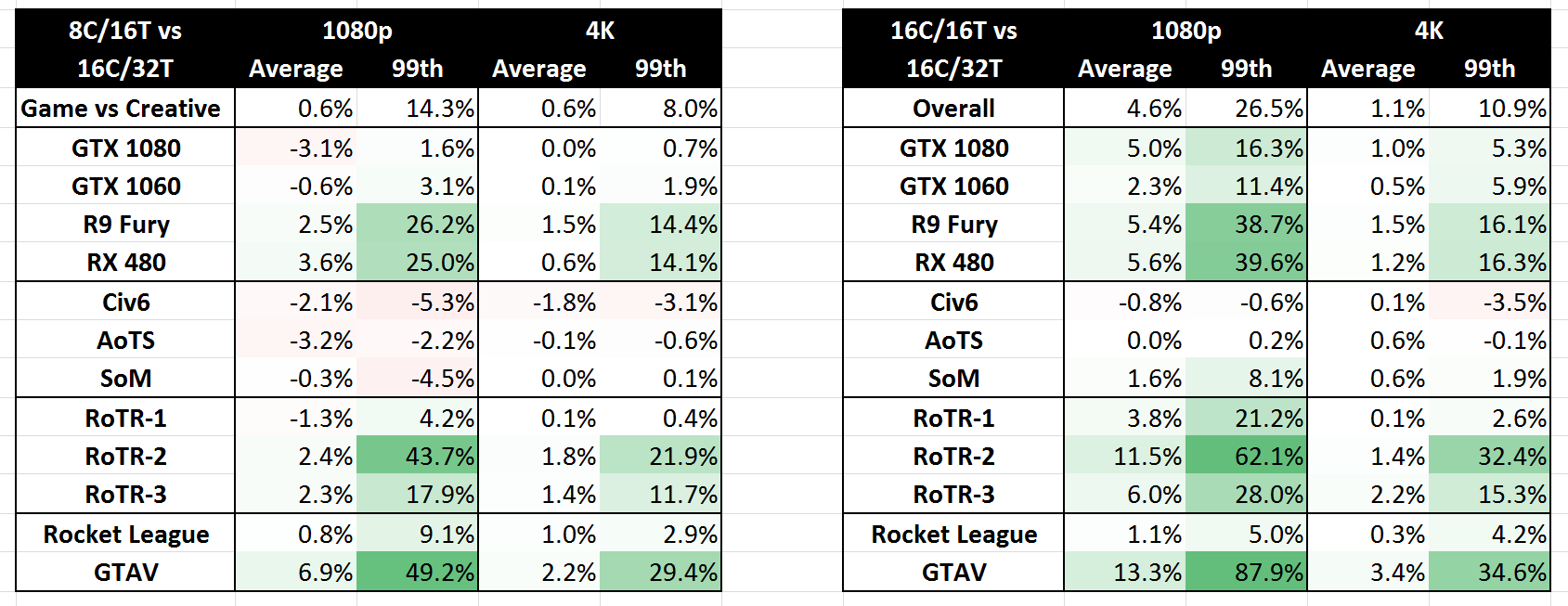

The CPU gaming tests were instead a mixed bunch. Any performance difference from Game Mode over Creator Mode was highly dependent on the game, on the graphics card, and on the resolution. Overall, the results could be placed into buckets:

- Noted minor losses in Civilization 6, Ashes of the Singularity and Shadow of Mordor

- Minor loss to minor gain on GTX 1080 and GTX 1060 overall in all games

- Minor gain for AMD cards on Average Frame Rates, particularly RoTR and GTA

- Sizeable (10-25%) gain for AMD cards on 99th Percentile Frame Rates, particularly RoTR and GTA

- Gains are more noticeable for 1080p gaming than 4K gaming

- Most gains across the board are on 99th Percentile data
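To illustrate why average and 99th percentile frame rates can move independently, here is a minimal sketch of how the two figures can be derived from per-frame render times. The nearest-rank percentile method and the frame times below are assumptions made for illustration; they are not the benchmark data or necessarily the exact method used in this review.

```python
def percentile_nearest_rank(values, pct):
    """Return the pct-th percentile (integer pct) using the nearest-rank method."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    # Ceiling of pct * n / 100, done in integers to avoid float rounding
    rank = (pct * len(ordered) + 99) // 100
    return ordered[max(rank, 1) - 1]


def frame_rate_summary(frame_times_ms):
    """Return (average FPS, 99th percentile FPS) from frame times in milliseconds."""
    average_fps = 1000 * len(frame_times_ms) / sum(frame_times_ms)
    # The 99th percentile frame time sits in the slow tail; as a frame rate,
    # it is the rate that roughly 99% of frames meet or beat.
    p99_fps = 1000 / percentile_nearest_rank(frame_times_ms, 99)
    return average_fps, p99_fps


# Hypothetical runs: both take 1000 ms to render 100 frames
smooth = [10.0] * 100
spiky = [9.0] * 98 + [59.0] * 2

for name, run in (("smooth", smooth), ("spiky", spiky)):
    avg, p99 = frame_rate_summary(run)
    print(f"{name}: average {avg:.1f} FPS, 99th percentile {p99:.1f} FPS")
```

Both hypothetical runs average 100 FPS, but the run with two long frames has a far lower 99th percentile figure. A change that trims latency spikes, as Game Mode aims to do, would be expected to show up in the percentile data before it shows up in the average.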

Which leads to the following conclusions:

- No real benefit on GTX 1080 or GTX 1060, stay in Creator Mode

- Benefits for Rise of the Tomb Raider, Rocket League and GTA

- Benefit more at 1080p, but still gains at 4K

The pros and cons of enabling Game Mode are meant to be along the lines of faster and lower latency gaming, at the expense of raw compute power. The fact that it requires a reboot to switch between Creator Mode and Game Mode is a major drawback: if it were a simple in-OS switch, then it could be enabled for specific titles on specific graphics cards just before the game is launched. This will not ever be possible, due to how PCs decide what resources are available when. That being said, however, perhaps AMD has missed a trick here.

Could AMD have Implemented Game Mode Differently?

By virtue of misinterpreting AMD's slide deck, and testing a bunch of data with SMT disabled instead, we have an interesting avenue as to how users might do something akin to Game Mode, but not specifically AMD's Game Mode. This also raises the question of whether AMD implemented and labeled the Game Mode environment in the right way.

By enabling NUMA and disabling SMT, the 16C/32T chip moves down to 16C/16T. It still has 16 full cores, but has to deal with communication across the two eight-core silicon dies. Nonetheless it still satisfies the need for cores to access the lowest latency memory near to that specific core, as well as enabling certain games that need fewer total threads to actually work. It should, by the description alone, enable the 'legacy' part of legacy gaming.
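The core and thread counts for the configurations discussed here follow from simple arithmetic, sketched below. The `Mode` class and its field names are hypothetical and exist only for this illustration; the counts themselves come from the descriptions above.

```python
from dataclasses import dataclass

CORES_PER_DIE = 8  # the 1950X uses two eight-core silicon dies


@dataclass(frozen=True)
class Mode:
    name: str
    active_dies: int  # dies whose cores are enabled (1 or 2)
    smt: bool         # two threads per core when True
    memory: str       # "UMA" or "NUMA"

    @property
    def cores(self):
        return self.active_dies * CORES_PER_DIE

    @property
    def threads(self):
        return self.cores * (2 if self.smt else 1)

    def label(self):
        return f"{self.cores}C/{self.threads}T {self.memory}"


MODES = [
    Mode("Creator Mode", active_dies=2, smt=True, memory="UMA"),
    Mode("Game Mode", active_dies=1, smt=True, memory="NUMA"),
    Mode("NUMA with SMT off", active_dies=2, smt=False, memory="NUMA"),
]

for mode in MODES:
    print(f"{mode.name}: {mode.label()}")
```

Game Mode and the SMT-off configuration both expose 16 threads to the OS, but the latter keeps all 16 physical cores, which is why the two behave differently in throughput tests.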

The underlying performance analysis between the two modes becomes this:

When in 16C/16T mode, performance in CPU benchmarks was higher than in 8C/16T mode.

When in 16C/16T mode, performance in CPU gaming benchmarks was higher than in 8C/16T mode.

Some of the conclusions drawn from AMD's defined 8C/16T Game Mode for CPU gaming actually change in 16C/16T mode: games that saw slight regressions with 8 cores became neutral at 16C or even had slight improvements, particularly at 1080p.

One of the main drawbacks of the 8C/16T mode is that it requires a full restart in order to enable it. Disabling SMT could theoretically be done at the OS level before certain games come into play. If the OS is able to determine which core IDs are associated with standard threads and which ones would be hyperthreads, it is perhaps possible for the OS just to refuse to dispatch threads in flight to the hyperthreads, allowing only one thread per core. (There's a small matter of statically shared resources to deal with as well.) The mobile world deals with thread migration between fast cores and slow cores every day, and some cores can be hotplug disabled on the fly. One could postulate that Windows could do something similar with the equivalent of hyperthreads.
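A rough user-level approximation of the "one thread per core" idea is a CPU affinity mask that leaves out one logical processor from each core. The sketch below only builds such a mask. The function names are hypothetical, and the topology is an assumption: it supposes that logical CPUs 2k and 2k+1 are the two SMT threads of core k, which is a common enumeration but should be checked on a real system.

```python
def one_thread_per_core(logical_to_core):
    """Pick the lowest-numbered logical CPU on each physical core.

    logical_to_core maps a logical CPU id to its physical core id.
    """
    chosen = {}
    for logical in sorted(logical_to_core):
        # Keep only the first logical CPU seen for each core
        chosen.setdefault(logical_to_core[logical], logical)
    return sorted(chosen.values())


def affinity_mask(logical_cpus):
    """Build a bitmask with one bit set per allowed logical CPU."""
    mask = 0
    for cpu in logical_cpus:
        mask |= 1 << cpu
    return mask


# Assumed 16C/32T layout: SMT siblings are adjacent logical CPUs
topology = {cpu: cpu // 2 for cpu in range(32)}

primary = one_thread_per_core(topology)
mask = affinity_mask(primary)

print(primary)    # even-numbered logical CPUs 0, 2, ..., 30
print(hex(mask))  # 0x55555555
```

On Linux, a list like `primary` can be passed to `os.sched_setaffinity(0, primary)` to restrict the current process, and on Windows a hexadecimal mask of this form is what `start /affinity` accepts. This restricts a single process only: it does not stop other software from using the sibling threads, and it does nothing about statically shared resources, so it is not equivalent to disabling SMT in firmware.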

Would this issue need to be solved by Windows, or by AMD? I suspect a combination of both, really.

Update:

Robert Hallock, on AMD's Threadripper webcast, has stated that the Windows scheduler is not capable of specifically zeroing out a full die to have the same effect. The UMA/NUMA implementation can be managed with the Windows scheduler to assign threads to where the data is (or assign data to where the threads are), but fully disabling a die requires a restart.

104 Comments


MrSpadge - Thursday, August 17, 2017 - link

It's definitely good that reviewers test the game mode and the others, so that we know what to expect from them. If they only tested creator mode the internets would be full of people shouting foul play to bash AMD.

deathBOB - Thursday, August 17, 2017 - link

Ian - why not just enable NUMA and leave SMT on?

Ian Cutress - Thursday, August 17, 2017 - link

The fourth corner of testing :)

lelitu - Thursday, August 17, 2017 - link

Looking at setting up something for a home VM host, and linux development workstation makes NUMA with SMT the most useful set of benchmarks for my usecase.

I'm particularly interested in TR, because it's brought the price of entry low enough that I can actually consider building such a system.

Ratman6161 - Friday, August 18, 2017 - link

ThreadRipper is big bucks for your purposes if I'm reading this correctly. For a home lab sort of environment a lot of cores helps as does a lot of RAM, but you don't necessarily need a boatload of CPU power. For example, in my home ESXi system I've got an FX8350 which VMWare sees as an 8 Core CPU. I've also given it 32 GB of DDR3 RAM (purchased when that was cheap). The 990FX motherboards work great for this since they have plenty of PCIe lanes available. In my case, those are used for an ancient ATI video card I happened to have in a drawer, an LSI x8 RAID card and an x4 Intel dual port gigabit NIC. The RAID card has 4 1 TB desktop drives hooked up to it in a RAID 5.

All of the above can be had pretty cheap these days. I'm thinking of upgrading my storage to 4x2 TB SAS drives - available for $35 each on Amazon...brand new (but old models). The system is running 6 to 7 VM's (Windows Servers mostly) at any given time. But with only two users, I don't run into many cases where more than two VM's are actually doing anything at the same time. Example: Web server and SQL Server serving up a web app.

For this environment, having a storage setup where the VM's are not contending for the disks and also having plenty of RAM seems to make a lot more difference than the CPU.

Of course if you have the bucks and just want to, ThreadRipper would be terrific for this - just way too expensive and overkill for me.

lelitu - Monday, August 21, 2017 - link

That depends a lot on what you want the VMs for. Unfortunately for the sort of performance testing and development I do a VM toaster isn't actually good enough. Each VM needs at least 4 uncontended cores, and 10GB uncontended RAM. Two VMs is the absolute minimum, 3 would be better.

That's not going to fit into anything less than a ryzen 7 minimum, and a Threadripper, *if* it performs as I expect in SMT + NUMA mode would be almost perfect. Unfortunately, you're right, it's a *lot* of coin to drop on something I don't know will actually do what I need well enough.

Thus, I wish there were SMT+NUMA workstation and VM benchmarks here.

JasonMZW20 - Thursday, August 17, 2017 - link

Seems like Game Mode should have bumped up the base clocks to 1800X levels, especially for Nvidia cards using a software scheduler that seems to scale with CPU frequency. AMD's hardware scheduler is apparent in overall FPS stability and being mostly CPU agnostic.

Matching base clocks with 1800X or even 1900X (3.8GHz) might be better on TR for gaming in Game Mode.

lordken - Friday, August 18, 2017 - link

Also for some weird reason that 1800X is much faster with higher fps in civilization and tomb rider?

peevee - Thursday, August 17, 2017 - link

"because the 1920X has fewer cores per CCX, it actually falls behind the 1950X in Game Mode and the 1800X despite having more cores. "

Sorry, but when 12 cores with twice memory bandwidth are compiling slower than 8, you are doing something wrong. Yes, Anandtech, you. I'd seriously investigate. For example, the maximum number of threads were set at 24 or something.

Ian Cutress - Thursday, August 17, 2017 - link

When you have a bank of cores that communicate with each other, and replace it with more cores but uneven communication latencies, it is a difference and it can affect code paths.