The AMD Radeon RX Vega 64 & RX Vega 56 Review: Vega Burning Bright

by Ryan Smith & Nate Oh on August 14, 2017 9:00 AM EST

Rapid Packed Math: Fast FP16 Comes to Consumer Cards (& INT16 Too!)

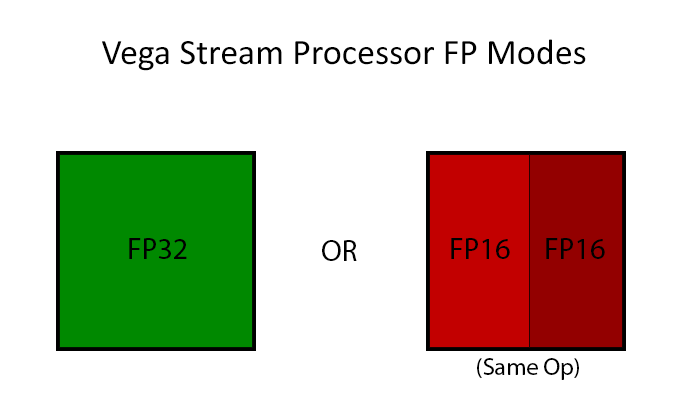

Arguably AMD’s marquee feature from a compute standpoint for Vega is Rapid Packed Math, which is AMD’s name for packing two FP16 operations inside of a single FP32 operation in a vec2 style. This is similar to what NVIDIA has done with their high-end Pascal GP100 GPU (and Tegra X1 SoC), and it allows for potentially massive improvements in FP16 throughput. If a pair of instructions is compatible (and by compatible, vendors usually mean instruction-type identical), then those instructions can be packed together on a single FP32 ALU, increasing the number of lower-precision operations that can be performed in a single clock cycle. This is an extension of AMD’s FP16 support in GCN 3 & GCN 4, where the company supported FP16 data types for the memory/register space savings, but FP16 operations themselves were processed no faster than FP32 operations.
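To make the vec2 idea concrete, here is a minimal sketch in Python that stores two FP16 values in one 32-bit word and applies the same operation to both lanes. This only emulates the data layout in software; the helper names (`pack_half2`, `unpack_half2`, `packed_add`) are made up for illustration and do not correspond to any AMD or NVIDIA API.

```python
import struct
from typing import Tuple

def pack_half2(lo: float, hi: float) -> int:
    """Pack two FP16 values into one 32-bit word (lo in bits 0-15, hi in bits 16-31)."""
    return struct.unpack("<I", struct.pack("<2e", lo, hi))[0]

def unpack_half2(word: int) -> Tuple[float, float]:
    """Split a 32-bit word back into its two FP16 lanes."""
    return struct.unpack("<2e", struct.pack("<I", word))

def packed_add(a: int, b: int) -> int:
    """Emulate one packed operation: the same add applied to both FP16 lanes."""
    a_lo, a_hi = unpack_half2(a)
    b_lo, b_hi = unpack_half2(b)
    # Each lane's sum is rounded back to FP16 when it is repacked.
    return pack_half2(a_lo + b_lo, a_hi + b_hi)

a = pack_half2(1.0, 2.0)
b = pack_half2(0.5, 0.25)
print(hex(a))                          # 0x40003c00
print(unpack_half2(packed_add(a, b)))  # (1.5, 2.25)
```

The point of the sketch is that both lanes must undergo the same operation, which is why only instruction-type identical pairs can be packed.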

The purpose of integrating fast FP16 and INT16 math is power efficiency. Processing data at a higher precision than necessary burns power needlessly, as the extra work required for the increased precision accomplishes nothing of value. In this respect fast FP16 math is another step in GPU designs becoming increasingly min-maxed; the ceiling for GPU performance is power consumption, so the more energy efficient a GPU can be, the more performant it can be.

Taking advantage of this feature, in turn, requires several things. It requires API support and it requires compiler support, but above all it requires code that explicitly asks for FP16 data types. The reason why that matters is two-fold: virtually no existing programs use FP16, and not everything that is FP32 is suitable for FP16. In the compute world especially, precisions are picked for a reason, and compute users can be quite fussy on the matter, which is why fast FP64-capable GPUs are a whole market unto themselves. That said, there are whole categories of compute tasks where the high precision isn’t necessary; deep learning is the poster child right now, and for Vega Instinct AMD is practically banking on it.
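As a rough illustration of why not everything that is FP32 is suitable for FP16, the sketch below rounds a few values to IEEE 754 half precision using Python's standard `struct` module. The helper name `to_fp16` is hypothetical, and the chosen values are only examples of precision and range loss, not data from any real workload.

```python
import struct

def to_fp16(x: float) -> float:
    """Round a Python float to the nearest FP16 value and return it as a float."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

print(to_fp16(0.1))      # 0.0999755859375
print(to_fp16(2049.0))   # 2048.0 (not every integer above 2048 is representable)
print(to_fp16(65504.0))  # 65504.0, the largest finite FP16 value

try:
    to_fp16(70000.0)
except OverflowError:
    # struct refuses to pack values beyond the FP16 range.
    print("70000.0 does not fit in FP16")
```

With roughly three decimal digits of precision and a maximum of 65504, FP16 is fine for things like color values or neural network weights, but it is a poor fit for large coordinates or long accumulations.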

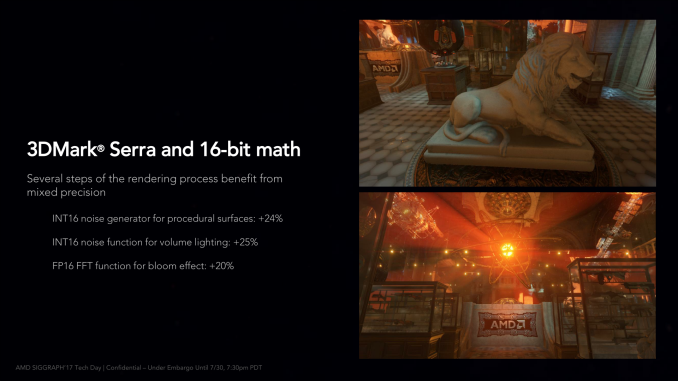

As for gaming, the situation is more complex still. While FP16 operations can be used for games (and in fact are somewhat common in the mobile space), in the PC space they are virtually never used. When PC GPUs made the jump to unified shaders in 2006/2007, the decision was made to do everything at FP32 since that’s what vertex shaders typically required to begin with, and it’s only recently that anyone has bothered to look back. So while there is some long-term potential here for Vega’s fast FP16 math to become relevant for gaming, at the moment it doesn’t do much outside of a couple of benchmarks and some AMD developer relations enhanced software. Vega will, for the present, live and die in the gaming space primarily based on its FP32 performance.

The biggest obstacle for AMD here in the long-term is in fact NVIDIA. NVIDIA also supports native FP16 operations; however, unlike AMD, they restrict it to their dedicated compute GPUs (GP100 & GV100). GP104, by comparison, offers a painful 1/64th native FP16 rate, making it just useful enough for compatibility/development purposes, but not fast enough for real-world use. So for AMD there’s a real risk of developers not bothering with FP16 support when 70% of all GPUs sold similarly don’t support it. It will be an uphill battle, but one that can significantly improve AMD’s performance if they can win it, and even more so if NVIDIA chooses not to budge on their position.

Though overall it’s important to keep in mind here that even in the best case scenario, only some operations in a game are suitable for FP16. So while FP16 execution is twice as fast as FP32 execution on paper specifically for a compute task, the percentage of such calculations in a game will be lower. In AMD’s own slide deck, they illustrate this, pointing out that using 16-bit functions makes specific rendering steps of 3DMark Serra 20-25% faster, and those are just parts of a whole.
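The "parts of a whole" point is essentially Amdahl's law, and a quick back-of-the-envelope sketch shows how quickly the gains dilute. The 30% share of frame time below is a made-up figure for illustration only, and "25% faster" is read here as a 1.25x speedup of the affected passes; neither number comes from AMD's slides beyond the 20-25% range quoted above.

```python
def overall_speedup(fraction: float, local_speedup: float) -> float:
    """Amdahl's law: whole-task speedup when only `fraction` of the time gets faster."""
    return 1.0 / ((1.0 - fraction) + fraction / local_speedup)

# Hypothetical frame: 30% of frame time is spent in passes that run 1.25x faster.
print(round(overall_speedup(0.30, 1.25), 3))  # 1.064

# Even a perfect 2x on that same 30% stays well short of 2x overall.
print(round(overall_speedup(0.30, 2.0), 3))   # 1.176
```

Under these assumed numbers the whole frame gains only about 6%, which is why fast FP16 on its own is unlikely to change the competitive picture in games.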

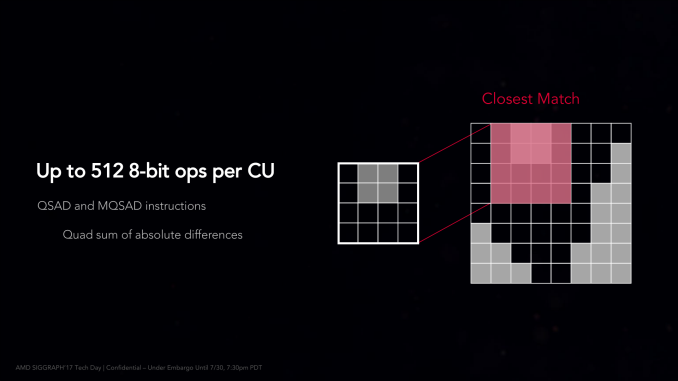

Moving on, AMD is also offering limited native 8-bit support via a pair of specific instructions. On Vega, the Quad Sum of Absolute Differences (QSAD) and its masked variant can be executed in a highly packed form using 8-bit integers. SADs are a rather common image processing operation, and are particularly relevant for AMD’s Instinct efforts since they are used in image recognition (a major deep learning task).
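For readers unfamiliar with the operation, here is a minimal sketch of a single sum of absolute differences over four 8-bit values packed into a 32-bit word. It is only meant to show the arithmetic; it does not attempt to model the exact operand layout of Vega's QSAD instruction or its masked variant, and the function names are hypothetical.

```python
from typing import List

def unpack_u8x4(word: int) -> List[int]:
    """Split a 32-bit word into four unsigned 8-bit lanes, lowest byte first."""
    return [(word >> (8 * i)) & 0xFF for i in range(4)]

def sad_u8x4(a: int, b: int) -> int:
    """Sum of absolute differences over the four 8-bit lanes of two 32-bit words."""
    return sum(abs(x - y) for x, y in zip(unpack_u8x4(a), unpack_u8x4(b)))

a = 0x10203040  # lanes: 0x40, 0x30, 0x20, 0x10
b = 0x11223344  # lanes: 0x44, 0x33, 0x22, 0x11
print(sad_u8x4(a, b))  # 4 + 3 + 2 + 1 = 10
```

A low SAD means two pixel blocks are similar, which is why the operation shows up in block matching and other image comparison tasks.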

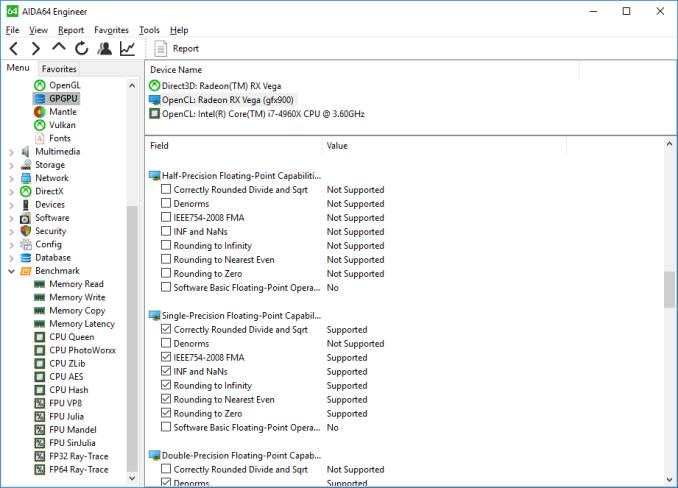

Finally, let’s talk about API support for FP16 operations. The situation isn’t crystal-clear across the board, but for certain types of programs, it’s possible to use native FP16 operations right now.

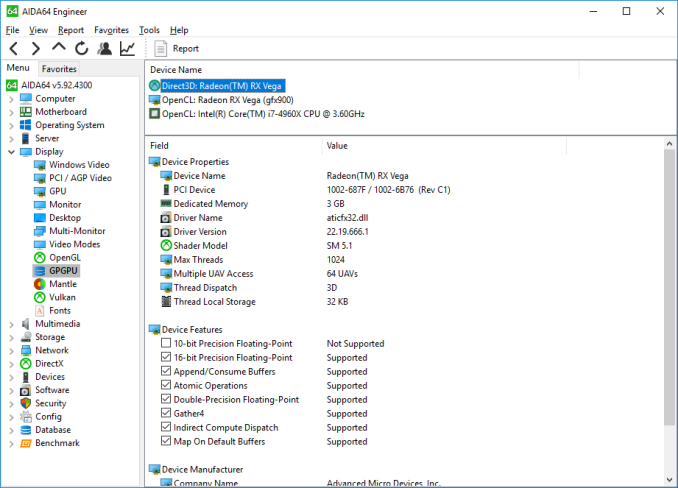

Surprisingly, native FP16 operations are not currently exposed to OpenCL, according to AIDA64. So within a traditional AMD compute context, it doesn’t appear to be possible to use them. This obviously is not planned to remain this way, and while AMD hasn’t been able to offer more details by press time, I expect that they’ll expose FP16 operations under OpenCL (and ROCm) soon enough.

Meanwhile, High Level Shader Model 5.x, which is used in DirectX 11 and 12, does support native FP16 operations. And so does Vulkan, for that matter. So it is possible to use FP16 right now, even in games. Running SiSoftware’s Sandra GP GPU benchmark with a DX compute shader shows a clear performance advantage, albeit not a complete 2x advantage, with the switch to FP16 improving compute throughput by 70%.

However, based on some other testing, I suspect that native FP16 support may only be enabled/working for compute shaders at this time, and not for pixel shaders, in which case AMD may still have some work to do. But for developers, the message is clear: you can take advantage of fast FP16 performance today.

213 Comments


Ryan Smith - Tuesday, August 15, 2017 - link

3 CUs per array is a maximum, not a fixed amount. Each Hawaii shader engine had a 4/4/3 configuration, for example. http://images.anandtech.com/doci/7457/HawaiiDiagra...

So in the case of Vega 10, it should be a 3/3/3/3/2/2 configuration.

watzupken - Tuesday, August 15, 2017 - link

I think the performance is in line with recent rumors and my expectation. The fact that AMD beats around the bush to release Vega was a telltale sign. Unlike Ryzen where they are marketing how well it runs in the likes of Cinebench and beating the gong and such, AMD revealed nothing on benchmarks throughout the year for Vega just like they did when they first released Polaris. The hardware no doubt is forward looking, but where it needs to matter most, I feel AMD may have fallen short. It seems like the way around is probably to design a new GPU from scratch.

Yojimbo - Wednesday, August 16, 2017 - link

"It seems like the way around is probably to design a new GPU from scratch."

Well, perhaps, but I do think with more money they could be doing better with what they've got. They made the decision to focus on reviving their CPU business with their resources, however.

They probably have been laying the groundwork for an entirely new architecture for some time, though. My belief is that APUs were of primary concern when originally designing GCN. They were hoping to enable heterogeneous computing, but it didn't work out. If that strategy did tie them down somewhat, their next gen architecture should free them from those tethers.

Glock24 - Tuesday, August 15, 2017 - link

Nice review, I'll say the outcome was expected given the Vega FE reviews. Other reviews state that the Vega 64 has a switch that sets the power limits, and you have "power saving", "normal" and "turbo" modes. From what I've read the difference between the lowest and highest power limit is as high as 100W for about 8% more performance.

It seems AMD did not reach the expected performance levels so they just boosted the clocks and voltage. Vega is like Skylake-X in that sense :P

As others have mentioned, it would be great to have a comparison of Vega using Ryzen CPUs vs. Intel's CPUs.

Vertexgaming - Wednesday, August 16, 2017 - link

It sucks so much that price drops on GPUs aren't a thing anymore because of miners. I have been upgrading my GPU every year and getting an awesome deal on the newest generation GPU, but now the situation has changed so much, that I will have to skip a generation to justify a $600-$800 (higher than MSRP) price tag for a new graphics card. :-(

prateekprakash - Wednesday, August 16, 2017 - link

In my opinion, it would have been great if Vega 64 had a 16gb vram version at 100$ more... That would be 599$ apiece for the air cooled version... That would future proof it to run future 4k games (CF would benefit too)... It's too bad we still don't have 16gb consumer gaming cards, the Vega pro being not strictly for gamers...

Dosi - Wednesday, August 16, 2017 - link

So the system does consume 91W more with Vega 64, can't imagine with the LC V64... it can be 140W more? Actually what you saved on the GPU (V64 instead of 1080) you already spent on the electricity bill...

versesuvius - Wednesday, August 16, 2017 - link

NVIDIA obviously knows how to break down the GPU tasks into chunks and processing those chunks and sending them out the door better than AMD. And more ROPs can certainly help AMD cards a lot.

peevee - Thursday, August 17, 2017 - link

"as electrons can only move so far on a single (ever shortening) clock cycle"

Seriously? Electrons? You think that how far electrons move matters? Sheesh.

FourEyedGeek - Tuesday, August 22, 2017 - link

You being serious or sarcastic? If serious then you are ignorant.