The AMD Radeon RX Vega 64 & RX Vega 56 Review: Vega Burning Bright

by Ryan Smith & Nate Oh on August 14, 2017 9:00 AM EST

The Vega Architecture: AMD’s Brightest Day

From an architectural standpoint, AMD’s engineers consider Vega to be their most sweeping change in five years. And looking over everything that has been added to the architecture, it’s easy to see why. In terms of core graphics/compute features, Vega introduces more than any other iteration of GCN before it.

Speaking of GCN, before getting too deep here, it’s interesting to note that at least publicly, AMD is shying away from the Graphics Core Next name. GCN doesn’t appear anywhere in AMD’s whitepaper, while in programmers’ documents such as the shader ISA, the name is still present. But at least for the purposes of public discussion, rather than using the term GCN 5, AMD is consistently calling it the Vega architecture. Though make no mistake, this is still very much GCN, so AMD’s basic GPU execution model remains.

So what does Vega bring to the table? Back in January we got what has turned out to be a fairly extensive high-level overview of Vega’s main architectural improvements. In a nutshell, Vega is:

- Higher clocks

- Double rate FP16 math (Rapid Packed Math)

- HBM2

- New memory page management for the high-bandwidth cache controller

- Tiled rasterization (Draw Stream Binning Rasterizer)

- Increased ROP efficiency via L2 cache

- Improved geometry engine

- Primitive shading for even faster triangle culling

- Direct3D feature level 12_1 graphics features

- Improved display controllers

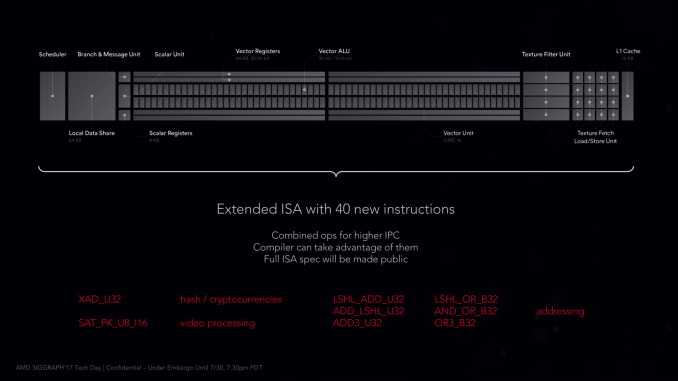

The interesting thing is that even with this significant number of changes, the Vega ISA is not a complete departure from the GCN4 ISA. AMD has added a number of new instructions – mostly for FP16 operations – along with some additional instructions that they expect to improve performance for video processing and some 8-bit integer operations, but nothing that radically sets Vega apart from earlier ISAs. So in terms of compute, Vega is still very comparable to Polaris and Fiji in how data moves through the GPU.

Consequently, the burning question I think many will ask is whether the effective compute IPC is significantly higher than Fiji's, and the answer is no. AMD has actually taken significant pains to keep the throughput latency of a CU at 4 cycles (4 stages deep). Strictly speaking, however, existing code isn’t going to run any faster on Vega than on earlier architectures. In order to wring the most out of Vega’s new CUs, you need to take advantage of the new compute features. Note that this doesn’t mean that compilers can’t take advantage of them on their own, but especially where datatypes are concerned, it’s important that code be designed for lower precision datatypes to begin with.
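To make the "packed" part of Rapid Packed Math a little more concrete, here is a minimal software sketch of the idea: two FP16 values share one 32-bit word, and a single operation acts on both halves. This is only an emulation in Python, not AMD's ISA or any real driver API. The function names (`pack_half2`, `unpack_half2`, `packed_add`) and the lane ordering (low half in bits 0-15) are assumptions made for illustration.

```python
import struct
from typing import Tuple


def pack_half2(lo: float, hi: float) -> int:
    """Pack two values as FP16 into one 32-bit word.

    Assumed layout (illustrative only): lo in bits 0-15, hi in bits 16-31.
    """
    (lo_bits,) = struct.unpack("<H", struct.pack("<e", lo))
    (hi_bits,) = struct.unpack("<H", struct.pack("<e", hi))
    return (hi_bits << 16) | lo_bits


def unpack_half2(word: int) -> Tuple[float, float]:
    """Split a 32-bit word back into its two FP16 lanes."""
    (lo,) = struct.unpack("<e", struct.pack("<H", word & 0xFFFF))
    (hi,) = struct.unpack("<e", struct.pack("<H", (word >> 16) & 0xFFFF))
    return lo, hi


def packed_add(a: int, b: int) -> int:
    """Emulate one packed FP16 add: both lanes are handled by one call.

    The sums are computed as Python floats and rounded to FP16 when repacked.
    """
    a_lo, a_hi = unpack_half2(a)
    b_lo, b_hi = unpack_half2(b)
    return pack_half2(a_lo + b_lo, a_hi + b_hi)


if __name__ == "__main__":
    x = pack_half2(1.0, 2.0)
    y = pack_half2(0.5, 0.25)
    print(unpack_half2(packed_add(x, y)))  # (1.5, 2.25)

    # FP16 keeps only about three decimal digits, which is why code has to be
    # written with the lower precision in mind.
    tenth_fp16, _ = unpack_half2(pack_half2(0.1, 0.0))
    print(tenth_fp16)
```

The second print is the catch: 0.1 does not survive a round trip through FP16 unchanged, so data that is simply cast down from FP32 may lose accuracy the algorithm depended on.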

213 Comments

BrokenCrayons - Monday, August 14, 2017

The hypothetical APU that contains Zen, Polaris/Vega, and HBM2 would be interesting if AMD can keep the power and heat down. Outside of the many-core Threadripper, Zen doesn't do badly on power versus performance, so something like 4-6 CPU cores plus a downclocked and smaller GPU would be good for the industry if the package's TDP ranged from 25-95W for mobile and desktop variants.

By itself though, Vega is an inelegant and belated response to the 1080. It shares enough in common with Fiji that it strikes me as an inexpensive (to engineer) stopgap that tweaks GCN just enough to keep it going for one more generation. I'm hopeful that AMD will have a better, more efficient design for their next generation GPU. The good news is that with the latest product announcements, AMD will likely avoid bankruptcy and get a bit healthier looking in the near term. Things were looking pretty bad for them until Ryzen's announcement, but we'll need to see a few more quarters of financials that ideally show a profit in order to be certain the company can hang in there. I'm personally willing to go out on a limb and say AMD will be out of the red in Q1 of FY18 even without tweaking the books on a non-GAAP basis. Hopefully, they'll have enough to pay down liabilities and invest in the R&D necessary to stay competitive. With process node shrinks coming far less often these days, there's a several-year-long opening for them right now.

TheinsanegamerN - Monday, August 14, 2017

"It shares enough in common with Fiji that it strikes me as an inexpensive (to engineer) stopgap that tweaks GCN just enough to keep it going for one more generation."

We thought the same thing about Polaris. I think the reality is that AMD cannot afford to do a full up arch, and can only continue to tweak GCN in an attempt to stay relevant.

They still have not done a Maxwell-esque redesign of their GPUs, streamlining them for consumer use. They continue to put tons of compute in their chips, which is great, but it restricts clock rates and pushes power usage sky high.

mapesdhs - Monday, August 14, 2017

I wonder if AMD decided it made more sense to get back into the CPU game first, then focus later on GPUs once the revenue stream was more healthy.

Manch - Tuesday, August 15, 2017

Just like their CPUs, it's a jack-of-all-trades design. Cheaper R&D to use one chip for many, but you've got to live with the trade-offs.

The power requirement doesn't bother me. Maybe after the third-party custom coolers, I'll buy one if it's the better deal. I have a ventilated comm closet. All my equipment stays in there, including my PCs. I have outlets on the wall to plug everything else into. Nice and quiet regardless of what I run.

Sttm - Monday, August 14, 2017

That Battlefield 1 Power Consumption with Air, is that actually correct? 459 watts.... WTF AMD.

Aldaris - Monday, August 14, 2017

Buggy driver? Something is totally out of whack there.

Ryan Smith - Monday, August 14, 2017

Yes, that is correct.

I also ran Crysis 3 on the 2016 GPU testbed. That ended up being 464W at the wall.

haukionkannel - Monday, August 14, 2017

Much better than I expected!

Nice to see competition also in the GPU high end. I was expecting the Vega to suffer deeply in DX11, but it is actually doing very nicely in those titles... I am really surprised!

Leyawiin - Monday, August 14, 2017

A day late and a dollar short (and a power pig at that). Shame. I was hoping for a repeat of Ryzen's success, but they'll sell every one they make to miners so I guess it's still a win.

Targon - Monday, August 14, 2017

I would love to see a proper comparison between an AMD Ryzen 7 and an Intel i7-7700K at this point with Vega to see how they compare, rather than testing only on an Intel based system, since the X299 is still somewhat new. All of the Ryzen launch reviews were done on a new platform, and the AMD X370 is mature enough that reviews will be done with a lot more information. Vega is a bit of a question mark in terms of how well it does when you compare between the two platforms. Even how far drivers have matured in how well the X370 chipset deals with the GeForce 1080 is worth looking at in my opinion.

I've had the thought, without resources, that NVIDIA drivers may not do as well on an AMD based machine compared to an Intel based machine, simply because of driver issues, but without a reasonably high end video card from AMD, there has been no good way to do a comparison to see if some of the game performance differences between processors could have been caused by NVIDIA drivers as well.