Intel Introduces "Ruler" Server SSD Form-Factor: SFF-TA-1002 Connector, PCIe Gen 5 Ready

by Billy Tallis on August 9, 2017 3:00 PM EST

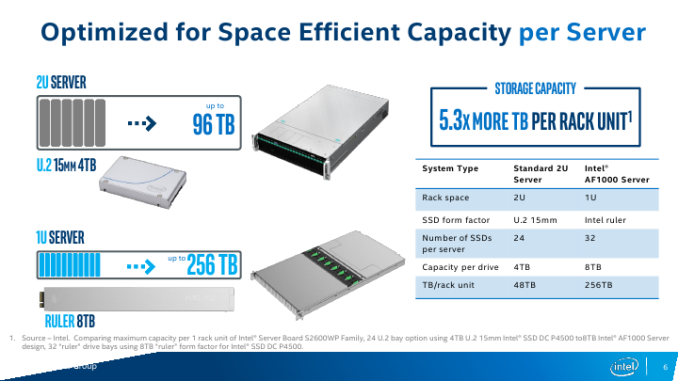

Intel on Tuesday introduced its new form-factor for server-class SSDs. The new "ruler" design is based on the in-development Enterprise & Datacenter Storage Form Factor (EDSFF), and is intended to enable server makers to install up to 1 PB of storage into 1U machines while supporting all enterprise-grade features. The first SSDs in the ruler form-factor will be available “in the near future”, and the form-factor itself is here for the long run: it is expandable in terms of interface performance, power, density and even dimensions.

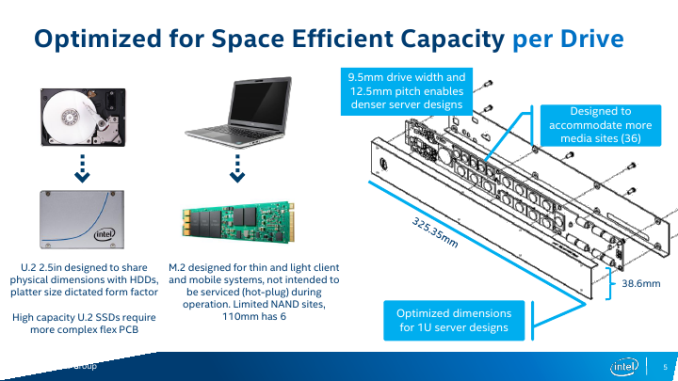

For many years SSDs relied on form-factors originally designed for HDDs to ensure compatibility between different types of storage devices in PCs and servers. However, the 2.5” and 3.5” form-factors are not always optimal for SSDs in terms of storage density, cooling, and other aspects. To better address client computers and some types of servers, Intel developed the M.2 form-factor for modular SSDs several years ago. While such drives have a lot of advantages when it comes to storage density, they were not designed to support functionality such as hot-plugging, and their cooling is yet another concern. By contrast, the ruler form-factor was developed specifically for server drives and is tailored to the requirements of datacenters. As Intel puts it, the ruler form-factor “delivers the most storage capacity for a server, with the lowest required cooling and power needs”.

From a technical point of view, each ruler SSD is a long hot-swappable module that can accommodate tens of NAND flash or 3D XPoint chips, and thus offer capacities and performance levels that easily exceed those of M.2 modules.

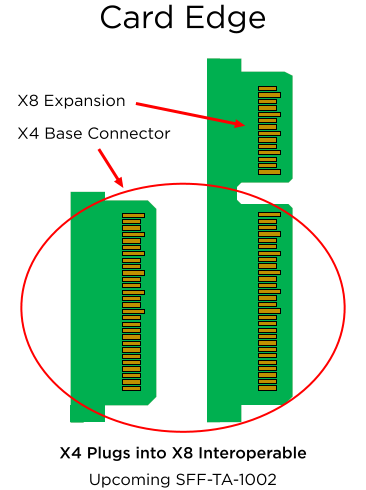

The initial ruler SSDs will use the SFF-TA-1002 "Gen-Z" connector, supporting PCIe 3.1 x4 and x8 interfaces with maximum theoretical bandwidths of around 3.94 GB/s and 7.88 GB/s, respectively, in each direction. Eventually, the modules could gain an x16 interface running at 8 GT/s (PCIe Gen 3), 16 GT/s (PCIe Gen 4) or even 25 - 32 GT/s (PCIe Gen 5) per lane (should the industry need SSDs with ~50 - 63 GB/s throughput). In fact, the connectors are ready for PCIe Gen 5 speeds even now, but there are no hosts that support the interface yet.
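The bandwidth figures quoted above follow from simple arithmetic, and a short sketch may make the relationship clearer. It assumes the 128b/130b line encoding used by PCIe Gen 3 and later, counts one direction only, and ignores packet and protocol overhead, so real drives will deliver less. The function name `pcie_bandwidth_gbs` is purely illustrative, and the 25 GT/s entry reflects the lower bound speculated for Gen 5 in this article rather than a finalized rate.

```python
# Theoretical PCIe bandwidth per direction, in GB/s (10^9 bytes per second).
# Assumption: 128b/130b line encoding (PCIe Gen 3 and later); packet and
# protocol overhead are ignored, so these are upper bounds.

def pcie_bandwidth_gbs(gt_per_s: float, lanes: int) -> float:
    """Return the theoretical one-direction bandwidth in GB/s."""
    encoding_efficiency = 128 / 130           # payload bits per transferred bit
    gbit_per_s = gt_per_s * lanes * encoding_efficiency
    return gbit_per_s / 8                     # bits -> bytes


if __name__ == "__main__":
    configs = [
        ("Gen 3 x4", 8, 4),
        ("Gen 3 x8", 8, 8),
        ("Gen 3 x16", 8, 16),
        ("Gen 4 x16", 16, 16),
        ("Gen 5 x16 (25 GT/s, speculative)", 25, 16),
        ("Gen 5 x16 (32 GT/s)", 32, 16),
    ]
    for label, rate, lanes in configs:
        print(f"{label}: {pcie_bandwidth_gbs(rate, lanes):.2f} GB/s")
```

Under these assumptions, the x4 and x8 Gen 3 cases come out at roughly 3.94 GB/s and 7.88 GB/s, and the two x16 Gen 5 cases bracket the ~50 - 63 GB/s range mentioned in the text.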

One of the key things about the ruler form-factor is that it was designed specifically for server-grade SSDs and therefore offers a lot more than standards for client systems. For example, when compared to the consumer-grade M.2, a PCIe 3.1 x4-based EDSFF ruler SSD has extra SMBus pins for NVMe management, as well as additional pins to charge power loss protection capacitors separately from the drive itself (thus enabling passive backplanes and lowering their costs). The standard is set to use a +12 V rail to power the ruler SSDs, and Intel expects the most powerful drives to consume 50 W or more.

Servers and backplanes compatible with the rulers will be incompatible with DFF SSDs and HDDs, as well as with other proprietary form-factors (so, think of flash-only machines). EDSFF itself has yet to be formalized as a standard. However, the working group for the standard already counts Dell, Lenovo, HPE, and Samsung among its promoters, and Western Digital as one of several contributors.

It is also noteworthy that Intel has been shipping ruler SSDs based on planar MLC NAND to select partners (think of the usual suspects - large makers of servers as well as owners of huge datacenters) for about eight months now. While the drives did not really use all the advantages of the proposed standard – and I'd be surprised if they were even compliant with the final standard – they helped the EDSFF working group members prepare for the future. Moreover, some of Intel's partners have even added their features to the upcoming EDSFF standard, and still other partners are looking at using the form factor for GPU and FPGA accelerator devices. So it's clear that there's already a lot of industry interest and now growing support for the ruler/EDSFF concept.

Finally, one of the first drives to be offered in the ruler form-factor will be Intel’s DC P4500-series SSDs, which feature Intel’s enterprise-grade 3D NAND memory and a proprietary controller. Intel does not disclose maximum capacities offered by the DC P4500 rulers, but expect them to be significant. Over time Intel also plans to introduce 3D XPoint-based Optane SSDs in the ruler form-factor.

Source: Intel

50 Comments

ddriver - Thursday, August 10, 2017 - link

Yeah yeah, if it comes from intel it is intrinsically much needed and utterly awesome, even if a literal turd.

Zero downtime servicing is perfectly possible from engineering point of view. You have a 2U chassis with 4 drawers, each of them fitting 32 2.5" SSDs, its own motherboard and status indicators on the front. Some extra cable for power and network will allow you to pull the drawer open while still operational, from the right side you can pull out or plug in drives, from the left you can service or even replace the motherboard, something that you actually can't do in intel's ruler form factor. Obviously, you will have to temporarily shut down the system to replace the motherboard, but it will only take out 1/4 of the chassis, and it will take no more than a minute, while replacing individual drives or ram sticks will take seconds.

In contrast the ruler only allows for easy replacement of drives, if you want to replace the motherboard, you will have to pull out the whole thing, and shut down the whole thing, and your downtime will be significantly longer.

The reason I went for proprietary server chassis is that standard stuff is garbage. Which is the source of all servicing and capacity issues. There is absolutely nothing wrong with the SSD form factor, it is the server form factor that is problematic.

In this context, introducing a new, incompatible with existing infrastructure SSD form factor, which will in all likelihood also come with a premium is just stupid. Especially when you can get even better serviceability, value, compatibility and efficiency with an improved server form factor.

Samus - Thursday, August 10, 2017 - link

per usual, ddriver the internet forum troll knows more than industry pioneer Intel...

SkiBum1207 - Friday, August 11, 2017 - link

If your service encounters downtime when you shut a server down, you have a seriously poor architecture. We run ~3000 servers powering our main stack - we constantly lose machines due to a myriad of issues and it literally doesn't matter. Mesos handles re-routing of tasks, and new instances are created to replace the lost capacity.

At scale, if the actual backplane does have issues, the DC provider simply replaces it with a new unit - the time it takes to diagnose, repair, and re-rack the unit is a complete waste of money.

petteyg359 - Wednesday, August 16, 2017 - link

To the contrary, nearly all (every single one I've seen, so "nearly" may be incorrect) SATA drives support hot swapping. It's part of the damn protocol. There are *host controllers* that don't support it, but finding those on modern hardware is challenging.

Valantar - Wednesday, August 9, 2017 - link

If this form factor is designed to cool devices up to 50w, and you can stick 32 of them in a 1U server, that sounds like a dream come true for GPU accelerators. Good luck fitting eight 250W (which is what you'd need to exceed the performance of that assuming perfect scaling) GPUs in a 1U server, after all.

Deicidium369 - Tuesday, June 23, 2020 - link

Haven't seen that FF used for GPUs - but Intel did release some Nervana that use the m.2 type connector...

mode_13h - Wednesday, August 9, 2017 - link

I'm sad to see the gap between HEDT and server/datacenter widening into a chasm.

Also, I'm not clear on how these are meant to dissipate 50 W. Will such high-powered devices have spacing requirements so they can cool through their case? Or would you pack them in like sardines, and let them bake as if in an oven?

mode_13h - Wednesday, August 9, 2017 - link

Just to let you know where I'm coming from, I have a LGA2011 workstation board with a Xeon and a DC P3500 that I got in a liquidation sale. I love being able to run old server HW for cheap, and in a fairly normal desktop case.

I'm worried that ATX boards with LGA3647 sockets are going to be quite rare, and using these "ruler" drives in any kind of desktop form factor seems ungainly and impractical, at best.

cekim - Wednesday, August 9, 2017 - link

12.5mm pitch 9.5mm width - I'm assuming this indicates an air-gap on both sides to allow for cooling.

As for (E)ATX and EEB MB's I hear ya - ASROCK has some up on their page, but certainly they are not looking like they will be as easy to get as C612 boards were/are.

I'm not worried about storage tech for consumers, but the CPU divide is definitely widening. Hopefully AMD keeps Intel honest and we start to see dual TR boards that force Intel to enable ECC and do dual i9 systems.

Software has to get better though, it is still lagging behind and just got roughly 2x the cores consumer software could have ever imagined when they had just started digesting more than 4.

Deicidium369 - Tuesday, June 23, 2020 - link

they are not designed to be used in anything other than the 1U case that is designed for them.