The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Grand Theft Auto

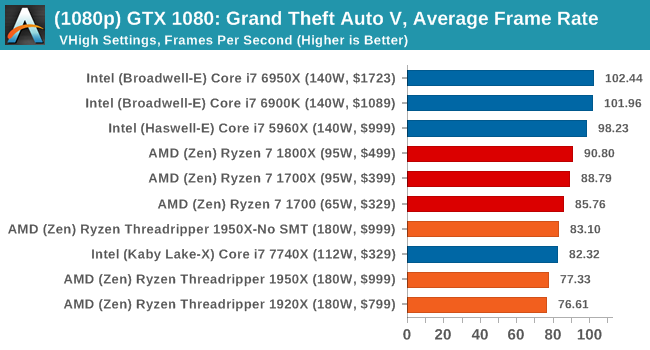

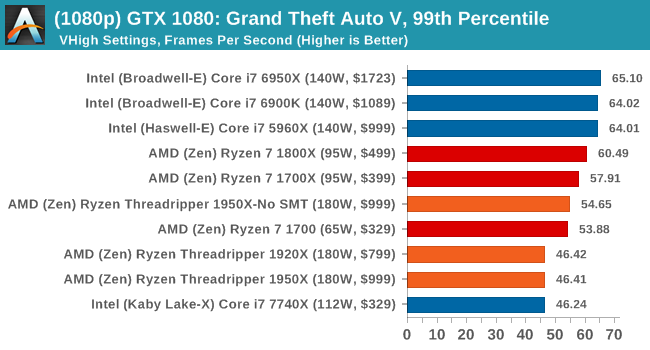

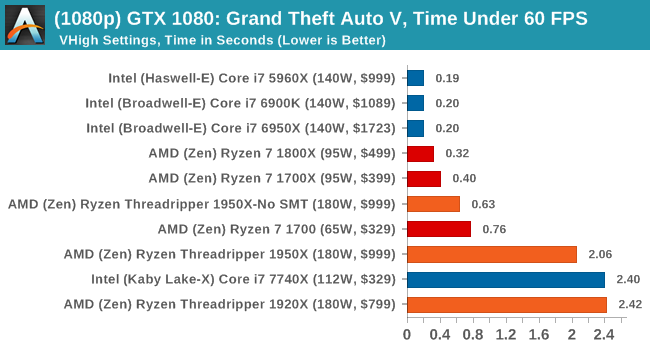

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn't provide graphical presets; instead it opens up the options to users and extends the boundaries, pushing even the most powerful systems to the limit using Rockstar's Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, cranking the settings to maximum produces stunning visuals but also puts a heavy load on both the CPU and the GPU.

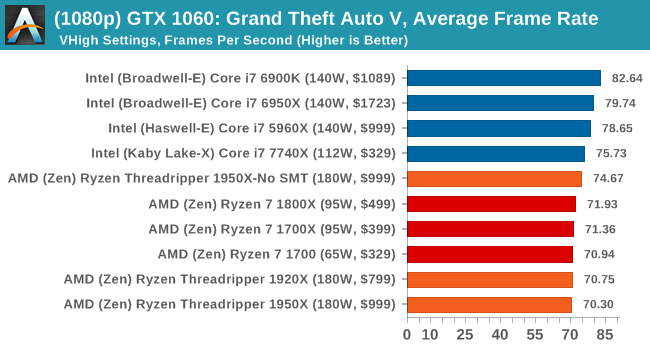

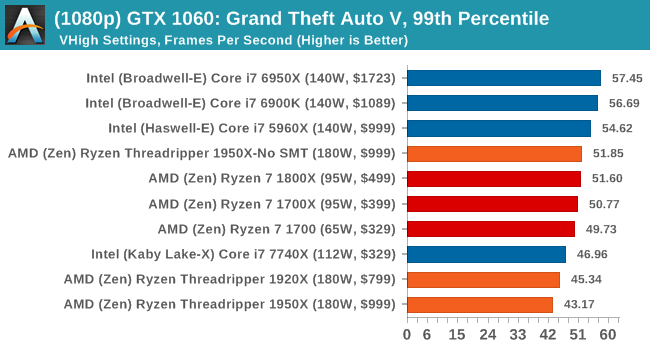

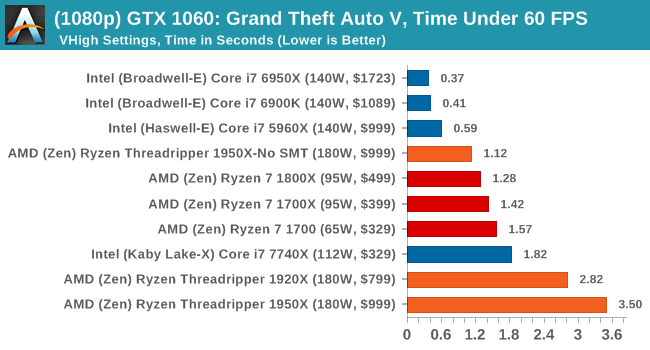

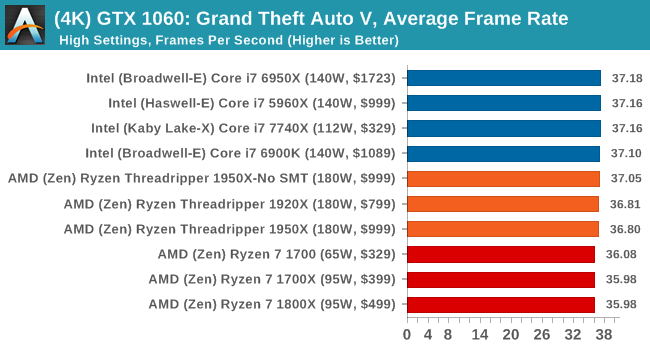

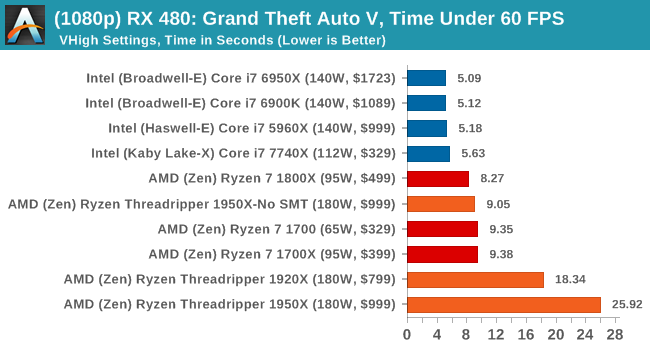

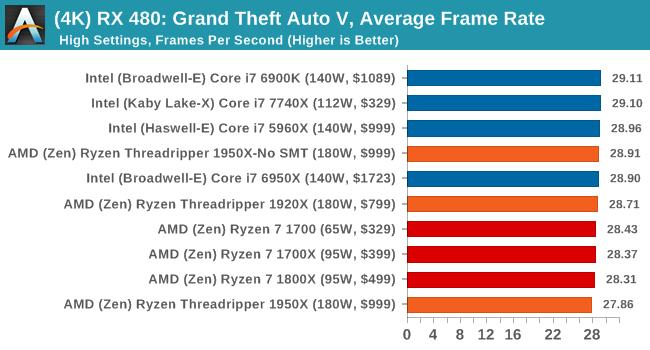

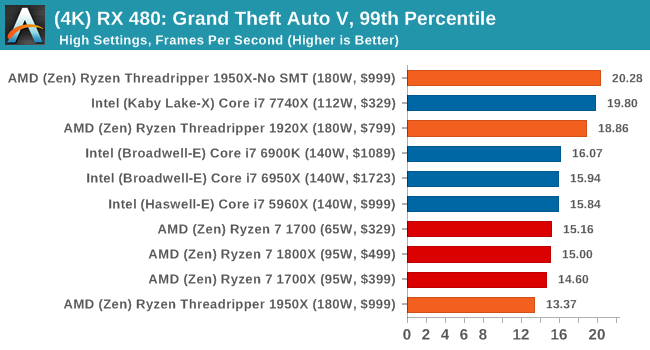

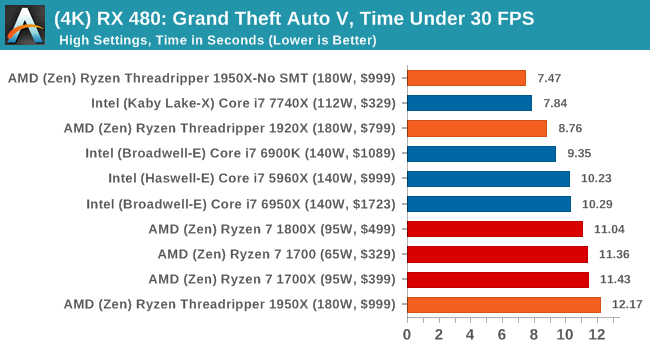

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only this final part of the benchmark, which starts with a flight scene in a jet, continues with an inner-city drive-by through several intersections, and ends by ramming a tanker that explodes, causing other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and, thankfully, the title outputs frame time data.

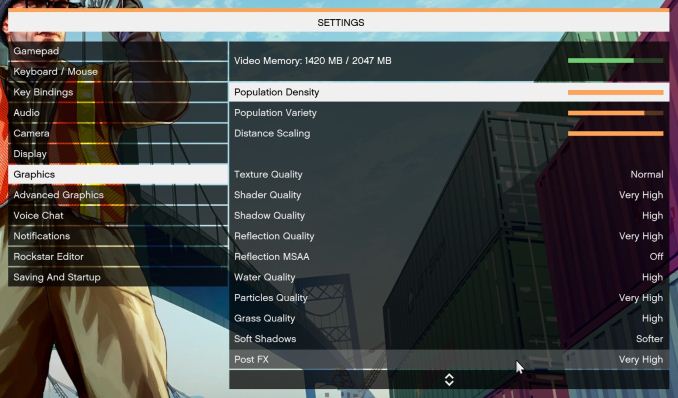

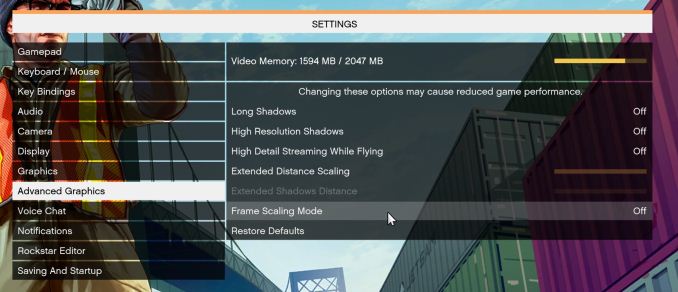

There are no presets for the graphics options in GTA. Instead, some options, such as population density and distance scaling, are adjusted on sliders, while others, such as texture/shadow/shader/water quality, range from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance. There is a handy indicator at the top which shows how much video memory the chosen options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there's no obvious warning if you have a low-end GPU with lots of memory, like an R7 240 4GB).

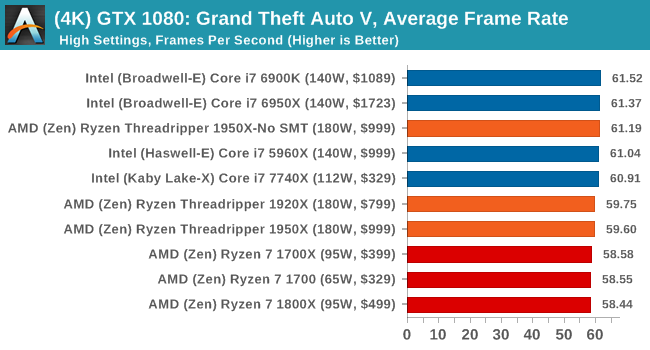

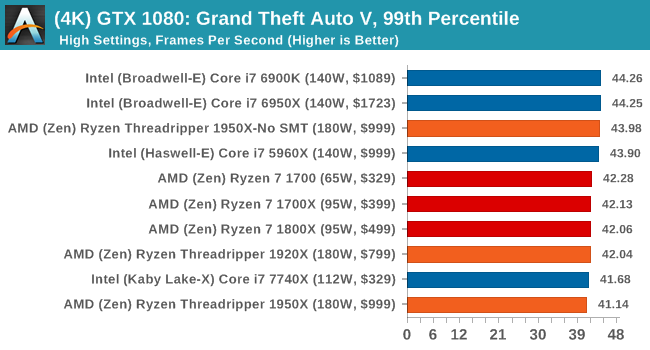

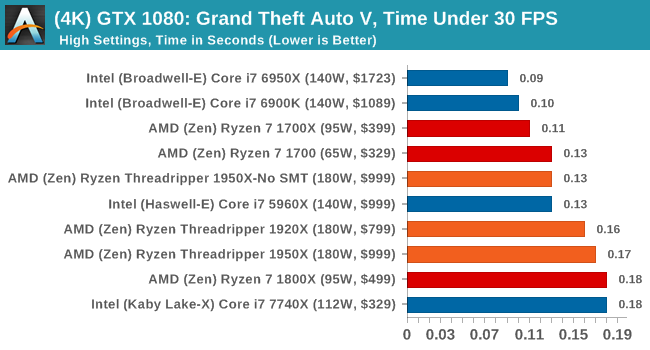

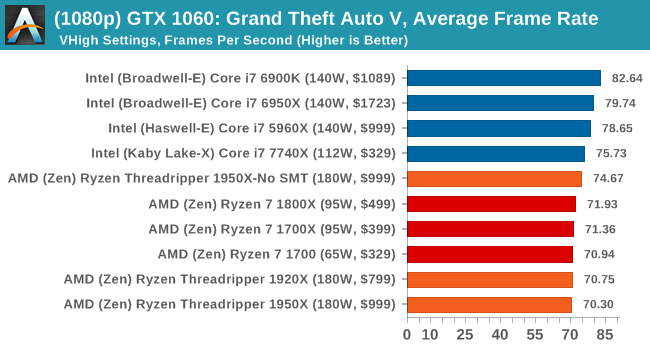

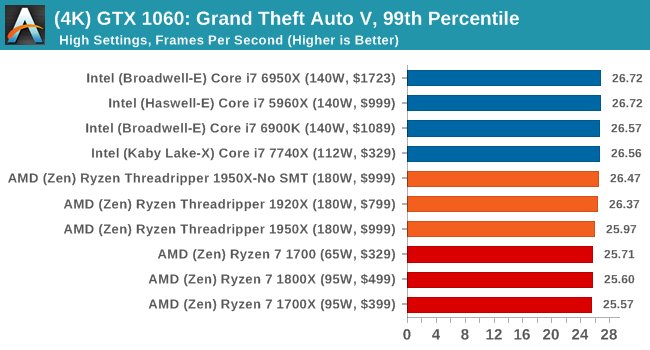

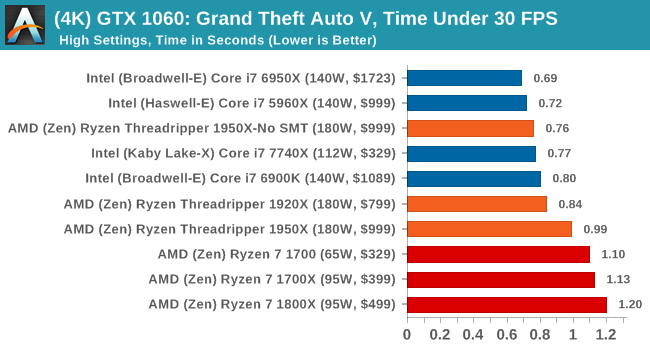

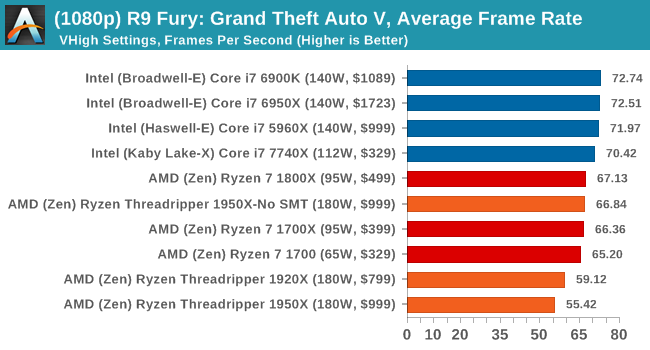

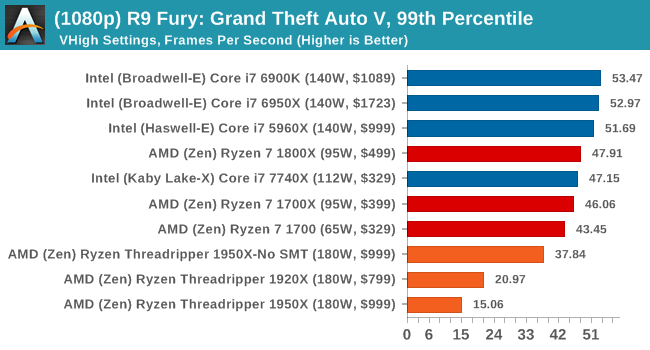

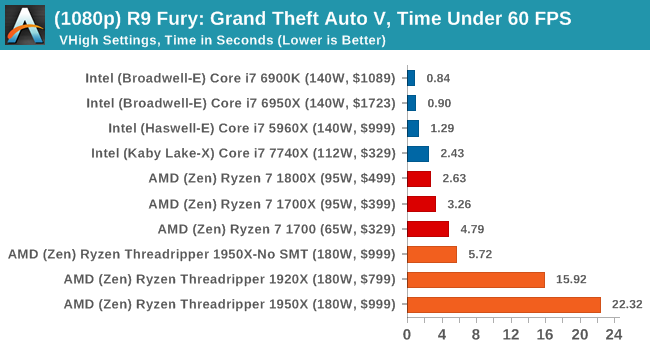

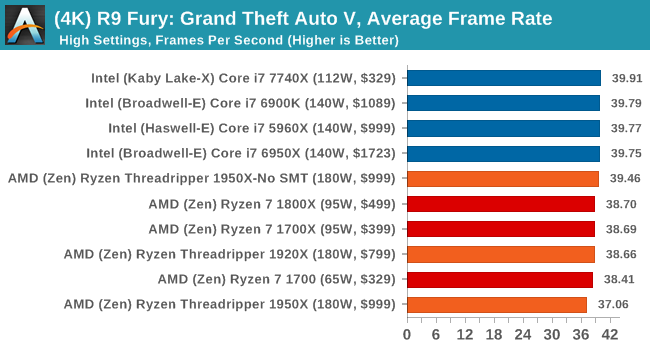

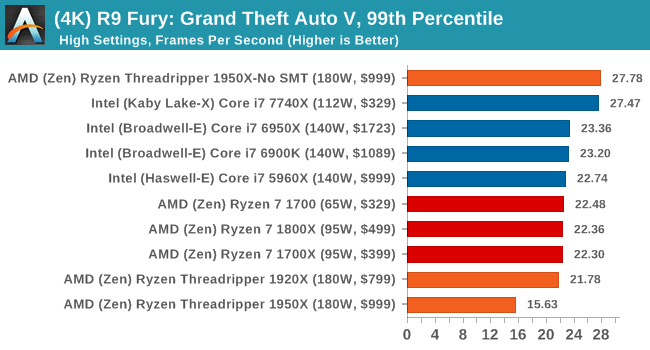

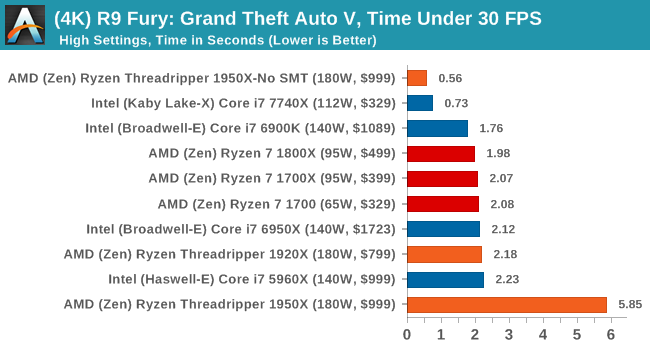

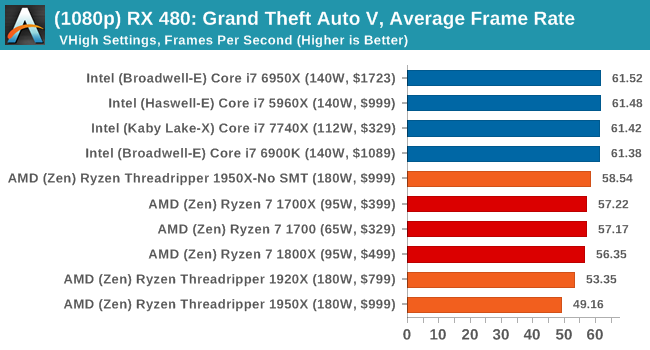

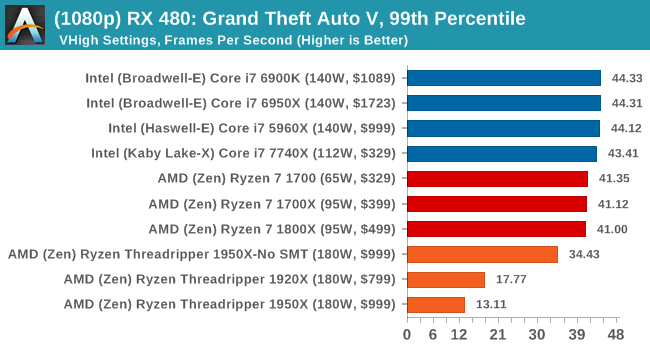

To that end, we run the benchmark at 1920x1080 with most settings at Very High, and also at 4K with most settings at High. We take the average results of four runs, reporting frame rate averages, 99th percentiles, and our time under analysis.
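Since the title outputs per-frame times, the average and 99th percentile figures can be derived from that data. The sketch below is a minimal illustration, assuming each run is a list of frame times in milliseconds, that the 99th percentile uses the nearest-rank method, and that the four runs are combined by averaging each run's metric. These are assumptions, not a description of our actual scripts, and the time-under metric is not modeled here.

```python
from statistics import mean


def average_fps(frame_times_ms):
    """Average frame rate: total frames divided by total elapsed seconds."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_99_fps(frame_times_ms):
    """Frame rate at the 99th-percentile frame time (nearest-rank method).

    99% of frames render at least this fast; the slowest 1% are slower.
    """
    ordered = sorted(frame_times_ms)
    n = len(ordered)
    rank = (99 * n + 99) // 100  # integer ceil(0.99 * n), a 1-based rank
    return 1000.0 / ordered[rank - 1]


def summarize_runs(runs):
    """Average each metric across runs (assumed: four runs per setting)."""
    return {
        "avg_fps": mean(average_fps(r) for r in runs),
        "p99_fps": mean(percentile_99_fps(r) for r in runs),
    }


# Hypothetical data: 98 frames at 10 ms plus 2 stutters at 20 ms.
stuttery_run = [10.0] * 98 + [20.0] * 2
print(summarize_runs([stuttery_run]))
```

Reporting the 99th percentile alongside the average is what exposes stutter: in the hypothetical run above, two slow frames barely move the average (about 98 FPS) but drag the 99th percentile frame rate down to 50 FPS.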

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

ASUS GTX 1060 Strix 6G Performance

1080p

4K

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

Sapphire Nitro RX 480 8G Performance

1080p

4K

Depending on the CPU, Threadripper for the most part performs close to Ryzen or just below it.

347 Comments


Ian Cutress - Thursday, August 10, 2017

Anand hasn't worked at the website for a few years now. The author (me) is clearly stated at the top.

Just think about what you're saying. If I was in Intel's pocket, we wouldn't be sampled by AMD, period. If they were having major beef with how we were reporting, I'd either be blacklisted or consistently on a call every time there's been an AMD product launch (and there's been a fair few this year).

I've always let the results do the talking, and steered clear of hype generated by others online. We've gone in-depth into why things are done the way they are, and the positives and negatives of the methods behind each action (rather than just ignoring the why). We've run the tests, been honest about our results, and considered the market for the product being reviewed. My background is scientific, and the scientific method is applied rigorously and thoroughly to the product and the target market. If I see bullshit, I point it out, and I have done so many times in the past.

I'm not exactly sure what your problem is - you state that the review is 'slanted journalism', but fail to give examples. We've posted ALL of the review data that we have, and we have a benchmark database for anyone who wants to go through all the data at any time. That benchmark database is continually being updated with new CPUs and new tests. Feel free to draw your own conclusions if you don't agree with what is written.

Just note that a couple of weeks ago I was being called a shill for AMD. A couple of weeks before that, a shill for Intel. A couple before that... Nonetheless both companies still keep us on their sampling lists, on their PR lists, they ask us questions, they answer our questions. Editorial is a mile away from anything ad related and the people I deal with at both companies are not the ones dealing with our ad teams anyway. I wouldn't have it any other way.

MajGenRelativity - Thursday, August 10, 2017

I personally always enjoy reading your reviews, Ian. Even though they don't always reach the conclusions I hoped they would reach before reading, you have the evidence and benchmarks to back it up. Keep up the good work!

Diji1 - Thursday, August 10, 2017

Agreed!

Zstream - Thursday, August 10, 2017

For me, it isn't about "scientific benchmarking", it's about which benchmarks are used and what story is being told. I think that I, along with many others, would never buy a Threadripper to open a single .pdf. I could be wrong, but I don't think that's the target audience Intel or AMD is aiming for.

I mean, why not forgo the .pdf and other benchmarks that are really useless for this product and add multi-threaded use cases? For instance, why not test how many VMs can run and how much I/O they receive, or launch a couple of VMs, run a SQL DB benchmark, and game at the same time?

It could just be me, but I'm not going to buy a 7900X or 1950X for opening up .pdf files, or for testing SunSpider/Kraken lol. Hopefully those benchmarks weren't included to tell a story, as mentioned above.

We're going to be compiling, 3D rendering with multiple GPUs, and running multiple VMs, all while multi-tasking with other apps.

My 2 cents.

DanNeely - Thursday, August 10, 2017

Single-threaded use cases aren't why people buy really wide CPUs. But performing badly in them, since they represent a lot of ordinary basic usage, can be a reason not to buy one. Also, running the same benches on all products allows them all to be compared readily, rather than having to hunt for benches covering the specific pair you're interested in.

VM-type benchmarks are more Johan's area since that's a traditional server workload. OTOH there's a decent amount of overlap with developer workloads there too, so adding it now that we've got a compile test might not be a bad idea. On the gripping hand, any new benchmarks need to be fully automated so Ian can push an easy button to collect data while he works on analysis of results. Also, the value of any new benchmark needs to be weighed against how much it slows the entire benching run down, and how much time rerunning it on a large number of existing platforms will take to generate a comparison set.

iwod - Thursday, August 10, 2017

It really depends on the use case. 20% slower on PDF opening? I don't care, because the time has reached diminishing returns and Intel would need to be MUCH faster for this to be a UX problem.

But I think at $999 Intel has a strong case for its i9. Factoring in the motherboard, though, AMD is still cheaper. Not sure if that is mentioned in the article.

Also note Intel is on its third iteration of 14nm, against a new 14nm process from AMD/GlobalFoundries.

I am very excited for 7nm Zen 2 coming next year. I hope the software, compilers, and optimisations all have time to catch up with Zen.

Zstream - Thursday, August 10, 2017

I won't get into an argument, but I and many of my friends, who are on the developer side of the house, have been waiting for this review, and it doesn't provide me with any useful information. I understand it might be Johan's wheelhouse, but come on... opening a damn .pdf file, and testing SunSpider/Kraken/gaming benchmarks? That won't give anyone interested in either CPU any validation of their purchase. I'm not trying to be salty, I just want some more damn details vs. trying to put both vendors in a good light.

Ian Cutress - Thursday, August 10, 2017

Rather than have 20 different tests for each set of different CPUs with very minimal overlap, we have one giant suite that runs all the tests on every CPU in a single script. So 80 test points, rather than 4x20. The idea is that there are benchmarks for everyone, so you can ignore the ones that don't matter to you, rather than expect 100% of the benchmarks to matter (e.g. if you care about five tests, does it matter to you if the tests are published alongside 75 other tests, or do they have to be the only five tests in the review?). It's not a case of trying to put both vendors in a good light, it's a case of this being a universal test suite.

Zstream - Thursday, August 10, 2017

Well, show me a database benchmark, virtual machine benchmark, 3ds Max benchmark, or Blender benchmark and I'll shut up ;)

It's hard for me to look at this review outside of a gamer's perspective, which I'm not. Sorry, that's just the way I see it. I'll wait for more pro-consumer benchmarks?

Johan Steyn - Thursday, August 10, 2017

This is exactly my point as well. Why on earth is there so much focus on single-threaded tests and games, when we all knew from way back that TR was not going to be a winner there? Where are all the other benches you mention? Oh, no, those would make Intel look bad!!!!!