The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

CPU Legacy Tests

Our legacy tests represent benchmarks that were once at the height of their time. Some of these are industry standard synthetics, and we have data going back over 10 years. All of the data here has been rerun on Windows 10, and we plan to go back several generations of components to see how performance has evolved.

All of our benchmark results can also be found in our benchmark engine, Bench.

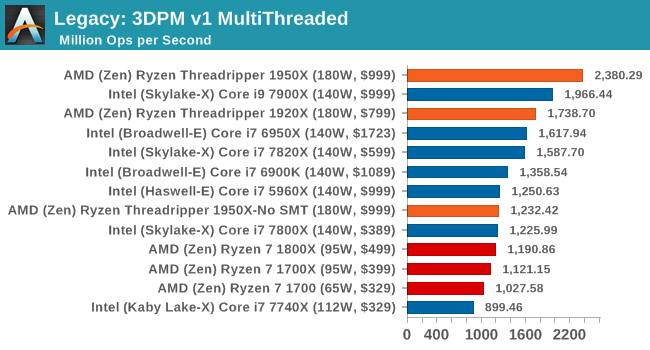

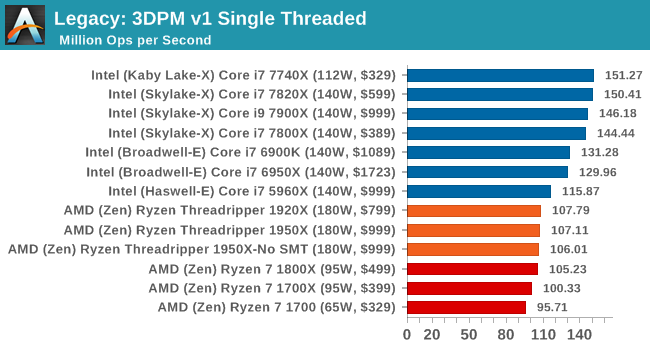

3D Particle Movement v1

3DPM is a self-penned benchmark, taking basic 3D movement algorithms used in Brownian Motion simulations and testing them for speed. High floating point performance, MHz and IPC win in the single thread version, whereas the multithread version has to handle the threads and loves more cores. This is the original version, written in the style of a typical non-computer science student coding up an algorithm for their theoretical problem, and comes without any non-obvious optimizations beyond those already performed by the compiler, such as avoiding false sharing.
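For readers unfamiliar with this style of test, the following is a minimal single-threaded sketch of a 3D random-walk timing loop. It is not the 3DPM source: the function names (`move_particles`, `benchmark`), the particle and step counts, and the choice of a unit step in a uniformly random direction are all assumptions made purely for illustration.

```python
import math
import random
import time


def move_particles(num_particles, steps, seed=0):
    """Move each particle `steps` times by a unit step in a random 3D direction.

    Hypothetical helper for illustration only; not taken from 3DPM.
    """
    rng = random.Random(seed)
    positions = [[0.0, 0.0, 0.0] for _ in range(num_particles)]
    for pos in positions:
        for _ in range(steps):
            # Uniform direction on the unit sphere:
            # z uniform in [-1, 1], azimuth uniform in [0, 2*pi).
            z = rng.uniform(-1.0, 1.0)
            phi = rng.uniform(0.0, 2.0 * math.pi)
            r = math.sqrt(1.0 - z * z)
            pos[0] += r * math.cos(phi)
            pos[1] += r * math.sin(phi)
            pos[2] += z
    return positions


def benchmark(num_particles=1000, steps=100):
    """Return (particle movements per second, final positions) for one timed run."""
    start = time.perf_counter()
    positions = move_particles(num_particles, steps)
    elapsed = time.perf_counter() - start
    return (num_particles * steps) / elapsed, positions


if __name__ == "__main__":
    rate, _ = benchmark()
    print(f"{rate:,.0f} particle movements per second")
```

The inner loop is dominated by floating point math (a square root, a sine and a cosine per step), which is why a test of this shape tends to reward frequency and IPC when run on one thread. A multithreaded variant would split the particles across workers, at which point thread handling and memory layout start to matter as well.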

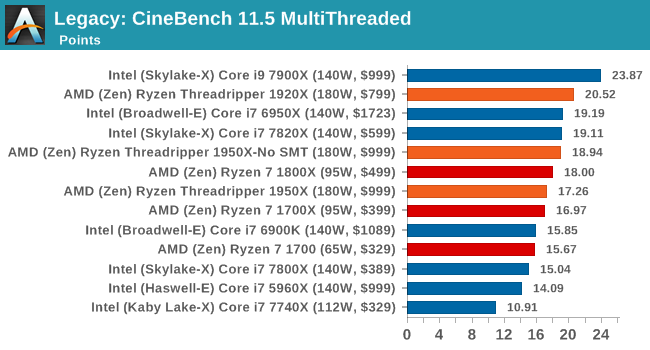

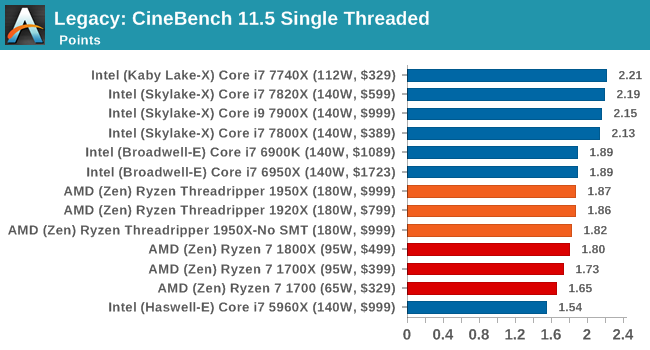

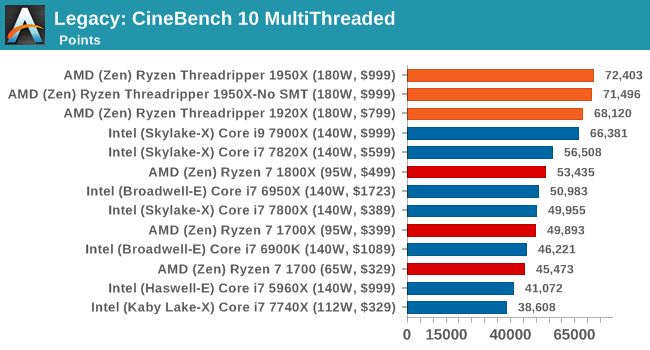

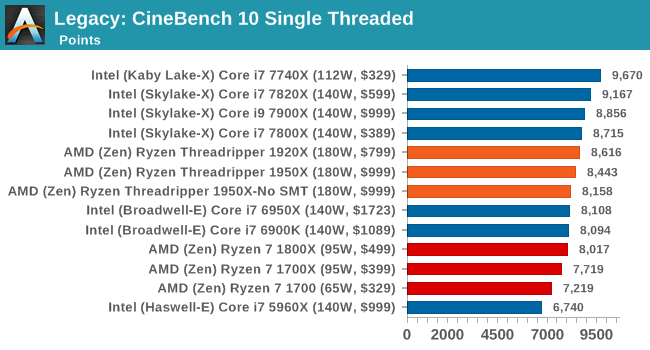

CineBench 11.5 and 10

Cinebench is a widely known benchmarking tool for measuring performance relative to MAXON's animation software Cinema 4D. Cinebench has been optimized over a decade and focuses purely on CPU horsepower, meaning if there is a discrepancy in pure throughput characteristics, Cinebench is likely to show that discrepancy. Arguably other software doesn't make use of all the tools available, so the real world relevance might be purely academic, but given our large database of data for Cinebench it seems difficult to ignore a small five minute test. We run the modern version 15 in this test, as well as the older 11.5 and 10 due to our back data.

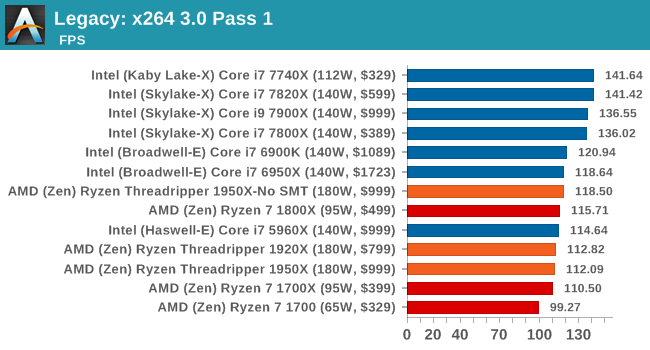

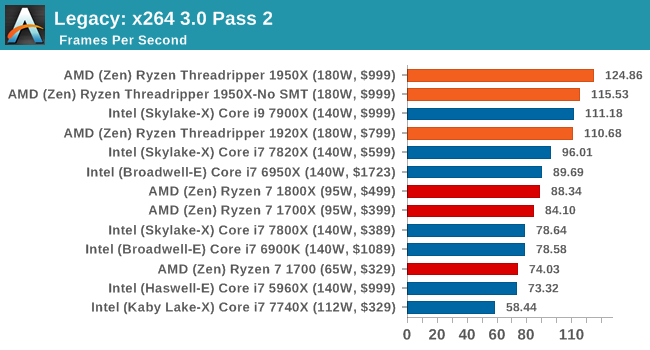

x264 HD 3.0

Similarly, the x264 HD 3.0 package we use here is also kept for historical regression data. The latest version is 5.0.1, and encodes a 1080p video clip into a high-quality x264 file. Version 3.0 performs the same test only on a 720p file, and in most circumstances the software hits its performance limit on high-end processors, but it still works well for mainstream and low-end parts. Also, this version only takes a few minutes, whereas the latest can take over 90 minutes to run.

The 1950X: the first CPU to score higher on the 2nd pass of this test than it does on the first pass.

347 Comments

Ian Cutress - Thursday, August 10, 2017

Anand hasn't worked at the website for a few years now. The author (me) is clearly stated at the top.

Just think about what you're saying. If I was in Intel's pocket, we wouldn't be being sampled by AMD, period. If they were having major beef with how we were reporting, I'd either be blacklisted or consistently on a call every time there's been an AMD product launch (and there's been a fair few this year).

I've always let the results do the talking, and steered clear of hype generated by others online. We've gone in-depth into how things are done the way they are, and the positives and negatives as to the methods of each action (rather than just ignoring the why). We've run the tests, and been honest about our results, and considered the market for the product being reviewed. My background is scientific, and the scientific method is applied rigorously and thoroughly on the product and the target market. If I see bullshit, I point it out and have done many times in the past.

I'm not exactly sure what your problem is - you state that the review is 'slanted journalism', but fail to give examples. We've posted ALL of our review data that we have, and we have a benchmark database for anyone that wants to go through all the data at any time. That benchmark database is continually being updated with new CPUs and new tests. Feel free to draw your own conclusions if you don't agree with what is written.

Just note that a couple of weeks ago I was being called a shill for AMD. A couple of weeks before that, a shill for Intel. A couple before that... Nonetheless both companies still keep us on their sampling lists, on their PR lists, they ask us questions, they answer our questions. Editorial is a mile away from anything ad related and the people I deal with at both companies are not the ones dealing with our ad teams anyway. I wouldn't have it any other way.

MajGenRelativity - Thursday, August 10, 2017

I personally always enjoy reading your reviews Ian. Even though they don't always reach the conclusions I hoped they would reach before reading, you have the evidence and benchmarks to back it up. Keep up the good work!

Diji1 - Thursday, August 10, 2017

Agreed!

Zstream - Thursday, August 10, 2017

For me, it isn't about "scientific benchmarking", it's about what benchmarks are used and what story is being told. I, along with many others, would never buy a Threadripper to open a single .pdf. I could be wrong, but I don't think that's the target audience Intel or AMD is aiming for.

I mean, why not forgo the .pdf and other benchmarks that are really useless for this product and add multi-threaded use cases? For instance, why not test how many VM's and I/O is received, or launching a couple VM's, running a SQL DB benchmark, and gaming at the same time?

It could just be me, but I'm not going to buy a 7900x or 1950x for opening up .pdf files, or test SunSpider/Kraken lol. Hopefully we didn't include those benchmarks to tell a story, as mentioned above.

We're going to be compiling, 3d rendering with multi-gpu's, running multiple VM's, all while multi-tasking with other apps.

My 2 cents.

DanNeely - Thursday, August 10, 2017

Single threaded use cases aren't why people buy really wide CPUs. But performing badly in them, since they represent a lot of ordinary basic usage, can be a reason not to buy one. Also running the same benches on all products allows for them all to be compared readily vs having to hunt for benches covering the specific pair you're interested in.

VM type benchmarks are more Johan's area since that's a traditional server workload. OTOH there's a decent amount of overlap with developer workloads there too so adding it now that we've got a compile test might not be a bad idea. On the gripping hand, any new benchmarks need to be fully automated so Ian can push an easy button to collect data while he works on analysis of results. Also the value of any new benchmark needs to be weighed against how much it slows the entire benching run down, and how much time rerunning it on a large number of existing platforms will take to generate a comparison set.

iwod - Thursday, August 10, 2017

It really depends on use case. 20% slower on PDF opening? I don't care, because the time has reached diminishing returns and Intel needs to be MUCH faster for this to be a UX problem.

But I think at $999 Intel has a strong case for its i9. But factoring in the MB AMD is still cheaper. Not sure if that is mentioned in the article.

Also note Intel is on their third iteration of 14nm, against a new 14nm from AMD GloFlo.

I am very excited for 7nm Zen 2 coming next year. I hope all the software and compiler as well as optimisation has time to catch up for Zen.

Zstream - Thursday, August 10, 2017

I won't get into an argument, but I and many of my friends, who are on the developer side of the house have been waiting for this review, and it doesn't provide me with any useful information. I understand it might be Johan's wheelhouse, but come on... opening a damn .pdf file, and testing SunSpider/Kraken/gaming benchmarks? That won't provide anyone interested in either CPU any validation of purchase. I'm not trying to be salty, I just want some more damn details vs. trying to put both vendors in a good light.

Ian Cutress - Thursday, August 10, 2017

Rather than have 20 different tests for each set of different CPUs and very minimal overlap, we have a giant glove that has all the tests for every CPU in a single script. So 80 test points, rather than 4x20. The idea is that there are benchmarks for everyone, so you can ignore the ones that don't matter, rather than expect 100% of the benchmarks to matter (e.g. if you care about five tests, does it matter to you if the tests are published alongside 75 other tests, or do they have to be the only five tests in the review?). It's not a case of trying to put both vendors in a good light, it's a case of this is a universal test suite.

Zstream - Thursday, August 10, 2017

Well, show me a database benchmark, virtual machine benchmark, 3dmax benchmark, blender benchmark and I'll shutty ;)

It's hard for me to look at this review outside of a gamer's perspective, which I'm not. Sorry, just the way I see it. I'll wait for more pro-consumer benchmarks?

Johan Steyn - Thursday, August 10, 2017

This is exactly my point as well. Why on earth so much focus on single threaded tests and games, since we all knew from way back TR was not going to be a winner here? Where are all the other benches as you mention? Oh, no, this will have Intel look bad!!!!!