The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

CPU Office Tests

The office programs we use for benchmarking aren't specific programs per se, but industry-standard tests that hold weight with professionals. The goal of these tests is to use an array of software and techniques that a typical office user might encounter, such as video conferencing, document editing, and architectural modeling.

All of our benchmark results can also be found in our benchmark engine, Bench.

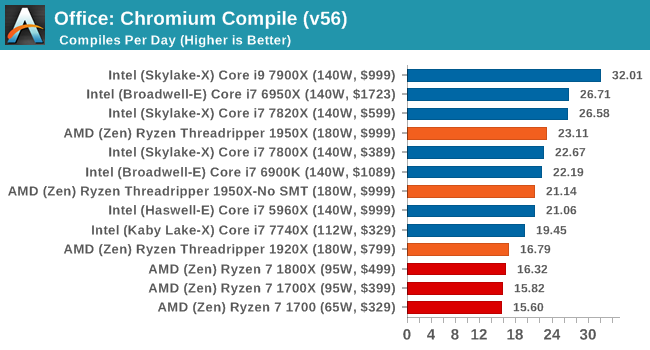

Chromium Compile (v56)

Our new compilation test uses Windows 10 Pro and VS Community 2015.3 with the Win10 SDK to compile a nightly build of Chromium. We've fixed the test to a build from late March 2017, and we run a fresh full compile in our test. Compilation is the typical example given of a variably threaded workload: some of the compiling and linking is linear, whereas other parts are multithreaded.
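To illustrate why a partly linear, partly multithreaded workload does not scale in step with core count, here is a minimal sketch based on Amdahl's law. The 90% parallel fraction is a made-up illustrative value, not a measurement of the Chromium build, and the function names are hypothetical helpers rather than part of our test harness. The second helper shows how a single build time would convert into a compiles-per-day figure.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup over a single core when only part of the work scales.

    parallel_fraction is the share of the build (0.0 to 1.0) that can be
    spread across cores; the remainder is assumed to run on one core.
    """
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def compiles_per_day(build_seconds: float) -> float:
    """Convert the time for one full build into builds per 24 hours."""
    return 86_400 / build_seconds


if __name__ == "__main__":
    # Assumption: 90% of the build parallelizes. This is illustrative only.
    for cores in (8, 12, 16):
        print(f"{cores} cores: {amdahl_speedup(0.90, cores):.2f}x")
```

Under this idealized model, doubling from 8 to 16 cores gives well under double the throughput, and it ignores cross-core latency and memory effects entirely, so real results can deviate in either direction.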

The compile test is one of the more interesting data points in our suite, and it is surprising to see the 1920X only just beat the Ryzen 7 chips. Because this test requires a lot of cross-core communication, the fewer cores per CCX there are, the worse the result. This, along with lower latency memory support, is why the 1950X in SMT-off mode beats the 1920X, which has three cores per CCX. We know that this test is not too keen on victim caches either, but it does seem that the 2MB per core ratio does well for the 1950X, and could explain the performance difference moving from 8 to 12 to 16 cores under the Zen microarchitecture.

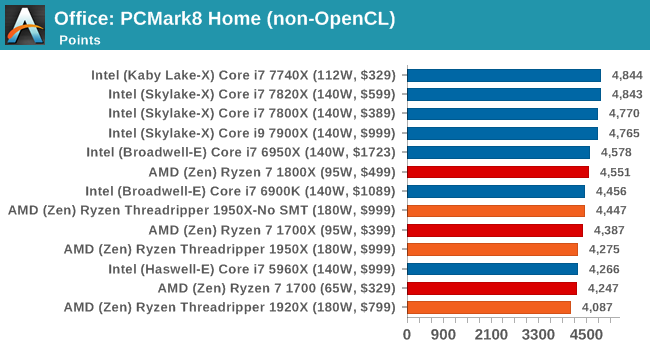

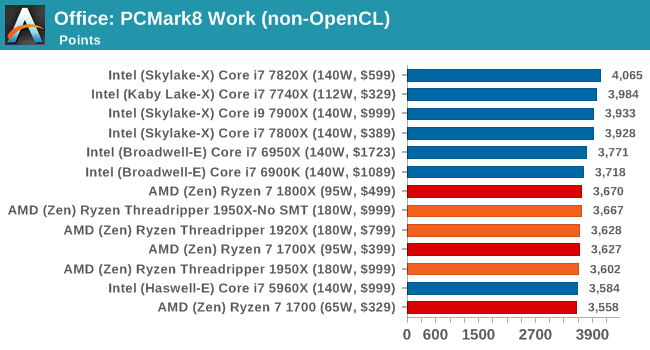

PCMark8

Despite originally coming out in 2008/2009, PCMark8 has been maintained by Futuremark so that it remains relevant in 2017. On the scale of complicated tasks, PCMark focuses more on the low-to-mid range of professional workloads, making it a good indicator for what people consider 'office' work. We run the benchmark from the command line in 'conventional' mode, meaning C++ over OpenCL, to remove the graphics card from the equation and focus purely on the CPU. PCMark8 offers Home, Work and Creative workloads, with some software tests shared and others unique to each benchmark set.

Strangely, PCMark 8's Creative test seems to be failing across the board. We're trying to narrow down the issue.

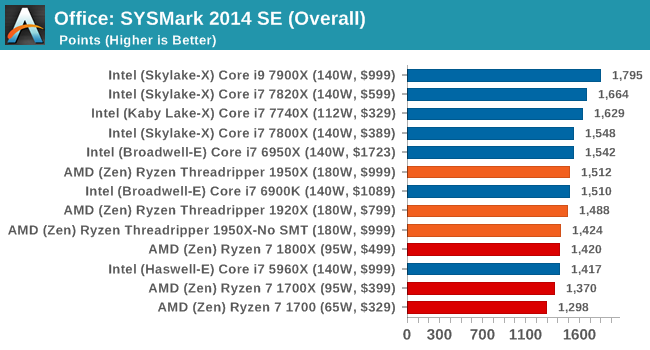

SYSmark 2014 SE

SYSmark is developed by BAPCo, a consortium of industry CPU companies. The goal of SYSmark is to take stripped-down versions of popular software, such as Photoshop and OneNote, and measure how long it takes to process certain tasks within that software. The end result is a score for each of the three segments (Office, Media, Data) as well as an overall score. Here a reference system (Core i3-6100, 4GB DDR3, 256GB SSD, Integrated HD 530 graphics) is used to provide a baseline score of 1000 in each test.
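As a rough illustration of how a time-based result can be expressed against a reference system that scores 1000, consider the sketch below. BAPCo's exact formula is not described here, so both the simple time-ratio scaling and the use of a geometric mean for the overall score are assumptions, and the function names are hypothetical.

```python
from math import prod

REFERENCE_SCORE = 1000  # the reference system is defined to score 1000


def normalized_score(reference_seconds: float, measured_seconds: float) -> float:
    """Scale a task time against the reference system's time.

    Assumption: the score is inversely proportional to elapsed time, so a
    system that finishes in half the reference time scores 2000.
    """
    return REFERENCE_SCORE * reference_seconds / measured_seconds


def overall_score(segment_scores: list[float]) -> float:
    """Combine segment scores (e.g. Office, Media, Data) into one number.

    Assumption: a geometric mean, a common choice for benchmark suites
    because no single segment dominates the result.
    """
    return prod(segment_scores) ** (1 / len(segment_scores))


if __name__ == "__main__":
    # Hypothetical timings in seconds, for illustration only.
    office = normalized_score(reference_seconds=120.0, measured_seconds=100.0)
    media = normalized_score(reference_seconds=300.0, measured_seconds=150.0)
    data = normalized_score(reference_seconds=200.0, measured_seconds=160.0)
    print(round(overall_score([office, media, data])))
```

The takeaway is only that a score above 1000 means the tested system completed the work faster than the reference machine, and a score below 1000 means it was slower.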

A note on context for these numbers: AMD left BAPCo in the last two years, due to differences of opinion on how the benchmarking suites were chosen. AMD believed the tests were angled towards Intel processors and had optimizations that showed bigger differences than AMD felt were present. The following benchmarks are provided as data, and the conflict of opinion between the two companies on the validity of the benchmark is provided as context for them.

347 Comments

lefty2 - Thursday, August 10, 2017 - link

except that they haven't

Dr. Swag - Thursday, August 10, 2017 - link

How so? You have the performance numbers, and they gave you power draw numbers...

bongey - Thursday, August 10, 2017 - link

Just do an AVX-512 benchmark and Intel will jump over 300 watts, 400 watts (overclocked), from the CPU alone (Prime95 AVX-512 benchmark). See der8auer's video "The X299 VRM Disaster (en)".

DanNeely - Thursday, August 10, 2017 - link

The Chromium build time results are interesting. Anandtech's results have the 1950X only getting 3/4ths of the 7900X's performance. Arstechnica's getting almost equal results on both CPUs, but at 16 compiles per day vs 24 or 32 is seeing significantly worse numbers all around.

I'm wondering what's different between the two compile benchmarks to see such a large spread.

cknobman - Thursday, August 10, 2017 - link

I think it has a lot to do with the RAM used by Anandtech vs Arstechnica.

For all the regular benchmarking Anand used DDR4 2400; the DDR4 3200 was only used in some of the overclocking.

Arstechnica used DDR4 3200 for all benchmarking.

Everyone already knows how faster DDR4 memory helps the Zen architecture.

DanNeely - Thursday, August 10, 2017 - link

If RAM was the determining factor, Ars should be seeing faster build times though, not slower ones.

carewolf - Thursday, August 10, 2017 - link

Anandtech must have misconfigured something. Building Chromium scales practically linearly. You can move jobs all the way across a slow network and compile on another machine and you still get linear speed-ups with more added cores.

Ian Cutress - Thursday, August 10, 2017 - link

We're using a late March v56 code base with MSVC.

Ars is using a newer v62 code base with clang-cl and VC++ linking.

We locked in our versions when we started testing Windows 10 a few months ago.

supdawgwtfd - Friday, August 11, 2017 - link

Maybe drop it then, as it is not at all useful info.

Johan Steyn - Thursday, August 10, 2017 - link

I refrained from posting on the previous article, but now I'm quite sure Anand is being paid by Intel. It is not that I argue against the benchmarks, but how they are presented. I was even under the impression that this was an Intel review.

The previous article was titled "Introducing Intel's Desktop Processor". Huge marketing research is done on how to market products. By just stating one thing first or in a different way, quite different messages can be conveyed without lying outright.

By making the "Most Powerful, Most Scalable" bold, that is what the readers read first, then they read "Desktop Processor" without even reading that it is Intel's. This is how marketing works, so Anand used slanted journalism to favour Intel, yet most people will just not realise it and eat it up.

In this review there are so many slanted journalism problems, it is just sad. If you want, just compare it to other sites' reviews. They just omit certain tests and list others at which Intel excels.

I have lost my respect for Anandtech with these last two articles of theirs, and I have followed Anandtech since its inception. Sad to see that you are also now bought by Intel, even though I suspected this before. Congratulations for making this so clear!!!