The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Analyzing Creator Mode and Game Mode

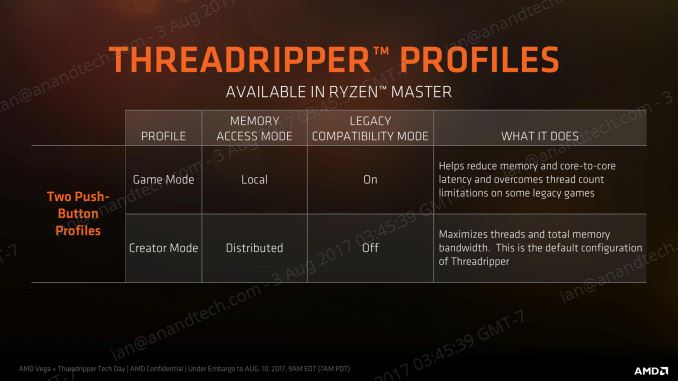

Way back on page 3, this review explained that AMD was promoting two modes: Creator Mode, with all cores enabled and a uniform memory access (UMA) architecture, and Game Mode, which disables one of the dies and switches to a non-uniform memory access (NUMA) architecture. The idea is that Creator Mode gives you all the threads and bandwidth, while Game Mode focuses on compatibility with games that freak out when presented with too many cores, and also on improving memory and core-to-core latency by pinning data as close to the core as possible and keeping related threads within the same Zeppelin die. Both modes have their positives and negatives, and although they can be switched with a button press in Ryzen Master and a reboot, most users who care enough about these settings are likely to set it and forget it. (And then notice that if the BIOS resets, so do the settings…)
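For readers who want to confirm which memory mode is active after the reboot, the following is a minimal sketch rather than an AMD-provided tool. It assumes a Linux system that exposes one `nodeN` directory per NUMA node under `/sys/devices/system/node`, and it assumes the UMA arrangement shows up as a single node while the NUMA arrangement shows up as more than one. The function names `count_numa_nodes` and `describe_memory_mode` are hypothetical and chosen only for illustration.

```python
import os
import re


def count_numa_nodes(sysfs_root="/sys/devices/system/node"):
    """Count the NUMA nodes the OS reports, or return None if unknown.

    Assumption: Linux sysfs layout, one 'nodeN' directory per node.
    """
    try:
        entries = os.listdir(sysfs_root)
    except OSError:
        # Path missing or unreadable: not Linux, or sysfs is unavailable.
        return None
    return sum(1 for name in entries if re.fullmatch(r"node\d+", name))


def describe_memory_mode(node_count):
    """Map a node count to a rough label (illustrative wording only)."""
    if node_count is None:
        return "unknown (no NUMA information found)"
    if node_count <= 1:
        return "UMA-like (single node)"
    return f"NUMA ({node_count} nodes)"


if __name__ == "__main__":
    print(describe_memory_mode(count_numa_nodes()))
```

A single reported node would be consistent with the UMA setting and two nodes with the NUMA setting, but this only reflects what the operating system sees; it does not report how many cores are enabled.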

*This page has been edited on 8/17, due to a misinterpretation in the implementation of Game Mode. This original review has been updated to reflect this. We have written a secondary mini-article with fresh testing on the effects of Game Mode.

347 Comments


mapesdhs - Friday, August 11, 2017 - link

And consoles are on the verge of moving to many-cores main CPUs. The inevitable dev change will spill over into PC gaming.

RoboJ1M - Friday, August 11, 2017 - link

On the verge?

All major consoles have had a greater core count than consumer CPUs, not to mention complex memory architectures, since, what, 2005?

One suspects the PC market has been benefiting from this for quite some time.

RoboJ1M - Friday, August 11, 2017 - link

Specifically, the 360 had 3 general purpose CPU cores

And the PS3 had one general purpose CPU core and 7 short pipeline coprocessors that could only read and write to their caches. They had to be fed by the CPU core.

The 360 had unified program and graphics ram (still not common on PC!)

As well as its large high speed cache.

The PS3 had separate program and video ram.

The Xbox one and PS4 were super boring pcs in boxes. But they did have 8 core CPUs. The x1x is interesting. It's got unified ram that runs at ludicrous speed. Sadly it will only be used for running games in 1800p to 2160p at 30 to 60 FPS :(

mlambert890 - Saturday, August 12, 2017 - link

Why do people constantly assume this is purely time/market economics?

Not everything can *be* parallelized. Do people really not get that? It isn't just developers targeting a market. There are tasks that *can't be parallelized* because of the practical reality of dependencies. Executing ahead and out of order can only go so far before you have an inverse effect. Everyone could have 40 core CPUs... It doesn't mean that *gaming workloads* will be able to scale out that well.

The work that lends itself best to parallelization is the rendering pipeline and that's already entirely on the GPU (which is already massively parallel)

Magichands8 - Thursday, August 10, 2017 - link

I think what AMD did here though is fantastic. In my mind, creating a switch to change modes vastly adds to the value of the chip. I can now maximize performance based upon workload and software profile and that brings me closer to having the best of both worlds from one CPU.

Notmyusualid - Sunday, August 13, 2017 - link

@ rtho782

I agree it is a mess, and also, it is not AMD's fault.

I've had a 14c/28t Broadwell chip for over a year now, and I cannot launch Tomb Raider with HT on, nor GTA5. But most s/w is indifferent to the amount of cores presented to them, it would seem to me.

BrokenCrayons - Thursday, August 10, 2017 - link

Great review but the word "traditional" is used heavily. Given the short lifespan of computer parts and the nature of consumer electronics, I'd suggest that there isn't enough time or emotional attachment to establish a tradition of any sort. Motherboard sockets and market segments, for instance, might be better described in other ways unless it's becoming traditional in the review business to call older product designs traditional. :)

mkozakewich - Monday, August 14, 2017 - link

Oh man, but we'll still gnash our teeth at our broken tech traditions!

lefty2 - Thursday, August 10, 2017 - link

It's pretty useless measuring power alone. You need to measure efficiency (performance/watt).

So yeah, a 16 core CPU draws more power than a 10 core, but it is also probably doing a lot more work.

Diji1 - Thursday, August 10, 2017 - link

Er why don't you just do it yourself, they've already given you the numbers.