The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Power Consumption and Distribution

With Threadripper weighing in at a TDP of 180W, it comes across as a big jump from previous AMD platforms that hover at 45-95W, or Intel platforms that are sub-95W for mainstream and up to 140W for the high-end desktop. Let us not forget that AMD actually released a 220W TDP processor in the form of the FX-9590 running at 5 GHz, which was initially sold for 12+ months as a part purely for OEMs and system integrators in order to ensure that users had sufficient cooling. Eventually it was released as a consumer product, bundled with a beefy double-width liquid cooler and a pair of fans. AMD sampled us a CPU, but not before I had spent £300 on one myself and reviewed it.

Nonetheless, 180W for AMD isn’t a new concept for TDP. For this review I’ve been using the liquid cooler AMD shipped with our FX-9590 sample, because it was designed to handle at least 220W. (AMD also sampled a 3x120 Thermaltake cooler with Threadripper, which would have taken a lot longer to organise on the test bed.)

For our power testing, we run Prime95 for at least 60 seconds, then use software to poll the integrated power counters on the chip to get results. Depending on the CPU, we can get data for the full chip, per core, DRAM, uncore or integrated graphics – it relies on our tool being up to date or the registers for this data being known. While this way of reading the power consumption can be a smidge inaccurate compared to more invasive methods, it is quick and scriptable, and it is this data that governs if and when a CPU is hitting power limits and needs to adjust fan speeds/frequencies to compensate.
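As a rough illustration of how counter-based readings turn into watts: chips that expose a cumulative energy counter let software compute average power as the energy difference between two polls divided by the elapsed time. The sketch below assumes a hypothetical 32-bit counter that reports microjoules. The counter width, the units and the function name are assumptions for illustration, not details of the tool used in this review.

```python
def average_power_watts(e_start_uj, e_end_uj, seconds, counter_bits=32):
    """Average power between two polls of a cumulative energy counter.

    e_start_uj / e_end_uj: counter readings in microjoules (assumed unit).
    counter_bits: assumed counter width, used to undo a single wraparound.
    """
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    wrap = 1 << counter_bits
    # Modular subtraction gives the right delta even if the counter
    # rolled over once between the two polls.
    delta_uj = (e_end_uj - e_start_uj) % wrap
    return delta_uj / 1e6 / seconds


# Example: 177 J consumed over a one-second polling interval.
print(average_power_watts(1_000_000, 178_000_000, 1.0))
```

Polling over a longer interval smooths out short spikes, which is one reason a sustained load such as a 60-second Prime95 run gives steadier numbers than a single instantaneous reading.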

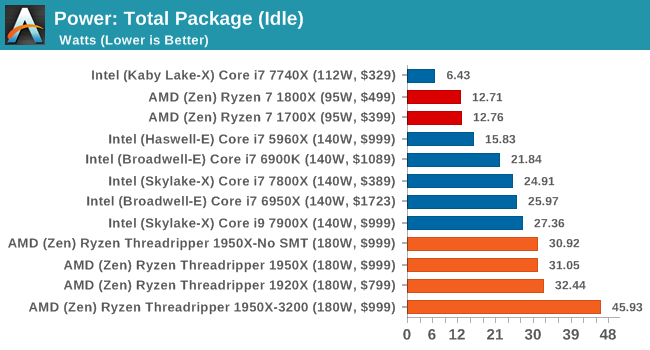

To start, let us take the full package power consumption for Threadripper.

On the whole, Threadripper is a hungry chip even at idle. Most of the power here is being consumed by the memory controller and PCIe bus to keep the GPU ticking over with a static display. The fact that the 1950X running DDR4-3200 memory is pulling another 13W+ from the CPU shows how much of an impact the memory controller has on total power consumption. For all the chips, we’re recording sub 2W power draw from the cores.

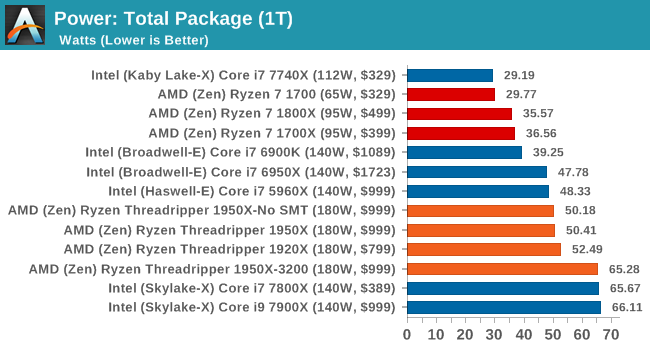

When we load up the package with a single thread, it fires up the uncore/mesh as well as the memory and puts the system into its peak turbo state. Depending on the way the CPU is designed, this could fire up a single core or a bank of cores, so although only one core in that bank will be doing the work, the rest can still drain enough power to be noticeable.

The results show all the Threadripper CPUs again hitting around the same mark, well above the Ryzen CPUs, and matching the 10C/8C parts from Broadwell-E and Haswell-E respectively. The 1950X running DDR4-3200 is still pulling an additional 13W, but interestingly the Skylake-X cores have jumped in power consumption to around this level. It would appear that the MoDe-X interconnect used in Skylake-X can also draw substantial power.

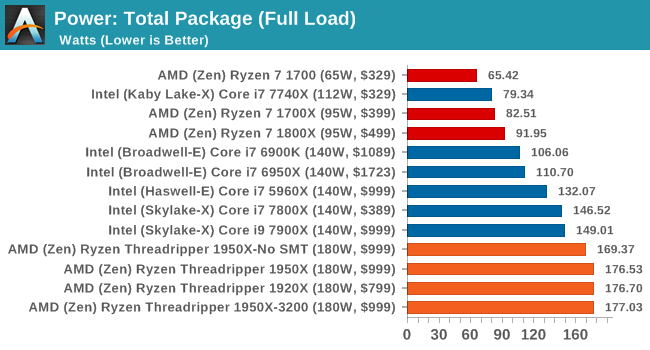

The next test is running the CPU with a full complement of threads for the design of the chip. This usually puts maximum strain on all the cores, the interconnect and the memory controller.

All the Threadripper CPUs hit around 177W, just under the 180W TDP, while the Skylake-X CPUs move to their 140W TDP. The 1950X in Game Mode seems to draw a little less power, which might be due to how the DRAM is being run in a NUMA environment.

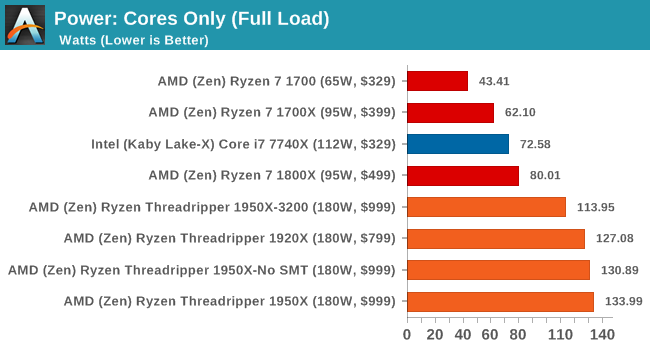

One of the other graphs we have for some of the chips is the ‘cores-only’ power draw. At full load, we get an interesting plot:

The key element to this graph is the 1950X running at DDR4-3200. Because the faster DRAM requires the memory controller to draw more power, it leaves less power for the CPU cores, potentially resulting in a lower turbo core frequency. So while the faster memory might guarantee faster performance in memory limited scenarios, the core frequency might end up lower, giving worse performance overall. It’s an interesting thought, so we plotted the per-core power for the 1950X at DDR4-2400 and DDR4-3200.

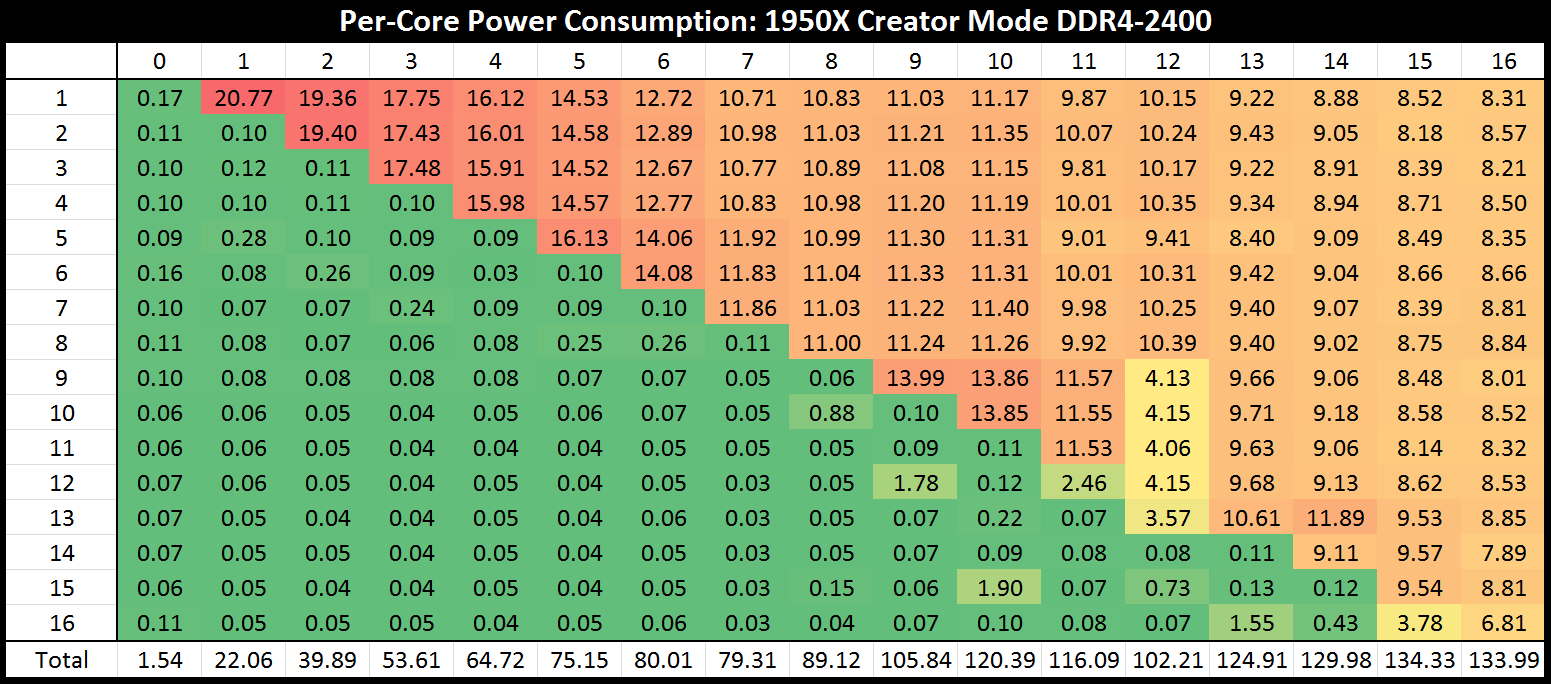

In this graph, the core number on the vertical axis is where the power measurement is taken, while from left to right is where we are loading up the cores, two threads at a time.

Initially we see that with two threads being loaded onto one core, that single core is drawing 20.77W. This quickly moves down to 19W, 17W, 16W to 11W by the time that half of the chip is loaded. At this point, with 8 cores loaded, the cores on their own are drawing 89W – if we add in the DRAM controllers, this would certainly be more than a Ryzen CPU. However, as we move past 10 cores loaded, something odd happens – the total power consumption of the cores drops from 120W to 116W to 102W when 24 threads are in play. This is indicated by the second silicon die drawing less power per core. It then ramps up again, with the full chip giving each core about 8.2W.

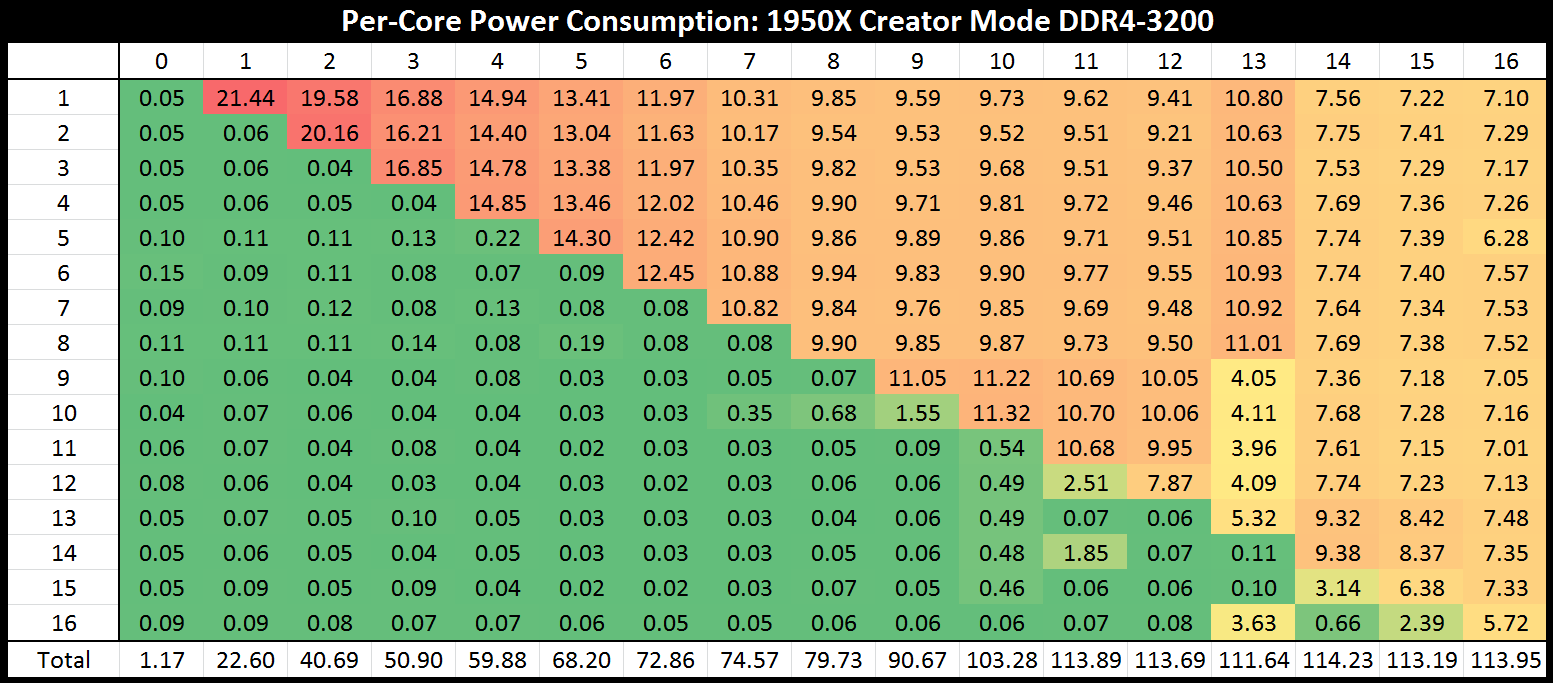

Moving onto the DDR4-3200 graph shows a similar scenario:

At first, the single core gets a big 21W, although as we load up the cores, by the time we hit 4 cores/8 threads the sub-15W per core at DDR4-3200 is being eclipsed by the 16W per core at DDR4-2400. Moving through we see a small wobble at 24-26 threads again, with the final tally putting only 114W onto the cores, 20W less than at DDR4-2400.
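To make the arithmetic behind that comparison concrete, the short sketch below uses the rounded figures quoted in this section: roughly 177W of package power at full load, 114W on the cores at DDR4-3200, and about 20W more on the cores at DDR4-2400. It assumes the same package figure applies at both memory speeds, and the function and variable names are illustrative only. Because the inputs are rounded, the outputs are approximate.

```python
CORES = 16          # 1950X core count
PACKAGE_W = 177.0   # approximate full-load package power (assumed equal for both memory speeds)


def split_power(core_total_w, package_w=PACKAGE_W, cores=CORES):
    """Return (average watts per core, watts left for everything outside the cores)."""
    per_core = core_total_w / cores
    non_core = package_w - core_total_w
    return per_core, non_core


ddr4_3200 = split_power(114.0)         # cores-only total quoted at DDR4-3200
ddr4_2400 = split_power(114.0 + 20.0)  # "20W less than at DDR4-2400"

print(f"DDR4-2400: {ddr4_2400[0]:.1f} W/core, {ddr4_2400[1]:.0f} W outside the cores")
print(f"DDR4-3200: {ddr4_3200[0]:.1f} W/core, {ddr4_3200[1]:.0f} W outside the cores")
```

Under these assumptions, the extra power drawn outside the cores at DDR4-3200 comes directly out of the per-core budget, which is the trade-off described above.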

Some of the data for Game Mode did not come through properly, so we can’t draw many conclusions from what we have, although an interesting point should be made. In Game Mode, when a system requires a low number of threads, say anywhere from 2-8, because SMT is disabled these threads need to run on different CCXes. In Creator Mode, these threads would group into 1-4 cores over one CCX, and consume less power. At DDR4-2400, this means 65W in Creator Mode for 8 threads (4 cores) compared to 89W in Game Mode for 8 cores active.

347 Comments

mapesdhs - Friday, August 11, 2017 - link

And consoles are on the verge of moving to many-cores main CPUs. The inevitable dev change will spill over into PC gaming.

RoboJ1M - Friday, August 11, 2017 - link

On the verge?

All major consoles have had a greater core count than consumer CPUs, not to mention complex memory architectures, since, what, 2005?

One suspects the PC market has been benefiting from this for quite some time.

RoboJ1M - Friday, August 11, 2017 - link

Specifically, the 360 had 3 general purpose CPU cores

And the PS3 had one general purpose CPU core and 7 short pipeline coprocessors that could only read and write to their caches. They had to be fed by the CPU core.

The 360 had unified program and graphics ram (still not common on PC!)

As well as its large high speed cache.

The PS3 had separate program and video ram.

The Xbox one and PS4 were super boring pcs in boxes. But they did have 8 core CPUs. The x1x is interesting. It's got unified ram that runs at ludicrous speed. Sadly it will only be used for running games in 1800p to 2160p at 30 to 60 FPS :(

mlambert890 - Saturday, August 12, 2017 - link

Why do people constantly assume this is purely time/market economics?

Not everything can *be* parallelized. Do people really not get that? It isn't just developers targeting a market. There are tasks that *can't be parallelized* because of the practical reality of dependencies. Executing ahead and out of order can only go so far before you have an inverse effect. Everyone could have 40 core CPUs... It doesn't mean that *gaming workloads* will be able to scale out that well.

The work that lends itself best to parallelization is the rendering pipeline and that's already entirely on the GPU (which is already massively parallel)

Magichands8 - Thursday, August 10, 2017 - link

I think what AMD did here though is fantastic. In my mind, creating a switch to change modes vastly adds to the value of the chip. I can now maximize performance based upon workload and software profile and that brings me closer to having the best of both worlds from one CPU.

Notmyusualid - Sunday, August 13, 2017 - link

@ rtho782

I agree it is a mess, and also, it is not AMDs fault.

I've had a 14c/28t Broadwell chip for over a year now, and I cannot launch Tomb Raider with HT on, nor GTA5. But most s/w is indifferent to the amount of cores presented to them, it would seem to me.

BrokenCrayons - Thursday, August 10, 2017 - link

Great review but the word "traditional" is used heavily. Given the short lifespan of computer parts and the nature of consumer electronics, I'd suggest that there isn't enough time or emotional attachment to establish a tradition of any sort. Motherboards sockets and market segments, for instance, might be better described in other ways unless it's becoming traditional in the review business to call older product designs traditional. :)

mkozakewich - Monday, August 14, 2017 - link

Oh man, but we'll still gnash our teeth at our broken tech traditions!

lefty2 - Thursday, August 10, 2017 - link

It's pretty useless measuring power alone. You need to measure efficiency (performance/watt).

So yeah, a 16 core CPU draws more power than a 10 core, but it is also probably doing a lot more work.

Diji1 - Thursday, August 10, 2017 - link

Er why don't you just do it yourself, they've already given you the numbers.