The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Grand Theft Auto

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and extends the boundaries by pushing even the hardest systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, when cranked up to maximum it creates stunning visuals but hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet, an inner-city drive-by through several intersections, and the ramming of a tanker that explodes, causing other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully spits out frame time data.

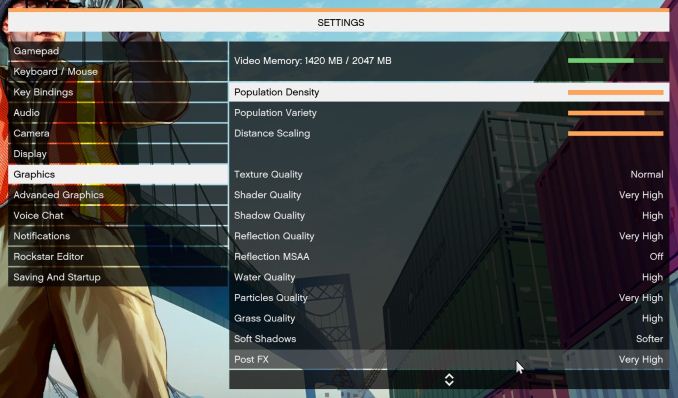

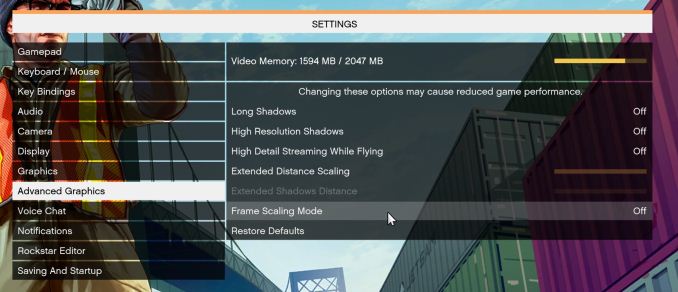

There are no presets for the graphics options in GTA. Instead, the user adjusts options such as population density and distance scaling on sliders, while others such as texture/shadow/shader/water quality range from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy option at the top which shows how much video memory the options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication if you have a low-end GPU with lots of GPU memory, like an R7 240 4GB).

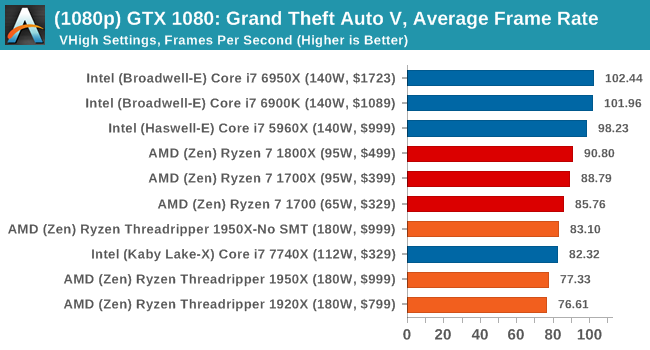

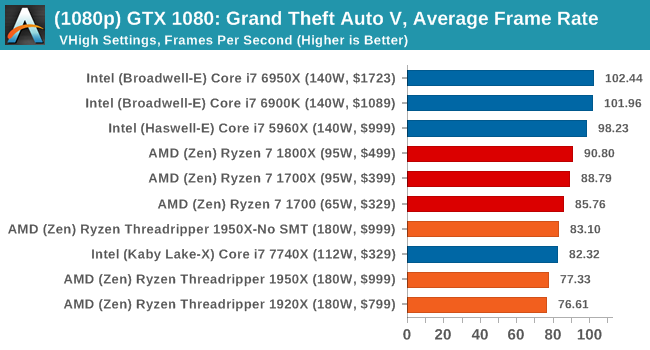

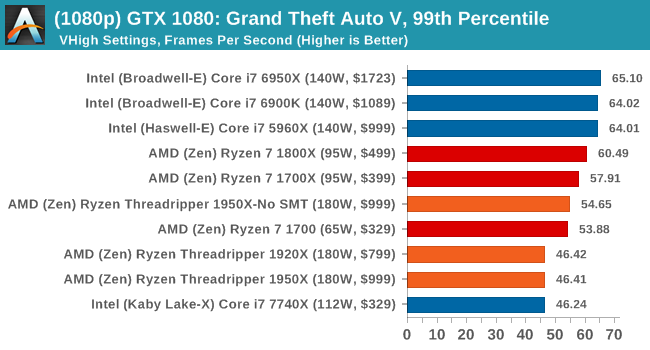

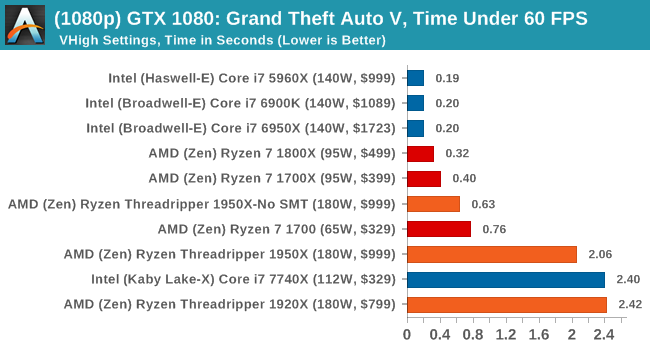

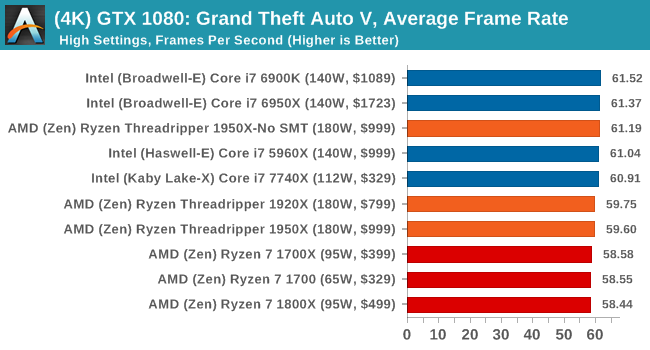

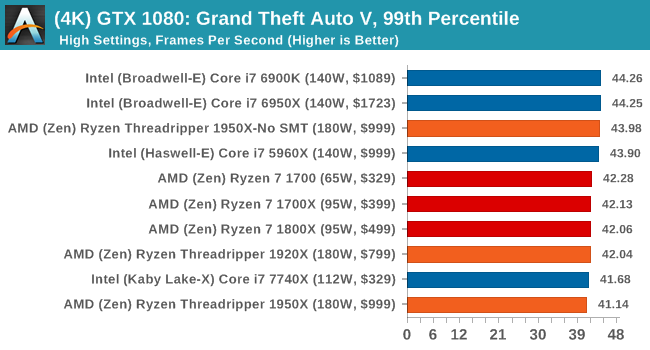

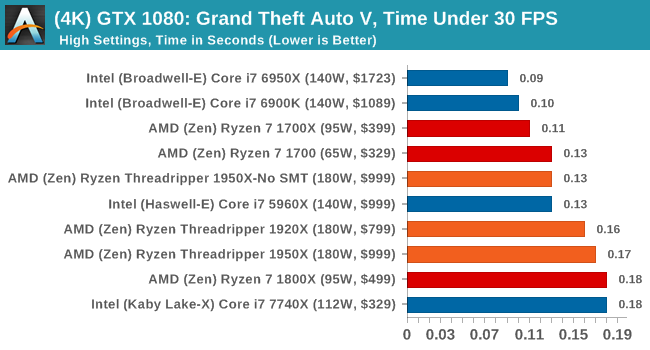

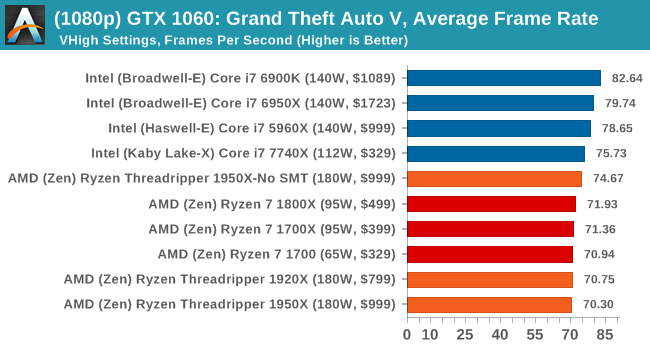

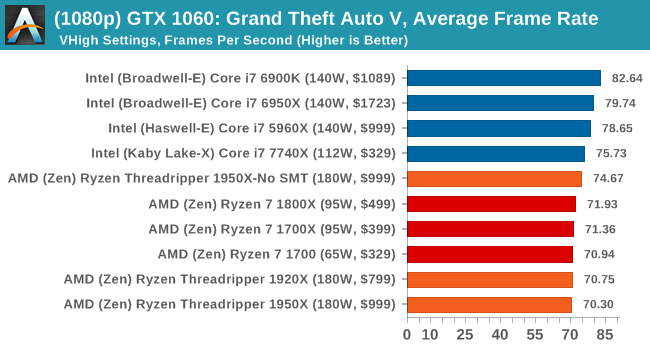

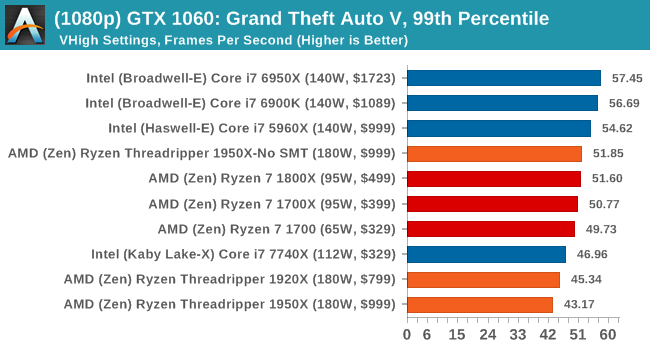

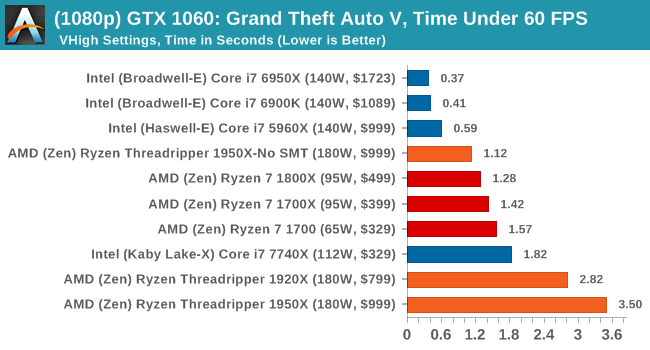

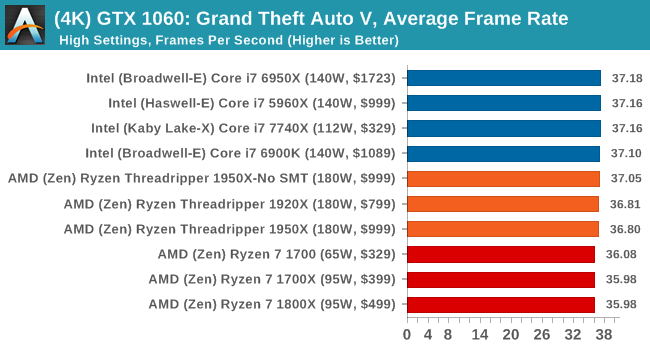

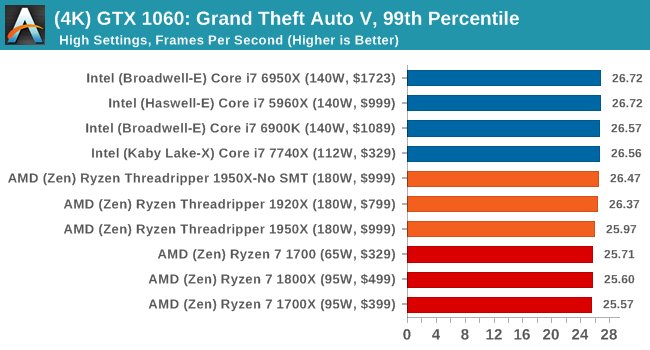

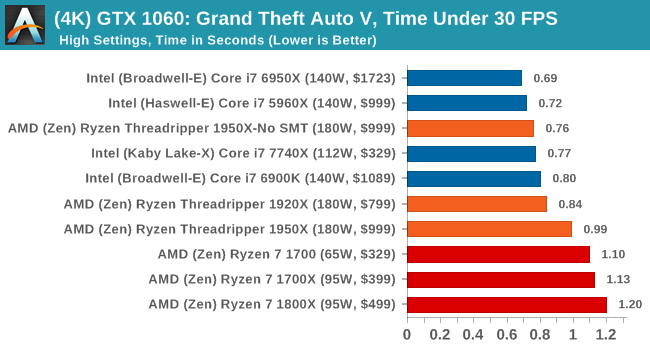

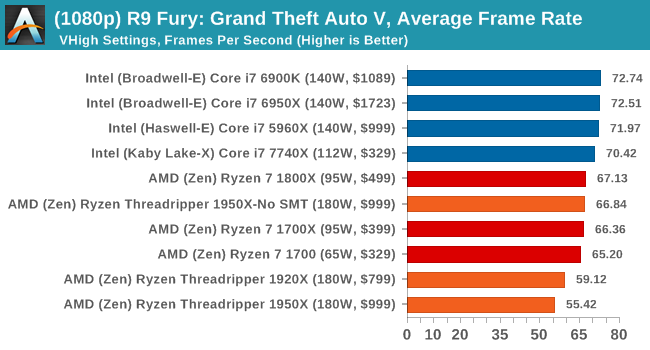

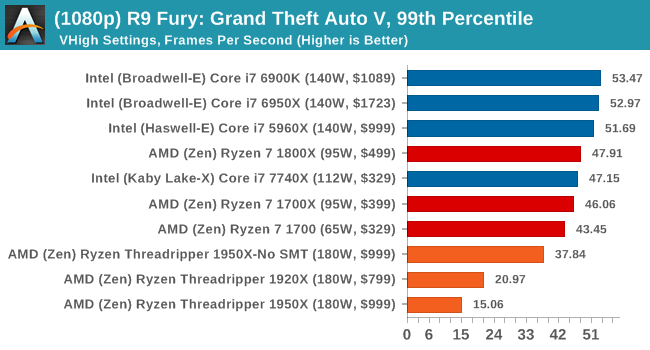

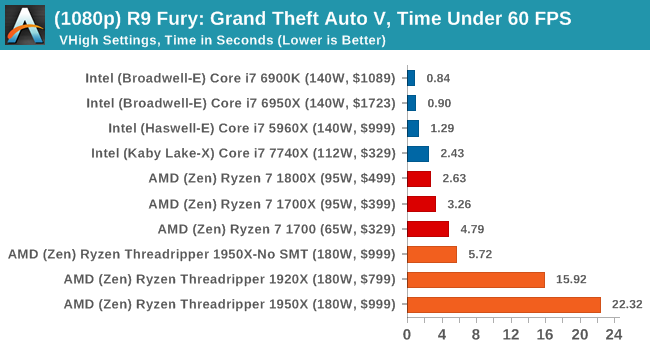

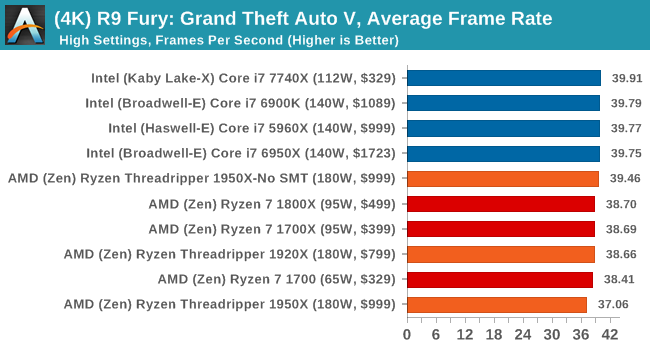

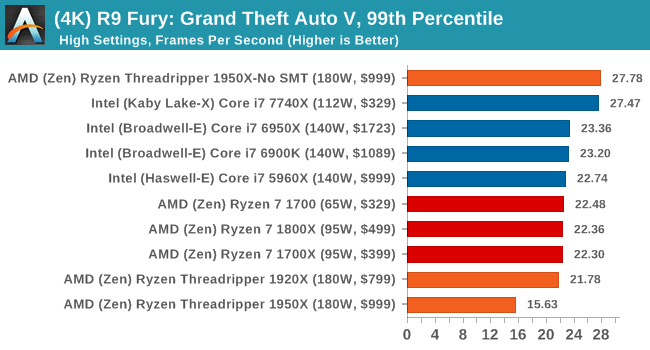

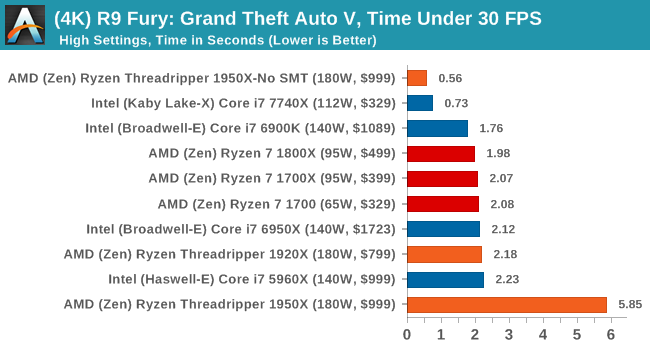

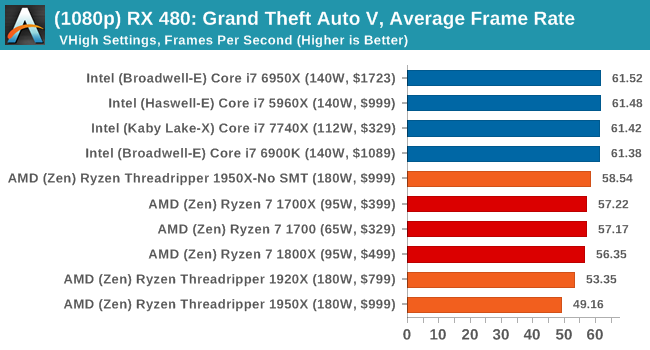

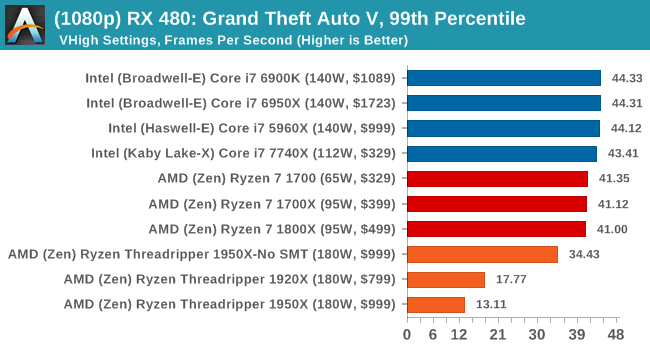

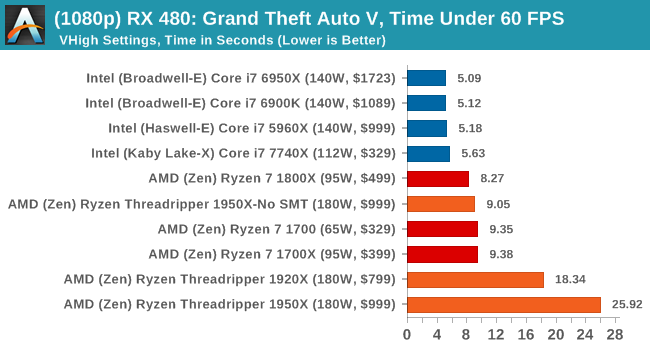

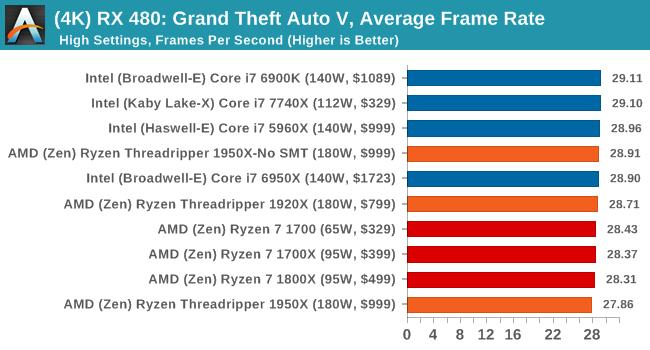

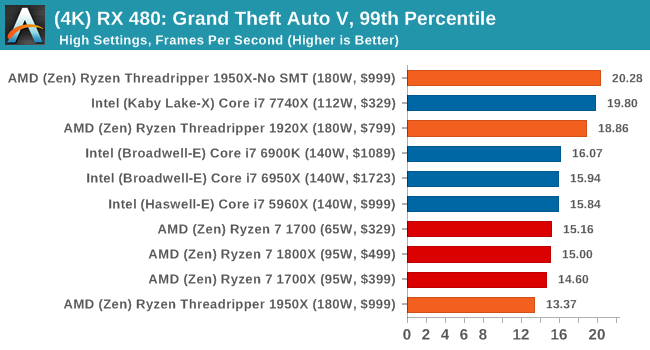

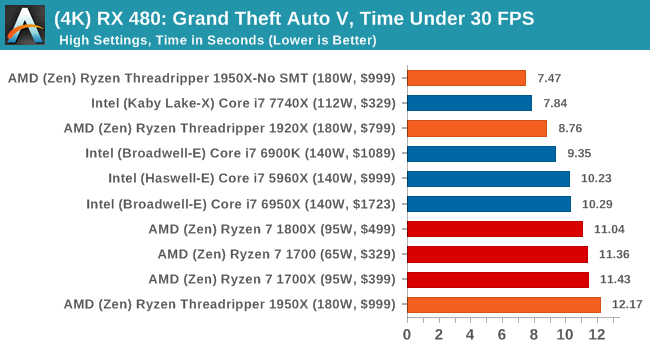

To that end, we run the benchmark at 1920x1080 using an average of Very High on the settings, and also at 4K using High on most of them. We take the average results of four runs, reporting frame rate averages, 99th percentiles, and our time under analysis.
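As a rough illustration of how such figures can be derived from frame time data, the sketch below summarizes one run and then averages several runs. It is not our actual tooling: the function names, the nearest-rank percentile method, the 60 FPS threshold, and the reading of "time under" as the share of run time spent in frames slower than that threshold are all assumptions made for the example.

```python
from statistics import mean


def summarize_run(frame_times_ms, threshold_fps=60.0):
    """Summarize one run from per-frame render times in milliseconds.

    Hypothetical helper: metric definitions here are assumptions.
    """
    n = len(frame_times_ms)
    total_ms = sum(frame_times_ms)

    # Average frame rate: frames rendered divided by elapsed seconds.
    avg_fps = 1000.0 * n / total_ms

    # 99th percentile frame time (nearest-rank method), expressed as FPS.
    ordered = sorted(frame_times_ms)
    rank = (99 * n + 99) // 100  # integer ceil(0.99 * n), at least 1
    p99_fps = 1000.0 / ordered[rank - 1]

    # "Time under" (assumed meaning): fraction of the run's total time
    # spent in frames that took longer than the threshold allows.
    limit_ms = 1000.0 / threshold_fps
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    time_under = slow_ms / total_ms

    return {"avg_fps": avg_fps, "p99_fps": p99_fps, "time_under": time_under}


def average_runs(summaries):
    """Average each metric across several run summaries (e.g. four runs)."""
    keys = summaries[0].keys()
    return {k: mean(s[k] for s in summaries) for k in keys}


if __name__ == "__main__":
    # Synthetic data for illustration only: 98 fast frames and 2 slow ones.
    run = [10.0] * 98 + [50.0] * 2
    print(average_runs([summarize_run(run) for _ in range(4)]))
```

Expressing the 99th percentile as a frame rate keeps it directly comparable with the average, which is why the slowest frames are converted back to FPS rather than left in milliseconds.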

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

ASUS GTX 1060 Strix 6G Performance

1080p

4K

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

Sapphire Nitro RX 480 8G Performance

1080p

4K

Depending on the CPU, for the most part Threadripper performs near to Ryzen or just below it.

347 Comments


ddriver - Thursday, August 10, 2017

Yeah if all you do all day is compile chromium with visual studio... Take that result with a big spoon of salt.

Samus - Thursday, August 10, 2017

This thing can also decompress my HD pr0n RARs in record time!

carewolf - Thursday, August 10, 2017

The jokes is on you. More cores and more memory bandwidth is always faster for compiling. Anandtech must have butched the benchmark here. Other sites show ThreadRipper whipping i9 ass as expected.

bongey - Thursday, August 10, 2017

They did without a doubt screw up the compile test. The 6950x is a 10 core /20 thread intel cpu, but somehow the 7900x has 20% improvement, when no other test even comes close to that much of an improvement. The 7900x is basically just bump in clock speed for a 6950x.

Ian Cutress - Thursday, August 10, 2017

'The 7900X is basically just bump in clock speed for a 6950X'

L2 cache up to 1MB, L3 cache is a victim cache, mesh interconnect rather than rings.

mlambert890 - Saturday, August 12, 2017

It's basically as far from 'just a bump in clock speed' as any follow up release short of a full architecture revamp, but yeah ok.

rtho782 - Thursday, August 10, 2017

The whole game mode/creator mode, UMA/NUMA, etc seems a mess. Games not working with more than 20 threads is a joke although not AMDs fault....

mapesdhs - Thursday, August 10, 2017

Why is it a mess if peope choose to buy into this level of tech? It's bring formerly Enterprise-level tech to the masses, the very nature of how this stuff works makes it clear there are tradeoffs in design. AMD is forced to start off by dealing with a sw market that for years has focused on the prevalence of moderately low core count Intel CPUs with strong(er) IPC. Offering a simple hw choice to tailor the performance slant is a nice idea. I mean, what's your problem here? Do you not understand UMA vs. NUMA? If not, probably shouldn't be buying this level of tech. :D

prisonerX - Thursday, August 10, 2017

That will change. Why invest masses of expensive brainpower in aggressively multithreading your game or app when no-one has the hardware to use it? No they do.

Hurr Durr - Friday, August 11, 2017

Only in lala-land will HEDT processors occupy any meaningful part of the gaming market. We`re bound by consoles, and that is here to stay for years.