The AMD Ryzen 3 1300X and Ryzen 3 1200 CPU Review: Zen on a Budget

by Ian Cutress on July 27, 2017 9:30 AM EST

Posted in: CPUs, AMD, Zen, Ryzen, Ryzen 3, Ryzen 3 1300X, Ryzen 3 1200

Benchmarking Performance: CPU System Tests

Our first set of tests is our general system tests. This set of tests is meant to emulate what people usually do on a system, like opening large files or processing small stacks of data. This is a bit different from our office testing, which uses more industry-standard benchmarks, and a few of the benchmarks here are relatively new and different.

All of our benchmark results can also be found in our benchmark engine, Bench.

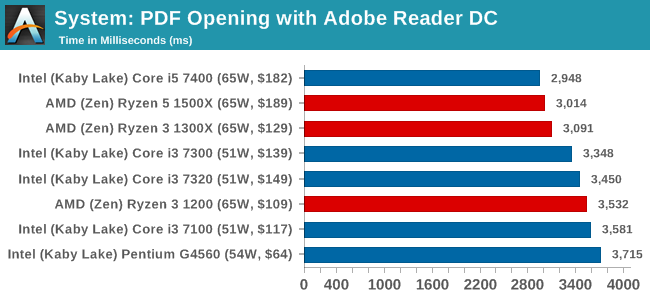

PDF Opening

First up is a self-penned test using a monstrous PDF we once received in advance of attending an event. While the PDF was only a single page, it had so many high-quality layers embedded that it took north of 15 seconds to open and to gain control on the mid-range notebook I was using at the time. This made it a great candidate for our 'let's open an obnoxious PDF' test. Here we use Adobe Reader DC and disable all of its update functionality. The benchmark sets the screen to 1080p, opens the PDF in fit-to-screen mode, and measures the time from sending the command to open the PDF until it is fully displayed and the user can take control of the software again. The test is repeated ten times, and the average time is taken. Results are in milliseconds.
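The repeat-and-average procedure described above can be sketched in a few lines of Python. This is only an illustration, not our actual harness: `mean_open_time_ms`, `open_document`, and `fake_open` are hypothetical names, and the stand-in workload simply sleeps rather than launching a PDF reader.

```python
import statistics
import time


def mean_open_time_ms(open_document, repeats=10):
    """Call open_document() `repeats` times and return the mean duration in ms."""
    samples_ms = []
    for _ in range(repeats):
        start = time.perf_counter()
        open_document()  # hypothetical: should block until the document is usable
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    return statistics.mean(samples_ms)


def fake_open():
    """Stand-in workload so the sketch runs anywhere (assumption, not a real PDF load)."""
    time.sleep(0.01)


result_ms = mean_open_time_ms(fake_open)
print(f"mean open time over 10 runs: {result_ms:.1f} ms")
```

In a real run, `open_document` would have to send the open command and return only once the reader accepts input again, which is the hard part of automating a test like this.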

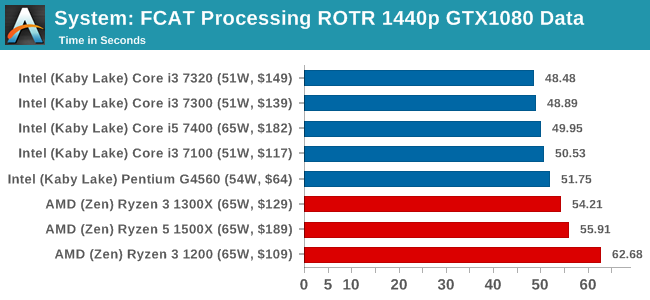

FCAT Processing

One of the more interesting workloads that has crossed our desks in recent quarters is FCAT - the tool we use to measure stuttering in gaming due to dropped or runt frames. The FCAT process requires enabling a color-based overlay onto a game, recording the gameplay, and then parsing the video file through the analysis software. The software is mostly single-threaded; however, because the video is basically in a raw format, the file size is large and requires moving a lot of data around. For our test, we take a 90-second clip of the Rise of the Tomb Raider benchmark running on a GTX 980 Ti at 1440p, which comes in around 21 GB, and measure the time it takes to process through the visual analysis tool.

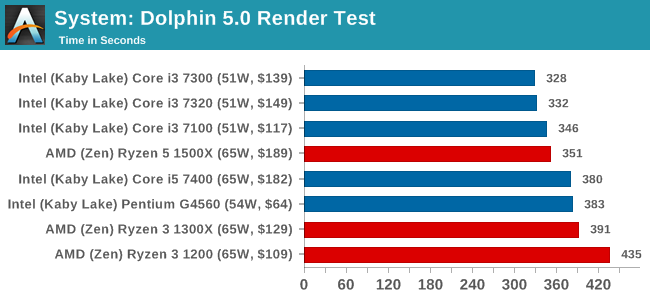

Dolphin Benchmark

Many emulators are bound by single-threaded CPU performance, and general reports tended to suggest that Haswell provided a significant boost to emulator performance. This benchmark runs a Wii program that ray traces a complex 3D scene inside the Dolphin Wii emulator. Performance on this benchmark is a good proxy for the speed of Dolphin CPU emulation, which is an intensive single-core task using most aspects of a CPU. Results are given in minutes, where the Wii itself scores 17.53 minutes.
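To put a Dolphin result in context against the Wii reference, the time can be converted to a speed-up factor. The sketch below assumes the quoted 17.53 figure is in decimal minutes; `speedup_vs_wii` and the example time are hypothetical and not taken from our results.

```python
WII_MINUTES = 17.53  # reference time for the Wii quoted in the text


def speedup_vs_wii(minutes):
    """Return how many times faster than the Wii a benchmark time is."""
    if minutes <= 0:
        raise ValueError("benchmark time must be positive")
    return WII_MINUTES / minutes


example_minutes = 8.0  # hypothetical result, for illustration only
print(f"{speedup_vs_wii(example_minutes):.2f}x the speed of the Wii")
```

Lower times are better here, so a factor above one means the emulated run finishes sooner than the original hardware.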

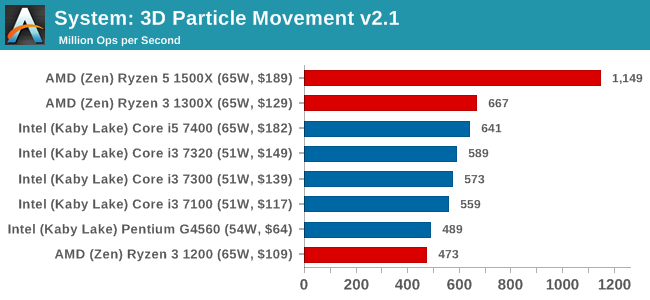

3D Movement Algorithm Test v2.1

This is the latest version of the self-penned 3DPM benchmark. The goal of 3DPM is to simulate semi-optimized scientific algorithms taken directly from my doctorate thesis. Version 2.1 improves over 2.0 by passing the main particle structs by reference rather than by value, and decreasing the amount of double->float->double recasts the compiler was adding in. It affords a ~25% speed-up over v2.0, which means new data.

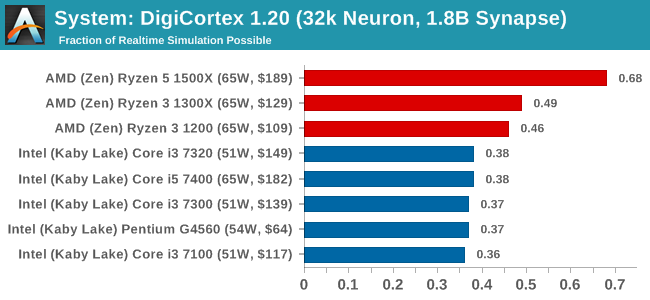

DigiCortex v1.20

Although it is a couple of years old, DigiCortex is a pet project for the visualization of neuron and synapse activity in the brain. The software comes with a variety of benchmark modes, and we take the small benchmark, which runs a 32k neuron/1.8B synapse simulation. Results are given as a fraction of real-time simulation speed, so anything above a value of one is suitable for real-time work. The benchmark offers a 'no firing synapse' mode, which in essence measures DRAM and bus speed; however, we take the firing mode, which adds CPU work with every firing.
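One way to read a score of this kind is as simulated time divided by wall-clock time. That definition is an assumption on our part for illustration, since the exact calculation DigiCortex uses is not spelled out here; `realtime_factor` and `is_realtime` are hypothetical helper names.

```python
def realtime_factor(simulated_seconds, wall_clock_seconds):
    """Assumed definition: seconds of simulated activity per second of wall-clock time."""
    if wall_clock_seconds <= 0:
        raise ValueError("wall-clock time must be positive")
    return simulated_seconds / wall_clock_seconds


def is_realtime(factor):
    """Anything above a value of one keeps up with real time."""
    return factor > 1.0


# Hypothetical numbers: 10 s of simulated activity computed in 20 s of wall-clock time.
factor = realtime_factor(10.0, 20.0)
print(f"factor {factor:.2f}, real-time capable: {is_realtime(factor)}")
```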

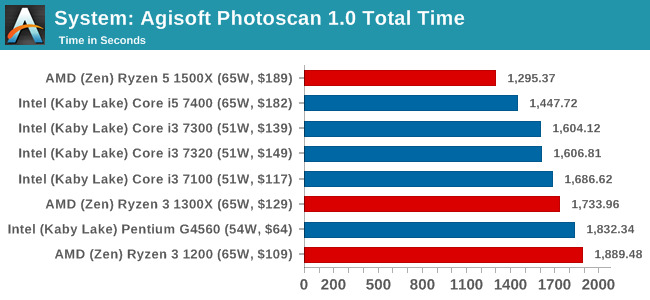

Agisoft Photoscan 1.0

Photoscan stays in our benchmark suite from the previous version; however, we are now running on Windows 10, so features such as Speed Shift on the latest processors come into play. The concept of Photoscan is translating many 2D images into a 3D model - so the more detailed the images, and the more you have, the better the model. The algorithm has four stages, some single-threaded and some multi-threaded, along with some cache/memory dependency in there as well. For the more variably threaded parts of the workload, features such as Speed Shift and XFR will be able to take advantage of CPU stalls or downtime, giving sizeable speedups on newer microarchitectures.

140 Comments

ampmam - Thursday, July 27, 2017

Great review but biased conclusion.

tvdang7 - Thursday, July 27, 2017

No overclock?

Oxford Guy - Thursday, July 27, 2017

No, just a RAM underclock.

zodiacfml - Thursday, July 27, 2017

overclocking tests on the ryzen 3 1200 please. the only weakness of the chip is for non-gaming or htpc usage as it will require purchasing a discrete graphics card. otherwise, it presents good value for most things like gaming and multi-threaded applications, add overclocking, and it gets even better.

kaesden - Thursday, July 27, 2017

one thing to not overlook with the ryzen 1300x is the platform. Its competitive with budget intel offerings and can take a drop in 8 core 16 thread upgrade with no other changes except maybe a better cooling solution, Something intel can't match. Intel has the same "strategy" at their high end with the new X299 platform, but they seem to have lost focus of the big picture. The HEDT platform is too expensive to fit this type of scenario. Anyone who's shelling out the cash for a HEDT system isn't the type of budget user who is going to go for the 7740x. they're just going to get a higher end cpu from the start if they can afford it at all, not to mention the confusion about what features work with what cpu's and what doesn't, etc...

TLDR; AMD has a winner of a platform here that will only get better as time goes on.

peevee - Thursday, July 27, 2017

From the tests, looks like Ryzen 3 does not make much sense. Zen arch provides quite a boost from SMT in practically all applications where performance actually matters (which are all multithreaded for years now), and AMD artificially disabled this feature for that stupid Intel-like market segmentation.

Also I am sure there are not that many CPUs where exactly 2 out of 4 cores on each CCX are broken. So in effect, in cases like one CCX has 4 good cores and another has only 2 they kill 2 good cores, kill half of L3, kill hyperthreading...

It would be better to create a separate 1-CCX chip for the line, which would have much higher (more than twice per wafer) yield being half the size, and release 2, 3 and 4 core CPUs as Ryzen 2, 3 and 4 accordingly. With hyperthreading and everything. I am sure it does not cost "tens of millions of dollars" to create a new mask as even completely custom chips cost less, let alone that simple derivative.

Oxford Guy - Thursday, July 27, 2017

"It would be better to create a separate 1-CCX chip for the line"

Or, it could be explained by this article why AMD can't release a Zen chip with 1 CCX enabled and one disabled. Instead, we just get "obviously".

silverblue - Friday, July 28, 2017

He did explain it. Page 1.

Oxford Guy - Saturday, July 29, 2017

Where?

All I see is this: "Number 3 leads to a lop-sided silicon die, and obviously wasn’t chosen."

That is not an explanation.

peevee - Tuesday, August 1, 2017

That would still be half the yield per wafer compared to a dedicated 1-CCX line. Twice the cost. Cost matters.

And the 3rd chip must be 1CCX+1GPU. SMT must be on everywhere though, it is too good to artificially lower the value of your product by disabling it for segmentation.