The AMD Ryzen 3 1300X and Ryzen 3 1200 CPU Review: Zen on a Budget

by Ian Cutress on July 27, 2017 9:30 AM EST

Posted in: CPUs, AMD, Zen, Ryzen, Ryzen 3, Ryzen 3 1300X, Ryzen 3 1200

Grand Theft Auto

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and pushes even the most powerful systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, when cranked up to maximum it creates stunning visuals but hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet, an inner city drive-by through several intersections, and finally ramming a tanker that explodes, causing other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully spits out frame time data.

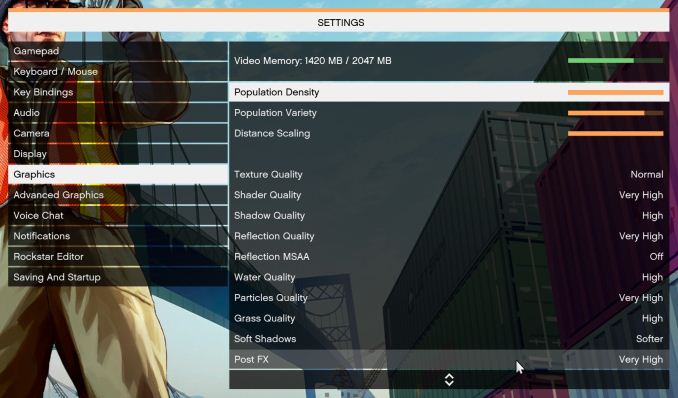

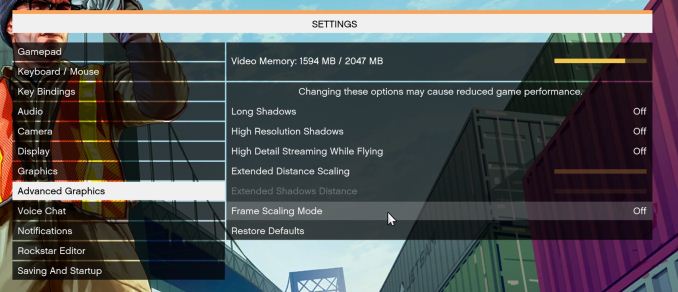

There are no presets for the graphics options on GTA. Instead, the user adjusts options such as population density and distance scaling on sliders, while others such as texture/shadow/shader/water quality range from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy option at the top which shows how much video memory the options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication if you have a low end GPU with lots of GPU memory, like an R7 240 4GB).

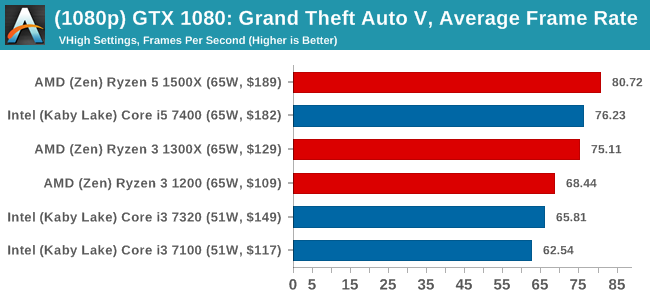

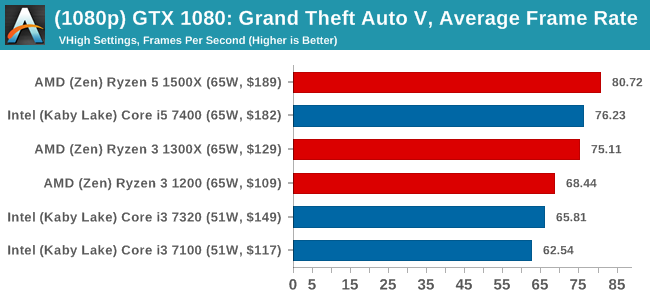

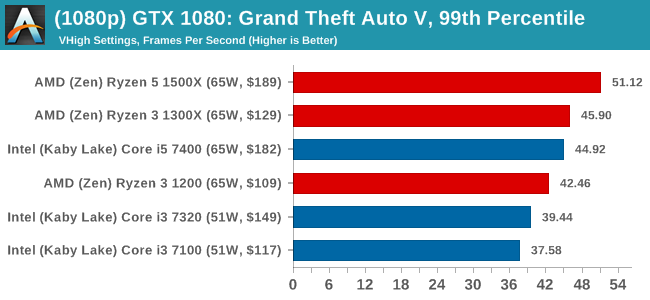

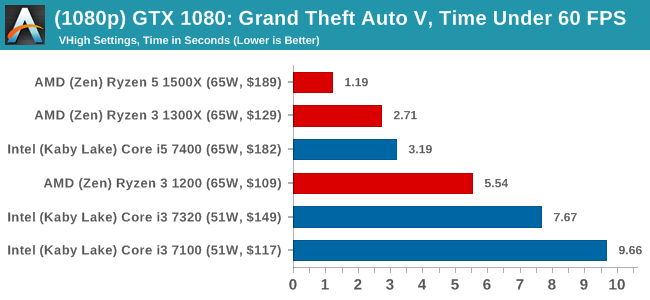

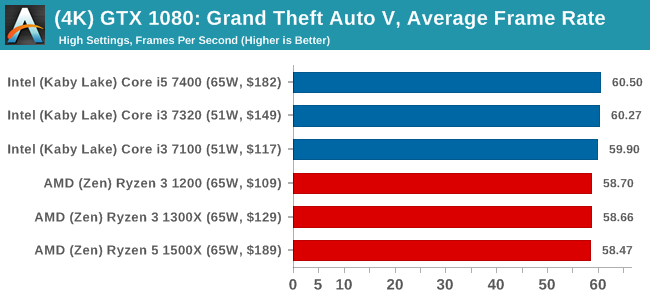

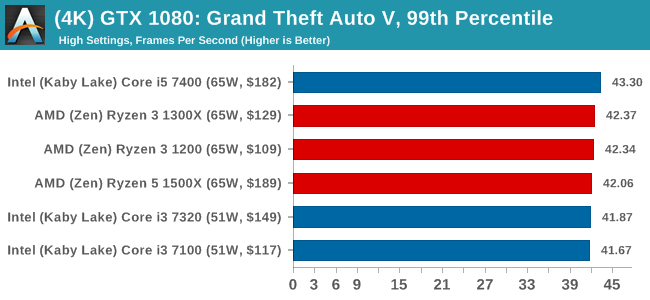

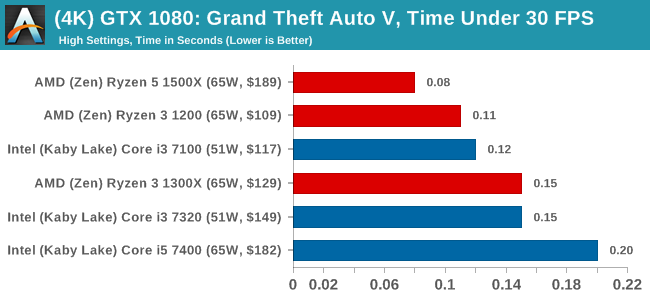

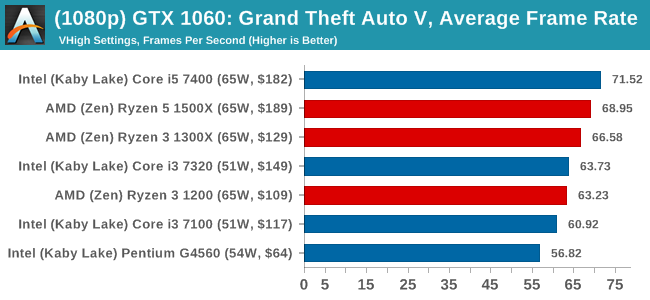

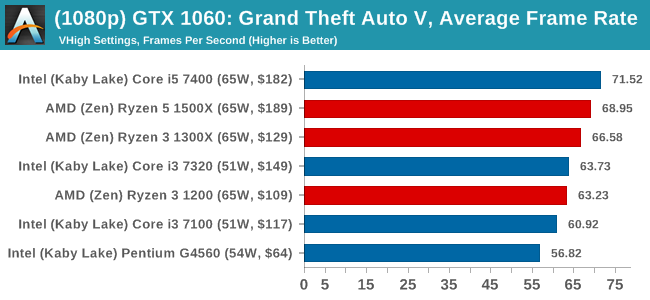

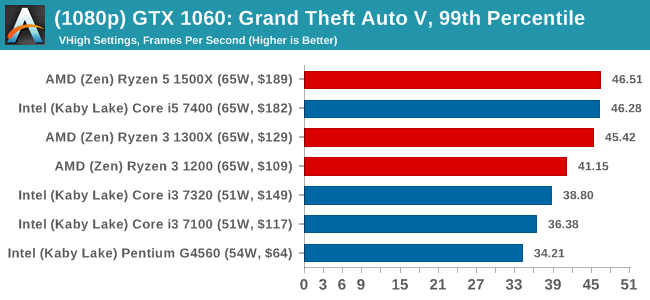

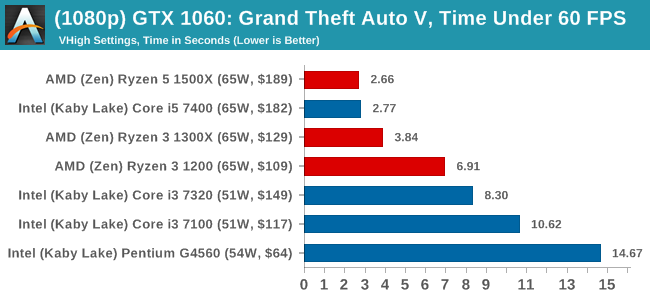

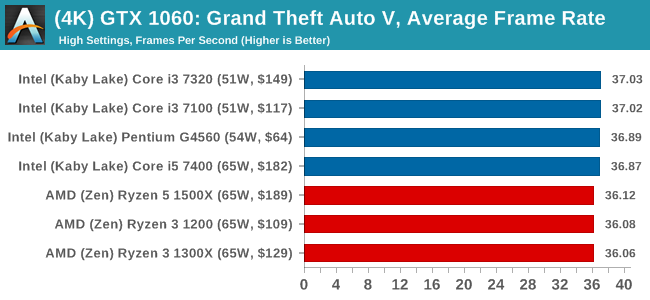

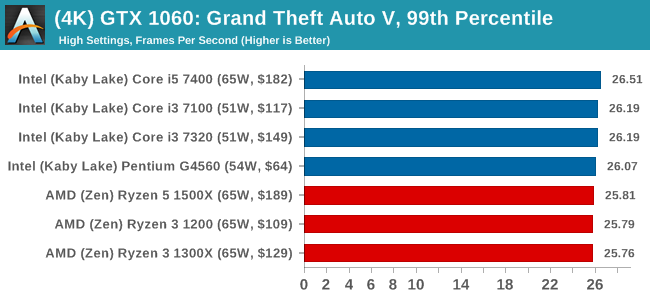

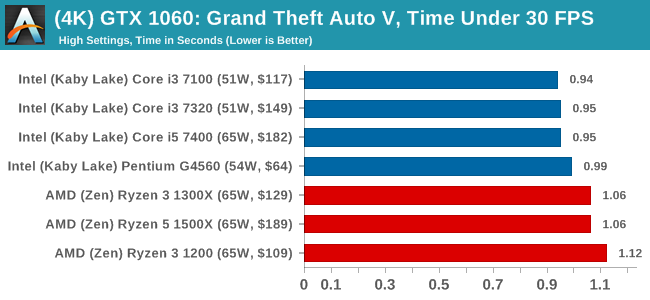

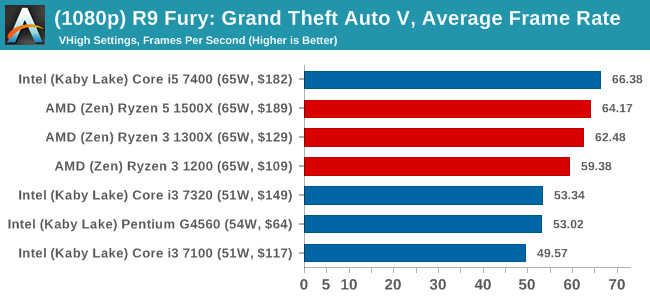

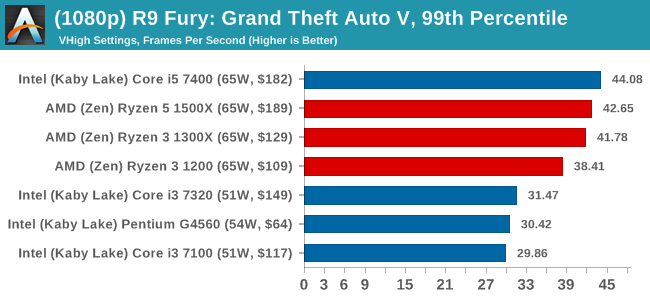

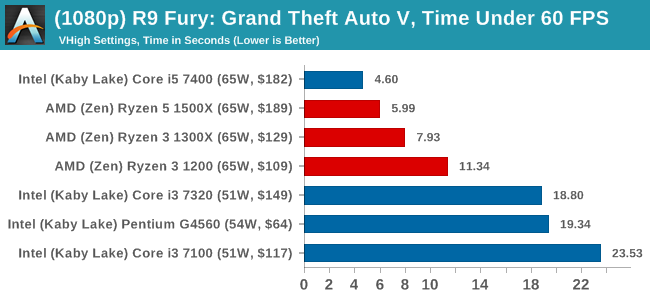

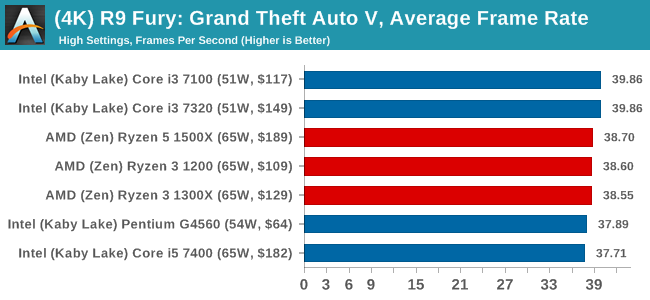

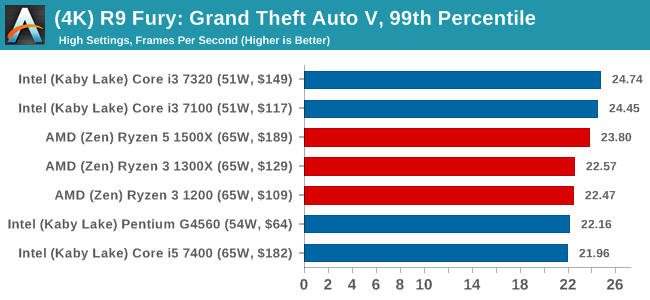

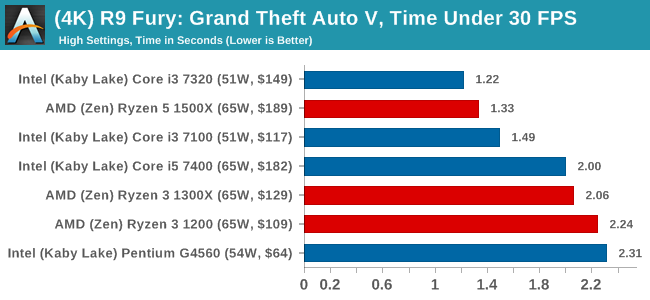

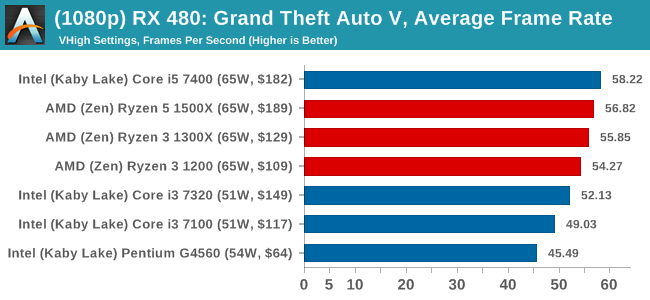

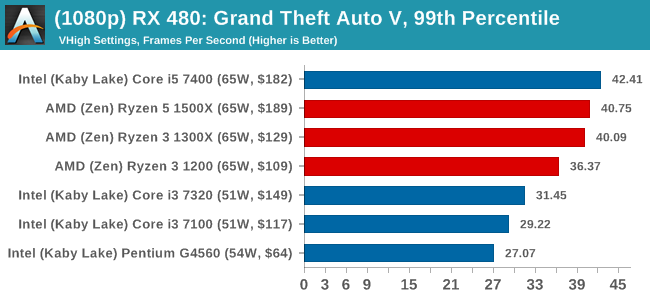

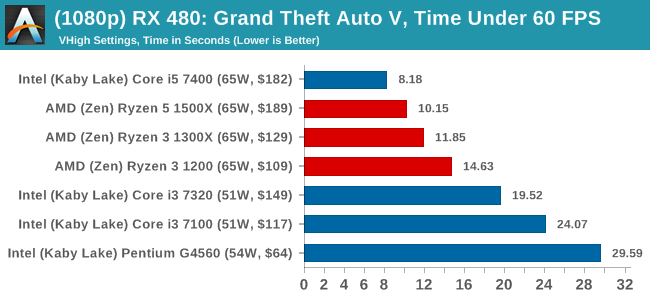

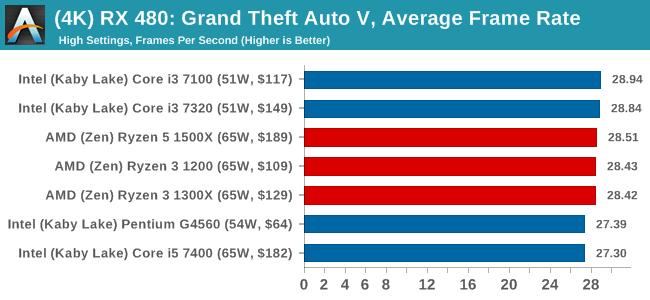

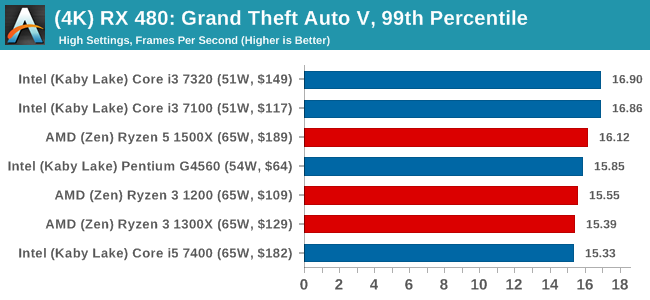

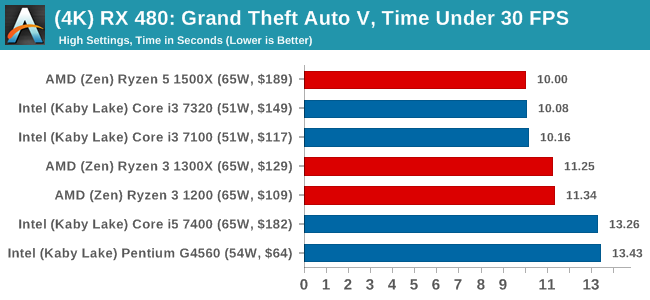

To that end, we run the benchmark at 1920x1080 with the settings averaging Very High, and also at 4K with most of them at High. We average the results of four runs, reporting average frame rates, 99th percentile frame rates, and our 'Time Under' analysis.
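As a rough illustration of how those three figures can be derived from frame time data, here is a minimal Python sketch. It is not the script used for this review. The function names, the nearest-rank percentile method, the 60 FPS threshold, and the definition of 'Time Under' as the share of elapsed time spent in frames slower than the threshold are all assumptions made for the example.

```python
def summarize_run(frame_times_ms, threshold_fps=60.0):
    """Summarize one run from per-frame render times in milliseconds.

    Hypothetical helper: the metric definitions below are assumptions,
    not the review's documented method.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    total_ms = sum(frame_times_ms)
    count = len(frame_times_ms)

    # Average frame rate: frames rendered divided by elapsed seconds.
    average_fps = count / (total_ms / 1000.0)

    # 99th percentile frame time by nearest rank (integer ceiling of 0.99 * n),
    # converted to a frame rate: 99% of frames were at least this fast.
    ordered = sorted(frame_times_ms)
    rank = (99 * count + 99) // 100
    percentile_99_fps = 1000.0 / ordered[rank - 1]

    # Assumed 'Time Under': percentage of elapsed time spent in frames
    # that took longer than the threshold frame time.
    limit_ms = 1000.0 / threshold_fps
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    time_under_pct = 100.0 * slow_ms / total_ms

    return average_fps, percentile_99_fps, time_under_pct


def average_runs(runs, threshold_fps=60.0):
    """Average each metric across several runs (four in the text above)."""
    summaries = [summarize_run(run, threshold_fps) for run in runs]
    return tuple(sum(metric) / len(summaries) for metric in zip(*summaries))
```

Calling `average_runs` with four lists of frame times, one per run, returns the three averaged metrics in the order average FPS, 99th percentile FPS, and percentage of time under the threshold.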

For all our results, we show the average frame rate at 1080p first. Mouse over the other graphs underneath to see 99th percentile frame rates and 'Time Under' graphs, as well as results for other resolutions. All of our benchmark results can also be found in our benchmark engine, Bench.

[Graphs: MSI GTX 1080 Gaming 8G Performance, 1080p and 4K]

[Graphs: ASUS GTX 1060 Strix 6GB Performance, 1080p and 4K]

[Graphs: Sapphire R9 Fury 4GB Performance, 1080p and 4K]

[Graphs: Sapphire RX 480 8GB Performance, 1080p and 4K]

140 Comments


ampmam - Thursday, July 27, 2017 - link

Great review but biased conclusion.

tvdang7 - Thursday, July 27, 2017 - link

No overclock?

Oxford Guy - Thursday, July 27, 2017 - link

No, just a RAM underclock.

zodiacfml - Thursday, July 27, 2017 - link

overclocking tests on the ryzen 3 1200 please. the only weakness of the chip is for non-gaming or htpc usage as it will require purchasing a discrete graphics card. otherwise, it presents good value for most things like gaming and multi-threaded applications, add overclocking, and it gets even better.

kaesden - Thursday, July 27, 2017 - link

one thing to not overlook with the ryzen 1300x is the platform. It's competitive with budget intel offerings and can take a drop in 8 core 16 thread upgrade with no other changes except maybe a better cooling solution, something intel can't match. Intel has the same "strategy" at their high end with the new X299 platform, but they seem to have lost focus of the big picture. The HEDT platform is too expensive to fit this type of scenario. Anyone who's shelling out the cash for a HEDT system isn't the type of budget user who is going to go for the 7740x. they're just going to get a higher end cpu from the start if they can afford it at all, not to mention the confusion about what features work with what cpu's and what doesn't, etc...

TLDR; AMD has a winner of a platform here that will only get better as time goes on.

peevee - Thursday, July 27, 2017 - link

From the tests, looks like Ryzen 3 does not make much sense. Zen arch provides quite a boost from SMT in practically all applications where performance actually matters (which are all multithreaded for years now), and AMD artificially disabled this feature for that stupid Intel-like market segmentation.

Also I am sure there are not that many CPUs where exactly 2 out of 4 cores on each CCX are broken. So in effect, in cases like one CCX has 4 good cores and another has only 2 they kill 2 good cores, kill half of L3, kill hyperthreading...

It would be better to create a separate 1-CCX chip for the line, which would have much higher (more than twice per wafer) yield being half the size, and release 2, 3 and 4 core CPUs as Ryzen 2, 3 and 4 accordingly. With hyperthreading and everything. I am sure it does not cost "tens of millions of dollars" to create a new mask as even completely custom chips cost less, let alone that simple derivative.

Oxford Guy - Thursday, July 27, 2017 - link

"It would be better to create a separate 1-CCX chip for the line"

Or, it could be explained by this article why AMD can't release a Zen chip with 1 CCX enabled and one disabled. Instead, we just get "obviously".

silverblue - Friday, July 28, 2017 - link

He did explain it. Page 1.

Oxford Guy - Saturday, July 29, 2017 - link

Where?

All I see is this: "Number 3 leads to a lop-sided silicon die, and obviously wasn’t chosen."

That is not an explanation.

peevee - Tuesday, August 1, 2017 - link

That would still be half the yield per wafer compared to a dedicated 1-CCX line. Twice the cost. Cost matters.

And the 3rd chip must be 1CCX+1GPU. SMT must be on everywhere though, it is too good to artificially lower value of your product by disabling it by segmentation.