The Intel Skylake-X Review: Core i9 7900X, i7 7820X and i7 7800X Tested

by Ian Cutress on June 19, 2017 9:01 AM EST

I Keep My Cache Private

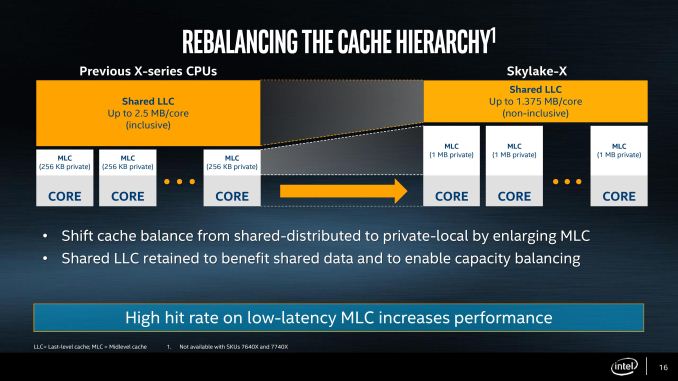

As mentioned in the original Skylake-X announcements, the new Skylake-SP cores have shaken up the cache hierarchy compared to previous generations. What used to be simple inclusive caches have now been adjusted in size, policy, latency, and efficiency, which will have a direct impact on performance. It also means that Skylake-S and Skylake-SP will have different instruction throughput efficiency levels. They could be the difference between chalk and cheese as a result, or the difference between stilton and aged stilton.

Let us start with a direct comparison of Skylake-S and Skylake-SP.

Comparison: Skylake-S and Skylake-SP Caches

| Skylake-S | Features | Skylake-SP |
|---|---|---|
| 32 KB, 8-way, 4-cycle; 4KB 64-entry 4-way TLB | L1-D | 32 KB, 8-way, 4-cycle; 4KB 64-entry 4-way TLB |
| 32 KB, 8-way; 4KB 128-entry 8-way TLB | L1-I | 32 KB, 8-way; 4KB 128-entry 8-way TLB |
| 256 KB, 4-way, 11-cycle; 4KB 1536-entry 12-way TLB; Inclusive | L2 | 1 MB, 16-way, 11-13 cycle; 4KB 1536-entry 12-way TLB; Inclusive |
| < 2 MB/core, up to 16-way, 44-cycle; Inclusive | L3 | 1.375 MB/core, 11-way, 77-cycle; Non-inclusive |

The new core keeps the same L1D and L1I cache structures, both implementing writeback 32KB 8-way caches for each. These caches have a 4-cycle access latency, but differ in their access support: Skylake-S does 2x32-byte loads and 1x32-byte store per cycle, whereas Skylake-SP offers double on both.
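As a quick sanity check on the L1-D figures, here is a minimal sketch of the per-cycle arithmetic. It assumes that "double on both" means the access width doubles to 64 bytes while the port count stays at two loads and one store; the function name is made up for illustration.

```python
# Port counts quoted in the text: two loads and one store per cycle.
LOAD_PORTS = 2
STORE_PORTS = 1

def l1d_bytes_per_cycle(access_width_bytes):
    """Return (load_bytes, store_bytes) moved per cycle for a given access width."""
    return LOAD_PORTS * access_width_bytes, STORE_PORTS * access_width_bytes

# Skylake-S: 2x32-byte loads and 1x32-byte store per cycle.
skylake_s = l1d_bytes_per_cycle(32)
# Skylake-SP: assumed here to be 2x64-byte loads and 1x64-byte store.
skylake_sp = l1d_bytes_per_cycle(64)

print(skylake_s)   # (64, 32)
print(skylake_sp)  # (128, 64)
```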

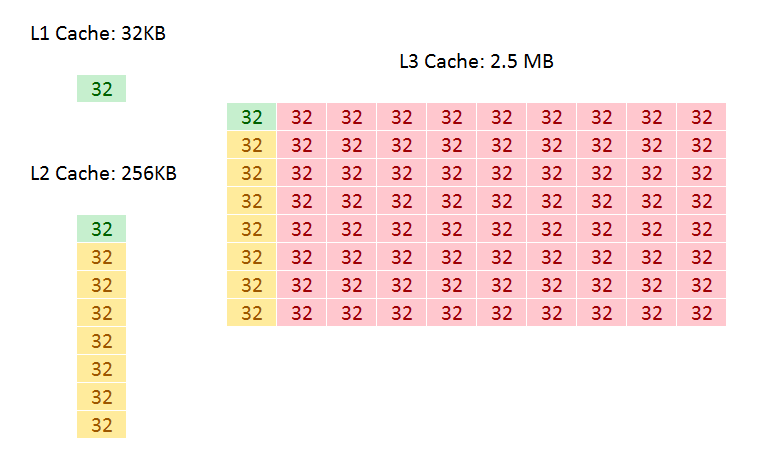

The big changes are with the L2 and the L3. Skylake-SP has a 1MB private L2 cache with 16-way associativity, compared to the 256KB private L2 cache with 4-way associativity in Skylake-S. The L3 changes to an 11-way non-inclusive 1.375MB/core, from a 20-way fully-inclusive 2.5MB/core arrangement.

That’s a lot to unpack, so let’s start with inclusivity:

An inclusive cache contains everything in the cache underneath it and has to be at least the same size as the cache underneath (and usually a lot bigger), compared to an exclusive cache which has none of the data in the cache underneath it. The benefit of an inclusive cache is that if a line in the lower cache is evicted because it is old, to make room for other data, there should still be a copy in the cache above it which can be called upon. The downside is that the cache above it has to be huge: with Skylake-S we have a 256KB L2 and a 2.5MB/core L3, meaning that the L2 data could be replaced 10 times before a line is evicted from the L3.
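The capacity trade-off can be put into numbers with a rough sketch. It uses the sizes quoted in the paragraph above for the inclusive case and the 1MB L2 / 1.375MB-per-core L3 figures for Skylake-SP. The helper names are invented for illustration, and the non-inclusive result is an upper bound that assumes no line sits in both levels at once.

```python
KB = 1024
MB = 1024 * KB

def l3_to_l2_ratio(l2_bytes, l3_bytes_per_core):
    """How many times the L2 fits into one core's share of the L3."""
    return l3_bytes_per_core / l2_bytes

def unique_capacity(l2_bytes, l3_bytes_per_core, l3_inclusive):
    """Upper bound on distinct data held across the L2 and the per-core L3 share."""
    if l3_inclusive:
        # Everything in the L2 is duplicated in the L3, so the L2 adds nothing new.
        return l3_bytes_per_core
    # Best case for a non-inclusive L3: no line is held in both levels.
    return l2_bytes + l3_bytes_per_core

# Inclusive arrangement with the sizes quoted in the text.
inclusive_ratio = l3_to_l2_ratio(256 * KB, 2.5 * MB)
inclusive_unique_mb = unique_capacity(256 * KB, 2.5 * MB, True) / MB

# Skylake-SP: 1 MB L2 with a 1.375 MB/core non-inclusive L3.
sp_unique_mb = unique_capacity(1 * MB, 1.375 * MB, False) / MB

print(inclusive_ratio)      # 10.0
print(inclusive_unique_mb)  # 2.5
print(sp_unique_mb)         # 2.375
```

Despite the much smaller L3, the best-case unique capacity per core ends up close to the inclusive figure, because the larger L2 no longer has to be mirrored.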

A non-inclusive cache is somewhat between the two, and is different to an exclusive cache: in this context, when a data line is present in the L2, it does not immediately go into L3. If the value in L2 is modified or evicted, the data then moves into L3, storing an older copy. (The reason it is not called an exclusive cache is because the data can be re-read from L3 to L2 and still remain in the L3.) This is what we usually call a victim cache, depending on whether the core can prefetch data into L2 only or into L2 and L3 as required. In this case, we believe the SKL-SP core cannot prefetch into L3, making the L3 a victim cache similar to what we see on Zen, or Intel’s first eDRAM parts on Broadwell. Victim caches usually have limited roles, especially when they are similar in size to the cache below them (if a line is evicted from a large L2, what are the chances you’ll need it again so soon?), but some workloads that require a large reuse of recent data that spills out of L2 will see some benefit.
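The victim behavior described above can be illustrated with a toy model. This is a sketch under simplifying assumptions, not Intel's actual policy: both levels are fully associative with LRU replacement, only evictions (not modifications) push lines into the L3, and memory fills go straight to the L2. The class name `ToyVictimHierarchy` is made up for this example.

```python
from collections import OrderedDict

class ToyVictimHierarchy:
    """Toy model: an LRU L2 backed by an LRU L3 that is filled only by L2 evictions."""

    def __init__(self, l2_lines, l3_lines):
        self.l2 = OrderedDict()  # insertion order doubles as LRU order (oldest first)
        self.l3 = OrderedDict()
        self.l2_lines = l2_lines
        self.l3_lines = l3_lines

    def access(self, addr):
        """Return where the line was found: 'L2', 'L3', or 'MEM'."""
        if addr in self.l2:
            self.l2.move_to_end(addr)  # mark as most recently used
            return "L2"
        if addr in self.l3:
            # Non-inclusive rather than exclusive: the copy stays in the L3.
            self.l3.move_to_end(addr)
            source = "L3"
        else:
            # A fill from memory goes to the L2 only and bypasses the L3.
            source = "MEM"
        self._fill_l2(addr)
        return source

    def _fill_l2(self, addr):
        self.l2[addr] = True
        if len(self.l2) > self.l2_lines:
            victim, _ = self.l2.popitem(last=False)  # evict the LRU line from the L2
            self.l3[victim] = True                   # the victim lands in the L3
            self.l3.move_to_end(victim)
            if len(self.l3) > self.l3_lines:
                self.l3.popitem(last=False)          # the L3 drops its own LRU line

hierarchy = ToyVictimHierarchy(l2_lines=2, l3_lines=4)
results = [hierarchy.access(a) for a in [1, 2, 3, 1]]
print(results)  # ['MEM', 'MEM', 'MEM', 'L3']
```

In this trace, line 1 only reaches the L3 after being pushed out of the two-line L2, and the later re-read is served from the L3 while its copy remains there.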

So why move to a victim cache on the L3? Intel’s goal here was the larger private L2. By moving from 256KB to 1MB, that’s a double double increase. A general rule of thumb is that a doubling of the cache size reduces the miss rate by a factor of the square root of 2 (to roughly 71% of what it was), which can be the equivalent to a 3-5% IPC uplift. By doing a double double (as well as doing the double double on the associativity), Intel is effectively halving the L2 miss rate with the same prefetch rules. Normally this benefits any L2 size sensitive workloads, and some enterprise workloads such as databases can be L2 size sensitive (we fully suspect that a larger L2 came at the request of the cloud providers).
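The square-root rule of thumb is usually stated in terms of the miss rate: it scales with one over the square root of the cache size. A minimal sketch of that reading follows; it is an empirical approximation rather than a guarantee for any given workload, and the function name is made up for illustration.

```python
import math

def relative_miss_rate(size_factor):
    """Miss rate relative to the baseline cache under the square-root rule of thumb."""
    return 1.0 / math.sqrt(size_factor)

doubled = relative_miss_rate(2)      # one doubling, e.g. 256KB -> 512KB
quadrupled = relative_miss_rate(4)   # the "double double", 256KB -> 1MB

print(round(doubled, 3))  # 0.707
print(quadrupled)         # 0.5
```

Under this reading, going from 256KB to 1MB is consistent with the claim that the L2 miss rate is roughly halved.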

Moving to a larger cache typically increases latency. Intel is stating that the L2 latency has increased, from 11 cycles to ~13, depending on the type of access, with the fastest load-to-use expected to be 13 cycles. Adjusting the latency of the L2 cache is going to have a knock-on effect, given that code that is not L2 size sensitive might still be affected.

So if the L2 is larger and has a higher latency, does that mean the smaller L3 is lower latency? Unfortunately not, given the size of the L2 and a number of other factors. With the L3 being a victim cache, it is typically used less frequently, so Intel can give the L3 less stringent requirements to remain stable. In this case the latency has increased from 44 cycles in SKL-S to 77 cycles in SKL-SP. That’s a sizeable difference, but again, given the utility of the victim cache it might make little difference to most software.
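To see how a slower L3 can wash out, here is a back-of-the-envelope average access time calculation. The 4, 11/13 and 44/77 cycle latencies come from the comparison table; everything else is a hypothetical placeholder chosen only for illustration, not a measurement: the 200-cycle memory latency, every miss rate, and the assumption that the larger L2 halves the L2 miss rate.

```python
def average_access_cycles(l1_lat, l2_lat, l3_lat, mem_lat, l1_miss, l2_miss, l3_miss):
    """Classic average-memory-access-time recursion using local (per-level) miss rates."""
    return l1_lat + l1_miss * (l2_lat + l2_miss * (l3_lat + l3_miss * mem_lat))

MEM_LATENCY = 200  # hypothetical DRAM latency in cycles
L1_MISS = 0.05     # hypothetical L1 miss rate, identical for both cores
L3_MISS = 0.50     # hypothetical L3 miss rate, identical for both cores

# Skylake-S: 11-cycle L2, 44-cycle L3, hypothetical 40% L2 miss rate.
skl_s = average_access_cycles(4, 11, 44, MEM_LATENCY, L1_MISS, 0.40, L3_MISS)
# Skylake-SP: 13-cycle L2, 77-cycle L3, L2 miss rate assumed to be halved to 20%.
skl_sp = average_access_cycles(4, 13, 77, MEM_LATENCY, L1_MISS, 0.20, L3_MISS)

print(f"Skylake-S:  {skl_s:.2f} cycles")
print(f"Skylake-SP: {skl_sp:.2f} cycles")
```

With these made-up rates the L3 is reached so rarely that its extra 33 cycles barely move the average. A workload with a high L2 miss rate on both designs would shift the picture the other way.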

Moving the L3 to a non-inclusive cache will also have repercussions for some of Intel’s enterprise features. Back at the Broadwell-EP Xeon launch, one of the features provided was L3 cache partitioning, allowing a limited-size virtual machine to hog most of the L3 cache if it was running a mission-critical workflow. Because the L3 cache was more important then, this was a good feature to add. Intel won’t say how this feature has evolved with the Skylake-SP core at this time, so we will probably have to wait until that launch to find out.

As a side note, it is worth noting here that Broadwell-E had a 256KB private L2 that was 8-way, compared to Skylake-S, which had a 256KB private L2 that was 4-way. Intel stated that the Skylake-S base core went down in associativity for several reasons, but the main one was to make the design more modular. In this case it means the L2 is 4x that of Skylake-S in both size and associativity by design, and suggests that there may be 512KB 8-way variants in the future.

264 Comments

Tuna-Fish - Tuesday, June 20, 2017 - link

Just a tiny nitpick about the cache hierarchy table: TLBs are grouped with cache levels, that is, L1 TLBs are with the L1 caches and the L2 TLB is with the L2 cache, as if the level of TLB is associated with the level of cache. This is not how they work -- any request only has to have its address translated once, when it's loaded from the L1 cache. If there is a miss when accessing the L1 TLB, the L2 TLB is accessed before the L1 cache is.

PeterCordes - Monday, July 3, 2017 - link

This common mistake bugs me too! The transistors for the TLB's 2nd level are probably not even near the L2 cache. (And the L2 cache is physically indexed / physically tagged, so it doesn't care about translations or virtual addresses at all). The multi-level TLB is a separate hierarchy from the normal caches. I also commented earlier to point out several other errors in [the uarch details](http://www.anandtech.com/comments/11550/the-intel-... e.g. mixing up the register-file sizes with the scheduler size.

yeeeeman - Tuesday, June 20, 2017 - link

What this review shows is just how good of a deal AMD Ryzen CPUs are. I mean, R7 1700 is like 300$ and it keeps up in many of the tests with the big boys from Intel.

Carmen00 - Tuesday, June 20, 2017 - link

Small typo on the first page, Ian: "For $60 less than the price of the Core i7-7800X...". But the comparison shows $389 vs $299, which is a $90 difference. Otherwise a fantastic, in-depth review, thank you very much!

Ian Cutress - Tuesday, June 20, 2017 - link

Official MSRPs haven't changed. What distributors do with their stock is a different story.

Carmen00 - Wednesday, June 21, 2017 - link

I'm talking about the MSRPs. There's a table ("Comparison: Core i7-7800X vs. Ryzen 7 1700") on Page 1 with the MSRPs as $299 and $389, a $90 difference. The text just above this table says that there's a $60 difference, but 389-299=90, not 60. So either the text is incorrect, or the MSRPs in the table are incorrect.

Tephereth - Tuesday, June 20, 2017 - link

Missing temps and in-game benchmarks... u're the only one in the whole web that has an 7800x to test, so please post those :(

Gothmoth - Tuesday, June 20, 2017 - link

after reading a dozen reviews i say: great, now we have the choice between two buggy platforms.... well done.

i am not going to be a bios betatester for AMD or Intel.

these two release are the worst in many years i would say.

i hope AMD has threadripper ironed out.

AntDX316 - Tuesday, June 20, 2017 - link

The new processors are in totally another level/league/class. It dominates in everything and more except a couple benches.

AnandTechReader2017 - Tuesday, June 20, 2017 - link

Of course they are, Ryzen is mainstream, Thread Ripper is the competitor. Thread Ripper will be quite interesting, the scaling of the "infinity fabric" will come to the fore and show if AMD's new architecture is a worthy competitor.