The Intel Skylake-X Review: Core i9 7900X, i7 7820X and i7 7800X Tested

by Ian Cutress on June 19, 2017 9:01 AM EST

Favored Core

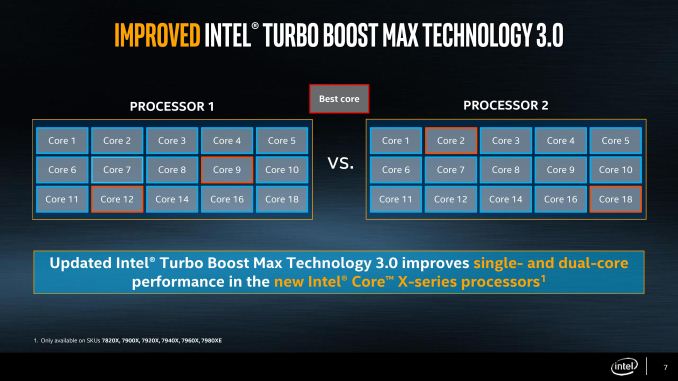

For Broadwell-E, the last generation of Intel’s HEDT platform, we were introduced to the term ‘Favored Core’, which was given the title of Turbo Boost Max 3.0. The idea here is that each piece of silicon that comes off of the production line is different (which is then binned to match to a SKU), but within a piece of silicon the cores themselves will have different frequency and voltage characteristics. The one core that is determined to be the best is called the ‘Favored Core’, and when Intel’s Windows 10 driver and software were in place, single threaded workloads were moved to this favored core to run faster.

In theory, it was good: a step above the generic Turbo Boost 2.0, offering an extra 100-200 MHz for single threaded applications. In practice, it was flawed: motherboard manufacturers didn’t support it, or they had it disabled in the BIOS by default. Users had to install the drivers and software as well. Without the combination of all of these at work, the favored core feature didn’t work at all.

Intel is changing the feature for Skylake-X, both upgrading it and making it easier to use. The driver and software are now part of Windows updates, so users will get them automatically (if you don’t want it, you have to disable it manually). With Skylake-X, instead of one core being the favored core, two cores are designated as favored. As a result, two apps can be run at the higher frequency, or one app that needs two cores can use both.
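The two-favored-core idea can be sketched as a simple selection problem. What follows is a minimal illustration, not Intel's driver logic: the per-core frequency ratings, the function names `pick_favored_cores` and `assign_threads`, and the one-thread-per-favored-core policy are all assumptions made for the example.

```python
from typing import Dict, List


def pick_favored_cores(max_freq_mhz: Dict[int, int], count: int = 2) -> List[int]:
    """Return the IDs of the `count` cores with the highest rated frequency."""
    # Sort by frequency (descending); break ties by lower core ID so the
    # result is deterministic.
    ranked = sorted(max_freq_mhz, key=lambda core: (-max_freq_mhz[core], core))
    return ranked[:count]


def assign_threads(threads: List[str], favored: List[int]) -> Dict[str, int]:
    """Place at most one thread on each favored core; extra threads are left out."""
    return dict(zip(threads, favored))


# Hypothetical per-core ratings for an eight-core part (not measured data).
ratings = {0: 4300, 1: 4500, 2: 4300, 3: 4500, 4: 4300, 5: 4300, 6: 4300, 7: 4300}

favored = pick_favored_cores(ratings)
placement = assign_threads(["app_a", "app_b", "app_c"], favored)
print(favored)
print(placement)
```

With two favored cores, the first two single-threaded workloads each get a fast core, and the third falls back to whatever the regular scheduler would do.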

Speed Shift

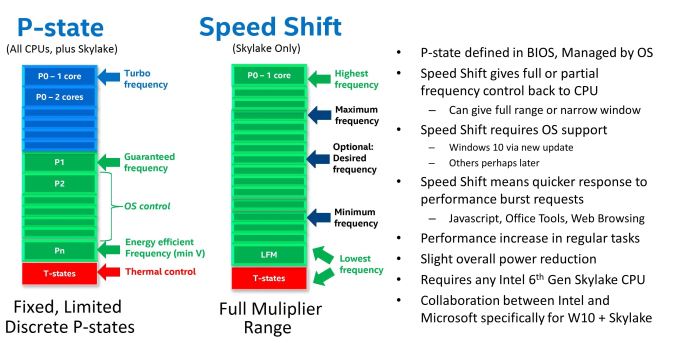

In Skylake-S, the processor was designed so that, with the right commands, the OS can hand control of the frequency and voltage back to the processor. Intel called this technology 'Speed Shift'. We’ve discussed Speed Shift before in the Skylake architecture analysis, and it now comes to Skylake-X. Speed Shift requires operating system support to hand over control of processor performance to the CPU, and Intel has had to work with Microsoft to get this functionality enabled in Windows 10.

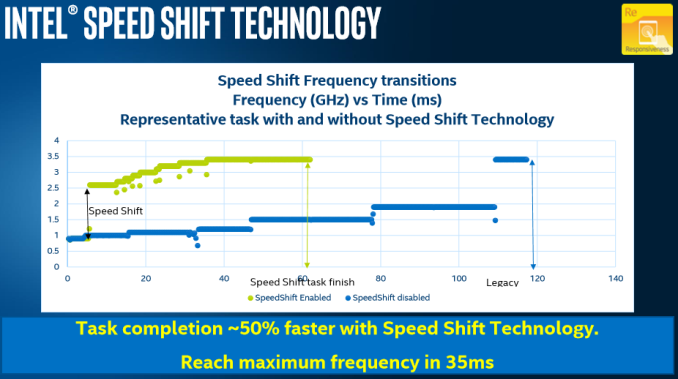

Compared to Speed Step / P-state transitions, Intel's new Speed Shift technology changes the game by having the operating system relinquish some or all control of the P-States and hand that control off to the processor. This has a couple of noticeable benefits. First, it is much faster for the processor to control the ramp up and down in frequency, compared to OS control. Second, the processor has much finer control over its states, allowing it to choose the optimal performance level for a given task and therefore use less energy. Specific jumps in frequency are reduced to around 1 ms with Speed Shift's CPU control, down from 20-30 ms under OS control, and going from an efficient power state to maximum performance can be done in around 35 ms, compared to around 100 ms with the legacy implementation. As the images show, neither technology can jump from low to high instantly, because to maintain data coherency through frequency/voltage changes there is an element of gradient as data is realigned.

The ability to quickly ramp up performance is there to increase overall responsiveness of the system, rather than have it linger at lower frequencies waiting for the OS to pass commands through a translation layer. Speed Shift cannot increase absolute maximum performance, but on short workloads that require a brief burst of performance, it can make a big difference in how quickly that task gets done. Ultimately, much of what we do falls into this category, such as web browsing or office work. As an example, web browsing is all about getting the page loaded quickly, and then getting the processor back down to idle.
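To put rough numbers on why ramp time matters for short bursts, here is a toy model. It assumes a step change: the CPU stays at its low frequency for the whole ramp time and then jumps straight to the high frequency, which ignores the gradual transition a real processor makes. The 35 ms and 100 ms ramp times are the figures quoted for Speed Shift and the legacy implementation; the 800 MHz and 4000 MHz frequencies and the 200 million cycle workload are invented for illustration.

```python
def completion_time_ms(work_cycles: int, ramp_ms: int,
                       low_mhz: int, high_mhz: int) -> float:
    """Time to finish `work_cycles` under a simplified step-ramp model."""
    low_rate = low_mhz * 1_000    # cycles per millisecond at the low frequency
    high_rate = high_mhz * 1_000  # cycles per millisecond at the high frequency

    # Work completed while the CPU is still waiting to ramp up.
    ramp_cycles = low_rate * ramp_ms
    if work_cycles <= ramp_cycles:
        # The task ends before the ramp completes.
        return work_cycles / low_rate

    # The remainder runs at the high frequency.
    return ramp_ms + (work_cycles - ramp_cycles) / high_rate


WORK = 200_000_000  # hypothetical short burst, in CPU cycles

speed_shift = completion_time_ms(WORK, ramp_ms=35, low_mhz=800, high_mhz=4000)
legacy = completion_time_ms(WORK, ramp_ms=100, low_mhz=800, high_mhz=4000)
print(f"Speed Shift: {speed_shift:.0f} ms, legacy: {legacy:.0f} ms")
```

Under these assumptions the same burst finishes in 78 ms with the faster ramp versus 130 ms with the slower one, even though the peak frequency is identical in both cases. For a long-running task the fixed ramp cost would fade into the noise, which matches the point that Speed Shift helps responsiveness rather than peak throughput.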

Again, Speed Shift is something that needs to be enabled on all levels - CPU, OS, driver, and motherboard BIOS. It has come to light that some motherboard manufacturers are disabling Speed Shift on desktops by default, negating the feature. In the BIOS it is labeled either as Speed Shift or Hardware P-States, and sometimes even has nondescript option names. Unfortunately, a combination of this and other issues has led to a small problem on X299 motherboards.
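For readers who want to check the hardware side of that chain outside Windows, Linux lists hardware P-state support as the `hwp` feature flag in `/proc/cpuinfo`. The helper below is a minimal sketch that assumes that flag name and file layout. The function name is made up for the example, and a missing flag does not tell you which layer (CPU, firmware, or kernel) is responsible.

```python
import os


def cpu_reports_hwp(cpuinfo_text: str) -> bool:
    """Return True if the first 'flags' line in cpuinfo text lists 'hwp'."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            # Flags are whitespace-separated; match the exact token so that
            # related flags such as 'hwp_notify' do not count on their own.
            return "hwp" in value.split()
    return False


# Only attempt the read where the file exists (i.e. on Linux).
if os.path.exists("/proc/cpuinfo"):
    with open("/proc/cpuinfo", encoding="utf-8") as f:
        print("hwp flag present:", cpu_reports_hwp(f.read()))
```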

X299 Motherboards

When we started testing for this review, the main instruction we were given was that when changing between Skylake-X and Kaby Lake-X processors, we should be sure to remove AC power and hold the reset BIOS button for 30 seconds. This comes down to an issue with supporting both sets of CPUs at once: Skylake-X features some form of integrated voltage regulator (somewhat like the FIVR on Broadwell), whereas Kaby Lake-X is more motherboard controlled. As a result, some of the voltages going into the CPU, if configured incorrectly, can cause damage. This is where I say I broke a CPU: our Kaby Lake-X Core i7 died on the test bed. We are told that in the future there should be a way to switch between the two without having this issue, but there are some other issues as well.

After speaking with a number of journalists in my close circle, it was clear that some of the GPU testing was not reflective of where the processors sat in the product stack. Some results were 25-50% worse than we expected for Skylake-X (Kaby Lake-X seemingly unaffected), scoring disastrously low frame rates. This was worrying.

Speaking with the motherboard manufacturers, it's coming down to a few issues: managing the mesh frequency (and if the mesh frequency has a turbo), controlling turbo modes, and controlling features like Speed Shift. 'Controlling' in this case can mean boosting voltages to support it better, overriding the default behavior for 'performance' which works on some tests but not others, or disabling the feature completely.

We were still getting new BIOSes two days before launch, right when I needed to fly half-way across the world to cover other events. Even after retesting with the latest BIOS we had for each board, there still seems to be an underlying issue with either the games or the power management involved. This isn't necessarily a code optimization issue for the games themselves: the base microarchitecture on the CPU is still the same with a slight cache adjustment, so if a Skylake-X starts performing below an old Sandy Bridge Core i3, it's not on the game.

We're still waiting to hear about BIOS updates, or reasons why this is the case. Some games are affected a lot, others not at all. Any game we are testing which ends up being GPU limited is unaffected, showing that this is a CPU issue.

264 Comments

Ian Cutress - Monday, June 19, 2017 - link

Prime95

AnandTechReader2017 - Tuesday, June 20, 2017 - link

Are you sure the numbers are correct as the i7 6950X on your graph here states less than the 135W on your original review of it under an all-core load.

Ian Cutress - Tuesday, June 20, 2017 - link

We're running a new test suite, different OSes, updated BIOSes, with different metrics/data gathering (might even be a different CPU, as each one is slightly different). There's going to be some differences, unfortunately.

gerz1219 - Monday, June 19, 2017 - link

Power draw isn't relevant in this space. High-end users who work from a home office can write off part of their electric bill as a business expense. Price/performance isn't even that much of an issue for many users in this space for the same reason -- if you're using the machine to earn a living, a faster machine pays for itself after a matter of weeks. The only thing that matters is performance. I don't understand why so many gamers read reviews for non-gamer parts and apply gamer complaints.

demMind - Monday, June 19, 2017 - link

This kind of response keeps popping up and is highly short sighted. Price for performance matters to high end especially if you use it for your livelihood.

If you go large-scale movie rendering studios will definitely be going with what can soften the blow to a large scale project. This is a fud response.

Spunjji - Tuesday, June 20, 2017 - link

Power efficiency will matter again when Intel lead in it. Been watching the same see-saw on the graphics side with nVidia. They lead in it now, so now it's the most important factor.

Marketing works, folks.

JKflipflop98 - Thursday, June 22, 2017 - link

Ah, AMD fanbots. Always with the insane conspiracy theories.

AnandTechReader2017 - Tuesday, June 20, 2017 - link

Power draw is important as well as temps, it will allow you to push to higher clocks and cut costs.

Say your work had to get 500 of these machines, if you can use a cheaper PSU, cheaper CPU and lower power use, the saving could be quite extreme. We're talking 95W vs 140W, a 50% increase versus the Ryzen. That's quite a bit in the long run.

I run 4 high-end desktops in my household, the power draw saving would be quite advantageous for me. All depends on circumstances, information is king.

Ian posted that everything is running at stock speeds, each version overclocked with power draw would also be interesting, also the difference different RAM clock speeds make (there was a huge fiasco with people claiming nice performance increases by using higher RAM clocks with the Ryzen CPU, how much is Intel's new line-up influenced? Can we cut costs and spend more on GPU/monitor/keyboard/pretty much anything else?)

psychok9 - Sunday, July 23, 2017 - link

It's scandalous... no one graph about temperature!? I suspect that if it had been an AMD cpu we would have mass hysteria and daily news... >:(

I'm looking for i7 7820X and understand how can I manage with an AIO.

cknobman - Monday, June 19, 2017 - link

Nope this CPU is a turd IMO.

Intel cheaped out on thermal paste again and this chip heats up big time.

Only 44 PCIe lanes, shoddy performance, and a rushed launch.

Only a sucker would buy now before seeing AMD Threadripper and that is exactly why, and who, Intel released these things so quickly for.