The Intel Kaby Lake-X i7 7740X and i5 7640X Review: The New Single-Threaded Champion, OC to 5GHz

by Ian Cutress on July 24, 2017 8:30 AM EST

Posted in: CPUs, Intel, Kaby Lake, X299, Basin Falls, Kaby Lake-X, i7-7740X, i5-7640X

Test Bed and Setup

As per our processor testing policy, we take a premium category motherboard suitable for the socket and equip the system with a suitable amount of memory running at the manufacturer's maximum supported frequency, typically at JEDEC subtimings where possible. Some users are not keen on this policy, stating that the maximum supported frequency is sometimes quite low, that faster memory is available at a similar price, or that JEDEC speeds can be prohibitive for performance. While these comments make sense, ultimately very few users apply memory profiles (either XMP or other) as they require interaction with the BIOS, and most users will fall back on JEDEC supported speeds. This includes home users as well as industry users who might want to shave off a cent or two from the cost or stay within the margins set by the manufacturer. Where possible, we will extend our testing to include faster memory modules either at the same time as the review or at a later date.

| Test Setup | |

| Processor | Intel Core i7-7740X (4C/8T, 112W, 4.3 GHz) Intel Core i5-7640X (4C/4T, 112W, 4.0 GHz) |

| Motherboards | ASRock X299 Taichi MSI X299 Gaming Pro Carbon GIGABYTE X299 Gaming 9 |

| Cooling | Thermalright TRUE Copper Silverstone AR10-115XS (for LGA1151) |

| Power Supply | Corsair AX760i PSU Corsair AX1200i Platinum PSU |

| Memory | Corsair Vengeance Pro DDR4-2666 2x8 GB |

| Video Cards | MSI GTX 1080 Gaming 8GB ASUS GTX 1060 Strix Sapphire R9 Fury 4GB Sapphire RX 480 8GB Sapphire RX 460 2GB |

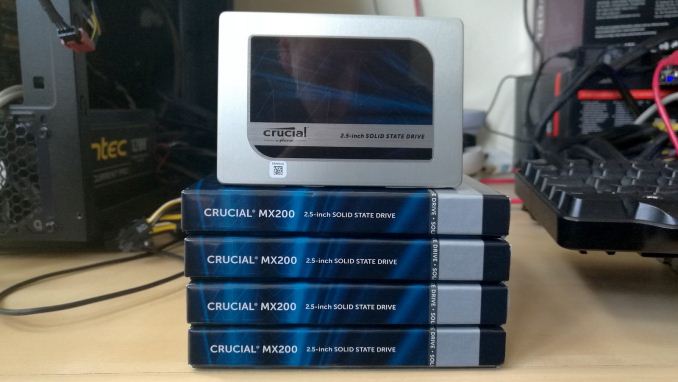

| Hard Drive | Crucial MX200 1TB |

| Optical Drive | LG GH22NS50 |

| Case | Open Test Bed |

| Operating System | Windows 10 Pro 64-bit |

Many thanks to...

We must thank the following companies for kindly providing hardware for our multiple test beds. Some of this hardware is not in this test bed specifically, but is used in other testing.

Thank you to Sapphire for providing us with several of their AMD GPUs. We met with Sapphire back at Computex 2016 and discussed a platform for our future testing on AMD GPUs with their hardware for several upcoming projects. As a result, they were able to sample us the latest silicon that AMD has to offer. At the top of the list was a pair of Sapphire Nitro R9 Fury 4GB GPUs, based on the first generation of HBM technology and AMD’s Fiji platform. As the first consumer GPU to use HBM, the R9 Fury is a key moment in graphics history, and these Nitro cards come with 3584 SPs running at 1050 MHz on the GPU with 4GB of 4096-bit HBM memory at 1000 MHz.

Further Reading: AnandTech’s Sapphire Nitro R9 Fury Review

Following the Fury, Sapphire also supplied a pair of their latest Nitro RX 480 8GB cards to represent AMD’s current performance silicon on 14nm (as of March 2017). The move to 14nm yielded significant power consumption improvements for AMD, which combined with the latest version of GCN helped bring the target of a VR-ready graphics card as close to $200 as possible. The Sapphire Nitro RX 480 8GB OC graphics card is designed to be a premium member of the RX 480 family, having a full set of 8GB of GDDR5 memory at 6 Gbps with 2304 SPs at 1208/1342 MHz engine clocks.

Further Reading: AnandTech’s AMD RX 480 Review

With the R9 Fury and RX 480 assigned to our gaming tests, Sapphire also passed on a pair of RX 460s to be used as our CPU testing cards. The amount of GPU power available can have a direct effect on CPU performance, especially if the CPU has to spend all its time dealing with the GPU display. The RX 460 is a nice card to have here, as it is powerful yet low on power consumption and does not require any additional power connectors. The Sapphire Nitro RX 460 2GB still follows on from the Nitro philosophy, and in this case is designed to provide power at a low price point. Its 896 SPs run at 1090/1216 MHz frequencies, and it is paired with 2GB of GDDR5 at an effective 7000 MHz.

We must also say thank you to MSI for providing us with their GTX 1080 Gaming X 8GB GPUs. Despite the size of AnandTech, securing high-end graphics cards for CPU gaming tests is rather difficult. MSI stepped up to the plate in good fashion and high spirits with a pair of their high-end graphics cards. The MSI GTX 1080 Gaming X 8GB graphics card is their premium air cooled product, sitting below the water cooled Seahawk but above the Aero and Armor versions. The card is large with twin Torx fans, a custom PCB design, Zero Frozr technology, enhanced PWM and a big backplate to assist with cooling. The card uses a GP104-400 silicon die from a 16nm TSMC process, contains 2560 CUDA cores, and can run up to 1847 MHz in OC mode (or 1607-1733 MHz in Silent mode). It carries 8GB of GDDR5X memory running at 10010 MHz. For a good amount of time, the GTX 1080 was the king of the hill.

Further Reading: AnandTech’s NVIDIA GTX 1080 Founders Edition Review

Thank you to ASUS for providing us with their GTX 1060 6GB Strix GPU. To complete the high/low cases for both AMD and NVIDIA GPUs, we looked towards the GTX 1060 6GB cards to balance price and performance while giving a hefty crack at >1080p gaming in a single graphics card. ASUS offered a hand here, supplying a Strix variant of the GTX 1060. This card is even longer than our GTX 1080, with three fans and LEDs crammed under the hood. STRIX is now ASUS’ lower cost gaming brand behind ROG, and the Strix 1060 sits at nearly half a 1080, with 1280 CUDA cores but running at 1506 MHz base frequency up to 1746 MHz in OC mode. The 6 GB of GDDR5 runs at a healthy 8008 MHz across a 192-bit memory interface.

Further Reading: AnandTech’s ASUS GTX 1060 6GB STRIX Review
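As a rough illustration of what the memory figures quoted above imply, peak theoretical bandwidth can be estimated as the transfer rate multiplied by the bus width in bytes. The sketch below is only an illustration and not part of our test suite: the function name is hypothetical, and it assumes the quoted MHz values are effective per-pin transfer rates. Only cards for which both a memory speed and a bus width are given in the text are included.

```python
def memory_bandwidth_gbs(effective_mhz: float, bus_width_bits: int) -> float:
    """Peak theoretical memory bandwidth in GB/s (1 GB = 1e9 bytes).

    Assumes `effective_mhz` is the effective per-pin transfer rate,
    i.e. one transfer per pin per quoted cycle.
    """
    transfers_per_second = effective_mhz * 1e6
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_second * bytes_per_transfer / 1e9


# (effective memory speed in MHz, bus width in bits), as quoted in the text
cards = {
    "Sapphire Nitro R9 Fury 4GB (HBM)": (1000, 4096),
    "ASUS GTX 1060 6GB Strix (GDDR5)": (8008, 192),
}

for name, (mhz, bits) in cards.items():
    print(f"{name}: {memory_bandwidth_gbs(mhz, bits):.1f} GB/s")
```

These are upper bounds derived from the spec sheet numbers; sustained bandwidth in real workloads will be lower.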

Thank you to Crucial for providing us with MX200 SSDs. Crucial stepped up to the plate as our benchmark list grows larger with newer benchmarks and titles, and the 1TB MX200 units are strong performers. Based on Marvell's 88SS9189 controller and using Micron's 16nm 128Gbit MLC flash, these are 7mm high, 2.5-inch drives rated for 100K random read IOPS and 555/500 MB/s sequential read and write speeds. The 1TB models we are using here support TCG Opal 2.0 and IEEE-1667 (eDrive) encryption and have a 320TB rated endurance with a three-year warranty.

Further Reading: AnandTech's Crucial MX200 (250 GB, 500 GB & 1TB) Review

Thank you to Corsair for providing us with an AX1200i PSU. The AX1200i was the first power supply to offer digital control and management via Corsair's Link system, but under the hood it commands a 1200W rating at 50C with 80 PLUS Platinum certification. This allows for a minimum 89-92% efficiency at 115V and 90-94% at 230V. The AX1200i is completely modular, running the larger 200mm design, with a dual ball bearing 140mm fan to assist high-performance use. The AX1200i is designed to be a workhorse, with up to 8 PCIe connectors for suitable four-way GPU setups. The AX1200i also comes with a Zero RPM mode for the fan, which due to the design allows the fan to be switched off when the power supply is under 30% load.

Further Reading: AnandTech's Corsair AX1500i Power Supply Review
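To put the AX1200i efficiency figures in context, the power drawn from the wall is the DC output divided by the efficiency, and the Zero RPM threshold is 30% of the rated output. The following is a minimal sketch with hypothetical function names. It assumes the 89% and 92% figures can be applied directly at the full 1200W output, which is a simplification, since efficiency varies with load.

```python
def wall_draw_watts(dc_load_w: float, efficiency: float) -> float:
    """AC power drawn from the wall for a given DC load and efficiency (0-1]."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    return dc_load_w / efficiency


def fan_can_stop(dc_load_w: float, rated_w: float = 1200.0,
                 threshold: float = 0.30) -> bool:
    """True when the load is below the Zero RPM threshold (30% of rated output)."""
    return dc_load_w < rated_w * threshold


RATED_W = 1200.0
for eff in (0.89, 0.92):  # the 115V efficiency range quoted above
    draw = wall_draw_watts(RATED_W, eff)
    print(f"{eff:.0%} efficient at {RATED_W:.0f} W output: "
          f"{draw:.0f} W from the wall, {draw - RATED_W:.0f} W lost as heat")

# A 300 W system load sits under the 360 W (30%) threshold
print("Fan may stop at 300 W load:", fan_can_stop(300.0))
```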

Thank you to G.Skill for providing us with memory. G.Skill has been a long-time supporter of AnandTech over the years, for testing beyond our CPU and motherboard memory reviews. We've reported on their high capacity and high-frequency kits, and every year at Computex G.Skill holds a world overclocking tournament with liquid nitrogen right on the show floor.

Further Reading: AnandTech's Memory Scaling on Haswell Review, with G.Skill DDR3-3000

176 Comments

iwod - Monday, July 24, 2017

Intel has 10nm and 7nm by 2020 / 2021. Core Count is basically a solved problem, limited only by price. What we need is a substantial breakthrough in single thread performance. May be there are new material that could bring us 10+Ghz. But those aren't even on the 5 years roadmap.

mapesdhs - Monday, July 24, 2017

That's more down to better sw tech, which alas lags way behind. It needs skills that are largely not taught in current educational establishments.

wolfemane - Monday, July 24, 2017

Under Handbrake testing, just above the first graph you state: "Low Quality/Resolution H264: He we transcode a 640x266 H264 rip of a 2 hour film, and change the encoding from Main profile to High profile, using the very-fast preset."

I think you mean to say "HERE we transcode..."

Great article overall. Thank you!

Ian Cutress - Monday, July 24, 2017

Thanks, corrected :)

wolfemane - Monday, July 24, 2017

I wish your team would finally add in an edit button to comments! :)

On the last graph ENCODING: Handbrake HEVC (4k) you don't list the 1800x, but it is present in the previous two graphs @ LQ and HQ. Was there an issue with the 1800x preventing 4k testing? Quite interested in it's results if you have them.

Ian Cutress - Monday, July 24, 2017

When I first did the HEVC testing for the Ryzen 7 review, there was a slight issue in it running and halfway through I had to change the script because the automation sometimes dropped a result (like the 1800X which I didn't notice until I was 2-3 CPUs down the line). I need to put the 1800X back on anyway for AGESA 1006, which will be in an upcoming article.

IanHagen - Monday, July 24, 2017

One thing that caught my eye for a while is how compile tests using GCC or clang show much better results on Ryzen compared to using Microsoft's VS compiler. Phoronix tests clearly shows that. Thus, I cannot really believe yet on Ian's recurring explanation of Ryzen suffering from its victim L3 cache. After all, the 1800X beats the 7700K by a sizable margin when compiling the Linux kernel.

Isn't Ryzen relatively poor performance compiling Chromium due to idiosyncrasies of the VS compiler?

Ian Cutress - Monday, July 24, 2017

The VS compiler seems to love L3 cache, then. The 1800X does have 2x threads and 2x cores over the 7700K, accounting for the difference. We saw a -17% drop going from SKL-S with its fully inclusive L3 to SKL-SP with a victim L3, clock for clock.

Chromium was the best candidate for a scripted, consistent compile workflow I could roll into our new suite (and runs on Windows). Always open for suggestions that come with an ELI5.

ddriver - Monday, July 24, 2017

So we are married to chromium, because it only compiles with msvc on windows?

Or maybe because it is a shitty implementation that for some reason stacks well with intel's offerings?

Pardon my ignorance, I've only been a multi-platform software developer for 8 years, but people who compile stuff a lot usually don't compile chromium all day.

I'd say go GCC or Clang, because those are quality community drive open source compilers that target a variety of platforms, unlike msvc. I mean if you really want to illustrate the usefulness of CPUs for software developers, which at this point is rather doubtful...

Ian Cutress - Monday, July 24, 2017

Again, find me something I can rope into my benchmark suite with an ELI5 guide and I'll try and find time to look into it. The Chromium test took the best part of 2-3 days to get in a position where it was scripted and repeatable and fit with our workflow - any other options I examined weren't even close. I'm not a computer programmer by day either, hence the ELI5 - just years-old knowledge of using Commodore BASIC, batch files, and some C/C++/CUDA in VS.