Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

Closing Thoughts

First of all, we have to emphasize that we were only able to spend about a week on the AMD server, and about two weeks on the Intel system. With the complexity of both server hardware and especially server software, that is very little time. There is still a lot to test and tune, but the general picture is clear.

We can continue to talk about Intel's excellent mesh topology and AMD's strong new Zen architecture, but at the end of the day, the "how" will not matter to infrastructure professionals. Depending on your situation, performance, performance-per-watt, and/or performance-per-dollar are what matter.

The current Intel pricing draws the first line. If performance-per-dollar matters to you, AMD's EPYC pricing is very competitive for a wide range of software applications. With the exception of database software and vectorizable HPC code, AMD's EPYC 7601 ($4200) performs slightly worse or slightly better than Intel's Xeon 8176 ($8000+), depending on the workload. However, the real competitor is probably the Xeon 8160, which has 4 fewer cores (-14%) and slightly lower turbo clocks (-100 or -200 MHz). We expect that this CPU will offer about 15% lower performance than the 8176, and yet it still costs about $500 more ($4700) than the best EPYC. Of course, everything will depend on the final server system price, but it looks like AMD's new EPYC will put some serious performance-per-dollar pressure on the Intel line.
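To make the pricing argument concrete, here is a rough back-of-the-envelope sketch in Python. The prices are the ones quoted above. The relative performance figures are assumptions for illustration only, not measurements: the Xeon 8176 is normalized to 1.00, the EPYC 7601 is assumed to be at parity (the text says it lands slightly below or slightly above), and the Xeon 8160 is set to 0.85 to reflect the estimated 15% deficit. The `Cpu` class and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cpu:
    name: str
    price_usd: float  # CPU prices quoted in the text ($8000+ taken as 8000)
    rel_perf: float   # ASSUMED performance relative to the Xeon 8176 (= 1.00)

    @property
    def perf_per_kilodollar(self) -> float:
        """Relative performance bought per 1000 USD of CPU price."""
        return self.rel_perf / self.price_usd * 1000


# Relative performance values are illustrative assumptions, not benchmark results.
cpus = [
    Cpu("EPYC 7601", 4200, 1.00),  # assumed parity with the 8176
    Cpu("Xeon 8176", 8000, 1.00),  # baseline
    Cpu("Xeon 8160", 4700, 0.85),  # text estimates about 15% lower performance
]

baseline = next(c for c in cpus if c.name == "Xeon 8176").perf_per_kilodollar
ranked = sorted(cpus, key=lambda c: c.perf_per_kilodollar, reverse=True)

for cpu in ranked:
    ratio = cpu.perf_per_kilodollar / baseline
    print(f"{cpu.name}: {cpu.perf_per_kilodollar:.3f} perf per $1000 "
          f"({ratio:.2f}x the Xeon 8176)")
```

Under these assumptions the EPYC 7601 delivers roughly 1.9 times the performance-per-dollar of the Xeon 8176 and about 1.3 times that of the Xeon 8160. The numbers cover CPU price only, and as noted above, the final server system price will shift them.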

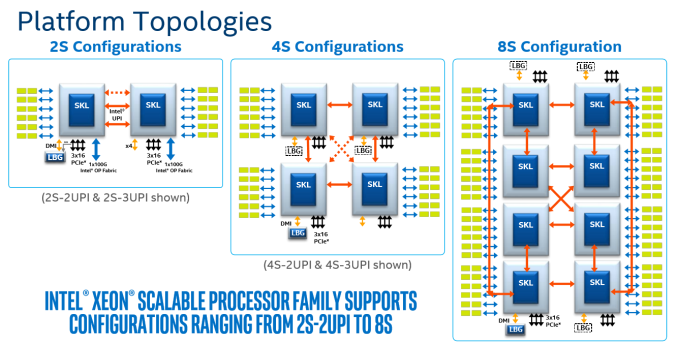

The Intel chip is indeed able to scale up to 8-socket systems, but frankly that market is shrinking fast, and dual-socket buyers could not care less.

Meanwhile, although we have yet to test it, AMD's single-socket offering looks even more attractive. We estimate that a single EPYC 7551P would indeed outperform many of the dual Silver Xeon solutions. Overall, the single-socket EPYC gives you about 8 more cores at similar clock speeds than the 2P Intel setup, and AMD doesn't require explicit cross-socket communication, so the server board gets simpler and thus cheaper. For price-conscious server buyers, this is an excellent option.

However, if your software is expensive, everything changes. In that case, you care less about the heavy price tags of the Platinum Xeons. For those scenarios, Intel's Skylake-SP Xeons deliver the highest single-threaded performance (courtesy of the 3.8 GHz turbo clock), high throughput without much (hardware) tuning, and server managers get the reassurance of Intel's reliable track record. And if you use expensive HPC software, you will probably get the benefits of Intel's beefy AVX2 and/or AVX-512 implementations.

The second consideration is the type of buyer. It is clear that you have to tune more and work harder to get the best performance out of AMD EPYC CPUs. In many ways it is basically a "virtual octal socket" solution. For enterprises with a small infrastructure crew and server hardware on premises, spending time on hardware tuning is not an option most of the time. For the cloud vendors, the knowledge will be available and tuning for EPYC will be a one-time investment. Microsoft is already deploying AMD's EPYC in their Azure Cloud Datacenters.

Looking Towards the Future

Looking towards the future, Intel has the better topology to add more cores in future CPU generations. However, AMD's newest core is a formidable opponent. Scalar floating point operations are clearly faster on the AMD core, and integer performance is, at the same clock, on par with Intel's best. The dual CCX layout and quad die setup leave quite a bit of performance on the table, so it will be interesting to see how much AMD has learned from this when they launch the 7 nm "Rome" successor. Their SKU line-up is still very limited.

All in all, it must be said that AMD executed very well and delivered a new server CPU that can offer competitive performance for a lower price point in some key markets. Server customers with non-scalar sparse matrix HPC and Big Data applications should especially take notice.

As for Intel, the company has delivered a very attractive and well-scaling product. But some of the technological advances in Skylake-SP are overshadowed by the heavy price tags and somewhat "over the top" market segmentation.

219 Comments

CajunArson - Tuesday, July 11, 2017 - link

Would a high-end server that was built in 2014 necessarily update? Maybe not.

Should a high-end server with a brand new microarchitecture use the most recent version of the software if it has any expectation of seeing a real benefit? Absolutely.

If this was a GPU review and Anandtech used 2 year old drivers on a new GPU (assuming they even worked at all) we wouldn't even be having this conversation.

BrokenCrayons - Tuesday, July 11, 2017 - link

Home users playing video games are in a different environment than you find in a business datacenter. There's a lot less money to be lost when a driver update causes a performance regression or eliminates a feature. Conversely, needlessly updating software in the aforementioned datacenter can result in the loss of many millions if something goes wrong.

wallysb01 - Tuesday, July 11, 2017 - link

Conversely, having stuff working, but unnecessarily slowly, costs money as well. It's a balance, and if you're spending hundreds of thousands or even millions on a cluster/data center/what have you, you'd probably want to spend at least a little bit of time optimizing it, right?

Icehawk - Tuesday, July 11, 2017 - link

Most of the businesses I have worked for, ranging from 10 people to 50k, use severely outdated software and the barest minimum of patching. Optimization? HA!

For example I work for a manufacturer & retailer currently, our POS system was last patched in 2012 by the vendor and has been replaced by at least two versions newer. We have XP machines in each of our stores as that is the only OS that can run the software.

The above is very typical. The 50k company I worked for had software so old and deeply entrenched that modernizing it is virtually impossible. My current company is working on getting to a new product... that was new in 2012 and has also been replaced with a newer version. Whee!

Icehawk - Tuesday, July 11, 2017 - link

One other thing - maybe the big shops actually do test/size but none of the places I have worked at and have been involved in do any testing, benchmarking, etc. They just buy whatever their preferred vendor gives them that meets the budget and they *think* will work. My coworker is in charge (lol) of selecting servers for a new office... he has no clue what anything in this article is. He has never read a single review, overview, or test of a processor. I could keep going on like this :(

0ldman79 - Wednesday, July 12, 2017 - link

Icehawk's comments are so accurate it is scary.

I can't tell you how many businesses are running custom *nix software in a VM on a Windows server.

They're not all about speed. Reliability is the single most important factor, speed is somewhere down the line. The people that make those decisions and the people that drink coffee while they're waiting on the machines are very different.

Neither understand that it could all be done so much better and almost all of them are utterly terrified at the concept of speeding up the process if it means *any* changes are made.

JohanAnandtech - Friday, July 21, 2017 - link

We did test with NAMD 2.12 (Dec 2016).

sutamatamasu - Tuesday, July 11, 2017 - link

Glad AMD is back in this segment. Now we can only wait and see what Raja can do for the server market with Radeon Instinct.

Kaotika - Tuesday, July 11, 2017 - link

So this confirms that the previous information regarding Skylake-X core configurations was wrong, and 12-core variant is in fact using HCC-core instead of LCC-core?

Ian Cutress - Tuesday, July 11, 2017 - link

We corrected that in our Skylake-X review.