Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

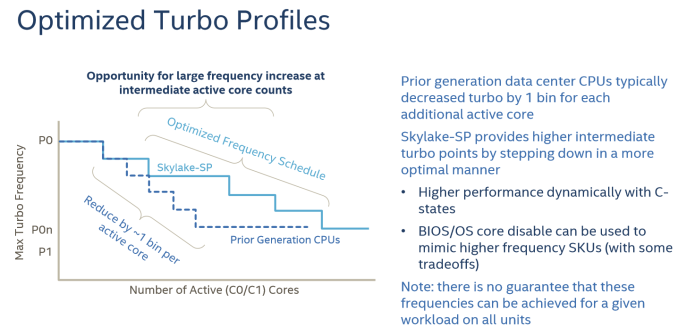

Intel's Optimized Turbo Profiles

New to Skylake-SP, Intel has also further enhanced turbo boosting.

There are also some security and virtualization enhancements (MBE, PPK, MPX), but these are beyond the scope of this article as we don't test them.

Summing It All Up: How Skylake-SP and Zen Compare

The table below shows you the differences in a nutshell.

| | AMD EPYC 7000 | Intel Skylake-SP | Intel Broadwell-EP |
|---|---|---|---|
| Package & Dies | Four dies in one MCM | Monolithic | Monolithic |
| Die size | 4x 195 mm² | 677 mm² | 456 mm² |
| On-Chip Topology | Infinity Fabric (1-Hop Max) | Mesh | Dual Ring |
| Socket configuration | 1-2S | 1-8S ("Platinum") | 1-2S |
| Interconnect (Max.) / Bandwidth (*) (Max.) | 4x16 (64) PCIe lanes, 4x 37.9 GB/s | 3x UPI 20 lanes, 3x 41.6 GB/s | 2x QPI 20 lanes, 2x 38.4 GB/s |
| TDP | 120-180W | 70-205W | 55-145W |
| Cores | 8-32 | 4-28 | 4-22 |
| LLC (max.) | 64MB (8x8 MB) | 38.5 MB | 55 MB |
| Max. Memory | 2 TB | 1.5 TB | 1.5 TB |
| Memory subsystem / Fastest sup. DRAM | 8 channels DDR4-2666 | 6 channels DDR4-2666 | 4 channels DDR4-2400 |
| PCIe Per CPU in a 2P | 64 PCIe (available) | 48 PCIe 3.0 | 40 PCIe 3.0 |

(*) total bandwidth (bidirectional)
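As a quick sanity check on the interconnect row, the per-link figures can be multiplied out into socket-to-socket totals. The sketch below only uses the link counts and per-link bandwidths from the table. The per-direction value assumes the links are symmetric, which is our assumption and not something the table states; the function name is made up for illustration.

```python
# (link count, GB/s per link, bidirectional) copied from the table above.
LINKS = {
    "AMD EPYC 7000": (4, 37.9),
    "Intel Skylake-SP": (3, 41.6),
    "Intel Broadwell-EP": (2, 38.4),
}


def aggregate_bandwidth(link_count, per_link_gbs):
    """Total bidirectional bandwidth across all socket-to-socket links."""
    return link_count * per_link_gbs


for name, (count, per_link) in LINKS.items():
    total = aggregate_bandwidth(count, per_link)
    # ASSUMPTION: symmetric links, so one direction gets half the total.
    print(f"{name}: {total:.1f} GB/s total, {total / 2:.1f} GB/s per direction")
```

These are theoretical maximums; delivered bandwidth will be lower.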

At a high level, I would argue that Intel has the most advanced multi-core topology, as they're capable of integrating up to 28 cores in a mesh. The mesh topology will allow Intel to add more cores in future generations while scaling consistently in most applications. The last level cache has a decent latency and can accommodate applications with a massive memory footprint. The latency difference between accessing a local L3-cache chunk and one further away is negligible on average, allowing the L3-cache to be a central storage for fast data synchronization between the L2-caches. However, the highest performing Xeons are huge, and thus expensive to manufacture.
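To illustrate why a huge die is expensive, here is a rough sketch using the textbook Poisson yield model, Y = exp(-D x A), applied to the die sizes from the table. The defect density is a made-up illustrative value, not a figure from Intel, AMD, or any foundry. The model also ignores salvaging partially defective dies, wafer-edge losses, and packaging cost, so treat the output as a trend rather than a cost estimate.

```python
import math

# ASSUMPTION: illustrative defect density, not a published figure for any fab.
DEFECTS_PER_CM2 = 0.1


def poisson_yield(die_area_mm2, defects_per_cm2=DEFECTS_PER_CM2):
    """Fraction of defect-free dies under the Poisson model Y = exp(-D * A)."""
    return math.exp(-defects_per_cm2 * die_area_mm2 / 100.0)  # mm^2 -> cm^2


def silicon_per_good_package(die_area_mm2, dies_per_package=1):
    """Wafer area (mm^2) consumed per working package.

    ASSUMPTION: dies are tested individually before assembly, so a bad
    die costs only its own area and not the whole package.
    """
    return dies_per_package * die_area_mm2 / poisson_yield(die_area_mm2)


# Die sizes from the table above.
for name, area, dies in [
    ("AMD EPYC 7000", 195, 4),
    ("Intel Skylake-SP", 677, 1),
    ("Intel Broadwell-EP", 456, 1),
]:
    print(
        f"{name}: yield {poisson_yield(area):.0%}, "
        f"{silicon_per_good_package(area, dies):.0f} mm^2 per good package"
    )
```

The comparison is sensitive to the assumed defect density: as it approaches zero, the four smaller dies simply add up to more silicon (780 mm²) than the single 677 mm² die.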

AMD's MCM approach is much cheaper to manufacture. Peak memory bandwidth and capacity are quite a bit higher with 4 dies and 2 memory channels per die. However, there is no central last level cache that can perform low latency data coordination between the L2-caches of the different cores (except inside one CCX). The eight 8 MB L3-caches act like relatively low latency spill-over caches for the 32 L2-caches on one chip.
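The peak memory bandwidth gap follows directly from the channel counts and DRAM speeds in the table. A minimal sketch, assuming the standard 64-bit (8-byte) DDR4 channel and the nominal transfer rate in the DIMM name; the function name is ours, and the results are theoretical per-socket peaks, not measured throughput.

```python
BYTES_PER_TRANSFER = 8  # a DDR4 channel has a 64-bit data bus


def peak_memory_bandwidth_gbs(channels, megatransfers_per_s):
    """Theoretical peak bandwidth per socket in GB/s."""
    return channels * megatransfers_per_s * BYTES_PER_TRANSFER / 1000


# (channels, MT/s) from the memory subsystem row of the table above.
CONFIGS = {
    "AMD EPYC 7000": (8, 2666),
    "Intel Skylake-SP": (6, 2666),
    "Intel Broadwell-EP": (4, 2400),
}

for name, (channels, rate) in CONFIGS.items():
    print(f"{name}: {peak_memory_bandwidth_gbs(channels, rate):.1f} GB/s")
```

With both new platforms at DDR4-2666, the EPYC advantage over Skylake-SP is simply the 8:6 channel ratio, or about one third more theoretical bandwidth per socket.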

219 Comments


psychobriggsy - Tuesday, July 11, 2017 - link

Indeed it is a ridiculous comment, and puts the earlier crying about the older Ubuntu and GCC into context - just an Intel Fanboy. In fact Intel's core architecture is older, and GCC has been tweaked a lot for it over the years - a slightly old GCC might not get the best out of Skylake, but it will get a lot. Zen is a new core, and GCC has only recently got optimisations for it.

EasyListening - Wednesday, July 12, 2017 - link

I thought he was joking, but I didn't find it funny. So dumb.... makes me sad.

blublub - Tuesday, July 11, 2017 - link

I kinda miss Infinity Fabric on my Haswell CPU and it seems to only have on die - so why is that missing on Haswell when Ryzen is an exact copy?

blublub - Tuesday, July 11, 2017 - link

You actually sound similar to JuanRGA at SA

Kevin G - Wednesday, July 12, 2017 - link

@CajunArson The cache hierarchy is radically different between these designs as well as the port arrangement for dispatch. Scheduling on Ryzen is split between execution resources whereas Intel favors a unified approach.

bill.rookard - Tuesday, July 11, 2017 - link

Well, that is something that could be figured out if they (anandtech) had more time with the servers. Remember, they only had a week with the AMD system, and much like many of the games and such, optimizing is a matter of run test, measure, examine results, tweak settings, rinse and repeat. Considering one of the tests took 4 hours to run, having only a week to do this testing means much of the optimization is probably left out. They went with a 'generic' set of relative optimizations in the interest of time, and these are the (very interesting) results.

CoachAub - Wednesday, July 12, 2017 - link

Benchmarks just need to be run on as level a field as possible. Intel has controlled the market so long, software leans their way. Who was optimizing for Opteron chips in 2016-17? ;)

theeldest - Tuesday, July 11, 2017 - link

The compiler used isn't meant to be the most optimized, but instead it's trying to be representative of actual customer workloads. Most customer applications in normal datacenters (not google, aws, azure, etc) are running binaries that are many years behind on optimizations.

So, yes, they can get better performance. But using those optimizations is not representative of the market they're trying to show numbers for.

CajunArson - Tuesday, July 11, 2017 - link

That might make a tiny bit of sense if most of the benchmarks run were real-world workloads and not C-Ray or POV-Ray. The most real-world benchmark in the whole setup was the database benchmark.

coder543 - Tuesday, July 11, 2017 - link

The one benchmark that favors Intel is the "most real-world"? Absolutely, I want AnandTech to do further testing, but your comments do not sound unbiased.