Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

Floating Point

Normally our HPC benchmarking is centered around OpenFOAM, a CFD package we have used for a number of articles over the years. However, since we moved to Ubuntu 16.04, we have not been able to get it to work. So we decided to change our floating point intensive benchmarks for now: for this article, we're testing with C-ray, POV-Ray, and NAMD.

The idea is to measure:

- An FP benchmark that runs out of the L1 (C-ray)

- An FP benchmark that runs out of the L2 (POV-Ray)

- And one that uses the memory subsystem quite often (NAMD)
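To make that three-way split concrete, here is a minimal sketch that reports the smallest per-core cache level able to hold a given working set. The cache capacities are assumptions taken from public spec sheets (32 KB L1 data cache on both cores, 1 MB L2 on Skylake-SP, 512 KB L2 on Zen), and the working-set sizes in the loop are hypothetical; they are not measurements of C-ray, POV-Ray, or NAMD.

```python
# Per-core data cache capacities in bytes. These values are assumptions
# taken from public spec sheets, not from measurements in this article.
CACHES = {
    "Skylake-SP": [("L1", 32 * 1024), ("L2", 1024 * 1024)],
    "Zen": [("L1", 32 * 1024), ("L2", 512 * 1024)],
}


def fits_in(working_set_bytes, core):
    """Return the smallest cache level that holds the working set."""
    for level, capacity in CACHES[core]:
        if working_set_bytes <= capacity:
            return level
    # Anything larger spills to the L3 or to DRAM.
    return "beyond L2"


if __name__ == "__main__":
    # Hypothetical working-set sizes in KB, for illustration only.
    for size_kb in (16, 256, 768, 65536):
        for core in CACHES:
            print(f"{size_kb:>6} KB on {core}: {fits_in(size_kb * 1024, core)}")
```

A benchmark whose hot data lands in the first category mostly stresses the FP units themselves, while one in the last category also depends on the L3 and the memory subsystem.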

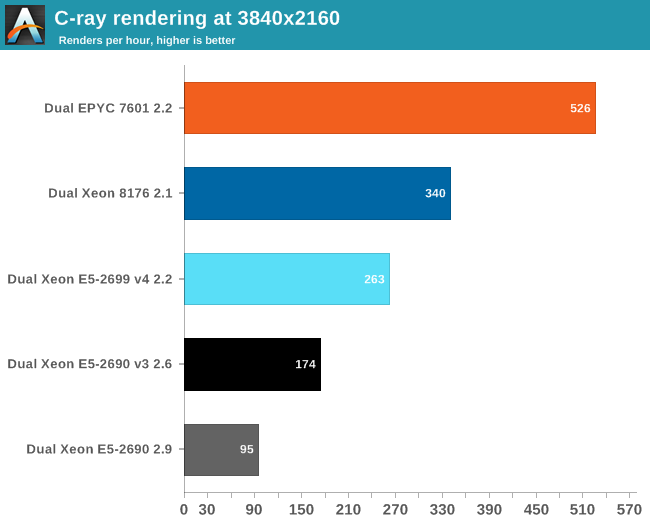

Floating Point: C-ray

C-ray is an extremely simple ray-tracer which is not representative of any real world raytracing application. In fact, it is essentially a floating point benchmark that runs out of the L1-cache. Luckily it is not as synthetic and meaningless as Whetstone, as you can actually use the software to do simple raytracing.

We use the standard benchmarking resolution (3840x2160) and the "sphfract" file to measure performance. The binary was precompiled.

Wow. What just happened? It looks like a landslide victory for the raw power of the four FP pipes of Zen: the EPYC chip is no less than 50% faster than the competition. Of course, it is easy to feed FP units if everything resides in the L1. Next stop, POV-Ray.

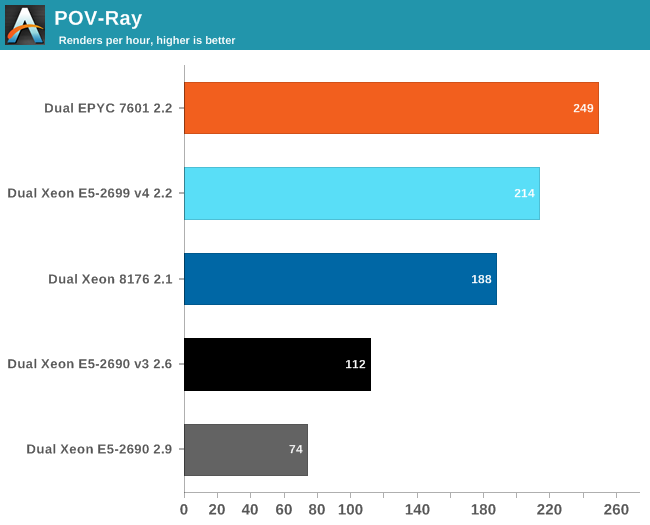

Floating Point: POV-Ray 3.7

POV-Ray is known to run mostly out of the L2-cache, so the massive DRAM bandwidth of the EPYC CPU does not play a role here. Nevertheless, the EPYC CPU performance is pretty stunning: about 16% faster than Intel's Xeon 8176. But what if AVX and DRAM access come into play? Let us check out NAMD.

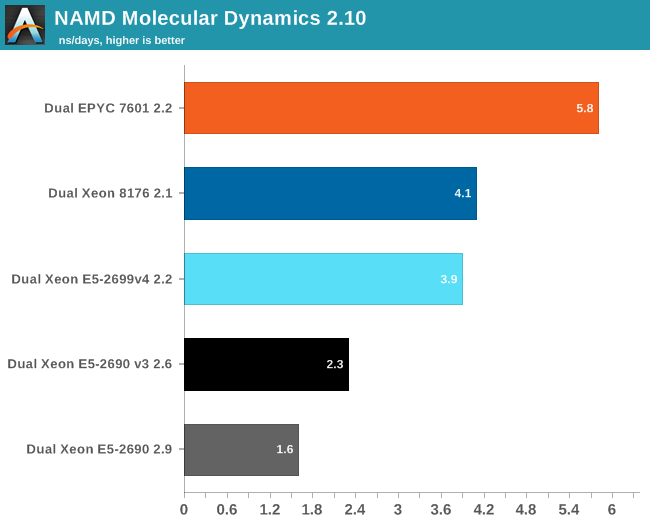

Floating Point: NAMD

Developed by the Theoretical and Computational Biophysics Group at the University of Illinois at Urbana-Champaign, NAMD is a set of parallel molecular dynamics codes for extreme parallelization on thousands of cores. NAMD is also part of SPEC CPU2006 FP. In contrast with the previous two FP benchmarks, the NAMD binary is compiled with Intel ICC and optimized for AVX.

First, we used the "NAMD_2.10_Linux-x86_64-multicore" binary. We used the most popular benchmark load, apoa1 (Apolipoprotein A1). The results are expressed in simulated nanoseconds per wall-clock day. We measure at 500 steps.
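As a rough illustration of the metric, the sketch below converts a measured wall-clock time per simulation step into simulated nanoseconds per day. The function name and the default timestep of 1 fs are assumptions for illustration; the actual timestep is set in the benchmark's input configuration and should be checked there.

```python
SECONDS_PER_DAY = 86_400
FEMTOSECONDS_PER_NANOSECOND = 1_000_000


def ns_per_day(seconds_per_step, timestep_fs=1.0):
    """Convert wall-clock seconds per step into simulated ns per day.

    timestep_fs is the simulated time covered by one step; the 1 fs
    default is an assumption, not a value taken from the article.
    """
    if seconds_per_step <= 0:
        raise ValueError("seconds_per_step must be positive")
    steps_per_day = SECONDS_PER_DAY / seconds_per_step
    return steps_per_day * timestep_fs / FEMTOSECONDS_PER_NANOSECOND


if __name__ == "__main__":
    # Hypothetical timing: 500 steps completed in 20 seconds of wall-clock time.
    print(ns_per_day(20.0 / 500))
```

Because the metric is inversely proportional to the time per step, halving the step time doubles the score, so higher is better.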

Again, the EPYC 7601 simply crushes the competition with 41% better performance than Intel's 28-core. Heavily vectorized code (like Linpack) might run much faster on Intel, but other FP code seems to run faster on AMD's newest FPU.

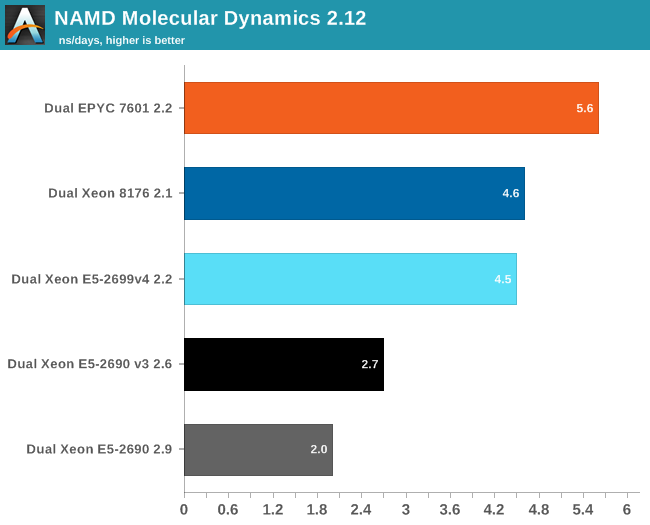

For our first shot with this benchmark, we used version 2.10 to be able to compare to our older data set. Version 2.12 promises better results on Intel hardware: "Intel's compiler vectorization and auto-dispatch has improved performance for Intel processors supporting AVX instructions". So let's try again:

The older Xeons see a performance boost of about 25%. The improvement on the new Xeons is a lot lower: about 13-15%. Remarkably, the new binary is about 4% slower on the EPYC 7601. That simply begs for more investigation, but the deadline was too close. Nevertheless, three different FP tests all point in the same direction: the Zen FP unit might not have the highest "peak FLOPs" in theory, but there is lots of FP code out there that runs best on EPYC.
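The percentages quoted for the two NAMD binaries are relative changes in the ns/day score. A minimal sketch of that arithmetic follows; the scores used are hypothetical placeholders chosen to reproduce a 25% gain and a 4% loss, not the measured results from the charts.

```python
def percent_change(old_score, new_score):
    """Relative change from old_score to new_score, in percent.

    Positive means the new binary is faster (higher ns/day).
    """
    if old_score <= 0:
        raise ValueError("old_score must be positive")
    return (new_score - old_score) / old_score * 100.0


if __name__ == "__main__":
    # Hypothetical ns/day scores, for illustration only.
    print(f"{percent_change(2.00, 2.50):+.1f}%")  # a gain
    print(f"{percent_change(2.00, 1.92):+.1f}%")  # a regression
```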

219 Comments

ddriver - Tuesday, July 11, 2017

Gotta love the "you don't care about the xeon prices" part thou. Now that intel don't have a performance advantage, and their product value at the high end is half that of amd, AT plays the "intel is the better brand" card. So expected...

OZRN - Wednesday, July 12, 2017

You need some perspective. Database licensing for Oracle happens per core, where Intel's performance is frequently better in a straight line and since they achieve it on lower core count it's actually better value for the use case. Higher per-CPU cost is not so much of a concern when you pay twice as much for a processor license to cover those cores.

I'm an AMD fan and I made this account just for you, sweetheart, but don't blind yourself to the truth just because Intel has a history of shady business. In most regards this is a balanced review, and where it isn't, they tell you why it might not be. Chill out.

ddriver - Thursday, July 13, 2017

You are such a clown. Nobody, I repeat, NOBODY on this planet uses 64 core 128 thread 512 gigabytes of ram servers to run a few MB worth of database. You telling me to get perspective thus can mean only two things, that you are a butthurt intel fanboy troll or that you are in serious need of head examination. Or maybe even both. At any rate, that perfectly explains your ridiculously low standards for "balanced review".

Notmyusualid - Friday, July 14, 2017

It seems no matter what opinion someone presents that might exhibit Intel in a better light - you are going to hate it anyway.

What a life you must lead.

OZRN - Friday, July 14, 2017

No, they don't. They use them to host gigabytes to terabytes worth of mission critical databases, with specified amounts of cores dedicated to separate environments of hard partitioned data manipulation. I've done some quick math for you and in an average setup of Enterprise Edition of Oracle DB, with only the usually reported options and extras, this type of database would cost over $3.7m to run on *64 cores alone*. At this point, where is your hardware sunk costs argument?

Also, I don't think anyone here is impressed by your ability to immediately personally insult people making valid points. Good luck finding your head that deep in your colon.

CajunArson - Tuesday, July 11, 2017

"All of our testing was conducted on Ubuntu Server "Xenial" 16.04.2 LTS (Linux kernel 4.4.0 64 bit). The compiler that ships with this distribution is GCC 5.4.0."

I'd recommend using a more updated distro and especially a more up to date compiler (GCC 5.4 is only a bug-fix release of a compiler from *2015*) if you want to see what these parts are truly capable of.

Phoronix does heavy-duty Linux reviews and got some major performance boosts on the i9 7900X simply by using up to date distros: http://www.phoronix.com/scan.php?page=article&...

Considering that Purley is just an upscaled version of the i9 7900X, I wouldn't be surprised to see different results.

CajunArson - Tuesday, July 11, 2017

As a followup to my earlier comment, that Phoronix story, for example, shows a speedup factor of almost 5X on the C-ray benchmark simply by using a modern distro with some tuning for the more modern Skylake architecture.

I'm not saying Purley would have a 5X speedup on C-ray per se, but I'd be shocked if it didn't get a good boost using modern software that's actually designed for the Skylake architecture.

CoachAub - Wednesday, July 12, 2017

Keywords: "actually designed for the Skylake architecture". Will there be optimizations for AMD Epyc chips?

mkozakewich - Friday, July 14, 2017

If it's a reasonable optimization, it makes sense to include it in the benchmark. If I were building these systems, I'd want to see benchmarks that resembled as closely as possible my company's workflow. (Which may be for older software or newer software; neither are inherently more relevant, though benchmarks on newer software will usually be relevant further into the future.)

CajunArson - Tuesday, July 11, 2017

And another followup: The timed kernel compilation on the i9 7900X got almost a factor of 2 speedup over Ubuntu 16.04 using more modern distros.