Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Intel’s Turbo Modes

A last minute detail from Intel yesterday was information on the Turbo modes. As expected, not all of the processors actually run at their rated/base frequency: most will apply a series of turbo modes depending on how many cores are registered as ‘active’. Each core can have its frequency adjusted independently, allowing VMs to take advantage of different workload types and not be hamstrung by occupants on other VMs in the same socket. This becomes important when AVX, AVX2 and AVX-512 are being used at the same time.

Most of the turbo modes are a sliding scale, with the peak turbo used when only one or two cores are active, sliding down to a minimum frequency that may be the ‘base’ frequency or just above it. There’s a lot of information for the parts here, so we’ll break it down into stages.
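To make the sliding scale concrete, here is a minimal sketch of how a turbo table could be looked up by active core count and instruction mix. The function name `max_turbo_ghz`, the `EXAMPLE_BINS` table and every bin boundary in it are assumptions made for illustration; they are not Intel's published values. Only the 2.3 GHz all-core AVX-512 figure echoes a number quoted in this article for the Platinum 8180.

```python
from bisect import bisect_left

# Hypothetical turbo table: each entry is (largest active-core count covered
# by this bin, frequency ceiling in GHz). The values are placeholders chosen
# to show the sliding-scale shape, NOT Intel's published bins.
EXAMPLE_BINS = {
    "non_avx": [(2, 3.8), (4, 3.6), (8, 3.5), (28, 3.2)],
    "avx512": [(2, 3.5), (4, 3.3), (12, 2.9), (28, 2.3)],
}


def max_turbo_ghz(active_cores, mode, bins=EXAMPLE_BINS):
    """Return the turbo ceiling (GHz) for a given number of active cores."""
    table = bins[mode]
    limits = [limit for limit, _ in table]
    if not 1 <= active_cores <= limits[-1]:
        raise ValueError(f"active_cores must be between 1 and {limits[-1]}")
    # Find the first bin whose upper limit is >= the active core count.
    index = bisect_left(limits, active_cores)
    return table[index][1]


if __name__ == "__main__":
    for cores in (1, 2, 3, 13, 28):
        print(cores, max_turbo_ghz(cores, "non_avx"), max_turbo_ghz(cores, "avx512"))
```

The value returned is a ceiling rather than a promise, which matches Intel describing these figures as maximum core frequencies.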

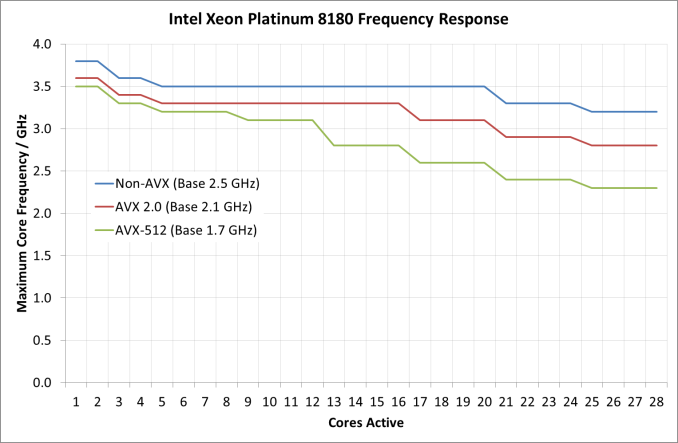

First up, a look at the Platinum 8180 in the different modes:

It is worth noting what the base frequency actually is, and some of the nuance in Intel’s wording here. The base frequency is the guaranteed frequency of the chip: Intel sells the chip with the base frequency as the guarantee, such that when the chip is not idle and is running under normal conditions (i.e. not in a thermal power state to reduce temperature), it should operate at this frequency or above it. Intel also lists the per-core turbo frequencies as ‘Maximum Core Frequencies’, indicating that the processors could be running lower than listed, depending on power distribution and requirements in other areas of the chip (such as the uncore or memory controller). It’s a vague set of terms, but ultimately the frequency is determined on the fly and can be affected by many factors; Intel guarantees a certain amount and provides guidance as to what it expects the turbo frequencies to be.

As for the Platinum 8180, it keeps its top turbo modes while up to two cores are active, and then drops down. It does this again for another two cores, and a further two cores. From this point, under non-AVX load the CPU holds pretty much the same frequency until more than 20 cores are loaded, and even then it does not decrease by much. For AVX 2.0 and AVX-512, the downward slope continues as more cores are loaded, with AVX-512 taking a bigger jump down at 13 cores loaded. The final turbo frequency for AVX-512 running on all cores is 2.3 GHz.

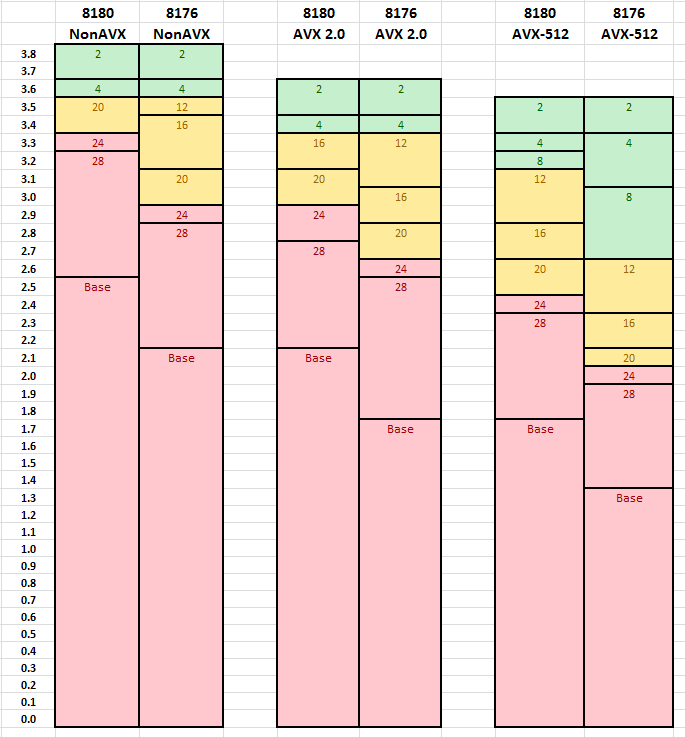

Comparing the two 28-core CPUs for which we have turbo information gives this graph. The numbers relate to the number of cores that need to be loaded for that frequency.

Both processors are equal to each other for dual core loading, but the separation occurs when more cores are loaded. As we move through to AVX 2.0 and AVX-512, it is clear where the separations are in performance – to get the best for variable core loading, the more expensive processors are required.

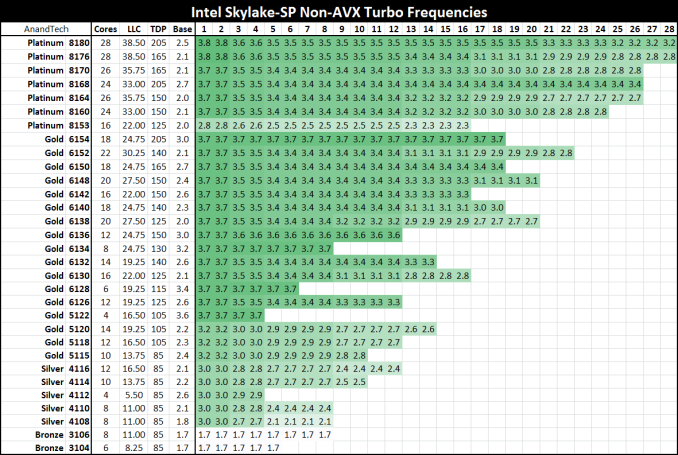

Here’s the big table for all the processors on Non-AVX loading:

Despite the 2.0/2.1 GHz base on most of the Platinum series, all the CPUs will turbo up to 3.7-3.8 GHz on low core loading except for the lower power Platinum 8153. For users wanting to strike a good balance between core count and frequency, the Gold 6154 is probably the place to be: 18 cores that will only ever run at 3.7 GHz with non-AVX loading (3.5-2.7 GHz on AVX-512 depending on core count), and will be $3543 as a list price at 205W. It is perhaps worth noting that this will likely top any of the Core i9 processors planned: against 18 cores at 3.7 GHz and 205W, the Core i9-7980XE, which will also have 18 cores but run at 165W, will likely be clocked lower (but will also cost only ~$2000).
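As a rough back-of-the-envelope check on that comparison, the sketch below divides the quoted power and price figures by core count. The `Part` class and its field names are assumptions made for illustration; the inputs are the numbers quoted above, with the Core i9-7980XE price taken as the approximate ~$2000 figure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Part:
    """Hypothetical container for the figures quoted in the text."""
    name: str
    cores: int
    tdp_watts: float
    list_price_usd: float

    @property
    def watts_per_core(self):
        # Rated power budget divided evenly across cores.
        return self.tdp_watts / self.cores

    @property
    def usd_per_core(self):
        # List price divided evenly across cores.
        return self.list_price_usd / self.cores


gold_6154 = Part("Xeon Gold 6154", cores=18, tdp_watts=205, list_price_usd=3543)
# The i9 price is the article's approximate "~$2000", not an exact list price.
core_i9 = Part("Core i9-7980XE", cores=18, tdp_watts=165, list_price_usd=2000)

if __name__ == "__main__":
    for part in (gold_6154, core_i9):
        print(f"{part.name}: {part.watts_per_core:.1f} W/core, "
              f"${part.usd_per_core:.0f}/core")
```

With identical core counts, the extra 40W of rated power is the headroom that lets the Xeon hold a higher all-core clock, at a noticeably higher price per core.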

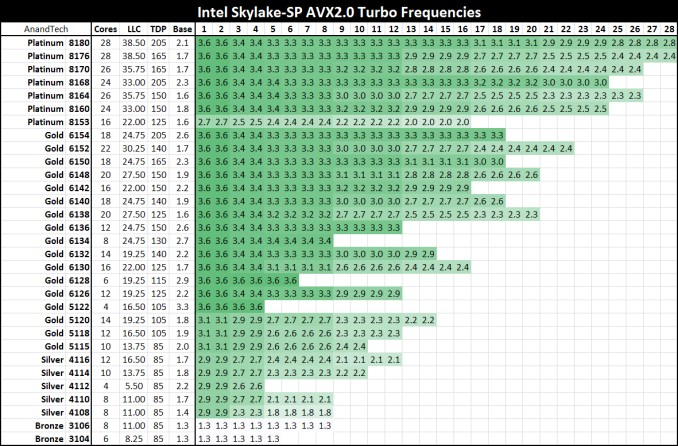

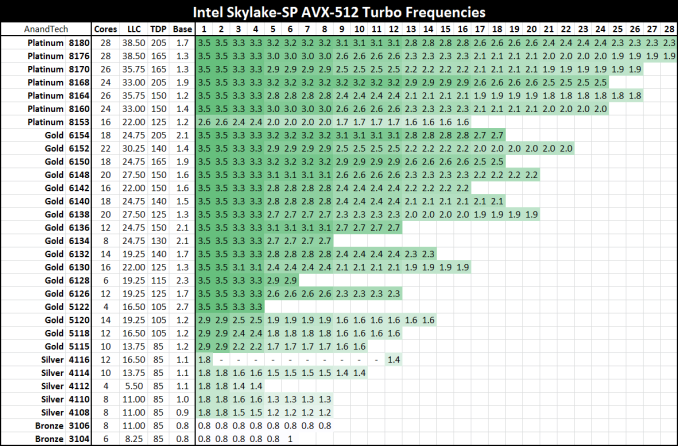

Moving onto AVX2.0 and AVX-512:

219 Comments


twtech - Thursday, July 20, 2017 - link

I'd really like to see some compile-time benchmarks for these CPUs.

For my own particular interests, time taken to do a full recompile of the Unreal 4 engine from source would be very useful. But even something more generic like the Linux kernel compiles per hour benchmark could serve as a useful point of reference.

szupek - Friday, July 21, 2017 - link

Meanwhile, the entire world still runs on IBM's DB2 for Databases and IBM's Z/AS400 Mainframes. The fastest database in the world, by far...oh and the most secure (it's only hackable by standing in front of the console, seriously). Every single credit card transaction. Every single plane ticket. Most medical records and all of wall street. Yup. IBM still owns. So much that most of commenters probably have no idea just how big IBM truly is. If you care about Database speed & security, these processors shouldn't appeal to you.

stevefan1999 - Saturday, July 22, 2017 - link

It's impossible for AMD to win completely.

Remember kids, public cloud service providers such as Amazon(AWS), Google(GCP) and Joyent would still stick with Intel due to not only the compatibility issues like ecosystem and vendor inconsistency, but also the VM migration and security and module issues, all mentioned in the presentation slides presented by Intel. They are a very serious matter, as they, the public cloud services, are powering the Internet we use everyday, so being stable, consistent and be able to serve a good amount of SLA is vital to the public cloud, we wouldn't expect them to play with the new lad in the hood, the EPYC.

IIRC only the Microsoft(Azure) are using AMD server CPUs partially in some of their datacenters, running various Linux and Windows VMs using Hyper-V, and they have been performing quite well

The cloud services are exploding every year, but with what I've said, I doubt AMD could even kick in the first door at least for 3 to 4 years. This is still a big-win for Intel and what manipulations will Intel do I don't know.

On the other hand, Intel has failed to service the desktop market and they're figuring out how to hold their asses on the Internet infrastructure, never had them know the crusade of EPYC will come this fast.

The server market is quite a big meat, it's a 21 bil market, cool right? But that you will have guaranteed 'server upgrade' every year, is a bigger matter, as those server CPUs are designated to be disposed given the wattage and performance per dollar is lower on the newer CPUs. Those god-damn server operators will keen to replace their CPU (and therefore some serious metal pollution issues). Intel has been exploiting this and gained a big hurdle of money and therefore had their ecosystem grown. This is how Intel defends their platform by vendor lock-in, pathetic.

AMD is now being performance and cost competitive to Intel, but it's still dead in the High Performance Computing campaign unless AMD could provide higher frequencies. Well I have to say I know nothing about HPC, but I remembered the Bulldozer architecture of AMD is actually targeted and marketed for HPC! That's why AMD failed in general-purpose computing market and started the downfall of AMD/Domination of Intel 5 years ago. Even though we know the fate of Bulldozer, but hopefully AMD could still scrap some of the HPC goodies of Bulldozer out and benefits the mankind by accelerating researches such as finding the cures for cancer or solving some precise physics and mathematics.

Well, anyway the cloud, the HPC and the server market are the last resort for Intel and they will definitely hold their last ground. Good luck AMD on crushing the mean and obese Intel!

errorr - Sunday, July 23, 2017 - link

For all the talk about speed and efficiency the problem is about $$$. The sad fact is that what matters most isn't even the price of the cpus which is chump change in the grand scheme of things but how the software licensing costs are determined. Per core or per socket software pricing will matter a lot. The software companies will decide how successful EPYC is. I have a feeling they will be biased slightly toward AMD at the beginning as it is in their interest to foster competition for Intel, or if they are not forward looking enough the end customers might argue that the competition will benefit the SW companies in the long run by continuing to push competition.

msroadkill612 - Thursday, July 27, 2017 - link

Whatever, it's all pointless if the competition can read your secrets, which is a matter very close to the hearts of the cheque signers.

AMD seem to have something very superior to offer in that department.

qweqwe - Tuesday, August 8, 2017 - link

we just did some heavy inhouse hpc-tests with epyc against diff. intel servers.

the epyc is the clear winner in terms of performance and power consumption when it comes to hand-tuned parallel-vector-code examples.

not bad amd !

readonly1 - Friday, October 27, 2017 - link

qweqwe, I totally agree with you. Our inhouse HPC tests reach a similar conclusion, after comparing AMD Epyc 7351 (dual socket, 32 cores, 2400 MHz) and Intel Skylake 6154 (dual socket, 36 cores, 3000 MHz). I think AMD clearly wins in the memory bandwidth, which is extremely important for HPC computation.

msroadkill612 - Monday, November 13, 2017 - link

7/11/2017 "Microsoft is already deploying AMD's EPYC in their Azure Cloud Datacenters."

Interesting. As i have been theorising, a possible reason for the absence of retail epyc is not supply, but demand.

A single sale can soak up production runs.

If so tho, not much sign of big revenues from it yet, but there are other explanations for that. Contra processors for development work e.g.

q.epsilon.p - Sunday, June 10, 2018 - link

Power consumption numbers with every benchmark would have been nice; because these are server parts, Perf / Watt is one of the primary concerns. And that's where AMD kinda crush Intel, because Intel isn't exactly being honest with its TDP values nowadays when it comes to Data Centre and HEDT.

TDP was traditionally the absolute maximum the CPU would put out as heat, now with a power consumption of 670W I am assuming that the heat being put out by the CPU is more than 165W.