Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Apache Spark 2.1 Benchmarking

Apache Spark is the poster child of Big Data processing. Speeding up Big Data applications is the top-priority project at the university lab I work for (Sizing Servers Lab of the University College of West-Flanders), so we produced a benchmark that uses many of Spark's features and is based on real-world usage.

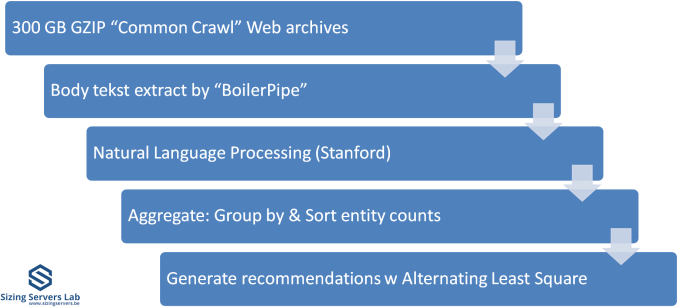

The test is described in the graph above. We start with 300 GB of compressed data gathered from Common Crawl; these compressed files contain a large number of web archives. We decompress the data on the fly to avoid a long wait that is mostly storage-related. We then extract the meaningful text from the archives using the Java library "BoilerPipe". Using the Stanford CoreNLP Natural Language Processing Toolkit, we extract entities ("words that mean something") from the text, and then count which URLs have the highest occurrence of these entities. Finally, the Alternating Least Squares (ALS) algorithm is used to recommend which URLs are the most interesting for a certain subject.
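To make the counting step more concrete, here is a minimal single-process Python sketch of counting which URLs mention an entity most often. It is not the benchmark's code: it assumes the text has already been extracted from the archives, and it replaces CoreNLP's entity recognition with a hypothetical `extract_entities` function that only matches tokens against a small fixed set of names (`KNOWN_ENTITIES`, also just an illustration). In the real benchmark these steps run as distributed Spark jobs.

```python
from collections import Counter
from typing import Iterable

# Hypothetical stand-in for CoreNLP's named-entity recognizer: it only
# matches whitespace-separated tokens against a fixed set of names.
KNOWN_ENTITIES = {"AMD", "EPYC", "Intel", "Xeon"}


def extract_entities(text: str) -> list[str]:
    """Return every token in `text` that is a known entity (punctuation stripped)."""
    tokens = (tok.strip(".,;:!?()\"'") for tok in text.split())
    return [tok for tok in tokens if tok in KNOWN_ENTITIES]


def count_entity_mentions(pages: Iterable[tuple[str, str]]) -> Counter:
    """Count mentions per (url, entity) pair over (url, extracted_text) records."""
    counts: Counter = Counter()
    for url, text in pages:
        for entity in extract_entities(text):
            counts[(url, entity)] += 1
    return counts


def top_urls(counts: Counter, entity: str, k: int = 3) -> list[tuple[str, int]]:
    """Return up to k (url, count) pairs with the most mentions of `entity`."""
    ranked = [(url, n) for (url, ent), n in counts.items() if ent == entity]
    # Highest count first; ties broken alphabetically by URL for a stable ranking.
    ranked.sort(key=lambda pair: (-pair[1], pair[0]))
    return ranked[:k]


pages = [
    ("https://example.com/a", "AMD launches EPYC. EPYC targets Xeon."),
    ("https://example.com/b", "Intel Xeon and AMD EPYC compared."),
    ("https://example.com/c", "Intel ships Xeon; Intel responds."),
]
print(top_urls(count_entity_mentions(pages), "EPYC"))
# [('https://example.com/a', 2), ('https://example.com/b', 1)]
```

Keying the counter on (URL, entity) pairs mirrors what a distributed version would do as a map step followed by a reduce-by-key step; the ranking per entity is then a cheap final pass.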

In previous articles, we tested with Spark 1.5 in standalone mode (non-clustered). That worked out well enough, but we saw diminishing returns as core counts went up. In hindsight, just dumping 300 GB of compressed data into one JVM was not optimal for 30+ core systems. The high core counts of the Xeon 8176 and EPYC 7601 caused serious performance issues when we first continued to test this way: the 64-core EPYC 7601 performed like a 16-core Xeon, and the Skylake-SP system with 56 cores was hardly better than a 24-core Xeon E5 v4.

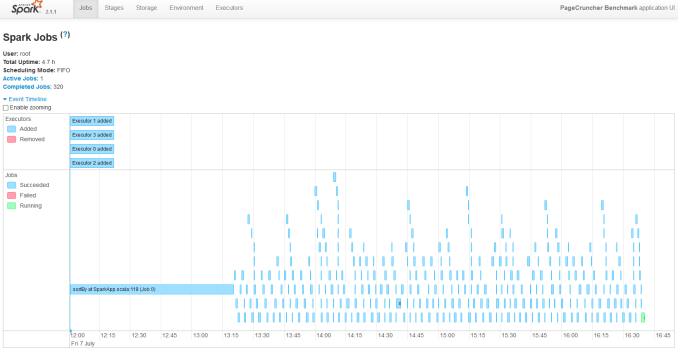

So we decided to turn our newest servers into virtual clusters. Our first attempt was to run with 4 executors. Researcher Esli Heyvaert also upgraded our Spark benchmark so it could run on the latest and greatest version: Apache Spark 2.1.1.

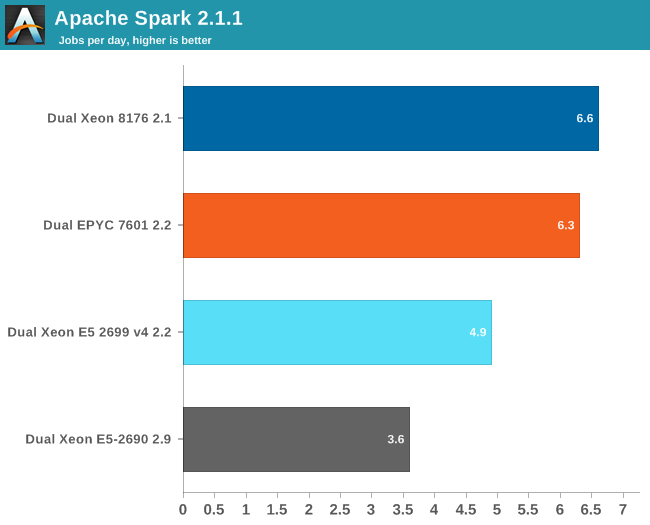
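The article does not say how cores and memory were divided over the four executors, so the following is only a hypothetical sketch of one even split, expressed as Spark configuration properties. The property names (`spark.executor.cores`, `spark.executor.memory`, `spark.cores.max`) are standard Spark settings; the `plan_executors` helper, the even-split policy, and the idea of handing the roughly 120 GB working set entirely to executor heaps are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExecutorPlan:
    executors: int
    cores_per_executor: int
    memory_gb_per_executor: int

    def spark_conf(self) -> dict[str, str]:
        # Standard Spark configuration keys; the values come from this hypothetical plan.
        return {
            "spark.executor.cores": str(self.cores_per_executor),
            "spark.executor.memory": f"{self.memory_gb_per_executor}g",
            # In standalone mode, capping the application's total cores at
            # executors * cores_per_executor limits how many executors are launched.
            "spark.cores.max": str(self.executors * self.cores_per_executor),
        }


def plan_executors(total_cores: int, total_memory_gb: int, executors: int = 4) -> ExecutorPlan:
    """Hypothetical policy: split cores and executor memory evenly across executors."""
    if executors < 1:
        raise ValueError("need at least one executor")
    if total_cores < executors or total_memory_gb < executors:
        raise ValueError("not enough cores or memory for that many executors")
    return ExecutorPlan(
        executors=executors,
        cores_per_executor=total_cores // executors,
        memory_gb_per_executor=total_memory_gb // executors,
    )


# Example: a 64-core EPYC 7601 system and the ~120 GB the benchmark needs.
plan = plan_executors(total_cores=64, total_memory_gb=120)
print(plan.spark_conf())
# {'spark.executor.cores': '16', 'spark.executor.memory': '30g', 'spark.cores.max': '64'}
```

Each executor is a separate JVM with its own heap, which is one plausible reason several smaller executors behave better than one huge JVM. A real deployment would also leave headroom for the OS, the driver and off-heap overhead, which this sketch ignores.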

Here are the results:

If you're wondering who needs such server behemoths besides people who run a few dozen virtual machines, the answer is Big Data. Big Data crunching has an insatiable hunger for (mostly integer) processing power. Even on our fastest machine, this test needs about 4 hours to finish. It is nothing less than a killer app.

Our Spark benchmark needs about 120 GB of RAM to run. The time spent on storage I/O is negligible. Data processing is highly parallel, but the shuffle phases require a lot of memory interaction. The ALS phase does not scale well over many threads, but it takes less than 4% of the total testing time.
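Why the ALS phase's poor scaling barely matters can be checked with Amdahl's law applied to the measured run: if a phase takes a fraction f of the current wall-clock time and is made s times faster, the whole job speeds up by 1 / ((1 - f) + f / s). The Python sketch below (`overall_speedup` is a hypothetical helper name) plugs in the figure of under 4% for ALS, treating 0.04 as an upper bound.

```python
import math


def overall_speedup(phase_fraction: float, phase_speedup: float) -> float:
    """Amdahl's law applied to a measured run.

    phase_fraction: share of the current wall-clock time spent in one phase.
    phase_speedup:  factor by which that phase alone is made faster.
    Returns the speedup of the whole job.
    """
    if not 0.0 <= phase_fraction <= 1.0:
        raise ValueError("phase_fraction must be between 0 and 1")
    if phase_speedup <= 0:
        raise ValueError("phase_speedup must be positive")
    return 1.0 / ((1.0 - phase_fraction) + phase_fraction / phase_speedup)


print(f"{overall_speedup(0.04, 2.0):.3f}")       # ALS twice as fast -> 1.020
print(f"{overall_speedup(0.04, math.inf):.3f}")  # ALS takes no time at all -> 1.042
```

Even an infinitely fast ALS phase would make the run only about 4% faster; at roughly 4 hours per run, a 4% share is under 10 minutes, so the parallel and shuffle phases dominate the result.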

Given the higher clock speed in the lightly threaded and single-threaded parts, the faster shuffle phase probably gives the Intel chip an edge of only about 5%.

219 Comments

twtech - Thursday, July 20, 2017

I'd really like to see some compile-time benchmarks for these CPUs. For my own particular interests, the time taken to do a full recompile of the Unreal 4 engine from source would be very useful. But even something more generic, like a Linux kernel compiles-per-hour benchmark, could serve as a useful point of reference.

szupek - Friday, July 21, 2017 - link

Meanwhile, the entire world still runs on IBM's DB2 for databases and IBM's Z/AS400 mainframes. The fastest database in the world, by far... oh, and the most secure (it's only hackable by standing in front of the console, seriously). Every single credit card transaction. Every single plane ticket. Most medical records and all of Wall Street. Yup. IBM still owns. So much so that most commenters probably have no idea just how big IBM truly is. If you care about database speed & security, these processors shouldn't appeal to you.

stevefan1999 - Saturday, July 22, 2017

It's impossible for AMD to win completely.

Remember kids, public cloud service providers such as Amazon (AWS), Google (GCP) and Joyent would still stick with Intel, due not only to compatibility issues like ecosystem and vendor inconsistency, but also to VM migration, security and module issues, all mentioned in the presentation slides presented by Intel. These are a very serious matter: the public cloud services power the Internet we use every day, so being stable, consistent and able to meet their SLAs is vital to them. We wouldn't expect them to play with the new lad in the hood, EPYC.

IIRC only Microsoft (Azure) is using AMD server CPUs, partially, in some of their datacenters, running various Linux and Windows VMs using Hyper-V, and they have been performing quite well.

Cloud services are exploding every year, but given what I've said, I doubt AMD can even get a foot in the door for at least 3 to 4 years. This is still a big win for Intel, and I don't know what manipulations Intel will try.

On the other hand, Intel has failed to serve the desktop market, and they're figuring out how to save their asses in Internet infrastructure; they never knew the EPYC crusade would come this fast.

The server market is quite a big piece of meat: it's a 21 bil market, cool right? But the fact that you will have a guaranteed 'server upgrade' every year is a bigger matter, as those server CPUs are destined to be disposed of once newer CPUs offer lower wattage and better performance per dollar. Those god-damn server operators are keen to replace their CPUs (hence some serious metal pollution issues). Intel has been exploiting this, made a big pile of money and therefore grew their ecosystem. This is how Intel defends their platform by vendor lock-in, pathetic.

AMD is now performance- and cost-competitive with Intel, but it's still dead in the High Performance Computing campaign unless AMD can provide higher frequencies. Well, I have to say I know nothing about HPC, but I remember AMD's Bulldozer architecture was actually targeted at and marketed for HPC! That's why AMD failed in the general-purpose computing market, which started AMD's downfall and Intel's domination 5 years ago. Even though we know the fate of Bulldozer, hopefully AMD can still salvage some of Bulldozer's HPC goodies and benefit mankind by accelerating research such as finding cures for cancer or solving precise physics and mathematics problems.

Well, anyway, the cloud, HPC and server markets are the last resort for Intel, and they will definitely hold this last ground. Good luck, AMD, on crushing the mean and obese Intel!

errorr - Sunday, July 23, 2017

For all the talk about speed and efficiency, the problem is about $$$. The sad fact is that what matters most isn't even the price of the CPUs, which is chump change in the grand scheme of things, but how the software licensing costs are determined. Per-core or per-socket software pricing will matter a lot. The software companies will decide how successful EPYC is. I have a feeling they will be biased slightly toward AMD at the beginning, as it is in their interest to foster competition for Intel; or, if they are not forward-looking enough, the end customers might argue that the competition will benefit the SW companies in the long run by continuing to push competition.

msroadkill612 - Thursday, July 27, 2017

Whatever, it's all pointless if the competition can read your secrets, which is a matter very close to the hearts of the cheque signers. AMD seems to have something far superior to offer in that department.

qweqwe - Tuesday, August 8, 2017

We just did some heavy in-house HPC tests with EPYC against different Intel servers. EPYC is the clear winner in terms of performance and power consumption when it comes to hand-tuned parallel vector code examples.

Not bad, AMD!

readonly1 - Friday, October 27, 2017

qweqwe, I totally agree with you. Our in-house HPC tests reached a similar conclusion after comparing the AMD EPYC 7351 (dual socket, 32 cores, 2400 MHz) and the Intel Skylake 6154 (dual socket, 36 cores, 3000 MHz). I think AMD clearly wins on memory bandwidth, which is extremely important for HPC computation.

msroadkill612 - Monday, November 13, 2017

7/11/2017 "Microsoft is already deploying AMD's EPYC in their Azure Cloud Datacenters." Interesting. As I have been theorising, a possible reason for the absence of retail EPYC is not supply, but demand.

A single sale can soak up production runs.

If so, though, there's not much sign of big revenues from it yet, but there are other explanations for that, e.g. contra processors for development work.

q.epsilon.p - Sunday, June 10, 2018

Power consumption numbers with every benchmark would have been nice: because these are server parts, perf/watt is one of the primary concerns. And that's where AMD kinda crushes Intel, because Intel isn't exactly being honest with its TDP values nowadays when it comes to Data Centre and HEDT.

TDP was traditionally the absolute maximum the CPU would put out as heat; now, with a power consumption of 670W, I am assuming that the heat being put out by the CPU is more than 165W.