Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

This morning kicks off a very interesting time in the world of server-grade CPUs. Officially launching today is Intel's latest generation of Xeon processors, based on the "Skylake-SP" architecture. The heart of Intel's new Xeon Scalable Processor family, the "Purley" 100-series processors incorporate all of Intel's latest CPU and network fabric technology, not to mention a very large number of cores.

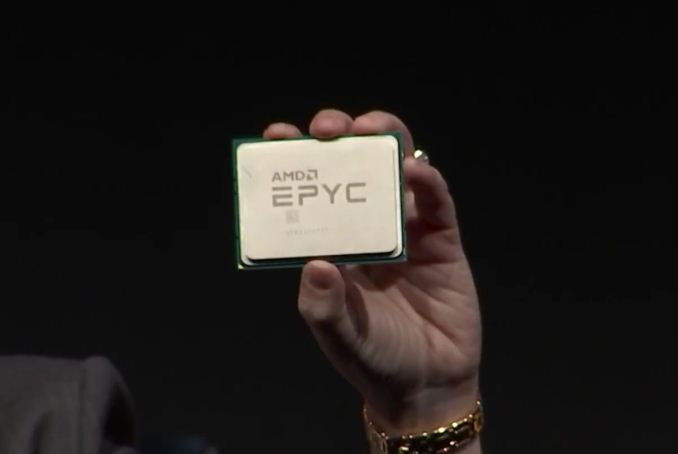

Meanwhile, a couple of weeks back AMD soft-launched their new EPYC 7000 series processors. Based on the company's Zen architecture and scaled up to server-grade I/O and core counts, EPYC represents an epic achievement for AMD, once again putting them into the running for competitive, high performance server CPUs after nearly half a decade gone. EPYC processors have begun shipping, and just in time for today's Xeon launch, we also have EPYC hardware in the lab to test.

Today's launch is a situation that neither company has been in for quite a while. Intel hasn't had serious competition in years, and AMD hasn't been able to compete. As a result, both companies are taking the other's actions very seriously.

In fact we could go on for much longer than our quip above in describing the rising tension at the headquarters of AMD and Intel. For the first time in 6 years (!), a credible alternative is available for the newly launched Xeon. Indeed, the new Xeon "Skylake-SP" is launching today, and the yardstick for it is not the previous Xeon (E5 version 4), but rather AMD's spanking new EPYC server CPU. The two CPUs are without a doubt very different: microarchitecture, ISA extensions, memory subsystem, node topology... you name it. The end result is that once again we have the thrilling task of finding out how the processors compare and for which applications their various trade-offs make sense.

The only similarity is that both server packages are huge. Above you see the two new Xeon packages (with and without an Omni-Path connector), both of which are as big as a keycard. And below you can see how one EPYC CPU fills the hand of AMD's CEO Dr. Lisa Su.

Both are 64-bit x86 CPUs, but that is where the similarities end. For those of you who have been reading Ian's articles closely, this is no surprise. The consumer-focused Skylake-X is the little brother of the newly launched Xeon "Purley", both of which are cut from the same cloth that is the Skylake-SP family. In a nutshell, the Skylake-SP family introduces the following new features:

- AVX-512 (Many different variants of the ISA extension are available)

- A 1 MB (instead of a 256 KB) L2-cache with a non-inclusive L3

- A mesh topology to connect the cores and L3-cache chunks together

Meanwhile AMD's latest EPYC Server CPU was launched a few weeks ago:

On the package are four silicon dies, each one containing the same 8-core silicon we saw in the AMD Ryzen processors. Each silicon die has two core complexes, each of four cores, and supports two memory channels, giving a total maximum of 32 cores and 8 memory channels on an EPYC processor. The dies are connected by AMD’s newest interconnect, the Infinity Fabric...
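The arithmetic behind those totals can be sketched in a few lines of Python. This is only an illustration of the counts quoted above. The constant and function names are hypothetical, and the figures describe a fully enabled package as described in the quote, not any specific SKU.

```python
# Counts taken from the description above (fully enabled EPYC 7000 package).
# All names here are illustrative, not an AMD or vendor API.
DIES_PER_PACKAGE = 4      # four silicon dies on the package
CCX_PER_DIE = 2           # two core complexes per die
CORES_PER_CCX = 4         # four cores per core complex
MEM_CHANNELS_PER_DIE = 2  # two memory channels per die


def package_totals(dies=DIES_PER_PACKAGE,
                   ccx_per_die=CCX_PER_DIE,
                   cores_per_ccx=CORES_PER_CCX,
                   channels_per_die=MEM_CHANNELS_PER_DIE):
    """Return per-die and per-package core and memory channel counts."""
    cores_per_die = ccx_per_die * cores_per_ccx
    return {
        "cores_per_die": cores_per_die,
        "cores": dies * cores_per_die,
        "memory_channels": dies * channels_per_die,
    }


totals = package_totals()
print(totals)
```

Because both cores and memory channels scale with the number of dies, each die brings its own share of memory bandwidth along with its cores.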

In the next pages, we will be discussing the impact of these architectural choices on server software.

219 Comments


oldlaptop - Thursday, July 13, 2017 - link

Why on earth is gcc -Ofast being used to mimic "real-world", non-"aggressively optimized"(!) conditions? This is in fact the *most* aggressive optimization setting available; it is very sensitive to the exact program being compiled at best, and generates bloated (low priority on code size) and/or buggy code at worst (possibly even harming performance if the generated code is so big as to harm cache coherency). Most real-world software will be built with -O2 or possibly -Os. I can't help but wonder why questions weren't asked when SPEC complained about this unwisely aggressive optimization setting...

peevee - Thursday, July 13, 2017 - link

"added a second full-blown 512 bit AVX-512 unit."

Do you mean "added second 256 ALU, which in combination with the first one implements full 512-bit AVX-512 unit"?

peevee - Thursday, July 13, 2017 - link

"getting data from the right top node to the bottom left node – should demand around 13 cycles. And before you get too concerned with that number, keep in mind that it compares very favorably with any off die communication that has to happen between different dies in (AMD's) Multi Chip Module (MCM), with the Skylake-SP's latency being around one-tenth of EPYC's."

1/10th? Asking data from L3 on the chip next to it will take 130 (or even 65 if they are talking about averages) cycles? Does not sound realistic, you can request data from RAM at similar latencies already.

AmericasCup - Friday, July 14, 2017 - link

'For enterprises with a small infrastructure crew and server hardware on premise, spending time on hardware tuning is not an option most of the time.'

Conversely, our small crew shop has been tuning AMD (selected for scalar floating point operations performance) for years. The experience and familiarity makes switching less attractive.

Also, you did all this in one week for AMD and two weeks for Intel? Did you ever sleep? KUDOS!

JohanAnandtech - Friday, July 21, 2017 - link

Thanks for appreciating the effort. Luckily, I got some help from Ian on Tuesday. :-)

AntonErtl - Friday, July 14, 2017 - link

According to http://www.anandtech.com/show/10158/the-intel-xeon... if you execute just one AVX256 instruction on one core, this slows down the clocks of all E5v4 cores on the same socket for at least 1ms. Somewhere I read that newer Xeons only slow down the core that executes the AVX256 instruction. I expect that it works the same way for AVX512, and yes, this means that if you don't have a load with a heavy proportion of SIMD instructions, you are better off with AVX128 or SSE. The AMD variant of having only 128-bit FPUs and no clock slowdown looks better balanced to me. It might not win Linpack benchmark competitions, but for that one uses GPUs anyway these days.

wagoo - Sunday, July 16, 2017 - link

Typo on the CLOSING THOUGHTS page: "dual Silver Xeon solutions" (dual socket)

Great read though, thanks! Can finally replace my dual socket shanghai opteron home server soon :)

Chaser - Sunday, July 16, 2017 - link

AMD's CPU future is looking very promising!

bongey - Tuesday, July 18, 2017 - link

EPYC power consumption is just wrong. Somehow you are 50W over what everyone else is getting at idle. https://www.servethehome.com/amd-epyc-7601-dual-so...

Nenad - Thursday, July 20, 2017 - link

Interesting SPECint2006 results:

- Intel in their slide #9 claims that Intel 8160 is 2% faster than EPYC 7601

- Anandtech in article tests that EPYC 7601 is 42% faster than Intel 8176

Those two are quite different, even if we ignore that 8176 should be faster than 8160. In other words, those Intel test results look very suspicious.