Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

AMD's EPYC Server CPU

If you have read Ian's articles about Zen and EPYC in detail, you can skip this page. For those of you who need a refresher, let us quickly review what AMD is offering.

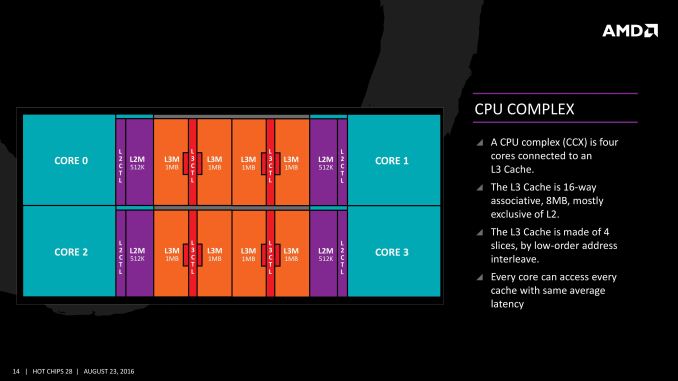

The basic building block of EPYC and Ryzen is the CPU Complex (CCX), which consists of 4 vastly improved "Zen" cores, connected to an L3-cache. In a full configuration each core technically has its own 2 MB of L3, but access to the other 6 MB is rather speedy. Within a CCX we measured 13 ns to access the first 2 MB, and 15 to 19 ns for the rest of the 8 MB L3-cache, a difference that's hardly noticeable in the grand scheme of things. The L3-cache acts as a mostly exclusive victim cache.
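To put those latency figures in perspective, here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, that a core's L3 hits are spread uniformly over the full 8 MB, so 2 MB out of 8 MB land in the local slice. That uniform-access assumption is ours, not a measurement.

```python
# Latencies quoted in the text for one CCX's 8 MB L3.
LOCAL_SLICE_NS = 13.0            # first 2 MB (the core's own slice)
REMOTE_SLICE_NS = (15.0, 19.0)   # remaining 6 MB, measured range

L3_TOTAL_MB = 8
L3_LOCAL_MB = 2


def average_l3_latency_ns(remote_ns: float) -> float:
    """Capacity-weighted L3 latency.

    Assumption (ours): accesses are spread uniformly over the 8 MB,
    so the local slice serves 2/8 of all L3 hits.
    """
    local_share = L3_LOCAL_MB / L3_TOTAL_MB
    return local_share * LOCAL_SLICE_NS + (1 - local_share) * remote_ns


best = average_l3_latency_ns(REMOTE_SLICE_NS[0])
worst = average_l3_latency_ns(REMOTE_SLICE_NS[1])
print(f"average L3 latency: {best:.1f} to {worst:.1f} ns")
```

Under that assumption the blended L3 latency sits only a few nanoseconds above the local-slice figure, which is consistent with the point that the difference matters little in practice.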

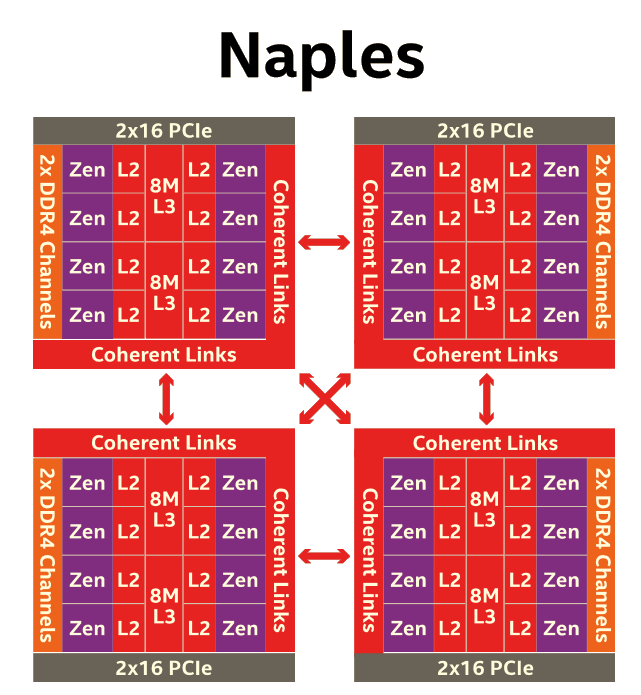

Two CCXes make up one Zeppelin die. A custom fabric, AMD's Infinity Fabric, ties together the two CCXes, their two 8 MB L3-caches, two DDR4 channels, and the integrated PCIe lanes. That topology is not without drawbacks though: it means that there are two separate 8 MB L3 caches instead of one single 16 MB LLC. This has all kinds of consequences. For example, the prefetchers of each core make sure that data in the L3 is brought into the L1 when it is needed. Meanwhile, each CCX has its own separate and dedicated SRAM snoop directory (not inside the L3, so no capacity hit), which keeps track of 7 possible states. In other words, the local L3-cache communicates very quickly with everything inside the same CCX, but every data exchange between two CCXes comes with a tangible latency penalty.

Moving further up the chain, the complete EPYC chip is a Multi Chip Module (MCM) containing 4 Zeppelin dies.

AMD made sure that each die is only one hop away from every other die in the package, ensuring that the off-die latency is as low as reasonably possible.

Meanwhile, scaling things up to their logical conclusion, we have 2P configurations. Because each of the four dies in a socket has its own memory channels, a dual socket EPYC setup is in fact a "virtual octal socket" NUMA system.
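The hierarchy described above (4 cores per CCX, 2 CCXes per die, 4 dies per socket, 2 sockets) can be sketched as a small model that tells you which boundary two cores have to cross to exchange data. The linear core numbering and the names `locate` and `sharing_domain` are hypothetical, chosen only for this illustration. Real operating systems may enumerate cores differently.

```python
from dataclasses import dataclass

# Counts taken from the text.
CORES_PER_CCX = 4
CCX_PER_DIE = 2
DIES_PER_SOCKET = 4
SOCKETS = 2

CORES_PER_DIE = CORES_PER_CCX * CCX_PER_DIE
CORES_PER_SOCKET = CORES_PER_DIE * DIES_PER_SOCKET
TOTAL_CORES = CORES_PER_SOCKET * SOCKETS
NUMA_NODES = DIES_PER_SOCKET * SOCKETS  # one node per die


@dataclass(frozen=True)
class CoreLocation:
    socket: int
    die: int   # die index within the socket
    ccx: int   # CCX index within the die
    core: int  # core index within the CCX


def locate(core_id: int) -> CoreLocation:
    """Map a core id to its place in the hierarchy.

    Assumption (hypothetical): cores are numbered linearly, CCX by CCX,
    die by die, socket by socket.
    """
    if not 0 <= core_id < TOTAL_CORES:
        raise ValueError(f"core_id must be in [0, {TOTAL_CORES})")
    socket, rest = divmod(core_id, CORES_PER_SOCKET)
    die, rest = divmod(rest, CORES_PER_DIE)
    ccx, core = divmod(rest, CORES_PER_CCX)
    return CoreLocation(socket, die, ccx, core)


def sharing_domain(a: int, b: int) -> str:
    """Name the outermost boundary that separates two cores."""
    la, lb = locate(a), locate(b)
    if la.socket != lb.socket:
        return "other socket"
    if la.die != lb.die:
        return "other die, same socket"
    if la.ccx != lb.ccx:
        return "other CCX, same die"
    return "same CCX"


print(TOTAL_CORES, "cores in", NUMA_NODES, "NUMA nodes")
for pair in [(0, 3), (0, 4), (0, 8), (0, 32)]:
    print(pair, "->", sharing_domain(*pair))
```

Each step outward in that list corresponds to a higher data exchange latency, which is why the "virtual octal socket" description fits a 2P system.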

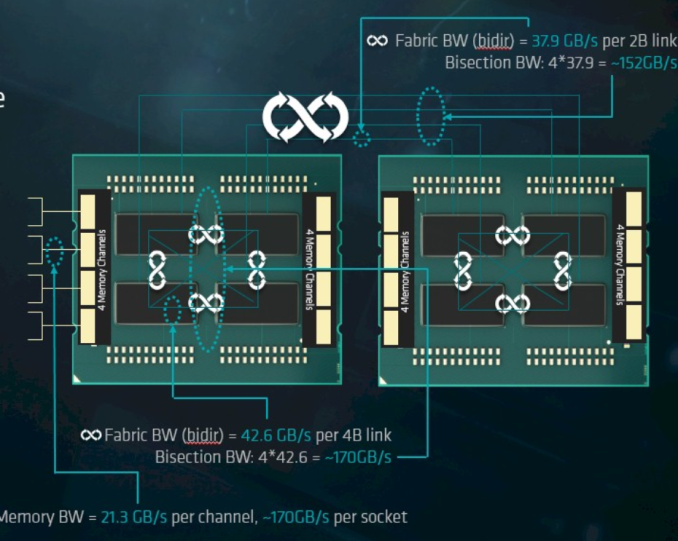

AMD gave this "virtual octal socket" topology ample bandwidth to communicate. The two physical sockets are connected by four bidirectional interconnects, each consisting of 16 PCIe lanes. Each of these interconnect links operates at roughly 38 GB/s (or 19 GB/s in each direction).
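The socket-to-socket numbers above lend themselves to a quick calculation. The sketch below only multiplies out the figures quoted in the text. The aggregate and per-lane values are derived, not measured, and they ignore any protocol overhead.

```python
# Figures quoted in the text.
LINKS_BETWEEN_SOCKETS = 4
LANES_PER_LINK = 16
LINK_GB_PER_S_PER_DIRECTION = 19.0

# Derived values (simple arithmetic, no protocol overhead considered).
link_bidirectional = 2 * LINK_GB_PER_S_PER_DIRECTION
aggregate_per_direction = LINKS_BETWEEN_SOCKETS * LINK_GB_PER_S_PER_DIRECTION
aggregate_bidirectional = LINKS_BETWEEN_SOCKETS * link_bidirectional
per_lane_per_direction = LINK_GB_PER_S_PER_DIRECTION / LANES_PER_LINK

print(f"per link, both directions:     {link_bidirectional:.0f} GB/s")
print(f"all links, one direction:      {aggregate_per_direction:.0f} GB/s")
print(f"all links, both directions:    {aggregate_bidirectional:.0f} GB/s")
print(f"per lane, one direction:       {per_lane_per_direction:.2f} GB/s")
```

Keep in mind that traffic between two specific dies in different sockets uses one of these links rather than the aggregate, so the per-link figure is the more relevant one for a single pair of NUMA nodes.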

So basically, AMD's topology is ideal for applications with many independently working threads such as small VMs, HPC applications, and so on. It is less suited for applications that require a lot of data synchronization, such as transactional databases. In the latter case, the extra latency of exchanging data between dies, and even between CCXes, is going to have an impact relative to a traditional monolithic design.

219 Comments


JKflipflop98 - Wednesday, July 12, 2017

For years I thought you were just really committed to playing the "dumb AMD fanbot" schtick for laughs. It's infinitely more funny now that I know you've actually been *serious* this entire time.

ddriver - Wednesday, July 12, 2017

Whatever helps you feel better about yourself ;) I bet it is funny now, that AT have to carefully devise intel biased benches and lie in its reviews in hopes intel at least saves face. BTW I don't have a single amd CPU running ATM.

WinterCharm - Thursday, July 13, 2017

Uh, what are you smoking? this is a pretty even piece.

boozed - Tuesday, July 11, 2017

You haven't done your job properly unless you've annoyed the fanboys (and perhaps even fangirls) for both sides!

JohanAnandtech - Wednesday, July 12, 2017

Wise words. Indeed :-)

Ranger1065 - Wednesday, July 12, 2017

If you are referring to ddriver, I agree, wise words indeed.

ddriver - Wednesday, July 12, 2017

Well, that assumption rests on the presumption that the point of reviews is to upset fanboys.

I'd say that a "review done right" would include different workload scenarios, there is nothing wrong with having one that will show the benefits of intel's approach to doing server chips, but that should be properly denoted, and should be just one of several database tests and should be accompanied by gigabytes of databases which is what we use in real world scenarios.

CoachAub - Wednesday, July 12, 2017

It was mentioned more than once that this review was rushed to make a deadline and that the suite of benchmarks were not everything they wanted to run and without optimizations or even the usual tweaks an end-user would make to their system. So, keep that in mind as you argue over the tests and different scenarios, etc.

ddriver - Thursday, July 13, 2017

It doesn't take a lot of time to populate a larger database so that you can make a benchmark that involves an actual real world usage scenario. It wasn't the "rushing" that prompted the choice of database size...

mpbello - Friday, July 14, 2017

If you are rushing, you reduce scope and deliver fewer pieces with high quality instead of insisting on delivering a full set of benchmarks that you are not sure about its quality.

The article came to a very strong conclusion: Intel is better for database scenarios. Whatever you do, whether you are rushing or not, you cannot state something like that if the benchmarks supporting your conclusion are not well designed.

So I agree that the design of the DB benchmark was incredibly weak to sustain such an important conclusion that Intel is the best choice for DB applications.