ATI Mobility Radeon 9600 and NVIDIA GeForce FX Go5650: Taking on DX9

by Andrew Ku on September 14, 2003 11:04 PM EST - Posted in Laptops

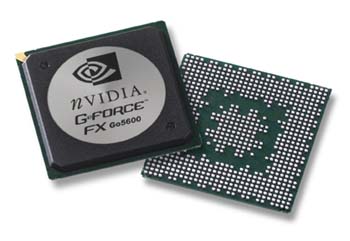

NVIDIA – GeForce FX Go5600 and GeForce FX Go5650

For high-end mobile systems, NVIDIA offers the GeForce FX Go5600 (NV31M), which is based on the desktop GeForce FX 5600 (NV31), the specs of which can be found here. The Go version's specifications are virtually the same as those of its desktop big brother. As we noted in our preview, it is produced on a 0.13 micron process, is a full DX9 part, shares a number of similarities with the NV30, and operates at 1.0V.

The original GeForce FX 5600 Ultra graphics processor, and with it the Go part, was supposed to be clocked at 350MHz core and 350MHz memory (700MHz effective). However, the fastest original GeForce FX Go5600 that we are aware of shipped at 270MHz core and 300MHz memory. We use the term “original” because the GeForce FX Go5600 has undergone a refresh. Like the GeForce FX 5600 Ultra, the Go part now comes in two versions: the GeForce FX Go5600 and the GeForce FX Go5650. The first is based on the wire bond design, and the second is a flip-chip design, which allows the GeForce FX Go5650 to hit higher frequencies than its predecessor. The flip-chip version of the desktop GeForce FX 5600 Ultra is clocked at 400MHz core and 400MHz memory (800MHz effective). So far, the only shipping version of the GeForce FX Go5650 that we have seen is clocked at 325MHz core and 295MHz memory. In short, there are two versions of both the desktop NV31 and the NV31M, though the desktop version did not get a new name the way the mobile part did. NVIDIA has informed us that the transition has already started, and we expect all future mobile systems (and those announced within a few weeks) to hit the shelves with the new flip-chip version of NV31M.
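To keep the clock figures above straight, here is a minimal Python sketch that tabulates them and derives the "effective" memory rate. It assumes DDR memory throughout (two transfers per clock), which matches the 350MHz/700MHz and 400MHz/800MHz desktop figures quoted above; applying the same doubling to the Go parts is our assumption, and the dictionary layout and function names are purely illustrative.

```python
# Clock figures in MHz as quoted in the text: (core clock, memory clock).
# The labels and this data layout are illustrative, not NVIDIA's naming.
CLOCKS_MHZ = {
    "GeForce FX 5600 Ultra (original spec)": (350, 350),
    "GeForce FX 5600 Ultra (flip-chip)": (400, 400),
    "GeForce FX Go5600 (fastest seen shipping)": (270, 300),
    "GeForce FX Go5650 (shipping version seen)": (325, 295),
}


def effective_memory_clock(memory_mhz: int) -> int:
    """Return the effective rate, assuming DDR (two transfers per clock)."""
    return memory_mhz * 2


def summarize(clocks: dict) -> list:
    """Build (name, core, memory, effective memory) rows for each part."""
    return [
        (name, core, mem, effective_memory_clock(mem))
        for name, (core, mem) in clocks.items()
    ]


if __name__ == "__main__":
    for name, core, mem, eff in summarize(CLOCKS_MHZ):
        print(f"{name}: core {core}MHz, memory {mem}MHz ({eff}MHz effective)")
```

The doubling is only a naming convention for data rate; the memory's actual clock is the lower figure in each pair.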

In the GeForce FX Go5200 and GeForce FX Go5600 preview, we mentioned support for component output and an MPEG2 decode assist engine. However, we have yet to test these two features and will report back once we do. For a more detailed look into GeForce FX Go5200 and GeForce FX Go5650, read our original preview.

47 Comments

Anonymous User - Monday, September 15, 2003

That 30 FPS-eye-limit rubbish always comes up in these sort of threads - I can't believe there are people who think they can't tell the difference between a game running at 30 FPS and 60 FPS. Anyway, I'd like to ask about the HL2 benches - you mention the 5600 is supposed to drop down a code path, but don't specifically say which one was used in the tests. DX8? Mixed? The charts say "DX 9.0", so if that was indeed used then it's interesting from a theoretical point of view but doesn't actually tell us how the game will run on such a system, since the DX8 code path is recommended by Valve for the 5200/5600.

Anonymous User - Monday, September 15, 2003

The "car wheels not rotating right" effect is caused by aliasing, and you'll still get that effect even if your video card is running at 2000fps. Besides, you're limited by your monitor's refresh rate anyhow.

Anonymous User - Monday, September 15, 2003

#14 that is incorrect and totally misleading. Humans can tell the difference up to about 60fps (sometimes a little more). Have you ever seen a movie where the car's tires don't seem to rotate right? That's because at 29.97fps you notice things like that.

Anonymous User - Monday, September 15, 2003

#13, unless you're not human, the human eye can't see a difference at 30fps and up. 60fps is a goal for users cause at that point, even if there is a slow down to 30fps you can't see the difference.

Anonymous User - Monday, September 15, 2003

Overall, I liked the article... However, whilst I understand that you wanted to run everything at maximum detail to show how much faster one chipset may be than another, it would have been helpful if some lower resolution benchmarks could have been thrown in.

After all, what good does it do you to know that chip B may perform at 30fps whilst chip A performs at 10fps if both are unplayable?

I don't mind whether I can play a game at an astoundingly good detail level or not - I care more about whether I can play the game at all! :)

In the end, we'd all love to be able to play all our games in glorious mega-detail looks-better-than-real-life mode at 2000fps, but it's not always possible.

A big question should be: can I play the game at a reasonable speed with merely acceptable quality? And that's the sort of information that helps us poor consumers! :)

Thanks for your time and a great article.

Sxotty - Monday, September 15, 2003

Um do you mean floating point (FP16) or 16bit color? As opposed to FP32 on the NV hardware, as ATI's doesn't even support FP32, which is not 32bit color. ATI supports FP24. LOL and the no fog thing was just funny, that is NV's fault; it is not like it has to be dropped, they did it to gain a tiny fraction of performance.

rqle - Monday, September 15, 2003

I really like this comment: "Don’t forget that programmers are also artists, and on a separate level, it is frustrating for them to see their hard work go to waste, as those high level settings get turned off."

Hope future articles on graphics cards/chipsets will offer more insight on how the developers may feel.

Anonymous User - Monday, September 15, 2003

please note: the warcraft benchmark was done under direct3d. now nvidia cards perform badly under direct3d with warcraft whereas ati does a very fine job. it's a completely different story, however, if u start warcraft 3 with the warcraft.exe -opengl command. so please take note of that, only very few people know about this anyway. my quadro 4 700 go gl gets about +10fps more under opengl compared to d3d!

Pete - Monday, September 15, 2003

Nice read. Actually, IIRC, UT2003 is DX7, with some DX7 shaders rewritten in DX8 for minimal performance gains. Thus, HL2 should be not only the first great DX9 benchmark, but also a nice DX8(.1) benchmark as well.

Anonymous User - Monday, September 15, 2003

so valve let you guys test out half life 2 on some laptops eh? very nice. (great review too, well written)