Exploring DynamIQ and ARM’s New CPUs: Cortex-A75, Cortex-A55

by Matt Humrick on May 29, 2017 12:00 AM EST

Posted in: Smartphones, CPUs, Arm, Mobile, Cortex, DynamIQ, Cortex A75, Cortex A55

DynamIQ

Before we discuss the new CPUs, we need to discuss DynamIQ. Introduced 5 years ago, ARM’s original big.LITTLE (bL) technology, which allows multiple clusters of up to 4 CPUs to be chained together, has been massively successful in the marketplace. Various combinations of its Cortex-A family of CPUs power mobile devices ranging from no-frills budget phones to budget-busting flagships stuffed with technology. The combination of Cortex-A and bL extends beyond smartphones and tablets too, with applications ranging from servers to automotive.

Over the years, ARM’s IP and the needs of its customers have evolved, necessitating a new version of bL: DynamIQ. ARM started working on DynamIQ in 2013 by asking a single question: “How do you make big.LITTLE better?” Looking forward, ARM could see DynamIQ needed to be more flexible, more scalable, and offer better performance. Considering how much work went into this project, DynamIQ will be around for at least the next few years, so hopefully it delivers on those goals.

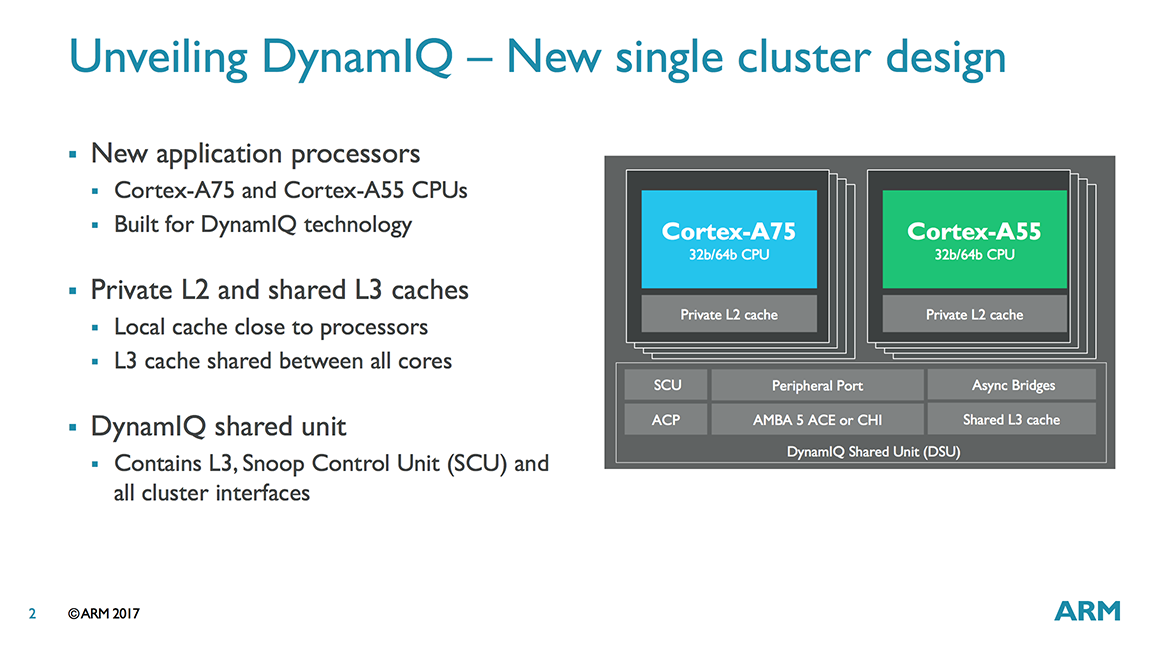

Like bL, DynamIQ provides a way to group CPUs into clusters and connect them to other processors and hardware within the system; however, there are several significant changes, starting with the ability to place big and little Cortex-A CPUs in the same cluster. With bL, different CPUs had to reside within separate clusters. What appears to be a simple reshuffling of cores actually impacts CPU performance and configuration flexibility.

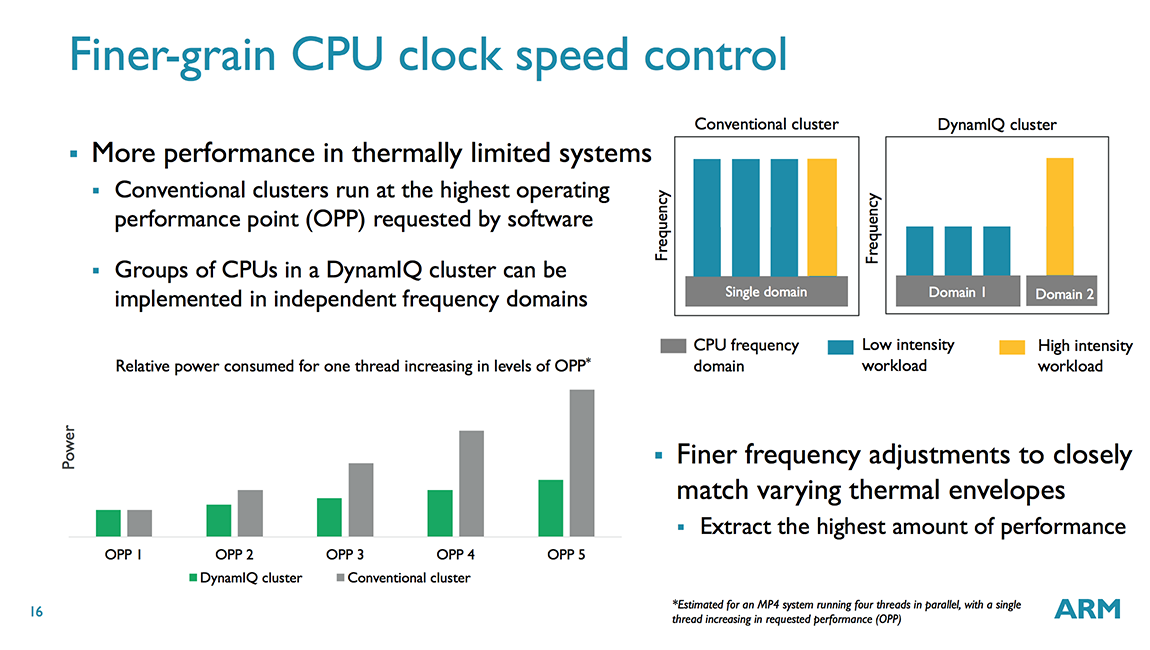

Another big change is the ability to place up to 8 CPUs inside a single cluster (up from 4 for bL). The total number of CPUs scales up to 256 with 32 clusters, and can scale even further to thousands of CPUs with multi-chip support provided via a CCIX interface. Within a cluster, CPUs are divided into voltage/frequency domains, and within a domain each core sits inside its own power domain. This allows each CPU to be individually powered down, although all CPUs in the same domain must operate at the same frequency, which is no different from bL. With DynamIQ, however, each cluster can support up to 8 voltage/frequency domains, providing greater flexibility than bL’s single voltage/frequency domain per cluster. So, what does this mean? It means that, in theory, an SoC vendor could place each CPU into its own voltage domain so that voltage/frequency could be set independently for each of the 8 CPUs in the cluster. In practice, each voltage/frequency domain requires its own voltage regulator, which adds cost and complexity, so we’ll most likely continue to see 2-4 CPUs per domain.
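To make the limits above concrete, here is a minimal Python sketch that checks a proposed cluster layout against them. The `Domain` class, the function names, and the example frequencies are all hypothetical and chosen purely for illustration; only the numeric limits (8 CPUs and 8 voltage/frequency domains per cluster, 32 clusters) come from ARM’s figures.

```python
from dataclasses import dataclass

# Limits quoted in the article
MAX_CPUS_PER_CLUSTER = 8
MAX_DOMAINS_PER_CLUSTER = 8
MAX_CLUSTERS = 32


@dataclass
class Domain:
    """One voltage/frequency domain; every core in it runs at freq_mhz."""
    cores: int
    freq_mhz: int


def validate_cluster(domains):
    """Return the cluster's core count, or raise if it breaks a limit."""
    if not 1 <= len(domains) <= MAX_DOMAINS_PER_CLUSTER:
        raise ValueError("a cluster needs 1 to 8 voltage/frequency domains")
    if any(d.cores < 1 for d in domains):
        raise ValueError("every domain needs at least one core")
    total = sum(d.cores for d in domains)
    if total > MAX_CPUS_PER_CLUSTER:
        raise ValueError("a cluster holds at most 8 CPUs")
    return total


def max_system_cpus():
    """Largest single-chip configuration: 32 clusters of 8 CPUs."""
    return MAX_CLUSTERS * MAX_CPUS_PER_CLUSTER


# A likely layout: two domains of four cores (frequencies are made up)
typical = [Domain(cores=4, freq_mhz=2400), Domain(cores=4, freq_mhz=1800)]
# The theoretical extreme: one domain, and so one regulator, per core
per_core = [Domain(cores=1, freq_mhz=f) for f in range(1000, 1800, 100)]
```

Both layouts pass validation with 8 cores each; the difference is that the second needs eight voltage regulators instead of two, which is the cost trade-off described above.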

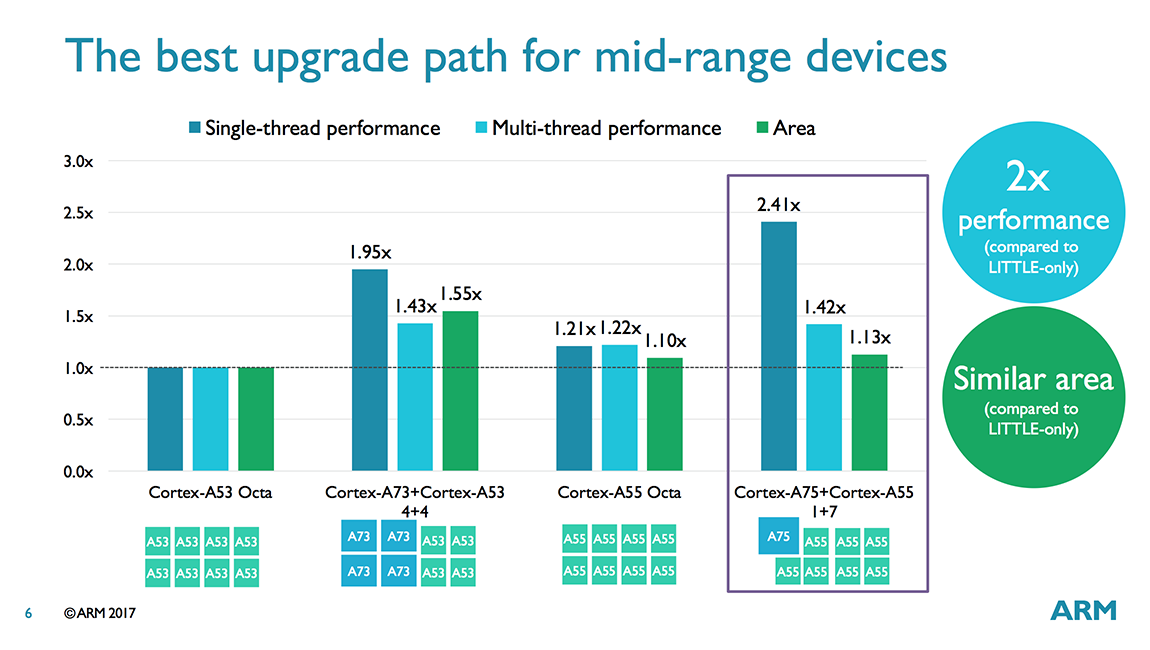

ARM still sees 8-core configurations being used in mobile devices over the next few years. With bL, this would likely be either a 4+4 pairing of 4 big cores and 4 little cores, or 8 little cores spread across 2 clusters. With DynamIQ, all 8 cores can fit inside a single cluster and can be split into any combination (1+7, 2+6, 3+5, 4+4) of A75 and A55 cores. ARM sees the 1+7 configuration, where one A55 core is replaced by a big A75 core, as particularly appealing for the mid-range market, because it offers up to 2.41x better single-thread performance and 1.42x better multi-thread performance for only a 1.13x increase in die area compared to an octa-core A53 configuration (iso-process, iso-frequency).
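A quick way to see why ARM likes the 1+7 option is to divide its quoted gains by the quoted area increase. The short Python sketch below enumerates the big+little splits listed above and computes that ratio; the function names are made up for illustration, and the only inputs are ARM’s own 2.41x, 1.42x, and 1.13x figures.

```python
def big_little_splits(total_cores=8):
    """Enumerate (big, little) splits from 1+7 up to an even 4+4."""
    return [(big, total_cores - big) for big in range(1, total_cores // 2 + 1)]


def gain_per_area(perf_gain, area_increase):
    """Performance gain normalized by the die-area increase."""
    return perf_gain / area_increase


# ARM's figures for 1x A75 + 7x A55 versus an octa-core A53 design
SINGLE_THREAD_GAIN = 2.41
MULTI_THREAD_GAIN = 1.42
AREA_INCREASE = 1.13

print(big_little_splits())
print(round(gain_per_area(SINGLE_THREAD_GAIN, AREA_INCREASE), 2))
print(round(gain_per_area(MULTI_THREAD_GAIN, AREA_INCREASE), 2))
```

By this rough measure, the 1+7 design delivers about 2.13x the single-thread performance and about 1.26x the multi-thread performance per unit of die area, using ARM’s best-case ("up to") numbers.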

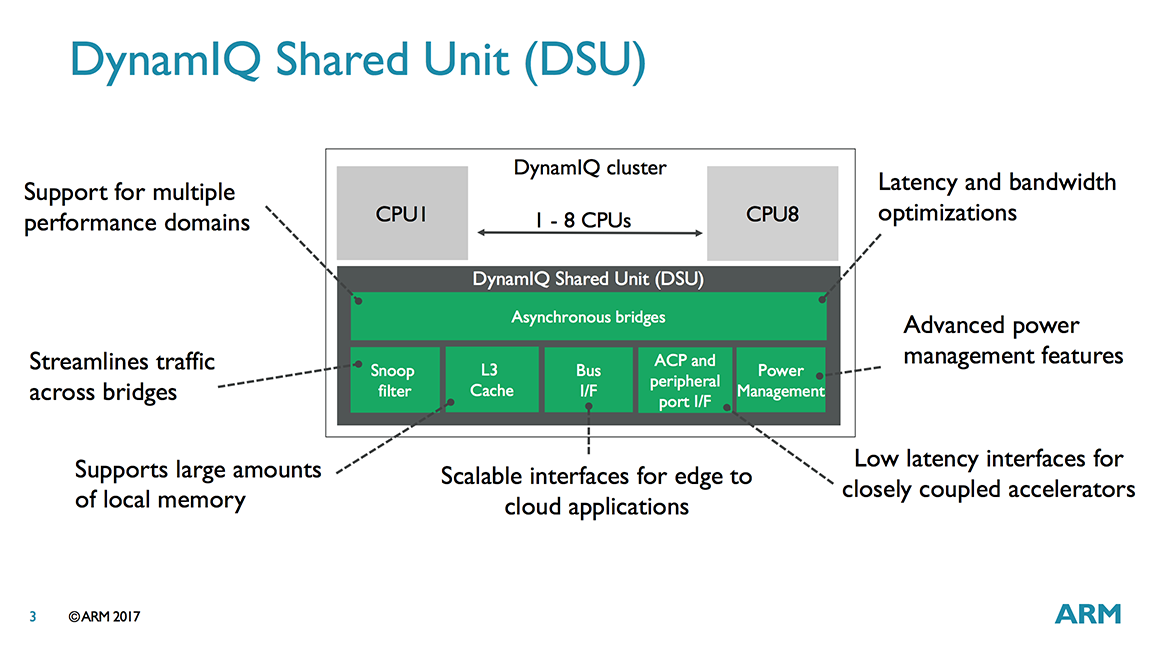

The main puzzle piece that enables this flexibility is the DynamIQ Shared Unit (DSU), a separate block that sits inside each DynamIQ cluster and functions as a central hub for the CPUs within the cluster and the bridge to the rest of the system. Each voltage/frequency domain in the cluster can be configured to run synchronously or asynchronously with the DSU. Using asynchronous bridges (one per domain) allows different CPUs (A75/A55) to operate at different frequencies, whereas using synchronous bridges would force all CPUs to operate at the same frequency.

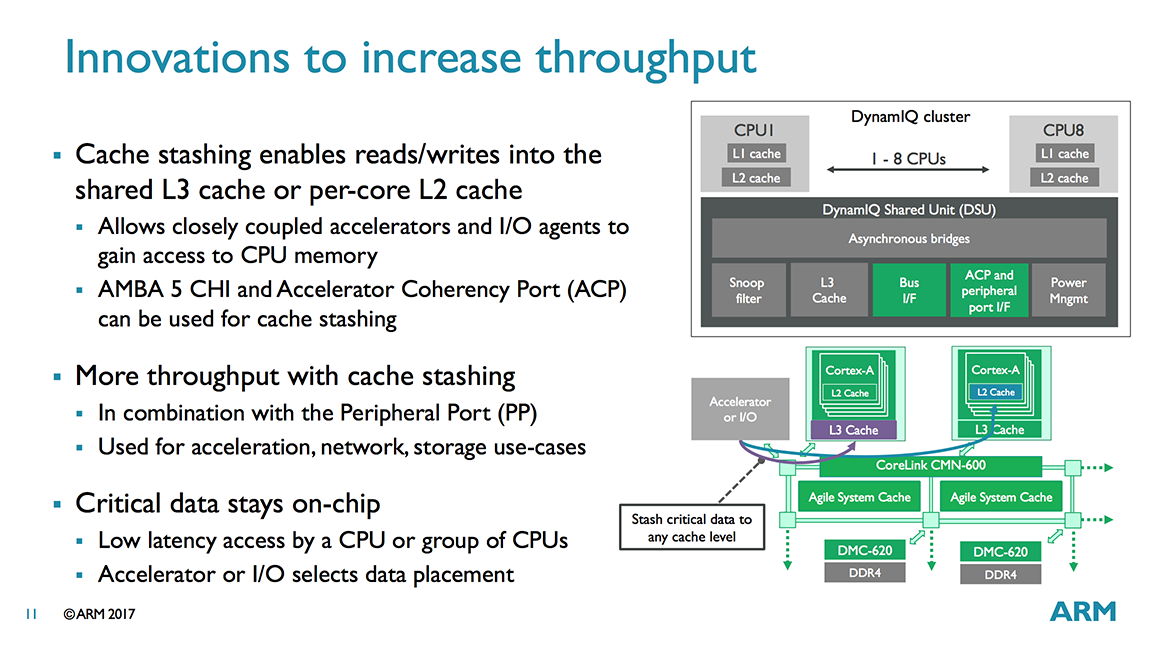

The DSU communicates with a CCI, CCN, or CMN cache-coherent interconnect through one or two 128-bit AMBA 5 ACE ports or a single 256-bit AMBA 5 CHI port. There’s also an Accelerated Coherency Port (ACP) for attaching specialized accelerators that require cache coherency with the CPUs. The ACP is also used for enabling DynamIQ’s cache stashing feature, which we’ll discuss in a minute. Finally, there’s a separate peripheral port that’s used to program the accelerators attached to the ACP interface (basically a shortcut for programming transactions so they do not need to be routed through the system interconnect).

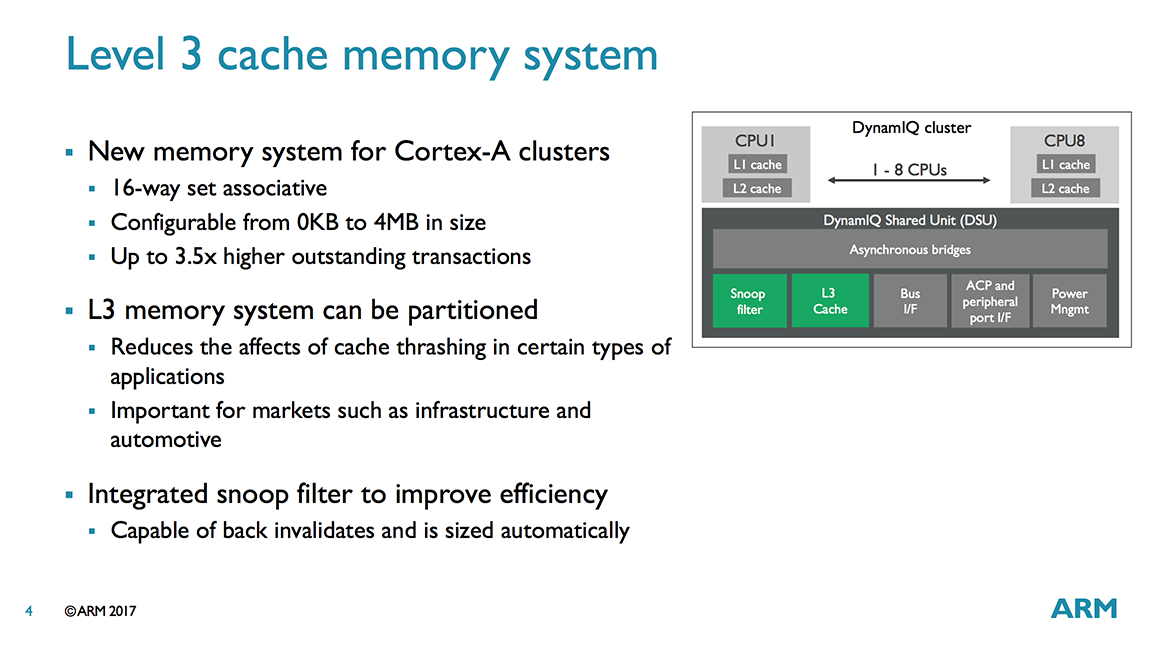

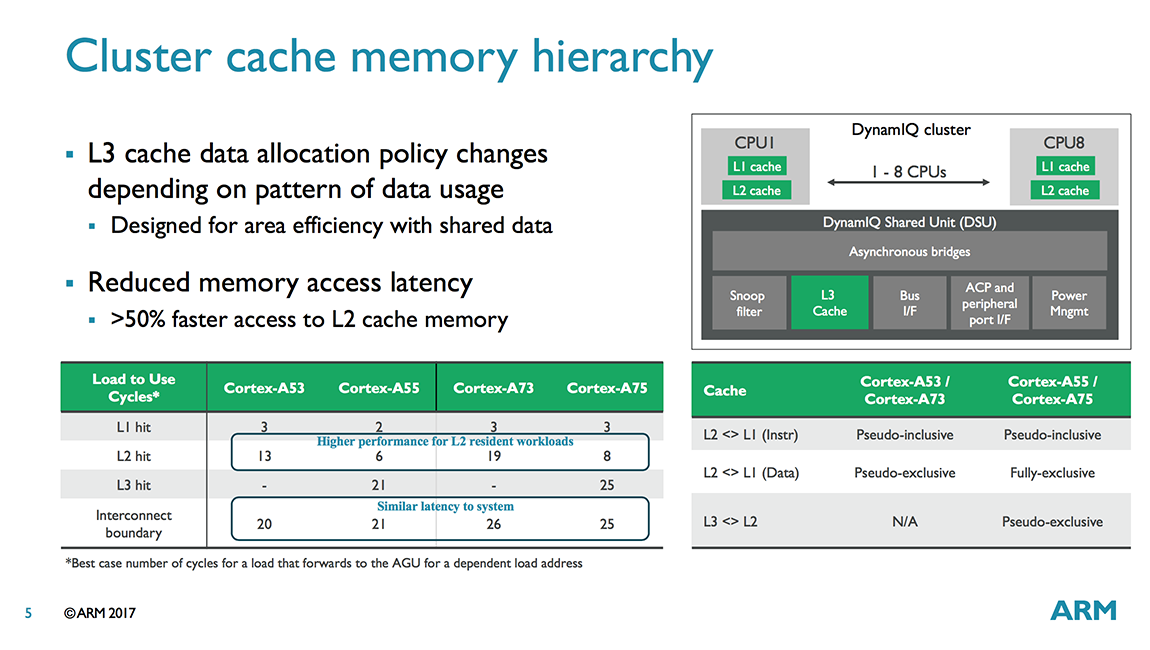

So far we’ve discussed DynamIQ’s flexibility and scalability features, but it also improves CPU performance through a new cache topology. With bL, CPUs inside a cluster had access to a shared L2 cache; however, DynamIQ-compatible CPUs (currently limited to A75/A55) have private L2 caches that operate at the CPU core’s frequency. Moving the L2 closer to the core reduces L2 cache latency by 50% or more. DynamIQ also adds another level of cache: the optional shared L3 cache lives inside the DSU and is 16-way set associative. Cache sizes are 1MB, 2MB, or 4MB, but the L3 may be omitted for certain applications like networking. The L3 cache is technically pseudo-exclusive, but ARM says it’s really closer to being fully exclusive, with nearly all of the L3’s contents not appearing in the L2 and L1 caches. If the new L3 cache were inclusive, meaning that it contained a copy of each CPU’s L2 contents, then much of its performance benefit would be lost and a lot of area and power would be wasted.
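The cost of an inclusive L3 is easy to show with a toy capacity model. The Python sketch below is a simplification under stated assumptions: it ignores the L1 caches and lines shared between cores, it treats the pseudo-exclusive L3 as fully exclusive, and the 256KB-per-core L2 size is a hypothetical value picked for the example rather than a figure from ARM.

```python
def unique_capacity_kb(cores, l2_kb_per_core, l3_kb, policy):
    """Distinct data the L2 + L3 hierarchy can hold under a simple model."""
    l2_total = cores * l2_kb_per_core
    if policy == "inclusive":
        # Every L2 line is duplicated in the L3, so the L2s add nothing new
        if l3_kb < l2_total:
            raise ValueError("an inclusive L3 must be at least as big as the L2s")
        return l3_kb
    if policy == "exclusive":
        # No duplication: the capacities simply add up
        return l2_total + l3_kb
    raise ValueError("policy must be 'inclusive' or 'exclusive'")


# Hypothetical cluster: 8 cores, 256KB private L2 each, 2MB shared L3
print(unique_capacity_kb(8, 256, 2048, "inclusive"))
print(unique_capacity_kb(8, 256, 2048, "exclusive"))
```

In this example the inclusive design holds 2MB of distinct data while the exclusive one holds 4MB from the same amount of SRAM, which is why an inclusive L3 behind eight private L2s would spend so much area and power on duplicates.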

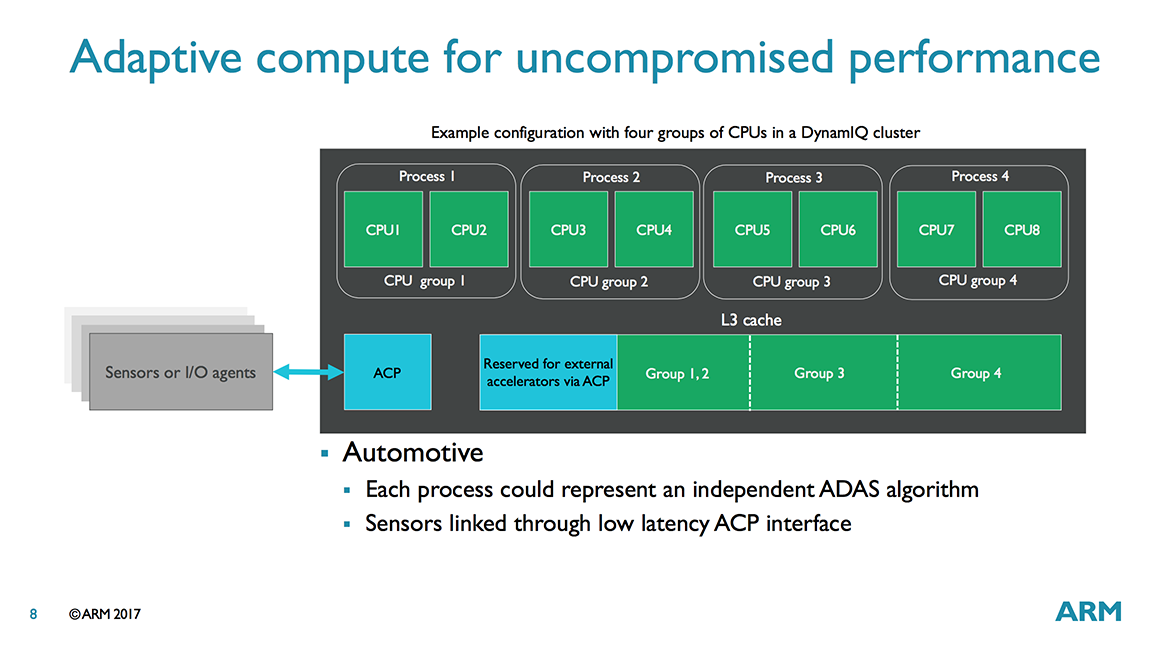

The L3 cache can be partitioned, which can be useful for networking or embedded systems that run a fixed workload or applications that require more deterministic data management. It can be partitioned into a maximum of 4 groups, and the split can be unbalanced, so 1 CPU could get 3MB while the other 7 CPUs would share the remaining 1MB in an 8-core 4MB L3 configuration. Each group can be assigned to specific CPU(s) or external accelerators attached to the DSU via the ACP or other interface. Any processors not specifically assigned to a cache group share the remaining L3 cache. The partitions are dynamic and can be created/adjusted during runtime by the OS or hypervisor.
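As a rough illustration of the unbalanced split described above, the Python sketch below divides the L3 into four equal slices and hands them out to owners. The equal-slice granularity, the `partition_l3` function, and the owner names are assumptions made for this example; ARM’s actual partitioning mechanism and register interface are not described here.

```python
MAX_GROUPS = 4  # the article's maximum number of partition groups


def partition_l3(l3_mb, slices_per_owner):
    """Split the L3 into quarter-size slices (an assumed granularity).

    slices_per_owner maps an owner name (a CPU, a set of CPUs, or an
    accelerator) to the number of slices it owns. Whatever is left over
    is shared by every processor without an assignment.
    """
    if len(slices_per_owner) > MAX_GROUPS:
        raise ValueError("at most 4 partition groups are supported")
    if any(n < 1 for n in slices_per_owner.values()):
        raise ValueError("each owner needs at least one slice")
    used = sum(slices_per_owner.values())
    if used > MAX_GROUPS:
        raise ValueError("more slices assigned than the cache has")
    slice_mb = l3_mb / MAX_GROUPS
    shares = {owner: n * slice_mb for owner, n in slices_per_owner.items()}
    shares["unassigned"] = (MAX_GROUPS - used) * slice_mb
    return shares


# The article's example: one CPU gets 3MB of a 4MB L3, the rest share 1MB
print(partition_l3(4, {"cpu0": 3}))
```

Because the partitions can be adjusted at runtime, an OS or hypervisor could call something like this again with a different mapping when the workload changes.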

One of the features supported by DynamIQ is error reporting, which allows the system to report detected errors, both correctable and uncorrectable, to software. The L3 supports ECC/parity (actually all levels of cache and snoop filters do, with SECDED on caches that can hold dirty data and SED parity on caches that only hold clean data) in order to be ASIL-D compliant. The L3 also has persistent error correction and can support recovery from a single hard error (data poisoning is supported at a 64-bit granularity).

Another new feature is cache stashing, which allows a GPU or other specialized accelerators and I/O agents to read/write data into the shared L3 cache or directly into a specific CPU’s L2 cache via the ACP or AMBA 5 CHI port. A specific example is a networking appliance that uses a TCP/IP offload engine to accelerate packet processing. Instead of writing its data to system memory for the CPU to fetch or relying on some other cache coherency mechanism, the accelerator could use cache stashing to write its data directly into the CPU’s L2, increasing performance and reducing power consumption.

In order to use cache stashing, the software drivers running in kernel space need to be aware of the processor and cache topology, which will require custom code to enable hardware outside the cluster to access the shared L3 or an individual CPU’s L2. While a limitation for consumer electronics where time to market is key, it’s not as serious an issue for commercial applications.

While cache stashing could be a useful feature for sharing data with processors sitting outside the cluster, DynamIQ also makes it easier to share data among CPUs within a cluster. This is one of the reasons why ARM wanted to bring big and little CPUs into the same cluster. Moving cache lines within a DynamIQ cluster is faster than moving them between clusters, as with bL, which reduces latency when migrating threads between big and little cores.

DynamIQ also includes improved power management. Having the DSU perform all cache and coherency management in hardware instead of software saves several steps when changing CPU power states, allowing the CPU cores to power up or down much faster than before with bL. The DSU can also power down portions of the L3 cache to reduce leakage power by autonomously monitoring cache usage and switching between full on, half off, and full off states.
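The L3 power-down behavior can be pictured as a small policy that maps observed cache usage to one of the three states. The Python sketch below is only a guess at the shape of such a policy: the DSU makes this decision in hardware, and the usage metric and both thresholds here are hypothetical values, not anything ARM has disclosed.

```python
from enum import Enum


class L3Power(Enum):
    FULL_ON = "full on"
    HALF_OFF = "half off"
    FULL_OFF = "full off"


def pick_l3_state(usage, half_threshold=0.5, off_threshold=0.05):
    """Choose a power state from the fraction of the L3 in active use.

    Both thresholds are made-up values for illustration only.
    """
    if not 0.0 <= usage <= 1.0:
        raise ValueError("usage must be a fraction between 0 and 1")
    if usage <= off_threshold:
        return L3Power.FULL_OFF
    if usage <= half_threshold:
        return L3Power.HALF_OFF
    return L3Power.FULL_ON


def powered_capacity_mb(l3_mb, state):
    """Capacity still powered (and still leaking) in a given state."""
    fraction = {L3Power.FULL_ON: 1.0, L3Power.HALF_OFF: 0.5, L3Power.FULL_OFF: 0.0}
    return l3_mb * fraction[state]


# A lightly used 4MB L3 would drop to half capacity under this policy
state = pick_l3_state(0.3)
print(state.value, powered_capacity_mb(4, state))
```

A real implementation would also need hysteresis so the cache does not flip between states on every small change in usage, which this sketch leaves out.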

The DSU includes a Snoop Control Unit (SCU) with an integrated snoop filter for handling the new cache topology. Together with L3 cache and other control logic, the DSU is about the same area as an A55 core in its max configuration or half the area of an A55 in its min configuration. These are just rough estimates because most of the DSU area is used by the L3 cache and the size of the DSU logic scales with the number of CPU cores.

Some of ARM’s partners may be slow to migrate from bL to DynamIQ, choosing to stick with a technology and CPU cores they are familiar with instead of investing the extra time and money required to develop new solutions. But for performance (and marketing) sensitive markets that need access to ARM’s latest CPU cores, such as mobile, the switch to DynamIQ should happen quickly, with the first DynamIQ SoCs likely to appear by the end of 2017 or early 2018.

104 Comments

Samus - Monday, May 29, 2017

Ok, point taken, 4 year gap. Even more unacceptable. But you continue to imply the architecture hitting a wall because of the inherently in order nature of RISC so I ask you, why have Samsung and Apple continued to have great success deviating from ARM's reference designs, while Qualcomm has been married to them and paying the performance price (specifically looking at you, 808)

Death666Angel - Monday, May 29, 2017

"die-constrained design" He gave the answer right there, at least as far as Apple is concerned. ARM is imposing a certain, small, die size for the A53/55 cores, that limits their potential performance a lot. Apple doesn't care about die size since their margins are high enough. The Apple A5X was as large as the Intel Ivy Bridge 4C die. Both were around in 2012, though Intel had the process node advantage. Still insane numbers. And Samsung is also very vertically integrated, they don't have to worry as much about die size as Qualcomm since they own the manufacturing as well. And QC doesn't have to play the performance game, since their choke hold on the modem technology allows them to still have plenty of design wins for the moment.

Wilco1 - Monday, May 29, 2017

It's a 3 year gap. And Samsung still uses Cortex-A53 alongside Mongoose.

tipoo - Monday, May 29, 2017

Not RISC constrained to in-order, nothing to do with RISC, rather that in-order is a design choice for this particular model for power and die size.

A75, Hurricane, etc are of course massively out of order ARM/RISC designs.

name99 - Wednesday, May 31, 2017

Zephyr is NOT a massive OoO design. Probably 2-wide in order. We don't know its performance, but it certainly saves power (compared to A9) while not seeming to slow down the phone.

ARM seems to hurt itself by an insistence on these TINY designs. (Just like Intel on the other side hurts itself by an insistence on designs that are first server targeted). Apple wins partially by not trying to be everything to everyone...

helvete - Wednesday, August 30, 2017

Ending up being nothing for nobody? /s

aryonoco - Monday, May 29, 2017

There is nothing inherently in-order about RISC. Various ARM designs such as Cortex A57, A72, A73 and A75 are out of order.

Samsung has not really deviated from ARM's reference design by much, they still use the Cortex A53 in various SoCs including their latest and greatest Exynos 8895. And that 8895 is also falling behind Snapdragon 835, which is a standard A73 implementation for all intents and purposes (Qualcomm's marketing notwithstanding).

Apple, well, they can afford to dedicate the die area to a big core. No one else can.

ZeDestructor - Tuesday, May 30, 2017

Everyone can go for the giant-die approach, but thanks to how marketing works, people will buy 8 A53s well before they even consider 4 A53 + 1 Cyclone-sized core.

name99 - Wednesday, May 31, 2017

Ding ding ding. We have a winner

Wilco1 - Monday, May 29, 2017

It was late 2014, and the first designs should appear late this year (just like Cortex-A73 was announced last year and appeared the same year). That is a 3 year gap, not 4 years.

Note Cortex-A53 scaled quite well, from 1.3GHz in Exynos 5433 to 1.8GHz in Kirin (and goes up to 2.5GHz in Helio P25). The big cores scaled via yearly new micro-architectures, so the big/little performance ratio has remained similar.

> Fact is, there is only so much you can do with an in-order die-constrained design.

And more importantly not only keeping but actually improving power efficiency. It could go much faster if it were allowed a similar power budget as the big cores.