Launching the #CPUOverload Project: Testing Every x86 Desktop Processor since 2010

by Dr. Ian Cutress on July 20, 2020 1:30 PM EST

CPU Tests: SPEC2006 1T, SPEC2017 1T, SPEC2017 nT

SPEC2017 and SPEC2006 are suites of standardized tests used to compare overall performance across different systems, architectures, microarchitectures, and setups. The code has to be compiled, and the results can then be submitted to an online database for comparison. The suites cover a range of integer and floating point workloads, and the binaries can be heavily optimized for each CPU, so it is important to check how the benchmarks are being compiled and run.

We run the tests in a harness developed by our own Andrei Frumusanu and built on Windows Subsystem for Linux (WSL). WSL has some odd quirks, with one test not running due to WSL’s fixed stack size, but for like-for-like testing it is good enough. SPEC2006 is deprecated in favor of 2017, but remains an interesting comparison point in our data. Because our scores aren’t official submissions, as per SPEC guidelines we have to declare them as internal estimates on our part.

For compilers, we use LLVM for both the C/C++ and Fortran tests, with Flang as the Fortran compiler. The rationale for using LLVM over GCC is better cross-platform comparisons with platforms that only have LLVM support, as well as future articles where we’ll investigate this aspect more. We’re not considering closed-source compilers such as MSVC or ICC.

clang version 10.0.0

clang version 7.0.1 (ssh://git@github.com/flang-compiler/flang-driver.git 24bd54da5c41af04838bbe7b68f830840d47fc03)

-Ofast -fomit-frame-pointer

-march=x86-64

-mtune=core-avx2

-mfma -mavx -mavx2

Our compiler flags are straightforward, with basic -Ofast and the relevant ISA switches to allow for AVX2 instructions. We decided to build our SPEC binaries on AVX2, which puts the limit at Haswell for how old we can go before the testing will fall over. This also means we don’t have AVX-512 binaries, primarily because in order to get the best performance, the AVX-512 intrinsics should be packed by a proper expert, as with our AVX-512 benchmark.
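As a rough illustration of that AVX2 cut-off, the sketch below checks whether a CPU's reported flag list includes the extensions enabled by the switches above. It assumes the Linux-style `/proc/cpuinfo` text format; the function name and the sample strings are hypothetical and not part of our harness.

```python
def missing_isa_flags(cpuinfo_text, required=("avx", "avx2", "fma")):
    """Return the required ISA flags absent from the first 'flags' line.

    cpuinfo_text is assumed to follow the Linux /proc/cpuinfo layout,
    where one line looks like 'flags : fpu sse sse2 avx ...'.
    """
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            present = set(value.split())
            return [flag for flag in required if flag not in present]
    # No 'flags' line at all: treat every required flag as missing.
    return list(required)


# Hypothetical, shortened flag lines for illustration only.
avx2_capable = "flags\t\t: fpu sse sse2 avx avx2 fma"
pre_avx2 = "flags\t\t: fpu sse sse2 avx"

print(missing_isa_flags(avx2_capable))
print(missing_isa_flags(pre_avx2))
```

On a real system the text could be read from `/proc/cpuinfo`. An empty list would suggest the AVX2 binaries can run, while any missing flag would suggest the CPU is too old for this build.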

To note, the requirements for the SPEC licence state that any benchmark results from SPEC have to be labelled ‘estimated’ until they are verified on the SPEC website as a meaningful representation of the expected performance. This is most often done by the big companies and OEMs to showcase performance to customers, but it is quite over the top for what we do as reviewers.

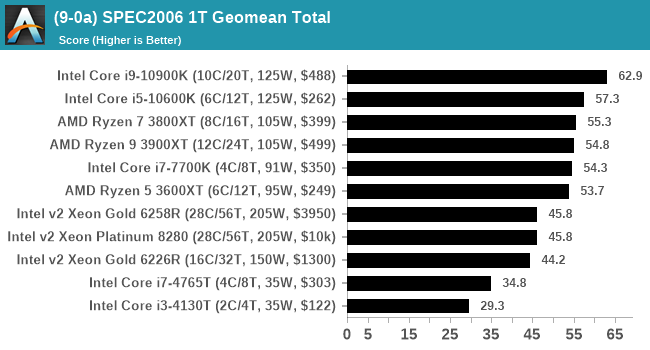

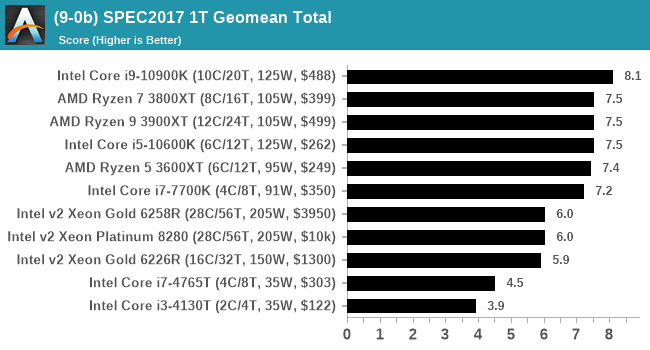

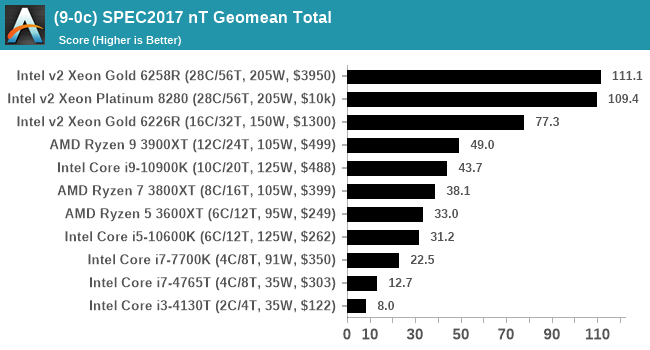

For each of the SPEC targets we are running (SPEC2006 rate-1, SPEC2017 speed-1, and SPEC2017 speed-N), rather than publish all the separate test data in our reviews, we are going to condense it down into individual data points. The main three will be the geometric means of each of the three suites.
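For readers unfamiliar with the geometric mean, a minimal sketch of the calculation follows. The function name and the sample scores are placeholders invented for illustration, not measured results.

```python
import math


def geometric_mean(scores):
    """Geometric mean of positive scores: exp of the mean of their logs."""
    scores = list(scores)
    if not scores:
        raise ValueError("need at least one score")
    if any(s <= 0 for s in scores):
        raise ValueError("scores must be positive")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


# Placeholder sub-test scores, not real data.
example_scores = [2.0, 8.0]
print(geometric_mean(example_scores))
```

Working in logarithms avoids overflow when many scores are multiplied together, and it makes the result depend on the ratios between scores rather than their absolute sizes.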

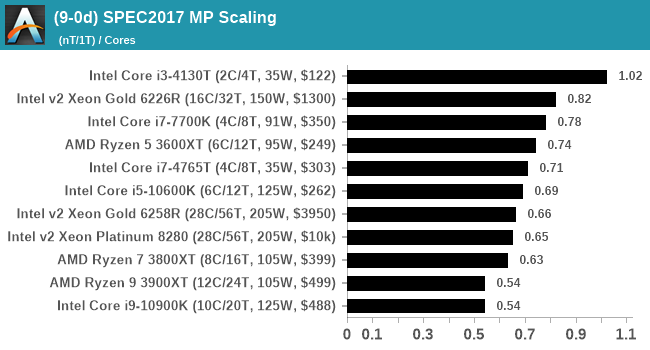

A fourth metric will be a scaling metric, indicating how well the SPEC2017 nT result scales relative to the 1T result: the ratio of the two, divided by the number of cores on the chip.
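One way to read that scaling metric is sketched below, assuming it is simply the nT score divided by the 1T score and then by the core count. The function name and the numbers are hypothetical.

```python
def scaling_per_core(score_nt, score_1t, cores):
    """Ratio of multi-threaded to single-threaded score, per core.

    A value near 1.0 would mean each core adds roughly one
    single-thread's worth of throughput under this reading.
    """
    if score_1t <= 0 or cores <= 0:
        raise ValueError("score_1t and cores must be positive")
    return score_nt / score_1t / cores


# Placeholder numbers: an 8-core chip whose nT score is 6x its 1T score.
print(scaling_per_core(score_nt=30.0, score_1t=5.0, cores=8))
```

Under this reading, values below 1.0 would reflect lost per-core throughput when all cores are loaded, for example from lower all-core clocks or shared memory bandwidth.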

The per-test data will be a part of Bench.

Experienced users should be aware that 521.wrf_r, part of the SPEC2017 suite, does not work in WSL due to the fixed stack size. It is expected to work with WSL2, however we will cross that bridge when we get to it. For now, we’re not giving wrf_r a score, which, because we are taking the geometric mean rather than the arithmetic mean, should not affect the results too much.
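A minimal sketch of how a suite mean could skip a test that produced no score is shown below. It assumes missing results are stored as `None`; the helper name and the scores are invented for illustration, and this is not necessarily how our harness handles it.

```python
from statistics import geometric_mean  # available in Python 3.8 and later


def suite_geomean(results):
    """Geometric mean over the sub-tests that actually produced a score.

    results maps a test name to a positive score, or to None when the
    test did not run.
    """
    completed = [score for score in results.values() if score is not None]
    if not completed:
        raise ValueError("no completed tests to average")
    return geometric_mean(completed)


# Placeholder scores, not measurements; wrf_r is marked as not run.
results = {"test_a": 2.0, "test_b": 8.0, "521.wrf_r": None}
print(suite_geomean(results))
```

Skipping the test means the mean is taken over one fewer sub-test, so results computed this way are only comparable with others that omit the same test.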

110 Comments


PeachNCream - Tuesday, July 21, 2020 - link

You don't get what it means to perform a controlled test do you?

Aspernari - Wednesday, July 22, 2020 - link

It's important to note that the environment is not actually well-controlled.

https://twitter.com/IanCutress/status/128480609693...

We don't know temperature for the operating conditions for these tests, which matters more and more for boost behavior for CPUs and GPUs. He says 36c when he got into the office, we'll never know what the temperature peaked at, nor how often similar conditions were reached.

A standard platform is a good choice, but a controlled environment is also important. Unfortunately, the results aren't as reliable as they otherwise might have been.

PeterCollier - Wednesday, July 22, 2020 - link

And that's why this entire test is a complete waste of time. Something like Geekbench or especially Userbench is much, much better because it gives you a range of scores. Instead of trying to create false precision by saying that an AMD 4700U scored, say, a "979" on a benchmark, Userbench will say that all the 4700U's tested scored from 899 to 1008, and break it down into percentiles. This way, you have a range of expected performance in mind instead of being fixated on that "979" number, which could have been obtained in an unrealistic scenario.

Rudde - Saturday, July 25, 2020 - link

Isn't userbench a synthetic together with geekbench? What exactly are they testing? Instead of knowing which of Intel i7 10700k and AMD ryzen 7 3800X is better at rendering, video encoding, number crunching or whatever your use case is, you'll get a distribution based on a largely unknown test. The Intel and AMD processors might end up being within error margins of each other in your use case, but that in itself tells something too. All benchmarks are inherently bad; there is not a single benchmark that captures every use case while not being affected by its environment (ram speeds, temperatures, etc). I prefer tests that I understand, over tests that I do not understand.

bananaforscale - Wednesday, July 22, 2020 - link

One could ask what the point of Userbenchmark is in these days of quadcores being basically entry level while the benchmark has DECREASED its multicore weighting.

A5 - Monday, July 20, 2020 - link

For my own personal test, getting an i7-4770K in the list would be a big help.

Once you have a compile test, a Xeon E5-1680v3 would be nice to see so that I can sell my corp on newer workstations...

Shmee - Wednesday, July 22, 2020 - link

Those are great Haswell EP CPUs, and they OC too! I have an E5-1660v3 in my X99 rig.

Mockingtruth - Monday, July 20, 2020 - link

I have a 3570k and a E8600 spare with respective motherboards and ram if useful?

CampGareth - Monday, July 20, 2020 - link

Personally I'd like to see a Xeon E5-2670 v1 benchmarked. I'm still running a pair of them as my workstation but these days AMD can beat the performance on a single socket and halve the power consumption.

Samus - Tuesday, July 21, 2020 - link

Do you run them in an HP Z620? I ran the same system with the same CPU’s for years at one of my clients. What a beast.