Intel Launches Optane Memory M.2 Cache SSDs For Consumer Market

by Billy Tallis on March 27, 2017 12:00 PM EST

Posted in: SSDs, Storage, Intel, SSD Caching, M.2, NVMe, 3D XPoint, Optane, Optane Memory

Last week, Intel officially launched their first Optane product, the SSD DC P4800X enterprise drive. This week, 3D XPoint memory comes to the client and consumer market in the form of the Intel Optane Memory product, a low-capacity M.2 NVMe SSD intended for use as a cache drive for systems using a mechanical hard drive for primary storage.

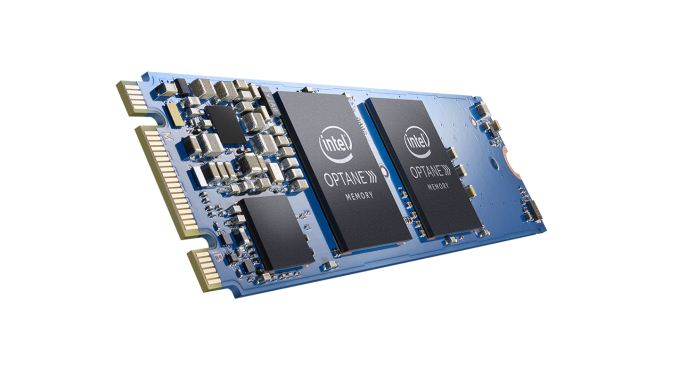

The Intel Optane Memory SSD uses one or two single-die packages of 3D XPoint non-volatile memory to provide capacities of 16GB or 32GB. The controller gets away with a much smaller package than most SSD controllers (especially those for PCIe SSDs) since it only supports two PCIe 3.0 lanes and does not have an external DRAM interface. Because the drive uses only two PCIe lanes, it is keyed to fit both M.2 type B and type M slots. This keying is usually used for M.2 SATA SSDs, while M.2 PCIe SSDs typically use only the M key position to support four PCIe lanes. The Optane Memory SSD will not function in an M.2 slot that provides only SATA connectivity. Contrary to some early leaks, the Optane Memory SSD uses the M.2 2280 card size instead of one of the shorter lengths. This makes for one of the least-crowded M.2 PCBs on the market, even with all of the components on the top side.

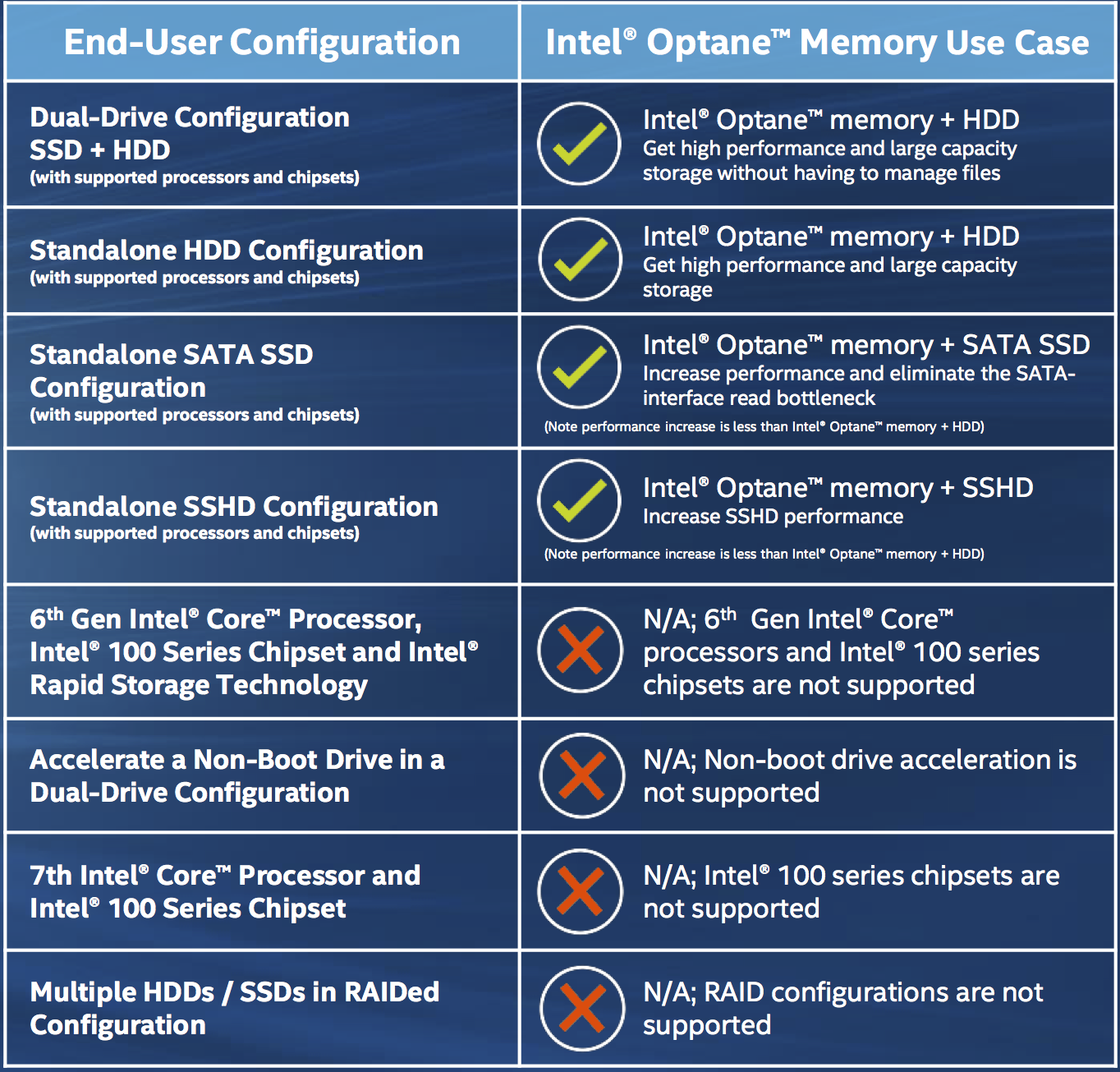

The very low capacity of the Optane Memory drives limits their usability as traditional SSDs. Intel intends for the drive to be used with the caching capabilities of their Rapid Storage Technology drivers. Intel first introduced SSD caching with their Smart Response Technology in 2011. The basics of Optane Memory caching are mostly the same, but under the hood Intel has tweaked the caching algorithms to better suit 3D XPoint memory's performance and flexibility advantages over flash memory. Optane Memory caching is currently only supported on Windows 10 64-bit and only for the boot volume. Booting from a cached volume requires that the chipset's storage controller be in RAID mode rather than AHCI mode. In RAID mode the cache drive is not exposed as a standard NVMe drive; it is instead remapped so that it is accessible only to Intel's drivers through the storage controller. This NVMe remapping feature was first added to the Skylake-generation 100-series chipsets, but boot firmware support will only be found on Kaby Lake-generation 200-series motherboards, and Intel's drivers are expected to permit Optane Memory caching only with Kaby Lake processors.

| Intel Optane Memory Specifications | | |
|---|---|---|
| Capacity | 16 GB | 32 GB |
| Form Factor | M.2 2280 single-sided | M.2 2280 single-sided |
| Interface | PCIe 3.0 x2 NVMe | PCIe 3.0 x2 NVMe |
| Controller | Intel unnamed | Intel unnamed |
| Memory | 128Gb 20nm Intel 3D XPoint | 128Gb 20nm Intel 3D XPoint |
| Typical Read Latency | 6 µs | 6 µs |
| Typical Write Latency | 16 µs | 16 µs |
| Random Read (4 KB, QD4) | 300k IOPS | 300k IOPS |
| Random Write (4 KB, QD4) | 70k IOPS | 70k IOPS |
| Sequential Read (QD4) | 1200 MB/s | 1200 MB/s |
| Sequential Write (QD4) | 280 MB/s | 280 MB/s |
| Endurance | 100 GB/day | 100 GB/day |
| Power Consumption | 3.5 W (active), 0.9-1.2 W (idle) | 3.5 W (active), 0.9-1.2 W (idle) |
| MSRP | $44 | $77 |
| Release Date | April 24 | April 24 |

Intel has published some specifications for the Optane Memory drive's performance on its own. The performance specifications are the same for both capacities, suggesting that the controller has only a single channel interface to the 3D XPoint memory. The read performance is extremely good given the limitation of only one or two memory devices for the controller to work with, but the write throughput is quite limited. Read and write latency are very good thanks to the inherent performance advantage of 3D XPoint memory over flash. Endurance is rated at just 100GB of writes per day for both the 16GB and 32GB models. This corresponds to roughly 3 to 6 drive writes per day (DWPD) depending on capacity, which is far higher than consumer-grade flash-based SSDs. However, 3D XPoint memory was supposed to have vastly higher write endurance than flash, and neither of the Optane products announced so far is specified for game-changing endurance. Power consumption is rated at 3.5W during active use, so heat shouldn't be a problem, but the idle power of 0.9-1.2W is a bit high for laptop use, especially given that there will also be a hard drive drawing power.
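The endurance and throughput figures can be cross-checked with a little arithmetic. The sketch below is only illustrative: the function names are made up for this example, and it assumes that "4 KB" in Intel's random I/O numbers means 4096 bytes and that MB/s is a decimal unit, neither of which the published specifications spell out.

```python
# Figures copied from the specification table. The 4096-byte block size is an
# assumption about what "4 KB" means in Intel's random I/O numbers.
ENDURANCE_GB_PER_DAY = 100
CAPACITIES_GB = (16, 32)
BLOCK_BYTES = 4096


def drive_writes_per_day(endurance_gb_per_day, capacity_gb):
    """Rated daily writes expressed as multiples of the drive's capacity."""
    return endurance_gb_per_day / capacity_gb


def iops_to_mb_per_s(iops, block_bytes=BLOCK_BYTES):
    """Bandwidth implied by an IOPS figure, in decimal megabytes per second."""
    return iops * block_bytes / 1_000_000


for capacity in CAPACITIES_GB:
    dwpd = drive_writes_per_day(ENDURANCE_GB_PER_DAY, capacity)
    print(f"{capacity} GB model: {dwpd:g} DWPD")

# Compare the bandwidth implied by the random I/O ratings with the
# sequential ratings (1200 MB/s read, 280 MB/s write).
print(f"Random read implies  {iops_to_mb_per_s(300_000):.0f} MB/s")
print(f"Random write implies {iops_to_mb_per_s(70_000):.0f} MB/s")
```

Under those assumptions the 16GB model works out to 6.25 DWPD and the 32GB model to 3.125 DWPD. The random I/O ratings imply about 1229 MB/s of reads and 287 MB/s of writes, very close to the sequential ratings, which suggests the drive sustains nearly the same bandwidth for 4 KB random transfers as for sequential ones at QD4.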

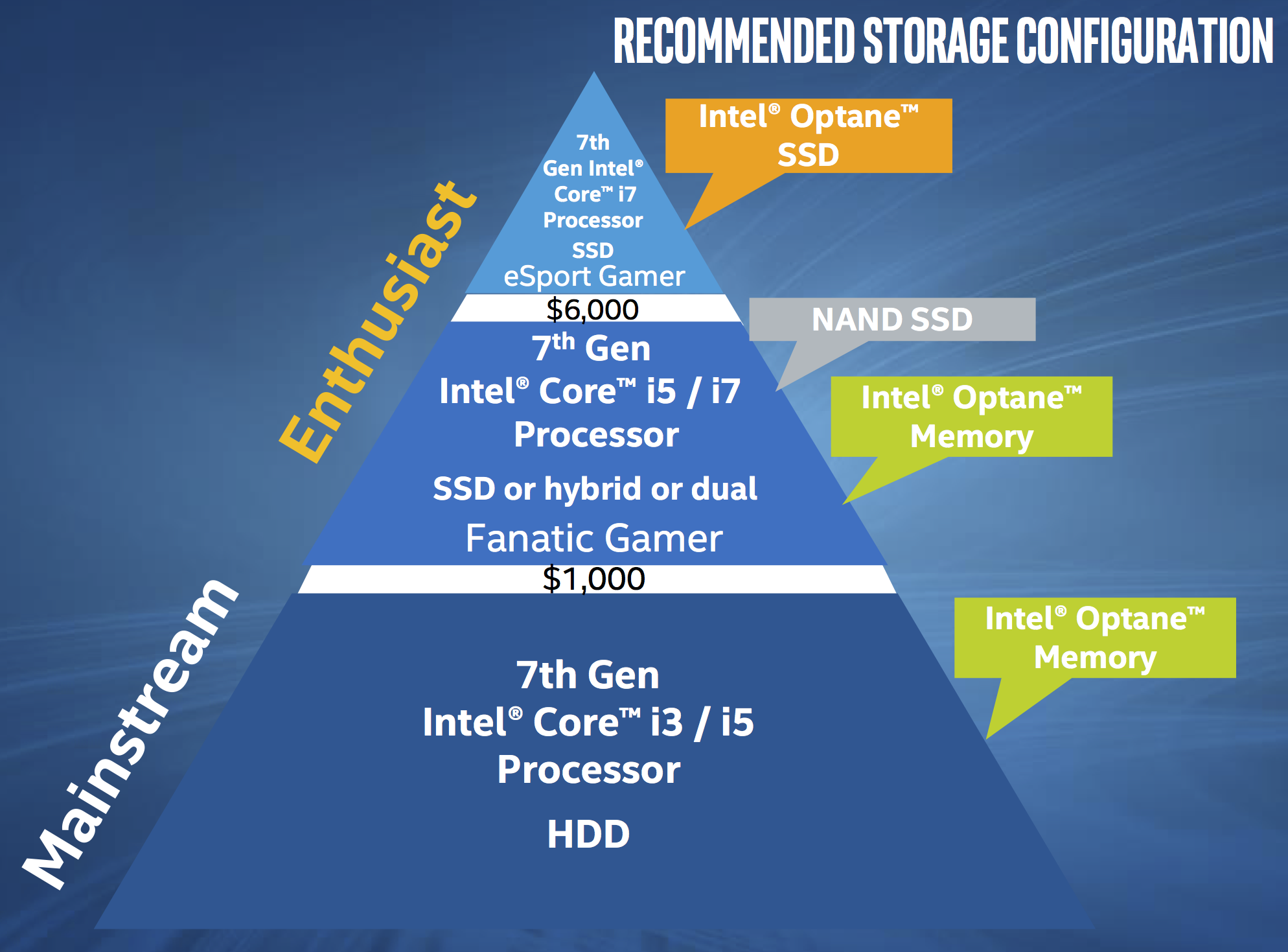

Intel's vision is for Optane Memory-equipped systems to offer a compelling performance advantage over hard drive-only systems for a price well below an all-flash configuration of equal capacity. The 16GB Optane Memory drive will retail for $44 while the 32GB version will be $77. As flash memory has declined in price over the years, it has gotten much easier to purchase SSDs that are large enough for ordinary use: 256GB-class SSDs start at around the same price as the 32GB Optane Memory drive, and 512GB-class drives cost about the same as the combination of a 2TB hard drive and the 32GB Optane Memory. The Optane Memory products are squeezing into a relatively small niche: buyers on limited budgets who need a lot of storage and want the benefit of solid-state performance without paying the full price of a boot SSD. Intel notes that Optane Memory caching can be used in front of hybrid drives and SATA SSDs, but the performance benefit will be smaller and these configurations are not expected to be common or cost effective.
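To put the pricing in per-gigabyte terms, here is a rough calculation. The Optane prices are Intel's MSRPs from the article; the flash SSD entry is an approximation based only on the statement that 256GB-class SSDs start at around the price of the 32GB Optane drive, not a quoted price for any particular product.

```python
# Optane MSRPs as announced by Intel (capacity in GB -> price in USD).
OPTANE_MSRP_USD = {16: 44, 32: 77}

# Assumed comparison point, not a quoted price: a 256GB-class flash SSD
# at roughly the same price as the 32GB Optane Memory drive.
APPROX_FLASH_CAPACITY_GB = 256
APPROX_FLASH_PRICE_USD = 77


def price_per_gb(price_usd, capacity_gb):
    """Cost of one gigabyte of capacity in US dollars."""
    return price_usd / capacity_gb


for capacity_gb, price_usd in OPTANE_MSRP_USD.items():
    cost = price_per_gb(price_usd, capacity_gb)
    print(f"Optane Memory {capacity_gb} GB: ${cost:.2f}/GB")

flash_cost = price_per_gb(APPROX_FLASH_PRICE_USD, APPROX_FLASH_CAPACITY_GB)
print(f"256GB-class flash SSD (approx.): ${flash_cost:.2f}/GB")

# How many times more the 32 GB Optane drive costs per gigabyte.
ratio = price_per_gb(OPTANE_MSRP_USD[32], 32) / flash_cost
print(f"Optane 32 GB costs about {ratio:g}x as much per GB")
```

With those inputs the Optane drives land at $2.75 and roughly $2.41 per gigabyte, against about $0.30 per gigabyte for the assumed flash SSD. That is an eightfold difference, which is why the product only makes sense as a cache in front of much cheaper bulk storage.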

The Optane Memory SSDs are now available for pre-order and are scheduled to ship on April 24. Pre-built systems equipped with Optane Memory should be available around the same time. Enthusiasts with large budgets will want to wait until later this year for Optane SSDs with sufficient capacity to use as primary storage. True DIMM-based 3D XPoint memory products are on the roadmap for next year.

Source: Intel

127 Comments


BurntMyBacon - Tuesday, March 28, 2017

@ddriver: "I'd say databases. Of course, provided your database is small enough to fit on such a drive. DLLs are not an issue, those are shared between all processes which link against them and are loaded in memory as long as they are used, and they are usually hundreds of k to a few mb, so they absolutely do not qualify as something that would benefit from frequent random reads."

I absolutely agree with databases here, but that isn't something you often see in a consumer system, so it really isn't the point of this consumer oriented cache. Also remember that this cache is persistent, so all those DLLs need to be loaded into memory every boot sequence. Not a great example as some people never shut down their system and if a large enough data set moves through the drive (depending on the internal cache algorithms) these files will be flushed anyways. Unfortunately I'm having trouble (as apparently is Intel) coming up with an obvious common use benefit for these drives. Perhaps a tablet/laptop/hybrid that is frequently powered on and off and uses hibernate or hybrid sleep would benefit from the low latency these present.

ddriver - Tuesday, March 28, 2017

Intel is simply trying to find a market niche to cram that poor Hypetane miscarriage.

Oh wow, a use case, for all the people who bought a brand new system to couple with a sole mechanical HDD and are desperately looking to populate their single M2 slot with something as useless as possible. All 3 of them.

For consumers a SATA M2 drive is more than enough, and also offers better efficiency. There would be no tangible benefit to using Hypetane "cache", money will be much better spent on a 128 GB SSD for a boot/os drive. That would be better than "cache", especially considering how IDIOTIC windoze caching policies are. It keeps on caching the most useless junk. I have 64 gigs of ram on my main box, and it kept on caching movies, ENTIRE movies, many many gigabytes rather than the small, frequently used files. Which is why I disabled caching for all SSD drives, and limited disk cache overall, cuz I hate wasting CPU cycles waiting on windoze to deallocate cached nonsense every time I run a memory demanding application. So no more of that genius "99% done, 10 minutes hanging on the last 1%" when copying big files to USB drives and such.

Alexvrb - Wednesday, March 29, 2017

I have no idea why you're going on about it caching movies in RAM, anything in RAM can be overwritten instantly, so the RAM "usage" figures are misleading to novices. Write caching to RAM does give you a little bump, even with SSDs. If you really want max performance and you've got a reliable rig with a UPS/laptop battery, not only enable write caching, but disable Write cache buffer flushing. That gives you the best of both worlds... provided you have reliable protection against power loss.

ddriver - Wednesday, March 29, 2017

"anything in RAM can be overwritten instantly"

Have you ever heard about this thing called memory management and heap allocators? You cannot just write in ram willy-nilly, that will result in an instant crash. Having windoze cache filling your entire ram means that for every single new allocation it has to make room, deallocate some of the useless garbage it caches, that doesn't involve any penalty in "wiping" ram clean or something like that, but there are CPU cycles consumed by releasing the memory from the caching kernel and allocating it to another process.

Heap memory allocations are rather slow to begin with, and having to keep on making room each and every time you allocate heap memory makes it even slower.

As for the caching part, it was not about write cache but about the infamous "super fetch", i.e. the "read cache".

XZerg - Monday, March 27, 2017

that's the thing - who in their right mind getting the 7th gen cpu would want to get a 16 or 32gb cache utilizing a full m.2 slot instead of just getting a 128gb or 256gb m.2 ssd for the same price? bigger storage and more useable across any config from last 10 years... this is a dead product with a major marketing spin to make it feel like a real worthy product.

ddriver - Monday, March 27, 2017

That's apparently intel's view on how much "consumers" need. 32 gigs of storage in the (usually sole) m2 slot. Might wanna throw in a few peanuts while they are at it, just so that they seem extra generous.

beginner99 - Tuesday, March 28, 2017

Yeah, makes no sense. who would buy a new kabylake setup and not buy an SSD? The product would make some sense for older systems that still use HDD only but obviously there are no old kabylake systems. And since most mobos have exactly 1 m.2 slot, good luck selling this intel. I recommend a crappy TLC 128 GB drive over this cache.

Alexvrb - Tuesday, March 28, 2017

Well you know there ARE sub-$50 Kaby Lakes. Do they not support drive caching? The idea is that you could get both a larger, cheaper SSD and a faster, smaller one to act as a cache. Not a bad concept. The problem is that Intel's speed/capacity/price is NOT where it needs to be. I'd use a competing conventional 128GB M.2 SSD as a cache before this thing. Maybe next gen!

Samus - Tuesday, March 28, 2017

And those 200MB/sec write speeds? This is a cache, after all. Clearly write caching won't be an improvement so how is this radically better than a $100 2TB SSHD that has 8GB SLC cache onboard? Not to mention the platform requirements for Optane caching and the inherent software complexity. This is just stupid of Intel to introduce something that costs this much based on a 10 year old concept introduced in Vista.

It didn't work well then and it won't work well now. Yes the caching capacities have grown (Most readyboost caches were like 16 or 24GB) but so have storage capacities and overall data sets. A single AAA game is 50GB. A single game. There goes your entire cache, assuming the dumb algorithms SRT uses can even figure out to cache the maps in the first place. My experience is, it doesn't.

ddriver - Tuesday, March 28, 2017

Hypetane is so "good" intel is struggling to find a market for it. It doesn't need to offer benefits, it doesn't even have to make sense. It just needs to sell, because otherwise it will look even worse than the complete and utter failure to live up to the "1000x better" hype.

My money is on FIFO. First in - first out, actual usage patterns are too bothersome to take into account. It will cache stuff regardless of what it is, and when it runs out of cache, it will start purging the oldest data, or AT BEST the oldest and oldest accessed data. But access frequency is definitely not accounted for.

In most cases, people who know their stuff will be better off having that volume dedicated for fast access, and put the files they need to be fast there.