The Intel Optane Memory (SSD) Preview: 32GB of Kaby Lake Caching

by Billy Tallis on April 24, 2017 12:00 PM EST

Intel's Caching History

Intel's first attempt at using solid-state memory for caching in consumer systems was the Intel Turbo Memory, a mini-PCIe card with 1GB of flash to be used by the then-new Windows Vista features ReadyDrive and ReadyBoost. Promoted as part of the Intel Centrino platform, Turbo Memory was more or less a complete failure. The cache it provided was far too small and too slow; sequential writes in particular were much slower than a hard drive. Applications were seldom significantly faster, though in systems short on RAM, Turbo Memory made swapping less painfully slow. Battery life could sometimes be extended by allowing the hard drive to spend more time spun down at idle. Overall, most OEMs were not interested in adding more than $100 to a system for Turbo Memory.

Intel's next attempt at caching came as SSDs were moving into the mainstream consumer market. The Z68 chipset for Sandy Bridge processors added Smart Response Technology (SRT), an SSD caching mode for Intel's Rapid Storage Technology (RST) drivers. SRT could be used with any SATA SSD, but cache sizes were limited to 64GB. Intel produced the SSD 311 and later SSD 313 with low capacity but relatively high-performance SLC NAND flash as caching-optimized SSDs. These SSDs started at $100 and had to compete against MLC SSDs that offered multiple times the capacity for the same price, enough that the MLC SSDs were starting to become reasonable options for general-purpose storage without any hard drive.

Smart Response Technology worked as advertised but was very unpopular with OEMs, and it didn't really catch on as an aftermarket upgrade among enthusiasts. The rapidly dropping prices and increasing capacities of SSDs made all-flash configurations more and more affordable, while SSD caching still required extra work to set up and small cache sizes meant heavy users would still frequently experience uncached application launches and file loads.

Intel's caching solution for Optane Memory is not simply a re-use of the existing Smart Response Technology caching feature of their Rapid Storage Technology drivers. It relies on the same NVMe remapping feature added to Skylake chipsets to support NVMe RAID, but the caching algorithms are tuned for Optane. The Optane Memory software can be downloaded and installed separately without including the rest of the RST features.

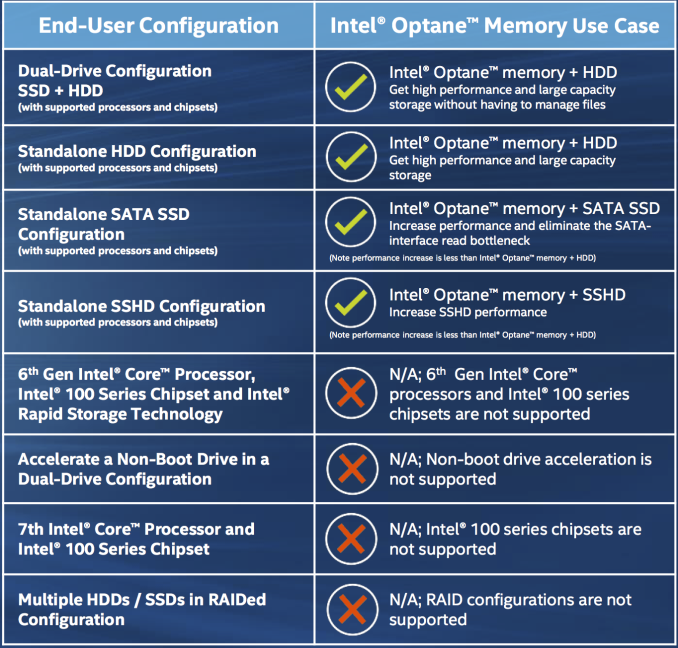

Optane Memory caching has quite a few restrictions: it is only supported with Kaby Lake processors and it requires a 200-series chipset or an HM175, QM175 or CM238 mobile chipset. Only Core i3, i5 and i7 processors are supported; Celeron and Pentium parts are excluded. Windows 10 64-bit is the only supported operating system. The Optane Memory module must be installed in an M.2 slot that connects to PCIe lanes provided by the chipset, and some motherboards will also have M.2 slots that do not support Optane caching or RST RAID. The drive being cached must be SATA, not NVMe, and only the boot volume can be cached. Lastly, the motherboard firmware must have Optane Memory support to boot the cached volume. Motherboards that have the necessary firmware features will feature a UEFI tool to unpair the Optane Memory cache device from the backing device being cached, but this can also be performed with the Windows software.
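
The restrictions listed above amount to a checklist, which can be sketched in a few lines of Python. This is only an illustration of the stated rules, not Intel's detection logic: `SystemConfig`, `unmet_requirements` and all field names are hypothetical, and the system description is assumed to be filled in by hand rather than queried from hardware.

```python
from dataclasses import dataclass
from typing import List

# Values transcribed from the restrictions listed above. All names here
# (SystemConfig, unmet_requirements, the field names) are hypothetical;
# this is not Intel's detection logic or any real API.
MOBILE_CHIPSETS = {"HM175", "QM175", "CM238"}
CPU_BRANDS = {"Core i3", "Core i5", "Core i7"}


@dataclass
class SystemConfig:
    cpu_family: str                     # e.g. "Kaby Lake"
    cpu_brand: str                      # e.g. "Core i5", "Pentium"
    chipset: str                        # e.g. "Z270", "HM175"
    chipset_is_200_series: bool
    operating_system: str               # e.g. "Windows 10 64-bit"
    m2_slot_on_chipset_lanes: bool
    backing_drive_interface: str        # "SATA" or "NVMe"
    backing_drive_is_boot_volume: bool
    firmware_supports_optane: bool


def unmet_requirements(cfg: SystemConfig) -> List[str]:
    """Return a description of each stated restriction that cfg violates."""
    problems = []
    if cfg.cpu_family != "Kaby Lake":
        problems.append("processor is not Kaby Lake")
    if cfg.cpu_brand not in CPU_BRANDS:
        problems.append("processor is not a Core i3, i5 or i7")
    if not (cfg.chipset_is_200_series or cfg.chipset in MOBILE_CHIPSETS):
        problems.append("chipset is not 200-series, HM175, QM175 or CM238")
    if cfg.operating_system != "Windows 10 64-bit":
        problems.append("operating system is not Windows 10 64-bit")
    if not cfg.m2_slot_on_chipset_lanes:
        problems.append("M.2 slot is not on chipset PCIe lanes")
    if cfg.backing_drive_interface != "SATA":
        problems.append("cached drive is not SATA")
    if not cfg.backing_drive_is_boot_volume:
        problems.append("cached drive is not the boot volume")
    if not cfg.firmware_supports_optane:
        problems.append("motherboard firmware lacks Optane Memory support")
    return problems


# A Kaby Lake desktop on a 200-series board caching a SATA boot drive.
desktop = SystemConfig("Kaby Lake", "Core i5", "Z270", True,
                       "Windows 10 64-bit", True, "SATA", True, True)
print(unmet_requirements(desktop))  # prints []
```

Returning the full list of failures rather than a single yes/no makes it obvious which of the restrictions a given build trips over.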

Many of these restrictions are arbitrary and software enforced. The only genuine hardware requirement seems to be a Skylake 100-series or later chipset. The release notes for the final production release of the Optane Memory and RST drivers even include in the list of fixed issues the removal of the ability to enable Optane caching with a non-Optane NVMe cache device, and the ability to turn on Optane caching with a Skylake processor in a 200-series motherboard. Don't be surprised if these drivers get hacked to provide Optane caching on any Skylake system that can do NVMe RAID with Intel RST.

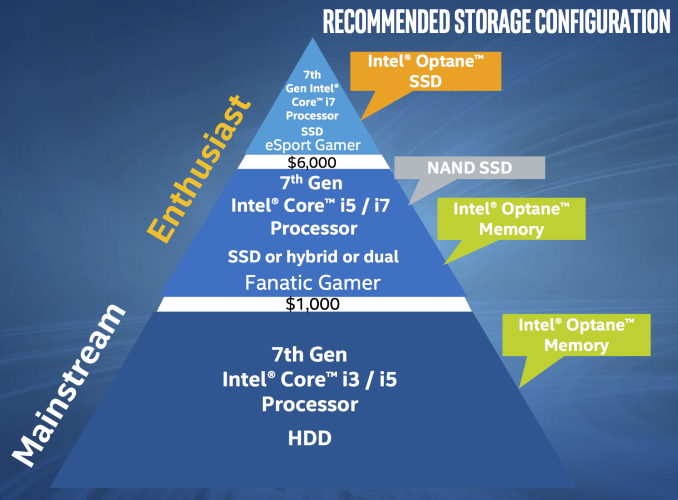

Intel's latest caching solution is not being pitched as a way of increasing performance in high-end systems; for that, they'll have full-size Optane SSDs for the prosumer market later this year. Instead, Optane Memory is intended to provide a boost for systems that still rely on a mechanical hard drive. It can be used to cache access to a SATA SSD or hybrid drive, but don't expect any OEMs to ship such a configuration—it won't be cost-effective. The goal of Optane Memory is to bring hard drive systems up to SSD levels of performance for a modest extra cost and without sacrificing total capacity.

110 Comments


Billy Tallis - Monday, April 24, 2017

I've been considering interactive graphs. I'm not sure how easily our current CMS would let me include external scripts like D3.js, and I definitely want to make sure it provides a usable fallback to a pre-rendered image if the scripts can't load. If you have suggestions for something that might be easy to integrate into my python/matplotlib workflow, shoot me an email.

And once I get the new 2017 consumer SSD test suite finished, I'll go back to having labeled bar charts for the primary scores, because that's the only easy way to compare across a large number of drives.

watzupken - Monday, April 24, 2017

I echo the conclusion that the cache is too little and too late. In a time where SSDs are becoming affordable as compared to the perhaps 5 years back, it makes little sense to fork out so much money for a puny 32gb cache along with other hardware requirements. It's fast, but it is not a full SSD.

menting - Monday, April 24, 2017

It's not aimed at replacing a SSD.

Morawka - Monday, April 24, 2017

has chipworks or anyone else figured out the material science behind this technology?

zeeBomb - Tuesday, April 25, 2017

Damn you guys killed the optane in a day

Ryan Smith - Tuesday, April 25, 2017

As is tradition.

The manufacturers work hard, but SSD firmware development and validation is hard. There are a lot of drives out there that are better off today because we broke them first.

Reflex - Tuesday, April 25, 2017

http://www.anandtech.com/show/9470/intel-and-micro...

I think people need to re-read this article. Going over it makes much of the disappointment seem a bit overdone. Intel spoke to the potential of the technology, they didn't promise it all in the first version. They also spoke to its long term potential, including being able to stack the die and potentially move higher bit levels. I think its fair to say this isn't a consumer level product yet, but to ship a brand new memory tech at production level that is significantly faster and higher endurance than alternatives, is a significant accomplishment. We have been suck for more than a decade with a '3-5 year' timetable on new memory technologies, perhaps this will get other players to actually ship something (I'm looking at you HP and your promise of memristers two years ago).

Reflex - Tuesday, April 25, 2017

Also, apparently typing comments at 11PM after a long day at the office isn't the best idea. Ignore my typos please. ;)

testbug00 - Tuesday, April 25, 2017

problem is Intel did not make this clear. Intel has now had multiple chances to clearly separate the potential of the technology from the first generation implementation. They choose not to take it.

This is slimy and disgusting.

The technology as a whole long term does indeed seem very promising, however.

Reflex - Tuesday, April 25, 2017

Couldn't you say that about any company that talks about an upcoming technology and its potential then restricts its launch to specific niches? Which is almost everyone when it comes to new technologies...