The Intel Optane Memory (SSD) Preview: 32GB of Kaby Lake Caching

by Billy Tallis on April 24, 2017 12:00 PM EST

Random Read

Random read speed is the most difficult performance metric for flash-based SSDs to improve. There is very limited opportunity for a drive to do useful prefetching or caching, and parallelism from multiple dies and channels can only help at higher queue depths. The NVMe protocol reduces overhead slightly, but even high-end enterprise PCIe SSDs can struggle to offer random read throughput that would saturate a SATA link.

Real-world random reads are often blocking operations for an application, such as when traversing the filesystem to look up which logical blocks store the contents of a file. Opening even a non-fragmented file can require the OS to perform a chain of several random reads, and since each depends on the result of the last, they cannot be queued.
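To illustrate why a dependent chain of reads cannot benefit from queuing, here is a minimal Python sketch. The function names and the idealised timing model (every read takes the same fixed latency, and a drive serves a full batch in one latency period) are assumptions made for illustration, not measurements from this review.

```python
def dependent_chain_time_us(num_reads, read_latency_us):
    """Each read needs the result of the previous one, so latencies add up."""
    return num_reads * read_latency_us


def independent_reads_time_us(num_reads, read_latency_us, queue_depth):
    """Independent reads can be issued in batches of `queue_depth`.

    Idealised assumption: the drive completes a whole batch in one
    latency period, which real drives only approximate.
    """
    batches = -(-num_reads // queue_depth)  # ceiling division
    return batches * read_latency_us


if __name__ == "__main__":
    # Hypothetical numbers: 4 reads at 100 us each.
    print(dependent_chain_time_us(4, 100))
    print(independent_reads_time_us(4, 100, queue_depth=4))
```

Under this model the dependent chain always runs at QD1 speed, which is why QD1 latency matters so much for interactive workloads.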

These tests were conducted on the Optane Memory as a standalone SSD, not in any caching configuration.

Queue Depth 1

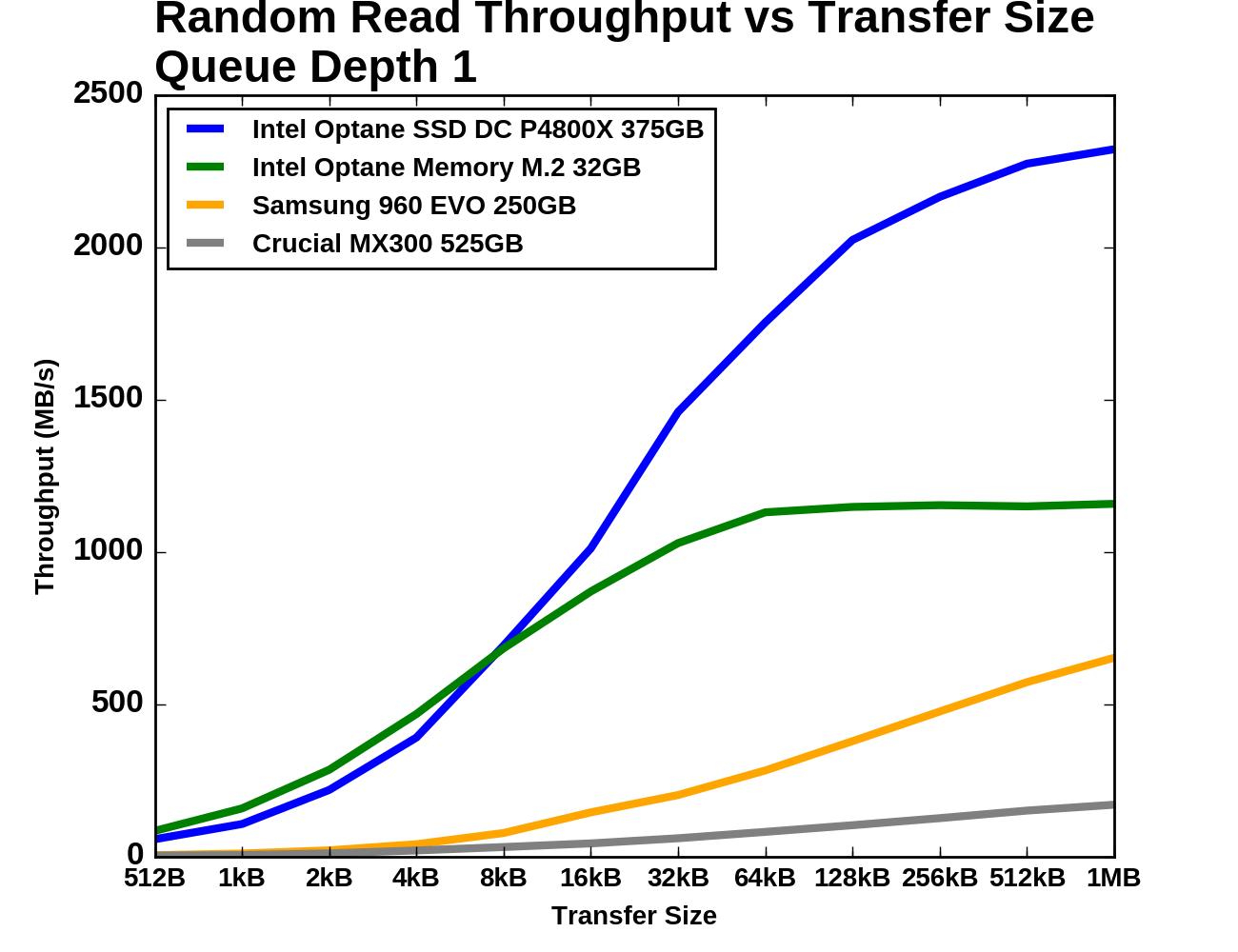

Our first test of random read performance looks at the dependence on transfer size. Most SSDs focus on 4kB random access as that is the most common page size for virtual memory systems and it is a common filesystem block size. For our test, each transfer size was tested for four minutes and the statistics exclude the first minute. The drives were preconditioned to steady state by filling them with 4kB random writes twice over.
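The timing rule described above (four minutes per transfer size, first minute excluded) can be sketched as a simple filter over timestamped samples. This is a hypothetical helper, not the tooling used for the review; the sample format `(seconds_since_start, value)` and the function name are assumptions.

```python
from statistics import mean


def steady_state_mean(samples, warmup_s=60.0, duration_s=240.0):
    """Average the values recorded after the warm-up window.

    `samples` is an iterable of (seconds_since_start, value) pairs.
    Samples before `warmup_s` or at/after `duration_s` are ignored.
    """
    kept = [value for t, value in samples if warmup_s <= t < duration_s]
    if not kept:
        raise ValueError("no samples after the warm-up window")
    return mean(kept)


if __name__ == "__main__":
    # Made-up samples: the first two fall inside the excluded first minute.
    demo = [(0, 10.0), (30, 20.0), (60, 100.0), (120, 200.0), (239, 300.0)]
    print(steady_state_mean(demo))
```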

The Optane Memory module manages to provide slightly higher performance than even the P4800X for small random reads, though it levels out at about half the performance for larger transfers. The Samsung 960 EVO starts out about ten times slower than the Optane Memory but narrows the gap in the second half of the test. The Crucial MX300 is behind the Optane Memory by more than a factor of ten through most of the test.

Queue Depth >1

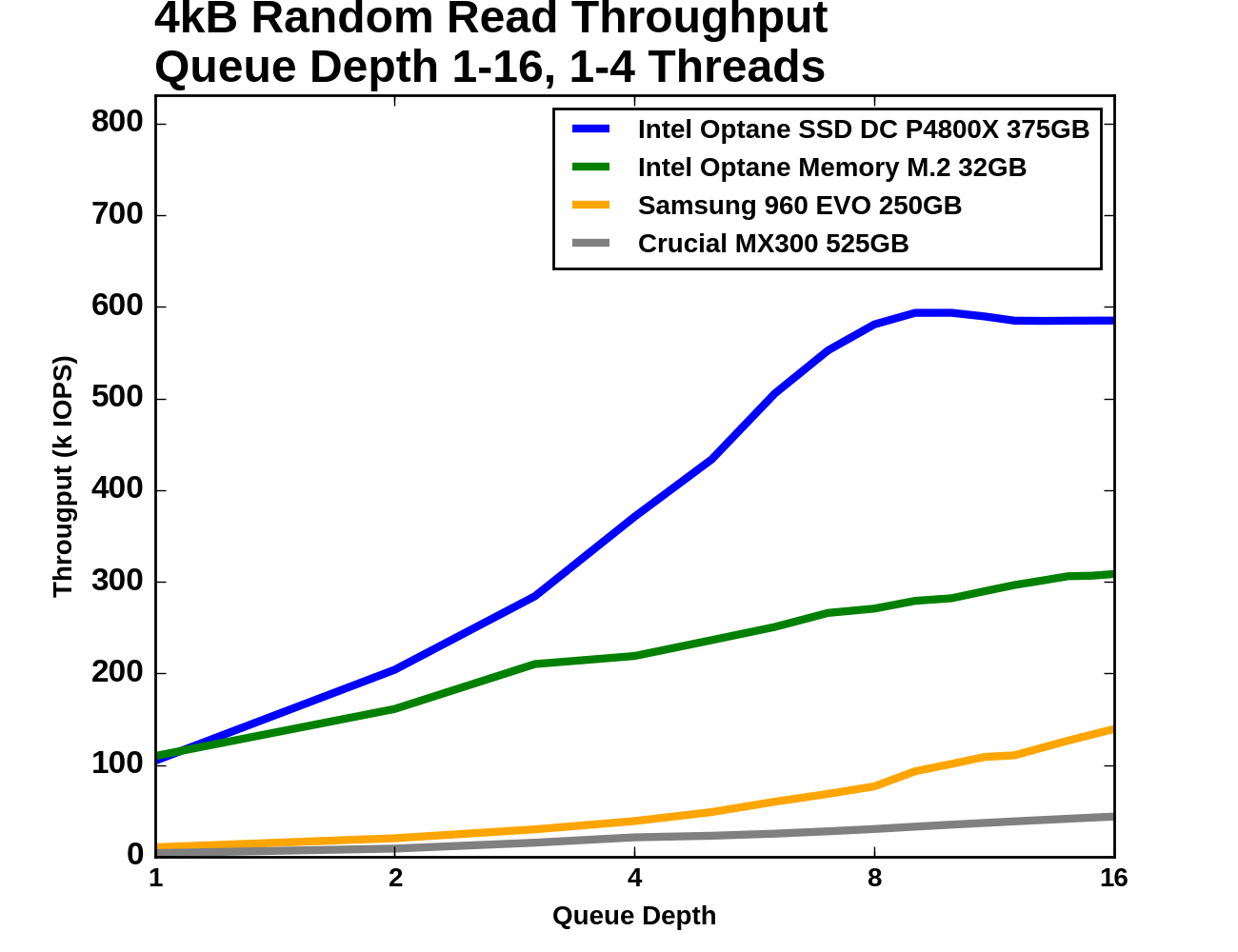

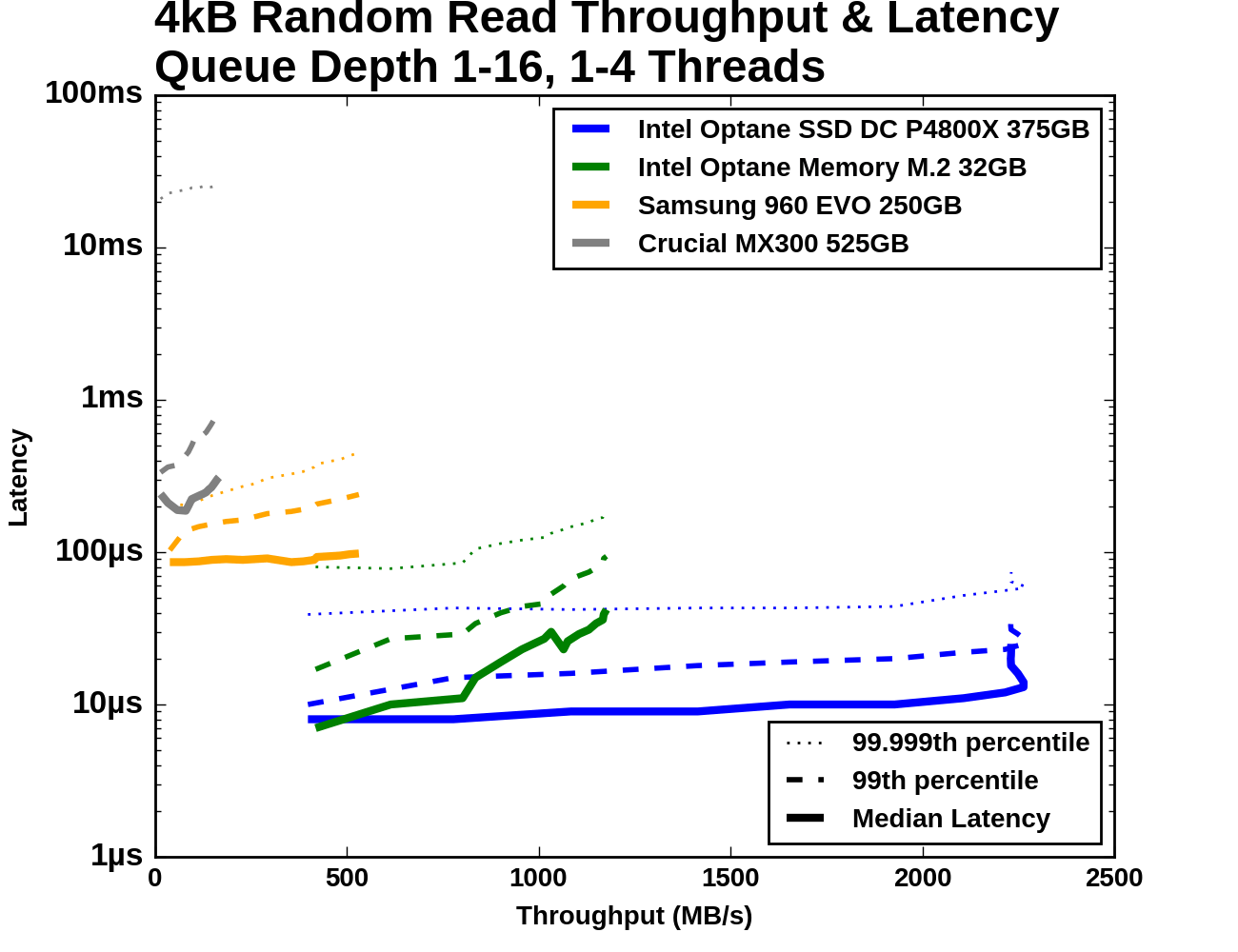

Next, we consider 4kB random read performance at queue depths greater than one. A single-threaded process is not capable of saturating the Optane SSD DC P4800X with random reads so this test is conducted with up to four threads. The queue depths of each thread are adjusted so that the queue depth seen by the SSD varies from 1 to 16. The timing is the same as for the other tests: four minutes for each tested queue depth, with the first minute excluded from the statistics.
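The text above does not say exactly how the total queue depth is divided among the threads. As one plausible scheme, the sketch below spreads a target queue depth as evenly as possible over at most four threads; the function name and the even-split policy are assumptions, not the review's documented method.

```python
def split_queue_depth(total_qd, max_threads=4):
    """Return per-thread queue depths that sum to `total_qd`.

    Assumed policy: use as many threads as possible (up to `max_threads`)
    and keep the per-thread depths within one of each other.
    """
    if total_qd < 1:
        raise ValueError("total queue depth must be at least 1")
    threads = min(total_qd, max_threads)
    base, extra = divmod(total_qd, threads)
    return [base + 1 if i < extra else base for i in range(threads)]


if __name__ == "__main__":
    for qd in (1, 2, 4, 8, 16):
        print(qd, split_queue_depth(qd))
```

Whatever the split, the drive only sees the sum, which is the queue depth reported on the charts.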

The SATA, flash NVMe and two Optane products are each clearly occupying different regimes of performance, though there is some overlap between the two Optane devices. Except at QD1, the Optane Memory offers lower throughput and higher latency than the P4800X. By QD16 the Samsung 960 EVO is able to exceed the throughput of the Optane Memory at QD1, but only with an order of magnitude more latency.

Comparing random read throughput of the Optane SSDs against the flash SSDs at low queue depths requires plotting on a log scale. The Optane Memory's lead over the Samsung 960 EVO is much larger than the 960 EVO's lead over the Crucial MX300. Even at QD16 the Optane Memory holds on to a 2x advantage over the 960 EVO and a 6x advantage over the MX300. Over the course of the test from QD1 to QD16, the Optane Memory's random read throughput roughly triples.

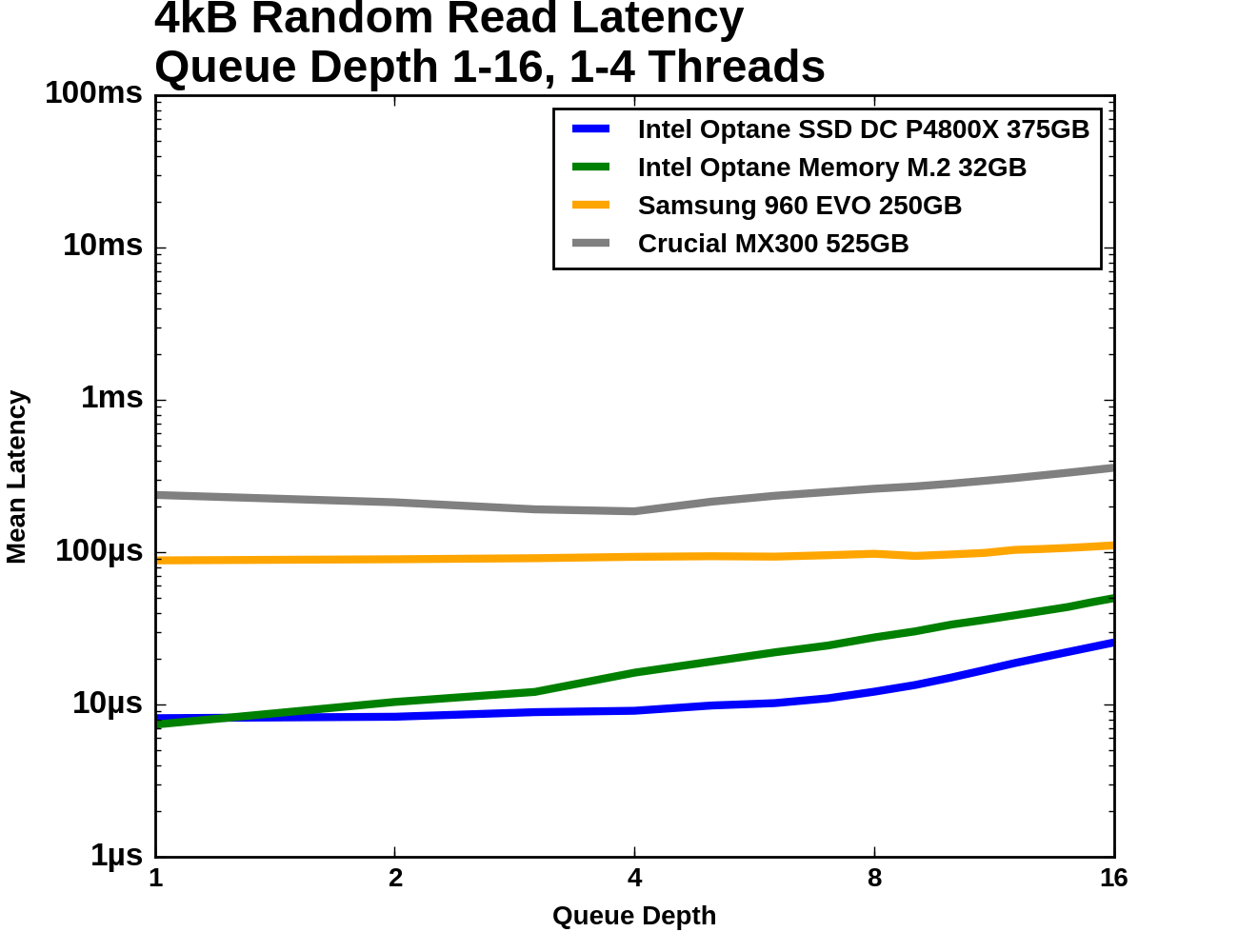

For mean and median random read latency, the two Optane drives are relatively close at low queue depths and far faster than either flash SSD. The 99th and 99.999th percentile latencies of the Samsung 960 EVO are only about twice as high as the Optane Memory while the Crucial MX300 falls further behind with outliers in excess of 20ms.
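For readers unfamiliar with the four latency statistics discussed here, the following sketch computes them from a list of per-I/O latencies using the nearest-rank percentile definition. The choice of nearest-rank and the function names are assumptions; the review does not state which percentile method its tooling uses.

```python
import math
from statistics import mean, median


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    # Round before taking the ceiling so float noise (e.g. 99999.00000000001)
    # does not push the rank up by one.
    rank = math.ceil(round(pct * len(ordered) / 100, 9))
    return ordered[rank - 1]


def latency_summary(latencies_us):
    """Mean, median, 99th and 99.999th percentile of the given latencies."""
    return {
        "mean": mean(latencies_us),
        "median": median(latencies_us),
        "p99": percentile_nearest_rank(latencies_us, 99),
        "p99.999": percentile_nearest_rank(latencies_us, 99.999),
    }
```

With this definition, the 99.999th percentile only differs from the maximum once there are at least 100,000 samples, which is one reason long test runs are needed to characterise tail latency.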

Random Write

Flash memory write operations are far slower than read operations. This is not always reflected in the performance specifications of SSDs because writes can be deferred and combined, allowing the SSD to signal completion before the data has actually moved from the drive's cache to the flash memory. Consumer workloads consist of far more reads than writes, but there are enough sources of random writes that they also matter to everyday interactive use. These tests were conducted on the Optane Memory as a standalone SSD, not in any caching configuration.

Queue Depth 1

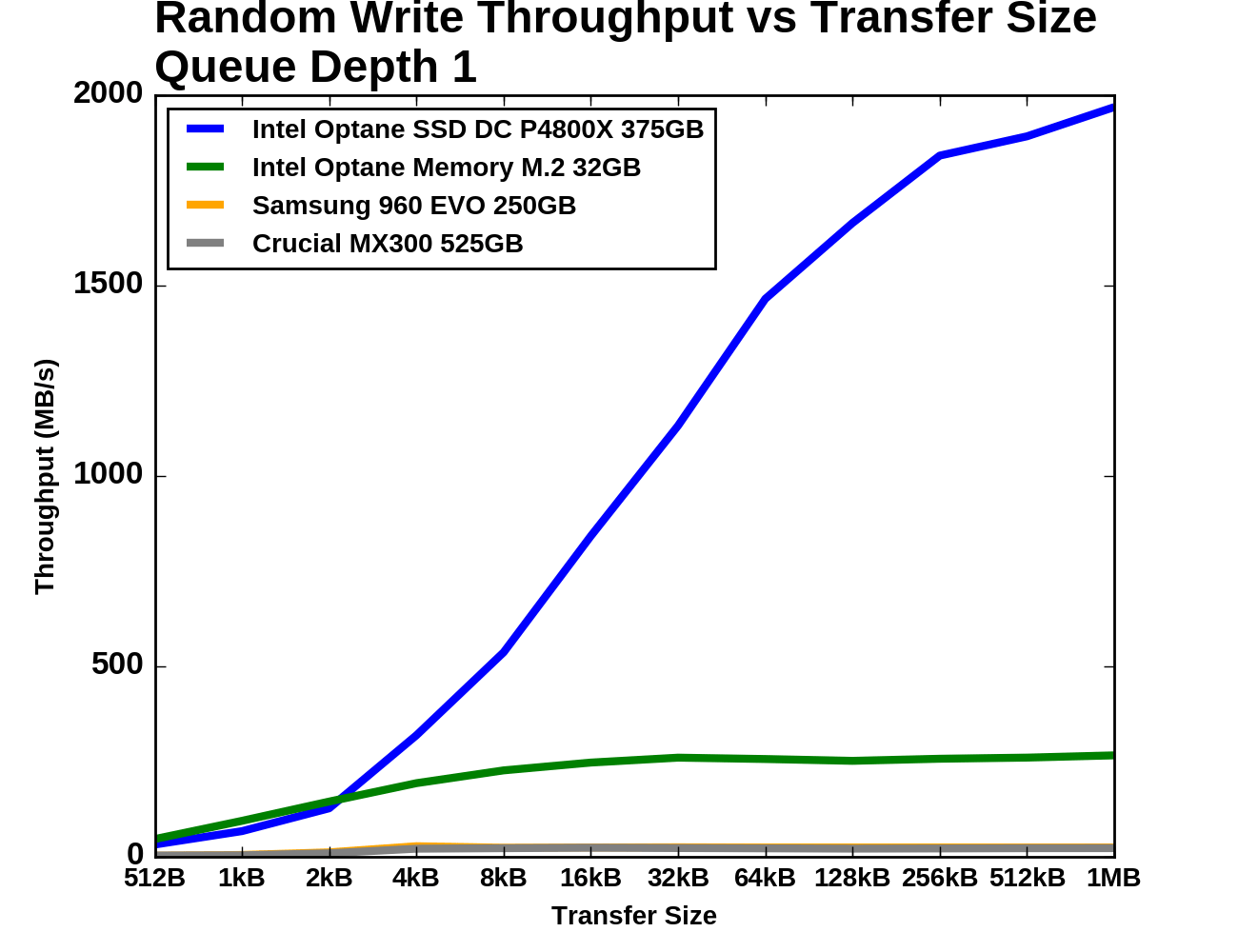

As with random reads, we first examine QD1 random write performance of different transfer sizes. 4kB is usually the most important size, but some applications will make smaller writes when the drive has a 512B sector size. Larger transfer sizes make the workload somewhat less random, reducing the amount of bookkeeping the SSD controller needs to do and generally allowing for increased performance.

As with random reads, the Optane Memory holds a slight advantage over the P4800X for the smallest transfer sizes, but the enterprise Optane drive completely blows away the consumer Optane Memory for larger transfers. The consumer flash SSDs perform quite similarly in this steady-state test and are consistently about an order of magnitude slower than the Optane Memory.

Queue Depth >1

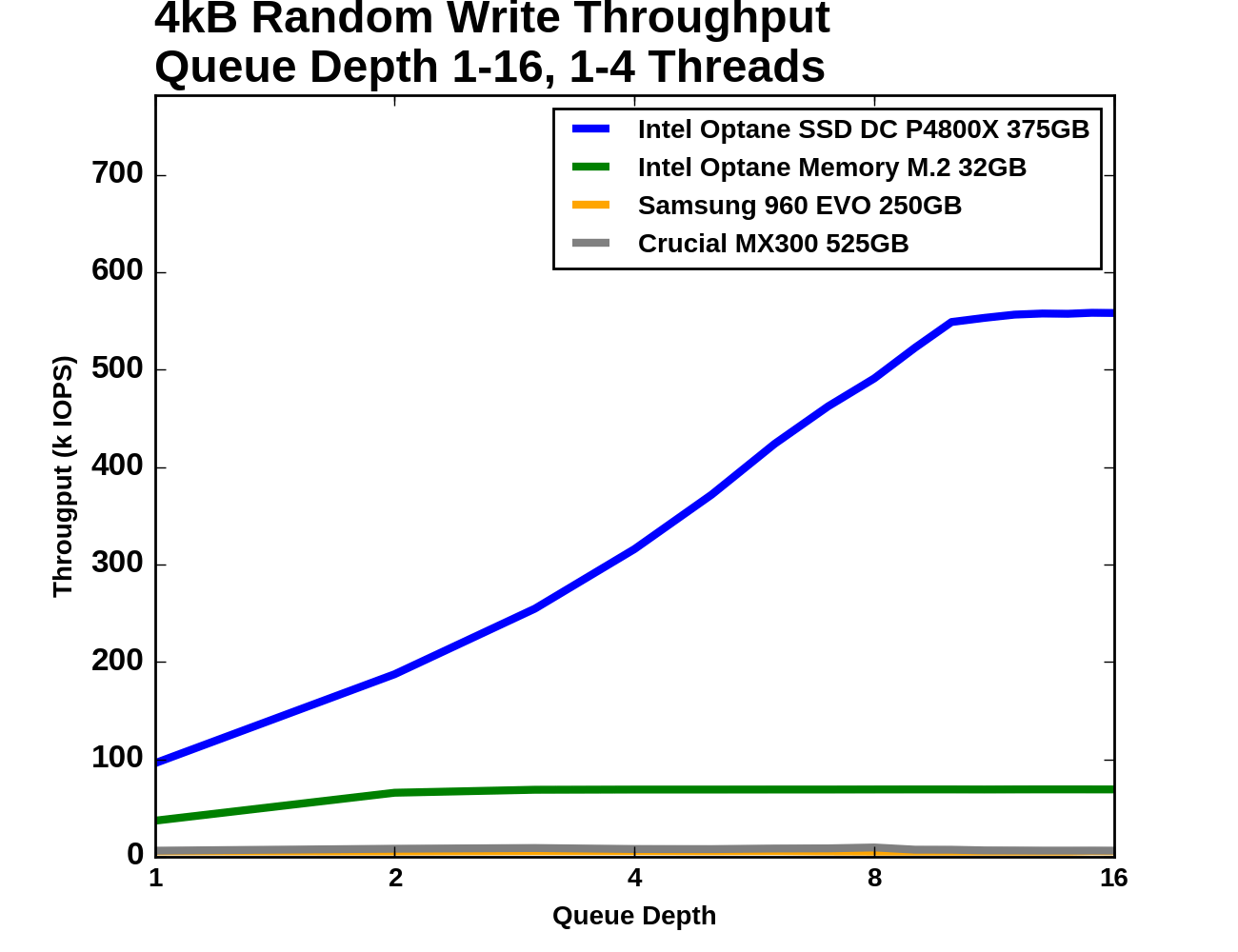

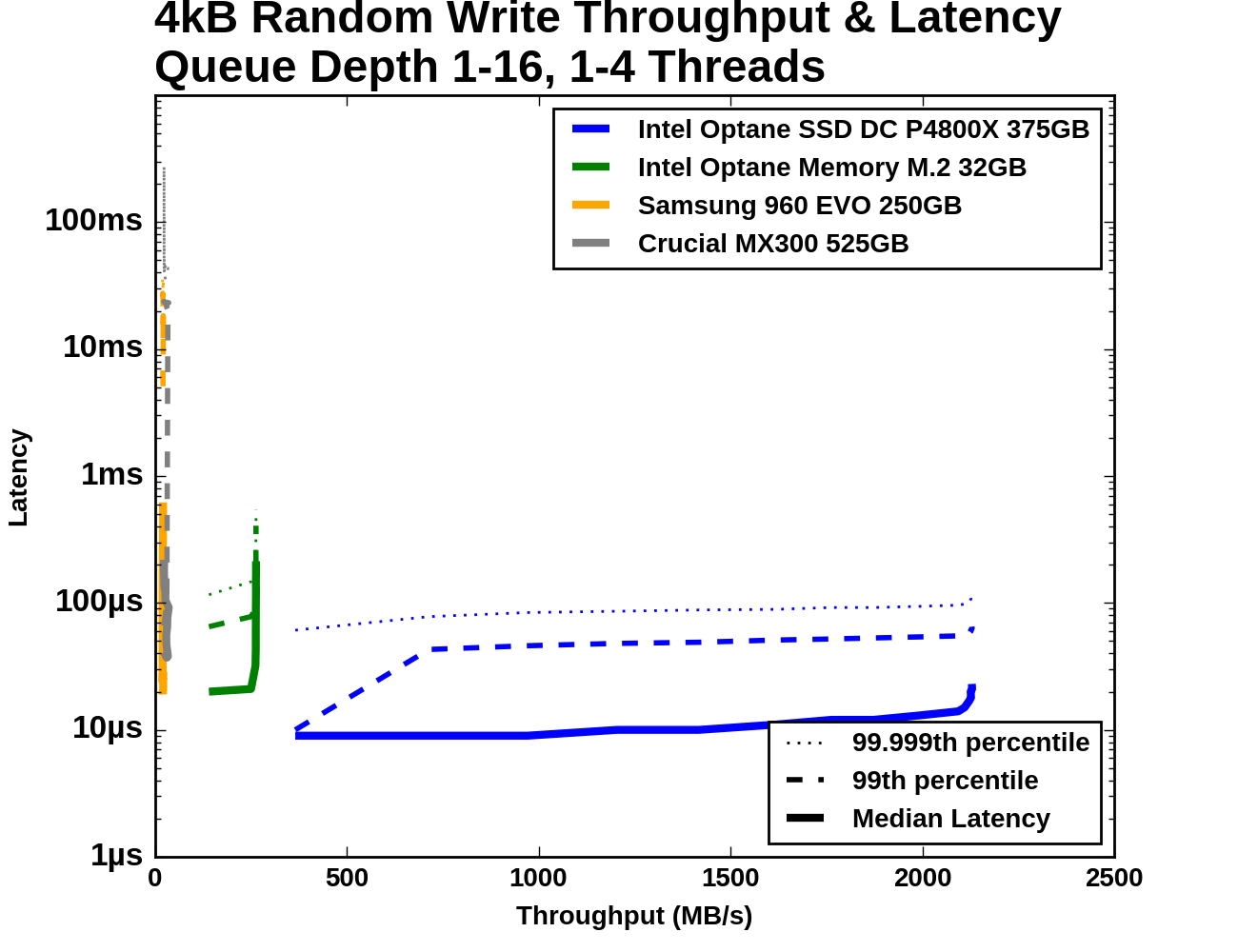

The test of 4kB random write throughput at different queue depths is structured identically to its counterpart random read test above. Queue depths from 1 to 16 are tested, with up to four threads used to generate this workload. Each tested queue depth is run for four minutes and the first minute is ignored when computing the statistics.

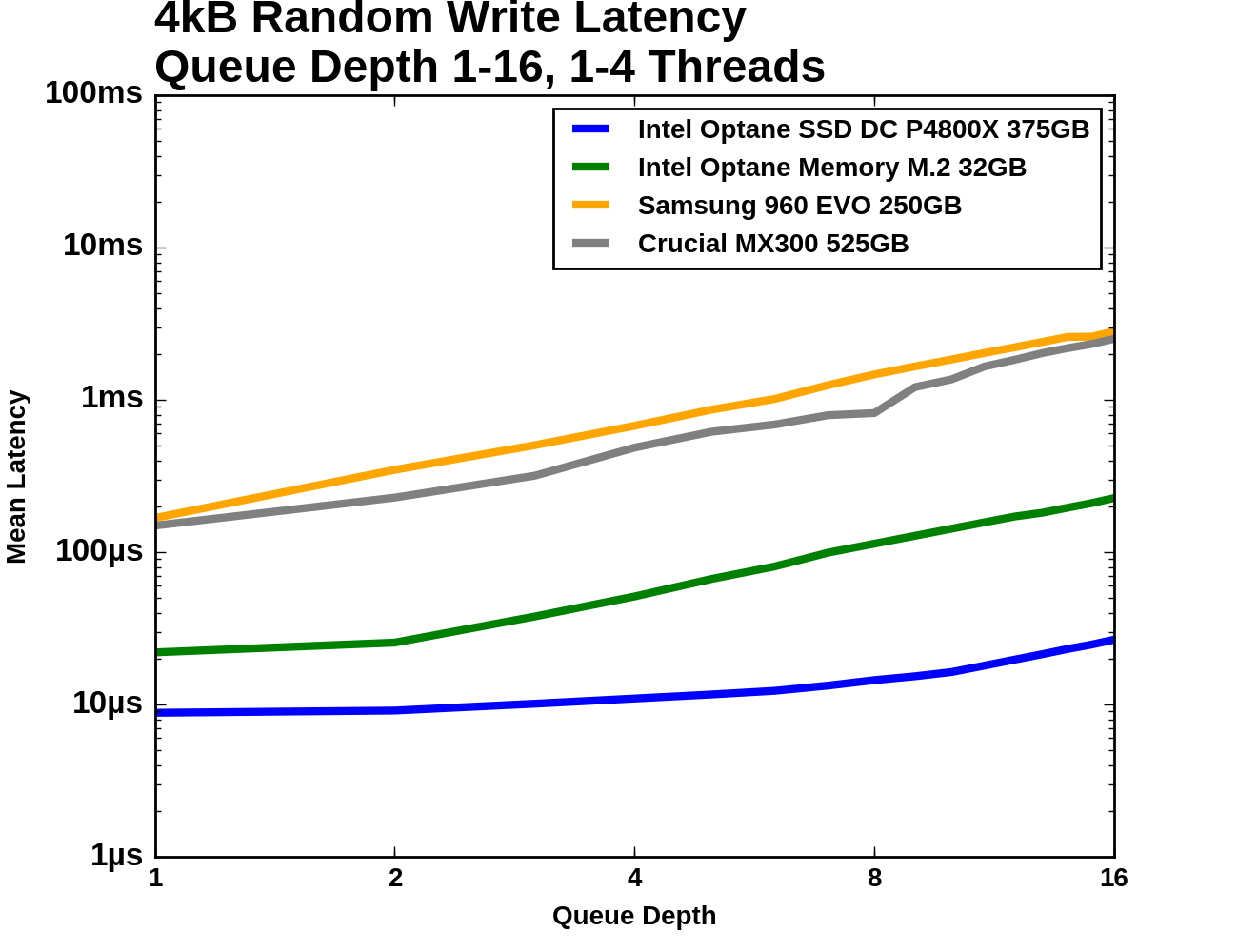

With the Optane SSD DC P4800X included on this graph, the two flash SSDs have barely perceptible random write throughput, and the Optane Memory's throughput and latency both fall roughly in the middle of the gap between the P4800X and the flash SSDs. The random write latency of the Optane Memory is more than twice that of the P4800X at QD1 and is close to the latency of the Samsung 960 EVO, while the Crucial MX300 starts at about twice that latency.

When testing across the range of queue depths and at steady state, the 525GB Crucial MX300 always delivers higher throughput than the Samsung 960 EVO, but with substantial inconsistency at higher queue depths. The Optane Memory almost doubles in throughput from QD1 to QD2 and is completely flat thereafter, while the P4800X continues to improve until QD8.

The Optane Memory and Samsung 960 EVO start out with the same median latency at QD1 and QD2 of about 20µs. The Optane Memory's latency increases linearly with queue depth after that due to its throughput being saturated, but the 960 EVO's latency stays lower until near the end of the test. The Samsung 960 EVO has relatively poor 99th percentile latency to begin with and is joined by the Crucial MX300 once it has saturated its throughput, while the Optane Memory's latency degrades gradually in the face of overwhelming queue depths. The 99.999th percentile latency of the flash-based consumer SSDs is about 300-400 times that of the Optane Memory.
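The linear growth in latency once throughput is saturated follows from Little's law: average latency equals the number of outstanding I/Os divided by throughput. The sketch below applies that relation. The 100,000 IOPS figure is an illustrative assumption chosen so that QD2 yields the roughly 20µs mentioned above; it is not a measured value from the charts, and Little's law strictly describes mean rather than median latency.

```python
def saturated_latency_us(queue_depth, iops):
    """Little's law: mean latency = outstanding I/Os / throughput."""
    if iops <= 0:
        raise ValueError("iops must be positive")
    return queue_depth * 1_000_000 / iops


if __name__ == "__main__":
    ASSUMED_IOPS = 100_000  # illustrative saturation point, not a measurement
    for qd in (2, 4, 8, 16):
        print(qd, saturated_latency_us(qd, ASSUMED_IOPS))
```

With throughput fixed, each doubling of queue depth doubles the latency, since the extra requests simply wait in line.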

110 Comments

ddriver - Tuesday, April 25, 2017 - link

Yeah, daring intel, the pioneer, taking mankind to better places.

Oh wait, that's right, it is actually a greedy monopoly that has mercilessly milked people while making nothing aside from barely incremental stuff for years and through its anti-competitive practices has actually held progress back tremendously.

As I already mentioned above, the last time "intel dared to innovate" that resulted in netburst. Which was so bad that in order to save the day intel had to... do what? Innovate once again? Nope, god forbid, what they did was go back and improve on the good design they had and scrapped in their futile attempts to innovate.

And as I already mentioned above, all the secrecy behind xpoint might be exactly because it is NOTHING innovative, but something old and forgotten, just slightly improved.

Reflex - Tuesday, April 25, 2017 - link

Axe is looking pretty worn down from all that grinding....

ddriver - Wednesday, April 26, 2017 - link

Also, unlike you, I don't let personal preferences cloud my objectivity. If a product is good, even if made by the most wretched corporation out there, it is not a bad product just because of who makes it, it is still a good product, still made by a wretched corporation.

Even if intel wasn't a lousy bloated lazy greedy monopolist, hypetane would still suck, because it isn't anywhere near the "1000x" improvements they promised. It would suck even if intel was a charity that fed the starving in the 3rd world.

I would have had ZERO objections to hypetane, and also wouldn't call it hypetane to begin with, if intel, the spoiled greedy monopolist was still decent enough to not SHAMELESSLY LIE ABOUT IT.

Had they just said "10x better latency, 4x better low depth queue performance" and stuff like that, I'd be like "well, it's ok, it is faster than nand, you delivered what you promised."

But they didn't. They lied, and lied, and now that it is clear that they lied, they keep on lying and smearing with biased reviews in unrealistic workloads.

What kind of an idiot would ever approve of that?

fallaha56 - Tuesday, April 25, 2017 - link

OMG when our product wasn't as good as we said it was we didn't own-up about it

and maybe you test against HDD (like Intel) but the rest of us are already packing SSDs

philehidiot - Saturday, April 29, 2017 - link

This is what companies do. Your technology is useless unless you can market it. And you don't market anything by saying it's mediocre. Look at BP's high octane fuel which supposedly cleans your engine and gets better fuel efficiency. The ONLY thing that higher octane fuel does is resist auto-ignition under compression better and thus certain high performance engines require it. As for cleaning your engine - you're telling me you've got a solvent which is better at cutting through crap than petrol AND can survive the massive temperatures and pressures inside the combustion chamber? It's the petrol which scrubs off the crap so yes, it's technically true. They might throw an additive or two in there but that will only help pre-combustion chamber and if you actually have a problem. And yes, in certain, newer cars with certain sensors you will get SLIGHTLY higher MPG and therefore they advertise the maximum you'll get under ideal conditions because no one will buy into it if you're realistic about the gains. The gains will never offset the extra cost of the fuel, however.

PC marketing is exactly the same and why the J Micron controller was such a disaster so many years ago. They went for advertised high sequential throughput numbers being as high as possible and destroyed the random performance, Anand spotted it and OCZ threw a wobbler. But that experience led to drives being advertised on random performance as well as sequential.

So what's the lesson here? We should always take manufacturer's claims with a mouthful of salt and buy based on objective criteria and independent measurements. Manufacturers will always state what is achievable in basically a lab set up with conditions controlled to perfection. Why? Because for one you can't quote numbers based on real life performance because everyone's experience will differ and you can't account for the different variables they'll experience. And for two, if everyone else is quoting the maximum theoretical potential, you're immediately putting yourself at a disadvantage by not doing so yourself. It's not about your product, it's about how well you can sell it to a customer - see: Stupidly expensive Dyson Hairdryer. Provides no real performance benefit over a cheap hairdryer but cost a lot in R&D and is mostly advertising wank for rich people with small brains.

As for Intel being a greedy monopoly... welcome to capitalism. If you don't want that side effect of the system then bugger off to Cuba. Capitalism has brought society to the highest standard of living ever seen on this planet. No other form of economic operation has allowed so many to have so much. But the result is big companies like Intel, Google, Apple, etc, etc.

Advertising wank is just that. Figures to masturbate over. If they didn't do it then sites like Anandtech wouldn't need to exist as products would always be accurately described by the manufacturer and placed honestly within the market and so reviews wouldn't be required.

I doubt they lied completely - they will be going on the theoretical limits of their technology when all engineering limitations are removed. This will never happen in practice and will certainly never happen in a gen 1 product. Also, whilst I see this product as being pointless, it's obviously just a toe dipping exercise like the enterprise model. Small scale, very controlled use cases and therefore good real world use data to be returned for gen 2/3.

Personally, whilst I'm wowed by the figures, I don't see how they're going to improve things for me. So what's the point in a different technology when SLC can probably perform just as well? It's a different development path which will encounter different limitations and as a result will provide different advantages further down the road. Why do they continue to build coal fired power stations when we have CCGTs, wind, solar, nukes, etc? Because each technology has its strengths and weaknesses and encounters different engineering limitations in development. Plus a plurality of different, competing technologies is always better as it creates progress. You can't whinge about monopolies and then when someone starts doing something different and competing with the established norm start whinging about that.

fallaha56 - Tuesday, April 25, 2017 - link

hi @sarah i find that a dead hard drive also plays into responsiveness and boot times(!)

this technology is clearly not anywhere near as good as Intel implied it was

CaedenV - Monday, April 24, 2017 - link

I have never once had an SSD fail because it has over-used its flash memory... but controllers die all the time. It seems that this will remain true for this as well.

Ryan Smith - Tuesday, April 25, 2017 - link

And that's exactly what we're suspecting here. We've likely managed to hit a bug in the controller's firmware. Which to be sure, isn't fantastic, but it can be fixed.

Prior to the P3700's launch, Intel sent us 4 samples specifically for stress testing. We managed to disable every last one of them. However Intel learned from our abuse, and now those same P3700s are rock-solid thanks to better firmware and drivers.

jimjamjamie - Tuesday, April 25, 2017 - link

Interesting that an ad-supported website can stress-test better than a multi-billion dollar company..

testbug00 - Tuesday, April 25, 2017 - link

based on what? Have they sent you another model?

A sample dying on day one, and only allowing testing via remote server doesn't confidence build.