The Intel Optane SSD DC P4800X (375GB) Review: Testing 3D XPoint Performance

by Billy Tallis on April 20, 2017 12:00 PM EST

Sequential Read

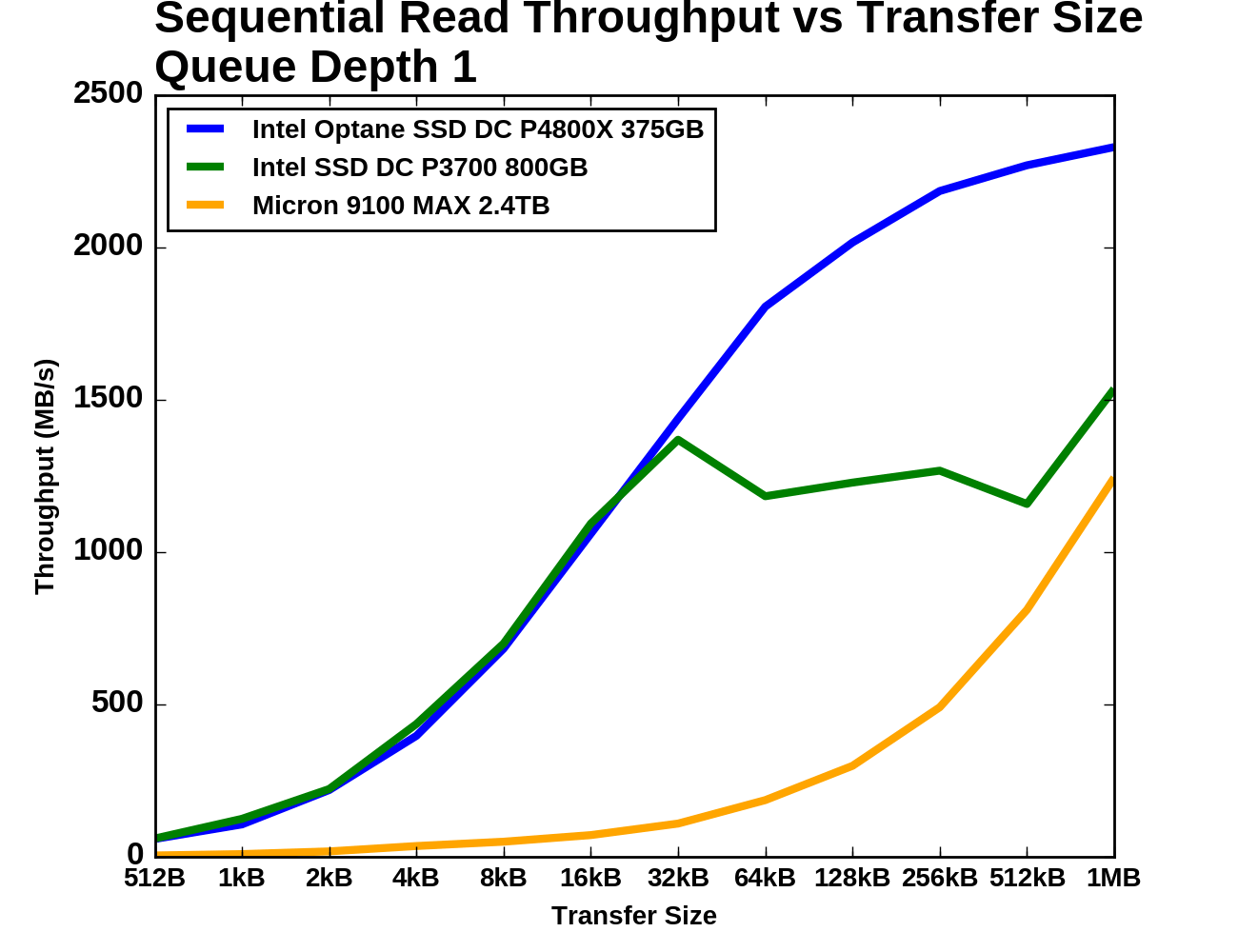

Intel provides no specifications for sequential access performance of the Optane SSD DC P4800X. Buying an Optane SSD for a mostly sequential workload would make very little sense given that sufficiently large flash-based SSDs or RAID arrays can offer plenty of sequential throughput. Nonetheless, it will be interesting to see how much faster the Optane SSD is with sequential transfers instead of random access.

Sequential access is usually tested with 128kB transfers, but this is more of an industry convention and is not based on any workload trend as strong as the tendency for random I/Os to be 4kB. The point of picking a size like 128kB is to have transfers be large enough that they can be striped across multiple controller channels and still involve writing a full page or more to the flash on each channel. Real-world sequential transfer sizes vary widely depending on factors like which application is moving the data or how fragmented the filesystem is.
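
To make the striping argument concrete, here is a minimal Python sketch. The channel count and page size are hypothetical values chosen for illustration; they are not specifications of any drive in this review.

```python
KIB = 1024

def stripe_chunks(transfer_bytes, channels, page_bytes):
    """Split one sequential transfer evenly across flash channels."""
    per_channel = transfer_bytes // channels
    return {
        "per_channel_bytes": per_channel,
        # True when every channel receives at least one full flash page
        "fills_a_page": per_channel >= page_bytes,
    }

# Hypothetical geometry: 8 channels with 16 KiB pages (assumed, not from a datasheet).
for size_kib in (4, 32, 128, 512):
    info = stripe_chunks(size_kib * KIB, channels=8, page_bytes=16 * KIB)
    print(f"{size_kib:>3} KiB transfer: {info['per_channel_bytes']} bytes per channel, "
          f"full page per channel: {info['fills_a_page']}")
```

Under these assumed numbers, a 128kB transfer is the smallest power-of-two size that gives every channel a full page, which is the kind of reasoning behind the convention.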

Even though its 3D XPoint memory lacks a large native page size, we expect the Optane SSD DC P4800X to perform well with larger transfers. A large transfer requires the controller to process fewer operations for the same amount of user data, and fewer operations mean less protocol overhead on the wire. Based on the random access tests, it appears that the Optane SSD is internally managing the 3D XPoint memory in a way that greatly benefits from transfers being at least 4kB even though the drive emulates a 512B sector size out of the box.
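
The effect of transfer size on command count is simple arithmetic. The sketch below, with an arbitrary 2GB/s target picked purely for illustration, shows how many commands per second a drive would have to complete to sustain that rate at several transfer sizes.

```python
def commands_per_second(throughput_bytes_per_s, transfer_bytes):
    """Commands the controller must complete each second to sustain a throughput."""
    return throughput_bytes_per_s / transfer_bytes

TARGET = 2e9  # 2 GB/s, an illustrative target rather than a measured result

for transfer_bytes in (512, 4096, 32768, 131072):
    rate = commands_per_second(TARGET, transfer_bytes)
    print(f"{transfer_bytes:>6} B transfers: {rate:,.0f} commands/s")
```

Moving from 4kB to 128kB transfers cuts the number of commands needed for the same throughput by a factor of 32.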

The drives were preconditioned by filling them twice with 4kB random writes, so the data on each drive is entirely fragmented. This may limit how much prefetching of user data the drives can perform during the sequential read tests, but they can likely still benefit from better locality of access to their internal mapping tables.

Queue Depth 1

The test of sequential read performance at different transfer sizes was conducted at queue depth 1. Each transfer size was used for four minutes, and the throughput was averaged over the final three minutes of each test segment.
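
As a rough illustration of that averaging, the sketch below discards a warm-up window and averages what remains. The one-second sampling interval and the function name are assumptions made for this example; the actual logging setup is not described here.

```python
def steady_state_mean(samples_mb_s, sample_interval_s=1.0, warmup_s=60.0):
    """Average throughput samples after discarding the warm-up period."""
    skip = int(warmup_s / sample_interval_s)
    steady = samples_mb_s[skip:]
    if not steady:
        raise ValueError("no samples left after discarding warm-up")
    return sum(steady) / len(steady)

# Synthetic four-minute segment: a slow first minute, then steady state.
segment = [1000.0] * 60 + [2000.0] * 180
print(steady_state_mean(segment))
```

Dropping the first minute keeps any start-up transient out of the reported figure.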

For transfer sizes up to 32kB, both Intel drives deliver similar sequential read speeds. Beyond 32kB the P3700 appears to be saturated but also highly inconsistent. The Micron 9100 is plodding along with very low but steadily growing speeds, and by the end of the test it has almost caught up with the Intel P3700. The 9100 is at least ten times slower than the Optane SSD until the transfer size reaches 64kB. The Optane SSD passes 2GB/s with 128kB transfers and finishes the test at 2.3GB/s.

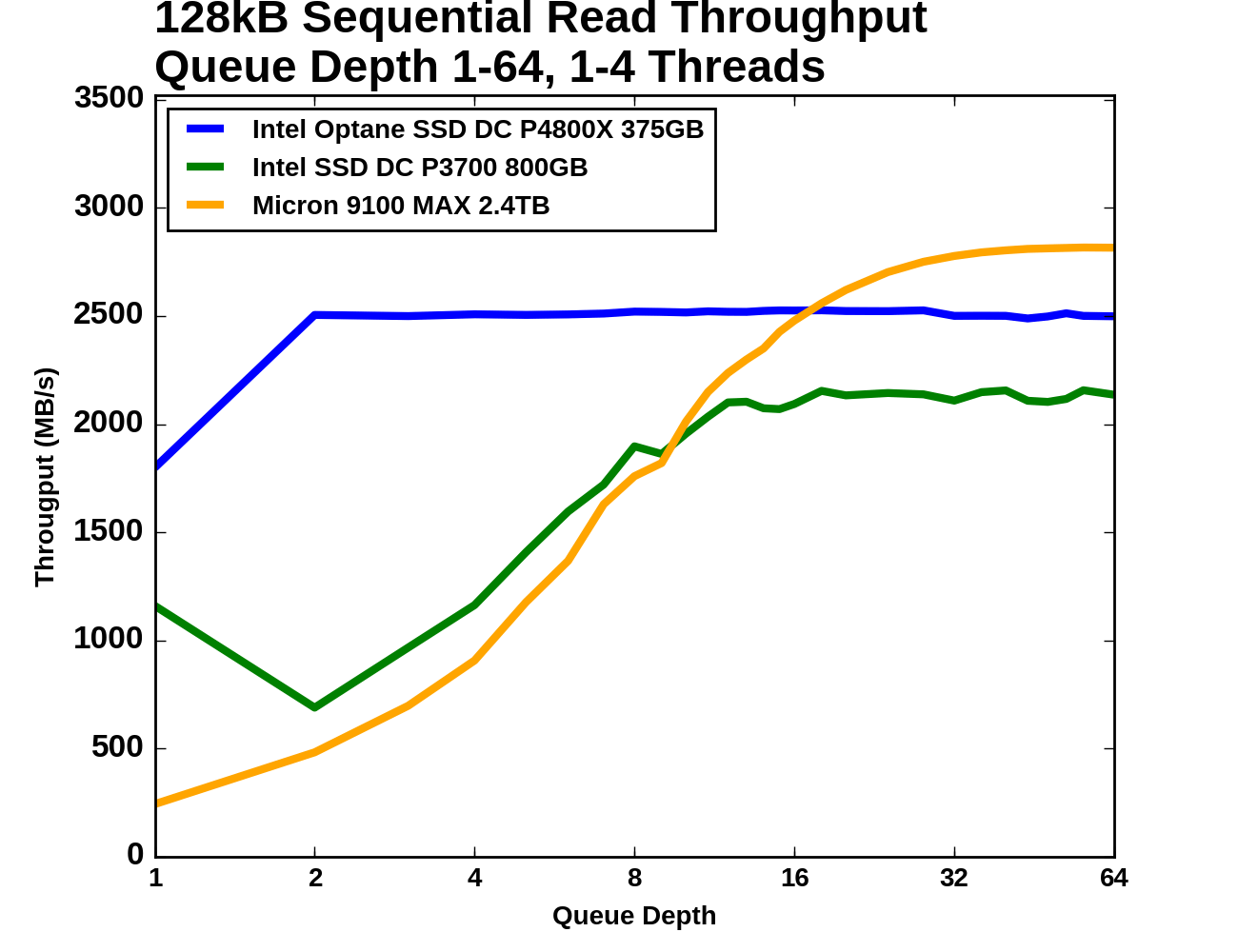

Queue Depth > 1

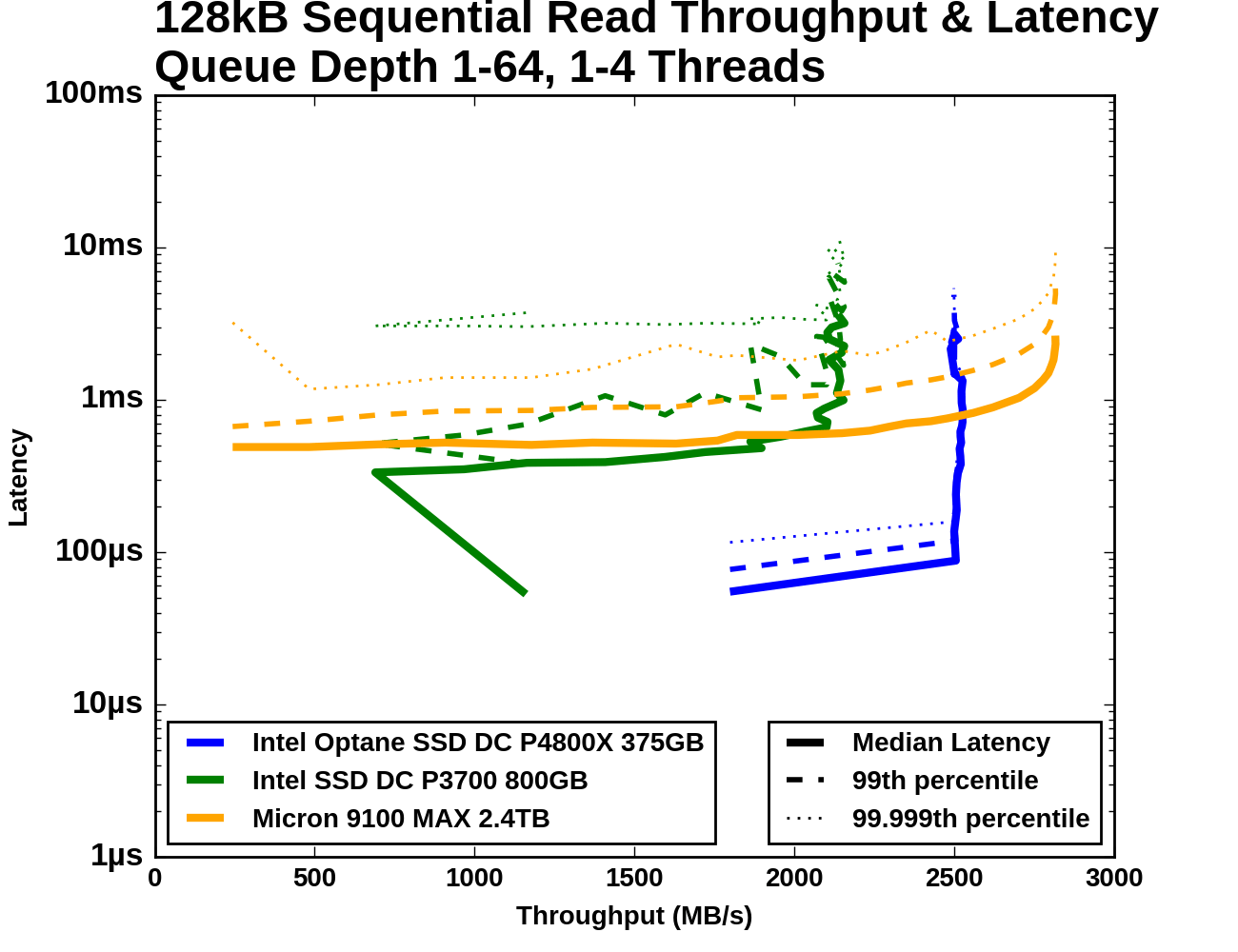

For testing sequential read speeds at different queue depths, we use the same overall test structure as for random reads: total queue depths of up to 64 are tested using a maximum of four threads. Each thread is reading sequentially but from a different region of the drive, so the read commands the drive receives are not entirely sorted by logical block address.
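
A test step of this shape could be expressed as a fio invocation along the following lines. This is a sketch under stated assumptions, not the command actually used: the even split of queue depth across workers, the device path, the 375GB capacity figure, the 128kB block size and the specific fio options are all illustrative choices. The script only builds and prints the command; it does not run fio.

```python
import shlex

def split_queue_depth(total_qd, max_threads=4):
    """Spread a total queue depth as evenly as possible over at most max_threads workers."""
    threads = min(total_qd, max_threads)
    base, extra = divmod(total_qd, threads)
    return [base + 1 if i < extra else base for i in range(threads)]

def fio_command(device, device_bytes, total_qd, rw="read", block_size="128k"):
    """Build (but do not run) a fio command line for one queue-depth step."""
    depths = split_queue_depth(total_qd)
    align = 1024 * 1024  # keep region boundaries 1 MiB-aligned for direct I/O
    region = (device_bytes // len(depths)) // align * align
    args = [
        "fio", "--name=global", f"--filename={device}", f"--rw={rw}",
        f"--bs={block_size}", "--ioengine=libaio", "--direct=1",
        # fio adds ramp_time on top of runtime: 60 s ignored + 180 s measured
        "--time_based", "--ramp_time=60", "--runtime=180", "--group_reporting",
    ]
    for i, depth in enumerate(depths):
        # Each worker streams sequentially through its own slice of the drive.
        args += [f"--name=worker{i}", f"--offset={i * region}",
                 f"--size={region}", f"--iodepth={depth}"]
    return args

# /dev/nvme0n1 and the capacity are placeholders for illustration.
print(shlex.join(fio_command("/dev/nvme0n1", 375 * 10**9, total_qd=6)))
```

Because each worker starts at a different offset, the drive sees several interleaved sequential streams rather than one perfectly ordered one.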

The Optane SSD DC P4800X starts out with a far higher QD1 sequential read speed than either flash SSD can deliver. The Optane SSD's median latency at QD1 is not significantly better than what the Intel P3700 delivers, but the P3700's 99th and 99.999th percentile latencies are at least an order of magnitude worse. Beyond QD1, the Optane SSD saturates while the Intel P3700 takes a temporary hit to throughput and a permanent hit to latency. The Micron 9100 starts out with low throughput and fairly high latency, but with increasing queue depth it manages to eventually surpass the Optane SSD's maximum throughput, albeit with ten times the latency.

The Intel Optane SSD DC P4800X starts this test at 1.8GB/s for QD1, and delivers 2.5GB/s at all higher queue depths. The Intel P3700 performs significantly worse when a second QD1 thread is introduced, but by the time there are four threads reading from the drive the total throughput has recovered. The Intel P3700 saturates a little past QD8, which is where the Micron 9100 passes it. The Micron 9100 then goes on to surpass the Optane SSD's throughput above QD16, but it too has saturated by QD64.

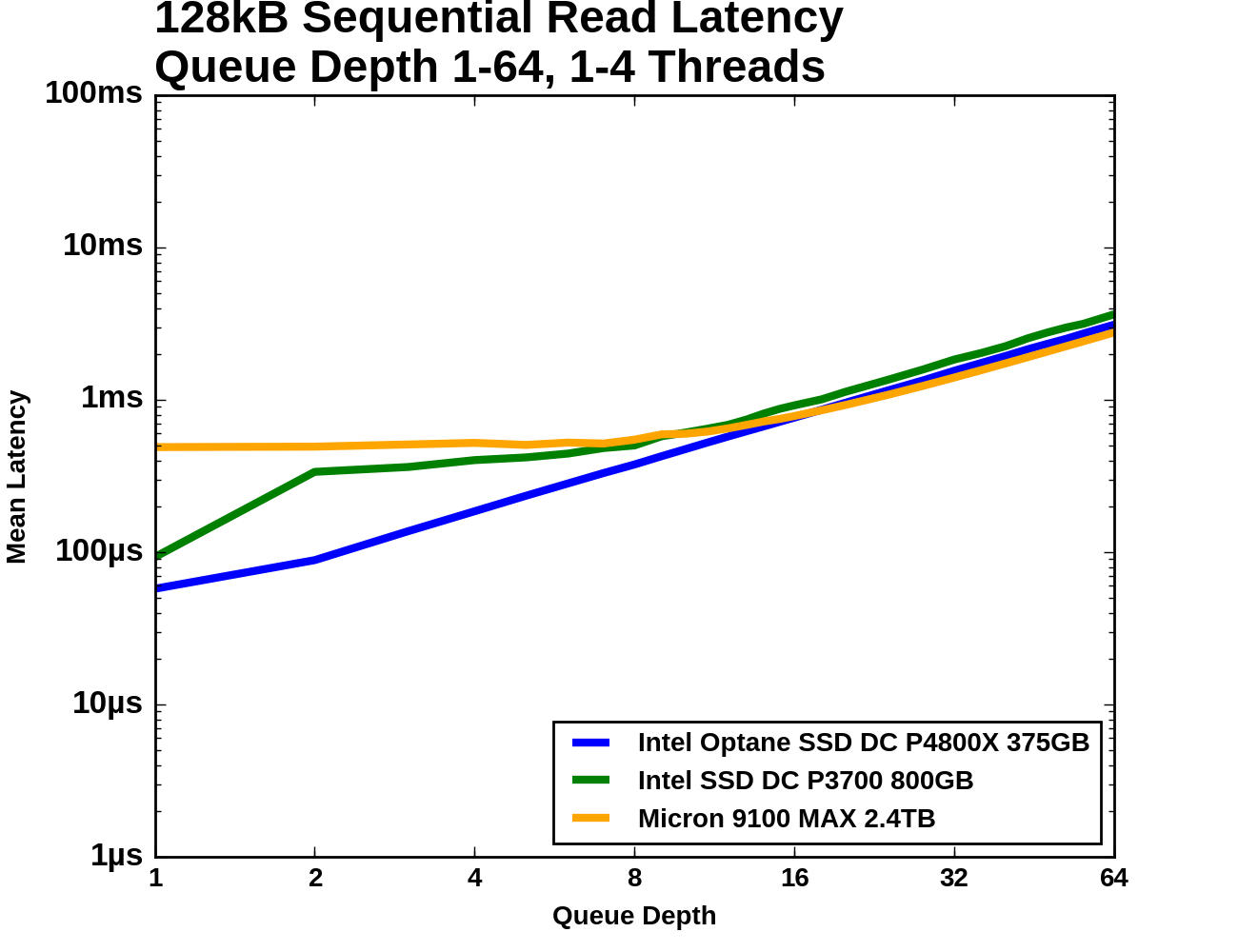

The Optane SSD's latency increases modestly from QD1 to QD2, and then unavoidably increases linearly with queue depth due to the drive being saturated and unable to offer any better throughput. The Micron 9100 starts out with almost ten times the average latency, but is able to hold that mostly constant as it picks up most of its throughput. Once the 9100 passes the Optane SSD in throughput it is delivering slightly better average latency, but substantially higher 99th and 99.999th percentile latencies. The Intel P3700's 99.999th percentile latency is the worst of the three across almost all queue depths, and its 99th percentile latency is only better than the Micron 9100's during the early portions of the test.
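
The linear growth follows from Little's law: once throughput is pinned at its ceiling, mean latency equals the number of outstanding commands divided by the completion rate. The sketch below assumes 128kB transfers (the size used for the queue depth tests in the sequential write section) and the 2.5GB/s plateau reported above; it is a back-of-the-envelope model, not a reproduction of the measured latencies.

```python
def saturated_mean_latency_us(queue_depth, transfer_bytes, throughput_bytes_per_s):
    """Mean latency implied by Little's law once throughput has stopped scaling."""
    iops = throughput_bytes_per_s / transfer_bytes
    return queue_depth / iops * 1e6  # seconds -> microseconds

TRANSFER = 128 * 1024  # 128 kB transfers (assumed)
PLATEAU = 2.5e9        # 2.5 GB/s saturated read throughput

for qd in (2, 4, 8, 16, 32, 64):
    latency = saturated_mean_latency_us(qd, TRANSFER, PLATEAU)
    print(f"QD{qd:<2}: about {latency:,.0f} us mean latency")
```

Doubling the queue depth on a saturated drive doubles the time each command waits, which is why the extra queue depth buys nothing here.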

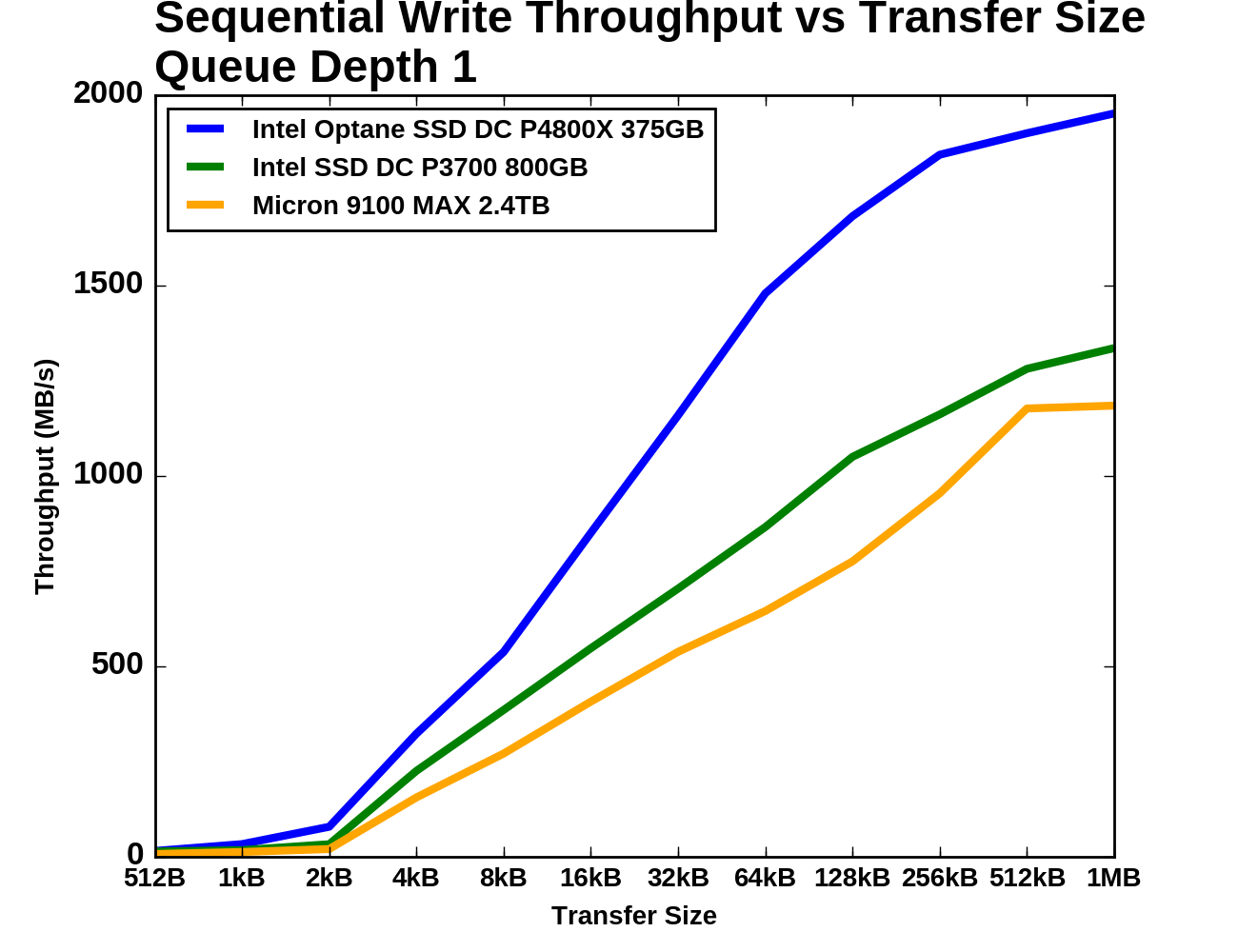

Sequential Write

The sequential write tests are structured identically to the sequential read tests save for the direction the data is flowing. The test of sequential write performance at different transfer sizes is conducted with a single thread operating at queue depth 1. For testing a range of queue depths, a 128kB transfer size is used with up to four worker threads, each writing sequentially but to a different portion of the drive. Each sub-test (transfer size or queue depth) is run for four minutes and the performance statistics ignore the first minute.

As with random writes, sequential write performance doesn't begin to take off until transfer sizes reach 4kB. Below that size, all three SSDs offer dramatically lower throughput, with the Optane SSD narrowly ahead of the Intel P3700. The Optane SSD shows the steepest growth as transfer size increases, but it and the Intel P3700 begin to show diminishing returns beyond 64kB. The Optane SSD almost reaches 2GB/s by the end of the test while the Intel P3700 and the Micron 9100 reach around 1.2-1.3GB/s.

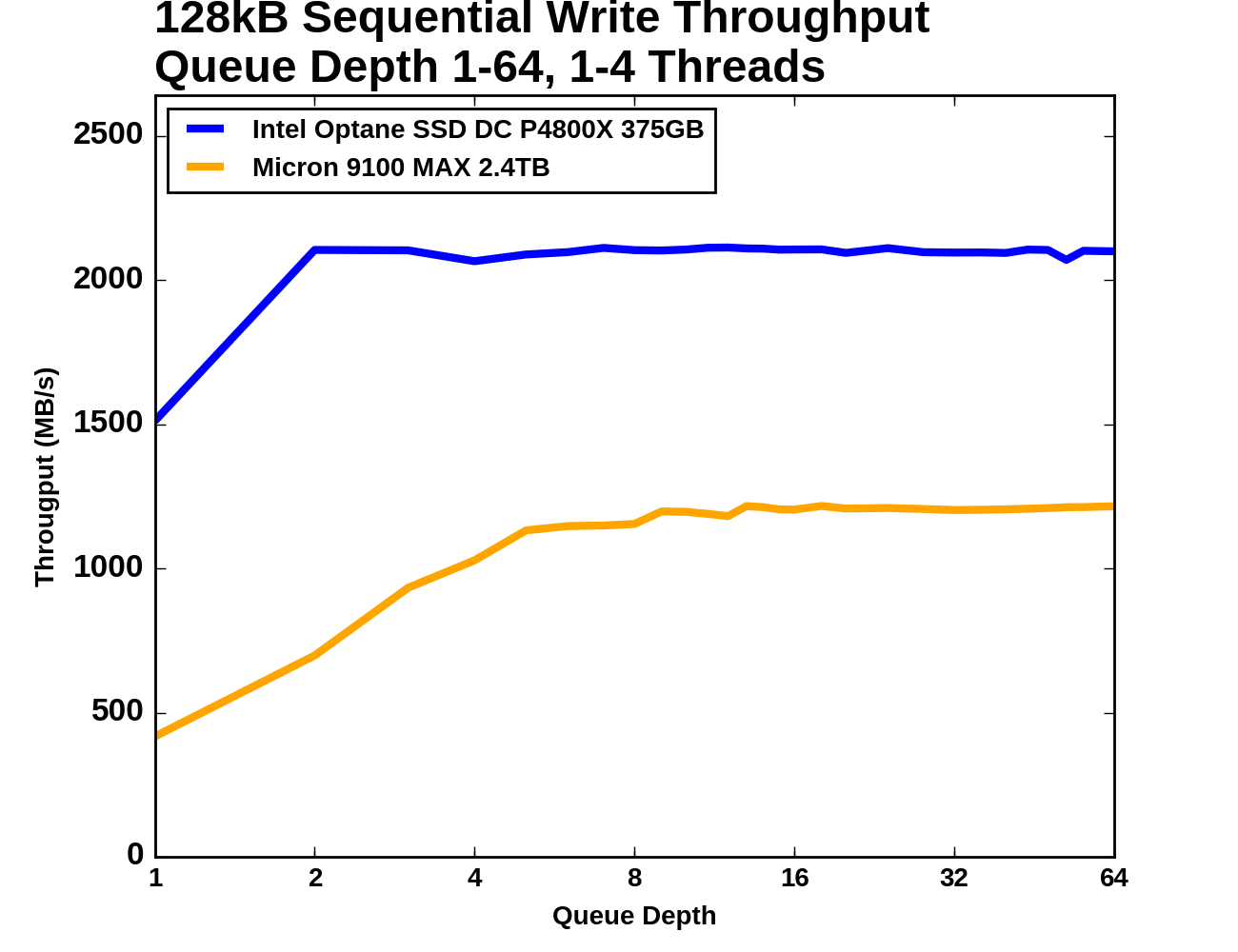

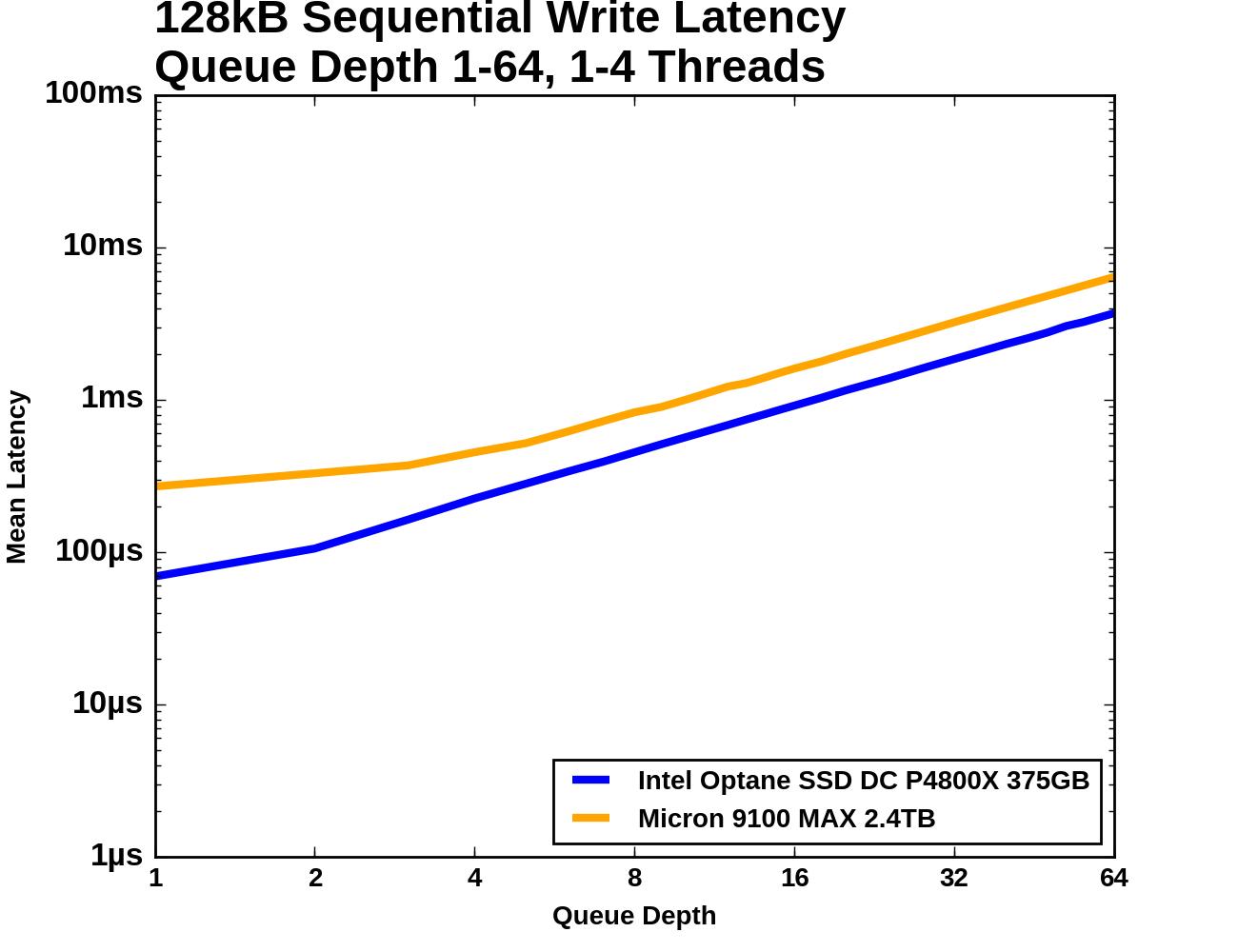

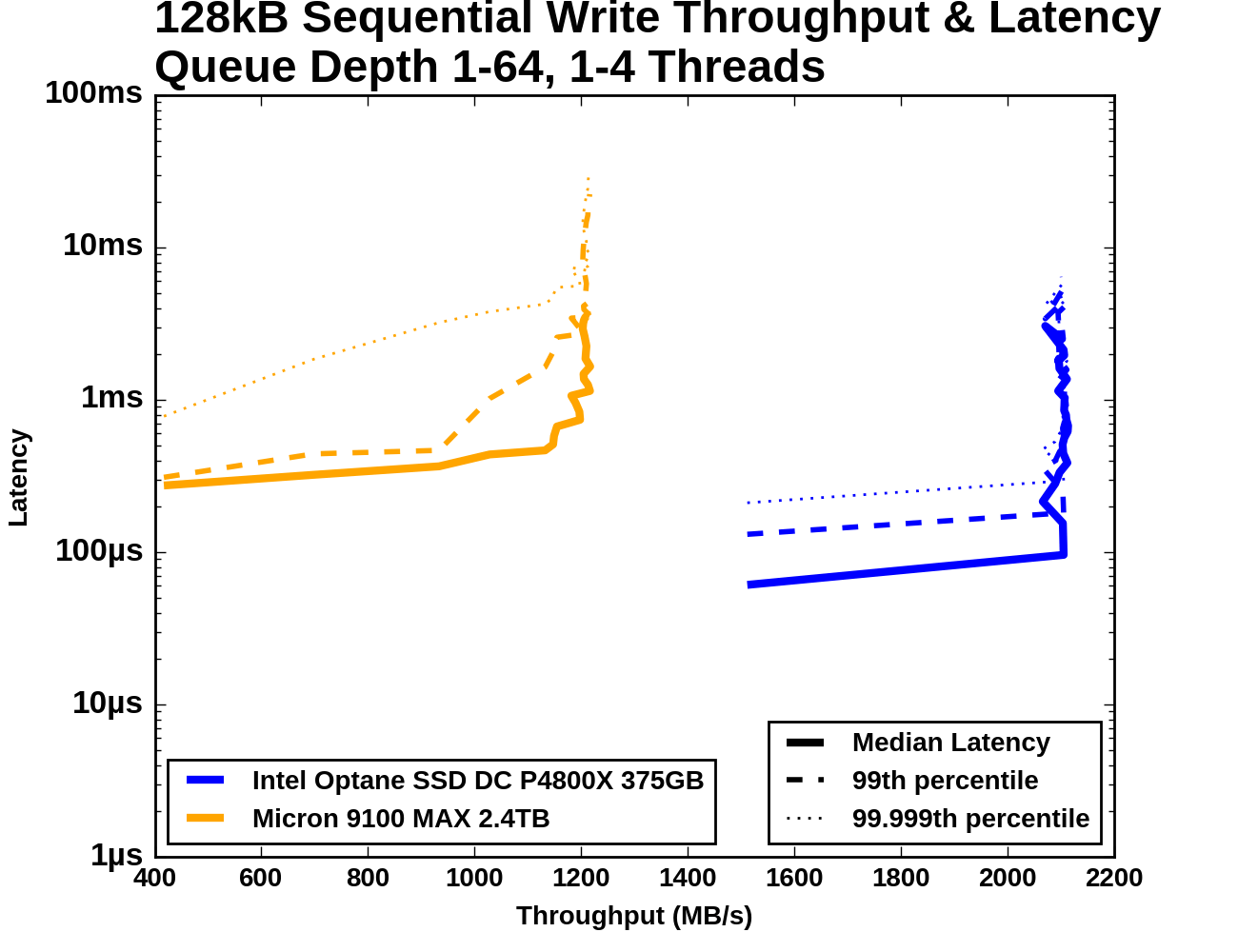

Queue Depth > 1

When testing sequential writes at varying queue depths, the Intel SSD DC P3700's performance was highly erratic. We did not have sufficient time to determine what was going wrong, so its results have been excluded from the graphs and analysis below.

The Optane SSD DC P4800X delivers better sequential write throughput at every queue depth than the Micron 9100 can deliver at any queue depth. The Optane SSD's latency increases only slightly as it reaches saturation while the Micron 9100's 99th percentile latency begins to climb steeply well before that drive reaches its maximum throughput. The Micron 9100's 99.999th percentile latency also grows substantially as throughput increases, but its growth is more evenly spread across the range of queue depths.
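
For readers unfamiliar with tail latency figures, the sketch below shows one common way to compute them, the nearest-rank method, on synthetic data. The method and the sample values are assumptions for illustration; they are not necessarily how the latency statistics in this review were produced.

```python
import math

def percentile_nearest_rank(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

# Synthetic latencies in microseconds: 1 in 10,000 I/Os hits a 5 ms stall.
latencies = [10.0] * 99_990 + [5000.0] * 10

for pct in (50, 99, 99.999):
    print(f"{pct}th percentile: {percentile_nearest_rank(latencies, pct)} us")
```

A 99.999th percentile only becomes meaningful with at least 100,000 completed I/Os, since it describes the slowest one in every hundred thousand; rare stalls that are invisible in the median and 99th percentile show up there.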

The Optane SSD reaches its maximum throughput at QD2 and maintains it as more threads and higher queue depths are introduced. The Micron 9100 only provides a little over half of the throughput and requires a queue depth of around 6-8 to reach that performance.

The Micron 9100's 99th percentile latency starts out around twice that of the Optane SSD, but at QD3 it begins to increase sharply as the drive approaches its maximum throughput, until it is an order of magnitude higher than the Optane SSD's. The 99.999th percentile latencies of the two drives are separated by a wide margin throughout the test.

117 Comments

Billy Tallis - Friday, April 21, 2017

I said the NVMe driver wasn't manually switched into polling mode; I left it with the default behavior, which on 4.8 seems to be not polling unless the application requests it. I'm certainly not seeing the 100% CPU usage that would be likely if it was polling. If I'd had more time, I would have experimented with the latest kernel versions and the various tricks to get even lower latency.

tuxRoller - Friday, April 21, 2017

I wasn't claiming that you disabled polling, only that polling was disabled, since it should be on by default for this device. Assuming you were looking at the sysfs interface, was the key that was set to 0 called io_poll or io_poll_delay? The latter, set to 0, enables hybrid polling, so the CPU wouldn't be pegged.

Either way, you wouldn't need a new kernel, just to enable a feature the kernel has had since 4.4 for these low latency devices.

Also, did you disable the pagecache (direct=1) in your fio commands? If you didn't, that would explain why aio was faster since it uses dio.

Btw, it's not my intent to unnecessarily criticize you because I realize the tests were performed under constrained circumstances. I just would've appreciated some comment in the article that a critical feature for this hardware was not enabled in the kernel.

yankeeDDL - Friday, April 21, 2017

Optane was supposed to be 1000x faster, have 1000X endurance and be 10x denser than NAND (http://hothardware.com/ContentImages/NewsItem/4020...). I realize this is the first product, but saying that it fell short of expectations is an understatement.

It has lower endurance, lower density and it is measurably faster, but certainly nowhere close to 1000X.

Oh, did I mention it is 5-10X more expensive?

I am quite disappointed, to be honest. It will get better, but "not ready" is something that comes to mind reading the article.

Billy Tallis - Friday, April 21, 2017

3D XPoint memory was supposed to be 1000x faster than NAND, 1000x more durable than NAND, and 10x denser than DRAM. Those claims were about the 3D XPoint memory itself, not the Optane SSD built around that memory.

ddriver - Friday, April 21, 2017

It is probably as good as they said... if you compare it to the shittiest SD card from 10 years ago. Still technically NAND ;)

yankeeDDL - Monday, April 24, 2017

I disagree. I can agree that the speed may be limited by the drive, but even so, it falls short by a large factor. The durability and the density, however, are pretty much platform independent and they are not there by a very, very long shot. Intel itself demonstrated that it is only 2.4-3X faster (https://en.wikipedia.org/wiki/3D_XPoint).

It clearly has a future, especially as NAND is approaching the end of its scalability. Engineering-wise it is interesting, but today it makes very little sense, while it should have been a slam dunk. I mean, who would have thought twice before buying a 500GB drive that maxes out the SATA for $20-30? But this one ... not so much.

zodiacfml - Friday, April 21, 2017

It will perform better in DIMM.

factual - Friday, April 21, 2017

I don't see xpoint replacing dram due to both latency and endurance not being up to par, but it's going to disrupt the ssd market and as the technology matures and prices come down, I can see xpoint revolutionizing the storage market as ssd did years ago. Competition is clearly worried, since it seems like paid trolls are trying to spread falsehoods and bs here and elsewhere on the web.

ddriver - Saturday, April 22, 2017

I just bet it will be highly disturbing to the SSD market LOL. With its inflated price, limited capacity and pretty much unnecessary advantages I can just see people lining up to buy that and leaving SSDs on the shelves.

factual - Saturday, April 22, 2017

You are either extremely ignorant or a paid troll!!! Anyone who understands technology knows that new tech is always expensive. When SSDs came to the market, they were much more expensive and had a lot less capacity than HDDs but they closed the gap and disrupted the market. The same is bound to happen for Xpoint which performs better than NAND by orders of magnitude.