The NVIDIA GeForce GTX 1080 Ti Founder's Edition Review: Bigger Pascal for Better Performance

by Ryan Smith on March 9, 2017 9:00 AM EST

The Witcher 3

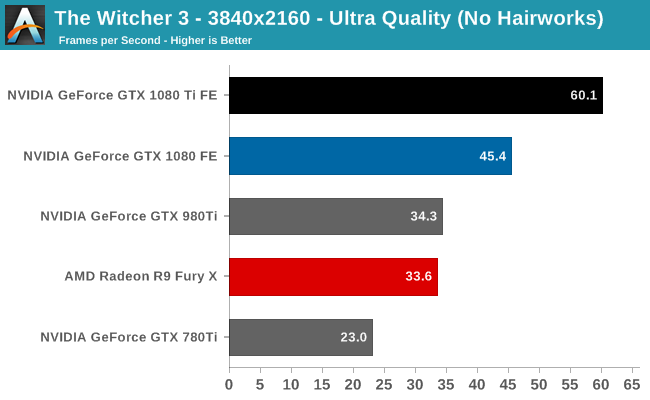

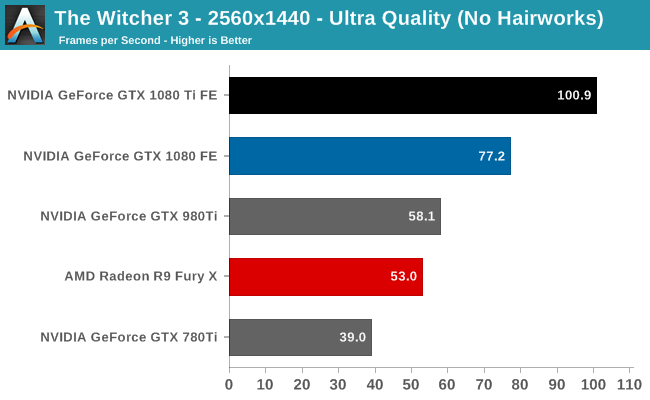

The third game in CD Projekt RED’s expansive RPG series, The Witcher 3 is our RPG benchmark of choice. Utilizing the company’s in-house engine, REDengine 3, The Witcher makes use of an array of DirectX 11 features, all of which combine to make the game both stunning and surprisingly GPU-intensive. Our benchmark is based on an action-heavy in-engine cutscene early in the game, and Hairworks is disabled.

NVIDIA primarily promotes the GTX 1080 Ti as a 4K card, and for good reason. Thanks to Bigger Pascal, NVIDIA finally has the performance to break 60fps on a number of games at 4K, with The Witcher 3 chief among them. At 60.1fps it just makes that mark, with virtually no room to spare.

Overall this game is a strong showing for NVIDIA’s newest card. The GTX 1080 Ti picks up another 32% over the GTX 1080, and 75% over the last-generation GTX 980 Ti.

161 Comments


close - Monday, March 13, 2017 - link

I was talking about optimizing Nvidia's libraries. When you're using an SDK to develop a game you're relying a lot on that SDK. And if that's exclusively optimized for one GPU/driver combination you're not going to develop an alternate engine that's also optimized for a completely different GPU/driver. And there's a limit to how much you can optimize for AMD when you're building a game using Nvidia's SDK.

Yes, the developer could go ahead and ignore any SDK out there (AMD or Nvidia) just so they're not lazy, but that would only bring worse results equally spread across all types of GPUs, and longer development times (with the associated higher costs).

You have the documentation here:

https://docs.nvidia.com/gameworks/content/gamework...

AMD offers the same services technically but why would developers go for it? They're optimizing their game for just 25% of the market. Only now is AMD starting to push with the Bethesda partnership.

So to summarize:

-You cannot touch Nvidia's *libraries and code* to optimize them for AMD

-You are allowed to optimize your game for AMD without losing any kind of support from Nvidia but when you're basing it on Nvidia's SDK there's only so much you can do

-AMD doesn't really support developers much with this since optimizing a game based on Nvidia's SDK seems to be too much effort even for them, and AMD would rather have developers using the AMD libraries but...

-Developers don't really want to put in triple the effort to optimize for AMD also when they have only 20% market share compared to Nvidia's 80% (discrete GPUs)

-None of this is illegal, it's "just business" and the incentive for developers is already there: Nvidia has the better cards so people go for them, it's logical that developers will follow

eddman - Monday, March 13, 2017 - link

Again, most of those gameworks effects are CPU only. It does NOT matter at all what GPU you have.

As for GPU-bound gameworks, they are limited to just a few in-game effects that can be DISABLED in the options menu.

The main code of the game is not gameworks related and the developer can optimize it for AMD. Is it clear now?

Sure, it sucks that GPU-bound gameworks effects cannot be optimized for AMD and I don't like it either, but they are limited to only a few cosmetic effects that do not have any effect on the main game.

eddman - Monday, March 13, 2017 - link

Not to mention that a lot of gameworks games do not use any GPU-bound effects at all. Only CPU.

eddman - Monday, March 13, 2017 - link

Just one example: http://www.geforce.com/whats-new/articles/war-thun...

Look for the word "CPU" in the article.

Meteor2 - Tuesday, March 14, 2017 - link

Get a room you two!

MrSpadge - Thursday, March 9, 2017 - link

AMD demonstrated their "cache thing" (which seems to be tile based rendering, as in Maxwell and Pascal) to result in a 50% performance increase. So 20% IPC might be far too conservative. I wouldn't bet on a 50% clock speed increase, though. nVidia designed Pascal for high clocks, it's not just the process. AMD seems to intend the same, but can they get it similarly well? If so I'm inclined to ask "why did it take you so long"?

FalcomPSX - Thursday, March 9, 2017 - link

I look forward to Vega and seeing how much performance it brings, and I really hope it does end up giving performance around a 1080 level for typically lower and more reasonable AMD pricing, but honestly, I expect it to probably come close to but not quite match a 1070 in dx11, surpass it in dx12, and at a much lower price.

Midwayman - Thursday, March 9, 2017 - link

Even if it's just 2 Polaris chips of performance you're past 1070 level. I think conservative is 1080 @ $400-450. Not that there won't be a cut down part at 1070 level, but I'd be really surprised if that is the full die version.

Meteor2 - Tuesday, March 14, 2017 - link

I think that sometimes Volta is overlooked. Whatever Vega brings, I feel Volta is going to top it.

AMD is catching up with Intel and Nvidia, but outside of mainstream GPUs and HEDT CPUs, they've not done it yet.

Meteor2 - Tuesday, March 14, 2017 - link

Mind you, Volta is only coming to Tesla this year, and not consumer until next year. So AMD should have a competitive full stack for a year. Good times!