The NVIDIA GeForce GTX 1080 Ti Founder's Edition Review: Bigger Pascal for Better Performance

by Ryan Smith on March 9, 2017 9:00 AM EST

Second Generation GDDR5X: More Memory Bandwidth

One of the more unusual aspects of the Pascal architecture is the number of different memory technologies NVIDIA can support. At the datacenter level, NVIDIA has a full HBM2 memory controller, which they use for the GP100 GPU. Meanwhile for consumer and workstation cards, NVIDIA equips GP102/104 with a more traditional memory controller that supports both GDDR5 and its more exotic cousin: GDDR5X.

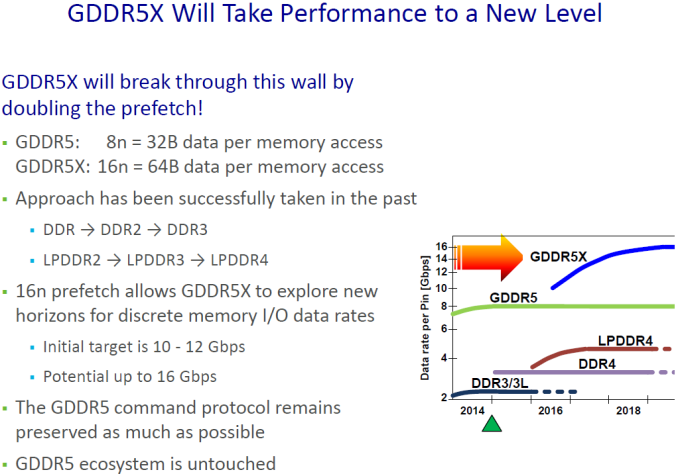

A half-generation upgrade of sorts, GDDR5X was developed by Micron to improve memory bandwidth over GDDR5. It does so through a combination of a faster memory bus and wider memory operations that read and write more data from DRAM per clock. And though it’s not without its own costs, such as designing new memory controllers and boards that can accommodate the tighter requirements of the GDDR5X memory bus, GDDR5X offers a step in performance between the relatively cheap and slow GDDR5 and the relatively fast and expensive HBM2.

With rival AMD opting to focus on HBM2 and GDDR5 for Vega and Polaris respectively, NVIDIA has ended up being the only PC GPU vendor to adopt GDDR5X. The payoff for NVIDIA, besides the immediate benefits of GDDR5X, is that they can ship with memory configurations that AMD cannot. Meanwhile for Micron, NVIDIA is a very reliable and consistent customer for their GDDR5X chips.

When Micron initially announced GDDR5X, they laid out a plan to start at 10Gbps and ramp to 12Gbps (and beyond). Now just under a year after the launch of the GTX 1080 and the first generation of GDDR5X memory, Micron is back with their second generation of memory, which of course is being used to feed the GTX 1080 Ti. And NVIDIA, for their part, is very eager to talk about what this means for them.

With Micron’s second generation GDDR5X, NVIDIA is now able to equip their cards with 11Gbps memory. This is a 10% year-over-year improvement, and a not-insignificant change given that memory speeds increase at a fraction of the rate of GPU throughput. Coupled with GP102’s wider memory bus, which sees 11 of its 12 memory channels enabled for the GTX 1080 Ti, this lets NVIDIA offer just over 480GB/sec of memory bandwidth with this card, a roughly 50% improvement over the GTX 1080.
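The bandwidth figures can be checked with some quick arithmetic. The following is a minimal sketch rather than anything from NVIDIA: it assumes each of the 11 enabled slices of the GP102 memory bus is 32 bits wide (a 352-bit bus in total) and that the GTX 1080 pairs 10Gbps memory with a 256-bit bus. The function and constant names are purely illustrative.

```python
def memory_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak bandwidth in GB/s: per-pin data rate (Gbit/s) times bus width (bits), divided by 8."""
    return data_rate_gbps * bus_width_bits / 8


# Assumption: each enabled slice of the memory bus is 32 bits wide.
CHANNEL_WIDTH_BITS = 32

# GTX 1080 Ti: 11 of 12 slices enabled, 11Gbps GDDR5X.
gtx_1080_ti = memory_bandwidth_gbs(11, 11 * CHANNEL_WIDTH_BITS)

# GTX 1080 (assumption): 256-bit bus, 10Gbps GDDR5X.
gtx_1080 = memory_bandwidth_gbs(10, 256)

improvement_pct = (gtx_1080_ti / gtx_1080 - 1) * 100

print(f"GTX 1080 Ti: {gtx_1080_ti:.0f} GB/s")
print(f"GTX 1080:    {gtx_1080:.0f} GB/s")
print(f"Improvement: {improvement_pct:.0f}%")
```

Under those assumptions the GTX 1080 Ti works out to 484GB/sec against 320GB/sec for the GTX 1080, a gain of about 51%.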

For NVIDIA, this is something they’ve been eagerly awaiting. Pascal’s memory controller was designed for higher GDDR5X memory speeds from the start, but the memory itself needed to catch up. As one NVIDIA engineer put it to me, “We [NVIDIA] have it easy, we only have to design the memory controller. It’s Micron that has it hard, they have to actually make memory that can run at those speeds!”

Micron for their part has continued to work on GDDR5X after its launch, and even with what I’ve been hearing was a more-challenging-than-anticipated launch last year, both Micron and NVIDIA seem to be very happy with what Micron has been able to accomplish with their second generation GDDR5X memory.

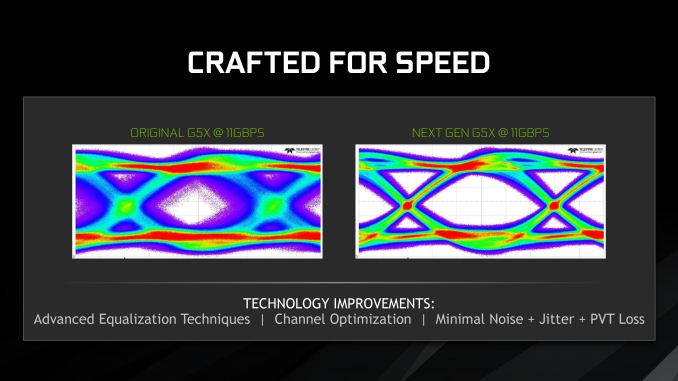

As demonstrated in eye diagrams provided by NVIDIA, Micron’s second generation memory coupled with NVIDIA’s memory controller is producing a very clean eye at 11Gbps, whereas the first generation memory (which was admittedly never specced for 11Gbps) would produce a very noisy eye. Consequently NVIDIA and their partners can finally push past 10Gbps for the GTX 1080 Ti and the forthcoming factory-overclocked GTX 1080 and GTX 1060 cards.

Under the hood, the big developments here were largely on Micron’s side. The company continued to optimize their metal layers for GDDR5X, and combined with improved test coverage were able to make a lot of progress over the first generation of memory. This in turn is coupled with improvements in equalization and noise reduction, resulting in the clean eye we see above.

Longer-term, GDDR6 is on the horizon. But before then, Micron is still working on further improvements to GDDR5X. Micron’s original goal was to hit 12Gbps with this memory technology, and while they’re not quite there yet, I wouldn’t be too surprised to be having this conversation once again for 12Gbps memory within the next year.

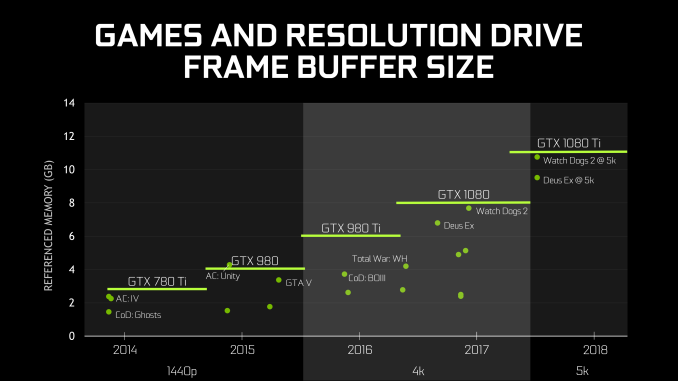

Finally, speaking of memory, it’s worth noting that NVIDIA also dedicated a portion of their GTX 1080 Ti presentation to discussing memory capacity. To be honest, I get the impression that NVIDIA feels like they need to rationalize equipping the GTX 1080 Ti with 11GB of memory, beyond the obvious conclusions that it is cheaper than equipping the card with 12GB and it better differentiates the GTX 1080 Ti from the Titan X Pascal.

In any case, NVIDIA believes that based on historical trends, 11GB will be sufficient for 5K gaming in 2018 and possibly beyond. Traditionally NVIDIA has not been especially generous on memory – cards like the 3GB GTX 780 Ti and 2GB GTX 770 felt the pinch a bit early – so going with a less-than-full memory bus doesn’t improve things there. On the other hand with the prevalence of multiplatform games these days, one of the biggest drivers in memory consumption was that the consoles had 8GB of RAM each; and with 11GB, the GTX 1080 Ti is well ahead of the consoles in this regard.

161 Comments

theuglyman0war - Sunday, March 12, 2017 - link

Geez developers can't catch a break...I been a registered developer with ATI/AMD and NVIDIA both for years...

Besides the research and papers and tools they invest to further the interest of game development. I have never had to do anything but reap the rewards and say thank you. Maybe there are secret meetings and koolaid I am missing?

Not sure what the actual correct scenario is, to tell you the truth.

Either triple AAA dev is hamstringing everyone's PC experience because development is all Console centric ( Which means tweaking FOR an "AMD owned architecture landscape" for two solid console cycles where "this console cycle alone sees both BOBCAT and PUMA being supported"! )

Or the Industry is in Nvidia's back pocket because of what? the overwhelming PhysX support? There is an option to turn on Hair in a game? Their driver support is an evil conspiracy?

Whatever... If there is some koolaid I wanna know where that line is? Gimme! I want me some green Koolaid!

theuglyman0war - Sunday, March 12, 2017 - link

Turns out you're right! There is a monopoly in game development Software optimization supporting only one hardware platform! Alert the press!

http://developer.amd.com/wordpress/media/2012/10/S...

HomeworldFound - Thursday, March 9, 2017 - link

Underhanded deals are part of the industry. Have you ever wondered about the prices even when price fixing is disallowed and supposedly abolished?

These deals are always happening, look at the public terms of the deal between NVIDIA and Intel.

Nvidia gets:

1.5 Billion Dollars, Over six Years.

6 Year Extension of C2D/AGTL+ Bus License

Access To Unspecified Intel Microprocessor Patents. Denver?

NVIDIA Doesn't Get:

DMI/QPI Bus License; Nehalem/Sandy Bridge Chipsets

x86 License, Including Rights To Make an x86 Emulator

For a company that was supposed to be headed into x86 wouldn't you say that's anti-competitive?

eddman - Friday, March 10, 2017 - link

Prices, I don't know. Isn't it a combination of market demand, (lack of) competition and shortages?

What does that deal have to do with this? Not giving certain licenses, publicly, is not the same as actively preventing devs from optimizing for the competition in shadows.

theuglyman0war - Sunday, March 12, 2017 - link

Well there was/is a lot of litigation it seems like admittedly!

close - Friday, March 10, 2017 - link

It would not be illegal, it's called "having the market by its bawles". Have you ever actually talked to someone who does this or do you like assuming it's illegal and conspiracy theory (@eddman)?

You're thinking about GameWorks and GW is offered to developers under NDA with the agreement prohibiting them from changing the GW libraries in any way or "optimizing" for AMD. And by the time AMD gets their hands on the proper optimizations to put in their drivers it's pretty much too late. Also GW targets all the weaknesses of AMD GPUs like excessive use of tessellation.

But since everywhere you throw a game at you're going to find an Nvidia user (75% market share), game developers realize it's good for business and don't make too much noise.

Nothing is illegal, it's certainly not a conspiracy theory, it may very well be immoral but it's business. And it happens every day, all around you.

eddman - Friday, March 10, 2017 - link

I'm not talking about GPU-bound GW effects that can be disabled in game, and I do know that they are closed-source and cannot be optimized for AMD.

I'm talking about whole game codes. Nvidia cannot legally prevent a developer from optimizing the non-GW code of a game (which is the main part) for AMD.

Besides, a lot of GW effects are CPU-only, so it doesn't matter what brand you use in such cases.

ddriver - Friday, March 10, 2017 - link

It cannot legally prevent devs from optimizing for amd, but it can legally cut their generous support.

There isn't that much difference between:

"If you optimize for AMD we will stop giving you money" and

"If you optimize for AMD we will stop paying people to help you".

It is practically a combination of bribe and extortion. Both very much illegal.

And that's at studio level, at individual level nvidia can make it pretty much impossible to have a job at a good game studio.

Intel didn't exactly bribe anyone directly either, they just offered discounts. But they were found guilty.

Although if you ask me, intel being found guilty on all continents didn't really serve the purpose of punishing them for their crimes. It was more of a way to allow them to cheaply legalize their monopoly.

An actually punitive action would be fining them the full amount of money they made on their illegal practices, and cutting the company down to its pre-monopoly size. Instead the fines were pretty much modest, even laughably low, a tiny fraction of the money they made on their illegal business practices, and they got to keep the monopoly they built on them as well.

So yeah, some people made some money, the damage done by intel was not undone by any means, and their monopoly built through illegal practices has been washed clean and legal. Now that monopolist is as pure as the driven snow. And it took less than a quarter's worth of average net profit to wipe clean years of abuse.

ddriver - Friday, March 10, 2017 - link

amd was not in the position to make discounts, intel was, so they abused that on exclusive terms for monetary gains

amd is not in the position to sponsor software developers, nvidia is, so they abuse that on exclusive terms for monetary gains

I don't really see a difference between the two. No one was exactly forced to take intel's exclusive discounts either, much like with nvidia's exclusive support and visits to strip clubs and such.

So, by citing intel as precedent, I'd say what nvidia does is VERY MUCH ILLEGAL.

eddman - Friday, March 10, 2017 - link

Then why has nothing happened all these years, for something that seems to be open knowledge among game publishers, developers and GPU manufacturers?

A dev asking nvidia for help optimizing a game is not illegal. It is a service. AMD is free to do the same.

What about those games where AMD helped the devs to optimize for their hardware? Was that illegal too? It's either illegal for both or none.

If they've been doing it openly for years and even put their logos in games' loading screens, then it's safe to say it's not illegal at all.