The AMD Zen and Ryzen 7 Review: A Deep Dive on 1800X, 1700X and 1700

by Ian Cutress on March 2, 2017 9:00 AM ESTSimultaneous MultiThreading (SMT)

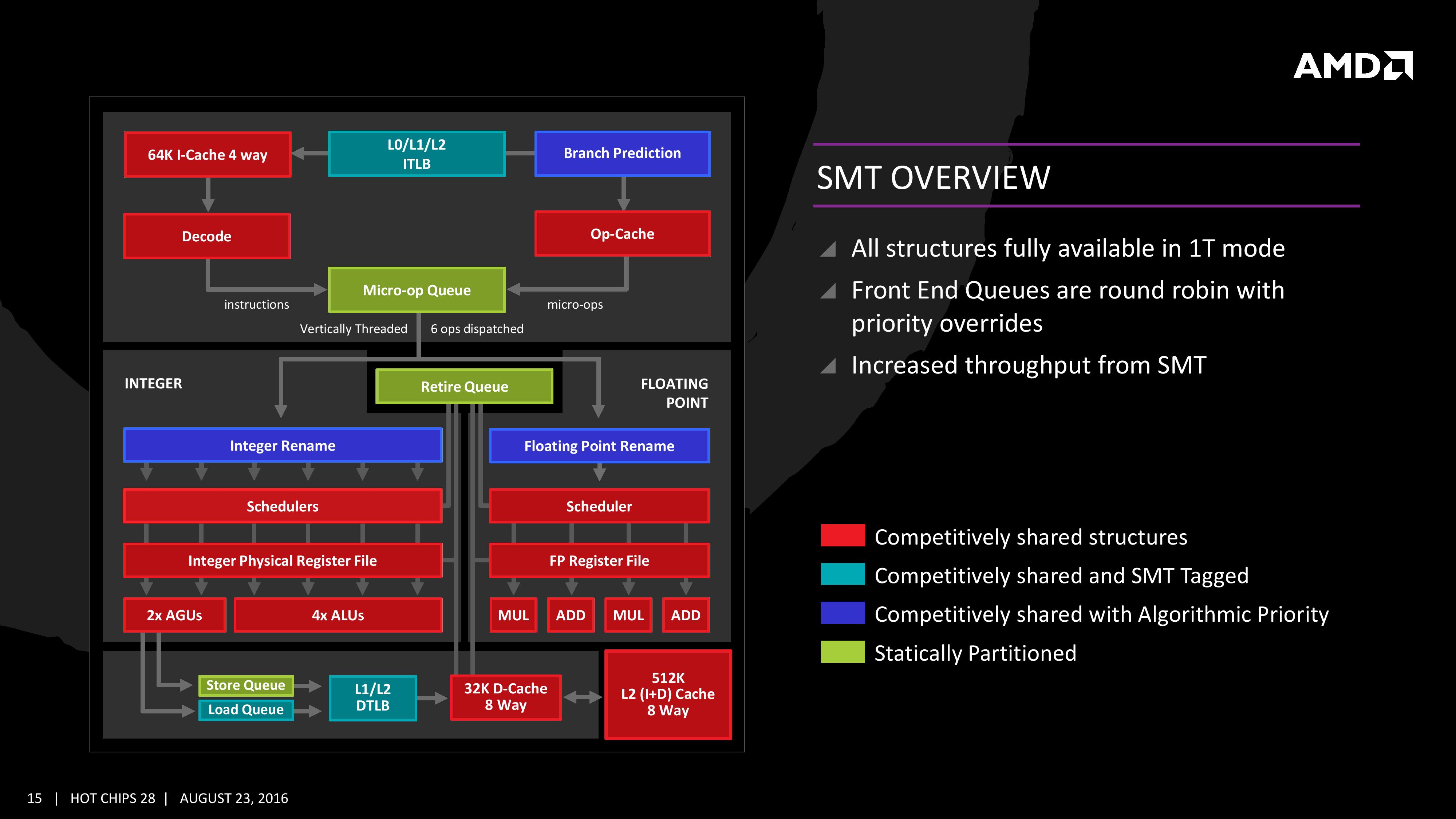

Zen will be AMD’s first foray into a true simultaneous multithreading structure, and certain parts of the core will act differently depending on their implementation. There are many ways to manage threads, particularly to avoid stalls where one thread is blocking another that ends in the system hanging or crashing. The drivers that communicate with the OS also have to make sure they can distinguish between threads running on new cores or when a core is already occupied – to achieve maximum throughput then four threads should be across two cores, but for efficiency where speed isn’t a factor, perhaps power gating/clock gating half the cores in a CCX is a good idea.

There are a number of ways that AMD will deal with thread management. The basic way is time slicing, and giving each thread an equal share of the pie. This is not always the best policy, especially when you have one performance dominant thread, or one thread that creates a lot of stalls, or a thread where latency is vital. In some methodologies the importance of a thread can be tagged or determined, and this is what we get here, though for some of the structures in the core it has to revert to a basic model.

With each thread, AMD performs internal analysis on the data stream for each to see which thread has algorithmic priority. This means that certain threads will require more resources, or that a branch miss needs to be prioritized to avoid long stall delays. The elements in blue (Branch Prediction, INT/FP Rename) operate on this methodology.

A thread can also be tagged with higher priority. This is important for latency sensitive operations, such as a touch-screen input or immediate user input elements required. The Translation Lookaside Buffers work in this way, to prioritize looking for recent virtual memory address translations. The Load Queue is similarly enabled this way, as typically low latency workloads require data as soon as possible, so the load queue is perfect for this.

Certain parts of the core are statically partitioned, giving each thread an equal timing. This is implemented mostly for anything that is typically processed in-order, such as anything coming out of the micro-op queue, the retire queue and the store queue. However, when running in SMT mode but only with a single thread, the statically partitioned parts of the core can end up as a bottleneck, as they are idle half the time.

The rest of the core is done via competitive scheduling, meaning that if a thread demands more resources it will try to get there first if there is space to do so each cycle.

New Instructions

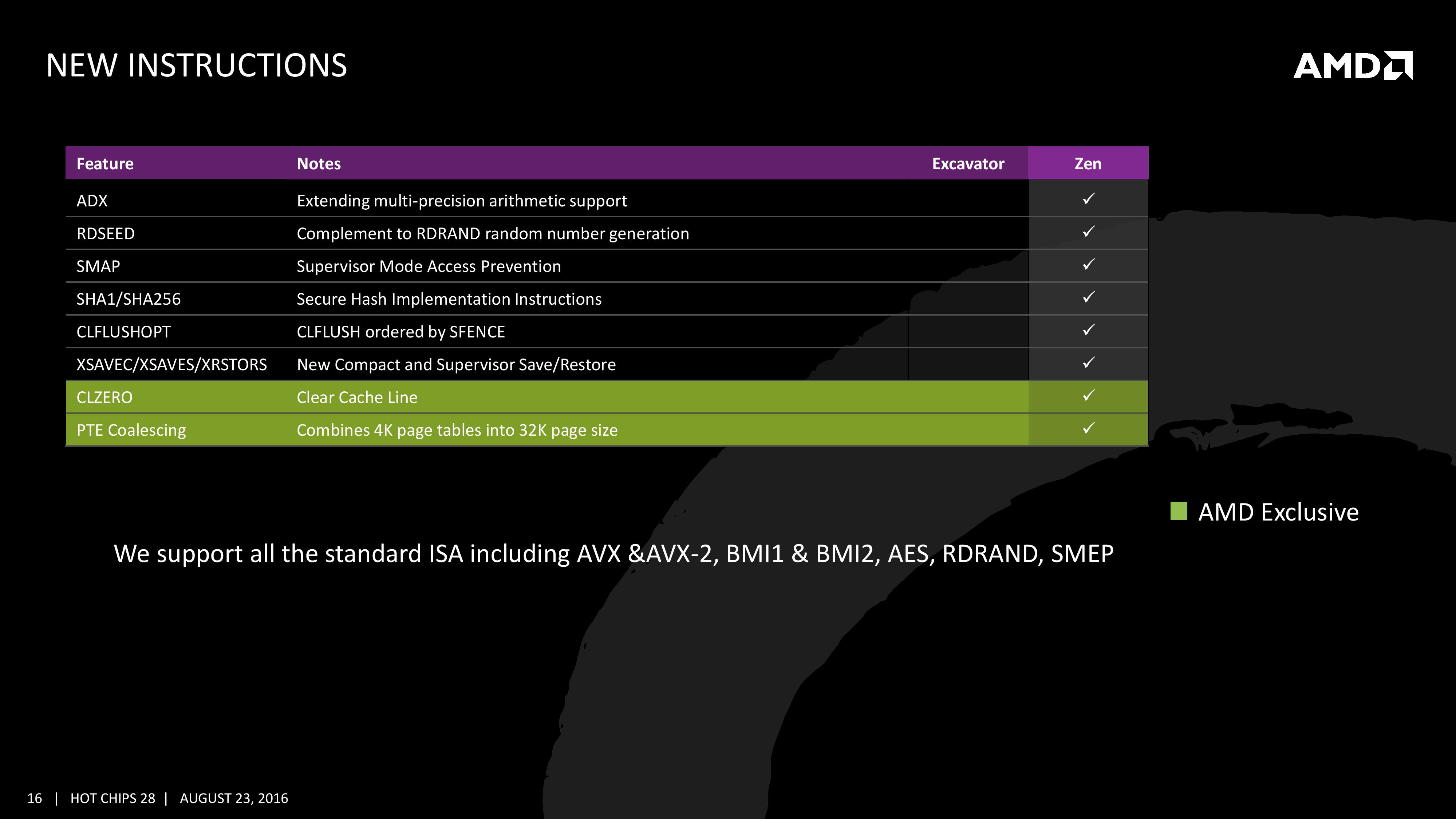

AMD has a couple of tricks up its sleeve for Zen. Along with including the standard ISA, there are a few new custom instructions that are AMD only.

Some of the new commands are linked with ones that Intel already uses, such as RDSEED for random number generation, or SHA1/SHA256 for cryptography (even with the recent breakthrough in security). The two new instructions are CLZERO and PTE Coalescing.

The first, CLZERO, is aimed to clear a cache line and is more aimed at the data center and HPC crowds. This allows a thread to clear a poisoned cache line atomically (in one cycle) in preparation for zero data structures. It also allows a level of repeatability when the cache line is filled with expected data. CLZERO support will be determined by a CPUID bit.

PTE (Page Table Entry) Coalescing is the ability to combine small 4K page tables into 32K page tables, and is a software transparent implementation. This is useful for reducing the number of entries in the TLBs and the queues, but requires certain criteria of the data to be used within the branch predictor to be met.

574 Comments

View All Comments

nt300 - Saturday, March 11, 2017 - link

If AMD hadn't gone with GF's 14nm process, ZEN would probably have been delayed. I think as soon as Ryzen Optimizations come out, these chips will further outperform.MongGrel - Thursday, March 9, 2017 - link

For some reason making a casual comment about anything bad about the chip will get you banned at the drop of a hat on the tech forums, and then if you call him out they will ban you more.

https://arstechnica.com/gadgets/2017/03/amds-momen...

MongGrel - Thursday, March 9, 2017 - link

For some reason, MarkFW seems to thinks he is the reincarnation of Kyle Bennet, and whines a lot before retreating to his safe space.nt300 - Saturday, March 11, 2017 - link

I've noticed in the past that AMD has an issue with increasing L3 cache speed and/or Latencies. Hopefully they start tightening the L3 as much as possible. Can Anandtech do a comparison between Ryzen before Optimizations and after Optimizations. Tyalpha754293 - Friday, March 17, 2017 - link

Looks like that for a lot of the compute-intensive benchmarks, the new Ryzen isn't that much better than say a Core i5-7700K.That's quite a bit disappointing.

AMD needs to up their FLOPS/cycle game in order to be able to compete in that space.

Such a pity because the original Opterons were a great value proposition vs. the Intels. Now, it doesn't even come close.

deltaFx2 - Saturday, March 25, 2017 - link

@Ian Cutress: When you do test gaming, if you can, I'd love to have the hypothesis behind the 'generally accepted methodology' tested out. The methodology being, to test it at lowest resolution. The hypothesis is that this stresses the CPU, and that a future, higher performance GPU will be bottlenecked by the slower CPU. Sounds logical, but is it?Here's the thing: Typically, when given more computing resources, people scale up their problem to utilize those resources. In other words, if I give you a more powerful GPU, games will scale up their perf requirements to match it, by doing stuff that were not possible/practical in earlier GPUs. Today's games are far more 'realistic' and are played at much higher resolutions than say 5 years ago. In which case, the GPU is always the limiting factor no matter what (unless one insists on playing 5 year old games on the biggest, baddest GPU). And I fully expect that the games of today are built to max out current GPUs, so hardware lags software.

This has parallels with what happens in HPC: when you get more compute nodes for HPC problems, people scale up the complexity of their simulations rather than running the old, simplified simulations. Amdahl's law is still not a limiting factor for HPC, and we seem to be talking about Exascale machines now. Clearly, there's life in HPC beyond what a myopic view through the Amdahl law lens would indicate.

Just a thought :) Clearly, core count requirements have gone up over the last decade, but is it true that a 4c/8t sandy bridge paired up with Nvidia's latest and greatest is CPU-bottlenecked at likely resolutions?

wavelength - Friday, March 31, 2017 - link

I would love to see Anand test against AdoredTV's most recent findings on Ryzen https://www.youtube.com/watch?v=0tfTZjugDegLawJikal - Friday, April 21, 2017 - link

What I'm surprised to see missing... in virtually all reviews across the web... is any discussion (by a publication or its readers) on the AM4 platform's longevity and upgradability (in addition to its cost, which is readily discussed).Any Intel Platform - is almost guaranteed to not accommodate a new or significantly revised microarchitecture... beyond the mere "tick". In order to enjoy a "tock", one MUST purchase a new motherboard (if historical precedent is maintained).

AMD AM4 Platform - is almost guaranteed to, AT LEAST, accommodate Ryzen "II" and quite possibly Ryzen "III" processors. And, in such cases, only a new processor and BIOS update will be necessary to do so.

This is not an insignificant point of differentiation.

PeterCordes - Monday, June 5, 2017 - link

The uArch comparison table has some errors for the Intel columns. Dispatch/cycle: Skylake can read 6 uops per clock from the uop cache into the issue queue, but the issue stage itself is still only 4 uops wide. You've labelled Even running from the loop buffer (LSD), it can only sustain a throughput of 4 uops per clock, same 4-wide pipeline width it has been since Core2. (pre-Haswell it has to be a mix of ALU and some store or load to sustain that throughput without bottlenecking on the execution ports.) Skylake's improved decode and uop-cache bandwidth lets it refill the uop queue (IDQ) after bubbles in earlier stages, keeping the issue stage fed (since the back-end is often able to actually keep up).Ryzen is 6-wide, but I think I've read that it can only issue 6 uops per clock if some of them are from "double instructions". e.g. 256-bit AVX like VADDPS ymm0, ymm1, ymm2 that decodes to two separate 128-bit uops. Running code with only single-uop instructions, the Ryzen's front-end throughput is 5 uops per clock.

In Intel terminology, "dispatch" is when the scheduler (aka Reservation Station) sends uops to the execution units. The row you've labelled "dispatch / cycle" is clearly the throughput for issuing uops from the front-end into the out-of-order core, though. (Putting them into the ROB and Reservation Station). Some computer-architecture people call that "dispatch", but it's probably not a good idea in an x86 context. (Unless AMD uses that terminology; I'm mostly familiar with Intel).

----

You list the uop queue size at 128 for Skylake. This is bogus. It's always 64 per thread, with or without hyperthreading. Intel has alternated in SnB/IvB/HSW/SKL between this and letting one thread use both queues as a single big queue. HSW/BDW statically partition their 56-entry queue into two 28-entry halves when two threads are active, otherwise it's a 56-entry queue. (Not 64). Agner Fog's microarch pdf and Intel's optmization manual both confirm this (in Section 2.1.1 about Skylake's front-end improvements over previous generations).

Also, the 4-uop per clock issue width is 4 fused-domain uops, so I was able to construct a loop that runs 7 unfused-domain uops per clock (http://www.agner.org/optimize/blog/read.php?i=415#... with 2 micro-fused ALU+load, one micro-fused store, and a dec/branch. AMD doesn't talk about "unfused" uops because it doesn't use a unified scheduler, IIRC, so memory source operands always stay with the ALU uop.

Also, you mentioned it in the text, but the L1d change from write-through to write-back is worth a table row. IIRC, Bulldozer's L1d write-back has a small buffer or something to absorb repeated writes of the same lines, so it's not quite as bad as a classic write-through cache would be for L2 speed/power requirements, but Ryzen is still a big improvement.