The AMD Zen and Ryzen 7 Review: A Deep Dive on 1800X, 1700X and 1700

by Ian Cutress on March 2, 2017 9:00 AM EST

Power, Performance, and Pre-Fetch: AMD SenseMI

Part of the demos leading up to the launch involved a Handbrake video transcode: a multithreaded test showing a near-identical completion time between a high-frequency Ryzen without turbo and an i7-6900K at similar frequencies. The Blender test we saw back in August achieved the same feat. AMD at the time also fired up some power meters, showing that Ryzen’s power consumption in that test was a few watts lower than the Intel part, implying that AMD is meeting its targets for power, performance and, as a result, efficiency. With a claimed 52% improvement in IPC/efficiency, AMD seems confident that its target has been surpassed.

Leading up to the launch, AMD explained during our briefings that during the Zen design stages, up to 300 engineers were working on the core engine with an aggressive mantra of higher IPC for no power gain. This has apparently led to over two million work hours dedicated to Zen. This is not an uncommon strategy for core designs. Part of this time will have been spent developing new power modes, and part of Zen is that optimization and extension of the power/frequency curve: a key point in AMD’s new 5-stage ‘SenseMI’ technology.

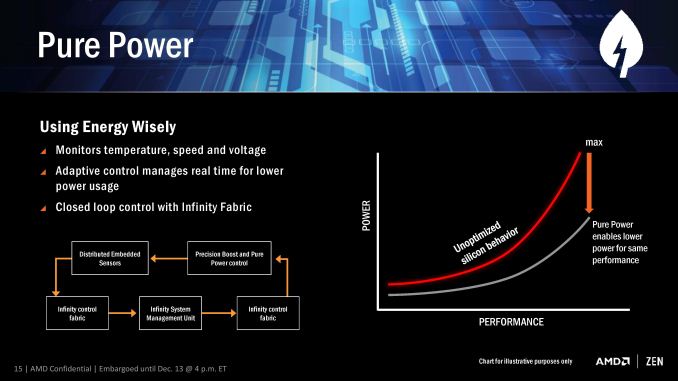

SenseMI Stage 1: Pure Power

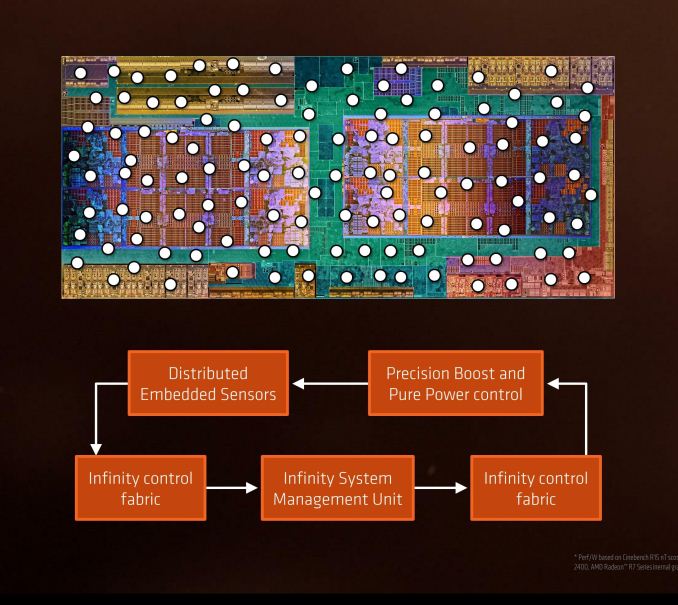

A number of recent microprocessor launches have revolved around silicon-optimized power profiles. We are now removed from the ‘one DVFS curve fits all’ application for high-end silicon, and AMD’s solution in Ryzen will be called Pure Power. The short explanation is that distributed embedded sensors in the design (first introduced in bulk with Carrizo) monitor temperature, speed and voltage, so that the control center can manage the power consumption in real time. The glue behind this technology comes in the form of AMD’s new ‘Infinity Fabric’.

The fact that it’s described as a fabric means that it goes through the entire processor, connecting various parts together as part of that control. This is something wildly different to what we saw in Carrizo, aside from being the next-gen power adjustment and under a new name, and will permeate through Zen, Vega, and future AMD products.

The upshot of Pure Power is that the DVFS curve is lower and better optimized for a given piece of silicon than a generic DVFS curve, which gives lower power at various/all levels of performance. This in turn benefits the next part of SenseMI, Precision Boost.
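To see why a lower per-part voltage curve matters, it helps to recall the standard first-order approximation for CMOS dynamic power, P ≈ C·V²·f. The sketch below compares a generic curve with a tuned one. The two voltage tables, the capacitance value and the function names are made-up illustrative assumptions, not AMD data.

```python
def dynamic_power_watts(capacitance_farads: float, voltage: float, freq_hz: float) -> float:
    """First-order CMOS dynamic power: P = C * V^2 * f."""
    return capacitance_farads * voltage ** 2 * freq_hz

# Hypothetical DVFS tables mapping frequency (GHz) to voltage (V).
# The tuned curve needs slightly less voltage at every frequency.
GENERIC_CURVE = {3.0: 1.10, 3.4: 1.20, 3.8: 1.35}
TUNED_CURVE = {3.0: 1.05, 3.4: 1.15, 3.8: 1.30}
EFFECTIVE_CAPACITANCE = 1.5e-8  # farads, illustrative only

def power_saving_percent(freq_ghz: float) -> float:
    """Percentage of dynamic power saved by the tuned curve at one frequency."""
    freq_hz = freq_ghz * 1e9
    generic = dynamic_power_watts(EFFECTIVE_CAPACITANCE, GENERIC_CURVE[freq_ghz], freq_hz)
    tuned = dynamic_power_watts(EFFECTIVE_CAPACITANCE, TUNED_CURVE[freq_ghz], freq_hz)
    return 100.0 * (generic - tuned) / generic

if __name__ == "__main__":
    for freq in sorted(GENERIC_CURVE):
        print(f"{freq:.1f} GHz: {power_saving_percent(freq):.1f}% lower dynamic power")
```

Because voltage enters as a square, even a 50 mV reduction at the same frequency gives a saving of several percent in this toy model, which is headroom a boost mechanism could then spend on clocks.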

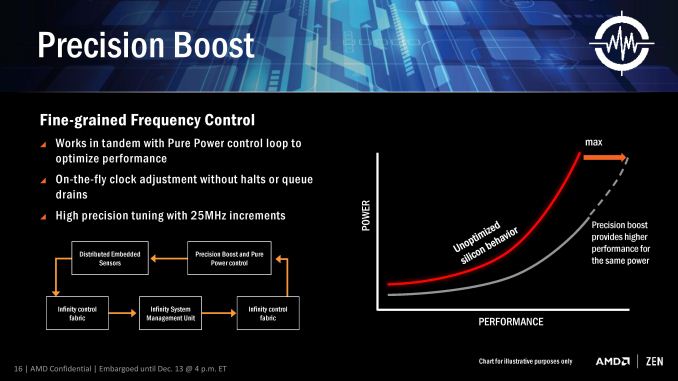

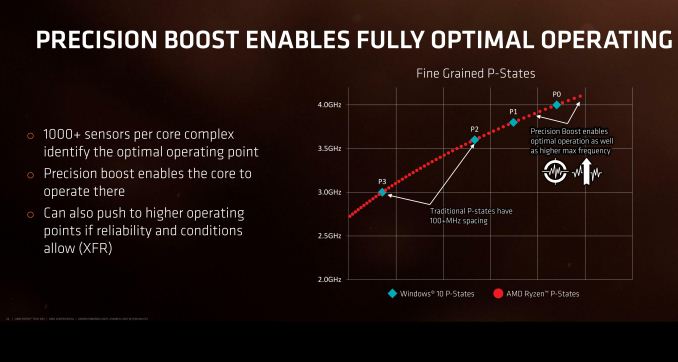

SenseMI Stage 2: Precision Boost

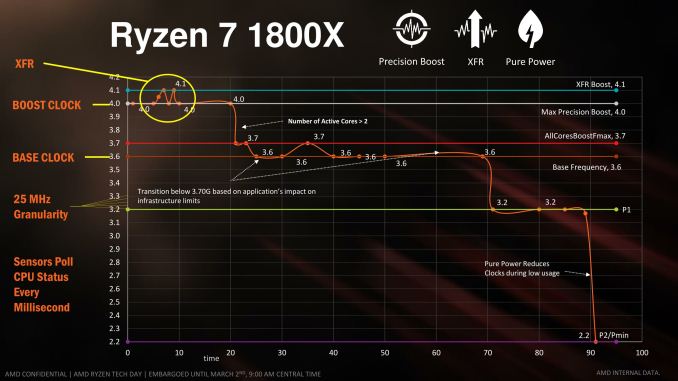

For almost a decade now, most commercial PC processors have invoked some form of boost technology to enable processors to use less power when idle and fully take advantage of the power budget when only a few elements of the core design are needed. We see processors that sit at 2.2 GHz that boost to 2.7 GHz when only one thread is needed, for example, because the whole chip still remains under the power limit. AMD is implementing Precision Boost for Ryzen, shifting the DVFS curve toward better performance thanks to Pure Power, but also offering frequency jumps in 25 MHz steps, which is new.

Precision Boost relies on the same Infinity Control Fabric that Pure Power does, but allows for adjustments of core frequency based on performance requirements and suitability/power given the rest of the core. The fact that it offers 25 MHz steps is surprising, however.

Current turbo control systems, on both AMD and Intel, are invoked by adjusting the CPU frequency multiplier. With the 100 MHz base clock on all modern CPUs, one step in the frequency multiplier gives a 100 MHz jump for the turbo modes, and only whole-number multipliers can be used.

With AMD moving to 25 MHz jumps in their turbo, this means AMD can implement 0.25x fractional multipliers, similar to how processors in the early 2000s were able to negotiate 0.5x multiplier jumps. What this means in reality is that the processor has over 100 different frequencies it can potentially operate at, although control of the fractional multipliers below P0 is left to XFR (below).
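The arithmetic behind the step count can be sketched in a few lines. The 100 MHz base clock and the 0.25x step come from the text above; the 15x to 41x multiplier range and the function name are assumptions chosen for illustration, not a published Ryzen specification.

```python
from fractions import Fraction

BASE_CLOCK_MHZ = 100  # base clock described in the article

def available_frequencies(min_multiplier, max_multiplier, step):
    """List core frequencies in MHz for multipliers from min to max, inclusive."""
    step = Fraction(step)
    multiplier = Fraction(min_multiplier)
    frequencies = []
    while multiplier <= max_multiplier:
        # Fractions keep the 0.25x arithmetic exact, so the result is a whole MHz value.
        frequencies.append(int(multiplier * BASE_CLOCK_MHZ))
        multiplier += step
    return frequencies

if __name__ == "__main__":
    # Hypothetical multiplier range: 15x (1.5 GHz) to 41x (4.1 GHz).
    whole_steps = available_frequencies(15, 41, 1)
    quarter_steps = available_frequencies(15, 41, Fraction(1, 4))
    print(len(whole_steps), "frequencies with whole multipliers")
    print(len(quarter_steps), "frequencies with 0.25x multipliers")
```

Over this assumed range, whole multipliers give 27 operating points while 0.25x multipliers give 105, which is consistent with the "over 100 different frequencies" figure.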

Part of this comes down to the extensive sensor technology, which debuted at scale for AMD in Carrizo but now offers almost 1000 sensors per chip to analyze what frequency the core can run at. AMD controls the frequency of each core independently, which suggests that users might be able to find the highest performing core and lock important software on it.

If we consider that Zen’s original chief designer was Jim Keller (and his team), known for a number of older generations of AMD processors, a similar fractional multiplier technology might be in play here. If/when we get more information on it, we will let you know.

SenseMI Stage 3: Extended Frequency Range (XFR)

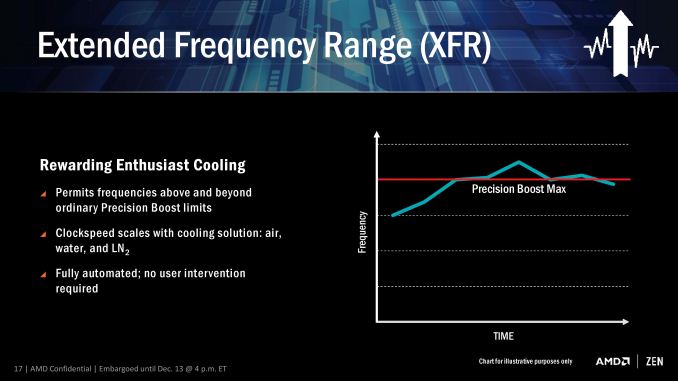

The main marketing points of on-the-fly frequency adjustment are typically down to low idle power and higher performance when needed. The current processors on the market have rated speeds on the box which are fixed frequency settings that can be chosen by the processor/OS depending on what level of performance is possible/required. AMD’s new XFR mode seems to do away with this, offering what sounds like an unlimited bound on performance.

The concept here is that, beyond the rated turbo mode, if there is sufficient cooling then the CPU will continue to increase the clock speed and voltage until a cooling limit is reached. This is somewhat murky territory, though AMD claims that a multitude of different environments can be catered for by the feature. AMD was not clear if this limit is determined by power consumption, temperature, or if they can protect from issues such as a bad frequency/voltage setting.

This is a dynamic adjustment rather than just another embedded look-up table such as P-states. AMD states that XFR is a fully automated system with no user intervention, although I suspect in time we might see an on/off switch in the BIOS. It also somewhat negates overclocking if your cooling can support it, which then brings up the issue for overclocking in general: casual users may not ever need to step into the overclocking world if the CPU does it all automatically.

I imagine that a manual overclock will still be king, especially for extreme overclockers competing with liquid nitrogen, as being able to personally fine tune a system might be better than letting the system do it itself. It can especially be true in those circumstances, as sensors on hardware can fail, report the wrong temperature, or may only be calibrated within a certain range.

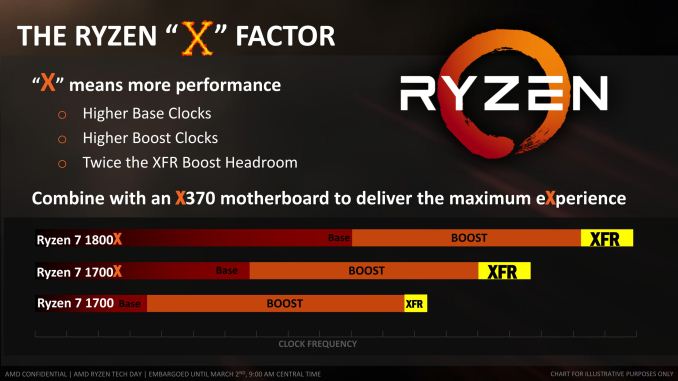

XFR will be on every consumer CPU (as the Zen microarchitecture is destined for server and mobile as well, XFR might have different connotations for both of those markets), and typically will allow for +100 MHz. CPUs that have the extra 'X' should allow for up to +200 MHz through XFR. This level of XFR is not set in stone, and may change in future CPUs.
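As a rough mental model of the behaviour described in this section, the sketch below raises the clock in 25 MHz steps above the rated turbo until either the XFR ceiling or a thermal limit is hit. Since AMD was not clear about how the limit is determined, the temperature-only check, the linear thermal models, the 4000 MHz rated turbo and all function names here are assumptions for illustration only.

```python
STEP_MHZ = 25  # Precision Boost granularity described in the article

def xfr_headroom_mhz(has_x_suffix: bool) -> int:
    """XFR headroom per the article: typically +100 MHz, up to +200 MHz on 'X' parts."""
    return 200 if has_x_suffix else 100

def settle_frequency(rated_turbo_mhz, has_x_suffix, temperature_at, temp_limit_c):
    """Step the clock up while the (hypothetical) thermal model stays within the limit."""
    ceiling = rated_turbo_mhz + xfr_headroom_mhz(has_x_suffix)
    freq = rated_turbo_mhz
    while freq + STEP_MHZ <= ceiling and temperature_at(freq + STEP_MHZ) <= temp_limit_c:
        freq += STEP_MHZ
    return freq

# Toy thermal models: temperature in Celsius rises by 1 degree per 25 MHz above 3 GHz.
def modest_cooler(freq_mhz):
    return 35 + (freq_mhz - 3000) / 25

def large_cooler(freq_mhz):
    return 25 + (freq_mhz - 3000) / 25

if __name__ == "__main__":
    for name, cooler in (("modest", modest_cooler), ("large", large_cooler)):
        print(name, settle_frequency(4000, True, cooler, temp_limit_c=75), "MHz")
```

In this toy model the modest cooler leaves the chip at its rated turbo, while the larger cooler lets it climb to the full XFR ceiling, which mirrors the claim that the benefit depends on the cooling available.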

SenseMI Stage 4+5: Neural Net Prediction and Smart Prefetch

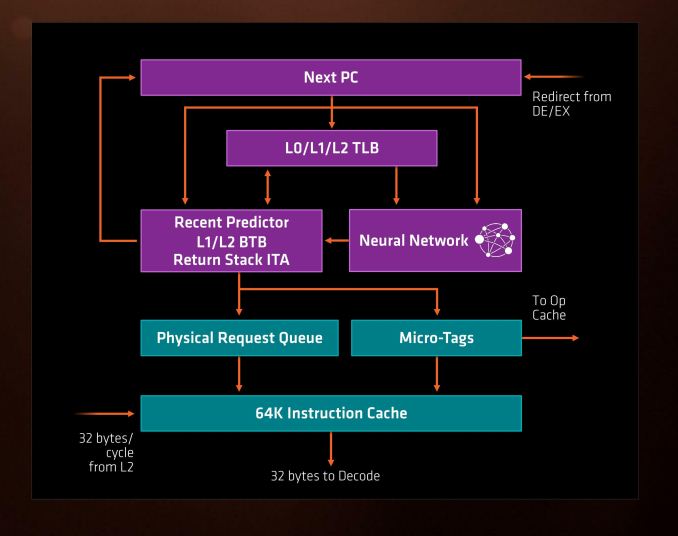

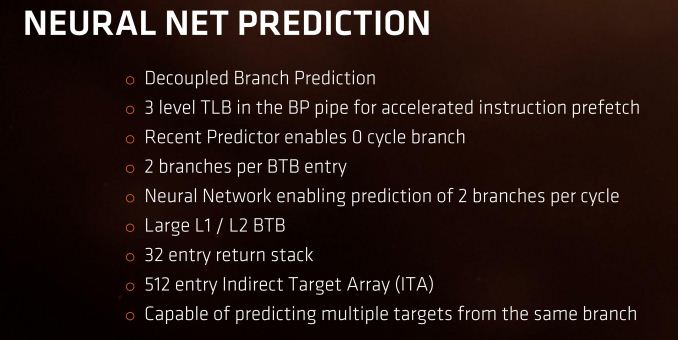

Every generation of CPUs from the big companies comes with promises of better prediction and better pre-fetch models. These are both important to hide latency within a core which might be created by instruction decode, queuing, or more usually, moving data between caches and main memory to be ready for the instructions. With Ryzen, AMD is introducing its new Neural Net Prediction hardware model along with Smart Pre-Fetch.

AMD is announcing this as a ‘true artificial network inside every Zen processor that builds a model of decisions based on software execution’. This can mean one of several things, ranging from actual physical modelling of instruction workflow to identify critical paths to be accelerated (unlikely) to statistical analysis of what is coming through the engine, attempting work during downtime that might accelerate future instructions (such as inserting an instruction to decode into an idle decoder in preparation for when it actually comes through, so that it ends up using the micro-op cache, making it quicker).

For Zen this means two branches can be predicted per cycle (so, one per thread per cycle), and a multi-level TLB to help when recently required instructions are needed again. With these caches and buffers, doubling the size typically improves the hit rate by a factor of sqrt(2), or +41%, for double the die area, and it becomes a balance of how good you want it to be compared with how much floor plan area can be dedicated to it.
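AMD has not said how its predictor is built. Purely as background, one published technique for "neural" branch prediction is the perceptron predictor (Jiménez and Lin, 2001), and the sketch below is a minimal software model of that idea rather than a description of Zen's hardware. The class name, table size and history length are arbitrary choices, and weight saturation is omitted for brevity.

```python
class PerceptronPredictor:
    """Toy perceptron branch predictor: one weight vector per table entry."""

    def __init__(self, history_length=8, table_size=64):
        self.table_size = table_size
        # Training threshold suggested in the original perceptron predictor paper.
        self.threshold = int(1.93 * history_length + 14)
        # Each entry holds a bias weight plus one weight per global history bit.
        self.weights = [[0] * (history_length + 1) for _ in range(table_size)]
        # Global history: +1 means taken, -1 means not taken.
        self.history = [-1] * history_length

    def _output(self, pc):
        w = self.weights[pc % self.table_size]
        return w[0] + sum(wi * hi for wi, hi in zip(w[1:], self.history))

    def predict(self, pc):
        """Predict taken when the weighted sum is non-negative."""
        return self._output(pc) >= 0

    def update(self, pc, taken):
        """Train on the real outcome, then shift it into the global history."""
        y = self._output(pc)
        t = 1 if taken else -1
        # Train on a misprediction or when confidence is below the threshold.
        if (y >= 0) != taken or abs(y) <= self.threshold:
            w = self.weights[pc % self.table_size]
            w[0] += t
            for i, hi in enumerate(self.history, start=1):
                w[i] += t * hi
        self.history = self.history[1:] + [t]

def run_alternating(predictor, pc, iterations):
    """Feed a taken/not-taken alternating branch; return per-iteration correctness."""
    results = []
    for i in range(iterations):
        taken = (i % 2 == 0)
        results.append(predictor.predict(pc) == taken)
        predictor.update(pc, taken)
    return results

if __name__ == "__main__":
    outcomes = run_alternating(PerceptronPredictor(), pc=0x400, iterations=200)
    print("accuracy over last 100 branches:", sum(outcomes[-100:]) / 100)
```

The appeal of this family of predictors is that the storage grows linearly with history length, which ties into the die-area trade-off described above.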

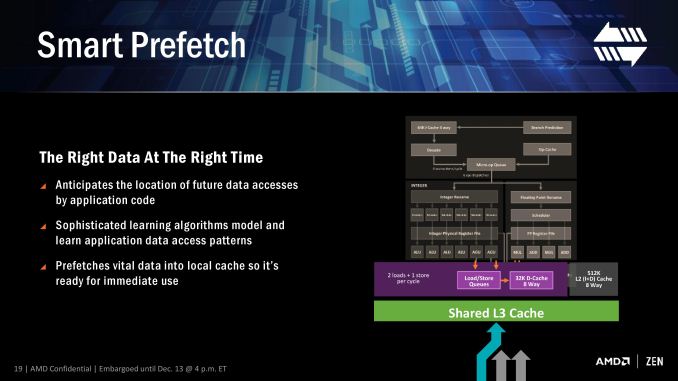

Modern processors already do a decent job with repetitive work, such as identifying when every 4th element in a memory array is being accessed and pulling that data in earlier to be ready in case it is used. The danger of smart predictors, however, is being overly aggressive: pulling in so much data that older data is ditched in favor of data that is never used (over prediction), pulling in data so early that it is already evicted by the time it is needed (aggressive prediction), or simply wasting excess power with bad predictions (stupid prediction…).
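The "every 4th element" case is what a stride prefetcher detects. The following is a minimal sketch of a single-stream stride detector, assuming byte addresses and a made-up `degree` parameter that controls how far ahead it fetches. Real hardware tracks many streams and is far more elaborate.

```python
class StridePrefetcher:
    """Toy stride detector: prefetch once the same stride is seen twice in a row."""

    def __init__(self, degree=1):
        self.degree = degree        # how many strides ahead to fetch (aggressiveness)
        self.last_address = None
        self.last_stride = None

    def access(self, address):
        """Record a demand access and return the addresses to prefetch, if any."""
        prefetches = []
        if self.last_address is not None:
            stride = address - self.last_address
            # Only act on a confirmed, non-zero, repeating stride.
            if stride != 0 and stride == self.last_stride:
                prefetches = [address + stride * k for k in range(1, self.degree + 1)]
            self.last_stride = stride
        self.last_address = address
        return prefetches

if __name__ == "__main__":
    # Every 4th element of an array of 4-byte values is a 16-byte stride.
    prefetcher = StridePrefetcher(degree=2)
    for addr in (0, 16, 32, 48, 1000):
        print(addr, "->", prefetcher.access(addr))
```

Raising `degree` pulls in more data per confirmed stride, which is exactly the knob that trades prefetch coverage against the over-prediction and wasted-power risks described above.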

AMD is stating that Zen implements algorithm learning models for both instruction prediction and prefetch, and it will no doubt be interesting to see whether they have found the right balance of prefetch aggression and extra work in prediction.

It is worth noting here that AMD will likely draw upon the increased L3 bandwidth in the new core as a key element in assisting the prefetch, especially as the shared L3 cache is an exclusive victim cache, designed to hold data that has already been used/evicted so it can be used again at a later date.

574 Comments


Notmyusualid - Saturday, March 4, 2017 - link

Can't disagree with you pal. They look like exceptional value for money.

I on the other hand, am already on LGA2011-v3 platform, so I won't be changing, but the main point here is - AMD are back. And we welcome them too.

Alexvrb - Saturday, March 4, 2017 - link

Yeah... if the pricing is as good as rumored for the Ryzen 5, I may pick up a quad-core model. Gives me an upgrade path too, maybe a Ryzen+ hexa or octa-core down the road. For budget builds that Ryzen 3 non-SMT quad-core is going to be hard to argue with though.

wut - Sunday, March 5, 2017 - link

You're really optimistically assuming things.

Kaby Lake Core i5 7400 $170

Ryzen 5 1600X $259

...and single thread benchmark shows Core i5 to be firmly ahead, just as Core i7 is. The story doesn't seem to change much in the mid range.

Meteor2 - Tuesday, March 7, 2017 - link

@wut spot-on. It also seems that Zen on GloFlo 14 nm doesn't clock higher than 4.0 GHz. Zen has lower IPC and lower actual clocks than Intel KBL.

Whichever way you cut it, however many cores in a chip are being considered, in terms of performance, Intel leads. Intel's pricing on >4 core parts is stupid and AMD gives them worthy price competition here. But at 4C and below, Intel still leads. AMD isn't price-competitive here either. No wonder Intel haven't responded to Zen. A small clock bump with Coffee Lake and a slow move to 10 nm starting with Cannon Lake for mobile CPUs (alongside or behind the introduction of 10 nm 'datacentre' chips) is all they need to do over the next year.

After all, if Intel used the same logic as TSMC and GloFlo in naming their process nodes, i.e. using the equivalent nanometre number as if finFETs weren't being used, Intel would say they're on a 10 nm process. They have a clear lead over GloFlo and thus anything AMD can do.

Cooe - Sunday, February 28, 2021 - link

I'm here from the future to tell you that you were wrong about literally everything though. AMD is kicking Intel's ass up and down the block with no end in sight.

Cooe - Sunday, February 28, 2021 - link

Hahahaha. I really fucking hope nobody actually took your "buying advice". The 6-core/12-thread Ryzen 5 1600 was about as fast at 1080p gaming as the 4c/4t i5-7400 ON RELEASE in 2017, and nowadays with modern games/engines it's like TWICE AS FAST.

deltaFx2 - Saturday, March 4, 2017 - link

I think the reviewer you're quoting is Gamers Nexus. He doesn't come across as being a particularly erudite person on matters of computer architecture. He throws a bunch of tests at it, and then spews a few untutored opinions, which may or may not be true. Tom's hardware does a lot of the same thing, and more, and their opinions are far more nuanced. Although they too could have tried to use an AMD graphics card to see if the problems persist there as well, but perhaps time was the constraint.

There's the other question of whether running the most expensive GPU at 1080p is representative of real-world performance. Gaming, after all, is visual and largely subjective. Will you notice a drop of (say) 10 FPS at 150 FPS? How do you measure goodness of output? Let's contrive something.

All CPUs have bottlenecks, including Intel. The cases where AMD does better than Intel are where AMD doesn't have the bottlenecks Intel has, but nobody has noticed it before because there wasn't anything else to stack up against it. The question that needs to be answered in the following weeks and months is, are AMD's bottlenecks fixable with (say) a compiler tweak or library change? I'd expect much of it is, but lets see. There was a comment on some forum (can't remember) that said that back when Athlon64 (K8) came out, the gaming community was certain that it was terrible for gaming, and Netburst was the way to go. That opinion changed pretty quickly.

Notmyusualid - Saturday, March 4, 2017 - link

Gamers Nexus seem 'OK' to me. I don't know the site like I do Anandtech, but since Anand missed out the games... I am forced to make my opinions elsewhere. And funny you mention Tom's, they seem to back it up to some degree too, and I know these two sites are cross-owned.

But still, when Anand get around to benching games with Ryzen, only then will I draw my final conclusions.

deltaFx2 - Sunday, March 5, 2017 - link

@ Notmyusualid: I'm sure Gamers Nexus numbers are reasonable. I think they and Tom's (and other reviewers) see a valid bottleneck that I can only guess is software optimization related. The issue with GN was the bizarre and uninformed editorializing. Comments like, the workloads that AMD does well at are not important because they can be accelerated on GPU (not true, but if true, why on earth did GN use it in the first place?). There are other cases where he drops i5s from evaluation for "methodological reasons" but then says R7 == i5. Even based on the tests he ran, this is not true. Anyway, the reddit link goes over this in far more detail than I could (or would).

Meteor2 - Tuesday, March 7, 2017 - link

@DeltaFX2 in what way was GamersNexus conclusion that tasks that can be pushed to GPUs should be incorrect? Are you saying Premiere and Blender can't be used on GPUs?

GN's conclusion was:

"If you’re doing something truly software accelerated and cannot push to the GPU, then AMD is better at the price versus its Intel competition. AMD has done well with its 1800X strictly in this regard. You’ll just have to determine if you ever use software rendering, considering the workhorse that a modern GPU is when OpenCL/CUDA are present. If you know specific instances where CPU acceleration is beneficial to your workflow or pipeline, consider the 1800X."

I think that's very fair and a very good summary of Ryzen.