HiSilicon Kirin 960: A Closer Look at Performance and Power

by Matt Humrick on March 14, 2017 7:00 AM EST

Posted in: Smartphones, Mobile, SoCs, HiSilicon, Cortex A73, Kirin 960

GPU Power Consumption and Thermal Stability

GPU Power Consumption

The Kirin 960 adopts ARM’s latest Mali-G71 GPU, and unlike previous Kirin SoCs that tried to balance performance and power consumption by using fewer GPU cores, the 960’s 8 cores show a clear focus on increasing peak performance. More cores also means more power and raises concerns about sustained performance.

We measure GPU power consumption using a method that’s similar to what we use for the CPU. Running the GFXBench Manhattan 3.1 and T-Rex performance tests offscreen, we calculate the system load power by subtracting the device’s idle power from its total active power while running each test, using each device’s onboard fuel gauge to collect data.
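The subtraction described above can be sketched in a few lines of Python. This is only an illustration, not the tooling used for these measurements: the function names and the sample readings are hypothetical, and it assumes the fuel gauge data has already been collected as lists of power samples in watts.

```python
from statistics import mean

def system_load_power(active_samples_w, idle_samples_w):
    """Average active power minus average idle power, in watts."""
    return mean(active_samples_w) - mean(idle_samples_w)

def perf_per_watt(fps, load_power_w):
    """Efficiency in frames per second per watt of system load power."""
    return fps / load_power_w

# Hypothetical fuel-gauge readings (watts), for illustration only.
idle = [0.9, 1.0, 1.1]
active = [5.0, 5.2, 4.8]

load = system_load_power(active, idle)
print(f"load power: {load:.2f} W, efficiency: {perf_per_watt(30.0, load):.2f} fps/W")
```

Subtracting idle power removes the baseline cost of the display, radios, and the rest of the platform, so the remainder approximates what the workload itself adds.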

GFXBench Manhattan 3.1 Offscreen Power Efficiency (System Load Power)

| Device (SoC) | Mfc. Process | FPS | Avg. Power (W) | Perf/W Efficiency |
|---|---|---|---|---|
| LeEco Le Pro3 (Snapdragon 821) | 14LPP | 33.04 | 4.18 | 7.90 fps/W |
| Galaxy S7 (Snapdragon 820) | 14LPP | 30.98 | 3.98 | 7.78 fps/W |
| Xiaomi Redmi Note 3 (Snapdragon 650) | 28HPm | 9.93 | 2.17 | 4.58 fps/W |
| Meizu PRO 6 (Helio X25) | 20SoC | 9.42 | 2.19 | 4.30 fps/W |
| Meizu PRO 5 (Exynos 7420) | 14LPE | 14.45 | 3.47 | 4.16 fps/W |
| Nexus 6P (Snapdragon 810 v2.1) | 20SoC | 21.94 | 5.44 | 4.03 fps/W |
| Huawei Mate 8 (Kirin 950) | 16FF+ | 10.37 | 2.75 | 3.77 fps/W |
| Huawei Mate 9 (Kirin 960) | 16FFC | 32.49 | 8.63 | 3.77 fps/W |
| Galaxy S6 (Exynos 7420) | 14LPE | 16.62 | 4.63 | 3.59 fps/W |
| Huawei P9 (Kirin 955) | 16FF+ | 10.59 | 2.98 | 3.55 fps/W |

The Mate 9’s 8.63W average is easily the highest of the group and simply unacceptable for an SoC targeted at smartphones. With the GPU consuming so much power, it’s basically impossible for the GPU and even a single A73 CPU core to run at their highest operating points at the same time without exceeding a 10W TDP, a value more suitable for a large tablet. The Mate 9 allows its GPU to hit 1037MHz too, which is a little silly. For comparison, the Exynos 7420 on Samsung’s 14LPE FinFET process, which also has an 8 core Mali GPU (albeit an older Mali-T760), only goes up to 772MHz, keeping its average power below 5W.

The Mate 9’s average power is 3.1x the Mate 8’s, but because peak performance goes up by the same factor, efficiency turns out to be equal. Qualcomm’s Adreno 530 GPU in Snapdragon 820/821 is easily the most efficient with this workload: despite achieving about the same performance as Kirin 960, it uses less than half the power.
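As a quick sanity check of these ratios, the short Python sketch below recomputes them from the Manhattan 3.1 figures in the table above. The variable names are illustrative, and because the inputs are the rounded table values, the results may differ slightly in the last digit from the published efficiency column.

```python
# Manhattan 3.1 offscreen figures from the table above: (fps, average watts).
mate8 = (10.37, 2.75)
mate9 = (32.49, 8.63)
le_pro3 = (33.04, 4.18)

power_ratio = mate9[1] / mate8[1]   # how much more power the Mate 9 draws
fps_ratio = mate9[0] / mate8[0]     # how much faster the Mate 9 is

# Efficiency is fps / W, so its ratio is fps_ratio / power_ratio.
# When both ratios are nearly equal, this lands close to 1.0.
efficiency_ratio = fps_ratio / power_ratio

# Share of the Mate 9's power that the Snapdragon 821 phone needs
# for roughly the same frame rate.
le_pro3_power_share = le_pro3[1] / mate9[1]

print(f"power x{power_ratio:.2f}, fps x{fps_ratio:.2f}, "
      f"efficiency x{efficiency_ratio:.3f}, "
      f"Le Pro3 power share {le_pro3_power_share:.0%}")
```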

GFXBench T-Rex Offscreen Power Efficiency (System Load Power)

| Device (SoC) | Mfc. Process | FPS | Avg. Power (W) | Perf/W Efficiency |
|---|---|---|---|---|
| LeEco Le Pro3 (Snapdragon 821) | 14LPP | 94.97 | 3.91 | 24.26 fps/W |
| Galaxy S7 (Snapdragon 820) | 14LPP | 90.59 | 4.18 | 21.67 fps/W |
| Galaxy S7 (Exynos 8890) | 14LPP | 87.00 | 4.70 | 18.51 fps/W |
| Xiaomi Mi5 Pro (Snapdragon 820) | 14LPP | 91.00 | 5.03 | 18.20 fps/W |
| Apple iPhone 6s Plus (A9) [OpenGL] | 16FF+ | 79.40 | 4.91 | 16.14 fps/W |
| Xiaomi Redmi Note 3 (Snapdragon 650) | 28HPm | 34.43 | 2.26 | 15.23 fps/W |
| Meizu PRO 5 (Exynos 7420) | 14LPE | 55.67 | 3.83 | 14.54 fps/W |
| Xiaomi Mi Note Pro (Snapdragon 810 v2.1) | 20SoC | 57.60 | 4.40 | 13.11 fps/W |
| Nexus 6P (Snapdragon 810 v2.1) | 20SoC | 58.97 | 4.70 | 12.54 fps/W |
| Galaxy S6 (Exynos 7420) | 14LPE | 58.07 | 4.79 | 12.12 fps/W |
| Huawei Mate 8 (Kirin 950) | 16FF+ | 41.69 | 3.58 | 11.64 fps/W |
| Meizu PRO 6 (Helio X25) | 20SoC | 32.46 | 2.84 | 11.43 fps/W |
| Huawei P9 (Kirin 955) | 16FF+ | 40.42 | 3.68 | 10.98 fps/W |
| Huawei Mate 9 (Kirin 960) | 16FFC | 99.16 | 9.51 | 10.42 fps/W |

Things only get worse for Kirin 960 in T-Rex, where average power increases to 9.51W and GPU efficiency drops to the lowest value of any device we’ve tested. As another comparison point, the Exynos 8890 in Samsung’s Galaxy S7, which uses a wider 12 core Mali-T880 GPU at up to 650MHz, averages 4.7W and is only 12% slower, making it 78% more efficient.
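The Exynos 8890 comparison can be reproduced from the T-Rex table with a small helper. This is a sketch for checking the arithmetic; `pct_change` is a hypothetical helper name, and the inputs are the rounded table values rather than raw measurements.

```python
def pct_change(new, old):
    """Percentage change of `new` relative to `old`."""
    return (new - old) / old * 100

# T-Rex offscreen figures from the table above.
kirin960_fps, kirin960_w = 99.16, 9.51
exynos8890_fps, exynos8890_w = 87.00, 4.70

# Negative value: the Exynos 8890 is slower than Kirin 960.
slowdown = pct_change(exynos8890_fps, kirin960_fps)

# Positive value: the Exynos 8890 delivers more fps per watt.
eff_gain = pct_change(exynos8890_fps / exynos8890_w,
                      kirin960_fps / kirin960_w)

print(f"frame rate: {slowdown:+.1f}%, efficiency: {eff_gain:+.1f}%")
```

Both results come out close to the 12% and 78% quoted above.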

All of the flagship SoCs we’ve tested from Apple, Qualcomm, and Samsung manage to stay below a 5W ceiling in this test, and even then these SoCs are unable to sustain peak performance for very long before throttling back because of heat buildup. Ideally, we like to see phones remain below 4W in this test, and pushing above 5W just does not make any sense.

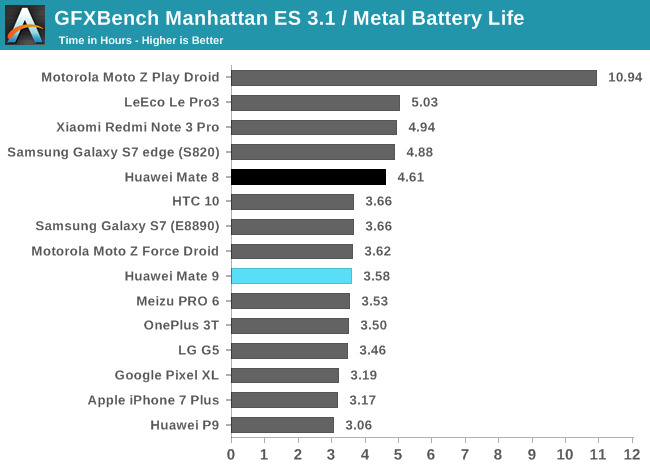

The Kirin 960’s higher power consumption has a negative impact on the Mate 9’s battery life while gaming. It runs for 1 hour less than the Mate 8, a 22% reduction that would be more pronounced if the Mate 9 did not throttle back GPU frequency during the test. Ultimately, the Mate 9’s runtime is similar to other flagship phones (with smaller batteries), while providing similar or better performance. To reconcile Kirin 960’s high GPU power consumption with the Mate 9’s acceptable battery life in our gaming test, we need to look more closely at its behavior over the duration of the test.

GPU Thermal Stability

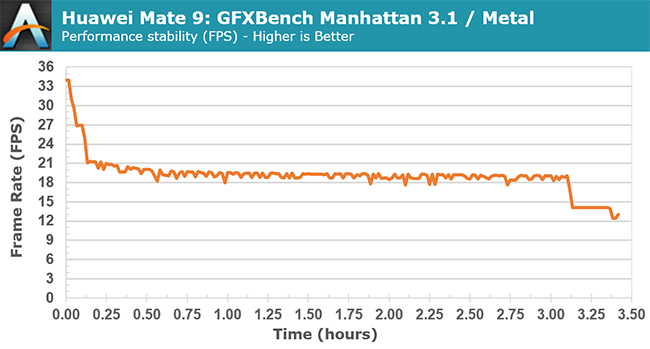

The Mate 9 only maintains peak performance for about 1 minute before reducing GPU frequency, dropping frame rate to 21fps after 8 minutes, a 38% reduction relative to the peak value. It reaches equilibrium after about 30 minutes, with frame rate hovering around 19fps, which is still better than the phones using Kirin 950/955 that peak at 11.5fps with sustained performance hovering between 9-11fps. It’s also as good as or better than phones using Qualcomm’s Snapdragon 820/821 SoCs. The Moto Z Force Droid, for example, can sustain a peak performance of almost 18fps for 12 minutes, gradually reaching a steady-state frame rate of 14.5fps, and the LeEco Pro 3 sustains 19fps after dropping from a peak value of 33fps.
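One way to compare these throttling results is to express each phone's steady-state frame rate as a fraction of its peak. The sketch below does that with the numbers quoted in the paragraph above. The helper name is hypothetical, and the Mate 9's peak is not stated directly, so it is derived here from the "21fps is a 38% reduction" figure and should be read as an approximation.

```python
def retained_fraction(sustained_fps, peak_fps):
    """Share of peak frame rate still delivered after throttling."""
    return sustained_fps / peak_fps

# 21fps is described as a 38% drop from peak, which implies the peak value.
mate9_peak = 21 / (1 - 0.38)   # derived, not measured

# (steady-state fps, peak fps) as quoted in the text above.
devices = {
    "Mate 9 (Kirin 960)": (19.0, mate9_peak),
    "LeEco Pro 3": (19.0, 33.0),
    "Moto Z Force Droid": (14.5, 18.0),
}

for name, (sustained, peak) in devices.items():
    share = retained_fraction(sustained, peak)
    print(f"{name}: {sustained:.1f}fps sustained, {share:.0%} of peak")
```

Seen this way, the Mate 9 and the LeEco Pro 3 end up at the same absolute frame rate while giving up a similar share of their peak, whereas the Moto Z Force Droid starts lower but holds on to more of it.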

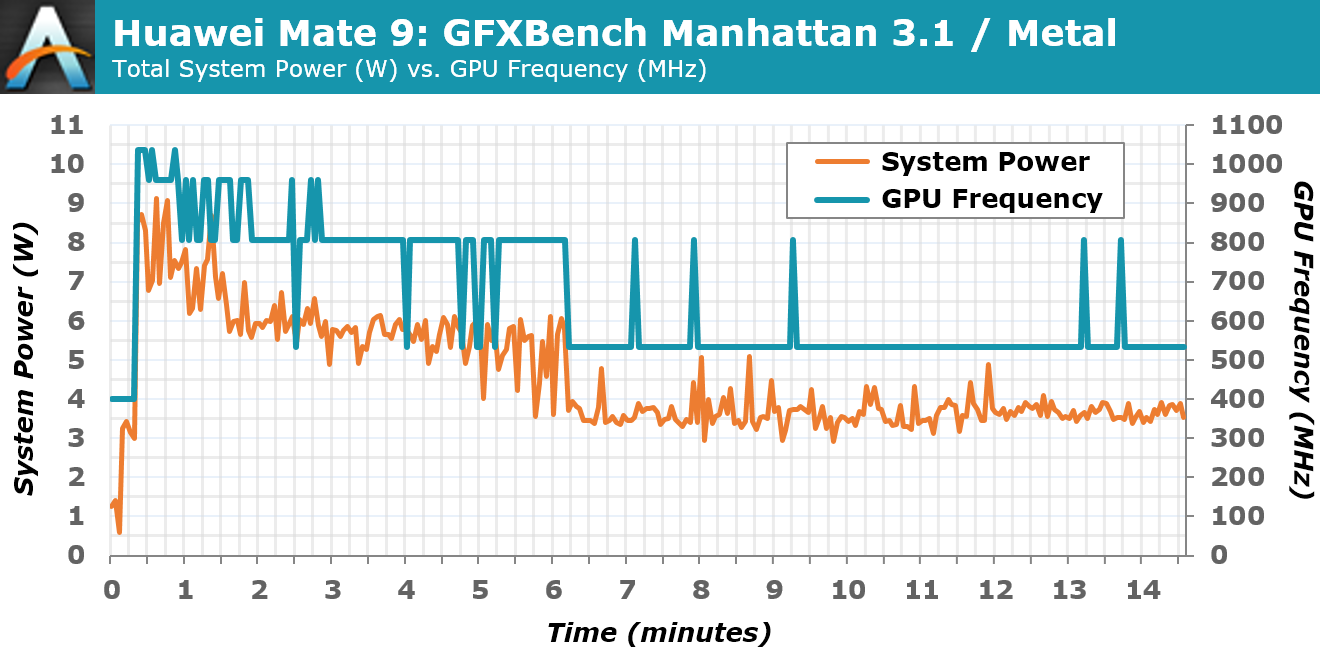

In the lower chart, which shows how the Mate 9’s GPU frequency and power consumption change during the first 15 minutes of the gaming battery test, we can see that once GPU frequency drops to 533MHz, average power consumption drops below 4W, a sustainable value that still results in performance on par with other flagship SoCs after they’ve throttled back too. This suggests that Huawei/HiSilicon should have chosen a more sensible peak operating point for Kirin 960’s GPU of 650MHz to 700MHz. The only reason to push GPU frequency to 1037MHz (at least in a phone or tablet) is to make the device look better on a spec sheet and post higher peak scores in benchmarks.

Lowering GPU frequency would not improve Kirin 960’s low GPU efficiency, however. Because we do not have any other Mali-G71 examples at this time, we cannot say if this is indicative of ARM’s new GPU microarchitecture (I suspect not) or the result of HiSilicon’s implementation and process choice.

86 Comments

lilmoe - Tuesday, March 14, 2017 - link

I read things thoroughly before criticizing. You should do the same before jumping in to support an idiotic comment like fanofanand's. He's more interested in insulting people than finding the truth.

These tests are the ones which aren't working. No one gets nearly as much battery life as they report. Nor are the performance gains anywhere near what benchmarks like geekbench are reporting. If something isn't working, one should really look for other means. That's how progress works.

You can't test a phone the same way you test a workstation. You just can't. NO ONE leaves their phone lying on a desk for hours waiting on it to finish compiling 500K lines of code, or rendering a one-hour 3D project or a 4K video file for their channel on Youtube. But they do spend a lot of time watching video on Youtube, browsing the web with 30 second pauses between each scroll, and uploading photos/videos to social media after applying filters. Where are these tests??? You know, the ones that actually MATTER for most people? You know, the ones that ST performance matters less for, etc, etc...

Anyway, I did suggest what I believe is a better, more realistic, method for testing. Hint, it's in the fifth paragraph of my original reply. But who cares right? We just want to know "which is the fastest", which method confirms our biases, regardless of the means of how such performance is achieved. Who cares about the truth.

People are stubborn. I get that. I'm stubborn too. But there's a limit to how stubborn people can be, and they need to be called out for it.

Meteor2 - Wednesday, March 15, 2017 - link

I'm with fanof and close on this one. Here we have a consistent battery of repeatable tests. They're not perfectly 'real-world' but they're not far off either; there's only so many things a CPU can do.

I like this test suite (though I'd like to see GB/clock and SPi and GB/power calculated and graphed too). If you can propose a better one, do so.

close - Wednesday, March 15, 2017 - link

This isn't about supporting someone's comment, I was very clear which part I agree with: the one where you help come up with a practical implementation of your suggestion.

Phones can and should be tested like normal desktops, since the vast majority of desktops spend most of their time idling, just like phones. The next thing is running Office-like applications, normal browsing, and media consumption.

You're saying that "NO ONE leaves their phone lying on a desk for hours waiting on it to finish compiling 500K lines of code". But how many people would find even that relevant? How many people compile 500K lines of code regularly? Or render hours of 4K video? And I'm talking about percentage of the total.

Actually the ideal case for testing any device is multiple scenarios that would cover more user types: from light browsing and a handful of phone calls to heavy gaming or media consumption. These all result in vastly different results as a SoC/phone might be optimized for sporadic light use or heavier use for example. So a phone that has best battery life and efficiency while gaming won't do so while browsing. So just like benchmarks, any result would only be valid for people who follow the test scenario closely in their daily routine.

But the point wasn't whether an actual "real world" type scenario is better, rather how exactly do you apply that real world testing into a sequence of steps that can be reproduced for every phone consistently? How do you make sure that all phones are tested "equally" with that scenario and that none has an unfair (dis)advantage from the testing methodology? Like Snapchat or FB being busier one day and burning through the battery faster.

Just like the other guy was more interested in insults (according to you), you seem more interested in cheap sarcasm than in actually providing an answer. I asked for a clear methodology. You basically said that "it would be great if we had world peace and end hunger". Great for a beauty pageant, not so great when you were asked for a testing methodology. A one liner is not enough for this. A methodology is you describing exactly how you proceed with testing the phones, step by step, while guaranteeing reproducibility and fairness. Also please explain how opening a browser, FB, or Snapchat is relevant for people who play games 2 hours per day, watch movies or actually use the phone as a phone and talk to other people.

You're making this more difficult than it should be. You look like you had plenty of time to think about this. I had half a day and already I came up with a better proposal than yours (multiple scenarios vs. single scenario). And of course, I will also leave out the exact methodology part because this is a comment competition not an actual search for solutions.

lilmoe - Wednesday, March 15, 2017 - link

I like people who actually spend some time to reply. But, again, I'd appreciate it more if you read my comments more carefully. I told you that the answer you seek is in my first reply, in the fifth paragraph. If you believe I have "plenty of time" just for "cheap sarcasm", then sure we can end it here. If you don't, then go on reading.

I actually like this website. That's why I go out of my way to provide constructive criticism. If I was simply here for trolling, my comments wouldn't be nearly as long.

SoCs don't live in a vacuum, they come bundled with other hardware and software (Screen, radios, OS/Kernel), optimized to work on the device being reviewed. In the smartphone world, you can't come to a concrete conclusion on the absolute efficiency of a certain SoC based on one device, because many devices with the same SoC can be configured to run that SoC differently. This isn't like benchmarking a Windows PC, where the kernel and governor are fixed across hardware, and screens are interchangeable.

Authors keep acknowledging this fact, yet do very little to go about testing these devices using other means. It's making it hard for everyone to understand the actual performance of said devices, or the real bang for the buck they provide. I think we can agree on that.

"You're making this more difficult than it should be"

No, really, I'm not. You are. When someone is suggesting something a bit different, but everyone is slamming them for the sake of "convention" and "familiarity", then how are we supposed to make progress?

I'm NOT saying that one should throw benchmarks out. But I do believe that benchmarks should stay in meaningful context. They give you a rough idea about the snappiness of an ultra-mobile device, since it's been proven time after time that the absolute performance of these processors is ONLY needed for VERY short bursts, unlike workstations. However, they DO NOT give you anywhere near a valid representation of average power draw and device battery life, and neither do scripts written to run synthetic/artificial workloads. Period.

This is my point. I believe the best way to measure a specific configuration is by first specifying the performance point a particular OEM is targeting, and then measuring the power draw of that target. This comes in as the average clocks of the CPU/GPU under various workloads, from gaming, browsing, playing video, to social media. It doesn't matter how "busy" these content providers are at specific times, the average clocks will be the same regardless because the workload IS the same.

I have reason to believe that OEMs are optimizing their kernels/governors for each app alone. Just like they did with benchmarks several years ago, where they ramp clocks up when they detect a benchmark running. Except, they're doing it the right way now, and optimizing specific apps to run differently on the device to provide the user with the best experience.

When you've figured out the average the OEM is targeting for various workloads, you'd certainly know how much power it's drawing, and how much battery life to expect AFTER you've already isolated other factors, such as the screen and radios. It also makes for a really nice read, as a bonus (hence, "worth investigating").

This review leaves an important question unanswered about this SoC's design (I'm really interested to know the answer); did HiSilicon cheap out on the fab process to make more money and leech on the success of its predecessor? Or did they do that with good intentions to optimize their SoC further for modern, real world workloads that currently used benchmarks are not detecting? I simply provided a suggestion to answer that question. Does that warrant the language in his, or your reply? Hence my sarcasm.

fanofanand - Tuesday, March 14, 2017 - link

It's exciting to see the envelope being pushed, and though these are some interesting results I like that they are pushing forward and not with a decacore. The G71 looks like a botched implementation if it's guzzling power that heavily, I wonder if some firmware/software could fix that? A73 still looks awesome, and I can't wait to see a better implementation!

psychobriggsy - Tuesday, March 14, 2017 - link

TBH the issue with the GPU appears to be down to the clock speed it is configured with.

It's clear that this is set for benchmarking purposes, and it's good that this has been caught.

Once the GPU settles down into a more optimal 533MHz configuration, power consumption goes down significantly. Sadly it looks like there are four clock settings for the GPU, and they've wasted three of them on stupid high clocks. A better setup looks to be 800MHz, 666MHz, 533MHz and a power saving 400MHz that most Android games would still find overkill.

Meteor2 - Wednesday, March 15, 2017 - link

Performance/Watt is frankly rubbish whatever the clock speed. Clearly they ran out of time or money to implement Bifrost properly.

fanofanand - Wednesday, March 15, 2017 - link

That's what I'm thinking, I read the preview to Bifrost and thought "wow this thing is going to be killer!" I was right on the money, except that it's a killer of batteries, not competing GPUs.

Shadowmaster625 - Tuesday, March 14, 2017 - link

What is HTML5 DOM doing that wrecks the Snapdragon 821 so badly?

joms_us - Tuesday, March 14, 2017 - link

Just some worthless test that the Monkey devs put to show how awesome iPhones are. But if you do real side-by-side website comparison between iPhone and a phone with SD821, SD821 will wipe the floor.