The Intel Core i5-7600K (91W) Review: The More Amenable Mainstream Performer

by Ian Cutress on January 3, 2017 12:01 PM EST

Power Consumption

As with all the major processor launches in the past few years, performance is nothing without good efficiency to go with it. Doing more work for less power is a design mantra across all semiconductor firms, and teaching silicon designers to build for power has been a tough job (they all want performance first, naturally). Of course there might be other tradeoffs, such as design complexity or die area, but no-one ever said designing a CPU through to silicon was easy. Most semiconductor companies that ship processors do so with a Thermal Design Power (TDP) rating, which has caused some arguments recently based on presentations broadcast about upcoming hardware.

Yes, technically the TDP rating is not the power draw. It’s a number given by the manufacturer to the OEM/system designer to ensure that the appropriate thermal cooling mechanism is employed: if you have a 65W TDP piece of silicon, the thermal solution must support at least 65W without going into heat soak. Intel and AMD also have different ways of rating TDP, either as a function of peak output running all the instructions at once, or as an indication of a ‘real-world peak’ rather than a power virus. This is a contentious issue, especially when I’m going to say that while TDP isn’t power, it’s still a pretty good metric of what you should expect to see in terms of power draw in prosumer style scenarios.

So for our power analysis, we do the following: in a system using one reasonably sized memory stick per channel at JEDEC specifications, a good cooler with a single fan, and a GTX 770 installed, we look at the long idle in-Windows power draw and a mixed AVX power draw given by OCCT (a tool used for stability testing). Taking the difference between the two, with a good power supply that is nice and efficient in the intended range (85%+ from 50W and up), gives us a good qualitative comparison between processors. I say qualitative because these numbers aren’t absolute: they are at-wall VA numbers based on the power you are charged for, rather than consumption. I am working with our PSU reviewer, E.Fylladikatis, to find the best way to measure the latter, especially when working at scale.
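As a rough illustration of the method described above, here is a minimal sketch of the idle-to-load delta. The function names and the example wall readings are hypothetical and are not measurements from this review. The DC-side estimate assumes a flat 85% PSU efficiency (the lower bound quoted above) and ignores power factor and the way efficiency varies with load, so treat it as a simplification rather than a measurement technique.

```python
def load_delta_watts(idle_wall_w: float, load_wall_w: float) -> float:
    """At-wall difference between the long idle reading and the load reading."""
    if load_wall_w < idle_wall_w:
        raise ValueError("load reading should not be below the idle reading")
    return load_wall_w - idle_wall_w


def estimate_dc_delta_watts(wall_delta_w: float, psu_efficiency: float = 0.85) -> float:
    """Rough DC-side estimate, assuming a flat PSU efficiency (a simplification)."""
    if not 0.0 < psu_efficiency <= 1.0:
        raise ValueError("psu_efficiency must be in the range (0, 1]")
    return wall_delta_w * psu_efficiency


if __name__ == "__main__":
    # Hypothetical wall readings, for illustration only.
    idle_w = 50.0
    load_w = 120.0
    delta = load_delta_watts(idle_w, load_w)
    print(f"At-wall delta: {delta:.1f} W")
    print(f"Rough DC-side delta: {estimate_dc_delta_watts(delta):.1f} W")
```

Because the same system and power supply are used for every processor, the delta is most useful for comparing chips against each other, which is why the numbers are described as qualitative.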

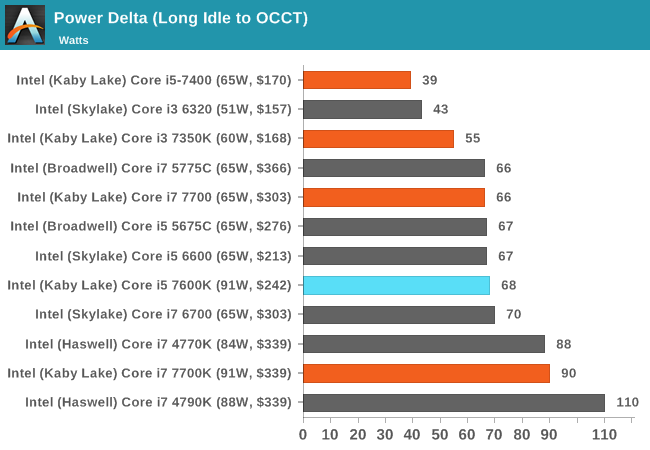

Nonetheless, here are our recent results for Kaby Lake at stock frequencies:

The Core i5-7600K, despite the 91W TDP rating, only achieved 63W in our power test. This is relatively interesting, suggesting that the Core i5 sits in a good power bracket for voltage (we see that with overclocking below), but also the mixed-AVX loading only starts piling on the power consumption when there are two hyperthreads going for those instructions at the same time per core. When there’s only one thread per core for AVX, there seems to be enough time for each of the AVX units to slow down and speed back up, reducing overall power consumption.

Overclocking

At this point I’ll assume that as an AnandTech reader, you are au fait with the core concepts of overclocking, the reason why people do it, and potentially how to do it yourself. The core enthusiast community always loves something for nothing, so Intel has put its high-end SKUs up as unlocked for people to play with. As a result, we still see a lot of users running a Sandy Bridge i7-2600K heavily overclocked for a daily system, as the performance they get from it is still highly competitive.

There’s also a new feature worth mentioning before we get into the meat: AVX Offset. We go into this more in our bigger overclocking piece, but the crux is that AVX instructions are power hungry and hurt stability when overclocked. The new Kaby Lake processors come with BIOS options to implement an offset for these instructions in the form of a negative multiplier. As a result, a user can stick on a high main overclock with a reduced AVX frequency for when the odd instruction comes along that would have previously caused the system to crash.
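To make the idea concrete, here is a small sketch of how a negative multiplier offset would play out. It assumes the offset is simply subtracted from the core multiplier while AVX code is running and that the base clock is 100 MHz; the function name and the example multiplier and offset values are hypothetical, not settings used in this review.

```python
BCLK_MHZ = 100.0  # assumed base clock


def effective_frequency_mhz(core_multiplier: int, avx_offset: int,
                            running_avx: bool, bclk_mhz: float = BCLK_MHZ) -> float:
    """Core frequency after applying the AVX offset (assumed: a plain subtraction)."""
    if avx_offset < 0 or avx_offset > core_multiplier:
        raise ValueError("avx_offset must be between 0 and the core multiplier")
    multiplier = core_multiplier - avx_offset if running_avx else core_multiplier
    return multiplier * bclk_mhz


if __name__ == "__main__":
    # Hypothetical settings: 50x main overclock with an AVX offset of 2.
    print(effective_frequency_mhz(50, 2, running_avx=False))  # non-AVX code
    print(effective_frequency_mhz(50, 2, running_avx=True))   # AVX code
```

With these example values, ordinary code would run at the full overclock while AVX code would drop by two multiplier steps, which is the trade the option is designed to offer.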

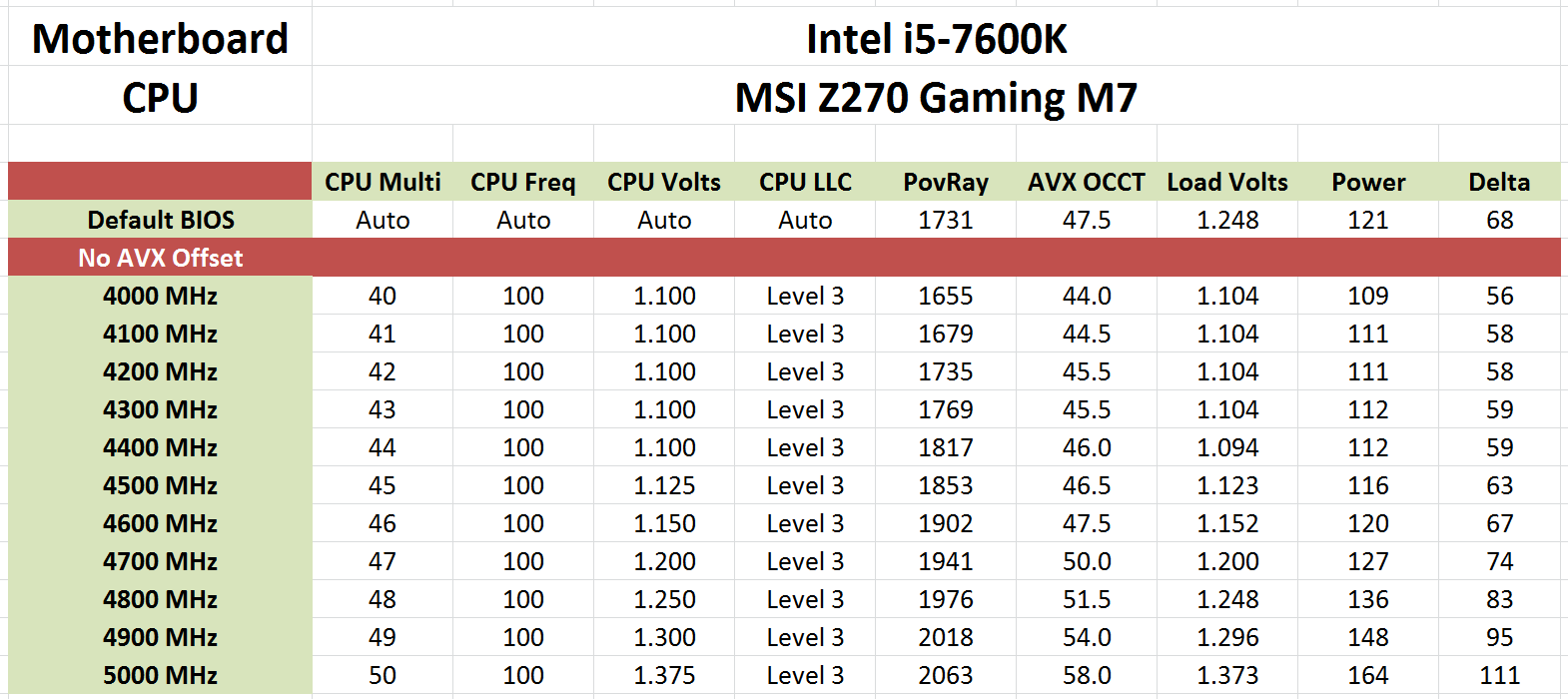

We used the AVX Offset option when overclocking the Core i7-7700K and achieved another 100-200 MHz extra frequency for non-AVX before succumbing to overheating. Ultimately that’s not a lot of frequency, but that can be enough for some users. With the Core i5-7600K, we got a great result without even touching the AVX offset option:

Straight out of the box, our retail sample achieved an OCCT stable 5.0 GHz with mixed AVX at a 1.375V setting, with the software recording only 58C peak on the core and a maximum power draw of 111W. That’s fairly astonishing: from the stock 3.8/4.2 GHz base/turbo frequencies we were able to gain up to 800 MHz over turbo for just under double the power draw of our stock result (or +20W over TDP).
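The arithmetic behind those comparisons can be checked with a short sketch. All of the figures are the ones quoted in this review; the variable names are illustrative, and the power ratio assumes the 63W stock result and the 111W overclocked result are directly comparable.

```python
# Figures quoted in the review.
TDP_W = 91           # rated TDP
STOCK_LOAD_W = 63    # stock result in the power test
OC_LOAD_W = 111      # maximum power draw at 5.0 GHz
BASE_MHZ = 3800      # stock base frequency
TURBO_MHZ = 4200     # stock turbo frequency
OC_MHZ = 5000        # overclocked frequency

power_ratio = OC_LOAD_W / STOCK_LOAD_W      # overclocked vs stock power
watts_over_tdp = OC_LOAD_W - TDP_W          # overclocked power vs rated TDP
gain_over_turbo_mhz = OC_MHZ - TURBO_MHZ    # frequency gained over stock turbo
gain_over_base_mhz = OC_MHZ - BASE_MHZ      # frequency gained over stock base

print(f"Power ratio (OC / stock): {power_ratio:.2f}x")
print(f"Watts over TDP: +{watts_over_tdp} W")
print(f"Gain over turbo: {gain_over_turbo_mhz} MHz")
print(f"Gain over base: {gain_over_base_mhz} MHz")
```

The 800 MHz figure corresponds to the gain over the 4.2 GHz turbo frequency; measured against the 3.8 GHz base, the gain is larger.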

70 Comments


Magichands8 - Tuesday, January 3, 2017

This Optane looks completely useless. But Optane DRAM sounds like it could be interesting. Depending upon how much slower it is.

lopri - Wednesday, January 4, 2017

I immediately thought of "AMD Memory" which AMD launched after the Bulldozer flop. But then again Intel have been going after this storage caching scheme for years now and I do not think it has taken them anywhere.

smilingcrow - Tuesday, January 3, 2017

Games frame rates to 2 decimal points adds nothing but making it harder to scan the numbers quickly. Enough already.

1_rick - Tuesday, January 3, 2017

One place where the extra threads of an i7 are useful is if you're using VMs (maybe to run a database server or something.) I've found that an i5 with 8GB can get bogged down pretty drastically just from running a VM.

Meteor2 - Tuesday, January 3, 2017

I kinda think that if you're running db VMs on an i7, you're doing it wrong.

t.s - Wednesday, January 4, 2017

or if you're Android developer using Android Studio.

lopri - Wednesday, January 4, 2017

That is true but as others have implied you can get 6 or 8 real core Xeons for cheaper than these new Kaby Lake chips if VM is what you need the performance of a CPU is for.

ddhelmet - Tuesday, January 3, 2017

Well I am more glad now that I got a Skylake. Even if I waited for this the performance increase is not worth it.

Kaihekoa - Tuesday, January 3, 2017

Y'all need to revise your game benchmarking analysis. At least use some current generation GPUs, post the minimum framerates, and test at 1440p. The rest of your review is exceptional, but the gaming part needs some modernization, please.

lopri - Wednesday, January 4, 2017

I thank the author for a clear yet thorough review. A lot of ground is covered and the big picture of the chip's performance and features is well communicated. I agree with the author's recommendation at the end as well. I have not felt that I am missing out on anything compared to i7's while running a 2500K for my gaming system, and unless you know for certain that you can take advantage of HyperThreading, spending the difference in dollars toward an SSD or a graphics card is a wiser expenditure that will provide you with a better computing experience.

Having said that, I am still not compelled to upgrade my 2500K which has been running at 4.8 GHz for years. (It does 5.0 GHz no problem but I run it at 4.8 to leave some "headroom") While I think the 7600K is barely a worthy upgrade (finally!) in its own right, the added cost cannot be overlooked. A new motherboard, new memory, and potentially a new heat sink will quickly add to the budget, and I am not sure if it is going to be worth all the expenses that will follow.

Of course all that could be worthwhile if overclocking was fun, but Intel pretty much have killed overclocking and the overclocking community. Intentionally if I might add. Today overclocking does not give one a sense of discovery or accomplishment. Competition between friendly enthusiasts or hostile motherboard/memory vendors has disappeared. Naturally there is no accumulation or exchange of knowledge in the community, and conversations have become frustrating and vain due to lack of overclocking expertise. Only some brute force overclocking with dedicated cooling has some following, and the occasional "overclocking" topics in the forums are really a braggadocio in disguise, of which the competition underneath is really about who spent the most on their rigs with the latest blingy stuff. Needless to say those are not as exciting or illuminating as the real overclocking of the yore, and in my opinion there are better ways to spend money for such a self-gratification without the complication that often accompanies overclocking which in the end fails to impress.

Intel might have a second thought about its overclocking policies now, but just as many things Intel have done in recent years, it is too little too late. And their chips have no headroom anyway. My work system is due for an upgrade and I am probably going to pick up a couple of E2670s which will give me 16 real cores for less than $200. Why bother with the new stuff when the IPC gain is meager and there is no fun in overclocking? And contribute to Intel's revenue? Thank you but no thank you.

P.S. Sorry I meant to commend the author for the excellent (albeit redundant) review but ended up ranting about something else. Oh well, carry on..