The Intel Core i7-7700K (91W) Review: The New Out-of-the-box Performance Champion

by Ian Cutress on January 3, 2017 12:02 PM EST

In the Words of Jeremy Clarkson: POWEEEERRRRR

As with all the major processor launches in the past few years, performance is nothing without good efficiency to go with it. Doing more work for less power is a design mantra across all semiconductor firms, and teaching silicon designers to build for power has been a tough job (they all want performance first, naturally). Of course there might be other tradeoffs, such as design complexity or die area, but no-one ever said designing a CPU through to silicon was easy. Most semiconductor companies that ship processors do so with a Thermal Design Power (TDP) rating, which has caused some arguments recently based on performance presentations.

Yes, technically the TDP rating is not the power draw. It’s a number given by the manufacturer to the OEM/system designer to ensure that the appropriate thermal cooling mechanism is employed: if you have a 65W TDP piece of silicon, the thermal solution must support at least 65W without going into heat soak. Both Intel and AMD also have different ways of rating TDP, either as a function of peak output running all the instructions at once, or as an indication of a ‘real-world peak’ rather than a power virus. This is a contentious issue, especially when I’m going to say that while TDP isn’t power, it’s still a pretty good metric of what you should expect to see in terms of power draw in prosumer style scenarios.

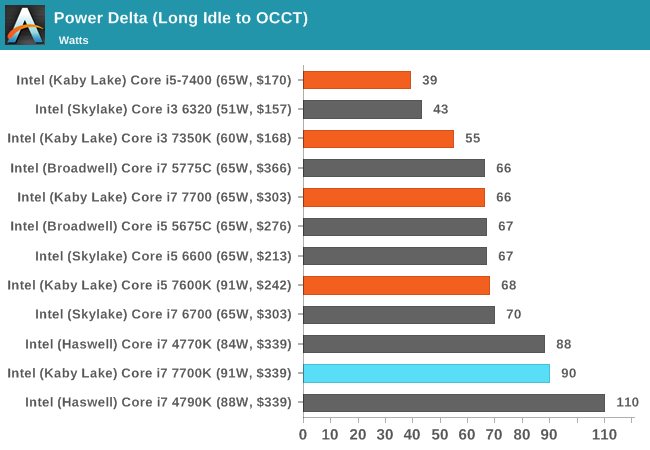

So for our power analysis, we do the following: in a system using one reasonably sized memory stick per channel at JEDEC specifications, a good cooler with a single fan, and a GTX 770 installed, we look at the long idle in-Windows power draw and a mixed AVX power draw given by OCCT (a tool used for stability testing). Taking the difference between the two, with a good power supply that is nice and efficient in the intended range (85%+ from 50W and up), gives us a good qualitative comparison between processors. I say qualitative because these numbers aren’t absolute: they are at-wall VA numbers based on the power you are charged for, rather than consumption. I am working with our PSU reviewer, E.Fylladikatis, in order to find the best way to do the latter, especially when working at scale.

Nonetheless, here are our recent results for Kaby Lake at stock frequencies:

What amazes me, if anything, is how close the Core i7 and Core i3 parts are to their TDP in our measurements. Previously, such as with the Core i7-6700K and Core i7-4790K, we saw +20W on our system compared to TDP, but the Core i7-7700K is pretty much bang on at 90W (for a 91W rated part). Similarly, the Core i3-7350K is rated at 60W and we measured it at 55W. The Core i5-7600K is a bit different due to no hyperthreading meaning the AVX units aren’t loaded as much, but more on that in that review.
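As a rough illustration of the idle-to-load comparison described above, here is a minimal Python sketch. The function names are our own, and the 45 W / 135 W wall readings are hypothetical placeholders chosen only to produce a 90 W delta; the deltas and TDP ratings in the table are the ones quoted in the text.

```python
def load_delta(idle_w, load_w):
    """Idle-to-load difference in watts, as read at the wall."""
    return load_w - idle_w


def delta_vs_tdp(delta_w, tdp_w):
    """Distance of the measured delta from the rated TDP (negative = under TDP)."""
    return delta_w - tdp_w


# Hypothetical wall readings, for illustration only (not measured values).
example_delta = load_delta(idle_w=45, load_w=135)
print(f"example delta: {example_delta} W")

# (measured delta, rated TDP) in watts, as quoted in the text.
quoted = {
    "Core i7-7700K": (90, 91),
    "Core i3-7350K": (55, 60),
}

for name, (delta_w, tdp_w) in quoted.items():
    diff = delta_vs_tdp(delta_w, tdp_w)
    print(f"{name}: {delta_w} W measured vs {tdp_w} W TDP ({diff:+d} W)")
```

Because these are at-wall numbers, the delta also includes power supply losses, so it is best read as a comparison between processors rather than an absolute CPU power figure.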

To clarify, our tests were performed on retail units. No engineering sample trickery here.

With power on the money, this perhaps means that Intel is getting the voltages of each CPU to where they should be based on the quality of the silicon. In previous generations, Intel would overestimate the voltage needed in order to capture more CPUs within a given yield – however AMD has been demonstrating of late that it is possible to tailor the silicon more based on internal metrics. Either our samples are flukes, or Intel is doing something similar here.

With power consumption in mind, let’s move on to Overclocking, and watch some sand burn a hole in a PCB (hopefully not).

Overclocking

At this point I’ll assume that as an AnandTech reader, you are au fait with the core concepts of overclocking, the reason why people do it, and potentially how to do it yourself. The core enthusiast community always loves something for nothing, so Intel has put its high-end SKUs up as unlocked for people to play with. As a result, we still see a lot of users running a Sandy Bridge i7-2600K heavily overclocked for a daily system, as the performance they get from it is still highly competitive.

Despite that, the i7-7700K has somewhat of an uphill battle. As a part with a 4.5 GHz turbo frequency, if users are expecting a 20-30% increase for a daily system then we will be pushing 5.4-5.8 GHz, which for daily use with recent processors has not happened.
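The arithmetic behind that range can be checked with a short Python sketch. The helper name `target_mhz` is ours, and working in integer MHz is simply a choice to avoid rounding noise. A 30% gain works out to 5.85 GHz, which the text quotes as 5.8 GHz.

```python
def target_mhz(base_mhz, gain_percent):
    """Frequency needed for a given percentage gain over the base, in MHz."""
    return base_mhz * (100 + gain_percent) // 100


TURBO_MHZ = 4500  # 4.5 GHz turbo frequency quoted in the text

for gain in (20, 30):
    mhz = target_mhz(TURBO_MHZ, gain)
    print(f"+{gain}% over {TURBO_MHZ / 1000:.1f} GHz -> {mhz / 1000:.2f} GHz")
```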

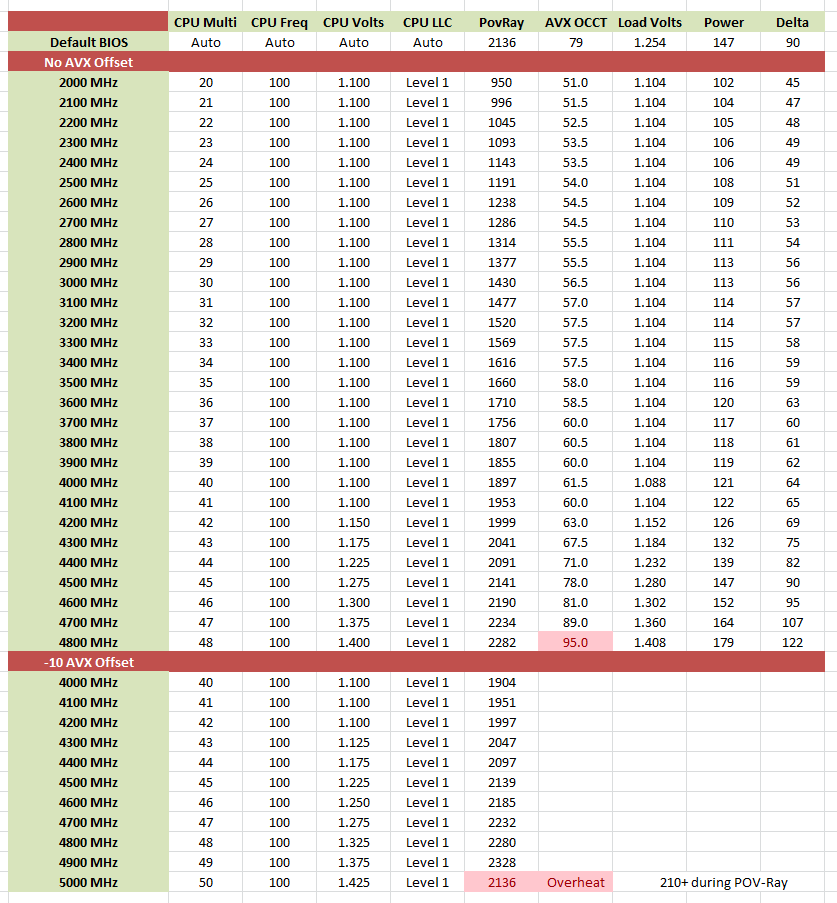

There’s also a new feature worth mentioning before we get into the meat: AVX Offset. We go into this more in our bigger overclocking piece, but the crux is that AVX instructions are power hungry and hurt stability when overclocked. The new Kaby Lake processors come with BIOS options to implement an offset for these instructions in the form of a negative multiplier. As a result, a user can stick on a high main overclock with a reduced AVX frequency for when the odd instruction comes along that would have previously caused the system to crash.

Because of this, we approached our overclocking in two ways. First, we left the AVX Offset alone, meaning our OCCT mixed-AVX stability test got the full brunt of AVX power and the increased temperature/power reading therein. We then applied a second set of overclocks with a -10 offset, meaning that at 4.5 GHz the AVX instructions were at 3.5 GHz. This did screw up some of our usual numbers that rely on the AVX part to measure power, but here are our results:
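To make the offset concrete, here is a small Python sketch of how the AVX frequency follows from the core multiplier. It assumes a 100 MHz base clock, so that one multiplier step is 100 MHz (that figure is our assumption, not stated in the text), and the function name is ours. The offset is passed as a positive number of multiplier steps to subtract.

```python
def avx_frequency_mhz(core_multiplier, avx_offset, bclk_mhz=100):
    """Frequency used for AVX code when an AVX offset is applied.

    avx_offset is the number of multiplier steps to subtract,
    so the text's "-10 offset" is avx_offset=10.
    bclk_mhz=100 is an assumed base clock.
    """
    if avx_offset < 0 or avx_offset >= core_multiplier:
        raise ValueError("offset must be between 0 and the core multiplier")
    return (core_multiplier - avx_offset) * bclk_mhz


# The case from the text: 45x (4.5 GHz) with a -10 offset.
print(avx_frequency_mhz(45, 10), "MHz for AVX at a 45x core multiplier")

# The same offset at the higher overclocks discussed later.
for multiplier in (48, 49, 50):
    print(f"{multiplier}x core -> {avx_frequency_mhz(multiplier, 10)} MHz AVX")
```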

At stock, our Core i7-7700K ran at 1.248 volts at load, drawing 90 watts (the column marked ‘delta’), and saw a temperature of 79C using our 2kg copper cooling.

After this, we put the CPU on a 20x multiplier, set it to 1.000 volt (which didn’t work, so 1.100 volts instead), set the load-line calibration to Level 1 (constant voltage on ASRock boards), and slowly went up in 100 MHz jumps. Every time the POV-Ray/OCCT stability tests failed, the voltage was raised by 0.025V.
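The stepping procedure above can be sketched as a simple loop in Python. This is only an illustration: `is_stable` stands in for a real POV-Ray/OCCT run, and `toy_is_stable` is an invented model that happens to pass through two points mentioned in this review (1.100 V holding to 4.2 GHz, 1.400 V at 4.8 GHz). Its intermediate values are made up, not measured. The 2000 MHz start assumes a 100 MHz base clock at the 20x multiplier.

```python
def find_overclock(is_stable, start_mhz=2000, start_mv=1100,
                   step_mhz=100, step_mv=25, max_mhz=4800, max_mv=1400):
    """Step frequency up; on a failed test, raise voltage and retry.

    Returns a list of (frequency in MHz, voltage in mV) pairs that passed.
    Stops early if the voltage ceiling is exceeded.
    """
    results = []
    mhz, mv = start_mhz, start_mv
    while mhz <= max_mhz:
        while not is_stable(mhz, mv):
            mv += step_mv            # raise voltage by 0.025 V and try again
            if mv > max_mv:
                return results       # give up: voltage ceiling reached
        results.append((mhz, mv))
        mhz += step_mhz              # move up one 100 MHz jump
    return results


def toy_is_stable(mhz, mv):
    """Invented stability model for illustration only, not measured data."""
    required_mv = 1100 if mhz <= 4200 else 1100 + (mhz - 4200) // 100 * 50
    return mv >= required_mv


for mhz, mv in find_overclock(toy_is_stable):
    print(f"{mhz / 1000:.1f} GHz stable at {mv / 1000:.3f} V")
```

Voltage is never lowered between steps in this sketch, which mirrors the description: each new frequency starts from the last voltage that worked.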

This gives a few interesting metrics. For a long time, we did not need a voltage increase: 1.100 volts worked as a setting all the way up to 4.2 GHz, which is what we’ve been expecting for a 14nm processor at such a frequency. From there the voltage starts increasing, but at 4.5 GHz we needed more voltage in a manual overclock to achieve stability than the CPU gave itself at stock frequency. So much for overclocking! It wasn’t until that 4.3-4.5 GHz set of results that the CPU started to get warm, as shown by the OCCT temperature values.

At 4.8 GHz, the Core i7-7700K passed POV-Ray with ease, however the 1.400 volts needed at that point were pushing the processor up to 95C during OCCT and its mixed AVX workload. At that point I decided to call an end to it, with the CPU now drawing 122W from idle to load. The fact that it is only 122W is surprisingly low – I would have thought we would be nearing 160W at this point, as with other overclockable i7 processors at this level in the past.

The second set of results is with the AVX offset. This afforded stability at 4.8 GHz and 4.9 GHz, however at 5.0 GHz and 1.425 volts the CPU was clearly going into thermal recovery modes, as given by the lower scores in POV-Ray.

Based on what we’ve heard out in the ether, our CPU sample is somewhat average to poor in terms of overclocking performance. Some colleagues at the motherboard manufacturers are seeing 5.0 GHz at 1.3 volts (with AVX offset), although I’m sure they’re not talking in terms of serious, reasonable stability.

125 Comments

ThomasS31 - Tuesday, January 3, 2017 - link

I meant difference in high end CPUs... ofc. Sorry.

Why no edit on your site? :)

pxnx - Tuesday, January 3, 2017 - link

These games are ancient, why even bother benchmarking them?

Michael Bay - Saturday, January 14, 2017 - link

>2015
>ancient

Mithan - Tuesday, January 3, 2017 - link

I have a 2500k, and I am going to upgrade (it's 6 years old).

Seriously considering a i7 7700k, people keep telling me to go for Zen because I am going to see a "big difference with games", even though those same people know that Zen will run slower on a per core basis.

I don't see this "big difference" with extra cores in today's games.

We can extrapolate based on current benchmarks, that the i7 7700k will be faster for games, as seen by using the 68xx Intel Series to compare against, even in Ashes of the Singularity.

I can see a "big difference" going from Core i5 to Core i7 or Core i5 to 6/8 Cores, but I don't see a big difference going from Core i7 to 6 or 8 cores in GAMES.

I get that unzipping documents, HandBrake, etc are all going to be faster, but I don't particularly care about all those apps I rarely use (if I use them). It isn't like a 7700k is going to choke on Chrome.

I get that a 6 or 8 core will let me play a game and stream content faster (I don't stream).

Can somebody else sound in on my opinion?

DigitalFreak - Tuesday, January 3, 2017 - link

From what's known about Zen so far, you are correct. If all you care about is standard PC stuff and gaming, you're better off with a Kaby Lake i5 or i7. It looks like Zen will be a cheaper but similar performing alternative to the Broadwell E processors for those that do more "workstation class" stuff. Of course that remains to be seen until we get some real unbiased benchmarks.

close - Wednesday, January 4, 2017 - link

Until we see some retail parts it's hard to get a good idea about Zen. Clocks may vary from ES chips and the price might be motivating enough. 5-10% less performance for 40% lower price could be appealing to anybody who's not looking only at the very highest end of every component.

close - Wednesday, January 4, 2017 - link

Also if you plan on holding onto this new CPU for a long time then go for more cores even if it comes with slightly lower clocks. You'll very likely be able to overclock it and squeeze more MHz but you'll never squeeze in more cores. And remember that 6-7 years ago dual-cores were considered the norm while today some games won't even start on a dual core.

Game performance is getting less and less dependent on CPU so personally I would always go for the CPU that offers better general performance and more cores than one with slightly higher clocks that focuses the performance in games and gaming benchmarks. If you want better game performance think of a better GPU, that will actually bring palpable improvement over generations.

I'd hold on to the old 2500k for a while, until we get some nice reviews for what's coming.

carticket - Wednesday, January 4, 2017 - link

Just popping in (and registering) to echo that the 2500k is still a great CPU and this is not a great time to hop on the upgrade train with such an incremental upgrade over Skylake.

I had a memory failure in my system, and that got me seriously considering a Kaby Lake upgrade, but for what would likely be a $500-600 upgrade, I just don't see a significant benefit. I say this as someone who hopped on the 970 train (upgrade from a 560 Ti) a few months before the 1070s hit the market with vastly better performance.

Toss3 - Wednesday, January 4, 2017 - link

The 7700K is going to be a massive upgrade and definitely worth it if you are currently on something older than Haswell. If your software/games are running at a decent framerate, and you really don't need to upgrade, then I'd suggest waiting as we'll start seeing 6 cores becoming the standard pretty soon (first with Zen and then with Coffee Lake).

close - Wednesday, January 4, 2017 - link

Aren't we talking about the exact same massive upgrade a 6700K would have provided a year ago? If that wasn't enough to convince a user to upgrade then why would it be now?

Upgrading now means they've just waited one more year with a really old CPU but ended up paying the same price for the same performance this year.

And thinking about an upgrade and those massive benefits just before we finally have a hope that AMD might launch something competitive isn't the best strategy even if your framerates already suffer. For the first time in years Intel might be forced to drop prices but why wait a couple of months when you can pay full price now for last year's CPU, right?