The Intel SSD 600p (512GB) Review

by Billy Tallis on November 22, 2016 10:30 AM EST

Performance Consistency

Our performance consistency test explores the extent to which a drive can reliably sustain performance during a long-duration random write test. Specifications for consumer drives typically list peak performance numbers only attainable in ideal conditions. Performance in a worst-case scenario can be drastically different: over the course of a long test, drives can run out of spare area, have to start performing garbage collection, and sometimes even reach power or thermal limits.

In addition to an overall decline in performance, a long test can show patterns in how performance varies on shorter timescales. Some drives will exhibit very little variance in performance from second to second, while others will show massive drops in performance during each garbage collection cycle but otherwise maintain good performance, and others show constantly wide variance. If a drive periodically slows to hard drive levels of performance, it may feel slow to use even if its overall average performance is very high.
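One rough way to quantify the "periodically slows to hard drive levels" problem is to count how much of a per-second IOPS trace falls below a chosen floor. The sketch below is illustrative only: the function names and the 200 IOPS threshold are assumptions made for this example, not part of the review's test suite.

```python
def stall_fraction(iops_per_second, threshold_iops=200):
    """Fraction of one-second samples below `threshold_iops`.

    The 200 IOPS default is an assumed stand-in for "hard drive level"
    random write performance; pick whatever floor suits the comparison.
    """
    samples = list(iops_per_second)
    if not samples:
        raise ValueError("need at least one sample")
    stalled = sum(1 for s in samples if s < threshold_iops)
    return stalled / len(samples)


def longest_stall(iops_per_second, threshold_iops=200):
    """Length, in samples, of the longest consecutive run below the threshold."""
    longest = current = 0
    for s in iops_per_second:
        current = current + 1 if s < threshold_iops else 0
        longest = max(longest, current)
    return longest
```

A drive with a high average can still report a large stall fraction or a long stall run, which is the case where it may feel slow in use.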

To maximally stress the drive's controller and force it to perform garbage collection and wear leveling, this test conducts 4kB random writes with a queue depth of 32. The drive is filled before the start of the test, and the test duration is one hour. Any spare area will be exhausted early in the test, and by the end of the hour even the largest drives with the most overprovisioning will have reached a steady state. We use the last 400 seconds of the test to score the drive both on steady-state average writes per second and on consistency, which is its average performance divided by the standard deviation.
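The two scores described above can be sketched in a few lines of Python. This is a minimal illustration, assuming the test produces one IOPS sample per second; the function name is hypothetical, and the use of the population standard deviation is an assumption, since the article does not say which form is used.

```python
from statistics import mean, pstdev

STEADY_STATE_WINDOW_S = 400  # the last 400 seconds, per the test description


def steady_state_scores(iops_per_second, window=STEADY_STATE_WINDOW_S):
    """Return (average IOPS, consistency) over the final `window` samples.

    Consistency is the average divided by the standard deviation, so a
    higher value means a steadier drive.
    """
    tail = list(iops_per_second)[-window:]
    if not tail:
        raise ValueError("need at least one sample")
    avg = mean(tail)
    spread = pstdev(tail)  # assumption: population standard deviation
    # A perfectly flat trace has zero deviation; report infinity
    # rather than dividing by zero.
    consistency = float("inf") if spread == 0 else avg / spread
    return avg, consistency
```

Two traces with the same average can score very differently: one alternating between 10 and 30 IOPS has a consistency of 2, while one holding a flat 20 IOPS has no deviation at all.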

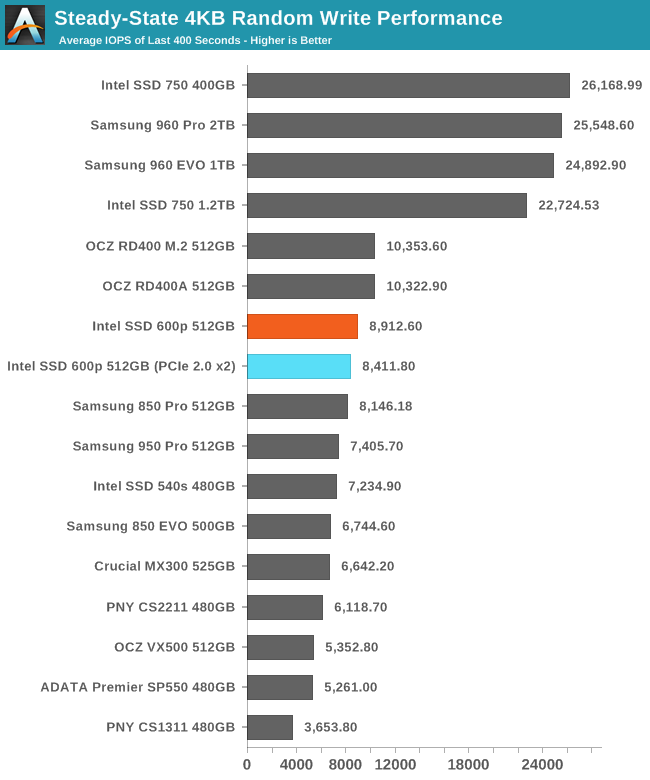

The Intel 600p's steady state random write performance is reasonably fast, especially for a TLC SSD. The 600p is faster than all of the SATA SSDs in this collection. The Intel 750 and Samsung 960s are in an entirely different league, but the OCZ RD400 is only slightly ahead of the 600p.

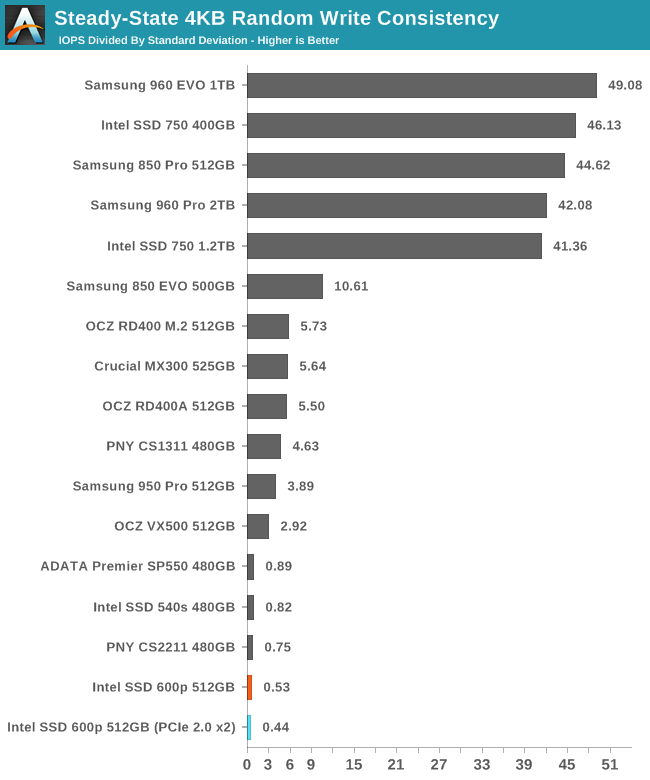

Despite a decently high average performance, the 600p has a very low consistency score, indicating that even after reaching steady state, the performance varies widely and the average does not tell the whole story.

Chart views: Default | 25% Over-Provisioning

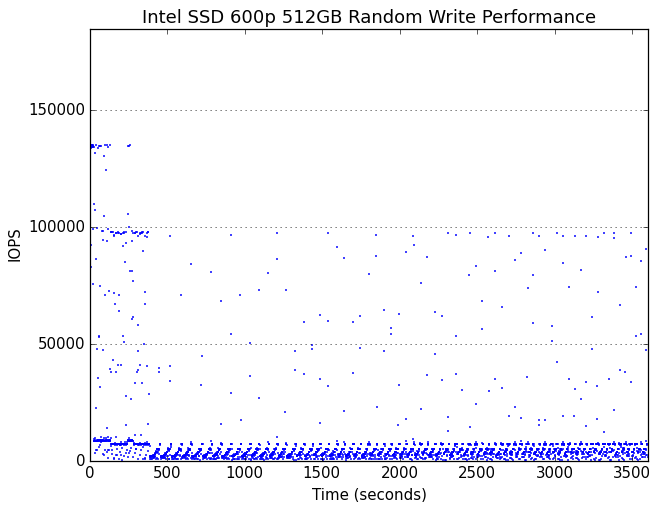

Very early in the test, the 600p begins showing cyclic drops in performance due to garbage collection. Several minutes into the hour-long test, the drive runs out of spare area and reaches steady state.

Chart views: Default | 25% Over-Provisioning

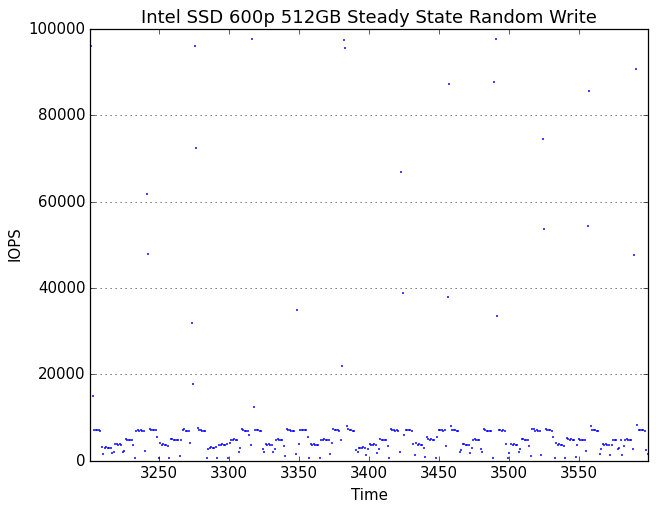

In its steady state, the 600p spends most of the time tracing out a sawtooth curve of performance that has a reasonable average but is constantly dropping down to very low performance levels. Oddly, there are also brief moments of unhindered performance where the drive spikes to exceptionally high performance of up to 100k IOPS, but these are short and infrequent enough to have little impact on the average performance. It would appear that the 600p occasionally frees up some SLC cache, which then immediately gets used up and kicks off another round of garbage collection.

With extra overprovisioning, the 600p's garbage collection cycles don't drag performance down as far, making the periodicity less obvious.

63 Comments


ddriver - Tuesday, November 22, 2016 - link

A fool can dream James, a fool can dream...He also wants to live in a really big house made of cards and bathe in dry water, so his hair don't get wet :D

Kevin G - Wednesday, November 23, 2016 - link

Conceptually a PCIe bridge/NVMe RAID controller could implement additional PCIe lanes on the drive side for RAID5/6 purposes. For example, 16 lanes to the bridge and six 4 lane slots on the other end. There is still the niche in the server space where reliability is king and having removable and redundant media is important. Granted, this niche is likely served better by U.2 for hot swap bays than M.2 but they'd use the same conceptual bridge/RAID chip proposed here.

vFunct - Wednesday, November 23, 2016 - link

> However WHY would you want to do that when you could just go get an Intel P3520 2TB drive or for higher speed a P3700 2TB drive.

Those are geared towards database applications (and great for it, as I use them), not media stores.

Media stores are far more cost sensitive.

jjj - Tuesday, November 22, 2016 - link

And this is why SSD makers should be forced to list QD1 perf numbers, it's getting ridiculous.

powerarmour - Tuesday, November 22, 2016 - link

I hate TLC.

Notmyusualid - Tuesday, November 22, 2016 - link

I'll second that.

ddriver - Tuesday, November 22, 2016 - link

Then you will love QLC

BrokenCrayons - Wednesday, November 23, 2016 - link

I'm not a huge fan either, but I was also reluctant to buy into MLC over much more durable SLC despite the cost and capacity implications. At this point, I'd like to see some of these newer, much more durable solid state memory technologies that are lurking in labs find their way into the wider world. Until then, TLC is cheap and "good enough" for relatively disposable consumer electronics, though I do keep a backup of my family photos and the books I've written...well, several backups since I'd hate to lose those things.

bug77 - Tuesday, November 22, 2016 - link

The only thing that comes to mind is: why, intel, why?

milli - Tuesday, November 22, 2016 - link

Did you test the MX300 with the original firmware or the new firmware?