The Samsung 960 Pro (2TB) SSD Review

by Billy Tallis on October 18, 2016 10:00 AM EST

A year ago, Samsung brought their PCIe SSD technology to the retail market in the form of the Samsung 950 Pro, an NVMe M.2 SSD with Samsung's 3D V-NAND flash memory. The 950 Pro didn't appear out of nowhere—Samsung had shipped two generations of M.2 PCIe SSDs to OEMs, but before the 950 Pro they hadn't targeted consumers directly.

Now, the successor to the 950 Pro is about to hit the market. The Samsung 960 Pro is from one perspective just a generational refresh of the 950 Pro: the 32-layer V-NAND is replaced with 48-layer V-NAND that has twice the capacity per die, and the UBX SSD controller is replaced by its Polaris successor that debuted earlier this year in the SM961 and PM961 OEM SSDs. However...

| Samsung 960 PRO Specifications Comparison | 960 PRO 2TB | 960 PRO 1TB | 960 PRO 512GB | 950 PRO 512GB | 950 PRO 256GB |
|---|---|---|---|---|---|
| Form Factor | Single-sided M.2 2280 | Single-sided M.2 2280 | Single-sided M.2 2280 | Single-sided M.2 2280 | Single-sided M.2 2280 |
| Controller | Samsung Polaris | Samsung Polaris | Samsung Polaris | Samsung UBX | Samsung UBX |
| Interface | PCIe 3.0 x4 | PCIe 3.0 x4 | PCIe 3.0 x4 | PCIe 3.0 x4 | PCIe 3.0 x4 |
| NAND | Samsung 48-layer 256Gb MLC V-NAND | Samsung 48-layer 256Gb MLC V-NAND | Samsung 48-layer 256Gb MLC V-NAND | Samsung 32-layer 128Gb MLC V-NAND | Samsung 32-layer 128Gb MLC V-NAND |
| Sequential Read | 3500 MB/s | 3500 MB/s | 3500 MB/s | 2500 MB/s | 2200 MB/s |
| Sequential Write | 2100 MB/s | 2100 MB/s | 2100 MB/s | 1500 MB/s | 900 MB/s |
| 4kB Random Read (QD1) | 14k IOPS | 14k IOPS | 14k IOPS | 12k IOPS | 11k IOPS |
| 4kB Random Write (QD1) | 50k IOPS | 50k IOPS | 50k IOPS | 43k IOPS | 43k IOPS |
| 4kB Random Read (QD32) | 440k IOPS | 440k IOPS | 330k IOPS | 300k IOPS | 270k IOPS |
| 4kB Random Write (QD32) | 360k IOPS | 360k IOPS | 330k IOPS | 110k IOPS | 85k IOPS |
| Read Power | 5.8W | 5.3W | 5.1W | 5.7W (average) | 5.1W (average) |
| Write Power | 5.0W | 5.2W | 4.7W | 5.7W (average) | 5.1W (average) |
| Endurance | 1200TB | 800TB | 400TB | 400TB | 200TB |
| Warranty | 5 Year | 5 Year | 5 Year | 5 Year | 5 Year |
| Launch MSRP | $1299 | $629 | $329 | $350 | $200 |

... looking at the performance specifications of the 960 Pro, it clearly is much more than just a refresh. Part of this is due to the fact that PCIe SSDs simply have more room to advance than SATA SSDs, so it's possible for Samsung to add 1GB/s to the sequential read speed and to triple the random write speed. But to bring about those improvements and stay at the top of a market segment that is seeing new competition every month, Samsung has had to make significant changes to almost every aspect of the hardware.
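To put numbers on "add 1GB/s" and "triple", here is a small sketch that compares rated figures from the specification table. The dictionary and function names are illustrative only, and the choice of which models to compare is an assumption made for this example.

```python
# Rated figures copied from the specification table (vendor ratings, not measurements).
SEQ_READ_MBPS = {"960 Pro 2TB": 3500, "950 Pro 512GB": 2500}
RAND_WRITE_QD32_KIOPS = {
    "960 Pro 2TB": 360,
    "960 Pro 512GB": 330,
    "950 Pro 512GB": 110,
}


def absolute_gain(new: float, old: float) -> float:
    """Difference between the new and old rating, in the same unit."""
    return new - old


def speedup(new: float, old: float) -> float:
    """How many times faster the new rating is than the old one."""
    return new / old


read_gain = absolute_gain(SEQ_READ_MBPS["960 Pro 2TB"], SEQ_READ_MBPS["950 Pro 512GB"])
write_speedup_2tb = speedup(
    RAND_WRITE_QD32_KIOPS["960 Pro 2TB"], RAND_WRITE_QD32_KIOPS["950 Pro 512GB"]
)
write_speedup_512 = speedup(
    RAND_WRITE_QD32_KIOPS["960 Pro 512GB"], RAND_WRITE_QD32_KIOPS["950 Pro 512GB"]
)

print(f"Sequential read gain: {read_gain} MB/s")
print(f"QD32 random write speedup (2TB vs 950 Pro 512GB): {write_speedup_2tb:.2f}x")
print(f"QD32 random write speedup (512GB vs 950 Pro 512GB): {write_speedup_512:.2f}x")
```

At equal capacity (512GB against 512GB) the rated QD32 random write speed is exactly three times higher, and the larger 960 Pro models are rated slightly above that.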

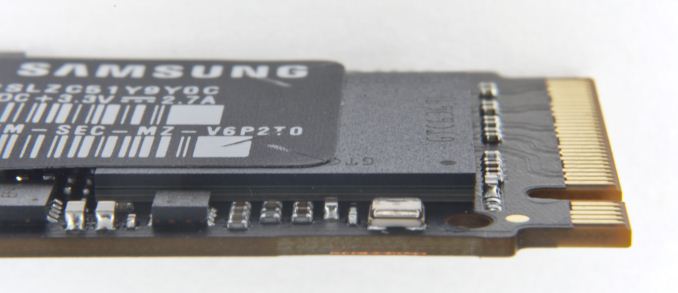

We've already analyzed Samsung's 48-layer V-NAND in reviewing the 4TB 850 EVO it first premiered in. The Samsung 960 Pro uses the 256Gb MLC variant, which allows for a single 16-die package to contain 512GB of NAND, twice what was possible for the 950 Pro. Samsung has managed another doubling of drive capacity by squeezing four NAND packages on to a single side of the M.2 2280 card. Doing this while keeping to that single-sided design required freeing up the space taken by the DRAM, which is now stacked on top of the controller in a package-on-package configuration.
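The capacity arithmetic in this paragraph can be checked with a few lines of Python. This is only a sketch of the math: the constant and function names are made up for the example, and the 950 Pro comparison assumes the same 16-die package with the 128Gb dies listed in the specification table.

```python
# Figures taken from the text: 256Gb dies, 16 dies per package, 4 packages per drive.
GBIT_PER_DIE_960 = 256
GBIT_PER_DIE_950 = 128   # 32-layer V-NAND die from the specification table
DIES_PER_PACKAGE = 16
PACKAGES_PER_DRIVE = 4
BITS_PER_BYTE = 8


def package_capacity_gb(gbit_per_die: int, dies: int) -> int:
    """Capacity of one NAND package in gigabytes."""
    return gbit_per_die * dies // BITS_PER_BYTE


package_960_gb = package_capacity_gb(GBIT_PER_DIE_960, DIES_PER_PACKAGE)
package_950_gb = package_capacity_gb(GBIT_PER_DIE_950, DIES_PER_PACKAGE)
drive_960_gb = package_960_gb * PACKAGES_PER_DRIVE

print(f"960 Pro package: {package_960_gb} GB")
print(f"950 Pro package (assumed 16 dies): {package_950_gb} GB")
print(f"960 Pro, four packages: {drive_960_gb} GB")
```

Doubling the die density doubles the package capacity, and going to four packages doubles the drive capacity again to reach 2TB.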

Samsung's Polaris controller is also a major change from the UBX controller used in the 950 Pro. Meeting the much higher performance targets of the 960 Pro with the UBX controller architecture would have required significantly higher clock speeds that the drive's power budget wouldn't allow for. Instead, the Polaris controller widens from three ARM cores to five, and now dedicates one core to communication with the host system.

The small size of the M.2 form factor combined with the higher power required to perform at the level expected of a PCIe 3.0 x4 SSD means that heat is a serious concern for M.2 PCIe SSDs. In general, these SSDs can be forced to throttle themselves rather than overheat when subjected to intensive benchmarks and stress tests, but at the same time most drives avoid thermal throttling during typical real-world use. Most heavy workloads are bursty, especially at 2GB/sec.

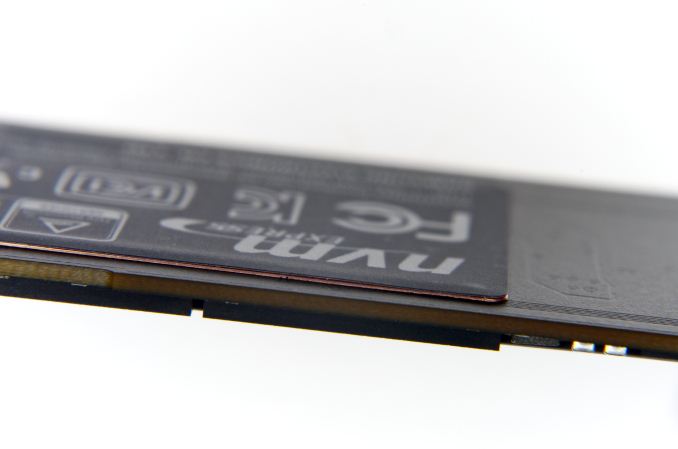

Even so, many users would prefer the benefits of reliable sustained performance offered by a well-cooled PCIe SSD, and almost every M.2 PCIe SSD is now doing something to address thermal concerns. Toshiba's OCZ RD400 is available with an optional PCIe x4 to M.2 add-in card that puts a thermal pad directly behind the SSD controller. Silicon Motion's SM2260 controller integrates a thin copper heatspreader on the top of the controller package. Plextor's M8Pe is available with a whole-drive heatspreader. Samsung has decided to put a few layers of copper into the label stuck on the back side of the 960 Pro. This is thin enough to not have any impact on the drive's mechanical compatibility with systems that require a single-sided drive, but the heatspreader-label does make a significant improvement in the thermal behavior of the 960 Pro, according to Samsung.

The warranty on the 960 Pro is five years, the same as for the 950 Pro but half of what is offered with the 850 Pro. When the 950 Pro was introduced, Samsung explained that the decreased warranty period on a higher-end product was due to NVMe and PCIe SSDs being a less mature technology than SATA SSDs. Despite having a very successful year with the 950 Pro, Samsung isn't bumping the warranty period back up to 10 years, and I would be surprised if they ever release a consumer SSD with such a long warranty period again.

Going hand in hand with the warranty period is the write endurance rating. The 512GB and 1TB models have endurance ratings that are equivalent to the drive writes per day offered by the 950 Pro. The 2TB 960 Pro's endurance rating falls short at 1200TB instead of the 1600TB that would be double the rating on the 1TB 960 Pro. When asked about this discrepancy during the Q&A session at Samsung's SSD Global Summit where the 960 Pro was announced, Samsung dodged the question and did not offer a satisfactory explanation.
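The drive-writes-per-day comparison can be worked through from the specification table. The sketch below assumes the usable capacities are 256, 512, 1024 and 2048 GB, that 1TB of endurance means 1000GB written, and that the rating is spread evenly over the five-year warranty. None of these assumptions come from Samsung, and the names are illustrative.

```python
WARRANTY_YEARS = 5
WARRANTY_DAYS = WARRANTY_YEARS * 365

# model -> (assumed capacity in GB, rated endurance in TB written)
DRIVES = {
    "960 Pro 2TB": (2048, 1200),
    "960 Pro 1TB": (1024, 800),
    "960 Pro 512GB": (512, 400),
    "950 Pro 512GB": (512, 400),
    "950 Pro 256GB": (256, 200),
}


def drive_writes_per_day(capacity_gb: float, endurance_tb: float,
                         days: int = WARRANTY_DAYS) -> float:
    """Full-drive writes per day that exhaust the rating over the warranty period."""
    return endurance_tb * 1000 / capacity_gb / days


for model, (capacity_gb, endurance_tb) in DRIVES.items():
    print(f"{model}: {drive_writes_per_day(capacity_gb, endurance_tb):.2f} DWPD")
```

Under these assumptions every model except the 2TB 960 Pro works out to about 0.43 drive writes per day, while the 2TB model comes in at about 0.32.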

The one other area where the 960 Pro does not promise significant progress is price. Despite switching to denser NAND, the MSRP of the 512GB 960 Pro is only slightly lower than the MSRP the 512GB 950 Pro launched with, and slightly higher than the current retail price of the 950 Pro. The 960 Pro is using more advanced packaging for the controller and NAND and the controller itself likely costs a bit more, but the bigger factor keeping the price up is probably the dearth of serious competition.
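A quick cost-per-gigabyte calculation shows how small the change in launch pricing is. This sketch uses the launch MSRPs from the specification table and assumes capacities of 256, 512, 1024 and 2048 GB. Current street prices are not included because the article does not give them.

```python
# model -> (assumed capacity in GB, launch MSRP in USD from the specification table)
LAUNCH_PRICES = {
    "960 Pro 2TB": (2048, 1299),
    "960 Pro 1TB": (1024, 629),
    "960 Pro 512GB": (512, 329),
    "950 Pro 512GB": (512, 350),
    "950 Pro 256GB": (256, 200),
}


def usd_per_gb(capacity_gb: float, price_usd: float) -> float:
    """Launch price divided by capacity."""
    return price_usd / capacity_gb


cost_per_gb = {
    model: usd_per_gb(capacity_gb, price_usd)
    for model, (capacity_gb, price_usd) in LAUNCH_PRICES.items()
}

for model, cost in cost_per_gb.items():
    print(f"{model}: ${cost:.2f}/GB")

# Relative drop in launch MSRP at the 512GB capacity point.
drop_512 = (350 - 329) / 350
print(f"512GB launch MSRP change: -{drop_512:.0%}")
```

At 512GB the launch MSRP drops by about 6%, from roughly $0.68/GB to $0.64/GB, despite the move to NAND with twice the capacity per die.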

When the Samsung 950 Pro launched, its main competition in the PCIe space was the Intel SSD 750, a derivative of their enterprise PCIe SSD line equipped with consumer-oriented firmware. It's big and power hungry, but it brought NVMe to the consumer market and set quite a few performance records in the process. The 950 Pro couldn't beat the SSD 750 in every test, but it came out ahead where it matters most for everyday client workloads. Since then, new NVMe controllers have arrived from Marvell, Silicon Motion and Phison. We reviewed the OCZ RD400 and found it was able to beat the 950 Pro in several tests, especially when comparing the 1TB RD400 against the largest 950 Pro, which is only 512GB. We will be comparing the 2TB Samsung 960 Pro against its predecessor and these competing high-end PCIe SSDs, as well as three 2TB-class SATA SSDs.

| AnandTech 2015 SSD Test System | |
|---|---|
| CPU | Intel Core i7-4770K running at 3.5GHz (Turbo & EIST enabled, C-states disabled) |
| Motherboard | ASUS Z97 Pro (BIOS 2701) |
| Chipset | Intel Z97 |
| Memory | Corsair Vengeance DDR3-1866 2x8GB (9-10-9-27 2T) |
| Graphics | Intel HD Graphics 4600 |
| Desktop Resolution | 1920 x 1200 |
| OS | Windows 8.1 x64 |

- Thanks to Intel for the Core i7-4770K CPU

- Thanks to ASUS for the Z97 Deluxe motherboard

- Thanks to Corsair for the Vengeance 16GB DDR3-1866 DRAM kit, RM750 power supply, Carbide 200R case, and Hydro H60 CPU cooler

72 Comments


emn13 - Wednesday, October 19, 2016 - link

Especially since NAND hasn't magically gotten lots faster after the SATA->NVMe transition. If SATA is fast enough to saturate the underlying NAND+controller combo when they must actually write to disk, then NVMe simply looks unnecessarily expensive (if you look at writes only). Since the fast NVMe drives all have ram caches, it's hard to detect whether data is properly being written.

Perhaps windows is doing something odd here, but it's definitely fishy.

jhoff80 - Tuesday, October 18, 2016 - link

This is probably a stupid question because I've been changing that setting for years on SSDs without even thinking about it and you clearly know more about this than I do, but does the use of a drive in a laptop (eg battery-powered) or with a UPS for the system negate this risk anyway? That was always my impression, but it could very much be wrong.

shodanshok - Tuesday, October 18, 2016 - link

Having a battery, laptops are inherently safer than desktop against power loss. However, a bad (or missing) battery and/or a failing cable/connector can expose the disks to the very same unprotected power-loss scenario.

Dr. Krunk - Sunday, October 23, 2016 - link

What happens if accidently press the battery release button and it pops out just enough to lose connection?

woggs - Tuesday, October 18, 2016 - link

I would love to see Anandtech do a deep dive into this very topic. It's important. I've heard that windows and other apps do excessive cache flushing when enabled and that's also a problem. I've also heard intel SSDs completely ignore the cache flush command and simply implement full power loss protection. Batching writes into ever larger pieces is a fact of SSD life and it needs to be done right.

voicequal - Tuesday, October 18, 2016 - link

Agreed. Last year I traced slow disk i/o on a new Surface Pro 4 with 256GB Toshiba XG3 NVMe to the write-cache buffer flushing, so I checked the box to turn it off. Then in July, another driver bug caused the Surface Pro 4 to frequently lock up and require a forced power off. Within a few weeks I had a corrupted Windows profile and system file issues that took several DISM runs to clean up. Don't know for sure if my problem resulted from the disabled buffer flushing, but I'm now hesitant to reenable the setting.

It would be good to understand what this setting does with respect to NVMe driver operation, and interesting to measure the impact / data loss when power loss does occur.

Kristian Vättö - Tuesday, October 18, 2016 - link

I think you are really exaggerating the problem. DRAM cache has been used in storage well before SSDs became mainstream. Yes, HDDs have DRAM cache too and it's used for the same purpose: to cache writes. I would argue that HDDs are even more vulnerable because data sits in the cache for a longer time due to the much higher latency of platter-based storage.

Because of that, all consumer friendly file systems have resilience against small data losses. In the end, only a few MB of user data is cached anyway, so it's not like we talk about a major data loss. It's small enough not to impact user experience, and the file system can recover itself in case there was metadata in the lost cache.

If this was a severe issue, there would have been a fix years ago. For client-grade products there is simply no need because 100% data protection and uptime are not needed.

shodanshok - Tuesday, October 18, 2016 - link

The problem is not the cache, rather ignoring cache flushes requests. I know DRAM caches are used from decades, and when disks lied about flushing them (in the good old IDE days), catastrophic filesystem failure were much more common (see XFS or ZFS FAQs / mailing lists for some reference, or even SATA command specifications).

I'm not exaggerating anything: it is a real problem, greatly debated in the Linux community in the past. From https://lwn.net/Articles/283161/

"So the potential for corruption is always there; in fact, Chris Mason has a torture-test program which can make it happen fairly reliably. There can be no doubt that running without barriers is less safe than using them"

This quote is ext3-specific, but other journaled filesystem behave in very similar manners. And hey - the very same Windows check box warns you about the risks related to disabling flushes.

You should really inquiry Microsoft about what these check box do on its NVMe driver. Anyway, suggesting to disable cache flushes is a bad advise (unless you don't use your PC for important things).

Samus - Wednesday, October 19, 2016 - link

I don't think people understand how cache flushing works at the hardware level.

If the operating system has buffer flushing disabled, it will never tell the drive to dump the cache, for example, when an operation is complete. In this event, a drive will hold onto whatever data is in cache until the cache fills up, then the drive firmware will trigger the controller to write the cache to disk.

Since OS's randomly write data to disk all the time, bits here and there go into cache to prevent disk thrashing/NAND wear, all determined in hardware. This has nothing to do with pooled or paged data at the OS level or RAM data buffers.

Long story short, it's moronic to disable write buffer flushing, where the OS will command the drive after IO operations (like a file copy or write) complete, ensuring the cache is clear as the system enters idle. This happens hundreds if not thousands of times per minute and its important to fundamentally protect the data in cache. With buffer flushing disabled the cache will ALWAYS have something in it until you shutdown - which is the only time (other than suspend) a buffer flush command will be sent.

Billy Tallis - Wednesday, October 19, 2016 - link

"With buffer flushing disabled the cache will ALWAYS have something in it until you shutdown - which is the only time (other than suspend) a buffer flush command will be sent."

I expect at least some drives flush their internal caches before entering any power saving mode. I've occasionally seen the power meter spike before a drive actually drops down to its idle power level, and I probably would have seen a lot more such spikes if the meter were sampling more than once per second.