AMD Zen Microarchitecture Part 2: Extracting Instruction-Level Parallelism

by Ian Cutress on August 23, 2016 8:45 PM EST - Posted in CPUs, AMD, x86, Zen, Microarchitecture

The High-Level Zen Overview

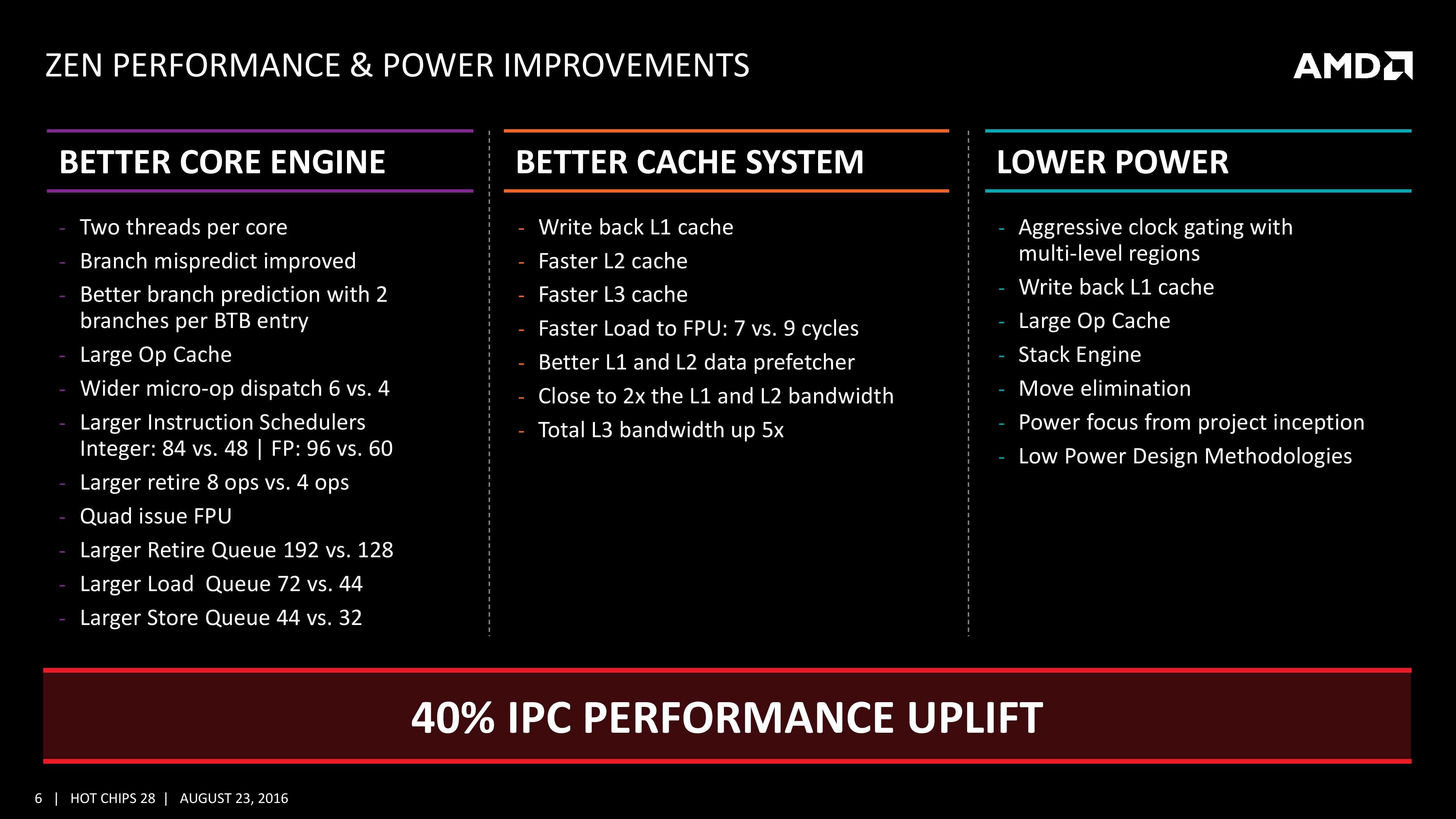

AMD is keen to stress that the Zen project had three main goals: core, cache and power. The power aspect of the design was very aggressive, not in the sense of aiming for a mobile-first design, but in that efficiency at the higher performance levels was key in order to be competitive again. It is worth noting that AMD did not mention ‘die size’ in any of the three main goals, which is usually a requirement as well. Arguably you can make a massive core design to run at high performance and low latency, but it comes at the expense of die size, which makes such a design less economical from a product standpoint (if AMD had to rely on 500 mm² die designs in consumer at 14nm, they would be priced way too high). Nevertheless, power was the main concern rather than pure performance or function, which have been typical AMD targets in the past. The shifting of the goal posts was part of the process of creating Zen.

This slide contains a number of features we will hit on later in this piece, grouped under those three main goals of core, cache and power.

For the core, having bigger and wider everything was to be expected, but maintaining low latency can be difficult. Features such as the micro-op cache help most instruction streams improve in performance and bypass parts of potentially long-cycle repetitive operations, while the larger dispatch, larger retire, larger schedulers and better branch prediction mean that higher throughput can be maintained longer and in the fastest order possible. Add in dual threads, and keeping the functional units occupied with full queues also improves multi-threaded performance.
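As a rough illustration of why a micro-op cache helps repetitive instruction streams, here is a minimal Python sketch. It is a toy model under stated assumptions, not AMD's design: the capacity, the LRU replacement policy and the `toy_decode` stand-in are all hypothetical, chosen only to show that a loop which fits in the cache pays for decode once and then bypasses the decoders.

```python
from collections import OrderedDict


class OpCache:
    """Toy micro-op cache: maps an instruction address to its decoded micro-ops."""

    def __init__(self, capacity):
        self.capacity = capacity        # hypothetical size, not Zen's real one
        self.entries = OrderedDict()    # kept in least-recently-used order
        self.hits = 0
        self.decodes = 0

    def fetch(self, address, decode):
        if address in self.entries:
            self.hits += 1
            self.entries.move_to_end(address)   # mark as most recently used
            return self.entries[address]
        # Miss: pay for the full decode, then remember the result.
        self.decodes += 1
        uops = decode(address)
        self.entries[address] = uops
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)    # evict the least recently used
        return uops


def toy_decode(address):
    # Stand-in for the real x86 decoders (assumption: one micro-op each).
    return ("uop", address)


def run_loop(cache, loop_addresses, iterations):
    """Fetch the same instruction addresses repeatedly, as a loop body would."""
    for _ in range(iterations):
        for address in loop_addresses:
            cache.fetch(address, toy_decode)
    return cache.hits, cache.decodes
```

In this model a loop of 8 instructions run 100 times through a 16-entry cache is decoded only on the first pass. If the loop is larger than the cache, the LRU policy assumed here evicts each entry before it is reused and every fetch goes back through decode.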

For the caches, having a faster prefetch and better algorithms ensures the data is ready in each of the caches when a thread needs it. Aiming for faster caches was AMD’s target, and while they are not disclosing latencies or bandwidth at this time, we are being told that L1/L2 bandwidth is doubled, with L3 up to 5x.

For the power, AMD has taken what it learned with Carrizo and moved it forward. This involves more aggressive monitoring of critical paths around the core, and better control of the frequency and power in various regions of the silicon. Zen will have more clock regions (it seems various parts of the back-end and front-end can be gated as needed) with features that help improve power efficiency, such as the micro-op cache, the Stack Engine (a dedicated low-power address manipulation unit) and Move elimination (a low-power method for register adjustment: pointers to registers are adjusted rather than going through the high-power scheduler).
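The idea behind move elimination, adjusting pointers to registers instead of sending the move through the scheduler, can be sketched in a few lines of Python. This is a simplified illustration rather than AMD's implementation: the `RenameTable` class, the register names and the physical register count are assumptions, and the sketch ignores freeing and reference-counting of physical registers.

```python
class RenameTable:
    """Toy register renamer: architectural register name -> physical register id."""

    def __init__(self, arch_regs, num_physical):
        # Give each architectural register its own physical register to start.
        self.mapping = {reg: i for i, reg in enumerate(arch_regs)}
        self.free = list(range(len(arch_regs), num_physical))
        self.executed_uops = 0   # work that has to go through a scheduler

    def write(self, dest):
        """An ordinary instruction that produces a new value in dest."""
        self.mapping[dest] = self.free.pop(0)   # allocate a fresh physical register
        self.executed_uops += 1

    def move(self, dest, src):
        """Register-to-register move, eliminated at rename time."""
        # Only the pointer changes; nothing is scheduled or executed.
        self.mapping[dest] = self.mapping[src]
```

For example, after `write("rax")` followed by `move("rbx", "rax")`, both names point at the same physical register and only one micro-op has been executed. A later `write("rax")` gives `rax` a new physical register while `rbx` keeps the old value.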

The Big Core Diagram

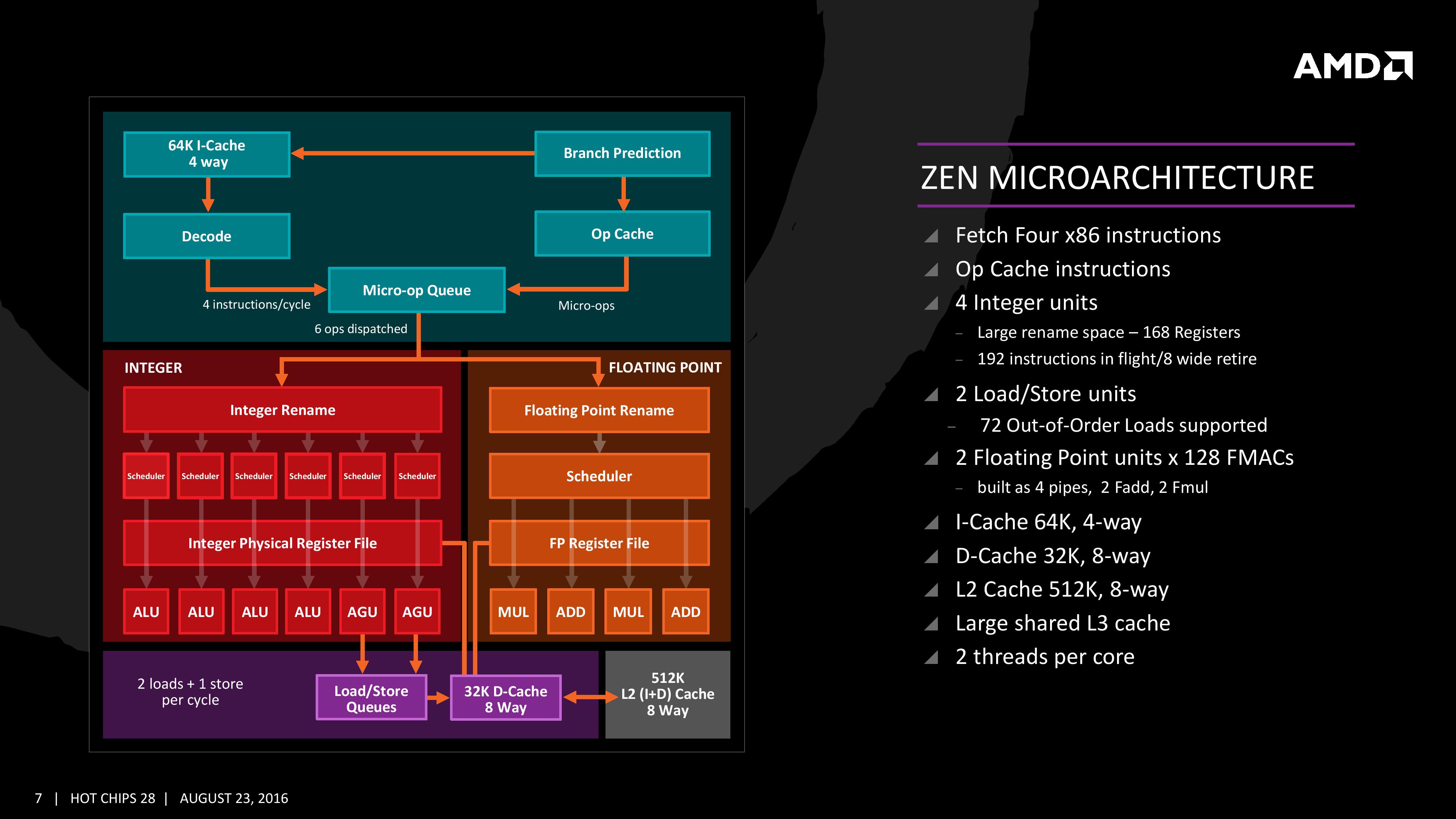

We saw this diagram last week, but now we get updates on some of the bigger features AMD wants to promote:

The improved branch predictor allows for 2 branches per Branch Target Buffer (BTB) entry, but in the event of tagged instructions will filter through the micro-op cache. On the other side, the decoder can dispatch 4 instructions per cycle, though some of those instructions can be fused in the micro-op queue. Fused instructions still come out of the queue as two micro-ops, but take up less buffer space as a result.
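The buffer-space benefit of fusion described above can be shown with a small Python sketch. It is a toy model only: the `MicroOpQueue` class, its slot count and the micro-op names are hypothetical, and real fusion rules are far more restrictive than "any two micro-ops".

```python
from collections import deque


class MicroOpQueue:
    """Toy micro-op queue in which a fused pair occupies a single slot."""

    def __init__(self, slots):
        self.slots = slots        # hypothetical capacity
        self.entries = deque()

    def push(self, *uops):
        """Insert one entry holding one micro-op, or two fused micro-ops."""
        if len(uops) not in (1, 2):
            raise ValueError("an entry holds one micro-op or one fused pair")
        if len(self.entries) >= self.slots:
            return False          # queue full, the front end would stall
        self.entries.append(tuple(uops))
        return True

    def pop(self):
        """Remove the oldest entry and hand back its micro-ops separately."""
        return list(self.entries.popleft())
```

With two slots, a fused `("load", "add")` pair plus a single `"mul"` fills the queue, yet three micro-ops come out the other side: the pair leaves as two separate micro-ops, having used only one slot while waiting.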

As mentioned earlier, the INT and FP pipes and schedulers are separated. The INT rename space is 168 registers wide and feeds into 6x14 scheduling queues. The FP side employs a 160-entry register file, and both the FP and INT sections feed into a 192-entry retire queue. The retire queue can operate at 8 instructions per cycle, moving up from 4/cycle in previous AMD microarchitectures.
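To put the retire numbers above in context, here is a small Python sketch of in-order retirement. The figures come from the text; reading "6x14" as six queues of 14 entries each is an assumption, and the model itself (a queue of finished/unfinished flags, with the function names used here) is purely illustrative.

```python
from collections import deque

RETIRE_QUEUE_ENTRIES = 192       # from the article
RETIRE_WIDTH_ZEN = 8             # instructions per cycle, from the article
RETIRE_WIDTH_PREVIOUS = 4        # previous AMD designs, from the article
INT_SCHEDULER_ENTRIES = 6 * 14   # assumes "6x14" means six 14-entry queues


def retire_one_cycle(queue, width):
    """Retire up to `width` ops from the head, stopping at the first unfinished op."""
    retired = 0
    while queue and retired < width and queue[0]:
        queue.popleft()
        retired += 1
    return retired


def cycles_to_drain(num_ops, width):
    """Cycles needed to retire `num_ops` ops that have all finished executing."""
    queue = deque([True] * num_ops)   # True = finished executing
    cycles = 0
    while queue:
        retire_one_cycle(queue, width)
        cycles += 1
    return cycles
```

Under this model a full 192-entry queue of completed instructions drains in 24 cycles at 8 per cycle, against 48 cycles at 4 per cycle. Retirement is in order, so a single unfinished instruction at the head holds back everything behind it regardless of width.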

The load/store units are improved, supporting 72 out-of-order loads, similar to Skylake. We’ll discuss this a bit later. On the FP side there are four pipes (compared to three in previous designs) which support combined 128-bit FMAC instructions. These cannot be combined for one 256-bit AVX2, but can be scheduled for AVX2 over two instructions.
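The way a 256-bit operation can be carried out on 128-bit hardware is sketched below in Python. This is an assumption-laden illustration, not a description of Zen's actual scheduling: vectors are modelled as lists of eight lanes standing in for 32-bit floats, the function names are hypothetical, and the single rounding step of a real fused multiply-add is not modelled.

```python
LANES_128 = 4   # four 32-bit lanes fit in 128 bits
LANES_256 = 8   # eight 32-bit lanes fit in 256 bits


def fma128(a, b, c):
    """One 128-bit multiply-add: a * b + c on each of four lanes."""
    if not (len(a) == len(b) == len(c) == LANES_128):
        raise ValueError("fma128 expects four lanes per operand")
    return [x * y + z for x, y, z in zip(a, b, c)]


def fma256_as_two_ops(a, b, c):
    """A 256-bit multiply-add carried out as two 128-bit halves.

    Returns the eight-lane result and the number of 128-bit ops used.
    """
    if not (len(a) == len(b) == len(c) == LANES_256):
        raise ValueError("fma256_as_two_ops expects eight lanes per operand")
    low = fma128(a[:LANES_128], b[:LANES_128], c[:LANES_128])
    high = fma128(a[LANES_128:], b[LANES_128:], c[LANES_128:])
    return low + high, 2
```

The result is the same as a native 256-bit operation would give, but it occupies two 128-bit issue slots, which is why peak 256-bit throughput per pipe is lower than on a design with full-width units.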

106 Comments


atlantico - Friday, August 26, 2016

Wow looncraz!! Really cool effort you made :)

Spunjji - Saturday, August 27, 2016

Your numbers are different to everyone else's. Given that you don't cite any of your sources, I believe everyone else.

Krysto - Wednesday, August 24, 2016

I would hope they try to double the cores of Intel for notebooks.

Dual-core Zen without SMT will DESTROY Intel's Atom-based Celerons and Pentiums at the low-end. There will be absolutely ZERO reason to get a Celeron or Pentium notebook once Zen appears on the market at that price range.

But at the Core i3 and Core i5 levels, I was hoping AMD would price a quad-core Zen with no SMT against dual-core Core i3 and Core i5, and a quad-core Zen with SMT against Intel's quad-core (no HT) Core i5, and finally 8-core with and without SMT variants against Intel's quad-core Core i7 chips (with HT).

If they can basically double the cores compared to what Intel has to offer at around the same price level, and maybe with only slightly worse single-thread performance and slightly worse power consumption, AMD's chips should be a NO-BRAINER. The value would be incredible, and it would push the market towards having powerful quad-core chips by default for most PCs. Intel is going to HATE that, because it would seriously cut into their profits. So AMD could use that strategy to both offer great value products and hurt Intel significantly.

looncraz - Wednesday, August 24, 2016

AMD is not seeking the low end, they are trying to redefine AMD as the top-tier CPU company they once were. They are aiming for the top and the bulk of the market.

Zen+'s 15% IPC improvement over Zen might just give them the performance crown, but I'm sure Intel has taken note and planned accordingly.

zaza - Wednesday, August 24, 2016

But the AMD CCX module is a quad-core module. I am not sure if it is easy for AMD to just remove two.

looncraz - Wednesday, August 24, 2016

Very easy, you just fuse off the defective core, that's the beauty of independent cores. The core complex just shares a common data bus and third level cache. Disabling a core in the complex will simply have it not ask for data on the common data bus. The L3 cache may or may not be cut down (probably will be).

H2323 - Wednesday, August 24, 2016

"While Zen is initially a high-performance x86 core at heart, it is designed to scale all the way from notebooks to supercomputers, or from where the Cat cores (such as Jaguar and Puma) were all the way up to the old Opterons and beyond, all with at least +40% IPC."

https://www.youtube.com/watch?v=eUSJfGehKDQ

In the video it's more than 40% across all of the internal testing.

Vigilant007 - Saturday, August 27, 2016

I don't know if AMD will ever have a major win in the PC industry again. Realistically they'll end up focusing on building custom x86 for consoles, and server chips. I can also see them exploiting their ability to do x86 to design custom chips for Apple.

AMD could end up being a fantastic acquisition target as well.

Tuna-Fish - Tuesday, August 23, 2016

From page 3:

> and L2 with 512 entries and support for 4K and 256K pages only.

Surely you meant 4k and 2MB pages only?

deltaFx2 - Tuesday, August 23, 2016

Ian, an error here: "It also states that the L3 is mostly inclusive of the L2 cache, which stems from the L3 cache as a victim cache for L2 data." A victim L3 is by definition an exclusive cache (as you note elsewhere). Also I don't understand why you have the impression that a victim cache is less efficient than an inclusive cache. As you note, an inclusive cache has to keep duplicate copies of data in L2 and L3 whereas an exclusive cache stores exactly 1 copy (either L2 or L3 but never both). In an exclusive cache hierarchy, a cache block is inserted into the L2, and when evicted, is put into the L3. In an inclusive cache hierarchy, a cache block is inserted both into the L2 and L3. Doesn't the exclusive hierarchy make better use of space? Incidentally, AMD has done exclusive caches since K8 at least. This isn't new.