Micron Announces QuantX Branding For 3D XPoint Memory (UPDATED)

by Billy Tallis on August 9, 2016 10:45 AM EST - Posted in SSDs, Micron, 3D XPoint, Flash Memory Summit, QuantX

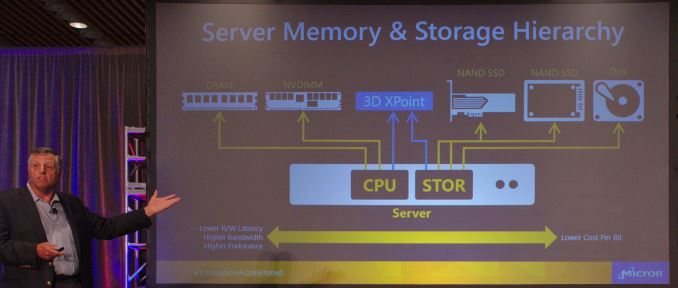

In a keynote speech later this morning at Flash Memory Summit, Micron will unveil the brand name and logo for their products based on 3D XPoint memory. The new QuantX brand is Micron's counterpart to Intel's Optane brand and will be used for the NVMe storage products that we will hear more about later this year. 3D XPoint memory was jointly announced by Intel and Micron shortly before last year's Flash Memory Summit, and we analyzed the details that were available at the time. Since then there has been very little new official information but much speculation. We do know that the initial products will be NVMe SSDs rather than NVDIMMs or other memory-bus-attached devices.

Micron VP Darren Thomas takes the stage at 11:30 AM PT, and we'll update this article with any further information.

UPDATE:

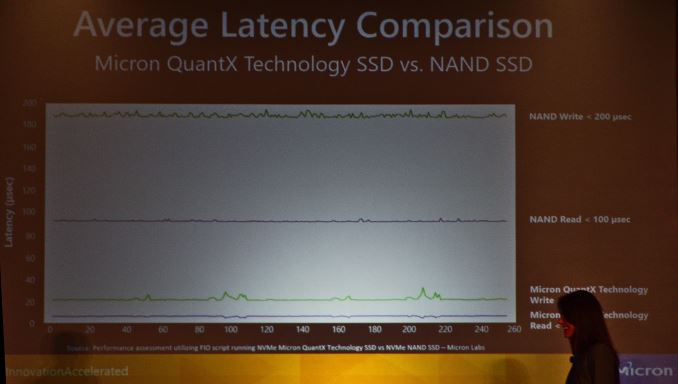

Micron's keynote reiterated their strategy of positioning 3D XPoint, and thus QuantX products, between NAND flash and DRAM, highlighting the advantages of 3D XPoint relative to each (while leaving the disadvantages unmentioned). But then they moved on to showing some meaningful performance graphs from actual benchmarks of QuantX drives.

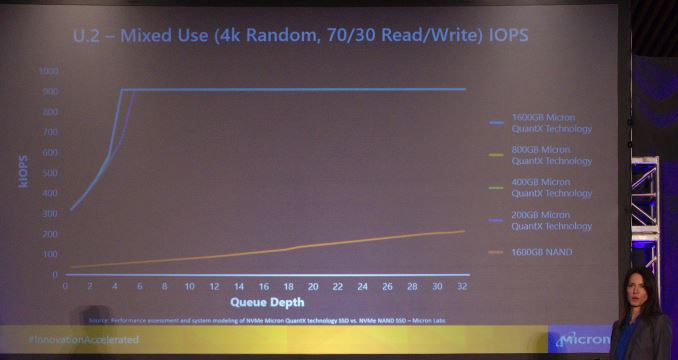

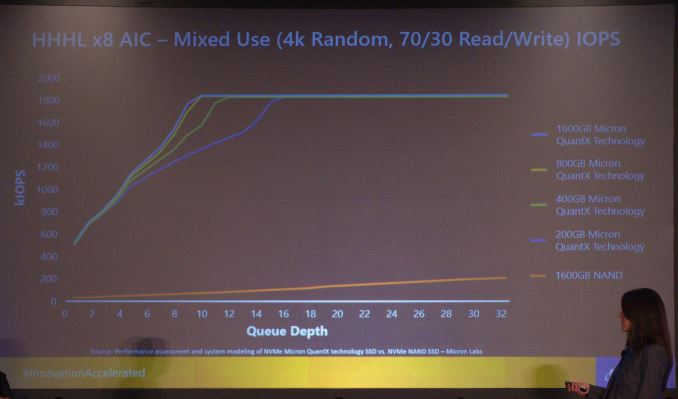

The tenfold improvement in both read and write latency for NVMe drives is great, but perhaps the more impressive results are the graphs showing queue depth scaling. In a comparison of U.2 NVMe drives, Micron showed the performance of QuantX drives ranging from 200GB to 1600GB relative to a 1600GB NAND flash NVMe SSD. With a 70/30 mix of random reads and random writes, the QuantX drives saturated the PCIe x4 link at queue depths of just 4-6, while the NAND SSD got nowhere close to saturating the link with random accesses. The next comparison covered PCIe x8 add-in cards, where the QuantX drives saturated the link at queue depths between 10 and 16, depending on capacity.

This great performance at low queue depths will make it relatively easy for a wide variety of workloads to benefit from the raw performance 3D XPoint memory offers. By contrast, the biggest and fastest PCIe SSDs based on NAND flash often require careful planning on the software side to achieve full utilization.
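Why lower latency translates into saturation at lower queue depths follows from Little's Law: the number of outstanding requests needed to sustain a given throughput equals that throughput multiplied by the per-request latency. The Python sketch below is a back-of-the-envelope illustration, not Micron's methodology. The usable link bandwidth (about 3.5 GB/s, assuming PCIe 3.0 x4), the 4 KiB transfer size and the 100 µs and 10 µs latencies are all assumed round numbers chosen to mirror the tenfold latency gap; none of them are figures from the keynote.

```python
# Little's Law: outstanding requests (queue depth) = throughput (IOPS) x latency.
# All inputs below are illustrative assumptions, not Micron's measurements.

def queue_depth_needed(link_bytes_per_s: float, io_size_bytes: int, latency_s: float) -> float:
    """Outstanding I/Os required to keep a link fully busy, per Little's Law."""
    iops_to_saturate = link_bytes_per_s / io_size_bytes
    return iops_to_saturate * latency_s

LINK_BW = 3.5e9   # assumed usable bandwidth of a PCIe 3.0 x4 link, bytes/s
IO_SIZE = 4096    # assumed 4 KiB random transfers

nand = queue_depth_needed(LINK_BW, IO_SIZE, 100e-6)   # assumed ~100 µs NAND latency
xpoint = queue_depth_needed(LINK_BW, IO_SIZE, 10e-6)  # assumed 10x lower latency

print(f"NAND: QD ~{nand:.0f}, 3D XPoint: QD ~{xpoint:.0f}")
```

Under these assumptions, a tenfold cut in latency cuts the queue depth needed to fill the link by the same factor, from roughly 85 to roughly 9 outstanding I/Os. Real drives also depend on internal parallelism and controller overhead, so this only captures the order of magnitude, not the exact queue depths in Micron's graphs.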

Source: Micron

52 Comments


AnnonymousCoward - Wednesday, August 10, 2016

Huh? IoT doesn't need speed.

Wardrop - Tuesday, August 9, 2016

Can we get rid of this Maxx Hop guy in the comments?

ddriver - Tuesday, August 9, 2016

Nah, IoT lives matter!

Lolimaster - Wednesday, August 10, 2016

With the Radeon SSG, I think AMD is developing their own version of 3D XPoint, hence the "next gen memory" after HBM2. It would be awesome if they changed the CPU market with an integrated APU+(VRAM+NVDIMM) all in one package.

HollyDOL - Wednesday, August 10, 2016

I understand the reasons for integrated components: it's much faster, more compact, etc. OTOH, the old modularity had its benefits and I kind of liked it more; you bought exactly what you needed. These days you get plenty... and while for some people that has benefits, for others it's just wasted silicon. I wish I could configure a CPU at the hardware level to have no iGPU at all, or a motherboard to have a specific NIC, 6 SATA-3, U.2, a pump-capable 4-pin fan slot, this and that PCIe slot, but no FireWire or sound. Often you pay quite a bit extra to get the one feature you wanted, or you sacrifice the feature. Sounds like I am getting old runt, doh.

BrokenCrayons - Wednesday, August 10, 2016

You can get iGPU-less chips from AMD. Athlon X4 CPUs have no onboard graphics (though they're probably all harvested A-series processors with faulty graphics). Of course, you'll suffer in processor performance for it. I own an X4 860K, which was purchased to replace an old Q6600. The X4 was faster, but surprisingly not by as large a margin as I thought.

fanofanand - Wednesday, August 10, 2016

This is nostalgia for the sake of nostalgia. Having everything on the package decreases interconnect lengths, simplifies wiring, reduces complexity for motherboard manufacturers, decreases latency, and reduces electrical usage. There is absolutely zero reason for having the "modularity" you describe.

BrokenCrayons - Wednesday, August 10, 2016

I admit that I'm conflicted when it comes to greater component integration's benefits and the flexibility of end user replaceable components. There's no doubt the reasons for shifting more functions into the CPU package are really good ones, but I disagree with the idea that there's no reason at all to retain the modularity Holly pointed out. Dispersing heat generation over a larger physical area is a good idea. Look at how hot Skylake processors run when they have 72 EUs and eDRAM in the chip package. And though those parts are extremely fast for iGPUs, they still fall short, giving end users a reason to add a dedicated graphics card when they hit a GPU performance wall while still having more than enough CPU power for their workloads. Such is the case with many owners of Sandy Bridge desktops right now, where a GPU and an SSD upgrade will keep them happy for another year or three. Build-to-order is another good reason to retain some flexibility in computing devices. OEMs have an easier time swapping or adding parts to suit their customers' needs than hoping to sell all of them the same system or stocking many differently configured devices that cannot be changed just prior to shipping.

FunBunny2 - Wednesday, August 10, 2016

-- I admit that I'm conflicted when it comes to greater component integration's benefits and the flexibility of end user replaceable components.

"Integration" is just another word for monopoly. And folks wonder why it's so easy to attack x86 machines?

fanofanand - Wednesday, August 10, 2016

He has made the same stupid IoT comment several times. Nobody gives a rip about IoT. Maybe in 5 years, but the computing power in such tiny devices is simply inadequate today.