Assessing IBM's POWER8, Part 1: A Low Level Look at Little Endian

by Johan De Gelas on July 21, 2016 8:45 AM ESTMulti Threading Prowess

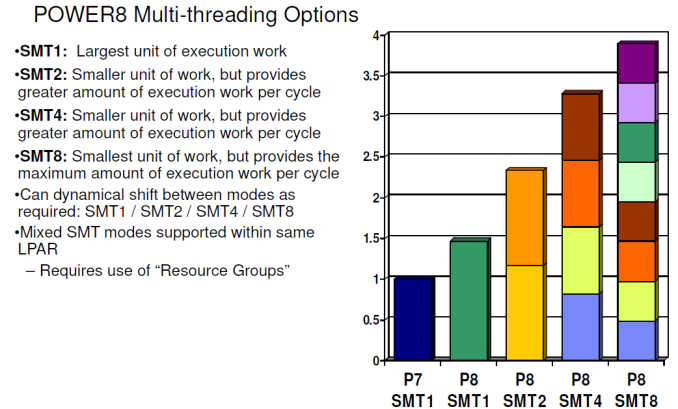

The gains of 2-way SMT (Hyperthreading) on Intel processors are still relatively small (10-20%) in many applications. The reason is that threads have to share most of the critical resources such as L1-cache, the instruction TLB, µop cache, and instruction queue. That IBM uses 8-way SMT and still claims to get significant performance gains piqued our interest. Is this just benchmarketing at best or did they actually find a way to make 8-way SMT work?

It is interesting to note that with 2-way SMT, a single thread is still running at about 80% of its performance without SMT. IBM claims no less than a 60% performance increase due to 2-way SMT, far beyond what Intel has ever claimed (30%). This can not be simply explained by the higher amount of issue slots or decoding capabilities.

The real reason is a series of trade-offs and extra resource investments that IBM made. For example, the fetch buffer contains 64 instructions in ST mode, but twice as many entries are available in 2-way SMT mode, ensuring each thread still has a 64 instruction buffer. In SMT4 mode, the size of the fetch buffer for each thread is divided in 2 (32 instructions), and only in SMT8 mode things get a bit cramped as the buffer is divided by 4.

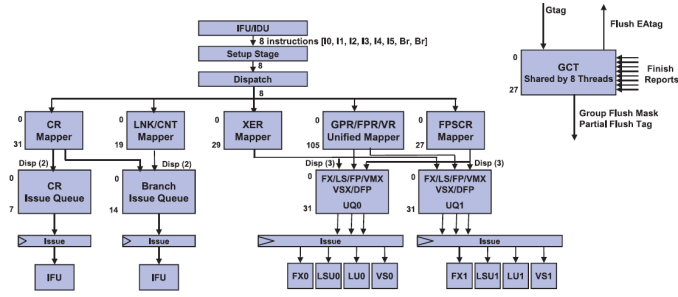

The design philosophy of making sure that 2 threads do not hinder each other can be found further down the pipeline. The Unified Issue Queue (UniQueue) consists of two symmetric halves (UQ0 and UQ1), each with 32 entries for instructions to be issued.

Each of these UQs can issue instructions to their own reserved Load/Store, Integer (FX), Load, and Vector units. A single thread can use both queues, but this setup is less flexible (and thus less performant) than a single issue queue. However, once you run 2 threads on top of a core (SMT-2), the back-end acts like it consists of two full-blown 5-way superscalar cores, each with their own set of physical registers. This means that one thread cannot strangle the other by using or blocking some of the resources. That is the reason why IBM can claim that two threads will perform so much better than one.

It is somewhat similar to the "shared front-end, dual-core back-end" that we have seen in Bulldozer, but with (much) more finesse. For example, the data cache is not divided. The large and fast 64 KB D-cache is available for all threads and has 4 read ports. So two threads will be able to perform two loads at the same time. Another example is that a single thread is not limited to one half, but can actually use both, something that was not possible with Bulldozer.

Dividing those ample resources in two again (SMT-4) should not pose a problem. All resources are there to run most server applications fast and one of the two threads will regularly pause when a cache miss or other stalls occur. The SMT-8 mode can sometimes be a step too far for some applications, as 4 threads are now dividing up the resources of each issue queue. There are more signs that SMT-8 is rather cramped: instruction prefetching is disabled in SMT-8 modus for bandwidth reasons. So we suspect that SMT-8 is only good for very low IPC, "throughput is everything" server applications. In most applications, SMT-8 might increase the latency of individual threads, while offering only a small increase in throughput performance. But the flexibility is enormous: the POWER8 can work with two heavy threads but can also transform itself into a lightweight thread machine gun.

124 Comments

View All Comments

JohanAnandtech - Thursday, July 28, 2016 - link

Send me a mail at johan@anandtech.comabufrejoval - Thursday, August 4, 2016 - link

Hmm, a bit fuzzy after the first paragraph or so and evidently because I dislike malwaretizement: Such links should be banned!mystic-pokemon - Friday, July 22, 2016 - link

Hi floobitFor virtualization: powerVM and out of the box KVM (tested on Fedora 23, Ubuntu 15.04 / 15.10 / 16.04) work quite well. Xen doesn't work well or hasn't been officially tested / released.

tipoo - Thursday, July 21, 2016 - link

Fun! I was always curious about this processor.tipoo - Thursday, July 21, 2016 - link

Interesting that the L3 eDRAM not only allows them to pack in much more L3 (what was it, 3 SRAM transistors per eDRAM or something?), but it's also low latency which was a cited concern with eDARM by some people. Appears to be an unfounded fear.And then on top of that they put another large L4 eDRAM cache on.

Maybe Intel needs to play with eDRAM more...

tipoo - Thursday, July 21, 2016 - link

Lol, eDRAM, not eDARMKevin G - Thursday, July 21, 2016 - link

There was a change in how the L4 cache works from Broadwell to SkyLake on the mobile parts. The implication is that Intel was exploring the idea of a large L4 eDRAM for SkyLake-EP/EX parts. We'll see how that turns out as Intel also has explored using HMC as a cache for high bandwidth applications in Knights Landing. So either way, Intel has thus idea on there radar and we'll see how it pans out next year.tsk2k - Thursday, July 21, 2016 - link

Is it possible to run Windows on one of these?ZeDestructor - Thursday, July 21, 2016 - link

At the moment, a very solid no.That said, if enough partners ask for it and/or if the numbers make sense for Azure, MS will at the very least have a damn good look at porting Windows over.

DanNeely - Thursday, July 21, 2016 - link

It's probably just a case of doing QA and releasing it. They've sold a PPC build in the past; and maintain internal builds for a number of other CPU architectures to avoid accidentally baking x86isms into the core code.