The Intel Broadwell-E Review: Core i7-6950X, i7-6900K, i7-6850K and i7-6800K Tested

by Ian Cutress on May 31, 2016 2:01 AM EST

Posted in: CPUs, Intel, Enterprise, Prosumer, X99, 14nm, Broadwell-E, HEDT

Professional Performance: Windows

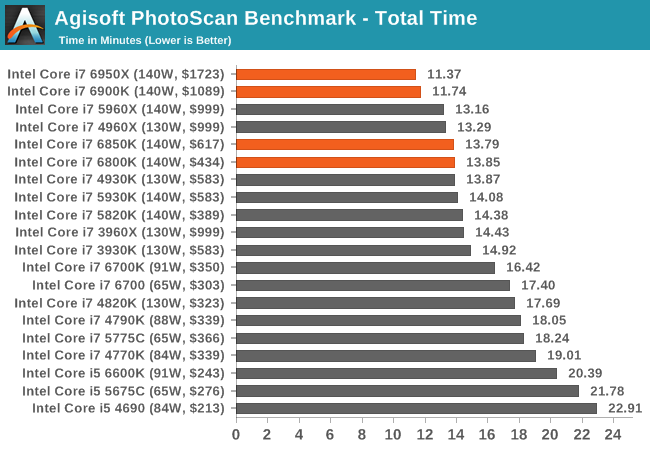

Agisoft Photoscan – 2D to 3D Image Manipulation

Agisoft Photoscan creates 3D models from 2D images, a process that is very computationally expensive. The algorithm is split into four distinct phases, and different phases of the model reconstruction benefit from fast memory, high IPC, more cores, or even OpenCL compute devices. Agisoft supplied us with a special version of the software to script the process, in which we take 50 images of a stately home and convert them into a medium quality model. This benchmark typically takes around 15-20 minutes on a high end PC on the CPU alone, with GPUs reducing the time.

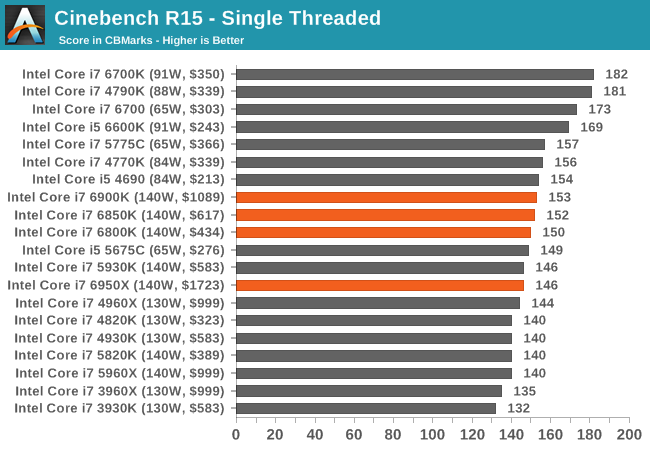

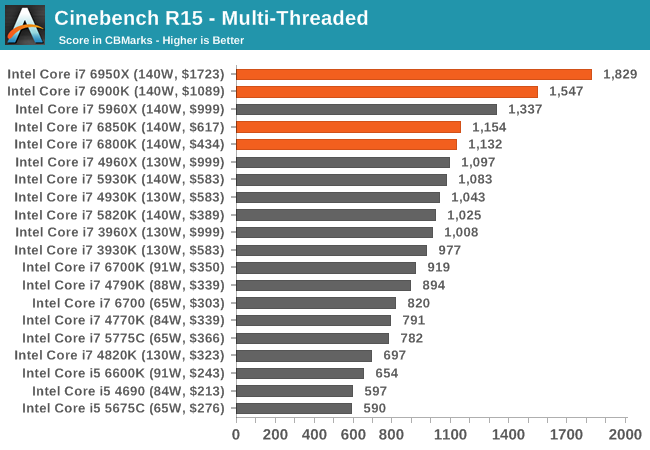

Cinebench R15

Cinebench is a benchmark based around Cinema 4D, and is fairly well known among enthusiasts for stressing the CPU with a fixed workload. Results are given as a score, where higher is better.

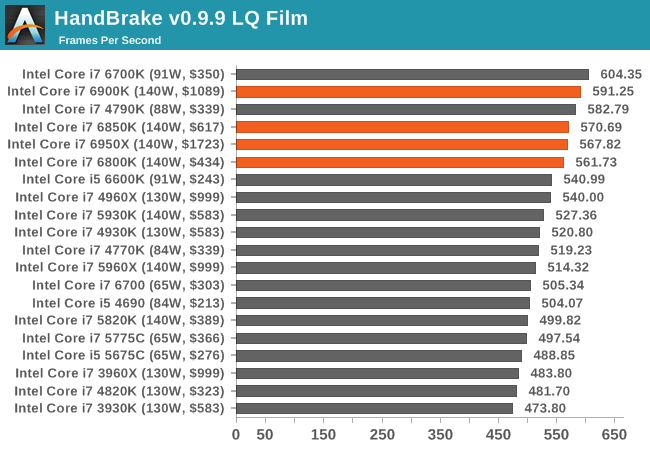

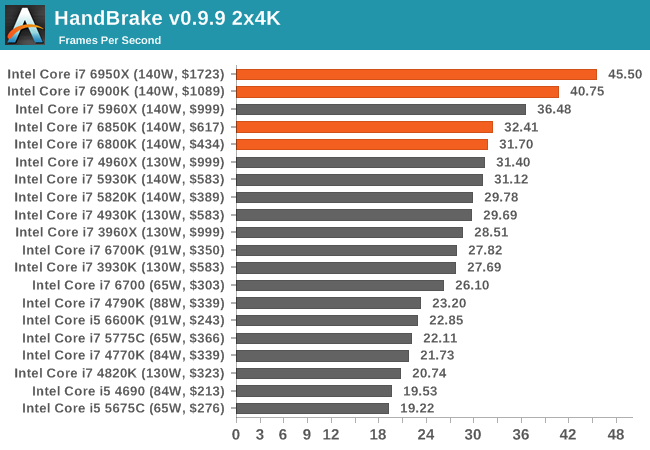

HandBrake v0.9.9

For HandBrake, we take two videos (a 2h20 640x266 DVD rip and a 10min double UHD 3840x4320 animation short) and encode them with x264 into an MP4 container. Results are given in terms of the frames per second processed, and HandBrake uses as many threads as possible.

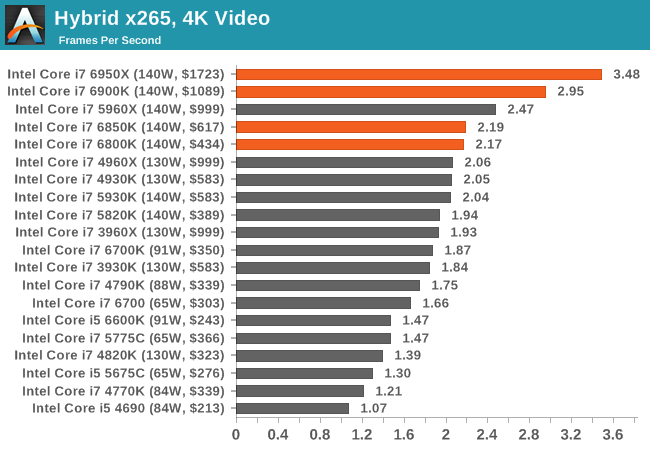

Hybrid x265

Hybrid is a new benchmark, where we take a 4K 1500 frame video and encode it with x265, without audio. Results are given in frames per second.
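As a rough illustration of how a frames-per-second result relates to the encode time, here is a minimal Python sketch. The 1500-frame count comes from the test description above; the function name and the 120 second elapsed time are assumptions made up for the example, not measured results or part of our test scripts.

```python
def frames_per_second(frame_count: int, elapsed_seconds: float) -> float:
    """Average encode throughput: frames processed divided by wall-clock time."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return frame_count / elapsed_seconds


# 1500 frames is the clip length from the test description.
# 120.0 seconds is a hypothetical encode time, chosen only for illustration.
print(frames_per_second(1500, 120.0))  # 12.5
```

A higher figure means the same clip finished sooner, so halving the encode time doubles the reported frames per second.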

205 Comments


Witek - Thursday, June 16, 2016 - link

@rodmunch69 - Yeah. I have also spent almost 4 years on a 3930K (at 3.2GHz->4.2GHz), which I got for something like 350$ at the time. And I still do not see anything better in a similar price bracket. x86 arch is a shit, with limited instruction issue, substandard compilers targeting generic cpus, and power hungry circuits trying to work around the arch limitations.

The prices on these new CPUs are shit, and Intel do that because they do not have competition in the high performance x86 market right now. I understand making a 14nm chip is more costly than previous generations, but eh, still it is crazy. There is no point in using 14nm for desktop if it doesn't provide a substantial performance boost or power saving, at a similar price. I am seriously looking into ARM64, Power8, MIPS and other architectures (especially high core counts, or ones still in development - like Out of the Box Computing Mill CPU), that can break this trend (at least on Linux). SPARC looks like the highest performance at the moment, but the prices are crazy as hell. Intel Phi is also interesting for parallelized workloads, but the price is still very high (due to the smaller target market).

Zen will help bring these prices into check. 16 core Zen (32 threads) at 1000$ would be awesome, and will bring all these Intel i7 cpus prices down substantially too.

vivs26 - Tuesday, May 31, 2016 - link

Looking at single threaded and multi threaded performance, I can't help but be reminded of Amdahl's law. The performance you can extract out of your system is only as much as your workload allows you to ....

ithehappy - Tuesday, May 31, 2016 - link

I am still on i7 950, just with a GTX970, will it be worth it if I get the 6800K? Or shall I still wait for Skylake-E? Gaming is my main priority, and low power consumption, because mine is on nearly 24x7.

rhysiam - Wednesday, June 1, 2016 - link

If all you care about is gaming, get an i7 6700K. There's almost no value proposition in these CPUs anymore unless you absolutely need more than 4 cores. Very few games have been shown to benefit from more than 4 cores at all, and the hyperthreading on the i7 6700K will be there to help if (probably when) games finally start to scale better. The single threaded performance of the 6700K is also significantly better. If you have high end graphics and a 120/144hz display, where CPU performance can sometimes start to matter, the 6700K is actually the faster CPU, and would net you higher fps than any of these overpriced Broadwell-E CPUs.

The only argument you could make is that at some point in the future games might start to benefit from 6+ cores. We've already seen in gaming benchmarks of i5s vs i3s vs Pentiums that hyperthreading does a surprisingly good job at mitigating the impact of a game running more threads than you have CPU cores. There's a very good chance that the 4 Core + HT of an i7 6700K will hold its own in gaming for a long time to come. Even if that turns out to not be the case, you'd be much better off in the long run just upgrading your machine when you need it rather than sinking money into a Broadwell-E system now.

mapesdhs - Thursday, June 9, 2016 - link

Re your power consumption, if that's because you care about long term cost, then there's a lot of utility in used hw such as a 3930K. It'll give a very good boost, it's much easier to oc than the later models, it's cheap, the platform supports broad SLI/CF, and it'd take years for the slightly higher power consumption of a 4.8 3930K to wipe out the huge cost saving vs. a 3930K (BIN for 96 UKP on eBay UK atm). It'll also better exploit future improvements in game design that support more cores.

Or go for something inbetween, like a used 4930K (costs a bit more, but higher IPC and some other benefits over SB-E).

However, if you do want something new, then rhysiam is right, the 6700K is plenty, or indeed a 4790K.

asmian - Tuesday, May 31, 2016 - link

Quite apart from cost/performance, the key question for some is whether this last version of Broadwell has had the SGX extensions that were introduced with Skylake retrofitted. Was this feature left out as it wasn't part of the original Broadwell platform? (Preferable) lack of SGX will mean this is the last secure-from-remote-snooping Intel processor release, otherwise the last will unfortunately be Haswell/Haswell-E.

Anandtech has been conspicuously silent on SGX and why this is a privacy nightmare for users, unable to monitor or detect exactly what software may be secretly running on their processors due to a by-design inability to snoop on the process in-use memory. The benign use cases usually put forward hardly outweigh the risk of mode-adoption by viruses, trojans and user-snooping malware of government origin, able to obfuscate their own remote loading, which would potentially be immune from detection by any means (likely including by the AV and anti-malware industry).

For more on why SGX is of concern read http://theinvisiblethings.blogspot.co.uk/2013_08_0... and http://theinvisiblethings.blogspot.co.uk/2013_09_0...

Please confirm definitively whether Broadwell-E has SGX or not.

Jvboom - Tuesday, May 31, 2016 - link

This is so disappointing. Every time a new release comes out I come on here hoping to justify buying. The numbers just aren't there for the $$.

Tchamber - Tuesday, May 31, 2016 - link

Is anyone else disappointed that a new, cutting-edge CPU consumes 10W more than my 2010 i7 970 with the same number of cores? Add to that, prices go up faster than performance does. That makes it nice to see that CPUs don't make much difference in gaming. There are plenty of features I'd like, but I can wait till Zen comes out. In all honesty, I'll probably buy Zen just to support the underdog.

krypto1300 - Tuesday, May 31, 2016 - link

Man, and I'm still getting by with my workhorse 1366 platform from 6 years ago. Running a Xeon X5650 @ 3.66GHz, 16GB of DDR3-1866 and a GTX 970! Everything still runs great! Doom and Project Cars do 1440P @ 60fps no problem!

mapesdhs - Thursday, June 9, 2016 - link

A good example that shows the continued utility of what IMO was the last really ground-breaking new chipset release. I can remember reading every review I could find at the time about Nehalem and X58. Not done that since.

Btw, are you by any chance using a Gigabyte board? 8)