The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

GP104: The Heart of GTX 1080

At the heart of the GTX 1080 is the first of the consumer-focused Pascal GPUs, GP104. Though no two GPU generations are ever quite alike, GP104 follows a number of design cues established with the past couple of 104 GPUs. Overall, 104 GPUs have struck a balance between size and performance, allowing NVIDIA to get a suitably high yielding GPU out at the start of a generation, and to be followed up with larger GPUs later on as yields improve. With the exception of the GTX 780, 104 GPUs have been the backbone of NVIDIA’s GTX 70 and 80 parts, and that is once again the case for the Pascal generation.

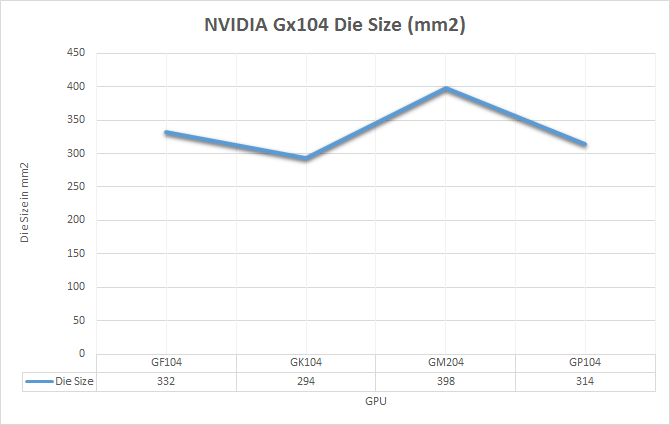

In terms of die size, GP104 comes in at 314mm². This is right in NVIDIA’s traditional sweet spot for these designs, slotting in between the 294mm² GK104 and the 332mm² GF104. In terms of total transistors we’re looking at 7.2B transistors, up from 3.5B on GK104 and the 5.2B of the more unusual GM204. The significant increase in density comes from the use of TSMC’s 16nm FinFET process, which compared to 28nm delivers a full node shrink, something that has been harder and harder to come by as the years have progressed.
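
To put a rough number on that density increase, the short sketch below divides the transistor counts by the die sizes quoted above. It only covers GK104 and GP104, since those are the two chips for which both figures are given here; the helper name `density_mtr_per_mm2` is purely illustrative, and the result is a back-of-the-envelope average rather than an official density figure.

```python
# Figures quoted in the text: (transistor count, die area in mm^2).
chips = {
    "GK104": (3.5e9, 294.0),
    "GP104": (7.2e9, 314.0),
}


def density_mtr_per_mm2(transistors, area_mm2):
    """Average density in millions of transistors per mm^2 (illustrative helper)."""
    return transistors / area_mm2 / 1e6


densities = {name: density_mtr_per_mm2(t, a) for name, (t, a) in chips.items()}

# How much denser GP104 is than GK104, averaged over the whole die.
ratio = densities["GP104"] / densities["GK104"]

for name, d in densities.items():
    print(f"{name}: {d:.1f} MTr/mm^2")
print(f"GP104 vs GK104 density: {ratio:.2f}x")
```

By these figures GP104 packs a bit under twice as many transistors into each square millimeter as GK104 did, in a die only slightly larger.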

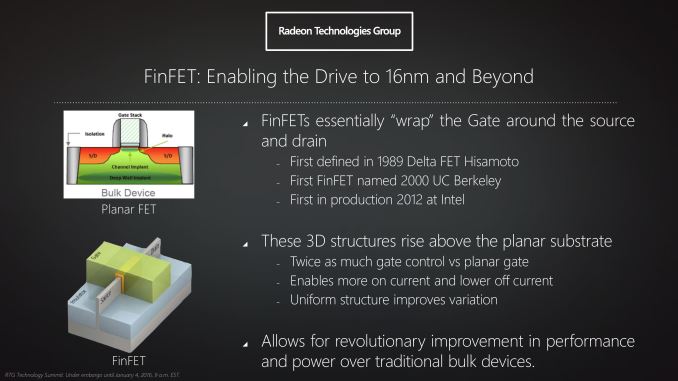

Though the density improvement offered by TSMC’s 16nm process is of great importance to GP104’s overall performance, for once density takes a back seat to the properties of the process itself. I am of course speaking about the FinFET transistors, which are the headlining feature of TSMC’s process.

We’ve covered FinFET technology in depth before, so I won’t completely rehash it here. But in brief, FinFETs are an important development for chip fabrication as processes have gone below 28nm. As traditional, planar transistors have shrunk in feature size – and ultimately, the number of atoms they’re comprised of – electrical leakage has increased. With fewer atoms in a transistor, there are equally fewer atoms to control the flow of electrons.

FinFET in turn is a solution to this problem, essentially allowing fabs to turn back the clock on electrical leakage. By building transistors as three-dimensional objects with height as opposed to two-dimensional objects, giving FinFET transistors their characteristic fins in the process, FinFET technology greatly reduces the amount of energy a transistor leaks. In practice what this means is that FinFET technology not only reduces the total amount of energy wasted from leakage, but it also allows transistors to be operated at a much lower voltage, something we’ll see in depth with our analysis of GTX 1080.

FinFETs, or rather the lack thereof, are a big part of why we never saw GPUs built on TSMC’s 20nm process. It was TSMC’s initial belief that they could contain leakage well enough using traditional High-K Metal Gate (HKMG) technology on 20nm, a bet they ultimately lost. At 20nm, planar transistors were just too leaky to use for many applications, which is why ultimately we only saw SoCs on 20nm (and even then they were suboptimal). FinFETs, as it turns out, are absolutely necessary to get good performance out of transistors built on processes below 28nm.

And while it took TSMC some time to get there, now that they have the capability, NVIDIA can reap the benefits. Not only can NVIDIA finally build a relatively massive chip like a GPU on a sub-28nm process, but thanks to the various beneficial properties of FinFETs, they can also take their designs in a different direction than what they could do on 28nm.

200 Comments

TestKing123 - Wednesday, July 20, 2016 - link

Sorry, too little too late. Waited this long, and the first review was Tomb Raider DX11?! Not 12?

This review is both late AND rushed at the same time.

Mat3 - Wednesday, July 20, 2016 - link

Testing Tomb Raider in DX11 is inexcusable.

http://www.extremetech.com/gaming/231481-rise-of-t...

TheJian - Friday, July 22, 2016 - link

Furyx still loses to 980ti until 4K at which point the avg for both cards is under 30fps, and the mins are both below 20fps. IE, neither is playable. Even in AMD's case here we're looking at 7% gain (75.3 to 80.9). Looking at NV's new cards shows dx12 netting NV cards ~6% while AMD gets ~12% (time spy). This is pretty much a sneeze and will as noted here and elsewhere, it will depend on the game and how the gpu works. It won't be a blanket win for either side. Async won't be saving AMD, they'll have to actually make faster stuff. There is no point in even reporting victory at under 30fps...LOL.

Also note in that link, while they are saying maxwell gained nothing, it's not exactly true. Only avg gained nothing (suggesting maybe limited by something else?), while min fps jumped pretty much exactly what AMD did. IE Nv 980ti min went from 56fps to 65fps. So while avg didn't jump, the min went way up giving a much smoother experience (amd gained 11fps on mins from 51 to 62). I'm more worried about mins than avgs. Tomb on AMD still loses by more than 10% so who cares? Sort of blows a hole in the theory that AMD will be faster in all dx12 stuff...LOL. Well maybe when you force the cards into territory nobody can play at (4k in Tomb Raiders case).

It would appear NV isn't spending much time yet on dx12, and they shouldn't. Even with 10-20% on windows 10 (I don't believe netmarketshare's numbers as they are a msft partner), most of those are NOT gamers. You can count dx12 games on ONE hand. Most of those OS's are either forced upgrades due to incorrect update settings (waking up to win10...LOL), or FREE on machine's under $200 etc. Even if 1/4 of them are dx12 capable gpus, that would be NV programming for 2.5%-5% of the PC market. Unlike AMD they were not forced to move on to dx12 due to lack of funding. AMD placed a bet that we'd move on, be forced by MSFT or get console help from xbox1 (didn't work, ps4 winning 2-1) so they could ignore dx11. Nvidia will move when needed, until then they're dominating where most of us are, which is 1080p or less, and DX11. It's comic when people point to AMD winning at 4k when it is usually a case where both sides can't hit 30fps even before maxing details. AMD management keeps aiming at stuff we are either not doing at all (4k less than 2%), or won't be doing for ages such as dx12 games being more than dx11 in your OS+your GPU being dx12 capable.

What is more important? Testing the use case that describes 99.9% of the current games (dx11 or below, win7/8/vista/xp/etc), or games that can be counted on ONE hand and run in an OS most of us hate. No hate isn't a strong word here when the OS has been FREE for a freaking year and still can't hit 20% even by a microsoft partner's likely BS numbers...LOL. Testing dx12 is a waste of time. I'd rather see 3-4 more dx11 games tested for a wider variety although I just read a dozen reviews to see 30+ games tested anyway.

ajlueke - Friday, July 22, 2016 - link

That would be fine if it was only dx12. Doesn't look like Nvidia is investing much time in Vulkan either, especially not on older hardware.

http://www.pcgamer.com/doom-benchmarks-return-vulk...

Cygni - Wednesday, July 20, 2016 - link

Cool attention troll. Nobody cares what free reviews you choose to read or why.

AndrewJacksonZA - Wednesday, July 20, 2016 - link

Typo on page 18: "The Test"

"Core i7-4960X hosed in an NZXT Phantom 630 Windowed Edition" Hosed -> Housed

Michael Bay - Thursday, July 21, 2016 - link

I'd sure hose me a Core i7-4960X.

AndrewJacksonZA - Wednesday, July 20, 2016 - link

@Ryan & team: What was your reasoning for not including the new Doom in your 2016 GPU Bench game list? AFAIK it's the first indication of Vulkan performance for graphics cards.

Thank you! :-)

Ryan Smith - Wednesday, July 20, 2016 - link

We cooked up the list and locked in the games before Doom came out. It wasn't out until May 13th. GTX 1080 came out May 14th, by which point we had already started this article (and had published the preview).

AndrewJacksonZA - Wednesday, July 20, 2016 - link

OK, thank you. Any chance of adding it to the list please?

I'm a Windows gamer, so my personal interest in the cross-platform Vulkan is pretty meh right now (only one title right now, hooray! /s) but there are probably going to be some devs are going to choose it over DX12 for that very reason, plus I'm sure that you have readers who are quite interested in it.