The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Overclocking

For our final evaluation of the GTX 1080 and GTX 1070 Founders Edition cards, let’s take a look at overclocking.

Whenever I review an NVIDIA reference card, I feel it’s important to point out that while NVIDIA supports overclocking – why else would they include fine-grained controls like GPU Boost 3.0? – they have taken a hard stance against true overvolting. Overvolting is limited to NVIDIA’s built-in overvoltage function, which isn’t so much a voltage control as it is the ability to unlock 1-2 more boost bins and their associated voltages. Meanwhile, TDP controls are limited to whatever value NVIDIA believes is safe for that model of card, which can vary depending on its GPU and its power delivery design.

For GTX 1080FE and its 5+1 power design, we have a 120% TDP limit, which translates to an absolute maximum TDP of 216W. As for GTX 1070FE and its 4+1 design, this is reduced to a 112% TDP limit, or 168W. Both cards can be “overvolted” to 1.093v, which represents 1 boost bin. As such the maximum clockspeed with NVIDIA’s stock programming is 1911MHz.
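The wattage figures above follow directly from the percentage limits. As a minimal sketch of that arithmetic, assuming reference TDPs of 180W for the GTX 1080 and 150W for the GTX 1070 (taken from NVIDIA's published specifications rather than from this page), with hypothetical names throughout:

```python
# Reference TDPs are an assumption from NVIDIA's published specs,
# not values stated on this page.
REFERENCE_TDP_W = {"GTX 1080FE": 180, "GTX 1070FE": 150}

# Maximum power limit exposed by each Founders Edition card, in percent.
POWER_LIMIT_PCT = {"GTX 1080FE": 120, "GTX 1070FE": 112}


def max_tdp_watts(card: str) -> float:
    """Absolute power ceiling: reference TDP scaled by the limit percentage."""
    return REFERENCE_TDP_W[card] * POWER_LIMIT_PCT[card] / 100


for card in REFERENCE_TDP_W:
    print(f"{card}: {max_tdp_watts(card):.0f}W")
```

Under those assumptions the two results match the 216W and 168W ceilings quoted in the text.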

| GeForce GTX 1080FE Overclocking | Stock | Overclocked |
|---|---|---|
| Core Clock | 1607MHz | 1807MHz |
| Boost Clock | 1734MHz | 1934MHz |
| Max Boost Clock | 1898MHz | 2088MHz |
| Memory Clock | 10Gbps | 11Gbps |
| Max Voltage | 1.062v | 1.093v |

| GeForce GTX 1070FE Overclocking | Stock | Overclocked |
|---|---|---|
| Core Clock | 1506MHz | 1681MHz |
| Boost Clock | 1683MHz | 1858MHz |
| Max Boost Clock | 1898MHz | 2062MHz |
| Memory Clock | 8Gbps | 8.8Gbps |
| Max Voltage | 1.062v | 1.093v |

Both cards ended up overclocking by similar amounts. We were able to take the GTX 1080FE another 200MHz (+12% boost) on the GPU, and another 1Gbps (+10%) on the memory clock. The GTX 1070FE could be pushed another 175MHz (+10% boost) on the GPU, while its memory could go another 800Mbps (+10%) to 8.8Gbps.
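The percentages quoted here can be recomputed from the stock and overclocked values in the two tables. A small sketch, with a hypothetical helper name and the clocks transcribed from the tables:

```python
def percent_gain(stock: float, overclocked: float) -> float:
    """Relative increase of the overclocked value over stock, in percent."""
    return (overclocked - stock) / stock * 100


# (stock, overclocked) pairs transcribed from the overclocking tables.
clocks = {
    "GTX 1080FE boost clock (MHz)": (1734, 1934),
    "GTX 1080FE memory (Gbps)": (10.0, 11.0),
    "GTX 1070FE boost clock (MHz)": (1683, 1858),
    "GTX 1070FE memory (Gbps)": (8.0, 8.8),
}

for name, (stock, overclocked) in clocks.items():
    print(f"{name}: +{percent_gain(stock, overclocked):.1f}%")
```

Measured against the rated boost clock, the GTX 1080FE core overclock comes out at roughly 11.5%, which the article rounds to 12%, and the GTX 1070FE at roughly 10.4%.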

Both of these are respectable overclocks, but compared to Maxwell 2 where our reference cards could do 20-25%, these aren’t nearly as extreme. Given NVIDIA’s comments on the 16nm FinFET voltage/frequency curve being steeper than 28nm, this could be first-hand evidence of that. It also indicates that NVIDIA has pushed GP104 closer to its limit, though that could easily be a consequence of the curve.

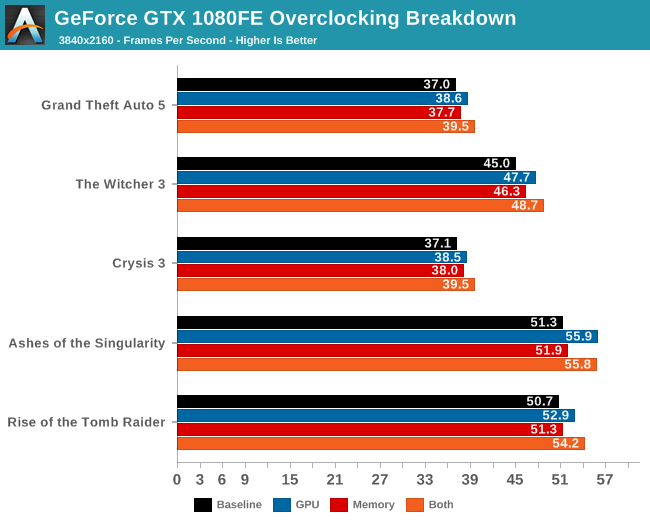

Given that this is our first look at Pascal, before diving into overall performance, let’s first take a look at an overclocking breakdown. NVIDIA offers 4 knobs to adjust when overclocking: overvolting (unlocking additional boost bins), increasing the power/temperature limits, the memory clock, and the GPU clock. Though all 4 will be adjusted for a final overclock, it’s often helpful to see whether it’s GPU overclocking or memory overclocking that delivers the greater impact, especially as it can highlight where the performance bottlenecks are on a card.

To examine this, we’ve gone ahead and benchmarked the GTX 1080 4 times: once with overvolting and increased power/temp limits (to serve as a baseline), once with the memory overclock added, once with the GPU overclock added, and finally with both the GPU and memory overclocks added.

| GeForce GTX 1080 Overclocking Performance | Power/Temp Limit (+20%) | Core (+12%) | Memory (+10%) | Cumulative |
|---|---|---|---|---|
| Tomb Raider | +3% | +4% | +1% | +10% |
| Ashes | +1% | +9% | +1% | +10% |
| Crysis 3 | +4% | +4% | +2% | +11% |
| The Witcher 3 | +2% | +6% | +3% | +10% |
| Grand Theft Auto V | +1% | +4% | +2% | +8% |

Across all 5 games, the results are clear and consistent: GPU overclocking contributes more to performance than memory overclocking. To be sure, both contribute, but even after compensating for the fact that the GPU overclock was a bit greater than the memory overclock (12% vs 10%), the GPU is still clearly the larger contributor. That said, I am a bit surprised that increasing the power/temperature limit didn't have more of an effect.
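One way to make the "compensating for the size of the overclock" step concrete is to divide each gain by the size of the overclock that produced it. The sketch below does this with the Core and Memory columns of the table above; the per-percent "scaling" metric and all names are illustrative assumptions, not something the review itself computes.

```python
# Per-knob gains (%) for the GTX 1080, transcribed from the table above.
# Tuple order: (core overclock gain, memory overclock gain).
GAINS_PCT = {
    "Tomb Raider": (4, 1),
    "Ashes": (9, 1),
    "Crysis 3": (4, 2),
    "The Witcher 3": (6, 3),
    "Grand Theft Auto V": (4, 2),
}

CORE_OC_PCT = 12    # size of the GPU overclock
MEMORY_OC_PCT = 10  # size of the memory overclock


def scaling(gain_pct: float, overclock_pct: float) -> float:
    """Performance gained per 1% of overclock (an illustrative metric)."""
    return gain_pct / overclock_pct


for game, (core_gain, mem_gain) in GAINS_PCT.items():
    core = scaling(core_gain, CORE_OC_PCT)
    mem = scaling(mem_gain, MEMORY_OC_PCT)
    print(f"{game}: core {core:.2f}, memory {mem:.2f}")
```

With these inputs, the core overclock returns more performance per percent than the memory overclock in every one of the 5 games, which is consistent with the conclusion above.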

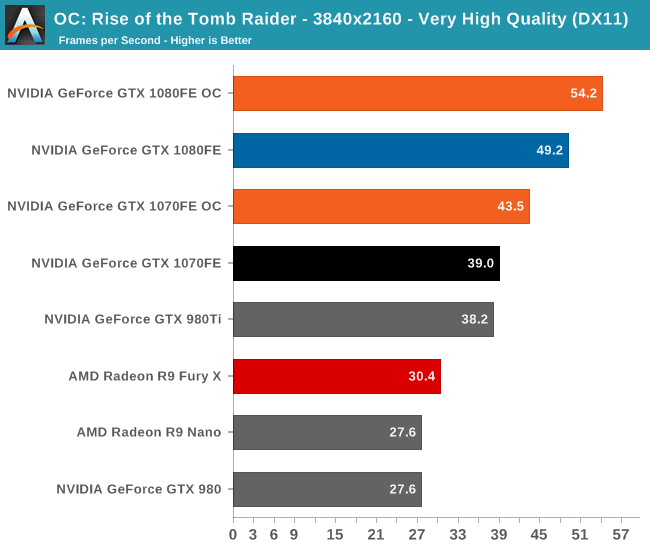

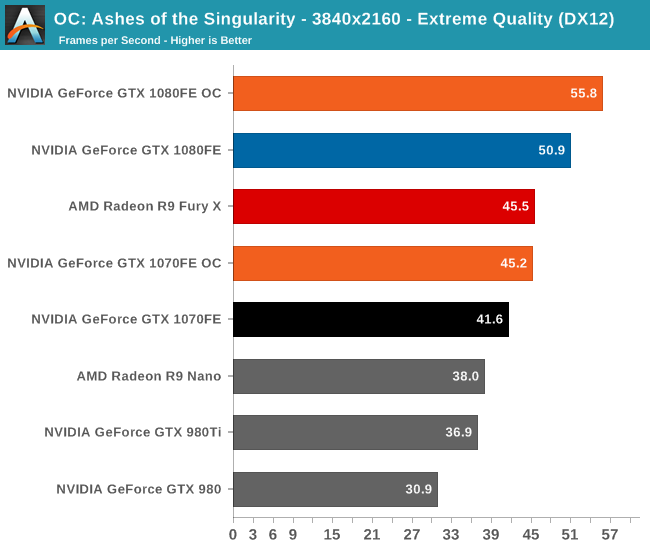

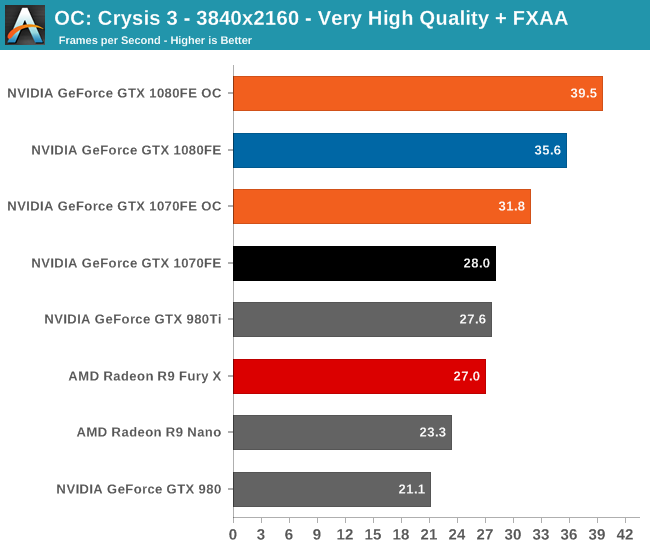

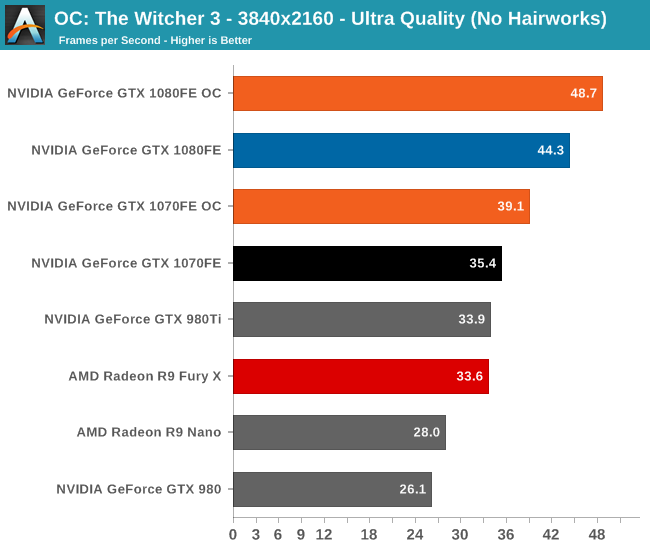

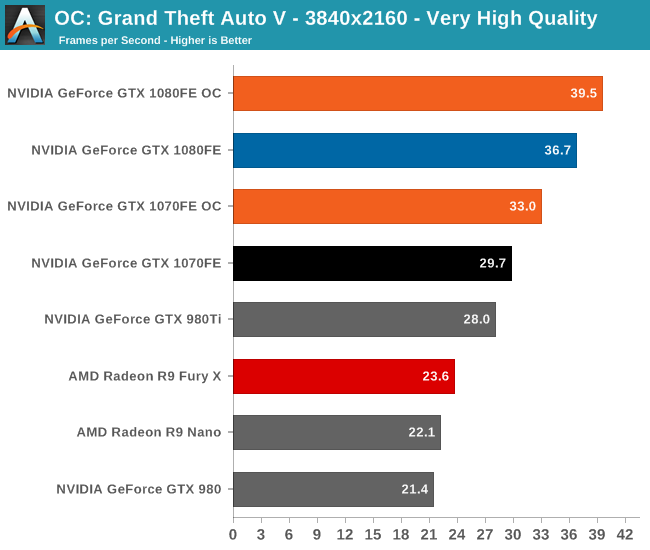

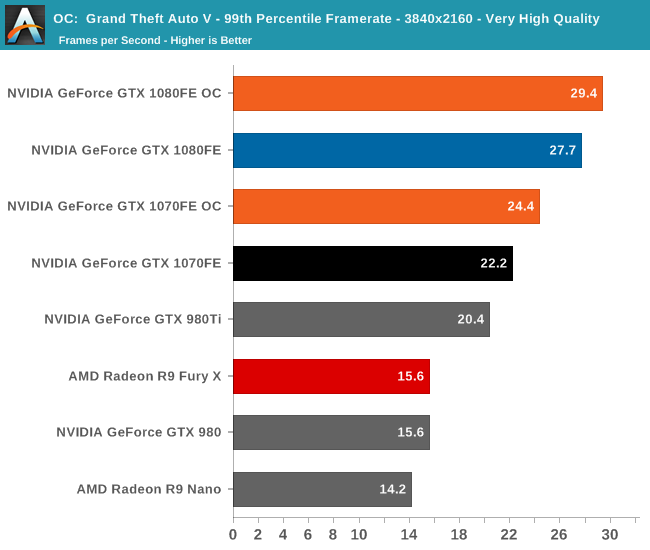

Overall we’re looking at an 8%-10% increase in performance from overclocking. It’s enough to further stretch the GTX 1080FE and GTX 1070FE’s leads, but it won’t radically alter performance.

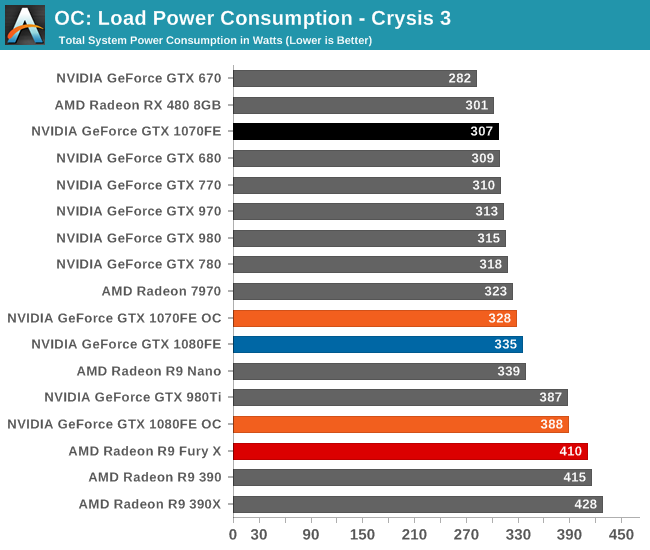

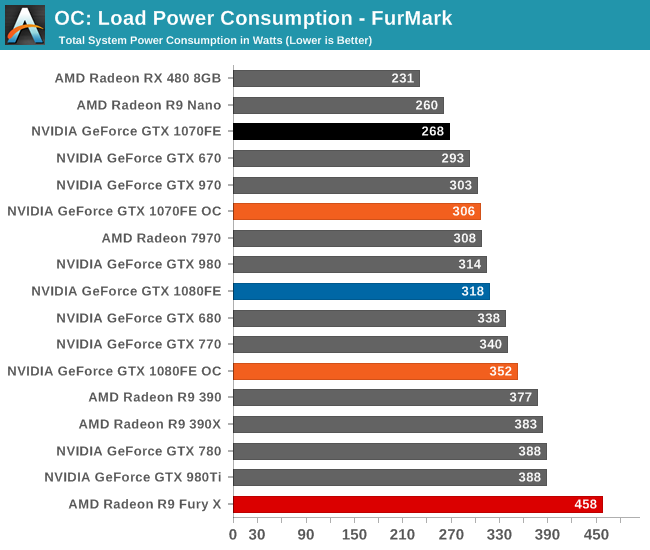

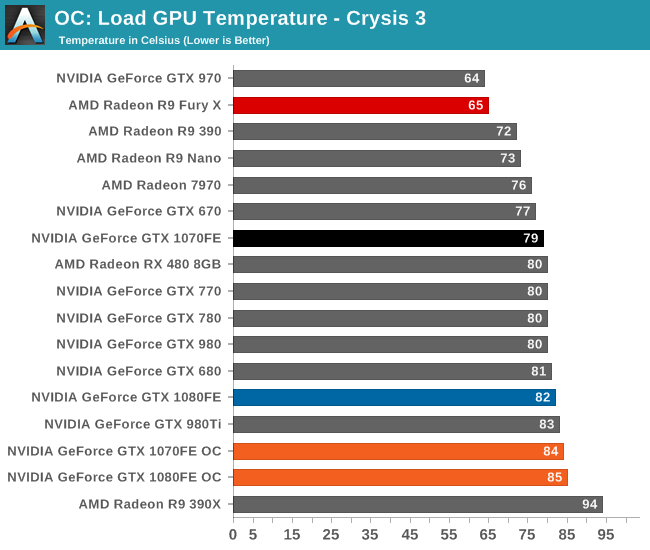

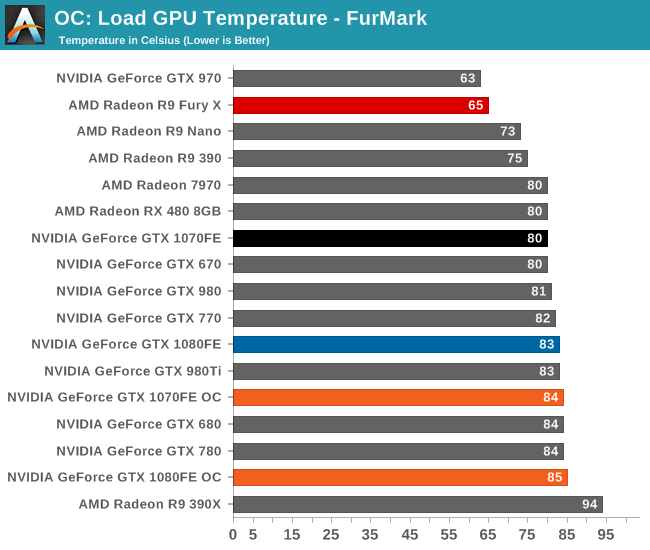

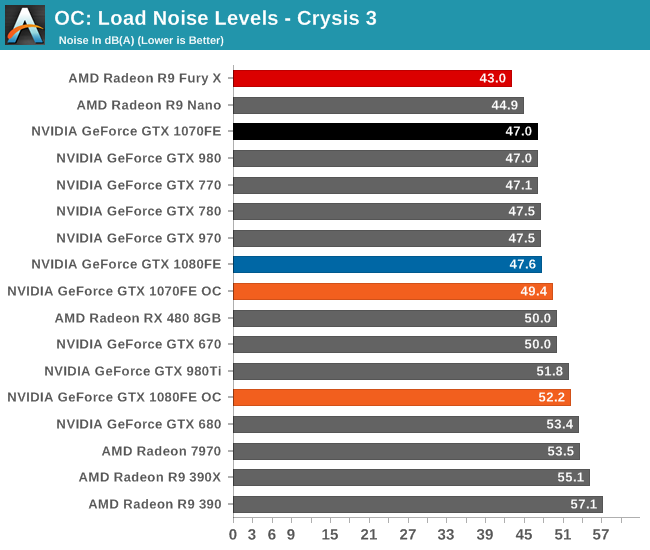

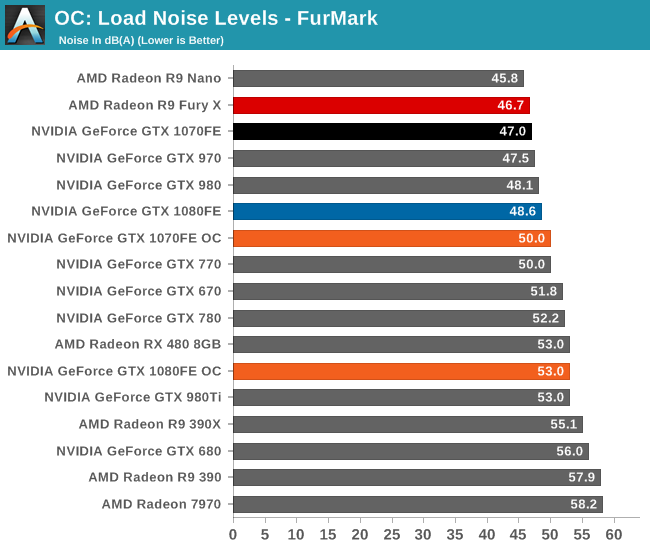

Finally, let’s see the cost of overclocking in terms of power, temperature, and noise. For the GTX 1080FE, the power cost at the wall proves to be rather significant. An 11% Crysis 3 performance increase translates into a 60W increase in power consumption at the wall, essentially moving GTX 1080FE into the neighborhood of NVIDIA’s 250W cards like the GTX 980 Ti. The noise cost is also not insignificant, as GTX 1080FE has to ramp up to 52.2dB(A), a 4.6dB(A) increase in noise. Meanwhile FurMark essentially confirms these findings, with a smaller power increase but a similar increase in noise.

As for the GTX 1070FE, neither the increase in power consumption nor the increase in noise is quite as high as on the GTX 1080FE, though the performance uplift is also a bit smaller. The power penalty is just 21W at the wall for Crysis 3 and 38W for FurMark. This translates to a 2-3dB(A) increase in noise, topping out at 50.0dB(A) for FurMark.

200 Comments

Ryan Smith - Wednesday, July 20, 2016 - link

Thanks.

Eden-K121D - Wednesday, July 20, 2016 - link

Finally the GTX 1080 review

guidryp - Wednesday, July 20, 2016 - link

This echoes what I have been saying about this generation. It is really all about clock speed increases. IPC is essentially the same.

This is where AMD lost out. Possibly in part the issue was going with GloFo instead of TSMC like NVidia.

Maybe AMD will move Vega to TSMC...

nathanddrews - Wednesday, July 20, 2016 - link

Curious... how did AMD lose out? Have you seen Vega benchmarks?

TheinsanegamerN - Wednesday, July 20, 2016 - link

its all about clock speed for Nvidia, but not for AMD. AMD focused more on ICP, according to them.

tarqsharq - Wednesday, July 20, 2016 - link

It feels a lot like the P4 vs Athlon XP days almost.

stereopticon - Wednesday, July 20, 2016 - link

My favorite era of being a nerd!!! Poppin' opterons into s939 and pumpin the OC the athlon FX levels for a fraction of the price all while stompin' on pentium. It was a good (although expensive) time to a be a nerd... Besides paying 100 dollars for 1gb of DDR500. 6800gs budget friendly cards, and ATi x1800/1900 super beasts.. how i miss the days

eddman - Thursday, July 21, 2016 - link

Not really. Pascal has pretty much the same IPC as Maxwell and its performance increases accordingly with the clockspeed.

Pentium 4, on the other hand, had a terrible IPC compared to Athlon and even Pentium 3 and even jacking its clockspeed to the sky didn't help it.

guidryp - Wednesday, July 20, 2016 - link

No one really improved IPC of their units.

AMD was instead forced increase the unit count and chip size for 480 is bigger than the 1060 chip, and is using a larger bus. Both increase the chip cost.

AMD loses because they are selling a more expensive chip for less money. That squeezes their unit profit on both ends.

retrospooty - Wednesday, July 20, 2016 - link

"This echoes what I have been saying about this generation. It is really all about clock speed increases. IPC is essentially the same."

- This is a good thing. Stuck on 28nm for 4 years, moving to 16nm is exactly what Nvidias architecture needed.