The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Designing GP104: Running Up the Clocks

So if GP104’s per-unit throughput is identical to GM204, and the CUDA core count has only been increased from 2048 to 2560 (25%), then what makes GTX 1080 60-70% faster than GTX 980? The answer is that rather than vastly increasing the number of functional units for GP104 or increasing per-unit throughput, NVIDIA has opted to significantly raise the GPU clockspeed. And this in turn goes back to the earlier discussion on TSMC’s 16nm FinFET process.

With every advancement in fab technology, chip designers have been able to increase their clockspeeds thanks to the basic physics at play. However because TSMC’s 16nm node adds FinFETs for the first time, it’s extra special. What’s happening here is a confluence of multiple factors, but at the most basic level the introduction of FinFETs means that the entire voltage/frequency curve gets shifted. The reduced leakage and overall “stronger” FinFET transistors can run at higher clockspeeds at lower voltages, allowing for higher overall clockspeeds at the same (or similar) power consumption. We see this effect to some degree with every node shift, but it’s especially potent when making the shift from planar to FinFET, as has been the case for the jump from 28nm to 16nm.

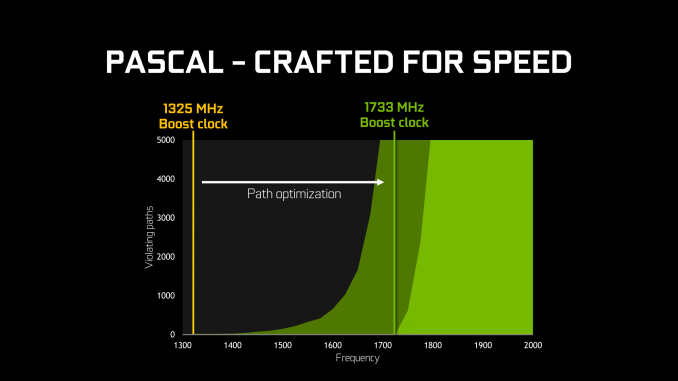

Given the already significant one-off benefits of such a large jump in the voltage/frequency curve, for Pascal NVIDIA has decided to fully embrace the idea and run up the clocks as much as is reasonably possible. At an architectural level this meant going through the design to identify bottlenecks in the critical paths – logic sections that couldn’t run at as high a frequency as NVIDIA would have liked – and reworking them to operate at higher frequencies. As GPUs have typically been (and still are) relatively low clocked, there hasn’t been as much of a need to optimize critical paths in this manner, but NVIDIA’s loftier clockspeed goals for Pascal changed things.

From an implementation point of view this isn’t the first time that NVIDIA has pushed for high clockspeeds, as most recently the 40nm Fermi architecture incorporated a double-pumped shader clock. However this is the first time NVIDIA has attempted something similar since they reined in their power consumption with Kepler (and later Maxwell). Having learned their lesson the hard way with Fermi, I’m told a lot more care went into matters with Pascal in order to avoid the power penalties NVIDIA paid with Fermi, exemplified by things such as only adding flip-flops where truly necessary.

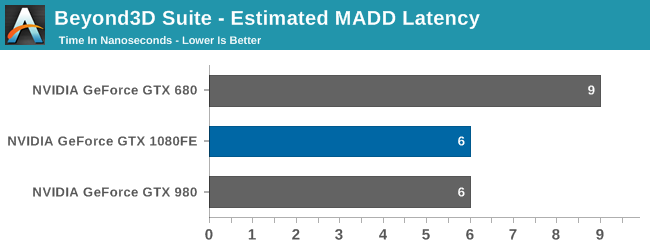

Meanwhile when it comes to the architectural impact of designing for high clockspeeds, the results seem minimal. While NVIDIA does not divulge full information on the pipeline of a CUDA core, all of the testing I’ve run indicates that the latency (in clock cycles) of the CUDA cores is identical to Maxwell, which goes hand in hand with earlier observations about throughput. So although optimizations were made to the architecture to improve clockspeeds, it doesn’t look like NVIDIA has made any more extreme optimizations (e.g. pipeline lengthening) that detectably reduce Pascal’s per-clock performance.

Finally, more broadly speaking, while this is essentially a one-time trick for NVIDIA, it’s an interesting route for them to go. By cranking up their clockspeeds in this fashion, they avoid any real scale-out issues, at least for the time being. Although graphics are the traditional embarrassingly parallel problem, even a graphical workload is subject to some degree of diminishing returns as GPUs scale farther out. A larger number of SMs is more difficult to fill, not every aspect of the rendering process is massively parallel (shadow maps being a good example), and ever-increasing pixel shader lengths compound the problem. Admittedly NVIDIA’s not seeing significant scale-out issues quite yet, but this is why GTX 980 isn’t quite twice as fast as GTX 960, for example.
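The diminishing returns from scaling out can be illustrated with Amdahl's law. This is only a minimal sketch: the 95% parallel fraction is a made-up value chosen for illustration, not a measured property of any GPU or game, and real rendering workloads are far messier than a single serial/parallel split.

```python
def amdahl_speedup(parallel_fraction: float, width_scale: float) -> float:
    """Speedup when only the parallel part of the work gets `width_scale` times wider."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / width_scale)


# Hypothetical assumption: 95% of frame time scales with the number of SMs.
PARALLEL_FRACTION = 0.95

for width_scale in (1.25, 2.0, 4.0):
    speedup = amdahl_speedup(PARALLEL_FRACTION, width_scale)
    print(f"{width_scale:.2f}x the units -> {speedup:.2f}x the performance")
```

Under this assumption, doubling the functional units yields somewhat less than double the performance, which is the same shape as the GTX 980 versus GTX 960 observation above.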

Just increasing the clockspeed, comparatively speaking, means that the entire GPU gets proportionally faster without shifting the resource balance; the CUDA cores are 43% faster, the geometry frontends are 43% faster, the ROPs are 43% faster, etc. The only real limitation in this regard isn’t the GPU itself, but whether you can adequately feed it. And this is where GDDR5X comes into play.
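As a rough back-of-the-envelope check, the figures quoted in this section can be multiplied together. This sketch assumes performance scales perfectly with both unit count and clockspeed, which is an idealization; the unit counts, the 43% clock gain and the 60-70% observed gain are taken from the text, and the variable names are just for illustration.

```python
# Figures quoted in the article text.
UNITS_GTX_980 = 2048
UNITS_GTX_1080 = 2560
CLOCK_GAIN = 0.43                    # "43% faster"
OBSERVED_GAIN_RANGE = (0.60, 0.70)   # "60-70% faster than GTX 980"

width_scale = UNITS_GTX_1080 / UNITS_GTX_980   # 1.25x the units
clock_scale = 1.0 + CLOCK_GAIN                 # 1.43x the clock

# Idealized ceiling: assumes every part of the GPU is kept fully fed.
theoretical_scale = width_scale * clock_scale

print(f"Idealized scaling: {theoretical_scale:.2f}x")
print(f"Observed scaling:  {1 + OBSERVED_GAIN_RANGE[0]:.2f}x to {1 + OBSERVED_GAIN_RANGE[1]:.2f}x")
```

The idealized figure comes out a little above the observed range, which is consistent with the point that the remaining limit is how well the GPU can be fed.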

200 Comments

Robalov - Tuesday, July 26, 2016

Feels like it took 2 years longer than normal for this review :D

extide - Wednesday, July 27, 2016

The venn diagram is wrong -- for GP104 it says 1:64 speed for FP16 -- it is actually 1:1 for FP16 (ie same speed as FP32) (NOTE: GP100 has 2:1 FP16 -- meaning FP16 is twice as fast as FP32)

extide - Wednesday, July 27, 2016

EDIT: I might be incorrect about this actually as I have seen information claiming both .. weird.

mxthunder - Friday, July 29, 2016

its really driving me nuts that a 780 was used instead of a 780ti.

yhselp - Monday, August 8, 2016

Have I understood correctly that Pascal offers a 20% increase in memory bandwidth from delta color compression over Maxwell? As in a total average of 45% over Kepler just from color compression?

flexy - Sunday, September 4, 2016

Sorry, late comment. I just read about GPU Boost 3.0 and this is AWESOME. What they did, is expose what previously was only doable with bios modding - eg assigning the CLK bins different voltages. The problem with overclocking Kepler/Maxwell was NOT so much that you got stuck with the "lowest" overclock as the article says, but that simply adding a FIXED amount of clocks across the entire range of clocks, as you would do with Afterburner etc. where you simply add, say +120 to the core. What happened here is that you may be "stable" at the max overclock (CLK bin), but since you added more CLKs to EVERY clock bin, the assigned voltages (in the BIOS) for each bin might not be sufficient. Say you have CLK bin 63 which is set to 1304Mhz in a stock bios. Now you use Afterburner and add 150 Mhz, now all of a sudden this bin amounts to 1454Mhz BUT STILL at the same voltage as before, which is too low for 1454Mhz. You had to manually edit the table in the BIOS to shift clocks around, especially since not all Maxwell cards allowed adding voltage via software.

Ether.86 - Tuesday, November 1, 2016

Astonishing review. That's the way Anandtech should be not like the mobile section which sucks...

Warsun - Tuesday, January 17, 2017

Yeah looking at the bottom here. The GTX 1070 is on the same level as a single 480 4GB card. So that graph is wrong. http://www.hwcompare.com/30889/geforce-gtx-1070-vs...

Remember this is from GPU-Z based on hardware specs. No amount of configurations in the Drivers changes this. They either screwed up i am calling shenanigans.

marceloamaral - Thursday, April 13, 2017

Nice Ryan Smith! But, my question is, is it truly possible to share the GPU with different workloads in the P100? I've read in the NVIDIA manual that "The GPU has a time sliced scheduler to schedule work from work queues belonging to different CUDA contexts. Work launched to the compute engine from work queues belonging to different CUDA contexts cannot execute concurrently."