The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

GPU Boost 3.0: Finer-Grained Clockspeed Controls

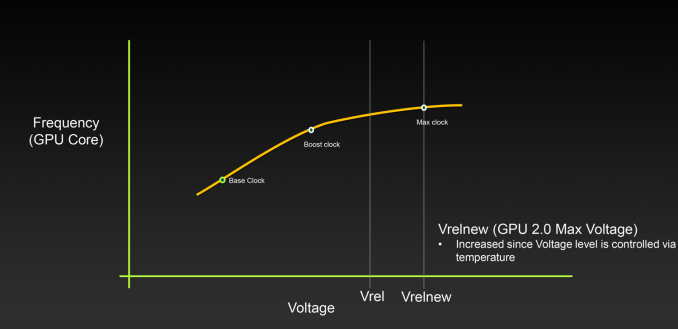

While much of this is abstracted away in everyday GPU discussions, under the hood the concept of clockspeed is a lot more complex than the simple base clock and boost clock numbers posted in specification tables. Since Kepler, NVIDIA has used fine-grained voltage points, which define a series of GPU voltages and their respective clockspeeds. The GPU in turn operates at points along the resulting curve, shifting clockspeeds based on which voltage it’s at and what the environmental conditions are.

While these voltage points have been present since Kepler, NVIDIA has not, until now, exposed them to end users. However with Pascal this is finally changing, with the introduction of GPU Boost 3.0.

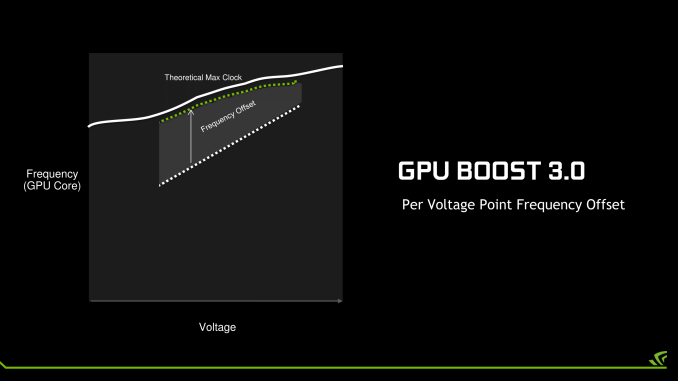

With the latest rendition of NVIDIA’s GPU clockspeed management technology, NVIDIA has made the individual voltage points programmable, and in turn is exposing this functionality to third-party overclocking programs via NVAPI. Consequently it is now possible to adjust the clockspeed of Pascal GPUs at each voltage point, a much greater level of control than before.

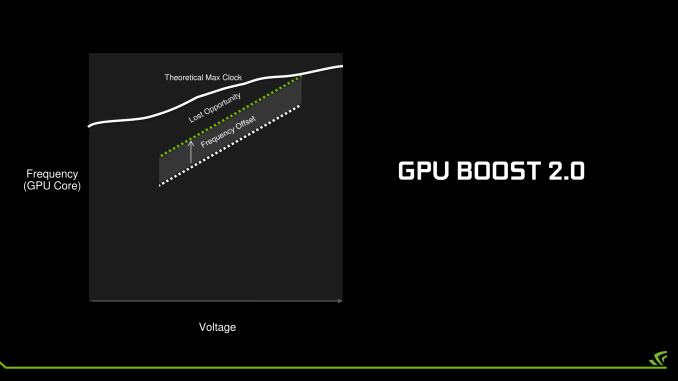

The addition of finer-grained controls is designed to improve the flexibility of overclocking on Pascal. Prior to GPU Boost 3.0, the only way to overclock was to adjust the clockspeed for all voltage points by the same amount at the same time – or in NVIDIA’s GPU Boost 3.0 vernacular, a fixed frequency offset. While this certainly works, it limits the highest stable overclock to the lowest point on the voltage/frequency curve. If the GPU can only overclock by 50MHz at the highest voltage point, but 100MHz at a middle point, then the highest stable overclock is only going to be 50MHz.

With GPU Boost 3.0, on the other hand, each point on the curve can be adjusted individually. This means the weakest points can be overclocked to a lesser degree while the strongest points can be more significantly overclocked. All other things held equal, this should improve GPU overclocking performance, as the GPU tends to shift along multiple points when it’s running. Put another way: GPU Boost 3.0 seeks to wring out the last bits of overclocking headroom along the voltage/frequency curve. The only way to go higher still would be to increase the voltage, which NVIDIA hasn’t truly allowed since Fermi.
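To make the difference concrete, here is a minimal sketch comparing the two approaches. The voltage points and per-point headroom values are made up for illustration (they mirror the 50MHz/100MHz example above rather than any measured card), and the function names are my own, not anything from NVAPI.

```python
# Hypothetical stable headroom (MHz) at each voltage point (mV).
# Illustrative numbers only, not measurements from a real GTX 1080.
headroom_mhz = {800: 120, 900: 100, 1000: 75, 1062: 50}


def fixed_offset(headroom):
    """Pre-GPU Boost 3.0: one offset for every point.

    The weakest point on the curve caps the offset for all of them.
    """
    limit = min(headroom.values())
    return {mv: limit for mv in headroom}


def per_point_offset(headroom):
    """GPU Boost 3.0: each voltage point gets its own stable offset."""
    return dict(headroom)


if __name__ == "__main__":
    print("fixed:    ", fixed_offset(headroom_mhz))
    print("per-point:", per_point_offset(headroom_mhz))
```

With these numbers, the fixed offset leaves 50MHz on the table at the 900mV point, which is exactly the headroom per-point adjustment is meant to recover.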

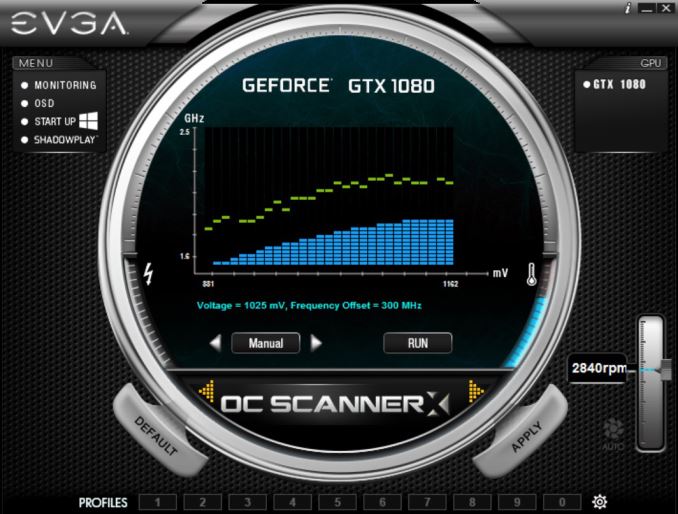

Meanwhile, the flip side of having finer-grained controls is that it’s now more work to dial in the perfect overclock. Rather than testing one overclock you now have to test nearly two dozen voltage points to fully exploit GPU Boost 3.0’s abilities, which is time-consuming at the best of times. As a result NVIDIA has also exposed a setting in NVAPI to lock the GPU at a specific voltage point. The significance of this is that it allows overclocking utilities to step through the voltage points and test each one in isolation.

The first software to implement this concept is EVGA’s Precision XOC. The latest iteration of EVGA’s overclocking software is able to go through the voltage points and run an OC ScannerX test on each one to check its stability. When a point fails, Precision XOC will back off the frequency at that point and move on. The end result is that after a series of trials and failures, you should have a virtually perfect overclock.
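The per-point scan described above can be sketched roughly as follows. This is not Precision XOC’s actual algorithm, which isn’t documented here; `is_stable` is a hypothetical stand-in for "lock the GPU at this voltage point and run a stress test", and the starting offset and step size are assumptions.

```python
from typing import Callable, Dict


def scan_curve(base_mhz: Dict[int, int],
               is_stable: Callable[[int, int], bool],
               start_offset_mhz: int = 130,
               step_mhz: int = 13) -> Dict[int, int]:
    """Find the largest tested stable offset for each voltage point.

    base_mhz maps a voltage point (mV) to its stock clockspeed (MHz).
    is_stable(mv, mhz) is a hypothetical callback that reports whether
    the GPU passed a stress test at that voltage/clockspeed pair.
    """
    result = {}
    for mv, base in base_mhz.items():
        offset = start_offset_mhz
        # Back off one step at a time until the point passes,
        # or until we are back at the stock clockspeed.
        while offset > 0 and not is_stable(mv, base + offset):
            offset -= step_mhz
        result[mv] = max(offset, 0)
    return result


if __name__ == "__main__":
    # Simulated card: the highest clockspeed each point can hold.
    stock = {800: 1500, 1062: 1900}
    limits = {800: 1620, 1062: 1950}
    print(scan_curve(stock, lambda mv, mhz: mhz <= limits[mv]))
```

Note that the sketch assumes every failed test returns cleanly. As discussed below, that is the part that’s hard in practice.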

Unfortunately, while this is sound in concept, in practice NVIDIA and EVGA aren’t quite there yet. Overclocking can cause multiple types of failures: graphical corruption (easy to catch and recover from), driver crashes (moderately difficult to recover from), and system hardlocks (very difficult to recover from). Precision XOC isn’t yet at the point where it can quickly and efficiently handle the last two cases, so driver crashes and system hardlocks still require human intervention, and Precision XOC doesn’t do a great job of resuming from where it left off.

Hopefully one day NVIDIA and EVGA will get there, but for now the only practical way to fully exploit GPU Boost 3.0 is the tedious way. This means either using traditional offset overclocking, or a mode NVIDIA calls linear overclocking, in which the slope of the voltage/frequency curve is adjusted rather than offset (think m in y=mx+b rather than b). In this case two points are picked, and all of the voltage points are overclocked to match the resulting linear curve.
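As a rough illustration of the linear mode, the sketch below takes two picked points and assigns every voltage point the offset that falls on the straight line through them. How NVIDIA’s tools actually compute this isn’t spelled out here, so treat the interpolation of offsets (rather than absolute clockspeeds) as my assumption; the function name and values are hypothetical.

```python
def linear_offsets(voltages_mv, low, high):
    """Offsets (MHz) along the line through two picked points.

    low and high are (voltage_mv, offset_mhz) pairs. Every voltage in
    voltages_mv gets the offset on the line through those two points,
    i.e. the slope m is what gets tuned rather than a constant b.
    """
    (v0, o0), (v1, o1) = low, high
    slope = (o1 - o0) / (v1 - v0)  # MHz of offset per mV
    return {v: round(o0 + slope * (v - v0)) for v in voltages_mv}


if __name__ == "__main__":
    # +100MHz at the low end of the curve, tapering to +50MHz at the top.
    print(linear_offsets([800, 900, 1000], (800, 100), (1000, 50)))
```

This only takes two test points to set up, which is why it’s a practical middle ground between a single fixed offset and tuning every voltage point by hand.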

Observations on Clocking with Pascal

While we’re on the subject of clockspeed management on Pascal, I want to discuss my observations on how clockspeeds work on NVIDIA’s newest GPU. NVIDIA hasn’t just changed how overclocking works; relative to Kepler/Maxwell, there are some other, subtler changes to clockspeed management as well.

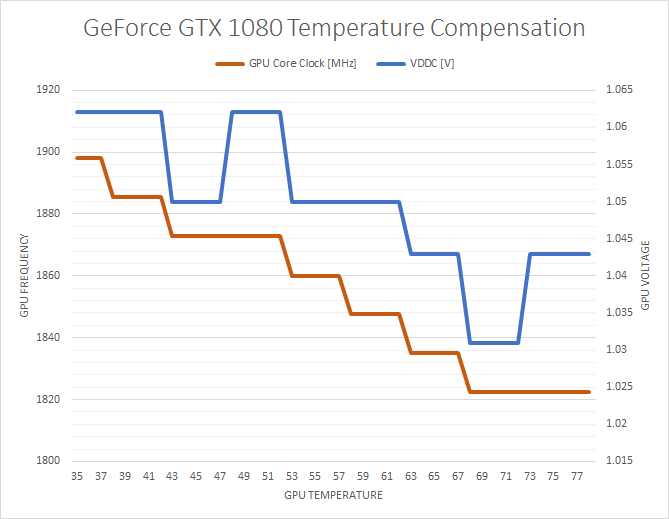

To start, Pascal clockspeeds are much more temperature-dependent than on Maxwell 2 or Kepler. Kepler would drop a single bin at a specific temperature, and Maxwell 2 would sustain the same clockspeed throughout. Pascal, however, will drop its clockspeeds as the GPU warms up, regardless of whether it still has formal thermal and TDP headroom to spare. It does this both by backing off on the clockspeed at each individual voltage point and by dropping to lower voltage points altogether.

To quantify this effect, I ran LuxMark 3.1 continuously for several minutes, until the GPU temperature leveled out. As a compute test, LuxMark does not cause the GTX 1080 to hit its 83C temperature limit nor its 180W TDP limit, so it’s a good example of the temperature compensation effect.

What we find is that from the start of the run until the end, the GPU clockspeed drops from the maximum boost bin of 1898MHz to a sustained 1822MHz, a drop of 4%, or 6 clockspeed bins. These shifts happen relatively consistently up to 68C, after which they stop.
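For anyone who wants to check the math, the figures above work out as follows. The only inputs are the numbers from this test run; the per-bin size is simply derived from them rather than taken from any NVIDIA specification.

```python
start_mhz = 1898      # maximum boost bin at the start of the run
sustained_mhz = 1822  # sustained clockspeed once temperatures level out
bins_dropped = 6

drop_mhz = start_mhz - sustained_mhz    # total clockspeed lost
drop_pct = 100 * drop_mhz / start_mhz   # as a percentage of the peak
mhz_per_bin = drop_mhz / bins_dropped   # implied size of one bin

print(f"{drop_mhz}MHz drop, {drop_pct:.1f}%, {mhz_per_bin:.1f}MHz per bin")
```

In other words, a 76MHz drop across 6 bins implies bins of a little under 13MHz each.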

For what it’s worth, the GTX 1080 gets up to 68C relatively quickly, so GPU performance stabilizes rather soon. But this does mean that GTX 1080’s performance is more temperature dependent than GTX 980’s. Throwing a GTX 1080 under water could very well net you a few percent performance increase by avoiding the compensation effect, along with any performance gained from avoiding the card’s 83C temperature throttle.

In any case, I believe this to be compensation for the effects of higher temperatures on the GPU, backing off on voltages/clockspeeds due to potential issues. What those issues are I’m not sure; it could be that 16nm FinFET doesn’t like high voltages at higher temperatures (NVIDIA takes several steps to minimize GPU degradation), or something else entirely.

Otherwise, outside of the temperature compensation effect, clockspeeds on GTX 1080 appear to mostly be a function of temperature or running out of boost bins (VREL limited). The card rarely appears to be TDP limited, especially at steady-state. This indicates that NVIDIA could probably increase the fan speed of the cooler a bit to get a bit more performance, but at the cost of generating a bit more noise.

Finally, how overvolting is represented is a bit different from before. Previously NVIDIA (and EVGA Precision) would show the exact additional voltage (i.e. the voltage of the unlocked voltage points) when overvolting. Now overvolting is expressed on a percentage scale from 0% to 100%, which obfuscates what the higher voltage points actually are. This hasn’t changed the underlying behavior of overvolting, however: one or more voltage points are calibrated by NVIDIA, but they are locked due to the potential for GPU degradation. Overvolting then unlocks these points, allowing the GPU to boost higher so long as there is thermal and power headroom to allow it.

200 Comments

Robalov - Tuesday, July 26, 2016 - link

Feels like it took 2 years longer than normal for this review :D

extide - Wednesday, July 27, 2016 - link

The venn diagram is wrong -- for GP104 it says 1:64 speed for FP16 -- it is actually 1:1 for FP16 (ie same speed as FP32) (NOTE: GP100 has 2:1 FP16 -- meaning FP16 is twice as fast as FP32)

extide - Wednesday, July 27, 2016 - link

EDIT: I might be incorrect about this actually as I have seen information claiming both .. weird.

mxthunder - Friday, July 29, 2016 - link

its really driving me nuts that a 780 was used instead of a 780ti.

yhselp - Monday, August 8, 2016 - link

Have I understood correctly that Pascal offers a 20% increase in memory bandwidth from delta color compression over Maxwell? As in a total average of 45% over Kepler just from color compression?

flexy - Sunday, September 4, 2016 - link

Sorry, late comment. I just read about GPU Boost 3.0 and this is AWESOME. What they did, is expose what previously was only doable with bios modding - eg assigning the CLK bins different voltages. The problem with overclocking Kepler/Maxwell was NOT so much that you got stuck with the "lowest" overclock as the article says, but that simply adding a FIXED amount of clocks across the entire range of clocks, as you would do with Afterburner etc. where you simply add, say +120 to the core. What happened here is that you may be "stable" at the max overclock (CLK bin), but since you added more CLKs to EVERY clock bin, the assigned voltages (in the BIOS) for each bin might not be sufficient. Say you have CLK bin 63 which is set to 1304Mhz in a stock bios. Now you use Afterburner and add 150 Mhz, now all of a sudden this bin amounts to 1454Mhz BUT STILL at the same voltage as before, which is too low for 1454Mhz. You had to manually edit the table in the BIOS to shift clocks around, especially since not all Maxwell cards allowed adding voltage via software.

Ether.86 - Tuesday, November 1, 2016 - link

Astonishing review. That's the way Anandtech should be not like the mobile section which sucks...

Warsun - Tuesday, January 17, 2017 - link

Yeah looking at the bottom here.The GTX 1070 is on the same level as a single 480 4GB card.So that graph is wrong.http://www.hwcompare.com/30889/geforce-gtx-1070-vs...

Remember this is from GPU-Z based on hardware specs.No amount of configurations in the Drivers changes this.They either screwed up i am calling shenanigans.

marceloamaral - Thursday, April 13, 2017 - link

Nice Ryan Smith! But, my question is, is it truly possible to share the GPU with different workloads in the P100? I've read in the NVIDIA manual that "The GPU has a time sliced scheduler to schedule work from work queues belonging to different CUDA contexts. Work launched to the compute engine from work queues belonging to different CUDA contexts cannot execute concurrently."